mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git

synced 2026-04-20 22:51:45 +00:00

Compare commits

558 Commits


81

.github/workflows/agents-md-guard.yml

vendored

Normal file

@@ -0,0 +1,81 @@
+name: agents-md-guard
+
+on:
+  pull_request_target:
+    types:
+      - opened
+      - synchronize
+      - reopened
+
+permissions:
+  contents: read
+  issues: write
+  pull-requests: write
+
+jobs:
+  close-when-agents-md-changed:
+    runs-on: ubuntu-latest
+    steps:
+      - name: Detect AGENTS.md changes and close PR
+        uses: actions/github-script@v7
+        with:
+          script: |
+            const prNumber = context.payload.pull_request.number;
+            const { owner, repo } = context.repo;
+
+            const files = await github.paginate(github.rest.pulls.listFiles, {
+              owner,
+              repo,
+              pull_number: prNumber,
+              per_page: 100,
+            });
+
+            const touchesAgentsMd = (path) =>
+              typeof path === "string" &&
+              (path === "AGENTS.md" || path.endsWith("/AGENTS.md"));
+
+            const touched = files.filter(
+              (f) => touchesAgentsMd(f.filename) || touchesAgentsMd(f.previous_filename),
+            );
+
+            if (touched.length === 0) {
+              core.info("No AGENTS.md changes detected.");
+              return;
+            }
+
+            const changedList = touched
+              .map((f) =>
+                f.previous_filename && f.previous_filename !== f.filename
+                  ? `- ${f.previous_filename} -> ${f.filename}`
+                  : `- ${f.filename}`,
+              )
+              .join("\n");
+
+            const body = [
+              "This repository does not allow modifying `AGENTS.md` in pull requests.",
+              "",
+              "Detected changes:",
+              changedList,
+              "",
+              "Please revert these changes and open a new PR without touching `AGENTS.md`.",
+            ].join("\n");
+
+            try {
+              await github.rest.issues.createComment({
+                owner,
+                repo,
+                issue_number: prNumber,
+                body,
+              });
+            } catch (error) {
+              core.warning(`Failed to comment on PR #${prNumber}: ${error.message}`);
+            }
+
+            await github.rest.pulls.update({
+              owner,
+              repo,
+              pull_number: prNumber,
+              state: "closed",
+            });
+
+            core.setFailed("PR modifies AGENTS.md");
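The path check in the `agents-md-guard` script can be tried outside GitHub Actions. The following is a minimal sketch, assuming plain Node.js: `findAgentsMdChanges` and `formatChangedList` are hypothetical helper names that wrap the workflow's inline logic, and the sample entries are made up to mirror the `filename` / `previous_filename` fields the script reads from `pulls.listFiles`.

```javascript
// Same predicate as in the workflow: matches AGENTS.md at the
// repository root or in any subdirectory.
const touchesAgentsMd = (path) =>
  typeof path === "string" &&
  (path === "AGENTS.md" || path.endsWith("/AGENTS.md"));

// Hypothetical helper: keep entries whose current or previous name is an
// AGENTS.md file, so renames away from AGENTS.md are caught as well.
function findAgentsMdChanges(files) {
  return files.filter(
    (f) => touchesAgentsMd(f.filename) || touchesAgentsMd(f.previous_filename),
  );
}

// Hypothetical helper: one bullet per entry, "old -> new" for renames.
function formatChangedList(touched) {
  return touched
    .map((f) =>
      f.previous_filename && f.previous_filename !== f.filename
        ? `- ${f.previous_filename} -> ${f.filename}`
        : `- ${f.filename}`,
    )
    .join("\n");
}

// Made-up sample entries for illustration only.
const sample = [
  { filename: "README.md" },
  { filename: "docs/AGENTS.md" },
  { filename: "NOTES.md", previous_filename: "AGENTS.md" },
  { filename: "MY_AGENTS.md" },
];

console.log(formatChangedList(findAgentsMdChanges(sample)));
```

With this sample, only `docs/AGENTS.md` and the rename `AGENTS.md -> NOTES.md` are reported. `MY_AGENTS.md` is not matched because the predicate requires either an exact name or a `/AGENTS.md` suffix.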

73

.github/workflows/auto-retarget-main-pr-to-dev.yml

vendored

Normal file

@@ -0,0 +1,73 @@
+name: auto-retarget-main-pr-to-dev
+
+on:
+  pull_request_target:
+    types:
+      - opened
+      - reopened
+      - edited
+    branches:
+      - main
+
+permissions:
+  contents: read
+  issues: write
+  pull-requests: write
+
+jobs:
+  retarget:
+    if: github.actor != 'github-actions[bot]'
+    runs-on: ubuntu-latest
+    steps:
+      - name: Retarget PR base to dev
+        uses: actions/github-script@v7
+        with:
+          script: |
+            const pr = context.payload.pull_request;
+            const prNumber = pr.number;
+            const { owner, repo } = context.repo;
+
+            const baseRef = pr.base?.ref;
+            const headRef = pr.head?.ref;
+            const desiredBase = "dev";
+
+            if (baseRef !== "main") {
+              core.info(`PR #${prNumber} base is ${baseRef}; nothing to do.`);
+              return;
+            }
+
+            if (headRef === desiredBase) {
+              core.info(`PR #${prNumber} is ${desiredBase} -> main; skipping retarget.`);
+              return;
+            }
+
+            core.info(`Retargeting PR #${prNumber} base from ${baseRef} to ${desiredBase}.`);
+
+            try {
+              await github.rest.pulls.update({
+                owner,
+                repo,
+                pull_number: prNumber,
+                base: desiredBase,
+              });
+            } catch (error) {
+              core.setFailed(`Failed to retarget PR #${prNumber} to ${desiredBase}: ${error.message}`);
+              return;
+            }
+
+            const body = [
+              `This pull request targeted \`${baseRef}\`.`,
+              "",
+              `The base branch has been automatically changed to \`${desiredBase}\`.`,
+            ].join("\n");
+
+            try {
+              await github.rest.issues.createComment({
+                owner,
+                repo,
+                issue_number: prNumber,
+                body,
+              });
+            } catch (error) {
+              core.warning(`Failed to comment on PR #${prNumber}: ${error.message}`);
+            }
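The branch checks in the retarget script reduce to a small decision over the PR's base and head refs. A minimal sketch, assuming plain Node.js: `decideRetarget` is a hypothetical name, not part of the workflow, and the PR objects in the usage lines are made up and carry only the `base.ref` / `head.ref` fields the script reads.

```javascript
// Hypothetical pure function mirroring the workflow's two early returns.
// Returns the new base branch, or null when the PR should be left alone.
function decideRetarget(pr, desiredBase = "dev") {
  const baseRef = pr.base?.ref;
  const headRef = pr.head?.ref;

  // Only PRs that target main are candidates.
  if (baseRef !== "main") return null;

  // A PR from the desired base into main (dev -> main) is left untouched.
  if (headRef === desiredBase) return null;

  return desiredBase;
}

// Made-up payloads for illustration only.
console.log(decideRetarget({ base: { ref: "main" }, head: { ref: "feature-x" } }));
console.log(decideRetarget({ base: { ref: "main" }, head: { ref: "dev" } }));
console.log(decideRetarget({ base: { ref: "dev" }, head: { ref: "feature-x" } }));
```

Separating the decision from the API calls makes the two skip conditions easy to check without a live pull request. The workflow itself still performs `pulls.update` and `issues.createComment` when the result is not `null`.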

14

.github/workflows/docker-image.yml

vendored

@@ -16,6 +16,10 @@ jobs:
     steps:
       - name: Checkout
         uses: actions/checkout@v4
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - name: Set up Docker Buildx
         uses: docker/setup-buildx-action@v3
       - name: Login to DockerHub
@@ -25,7 +29,7 @@ jobs:
           password: ${{ secrets.DOCKERHUB_TOKEN }}
       - name: Generate Build Metadata
         run: |
-          echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - name: Build and push (amd64)
@@ -47,6 +51,10 @@ jobs:
     steps:
       - name: Checkout
         uses: actions/checkout@v4
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - name: Set up Docker Buildx
         uses: docker/setup-buildx-action@v3
       - name: Login to DockerHub
@@ -56,7 +64,7 @@ jobs:
           password: ${{ secrets.DOCKERHUB_TOKEN }}
       - name: Generate Build Metadata
         run: |
-          echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - name: Build and push (arm64)
@@ -90,7 +98,7 @@ jobs:
           password: ${{ secrets.DOCKERHUB_TOKEN }}
       - name: Generate Build Metadata
         run: |
-          echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - name: Create and push multi-arch manifests

4

.github/workflows/pr-test-build.yml

vendored

@@ -12,6 +12,10 @@ jobs:
     steps:
       - name: Checkout
         uses: actions/checkout@v4
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - name: Set up Go
         uses: actions/setup-go@v5
         with:

9

.github/workflows/release.yaml

vendored

@@ -16,6 +16,10 @@ jobs:
       - uses: actions/checkout@v4
         with:
           fetch-depth: 0
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - run: git fetch --force --tags
       - uses: actions/setup-go@v4
         with:
@@ -23,15 +27,14 @@ jobs:
           cache: true
       - name: Generate Build Metadata
         run: |
-          VERSION=$(git describe --tags --always --dirty)
-          echo "VERSION=${VERSION}" >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - uses: goreleaser/goreleaser-action@v4
         with:
           distribution: goreleaser
           version: latest
-          args: release --clean
+          args: release --clean --skip=validate
         env:
           GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
           VERSION: ${{ env.VERSION }}

10

.gitignore

vendored

@@ -1,6 +1,7 @@
 # Binaries
 cli-proxy-api
 cliproxy
+/server
 *.exe
 
 
@@ -36,15 +37,16 @@ GEMINI.md
 
 # Tooling metadata
 .vscode/*
+.worktrees/
 .codex/*
 .claude/*
 .gemini/*
 .serena/*
 .agent/*
 .agents/*
-.agents/*
 .opencode/*
 .idea/*
+.beads/*
 .bmad/*
 _bmad/*
 _bmad-output/*
@@ -53,4 +55,10 @@ _bmad-output/*
 # macOS
 .DS_Store
 ._*
+
+# Opencode
+.beads/
+.opencode/
+.cli-proxy-api/
+.venv/
 *.bak

@@ -1,3 +1,5 @@
+version: 2
+
 builds:
   - id: "cli-proxy-api-plus"
     env:
@@ -6,6 +8,7 @@ builds:
       - linux
       - windows
       - darwin
+      - freebsd
     goarch:
       - amd64
       - arm64

58

AGENTS.md

Normal file

@@ -0,0 +1,58 @@
+# AGENTS.md
+
+Go 1.26+ proxy server providing OpenAI/Gemini/Claude/Codex compatible APIs with OAuth and round-robin load balancing.
+
+## Repository
+- GitHub: https://github.com/router-for-me/CLIProxyAPI
+
+## Commands
+```bash
+gofmt -w . # Format (required after Go changes)
+go build -o cli-proxy-api ./cmd/server # Build
+go run ./cmd/server # Run dev server
+go test ./... # Run all tests
+go test -v -run TestName ./path/to/pkg # Run single test
+go build -o test-output ./cmd/server && rm test-output # Verify compile (REQUIRED after changes)
+```
+- Common flags: `--config <path>`, `--tui`, `--standalone`, `--local-model`, `--no-browser`, `--oauth-callback-port <port>`
+
+## Config
+- Default config: `config.yaml` (template: `config.example.yaml`)
+- `.env` is auto-loaded from the working directory
+- Auth material defaults under `auths/`
+- Storage backends: file-based default; optional Postgres/git/object store (`PGSTORE_*`, `GITSTORE_*`, `OBJECTSTORE_*`)
+
+## Architecture
+- `cmd/server/` — Server entrypoint
+- `internal/api/` — Gin HTTP API (routes, middleware, modules)
+- `internal/api/modules/amp/` — Amp integration (Amp-style routes + reverse proxy)
+- `internal/thinking/` — Main thinking/reasoning pipeline. `ApplyThinking()` (apply.go) parses suffixes (`suffix.go`, suffix overrides body), normalizes config to canonical `ThinkingConfig` (`types.go`), normalizes and validates centrally (`validate.go`/`convert.go`), then applies provider-specific output via `ProviderApplier`. Do not break this "canonical representation → per-provider translation" architecture.
+- `internal/runtime/executor/` — Per-provider runtime executors (incl. Codex WebSocket)
+- `internal/translator/` — Provider protocol translators (and shared `common`)
+- `internal/registry/` — Model registry + remote updater (`StartModelsUpdater`); `--local-model` disables remote updates
+- `internal/store/` — Storage implementations and secret resolution
+- `internal/managementasset/` — Config snapshots and management assets
+- `internal/cache/` — Request signature caching
+- `internal/watcher/` — Config hot-reload and watchers
+- `internal/wsrelay/` — WebSocket relay sessions
+- `internal/usage/` — Usage and token accounting
+- `internal/tui/` — Bubbletea terminal UI (`--tui`, `--standalone`)
+- `sdk/cliproxy/` — Embeddable SDK entry (service/builder/watchers/pipeline)
+- `test/` — Cross-module integration tests
+
+## Code Conventions
+- Keep changes small and simple (KISS)
+- Comments in English only
+- If editing code that already contains non-English comments, translate them to English (don’t add new non-English comments)
+- For user-visible strings, keep the existing language used in that file/area
+- New Markdown docs should be in English unless the file is explicitly language-specific (e.g. `README_CN.md`)
+- As a rule, do not make standalone changes to `internal/translator/`. You may modify it only as part of broader changes elsewhere.
+- If a task requires changing only `internal/translator/`, run `gh repo view --json viewerPermission -q .viewerPermission` to confirm you have `WRITE`, `MAINTAIN`, or `ADMIN`. If you do, you may proceed; otherwise, file a GitHub issue including the goal, rationale, and the intended implementation code, then stop further work.
+- `internal/runtime/executor/` should contain executors and their unit tests only. Place any helper/supporting files under `internal/runtime/executor/helps/`.
+- Follow `gofmt`; keep imports goimports-style; wrap errors with context where helpful
+- Do not use `log.Fatal`/`log.Fatalf` (terminates the process); prefer returning errors and logging via logrus
+- Shadowed variables: use method suffix (`errStart := server.Start()`)
+- Wrap defer errors: `defer func() { if err := f.Close(); err != nil { log.Errorf(...) } }()`
+- Use logrus structured logging; avoid leaking secrets/tokens in logs
+- Avoid panics in HTTP handlers; prefer logged errors and meaningful HTTP status codes
+- Timeouts are allowed only during credential acquisition; after an upstream connection is established, do not set timeouts for any subsequent network behavior. Intentional exceptions that must remain allowed are the Codex websocket liveness deadlines in `internal/runtime/executor/codex_websockets_executor.go`, the wsrelay session deadlines in `internal/wsrelay/session.go`, the management APICall timeout in `internal/api/handlers/management/api_tools.go`, and the `cmd/fetch_antigravity_models` utility timeouts

117

README.md

117

README.md

@@ -8,123 +8,6 @@ All third-party provider support is maintained by community contributors; CLIPro

|

|||||||

|

|

||||||

The Plus release stays in lockstep with the mainline features.

|

The Plus release stays in lockstep with the mainline features.

|

||||||

|

|

||||||

## Differences from the Mainline

|

|

||||||

|

|

||||||

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

|

||||||

|

|

||||||

## New Features (Plus Enhanced)

|

|

||||||

|

|

||||||

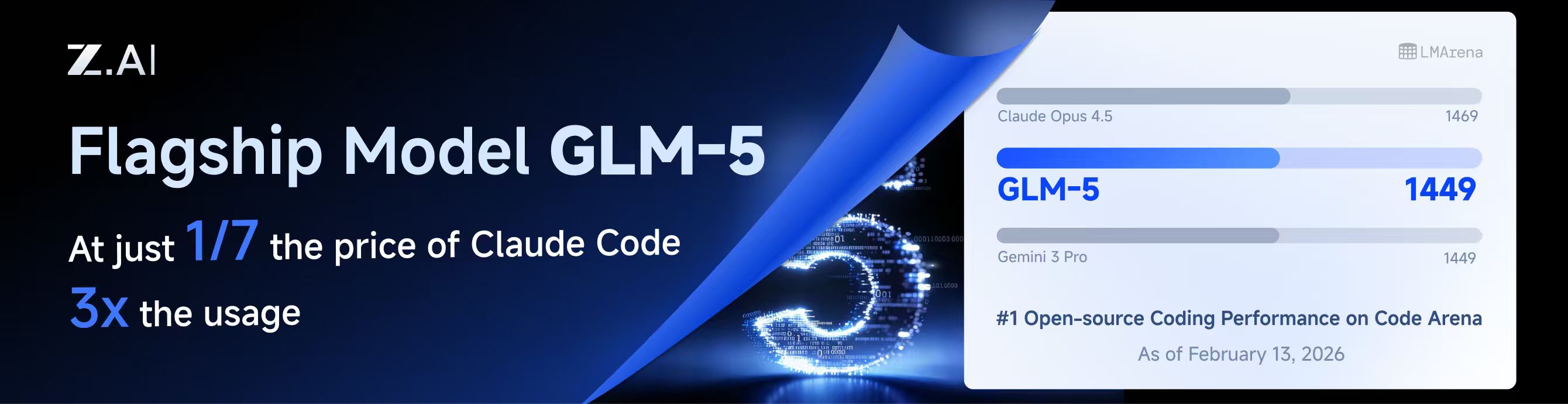

GLM CODING PLAN is a subscription service designed for AI coding, starting at just $10/month. It provides access to the flagship GLM-4.7 model (GLM-5 is available only to Pro users) across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers a top-tier, fast, and stable coding experience.

|

|

||||||

|

|

||||||

## Kiro Authentication

|

|

||||||

|

|

||||||

### CLI Login

|

|

||||||

|

|

||||||

> **Note:** Google/GitHub login is not available for third-party applications due to AWS Cognito restrictions.

|

|

||||||

|

|

||||||

**AWS Builder ID** (recommended):

|

|

||||||

|

|

||||||

```bash

|

|

||||||

# Device code flow

|

|

||||||

./CLIProxyAPI --kiro-aws-login

|

|

||||||

|

|

||||||

# Authorization code flow

|

|

||||||

./CLIProxyAPI --kiro-aws-authcode

|

|

||||||

```

|

|

||||||

|

|

||||||

**Import token from Kiro IDE:**

|

|

||||||

|

|

||||||

```bash

|

|

||||||

./CLIProxyAPI --kiro-import

|

|

||||||

```

|

|

||||||

|

|

||||||

To get a token from Kiro IDE:

|

|

||||||

|

|

||||||

1. Open Kiro IDE and log in with Google (or GitHub)

|

|

||||||

2. Find the token file: `~/.kiro/kiro-auth-token.json`

|

|

||||||

3. Run: `./CLIProxyAPI --kiro-import`

|

|

||||||

|

|

||||||

**AWS IAM Identity Center (IDC):**

|

|

||||||

|

|

||||||

```bash

|

|

||||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start

|

|

||||||

|

|

||||||

# Specify region

|

|

||||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2

|

|

||||||

```

|

|

||||||

|

|

||||||

**Additional flags:**

|

|

||||||

|

|

||||||

| Flag | Description |

|

|

||||||

|------|-------------|

|

|

||||||

| `--no-browser` | Don't open browser automatically, print URL instead |

|

|

||||||

| `--no-incognito` | Use existing browser session (Kiro defaults to incognito). Useful for corporate SSO that requires an authenticated browser session |

|

|

||||||

| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |

|

|

||||||

| `--kiro-idc-region` | IDC region (default: `us-east-1`) |

|

|

||||||

| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |
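
The defaults and requirements in the table above can be sketched with Go's standard `flag` package. This is an illustration only: `idcOptions` and `parseIDCFlags` are hypothetical names, and the project's real flag handling may differ. Only the flag names, the defaults (`us-east-1`, `authcode`), and the start-URL requirement are taken from the table.

```go
package main

import (
	"errors"
	"flag"
	"fmt"
)

// idcOptions is a hypothetical holder for the IDC-related flags.
type idcOptions struct {
	Login    bool
	StartURL string
	Region   string
	Flow     string
}

// parseIDCFlags parses args (without the program name) and applies the
// defaults and requirements listed in the flag table.
func parseIDCFlags(args []string) (idcOptions, error) {
	var opts idcOptions
	fs := flag.NewFlagSet("kiro-idc", flag.ContinueOnError)
	fs.BoolVar(&opts.Login, "kiro-idc-login", false, "log in via AWS IAM Identity Center")
	fs.StringVar(&opts.StartURL, "kiro-idc-start-url", "", "IDC Start URL")
	fs.StringVar(&opts.Region, "kiro-idc-region", "us-east-1", "IDC region")
	fs.StringVar(&opts.Flow, "kiro-idc-flow", "authcode", "IDC flow type: authcode or device")
	if errParse := fs.Parse(args); errParse != nil {
		return opts, fmt.Errorf("parse flags: %w", errParse)
	}
	// The start URL is required whenever IDC login is requested.
	if opts.Login && opts.StartURL == "" {
		return opts, errors.New("--kiro-idc-start-url is required with --kiro-idc-login")
	}
	// Only the two flow types named in the table are accepted.
	if opts.Flow != "authcode" && opts.Flow != "device" {
		return opts, fmt.Errorf("unsupported --kiro-idc-flow %q", opts.Flow)
	}
	return opts, nil
}

func main() {
	opts, err := parseIDCFlags([]string{
		"--kiro-idc-login",
		"--kiro-idc-start-url", "https://d-xxxxxxxxxx.awsapps.com/start",
	})
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Printf("region=%s flow=%s\n", opts.Region, opts.Flow)
}
```

Go's `flag` package accepts both `-name` and `--name`, so the double-dash spelling used in the README parses as written.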

|

|

||||||

|

|

||||||

### Web-based OAuth Login

|

|

||||||

|

|

||||||

Access the Kiro OAuth web interface at:

|

|

||||||

|

|

||||||

```

|

|

||||||

http://your-server:8080/v0/oauth/kiro

|

|

||||||

```

|

|

||||||

|

|

||||||

This provides a browser-based OAuth flow for Kiro (AWS CodeWhisperer) authentication with:

|

|

||||||

- AWS Builder ID login

|

|

||||||

- AWS Identity Center (IDC) login

|

|

||||||

- Token import from Kiro IDE

|

|

||||||

|

|

||||||

## Quick Deployment with Docker

|

|

||||||

|

|

||||||

### One-Command Deployment

|

|

||||||

|

|

||||||

```bash

|

|

||||||

# Create deployment directory

|

|

||||||

mkdir -p ~/cli-proxy && cd ~/cli-proxy

|

|

||||||

|

|

||||||

# Create docker-compose.yml

|

|

||||||

cat > docker-compose.yml << 'EOF'

|

|

||||||

services:

|

|

||||||

cli-proxy-api:

|

|

||||||

image: eceasy/cli-proxy-api-plus:latest

|

|

||||||

container_name: cli-proxy-api-plus

|

|

||||||

ports:

|

|

||||||

- "8317:8317"

|

|

||||||

volumes:

|

|

||||||

- ./config.yaml:/CLIProxyAPI/config.yaml

|

|

||||||

- ./auths:/root/.cli-proxy-api

|

|

||||||

- ./logs:/CLIProxyAPI/logs

|

|

||||||

restart: unless-stopped

|

|

||||||

EOF

|

|

||||||

|

|

||||||

# Download example config

|

|

||||||

curl -o config.yaml https://raw.githubusercontent.com/router-for-me/CLIProxyAPIPlus/main/config.example.yaml

|

|

||||||

|

|

||||||

# Pull and start

|

|

||||||

docker compose pull && docker compose up -d

|

|

||||||

```

|

|

||||||

|

|

||||||

### Configuration

|

|

||||||

|

|

||||||

Edit `config.yaml` before starting:

|

|

||||||

|

|

||||||

```yaml

|

|

||||||

# Basic configuration example

|

|

||||||

server:

|

|

||||||

port: 8317

|

|

||||||

|

|

||||||

# Add your provider configurations here

|

|

||||||

```

|

|

||||||

|

|

||||||

### Update to Latest Version

|

|

||||||

|

|

||||||

```bash

|

|

||||||

cd ~/cli-proxy

|

|

||||||

docker compose pull && docker compose up -d

|

|

||||||

```

|

|

||||||

|

|

||||||

## Contributing

|

## Contributing

|

||||||

|

|

||||||

This project only accepts pull requests that relate to third-party provider support. Any pull requests unrelated to third-party provider support will be rejected.

|

This project only accepts pull requests that relate to third-party provider support. Any pull requests unrelated to third-party provider support will be rejected.

|

||||||

|

|||||||

121

README_CN.md


@@ -6,125 +6,6 @@

|

|||||||

|

|

||||||

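All third-party provider support is maintained by community contributors; CLIProxyAPI does not provide technical support. Please contact the corresponding community maintainer if you need assistance.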

|

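All third-party provider support is maintained by community contributors; CLIProxyAPI does not provide technical support. Please contact the corresponding community maintainer if you need assistance.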

|

||||||

|

|

||||||

The Plus release stays in lockstep with the mainline features.

|

|

||||||

|

|

||||||

## Differences from the Mainline

|

|

||||||

|

|

||||||

[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

|

|

||||||

|

|

||||||

## New Features (Plus Enhanced)

|

|

||||||

|

|

||||||

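GLM CODING PLAN is a subscription plan built for AI coding, starting at just 20 yuan per month. It gives access to Zhipu's flagship GLM-4.7 model (due to limited compute, currently available only to Pro users) across more than ten mainstream AI coding tools such as Claude Code, Cline, and Roo Code, offering developers a top-tier coding experience.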

|

|

||||||

|

|

||||||

Zhipu AI offers a special discount for this product; purchase through the following link for 10% off: https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII

|

|

||||||

|

|

||||||

### CLI Login

|

|

||||||

|

|

||||||

> **Note:** Google/GitHub login is not available for third-party applications due to AWS Cognito restrictions.

|

|

||||||

|

|

||||||

**AWS Builder ID** (recommended):

|

|

||||||

|

|

||||||

```bash

|

|

||||||

# Device code flow

|

|

||||||

./CLIProxyAPI --kiro-aws-login

|

|

||||||

|

|

||||||

# Authorization code flow

|

|

||||||

./CLIProxyAPI --kiro-aws-authcode

|

|

||||||

```

|

|

||||||

|

|

||||||

**Import token from Kiro IDE:**

|

|

||||||

|

|

||||||

```bash

|

|

||||||

./CLIProxyAPI --kiro-import

|

|

||||||

```

|

|

||||||

|

|

||||||

To get a token from Kiro IDE:

|

|

||||||

|

|

||||||

1. Open Kiro IDE and log in with Google (or GitHub)

|

|

||||||

2. Find the token file: `~/.kiro/kiro-auth-token.json`

|

|

||||||

3. Run: `./CLIProxyAPI --kiro-import`

|

|

||||||

|

|

||||||

**AWS IAM Identity Center (IDC):**

|

|

||||||

|

|

||||||

```bash

|

|

||||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start

|

|

||||||

|

|

||||||

# Specify region

|

|

||||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2

|

|

||||||

```

|

|

||||||

|

|

||||||

**Additional flags:**

|

|

||||||

|

|

||||||

| Flag | Description |

|

|

||||||

|------|------|

|

|

||||||

| `--no-browser` | Do not open the browser automatically; print the URL instead |

|

|

||||||

| `--no-incognito` | Use the existing browser session (Kiro defaults to incognito mode). Useful for corporate SSO that requires an authenticated browser session |

|

|

||||||

| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |

|

|

||||||

| `--kiro-idc-region` | IDC region (default: `us-east-1`) |

|

|

||||||

| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |

|

|

||||||

|

|

||||||

### Web-based OAuth Login

|

|

||||||

|

|

||||||

Access the Kiro OAuth web interface at:

|

|

||||||

|

|

||||||

```

|

|

||||||

http://your-server:8080/v0/oauth/kiro

|

|

||||||

```

|

|

||||||

|

|

||||||

This provides a browser-based OAuth flow for Kiro (AWS CodeWhisperer) authentication with:

|

|

||||||

- AWS Builder ID login

|

|

||||||

- AWS Identity Center (IDC) login

|

|

||||||

- Token import from Kiro IDE

|

|

||||||

|

|

||||||

## Quick Deployment with Docker

|

|

||||||

|

|

||||||

### One-Command Deployment

|

|

||||||

|

|

||||||

```bash

|

|

||||||

# Create deployment directory

|

|

||||||

mkdir -p ~/cli-proxy && cd ~/cli-proxy

|

|

||||||

|

|

||||||

# Create docker-compose.yml

|

|

||||||

cat > docker-compose.yml << 'EOF'

|

|

||||||

services:

|

|

||||||

cli-proxy-api:

|

|

||||||

image: eceasy/cli-proxy-api-plus:latest

|

|

||||||

container_name: cli-proxy-api-plus

|

|

||||||

ports:

|

|

||||||

- "8317:8317"

|

|

||||||

volumes:

|

|

||||||

- ./config.yaml:/CLIProxyAPI/config.yaml

|

|

||||||

- ./auths:/root/.cli-proxy-api

|

|

||||||

- ./logs:/CLIProxyAPI/logs

|

|

||||||

restart: unless-stopped

|

|

||||||

EOF

|

|

||||||

|

|

||||||

# Download example config

|

|

||||||

curl -o config.yaml https://raw.githubusercontent.com/router-for-me/CLIProxyAPIPlus/main/config.example.yaml

|

|

||||||

|

|

||||||

# Pull and start

|

|

||||||

docker compose pull && docker compose up -d

|

|

||||||

```

|

|

||||||

|

|

||||||

### Configuration

|

|

||||||

|

|

||||||

Edit `config.yaml` before starting:

|

|

||||||

|

|

||||||

```yaml

|

|

||||||

# Basic configuration example

|

|

||||||

server:

|

|

||||||

port: 8317

|

|

||||||

|

|

||||||

# Add your provider configurations here

|

|

||||||

```

|

|

||||||

|

|

||||||

### Update to Latest Version

|

|

||||||

|

|

||||||

```bash

|

|

||||||

cd ~/cli-proxy

|

|

||||||

docker compose pull && docker compose up -d

|

|

||||||

```

|

|

||||||

|

|

||||||

## Contributing

|

## Contributing

|

||||||

|

|

||||||

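This project only accepts pull requests that relate to third-party provider support. Any pull requests unrelated to third-party provider support will be rejected.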

|

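This project only accepts pull requests that relate to third-party provider support. Any pull requests unrelated to third-party provider support will be rejected.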

|

||||||

@@ -133,4 +14,4 @@ docker compose pull && docker compose up -d

|

|||||||

|

|

||||||

## License

|

## License

|

||||||

|

|

||||||

This project is licensed under the MIT License; see the [LICENSE](LICENSE) file for details.

|

This project is licensed under the MIT License; see the [LICENSE](LICENSE) file for details.

|

||||||

|

|||||||

BIN

assets/bmoplus.png

Normal file


Binary file not shown.

|

After: Size: 28 KiB

BIN

assets/lingtrue.png

Normal file


Binary file not shown.

|

After: Size: 129 KiB

276

cmd/fetch_antigravity_models/main.go

Normal file


@@ -0,0 +1,276 @@

|

|||||||

|

// Command fetch_antigravity_models connects to the Antigravity API using the

|

||||||

|

// stored auth credentials and saves the dynamically fetched model list to a

|

||||||

|

// JSON file for inspection or offline use.

|

||||||

|

//

|

||||||

|

// Usage:

|

||||||

|

//

|

||||||

|

// go run ./cmd/fetch_antigravity_models [flags]

|

||||||

|

//

|

||||||

|

// Flags:

|

||||||

|

//

|

||||||

|

// --auths-dir <path> Directory containing auth JSON files (default: "auths")

|

||||||

|

// --output <path> Output JSON file path (default: "antigravity_models.json")

|

||||||

|

// --pretty Pretty-print the output JSON (default: true)

|

||||||

|

package main

|

||||||

|

|

||||||

|

import (

|

||||||

|

"context"

|

||||||

|

"encoding/json"

|

||||||

|

"flag"

|

||||||

|

"fmt"

|

||||||

|

"io"

|

||||||

|

"net/http"

|

||||||

|

"os"

|

||||||

|

"path/filepath"

|

||||||

|

"strings"

|

||||||

|

"time"

|

||||||

|

|

||||||

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/logging"

|

||||||

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"

|

||||||

|

sdkauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"

|

||||||

|

coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"

|

||||||

|

"github.com/router-for-me/CLIProxyAPI/v6/sdk/proxyutil"

|

||||||

|

log "github.com/sirupsen/logrus"

|

||||||

|

"github.com/tidwall/gjson"

|

||||||

|

)

|

||||||

|

|

||||||

|

const (

|

||||||

|

antigravityBaseURLDaily = "https://daily-cloudcode-pa.googleapis.com"

|

||||||

|

antigravitySandboxBaseURLDaily = "https://daily-cloudcode-pa.sandbox.googleapis.com"

|

||||||

|

antigravityBaseURLProd = "https://cloudcode-pa.googleapis.com"

|

||||||

|

antigravityModelsPath = "/v1internal:fetchAvailableModels"

|

||||||

|

)

|

||||||

|

|

||||||

|

func init() {

|

||||||

|

logging.SetupBaseLogger()

|

||||||

|

log.SetLevel(log.InfoLevel)

|

||||||

|

}

|

||||||

|

|

||||||

|

// modelOutput wraps the fetched model list with fetch metadata.

|

||||||

|

type modelOutput struct {

|

||||||

|

Models []modelEntry `json:"models"`

|

||||||

|

}

|

||||||

|

|

||||||

|

// modelEntry contains only the fields we want to keep for static model definitions.

|

||||||

|

type modelEntry struct {

|

||||||

|

ID string `json:"id"`

|

||||||

|

Object string `json:"object"`

|

||||||

|

OwnedBy string `json:"owned_by"`

|

||||||

|

Type string `json:"type"`

|

||||||

|

DisplayName string `json:"display_name"`

|

||||||

|

Name string `json:"name"`

|

||||||

|

Description string `json:"description"`

|

||||||

|

ContextLength int `json:"context_length,omitempty"`

|

||||||

|

MaxCompletionTokens int `json:"max_completion_tokens,omitempty"`

|

||||||

|

}

|

||||||

|

|

||||||

|

func main() {

|

||||||

|

var authsDir string

|

||||||

|

var outputPath string

|

||||||

|

var pretty bool

|

||||||

|

|

||||||

|

flag.StringVar(&authsDir, "auths-dir", "auths", "Directory containing auth JSON files")

|

||||||

|

flag.StringVar(&outputPath, "output", "antigravity_models.json", "Output JSON file path")

|

||||||

|

flag.BoolVar(&pretty, "pretty", true, "Pretty-print the output JSON")

|

||||||

|

flag.Parse()

|

||||||

|

|

||||||

|

// Resolve relative paths against the working directory.

|

||||||

|

wd, err := os.Getwd()

|

||||||

|

if err != nil {

|

||||||

|

fmt.Fprintf(os.Stderr, "error: cannot get working directory: %v\n", err)

|

||||||

|

os.Exit(1)

|

||||||

|

}

|

||||||

|

if !filepath.IsAbs(authsDir) {

|

||||||

|

authsDir = filepath.Join(wd, authsDir)

|

||||||

|

}

|

||||||

|

if !filepath.IsAbs(outputPath) {

|

||||||

|

outputPath = filepath.Join(wd, outputPath)

|

||||||

|

}

|

||||||

|

|

||||||

|

fmt.Printf("Scanning auth files in: %s\n", authsDir)

|

||||||

|

|

||||||

|

// Load all auth records from the directory.

|

||||||

|

fileStore := sdkauth.NewFileTokenStore()

|

||||||

|

fileStore.SetBaseDir(authsDir)

|

||||||

|

|

||||||

|

ctx := context.Background()

|

||||||

|

auths, err := fileStore.List(ctx)

|

||||||

|

if err != nil {

|

||||||

|

fmt.Fprintf(os.Stderr, "error: failed to list auth files: %v\n", err)

|

||||||

|

os.Exit(1)

|

||||||

|

}

|

||||||

|

if len(auths) == 0 {

|

||||||

|

fmt.Fprintf(os.Stderr, "error: no auth files found in %s\n", authsDir)

|

||||||

|

os.Exit(1)

|

||||||

|

}

|

||||||

|

|

||||||

|

// Find the first enabled antigravity auth.

|

||||||

|

var chosen *coreauth.Auth

|

||||||

|

for _, a := range auths {

|

||||||

|

if a == nil || a.Disabled {

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

if strings.EqualFold(strings.TrimSpace(a.Provider), "antigravity") {

|

||||||

|

chosen = a

|

||||||

|

break

|

||||||

|

}

|

||||||

|

}

|

||||||

|

if chosen == nil {

|

||||||

|

fmt.Fprintf(os.Stderr, "error: no enabled antigravity auth found in %s\n", authsDir)

|

||||||

|

os.Exit(1)

|

||||||

|

}

|

||||||

|

|

||||||

|

fmt.Printf("Using auth: id=%s label=%s\n", chosen.ID, chosen.Label)

|

||||||

|

|

||||||

|

// Fetch models from the upstream Antigravity API.

|

||||||

|

fmt.Println("Fetching Antigravity model list from upstream...")

|

||||||

|

|

||||||

|

fetchCtx, cancel := context.WithTimeout(ctx, 30*time.Second)

|

||||||

|

defer cancel()

|

||||||

|

|

||||||

|

models := fetchModels(fetchCtx, chosen)

|

||||||

|

if len(models) == 0 {

|

||||||

|

fmt.Fprintln(os.Stderr, "warning: no models returned (API may be unavailable or token expired)")

|

||||||

|

} else {

|

||||||

|

fmt.Printf("Fetched %d models.\n", len(models))

|

||||||

|

}

|

||||||

|

|

||||||

|

// Build the output payload.

|

||||||

|

out := modelOutput{

|

||||||

|

Models: models,

|

||||||

|

}

|

||||||

|

|

||||||

|

// Marshal to JSON.

|

||||||

|

var raw []byte

|

||||||

|

if pretty {

|

||||||

|

raw, err = json.MarshalIndent(out, "", " ")

|

||||||

|

} else {

|

||||||

|

raw, err = json.Marshal(out)

|

||||||

|

}

|

||||||

|

if err != nil {

|

||||||

|

fmt.Fprintf(os.Stderr, "error: failed to marshal JSON: %v\n", err)

|

||||||

|

os.Exit(1)

|

||||||

|

}

|

||||||

|

|

||||||

|

if err = os.WriteFile(outputPath, raw, 0o644); err != nil {

|

||||||

|

fmt.Fprintf(os.Stderr, "error: failed to write output file %s: %v\n", outputPath, err)

|

||||||

|

os.Exit(1)

|

||||||

|

}

|

||||||

|

|

||||||

|

fmt.Printf("Model list saved to: %s\n", outputPath)

|

||||||

|

}

|

||||||

|

|

||||||

|

func fetchModels(ctx context.Context, auth *coreauth.Auth) []modelEntry {

|

||||||

|

accessToken := metaStringValue(auth.Metadata, "access_token")

|

||||||

|

if accessToken == "" {

|

||||||

|

fmt.Fprintln(os.Stderr, "error: no access token found in auth")

|

||||||

|

return nil

|

||||||

|

}

|

||||||

|

|

||||||

|

baseURLs := []string{antigravityBaseURLProd, antigravityBaseURLDaily, antigravitySandboxBaseURLDaily}

|

||||||

|

|

||||||

|

for _, baseURL := range baseURLs {

|

||||||

|

modelsURL := baseURL + antigravityModelsPath

|

||||||

|

|

||||||

|

var payload []byte

|

||||||

|

if auth != nil && auth.Metadata != nil {

|

||||||

|

if pid, ok := auth.Metadata["project_id"].(string); ok && strings.TrimSpace(pid) != "" {

|

||||||

|

payload = []byte(fmt.Sprintf(`{"project": "%s"}`, strings.TrimSpace(pid)))

|

||||||

|

}

|

||||||

|

}

|

||||||

|

if len(payload) == 0 {

|

||||||

|

payload = []byte(`{}`)

|

||||||

|

}

|

||||||

|

|

||||||

|

httpReq, errReq := http.NewRequestWithContext(ctx, http.MethodPost, modelsURL, strings.NewReader(string(payload)))

|

||||||

|

if errReq != nil {

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

httpReq.Close = true

|

||||||

|

httpReq.Header.Set("Content-Type", "application/json")

|

||||||

|

httpReq.Header.Set("Authorization", "Bearer "+accessToken)

|

||||||

|

httpReq.Header.Set("User-Agent", misc.AntigravityUserAgent())

|

||||||

|

|

||||||

|

httpClient := &http.Client{Timeout: 30 * time.Second}

|

||||||

|

if transport, _, errProxy := proxyutil.BuildHTTPTransport(auth.ProxyURL); errProxy == nil && transport != nil {

|

||||||

|

httpClient.Transport = transport

|

||||||

|

}

|

||||||

|

httpResp, errDo := httpClient.Do(httpReq)

|

||||||

|

if errDo != nil {

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

|

||||||

|

bodyBytes, errRead := io.ReadAll(httpResp.Body)

|

||||||

|

httpResp.Body.Close()

|

||||||

|

if errRead != nil {

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

|

||||||

|

if httpResp.StatusCode < http.StatusOK || httpResp.StatusCode >= http.StatusMultipleChoices {

|

||||||

|

continue

|

||||||

|