mirror of

https://github.com/router-for-me/CLIProxyAPIPlus.git

synced 2026-03-09 23:33:24 +00:00

Compare commits

119 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 05a35662ae | | |
| | ce53d3a287 | | |
| | 4cc99e7449 | | |
| | 71773fe032 | | |
| | a1e0fa0f39 | | |
| | fc2f0b6983 | | |
| | 5c9997cdac | | |
| | 6f81046730 | | |
| | 0687472d01 | | |
| | 7739738fb3 | | |
| | 99d1ce247b | | |
| | f5941a411c | | |
| | ba672bbd07 | | |
| | d9c6627a53 | | |
| | 2e9907c3ac | | |
| | 90afb9cb73 | | |
| | d0cc0cd9a5 | | |
| | 338321e553 | | |
| | 182b31963a | | |
| | 4f48e5254a | | |
| | 15dd5db1d7 | | |
| | 424711b718 | | |
| | 91a2b1f0b4 | | |
| | 2b134fc378 | | |
| | b9153719b0 | | |
| | 631e5c8331 | | |
| | e9c60a0a67 | | |
| | 98a1bb5a7f | | |
| | ca90487a8c | | |
| | 1042489f85 | | |
| | 38277c1ea6 | | |
| | ee0c24628f | | |
| | 3a18f6fcca | | |
| | 099e734a02 | | |
| | a52da26b5d | | |
| | 522a68a4ea | | |
| | a02eda54d0 | | |
| | 97ef633c57 | | |
| | dae8463ba1 | | |
| | 7c1299922e | | |
| | ddcf1f279d | | |
| | 7e6bb8fdc5 | | |
| | 9cee8ef87b | | |
| | 93fb841bcb | | |
| | 0c05131aeb | | |
| | 5ebc58fab4 | | |
| | 2b609dd891 | | |
| | a8cbc68c3e | | |
| | 11a795a01c | | |
| | 89c428216e | | |
| | 2695a99623 | | |
| | 242aecd924 | | |
| | ce8cc1ba33 | | |
| | ad5253bd2b | | |
| | 97fdd2e088 | | |
| | 9397f7049f | | |
| | a14d19b92c | | |
| | 8ae0c05ea6 | | |
| | 8822f20d17 | | |
| | 553d6f50ea | | |
| | f0e5a5a367 | | |
| | f6dfea9357 | | |
| | cc8dc7f62c | | |
| | a3846ea513 | | |
| | 8d44be858e | | |
| | 0e6bb076e9 | | |
| | ac135fc7cb | | |
| | 4e1d09809d | | |
| | 9e855f8100 | | |
| | 25680a8259 | | |
| | 13c93e8cfd | | |
| | 88aa1b9fd1 | | |
| | 352cb98ff0 | | |
| | ac95e92829 | | |
| | 8526c2da25 | | |
| | 68a6cabf8b | | |
| | ac0e387da1 | | |
| | 7fe1d102cb | | |
| | 5850492a93 | | |
| | fdbd4041ca | | |
| | ebef1fae2a | | |
| | c51851689b | | |
| | 419bf784ab | | |
| | 4bbeb92e9a | | |
| | b436dad8bc | | |
| | 6ae15d6c44 | | |
| | 0468bde0d6 | | |
| | 1d7329e797 | | |
| | 48ffc4dee7 | | |
| | 7ebd8f0c44 | | |
| | b680c146c1 | | |
| | 7d6660d181 | | |
| | d8e3d4e2b6 | | |
| | d26ad8224d | | |
| | 5c84d69d42 | | |
| | 527e4b7f26 | | |
| | b48485b42b | | |
| | 79009bb3d4 | | |
| | 26fc611f86 | | |
| | b43743d4f1 | | |
| | 179e5434b1 | | |
| | 9f95b31158 | | |
| | 5da07eae4c | | |
| | 835ae178d4 | | |
| | c80ab8bf0d | | |
| | ce87714ef1 | | |
| | 0452b869e8 | | |
| | d2e5857b82 | | |
| | f9b005f21f | | |
| | 532107b4fa | | |
| | c44793789b | | |
| | 4e99525279 | | |
| | dd44413ba5 | | |
| | 10fa0f2062 | | |
| | 30338ecec4 | | |
| | 9a37defed3 | | |
| | c83a057996 | | |
| | b7588428c5 | | |
| | 2615f489d6 | | |

117

README.md


@@ -8,123 +8,6 @@ All third-party provider support is maintained by community contributors; CLIPro

|

||||

|

||||

The Plus release stays in lockstep with the mainline features.

|

||||

|

||||

## Differences from the Mainline

|

||||

|

||||

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

|

||||

## New Features (Plus Enhanced)

|

||||

|

||||

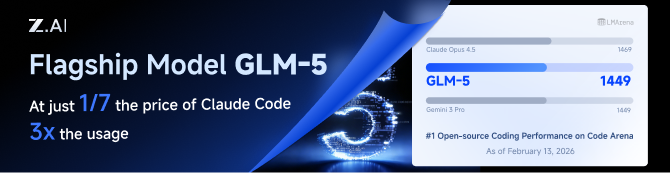

GLM CODING PLAN is a subscription service designed for AI coding, starting at just $10/month. It provides access to their flagship GLM-4.7 & (GLM-5 Only Available for Pro Users)model across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers top-tier, fast, and stable coding experiences.

|

||||

|

||||

## Kiro Authentication

|

||||

|

||||

### CLI Login

|

||||

|

||||

> **Note:** Google/GitHub login is not available for third-party applications due to AWS Cognito restrictions.

|

||||

|

||||

**AWS Builder ID** (recommended):

|

||||

|

||||

```bash

|

||||

# Device code flow

|

||||

./CLIProxyAPI --kiro-aws-login

|

||||

|

||||

# Authorization code flow

|

||||

./CLIProxyAPI --kiro-aws-authcode

|

||||

```

|

||||

|

||||

**Import token from Kiro IDE:**

|

||||

|

||||

```bash

|

||||

./CLIProxyAPI --kiro-import

|

||||

```

|

||||

|

||||

To get a token from Kiro IDE:

|

||||

|

||||

1. Open Kiro IDE and login with Google (or GitHub)

|

||||

2. Find the token file: `~/.kiro/kiro-auth-token.json`

|

||||

3. Run: `./CLIProxyAPI --kiro-import`

|

||||

|

||||

**AWS IAM Identity Center (IDC):**

|

||||

|

||||

```bash

|

||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start

|

||||

|

||||

# Specify region

|

||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2

|

||||

```

|

||||

|

||||

**Additional flags:**

|

||||

|

||||

| Flag | Description |

|

||||

|------|-------------|

|

||||

| `--no-browser` | Don't open browser automatically, print URL instead |

|

||||

| `--no-incognito` | Use existing browser session (Kiro defaults to incognito). Useful for corporate SSO that requires an authenticated browser session |

|

||||

| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |

|

||||

| `--kiro-idc-region` | IDC region (default: `us-east-1`) |

|

||||

| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |

|

||||

|

||||

### Web-based OAuth Login

|

||||

|

||||

Access the Kiro OAuth web interface at:

|

||||

|

||||

```

|

||||

http://your-server:8080/v0/oauth/kiro

|

||||

```

|

||||

|

||||

This provides a browser-based OAuth flow for Kiro (AWS CodeWhisperer) authentication with:

|

||||

- AWS Builder ID login

|

||||

- AWS Identity Center (IDC) login

|

||||

- Token import from Kiro IDE

|

||||

|

||||

## Quick Deployment with Docker

|

||||

|

||||

### One-Command Deployment

|

||||

|

||||

```bash

|

||||

# Create deployment directory

|

||||

mkdir -p ~/cli-proxy && cd ~/cli-proxy

|

||||

|

||||

# Create docker-compose.yml

|

||||

cat > docker-compose.yml << 'EOF'

|

||||

services:

|

||||

cli-proxy-api:

|

||||

image: eceasy/cli-proxy-api-plus:latest

|

||||

container_name: cli-proxy-api-plus

|

||||

ports:

|

||||

- "8317:8317"

|

||||

volumes:

|

||||

- ./config.yaml:/CLIProxyAPI/config.yaml

|

||||

- ./auths:/root/.cli-proxy-api

|

||||

- ./logs:/CLIProxyAPI/logs

|

||||

restart: unless-stopped

|

||||

EOF

|

||||

|

||||

# Download example config

|

||||

curl -o config.yaml https://raw.githubusercontent.com/router-for-me/CLIProxyAPIPlus/main/config.example.yaml

|

||||

|

||||

# Pull and start

|

||||

docker compose pull && docker compose up -d

|

||||

```

|

||||

|

||||

### Configuration

|

||||

|

||||

Edit `config.yaml` before starting:

|

||||

|

||||

```yaml

|

||||

# Basic configuration example

|

||||

server:

|

||||

port: 8317

|

||||

|

||||

# Add your provider configurations here

|

||||

```

|

||||

|

||||

### Update to Latest Version

|

||||

|

||||

```bash

|

||||

cd ~/cli-proxy

|

||||

docker compose pull && docker compose up -d

|

||||

```

|

||||

|

||||

## Contributing

|

||||

|

||||

This project only accepts pull requests that relate to third-party provider support. Any pull requests unrelated to third-party provider support will be rejected.

|

||||

|

||||

117

README_CN.md


@@ -8,123 +8,6 @@


 The mainline features of this Plus release are kept strictly in sync with the mainline.

-## Differences from the Mainline
-
-[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)
-
-## New Features (Plus Enhanced)
-
-GLM CODING PLAN is a subscription plan built for AI coding. Starting at just 20 yuan per month, it gives access to Zhipu's flagship GLM-4.7 model (currently limited to Pro users because of compute constraints) in more than ten mainstream AI coding tools such as Claude Code, Cline, and Roo Code, offering developers a top-tier coding experience.
-
-Zhipu AI offers a special deal for this product: purchases made through the following link get a 10% discount: https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII
-
-### CLI Login
-
-> **Note:** Google/GitHub login is not available for third-party applications due to AWS Cognito restrictions.
-
-**AWS Builder ID** (recommended):
-
-```bash
-# Device code flow
-./CLIProxyAPI --kiro-aws-login
-
-# Authorization code flow
-./CLIProxyAPI --kiro-aws-authcode
-```
-
-**Import token from Kiro IDE:**
-
-```bash
-./CLIProxyAPI --kiro-import
-```
-
-Steps to get the token:
-
-1. Open Kiro IDE and log in with Google (or GitHub)
-2. Find the token file: `~/.kiro/kiro-auth-token.json`
-3. Run: `./CLIProxyAPI --kiro-import`
-
-**AWS IAM Identity Center (IDC):**
-
-```bash
-./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start
-
-# Specify region
-./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2
-```
-
-**Additional flags:**
-
-| Flag | Description |
-|------|------|
-| `--no-browser` | Do not open the browser automatically; print the URL |
-| `--no-incognito` | Use the existing browser session (Kiro defaults to incognito mode); suited to corporate SSO scenarios that require an already signed-in browser session |
-| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |
-| `--kiro-idc-region` | IDC region (default: `us-east-1`) |
-| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |
-
-### Web-based OAuth Login
-
-Open the Kiro OAuth web authentication page:
-
-```
-http://your-server:8080/v0/oauth/kiro
-```
-
-It provides a browser-based OAuth flow for Kiro (AWS CodeWhisperer), supporting:
-- AWS Builder ID login
-- AWS Identity Center (IDC) login
-- Token import from Kiro IDE
-
-## Quick Deployment with Docker
-
-### One-Command Deployment
-
-```bash
-# Create deployment directory
-mkdir -p ~/cli-proxy && cd ~/cli-proxy
-
-# Create docker-compose.yml
-cat > docker-compose.yml << 'EOF'
-services:
-  cli-proxy-api:
-    image: eceasy/cli-proxy-api-plus:latest
-    container_name: cli-proxy-api-plus
-    ports:
-      - "8317:8317"
-    volumes:
-      - ./config.yaml:/CLIProxyAPI/config.yaml
-      - ./auths:/root/.cli-proxy-api
-      - ./logs:/CLIProxyAPI/logs
-    restart: unless-stopped
-EOF
-
-# Download example config
-curl -o config.yaml https://raw.githubusercontent.com/router-for-me/CLIProxyAPIPlus/main/config.example.yaml
-
-# Pull and start
-docker compose pull && docker compose up -d
-```
-
-### Configuration
-
-Edit `config.yaml` before starting:
-
-```yaml
-# Basic configuration example
-server:
-  port: 8317
-
-# Add your provider configurations here
-```
-
-### Update to Latest Version
-
-```bash
-cd ~/cli-proxy
-docker compose pull && docker compose up -d
-```
-
 ## Contributing

 This project only accepts pull requests for third-party provider support. Any pull request not related to third-party provider support will be rejected.

@@ -219,6 +219,17 @@ nonstream-keepalive-interval: 0

 #     models: # The models supported by the provider.
 #       - name: "moonshotai/kimi-k2:free" # The actual model name.
 #         alias: "kimi-k2" # The alias used in the API.
+#       # You may repeat the same alias to build an internal model pool.
+#       # The client still sees only one alias in the model list.
+#       # Requests to that alias will round-robin across the upstream names below,
+#       # and if the chosen upstream fails before producing output, the request will
+#       # continue with the next upstream model in the same alias pool.
+#       - name: "qwen3.5-plus"
+#         alias: "claude-opus-4.66"
+#       - name: "glm-5"
+#         alias: "claude-opus-4.66"
+#       - name: "kimi-k2.5"
+#         alias: "claude-opus-4.66"

 # Vertex API keys (Vertex-compatible endpoints, use API key + base URL)
 # vertex-api-key:

@@ -233,6 +244,9 @@ nonstream-keepalive-interval: 0

 #         alias: "vertex-flash" # client-visible alias
 #       - name: "gemini-2.5-pro"
 #         alias: "vertex-pro"
+#     excluded-models: # optional: models to exclude from listing
+#       - "imagen-3.0-generate-002"
+#       - "imagen-*"

 # Amp Integration
 # ampcode:
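The `excluded-models` entries above mix an exact name with a wildcard pattern. How CLIProxyAPI matches these patterns is not shown in this diff; the sketch below assumes plain glob semantics via the standard library's `path.Match`, and `isExcluded` is a hypothetical helper.

```go
package main

import (
	"fmt"
	"path"
)

// isExcluded reports whether model matches any pattern in excluded.
// Patterns follow path.Match glob syntax (an assumption); malformed
// patterns are skipped rather than treated as matches.
func isExcluded(model string, excluded []string) bool {
	for _, pattern := range excluded {
		if ok, err := path.Match(pattern, model); err == nil && ok {
			return true
		}
	}
	return false
}

func main() {
	excluded := []string{"imagen-3.0-generate-002", "imagen-*"}
	for _, m := range []string{"gemini-2.5-pro", "imagen-4.0-generate-001"} {
		fmt.Printf("%s excluded=%v\n", m, isExcluded(m, excluded))
	}
}
```

One caveat of this choice: in `path.Match`, `*` does not match `/`, so model names that contain a slash would need a different matcher.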

@@ -16,6 +16,7 @@ import (

 	"net/url"
 	"os"
 	"path/filepath"
+	"runtime"
 	"sort"
 	"strconv"
 	"strings"

@@ -192,17 +193,6 @@ func startCallbackForwarder(port int, provider, targetBase string) (*callbackFor

 	return forwarder, nil
 }

-func stopCallbackForwarder(port int) {
-	callbackForwardersMu.Lock()
-	forwarder := callbackForwarders[port]
-	if forwarder != nil {
-		delete(callbackForwarders, port)
-	}
-	callbackForwardersMu.Unlock()
-
-	stopForwarderInstance(port, forwarder)
-}
-
 func stopCallbackForwarderInstance(port int, forwarder *callbackForwarder) {
 	if forwarder == nil {
 		return

@@ -644,44 +634,85 @@ func (h *Handler) DeleteAuthFile(c *gin.Context) {

 		c.JSON(400, gin.H{"error": "invalid name"})
 		return
 	}
-	full := filepath.Join(h.cfg.AuthDir, filepath.Base(name))
-	if !filepath.IsAbs(full) {
-		if abs, errAbs := filepath.Abs(full); errAbs == nil {
-			full = abs
+
+	targetPath := filepath.Join(h.cfg.AuthDir, filepath.Base(name))
+	targetID := ""
+	if targetAuth := h.findAuthForDelete(name); targetAuth != nil {
+		targetID = strings.TrimSpace(targetAuth.ID)
+		if path := strings.TrimSpace(authAttribute(targetAuth, "path")); path != "" {
+			targetPath = path
 		}
 	}
-	if err := os.Remove(full); err != nil {
-		if os.IsNotExist(err) {
+	if !filepath.IsAbs(targetPath) {
+		if abs, errAbs := filepath.Abs(targetPath); errAbs == nil {
+			targetPath = abs
+		}
+	}
+	if errRemove := os.Remove(targetPath); errRemove != nil {
+		if os.IsNotExist(errRemove) {
 			c.JSON(404, gin.H{"error": "file not found"})
 		} else {
-			c.JSON(500, gin.H{"error": fmt.Sprintf("failed to remove file: %v", err)})
+			c.JSON(500, gin.H{"error": fmt.Sprintf("failed to remove file: %v", errRemove)})
 		}
 		return
 	}
-	if err := h.deleteTokenRecord(ctx, full); err != nil {
-		c.JSON(500, gin.H{"error": err.Error()})
+	if errDeleteRecord := h.deleteTokenRecord(ctx, targetPath); errDeleteRecord != nil {
+		c.JSON(500, gin.H{"error": errDeleteRecord.Error()})
 		return
 	}
-	h.disableAuth(ctx, full)
+	if targetID != "" {
+		h.disableAuth(ctx, targetID)
+	} else {
+		h.disableAuth(ctx, targetPath)
+	}
 	c.JSON(200, gin.H{"status": "ok"})
 }

+func (h *Handler) findAuthForDelete(name string) *coreauth.Auth {
+	if h == nil || h.authManager == nil {
+		return nil
+	}
+	name = strings.TrimSpace(name)
+	if name == "" {
+		return nil
+	}
+	if auth, ok := h.authManager.GetByID(name); ok {
+		return auth
+	}
+	auths := h.authManager.List()
+	for _, auth := range auths {
+		if auth == nil {
+			continue
+		}
+		if strings.TrimSpace(auth.FileName) == name {
+			return auth
+		}
+		if filepath.Base(strings.TrimSpace(authAttribute(auth, "path"))) == name {
+			return auth
+		}
+	}
+	return nil
+}
+
 func (h *Handler) authIDForPath(path string) string {
 	path = strings.TrimSpace(path)
 	if path == "" {
 		return ""
 	}
-	if h == nil || h.cfg == nil {
-		return path
+	id := path
+	if h != nil && h.cfg != nil {
+		authDir := strings.TrimSpace(h.cfg.AuthDir)
+		if authDir != "" {
+			if rel, errRel := filepath.Rel(authDir, path); errRel == nil && rel != "" {
+				id = rel
+			}
+		}
 	}
-	authDir := strings.TrimSpace(h.cfg.AuthDir)
-	if authDir == "" {
-		return path
+	// On Windows, normalize ID casing to avoid duplicate auth entries caused by case-insensitive paths.
+	if runtime.GOOS == "windows" {
+		id = strings.ToLower(id)
 	}
-	if rel, err := filepath.Rel(authDir, path); err == nil && rel != "" {
-		return rel
-	}
-	return path
+	return id
 }

 func (h *Handler) registerAuthFromFile(ctx context.Context, path string, data []byte) error {
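To make the effect of the reworked `authIDForPath` easier to see in isolation, here is a standalone restatement of the added logic. It is a sketch under one assumption: the operating system is passed in as a `goos` parameter (the handler itself reads `runtime.GOOS`), so both branches can be exercised on any machine. The paths in `main` are made up for the example.

```go
package main

import (
	"fmt"
	"path/filepath"
	"strings"
)

// authIDForPath derives an auth ID from a file path: the path relative to
// authDir when that can be computed, otherwise the path itself. On Windows
// the ID is lower-cased so that paths differing only in case map to one ID.
func authIDForPath(authDir, path, goos string) string {
	path = strings.TrimSpace(path)
	if path == "" {
		return ""
	}
	id := path
	if dir := strings.TrimSpace(authDir); dir != "" {
		if rel, err := filepath.Rel(dir, path); err == nil && rel != "" {
			id = rel
		}
	}
	if goos == "windows" {
		id = strings.ToLower(id)
	}
	return id
}

func main() {
	fmt.Println(authIDForPath("/data/auth", "/data/auth/Codex-User.json", "linux"))
	fmt.Println(authIDForPath("/data/auth", "/data/auth/Codex-User.json", "windows"))
}
```

Without the lower-casing step, `Codex-User.json` and `codex-user.json` would produce two different IDs for what a case-insensitive file system treats as the same file.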

@@ -899,10 +930,19 @@ func (h *Handler) disableAuth(ctx context.Context, id string) {

 	if h == nil || h.authManager == nil {
 		return
 	}
-	authID := h.authIDForPath(id)
-	if authID == "" {
-		authID = strings.TrimSpace(id)
+	id = strings.TrimSpace(id)
+	if id == "" {
+		return
 	}
+	if auth, ok := h.authManager.GetByID(id); ok {
+		auth.Disabled = true
+		auth.Status = coreauth.StatusDisabled
+		auth.StatusMessage = "removed via management API"
+		auth.UpdatedAt = time.Now()
+		_, _ = h.authManager.Update(ctx, auth)
+		return
+	}
+	authID := h.authIDForPath(id)
 	if authID == "" {
 		return
 	}

@@ -1272,12 +1312,12 @@ func (h *Handler) RequestGeminiCLIToken(c *gin.Context) {

 			projects, errAll := onboardAllGeminiProjects(ctx, gemClient, &ts)
 			if errAll != nil {
 				log.Errorf("Failed to complete Gemini CLI onboarding: %v", errAll)
-				SetOAuthSessionError(state, "Failed to complete Gemini CLI onboarding")
+				SetOAuthSessionError(state, fmt.Sprintf("Failed to complete Gemini CLI onboarding: %v", errAll))
 				return
 			}
 			if errVerify := ensureGeminiProjectsEnabled(ctx, gemClient, projects); errVerify != nil {
 				log.Errorf("Failed to verify Cloud AI API status: %v", errVerify)
-				SetOAuthSessionError(state, "Failed to verify Cloud AI API status")
+				SetOAuthSessionError(state, fmt.Sprintf("Failed to verify Cloud AI API status: %v", errVerify))
 				return
 			}
 			ts.ProjectID = strings.Join(projects, ",")

@@ -1286,7 +1326,7 @@ func (h *Handler) RequestGeminiCLIToken(c *gin.Context) {

 			ts.Auto = false
 			if errSetup := performGeminiCLISetup(ctx, gemClient, &ts, ""); errSetup != nil {
 				log.Errorf("Google One auto-discovery failed: %v", errSetup)
-				SetOAuthSessionError(state, "Google One auto-discovery failed")
+				SetOAuthSessionError(state, fmt.Sprintf("Google One auto-discovery failed: %v", errSetup))
 				return
 			}
 			if strings.TrimSpace(ts.ProjectID) == "" {

@@ -1297,19 +1337,19 @@ func (h *Handler) RequestGeminiCLIToken(c *gin.Context) {

 			isChecked, errCheck := checkCloudAPIIsEnabled(ctx, gemClient, ts.ProjectID)
 			if errCheck != nil {
 				log.Errorf("Failed to verify Cloud AI API status: %v", errCheck)
-				SetOAuthSessionError(state, "Failed to verify Cloud AI API status")
+				SetOAuthSessionError(state, fmt.Sprintf("Failed to verify Cloud AI API status: %v", errCheck))
 				return
 			}
 			ts.Checked = isChecked
 			if !isChecked {
 				log.Error("Cloud AI API is not enabled for the auto-discovered project")
-				SetOAuthSessionError(state, "Cloud AI API not enabled")
+				SetOAuthSessionError(state, fmt.Sprintf("Cloud AI API not enabled for project %s", ts.ProjectID))
 				return
 			}
 		} else {
 			if errEnsure := ensureGeminiProjectAndOnboard(ctx, gemClient, &ts, requestedProjectID); errEnsure != nil {
 				log.Errorf("Failed to complete Gemini CLI onboarding: %v", errEnsure)
-				SetOAuthSessionError(state, "Failed to complete Gemini CLI onboarding")
+				SetOAuthSessionError(state, fmt.Sprintf("Failed to complete Gemini CLI onboarding: %v", errEnsure))
 				return
 			}


@@ -1322,13 +1362,13 @@ func (h *Handler) RequestGeminiCLIToken(c *gin.Context) {

 			isChecked, errCheck := checkCloudAPIIsEnabled(ctx, gemClient, ts.ProjectID)
 			if errCheck != nil {
 				log.Errorf("Failed to verify Cloud AI API status: %v", errCheck)
-				SetOAuthSessionError(state, "Failed to verify Cloud AI API status")
+				SetOAuthSessionError(state, fmt.Sprintf("Failed to verify Cloud AI API status: %v", errCheck))
 				return
 			}
 			ts.Checked = isChecked
 			if !isChecked {
 				log.Error("Cloud AI API is not enabled for the selected project")
-				SetOAuthSessionError(state, "Cloud AI API not enabled")
+				SetOAuthSessionError(state, fmt.Sprintf("Cloud AI API not enabled for project %s", ts.ProjectID))
 				return
 			}
 		}

@@ -2549,6 +2589,7 @@ func PopulateAuthContext(ctx context.Context, c *gin.Context) context.Context {

 	}
 	return coreauth.WithRequestInfo(ctx, info)
 }
+
 const kiroCallbackPort = 9876

 func (h *Handler) RequestKiroToken(c *gin.Context) {

@@ -2685,6 +2726,7 @@ func (h *Handler) RequestKiroToken(c *gin.Context) {

 		}

 		isWebUI := isWebUIRequest(c)
+		var forwarder *callbackForwarder
 		if isWebUI {
 			targetURL, errTarget := h.managementCallbackURL("/kiro/callback")
 			if errTarget != nil {

@@ -2692,7 +2734,8 @@ func (h *Handler) RequestKiroToken(c *gin.Context) {

 				c.JSON(http.StatusInternalServerError, gin.H{"error": "callback server unavailable"})
 				return
 			}
-			if _, errStart := startCallbackForwarder(kiroCallbackPort, "kiro", targetURL); errStart != nil {
+			var errStart error
+			if forwarder, errStart = startCallbackForwarder(kiroCallbackPort, "kiro", targetURL); errStart != nil {
 				log.WithError(errStart).Error("failed to start kiro callback forwarder")
 				c.JSON(http.StatusInternalServerError, gin.H{"error": "failed to start callback server"})
 				return

@@ -2701,7 +2744,7 @@ func (h *Handler) RequestKiroToken(c *gin.Context) {


 		go func() {
 			if isWebUI {
-				defer stopCallbackForwarder(kiroCallbackPort)
+				defer stopCallbackForwarderInstance(kiroCallbackPort, forwarder)
 			}

 			socialClient := kiroauth.NewSocialAuthClient(h.cfg)
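The switch from stopping "whatever forwarder is on the port" to stopping a specific forwarder instance matters when two login attempts reuse the same callback port. The body of `stopCallbackForwarderInstance` is not part of this diff, so the following is only a sketch of the general idea under that assumption; `registry`, `forwarder`, `start`, `current`, and `stopInstance` are hypothetical names.

```go
package main

import (
	"fmt"
	"sync"
)

// forwarder stands in for a running callback listener.
type forwarder struct {
	name    string
	stopped bool
}

// registry tracks at most one forwarder per port.
type registry struct {
	mu     sync.Mutex
	byPort map[int]*forwarder
}

func newRegistry() *registry {
	return &registry{byPort: make(map[int]*forwarder)}
}

// start registers a new forwarder for port, replacing any previous entry.
func (r *registry) start(port int, name string) *forwarder {
	r.mu.Lock()
	defer r.mu.Unlock()
	f := &forwarder{name: name}
	r.byPort[port] = f
	return f
}

// current returns the forwarder registered for port, or nil.
func (r *registry) current(port int) *forwarder {
	r.mu.Lock()
	defer r.mu.Unlock()
	return r.byPort[port]
}

// stopInstance stops f and unregisters it only if it is still the forwarder
// registered for port, so a stale goroutine cannot tear down a newer one.
func (r *registry) stopInstance(port int, f *forwarder) {
	if f == nil {
		return
	}
	r.mu.Lock()
	if r.byPort[port] == f {
		delete(r.byPort, port)
	}
	r.mu.Unlock()
	f.stopped = true
}

func main() {
	r := newRegistry()
	first := r.start(9876, "first login")
	second := r.start(9876, "second login") // same port, newer attempt

	// The goroutine of the first login finishes late and cleans up.
	r.stopInstance(9876, first)

	fmt.Println(r.current(9876) == second, second.stopped) // prints "true false"
}
```

A stop-by-port cleanup deferred by the first login would have removed the second login's forwarder here; stopping by instance leaves it registered and running.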

@@ -2904,7 +2947,7 @@ func (h *Handler) RequestKiloToken(c *gin.Context) {

 		Metadata: map[string]any{
 			"email": status.UserEmail,
 			"organization_id": orgID,
-			"model": defaults.Model,
+			"model": defaults.Model,
 		},
 	}


129

internal/api/handlers/management/auth_files_delete_test.go

Normal file


@@ -0,0 +1,129 @@

+package management
+
+import (
+	"context"
+	"encoding/json"
+	"net/http"
+	"net/http/httptest"
+	"net/url"
+	"os"
+	"path/filepath"
+	"testing"
+
+	"github.com/gin-gonic/gin"
+	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"
+	coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
+)
+
+func TestDeleteAuthFile_UsesAuthPathFromManager(t *testing.T) {
+	t.Setenv("MANAGEMENT_PASSWORD", "")
+	gin.SetMode(gin.TestMode)
+
+	tempDir := t.TempDir()
+	authDir := filepath.Join(tempDir, "auth")
+	externalDir := filepath.Join(tempDir, "external")
+	if errMkdirAuth := os.MkdirAll(authDir, 0o700); errMkdirAuth != nil {
+		t.Fatalf("failed to create auth dir: %v", errMkdirAuth)
+	}
+	if errMkdirExternal := os.MkdirAll(externalDir, 0o700); errMkdirExternal != nil {
+		t.Fatalf("failed to create external dir: %v", errMkdirExternal)
+	}
+
+	fileName := "codex-user@example.com-plus.json"
+	shadowPath := filepath.Join(authDir, fileName)
+	realPath := filepath.Join(externalDir, fileName)
+	if errWriteShadow := os.WriteFile(shadowPath, []byte(`{"type":"codex","email":"shadow@example.com"}`), 0o600); errWriteShadow != nil {
+		t.Fatalf("failed to write shadow file: %v", errWriteShadow)
+	}
+	if errWriteReal := os.WriteFile(realPath, []byte(`{"type":"codex","email":"real@example.com"}`), 0o600); errWriteReal != nil {
+		t.Fatalf("failed to write real file: %v", errWriteReal)
+	}
+
+	manager := coreauth.NewManager(nil, nil, nil)
+	record := &coreauth.Auth{
+		ID:          "legacy/" + fileName,
+		FileName:    fileName,
+		Provider:    "codex",
+		Status:      coreauth.StatusError,
+		Unavailable: true,
+		Attributes: map[string]string{
+			"path": realPath,
+		},
+		Metadata: map[string]any{
+			"type":  "codex",
+			"email": "real@example.com",
+		},
+	}
+	if _, errRegister := manager.Register(context.Background(), record); errRegister != nil {
+		t.Fatalf("failed to register auth record: %v", errRegister)
+	}
+
+	h := NewHandlerWithoutConfigFilePath(&config.Config{AuthDir: authDir}, manager)
+	h.tokenStore = &memoryAuthStore{}
+
+	deleteRec := httptest.NewRecorder()
+	deleteCtx, _ := gin.CreateTestContext(deleteRec)
+	deleteReq := httptest.NewRequest(http.MethodDelete, "/v0/management/auth-files?name="+url.QueryEscape(fileName), nil)
+	deleteCtx.Request = deleteReq
+	h.DeleteAuthFile(deleteCtx)
+
+	if deleteRec.Code != http.StatusOK {
+		t.Fatalf("expected delete status %d, got %d with body %s", http.StatusOK, deleteRec.Code, deleteRec.Body.String())
+	}
+	if _, errStatReal := os.Stat(realPath); !os.IsNotExist(errStatReal) {
+		t.Fatalf("expected managed auth file to be removed, stat err: %v", errStatReal)
+	}
+	if _, errStatShadow := os.Stat(shadowPath); errStatShadow != nil {
+		t.Fatalf("expected shadow auth file to remain, stat err: %v", errStatShadow)
+	}
+
+	listRec := httptest.NewRecorder()
+	listCtx, _ := gin.CreateTestContext(listRec)
+	listReq := httptest.NewRequest(http.MethodGet, "/v0/management/auth-files", nil)
+	listCtx.Request = listReq
+	h.ListAuthFiles(listCtx)
+
+	if listRec.Code != http.StatusOK {
+		t.Fatalf("expected list status %d, got %d with body %s", http.StatusOK, listRec.Code, listRec.Body.String())
+	}
+	var listPayload map[string]any
+	if errUnmarshal := json.Unmarshal(listRec.Body.Bytes(), &listPayload); errUnmarshal != nil {
+		t.Fatalf("failed to decode list payload: %v", errUnmarshal)
+	}
+	filesRaw, ok := listPayload["files"].([]any)
+	if !ok {
+		t.Fatalf("expected files array, payload: %#v", listPayload)
+	}
+	if len(filesRaw) != 0 {
+		t.Fatalf("expected removed auth to be hidden from list, got %d entries", len(filesRaw))
+	}
+}
+
+func TestDeleteAuthFile_FallbackToAuthDirPath(t *testing.T) {
+	t.Setenv("MANAGEMENT_PASSWORD", "")
+	gin.SetMode(gin.TestMode)
+
+	authDir := t.TempDir()
+	fileName := "fallback-user.json"
+	filePath := filepath.Join(authDir, fileName)
+	if errWrite := os.WriteFile(filePath, []byte(`{"type":"codex"}`), 0o600); errWrite != nil {
+		t.Fatalf("failed to write auth file: %v", errWrite)
+	}
+
+	manager := coreauth.NewManager(nil, nil, nil)
+	h := NewHandlerWithoutConfigFilePath(&config.Config{AuthDir: authDir}, manager)
+	h.tokenStore = &memoryAuthStore{}
+
+	deleteRec := httptest.NewRecorder()
+	deleteCtx, _ := gin.CreateTestContext(deleteRec)
+	deleteReq := httptest.NewRequest(http.MethodDelete, "/v0/management/auth-files?name="+url.QueryEscape(fileName), nil)
+	deleteCtx.Request = deleteReq
+	h.DeleteAuthFile(deleteCtx)
+
+	if deleteRec.Code != http.StatusOK {
+		t.Fatalf("expected delete status %d, got %d with body %s", http.StatusOK, deleteRec.Code, deleteRec.Body.String())
+	}
+	if _, errStat := os.Stat(filePath); !os.IsNotExist(errStat) {
+		t.Fatalf("expected auth file to be removed from auth dir, stat err: %v", errStat)
+	}
+}

@@ -516,12 +516,13 @@ func (h *Handler) PutVertexCompatKeys(c *gin.Context) {

 }
 func (h *Handler) PatchVertexCompatKey(c *gin.Context) {
 	type vertexCompatPatch struct {
-		APIKey   *string                     `json:"api-key"`
-		Prefix   *string                     `json:"prefix"`
-		BaseURL  *string                     `json:"base-url"`
-		ProxyURL *string                     `json:"proxy-url"`
-		Headers  *map[string]string          `json:"headers"`
-		Models   *[]config.VertexCompatModel `json:"models"`
+		APIKey         *string                     `json:"api-key"`
+		Prefix         *string                     `json:"prefix"`
+		BaseURL        *string                     `json:"base-url"`
+		ProxyURL       *string                     `json:"proxy-url"`
+		Headers        *map[string]string          `json:"headers"`
+		Models         *[]config.VertexCompatModel `json:"models"`
+		ExcludedModels *[]string                   `json:"excluded-models"`
 	}
 	var body struct {
 		Index *int `json:"index"`

@@ -585,6 +586,9 @@ func (h *Handler) PatchVertexCompatKey(c *gin.Context) {

 	if body.Value.Models != nil {
 		entry.Models = append([]config.VertexCompatModel(nil), (*body.Value.Models)...)
 	}
+	if body.Value.ExcludedModels != nil {
+		entry.ExcludedModels = config.NormalizeExcludedModels(*body.Value.ExcludedModels)
+	}
 	normalizeVertexCompatKey(&entry)
 	h.cfg.VertexCompatAPIKey[targetIndex] = entry
 	h.cfg.SanitizeVertexCompatKeys()
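The patch struct uses pointer fields so the handler can tell "field not sent" (nil pointer, leave the entry alone) from "field sent, possibly empty" (non-nil pointer, overwrite). The sketch below shows that pattern on a cut-down entry. `entry`, `patch`, `applyPatch`, and `normalize` are hypothetical; in particular `normalize` (trim, drop empties and duplicates) is only a guess at what a helper like `config.NormalizeExcludedModels` might do.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// entry is a cut-down stand-in for a stored provider entry.
type entry struct {
	BaseURL        string
	ExcludedModels []string
}

// patch mirrors entry with pointer fields: nil means "not present in the
// request body", non-nil means "replace the stored value".
type patch struct {
	BaseURL        *string   `json:"base-url"`
	ExcludedModels *[]string `json:"excluded-models"`
}

// normalize trims entries and drops empty strings and duplicates.
func normalize(models []string) []string {
	seen := make(map[string]struct{}, len(models))
	out := make([]string, 0, len(models))
	for _, m := range models {
		m = strings.TrimSpace(m)
		if m == "" {
			continue
		}
		if _, dup := seen[m]; dup {
			continue
		}
		seen[m] = struct{}{}
		out = append(out, m)
	}
	return out
}

// applyPatch decodes raw and overwrites only the fields it contains.
func applyPatch(e entry, raw []byte) (entry, error) {
	var p patch
	if err := json.Unmarshal(raw, &p); err != nil {
		return e, err
	}
	if p.BaseURL != nil {
		e.BaseURL = strings.TrimSpace(*p.BaseURL)
	}
	if p.ExcludedModels != nil {
		e.ExcludedModels = normalize(*p.ExcludedModels)
	}
	return e, nil
}

func main() {
	e := entry{BaseURL: "https://example.com", ExcludedModels: []string{"imagen-*"}}

	// Only base-url is sent: the exclusion list is left untouched.
	e, _ = applyPatch(e, []byte(`{"base-url": " https://vertex.example.com "}`))
	fmt.Println(e.BaseURL, e.ExcludedModels)

	// An explicit empty array clears the list.
	e, _ = applyPatch(e, []byte(`{"excluded-models": []}`))
	fmt.Println(len(e.ExcludedModels))
}
```

With plain (non-pointer) fields, the first request would have been indistinguishable from one that asks to clear the exclusion list.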

@@ -1029,6 +1033,7 @@ func normalizeVertexCompatKey(entry *config.VertexCompatKey) {

 	entry.BaseURL = strings.TrimSpace(entry.BaseURL)
 	entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)
 	entry.Headers = config.NormalizeHeaders(entry.Headers)
+	entry.ExcludedModels = config.NormalizeExcludedModels(entry.ExcludedModels)
 	if len(entry.Models) == 0 {
 		return
 	}

@@ -8,6 +8,8 @@ import (

 	"fmt"
 	"io"
 	"net/http"
+	"net/url"
+	"strings"
 	"time"

 	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"

@@ -222,6 +224,97 @@ func (c *CopilotAuth) MakeAuthenticatedRequest(ctx context.Context, method, url

 	return req, nil
 }

+// CopilotModelEntry represents a single model entry returned by the Copilot /models API.
+type CopilotModelEntry struct {
+	ID           string         `json:"id"`
+	Object       string         `json:"object"`
+	Created      int64          `json:"created"`
+	OwnedBy      string         `json:"owned_by"`
+	Name         string         `json:"name,omitempty"`
+	Version      string         `json:"version,omitempty"`
+	Capabilities map[string]any `json:"capabilities,omitempty"`
+}
+
+// CopilotModelsResponse represents the response from the Copilot /models endpoint.
+type CopilotModelsResponse struct {
+	Data   []CopilotModelEntry `json:"data"`
+	Object string              `json:"object"`
+}
+
+// maxModelsResponseSize is the maximum allowed response size from the /models endpoint (2 MB).
+const maxModelsResponseSize = 2 * 1024 * 1024
+
+// allowedCopilotAPIHosts is the set of hosts that are considered safe for Copilot API requests.
+var allowedCopilotAPIHosts = map[string]bool{
+	"api.githubcopilot.com":               true,
+	"api.individual.githubcopilot.com":    true,
+	"api.business.githubcopilot.com":      true,
+	"copilot-proxy.githubusercontent.com": true,
+}
+
+// ListModels fetches the list of available models from the Copilot API.
+// It requires a valid Copilot API token (not the GitHub access token).
+func (c *CopilotAuth) ListModels(ctx context.Context, apiToken *CopilotAPIToken) ([]CopilotModelEntry, error) {
+	if apiToken == nil || apiToken.Token == "" {
+		return nil, fmt.Errorf("copilot: api token is required for listing models")
+	}
+
+	// Build models URL, validating the endpoint host to prevent SSRF.
+	modelsURL := copilotAPIEndpoint + "/models"
+	if ep := strings.TrimRight(apiToken.Endpoints.API, "/"); ep != "" {
+		parsed, err := url.Parse(ep)
+		if err == nil && parsed.Scheme == "https" && allowedCopilotAPIHosts[parsed.Host] {
+			modelsURL = ep + "/models"
+		} else {
+			log.Warnf("copilot: ignoring untrusted API endpoint %q, using default", ep)
+		}
+	}
+
+	req, err := c.MakeAuthenticatedRequest(ctx, http.MethodGet, modelsURL, nil, apiToken)
+	if err != nil {
+		return nil, fmt.Errorf("copilot: failed to create models request: %w", err)
+	}

|

||||

resp, err := c.httpClient.Do(req)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("copilot: models request failed: %w", err)

|

||||

}

|

||||

defer func() {

|

||||

if errClose := resp.Body.Close(); errClose != nil {

|

||||

log.Errorf("copilot list models: close body error: %v", errClose)

|

||||

}

|

||||

}()

|

||||

|

||||

// Limit response body to prevent memory exhaustion.

|

||||

limitedReader := io.LimitReader(resp.Body, maxModelsResponseSize)

|

||||

bodyBytes, err := io.ReadAll(limitedReader)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("copilot: failed to read models response: %w", err)

|

||||

}

|

||||

|

||||

if !isHTTPSuccess(resp.StatusCode) {

|

||||

return nil, fmt.Errorf("copilot: list models failed with status %d: %s", resp.StatusCode, string(bodyBytes))

|

||||

}

|

||||

|

||||

var modelsResp CopilotModelsResponse

|

||||

if err = json.Unmarshal(bodyBytes, &modelsResp); err != nil {

|

||||

return nil, fmt.Errorf("copilot: failed to parse models response: %w", err)

|

||||

}

|

||||

|

||||

return modelsResp.Data, nil

|

||||

}

|

||||
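Reviewer note: the endpoint check in `ListModels` can be exercised in isolation. The sketch below reproduces the same scheme-plus-allowlist test in a hypothetical helper, `resolveModelsURL`. The value of `defaultEndpoint` is an assumption, since `copilotAPIEndpoint` is not visible in this hunk.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// allowedHosts mirrors the allowlist added in this change.
var allowedHosts = map[string]bool{
	"api.githubcopilot.com":               true,
	"api.individual.githubcopilot.com":    true,
	"api.business.githubcopilot.com":      true,
	"copilot-proxy.githubusercontent.com": true,
}

// defaultEndpoint is an assumed value; the real constant is not shown in the diff.
const defaultEndpoint = "https://api.githubcopilot.com"

// resolveModelsURL is a hypothetical helper: it accepts the token-supplied
// endpoint only when it is HTTPS and its host is on the allowlist, and
// otherwise falls back to the default endpoint.
func resolveModelsURL(endpoint string) string {
	ep := strings.TrimRight(endpoint, "/")
	if ep == "" {
		return defaultEndpoint + "/models"
	}
	parsed, err := url.Parse(ep)
	if err == nil && parsed.Scheme == "https" && allowedHosts[parsed.Host] {
		return ep + "/models"
	}
	return defaultEndpoint + "/models"
}

func main() {
	for _, ep := range []string{
		"https://api.business.githubcopilot.com/",
		"http://api.githubcopilot.com",
		"https://api.githubcopilot.com@evil.example.com",
		"https://api.githubcopilot.com:8443",
	} {
		fmt.Printf("%s -> %s\n", ep, resolveModelsURL(ep))
	}
}
```

Two details are worth keeping in mind when reading the check: `url.URL.Host` includes any explicit port, so an otherwise trusted host with a port is rejected, and a userinfo prefix such as `api.githubcopilot.com@evil.example.com` parses with `evil.example.com` as the host and is rejected as well.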

|

||||

// ListModelsWithGitHubToken is a convenience method that exchanges a GitHub access token

|

||||

// for a Copilot API token and then fetches the available models.

|

||||

func (c *CopilotAuth) ListModelsWithGitHubToken(ctx context.Context, githubAccessToken string) ([]CopilotModelEntry, error) {

|

||||

apiToken, err := c.GetCopilotAPIToken(ctx, githubAccessToken)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("copilot: failed to get API token for model listing: %w", err)

|

||||

}

|

||||

|

||||

return c.ListModels(ctx, apiToken)

|

||||

}

|

||||

|

||||

// buildChatCompletionURL builds the URL for chat completions API.

|

||||

func buildChatCompletionURL() string {

|

||||

return copilotAPIEndpoint + "/chat/completions"

|

||||

|

||||

@@ -1,5 +1,7 @@

|

||||

package config

|

||||

|

||||

import "strings"

|

||||

|

||||

// defaultKiroAliases returns default oauth-model-alias entries for Kiro.

|

||||

// These aliases expose standard Claude IDs for Kiro-prefixed upstream models.

|

||||

func defaultKiroAliases() []OAuthModelAlias {

|

||||

@@ -35,3 +37,25 @@ func defaultGitHubCopilotAliases() []OAuthModelAlias {

|

||||

{Name: "claude-sonnet-4.6", Alias: "claude-sonnet-4-6", Fork: true},

|

||||

}

|

||||

}

|

||||

|

||||

// GitHubCopilotAliasesFromModels generates oauth-model-alias entries from a dynamic

|

||||

// list of model IDs fetched from the Copilot API. It auto-creates aliases for

|

||||

// models whose ID contains a dot (e.g. "claude-opus-4.6" → "claude-opus-4-6"),

|

||||

// which is the pattern used by Claude models on Copilot.

|

||||

func GitHubCopilotAliasesFromModels(modelIDs []string) []OAuthModelAlias {

|

||||

var aliases []OAuthModelAlias

|

||||

seen := make(map[string]struct{})

|

||||

for _, id := range modelIDs {

|

||||

if !strings.Contains(id, ".") {

|

||||

continue

|

||||

}

|

||||

hyphenID := strings.ReplaceAll(id, ".", "-")

|

||||

key := id + "→" + hyphenID

|

||||

if _, ok := seen[key]; ok {

|

||||

continue

|

||||

}

|

||||

seen[key] = struct{}{}

|

||||

aliases = append(aliases, OAuthModelAlias{Name: id, Alias: hyphenID, Fork: true})

|

||||

}

|

||||

return aliases

|

||||

}

|

||||

|

||||

@@ -34,6 +34,9 @@ type VertexCompatKey struct {

|

||||

|

||||

// Models defines the model configurations including aliases for routing.

|

||||

Models []VertexCompatModel `yaml:"models,omitempty" json:"models,omitempty"`

|

||||

|

||||

// ExcludedModels lists model IDs that should be excluded for this provider.

|

||||

ExcludedModels []string `yaml:"excluded-models,omitempty" json:"excluded-models,omitempty"`

|

||||

}

|

||||

|

||||

func (k VertexCompatKey) GetAPIKey() string { return k.APIKey }

|

||||

@@ -74,6 +77,7 @@ func (cfg *Config) SanitizeVertexCompatKeys() {

|

||||

}

|

||||

entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)

|

||||

entry.Headers = NormalizeHeaders(entry.Headers)

|

||||

entry.ExcludedModels = NormalizeExcludedModels(entry.ExcludedModels)

|

||||

|

||||

// Sanitize models: remove entries without valid alias

|

||||

sanitizedModels := make([]VertexCompatModel, 0, len(entry.Models))

|

||||

|

||||

@@ -23,7 +23,6 @@ import (

|

||||

// - kiro

|

||||

// - kilo

|

||||

// - github-copilot

|

||||

// - kiro

|

||||

// - amazonq

|

||||

// - antigravity (returns static overrides only)

|

||||

func GetStaticModelDefinitionsByChannel(channel string) []*ModelInfo {

|

||||

@@ -152,6 +151,7 @@ func GetGitHubCopilotModels() []*ModelInfo {

|

||||

Description: "OpenAI GPT-4.1 via GitHub Copilot",

|

||||

ContextLength: 128000,

|

||||

MaxCompletionTokens: 16384,

|

||||

SupportedEndpoints: []string{"/chat/completions", "/responses"},

|

||||

},

|

||||

}

|

||||

|

||||

@@ -166,6 +166,7 @@ func GetGitHubCopilotModels() []*ModelInfo {

|

||||

Description: entry.Description,

|

||||

ContextLength: 128000,

|

||||

MaxCompletionTokens: 16384,

|

||||

SupportedEndpoints: []string{"/chat/completions", "/responses"},

|

||||

})

|

||||

}

|

||||

|

||||

|

||||

@@ -37,7 +37,7 @@ func GetClaudeModels() []*ModelInfo {

|

||||

DisplayName: "Claude 4.6 Sonnet",

|

||||

ContextLength: 200000,

|

||||

MaxCompletionTokens: 64000,

|

||||

Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false},

|

||||

Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false, Levels: []string{"low", "medium", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "claude-opus-4-6",

|

||||

@@ -49,7 +49,7 @@ func GetClaudeModels() []*ModelInfo {

|

||||

Description: "Premium model combining maximum intelligence with practical performance",

|

||||

ContextLength: 1000000,

|

||||

MaxCompletionTokens: 128000,

|

||||

Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false},

|

||||

Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: false, Levels: []string{"low", "medium", "high", "max"}},

|

||||

},

|

||||

{

|

||||

ID: "claude-sonnet-4-6",

|

||||

@@ -211,6 +211,21 @@ func GetGeminiModels() []*ModelInfo {

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3.1-flash-image-preview",

|

||||

Object: "model",

|

||||

Created: 1771459200,

|

||||

OwnedBy: "google",

|

||||

Type: "gemini",

|

||||

Name: "models/gemini-3.1-flash-image-preview",

|

||||

Version: "3.1",

|

||||

DisplayName: "Gemini 3.1 Flash Image Preview",

|

||||

Description: "Gemini 3.1 Flash Image Preview",

|

||||

InputTokenLimit: 1048576,

|

||||

OutputTokenLimit: 65536,

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3-flash-preview",

|

||||

Object: "model",

|

||||

@@ -220,12 +235,27 @@ func GetGeminiModels() []*ModelInfo {

|

||||

Name: "models/gemini-3-flash-preview",

|

||||

Version: "3.0",

|

||||

DisplayName: "Gemini 3 Flash Preview",

|

||||

Description: "Gemini 3 Flash Preview",

|

||||

Description: "Our most intelligent model built for speed, combining frontier intelligence with superior search and grounding.",

|

||||

InputTokenLimit: 1048576,

|

||||

OutputTokenLimit: 65536,

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3.1-flash-lite-preview",

|

||||

Object: "model",

|

||||

Created: 1776288000,

|

||||

OwnedBy: "google",

|

||||

Type: "gemini",

|

||||

Name: "models/gemini-3.1-flash-lite-preview",

|

||||

Version: "3.1",

|

||||

DisplayName: "Gemini 3.1 Flash Lite Preview",

|

||||

Description: "Our smallest and most cost effective model, built for at scale usage.",

|

||||

InputTokenLimit: 1048576,

|

||||

OutputTokenLimit: 65536,

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3-pro-image-preview",

|

||||

Object: "model",

|

||||

@@ -336,6 +366,32 @@ func GetGeminiVertexModels() []*ModelInfo {

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3.1-flash-image-preview",

|

||||

Object: "model",

|

||||

Created: 1771459200,

|

||||

OwnedBy: "google",

|

||||

Type: "gemini",

|

||||

Name: "models/gemini-3.1-flash-image-preview",

|

||||

Version: "3.1",

|

||||

DisplayName: "Gemini 3.1 Flash Image Preview",

|

||||

Description: "Gemini 3.1 Flash Image Preview",

|

||||

},

|

||||

{

|

||||

ID: "gemini-3.1-flash-lite-preview",

|

||||

Object: "model",

|

||||

Created: 1776288000,

|

||||

OwnedBy: "google",

|

||||

Type: "gemini",

|

||||

Name: "models/gemini-3.1-flash-lite-preview",

|

||||

Version: "3.1",

|

||||

DisplayName: "Gemini 3.1 Flash Lite Preview",

|

||||

Description: "Our smallest and most cost effective model, built for at scale usage.",

|

||||

InputTokenLimit: 1048576,

|

||||

OutputTokenLimit: 65536,

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3-pro-image-preview",

|

||||

Object: "model",

|

||||

@@ -508,6 +564,21 @@ func GetGeminiCLIModels() []*ModelInfo {

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3.1-flash-lite-preview",

|

||||

Object: "model",

|

||||

Created: 1776288000,

|

||||

OwnedBy: "google",

|

||||

Type: "gemini",

|

||||

Name: "models/gemini-3.1-flash-lite-preview",

|

||||

Version: "3.1",

|

||||

DisplayName: "Gemini 3.1 Flash Lite Preview",

|

||||

Description: "Our smallest and most cost effective model, built for at scale usage.",

|

||||

InputTokenLimit: 1048576,

|

||||

OutputTokenLimit: 65536,

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},

|

||||

},

|

||||

}

|

||||

}

|

||||

|

||||

@@ -604,6 +675,21 @@ func GetAIStudioModels() []*ModelInfo {

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true},

|

||||

},

|

||||

{

|

||||

ID: "gemini-3.1-flash-lite-preview",

|

||||

Object: "model",

|

||||

Created: 1776288000,

|

||||

OwnedBy: "google",

|

||||

Type: "gemini",

|

||||

Name: "models/gemini-3.1-flash-lite-preview",

|

||||

Version: "3.1",

|

||||

DisplayName: "Gemini 3.1 Flash Lite Preview",

|

||||

Description: "Our smallest and most cost effective model, built for at scale usage.",

|

||||

InputTokenLimit: 1048576,

|

||||

OutputTokenLimit: 65536,

|

||||

SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},

|

||||

Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},

|

||||

},

|

||||

{

|

||||

ID: "gemini-pro-latest",

|

||||

Object: "model",

|

||||

@@ -839,6 +925,20 @@ func GetOpenAIModels() []*ModelInfo {

|

||||

SupportedParameters: []string{"tools"},

|

||||

Thinking: &ThinkingSupport{Levels: []string{"low", "medium", "high", "xhigh"}},

|

||||

},

|

||||

{

|

||||

ID: "gpt-5.4",

|

||||

Object: "model",

|

||||

Created: 1772668800,

|

||||

OwnedBy: "openai",

|

||||

Type: "openai",

|

||||

Version: "gpt-5.4",

|

||||

DisplayName: "GPT 5.4",

|

||||

Description: "Stable version of GPT 5.4",

|

||||

ContextLength: 1_050_000,

|

||||

MaxCompletionTokens: 128000,

|

||||

SupportedParameters: []string{"tools"},

|

||||

Thinking: &ThinkingSupport{Levels: []string{"low", "medium", "high", "xhigh"}},

|

||||

},

|

||||

}

|

||||

}

|

||||

|

||||

@@ -966,6 +1066,7 @@ func GetAntigravityModelConfig() map[string]*AntigravityModelConfig {

|

||||

"gemini-3.1-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3.1-pro-low": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3.1-flash-image": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},

|

||||

"gemini-3.1-flash-lite-preview": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},

|

||||

"gemini-3-flash": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}}},

|

||||

"claude-opus-4-6-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

"claude-sonnet-4-6": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

|

||||

@@ -64,6 +64,11 @@ type ModelInfo struct {

|

||||

UserDefined bool `json:"-"`

|

||||

}

|

||||

|

||||

type availableModelsCacheEntry struct {

|

||||

models []map[string]any

|

||||

expiresAt time.Time

|

||||

}

|

||||

|

||||

// ThinkingSupport describes a model family's supported internal reasoning budget range.

|

||||

// Values are interpreted in provider-native token units.

|

||||

type ThinkingSupport struct {

|

||||

@@ -118,6 +123,8 @@ type ModelRegistry struct {

|

||||

clientProviders map[string]string

|

||||

// mutex ensures thread-safe access to the registry

|

||||

mutex *sync.RWMutex

|

||||

// availableModelsCache stores per-handler snapshots for GetAvailableModels.

|

||||

availableModelsCache map[string]availableModelsCacheEntry

|

||||

// hook is an optional callback sink for model registration changes

|

||||

hook ModelRegistryHook

|

||||

}

|

||||

@@ -130,15 +137,28 @@ var registryOnce sync.Once

|

||||

func GetGlobalRegistry() *ModelRegistry {

|

||||

registryOnce.Do(func() {

|

||||

globalRegistry = &ModelRegistry{

|

||||

models: make(map[string]*ModelRegistration),

|

||||

clientModels: make(map[string][]string),

|

||||

clientModelInfos: make(map[string]map[string]*ModelInfo),

|

||||

clientProviders: make(map[string]string),

|

||||

mutex: &sync.RWMutex{},

|

||||

models: make(map[string]*ModelRegistration),

|

||||

clientModels: make(map[string][]string),

|

||||

clientModelInfos: make(map[string]map[string]*ModelInfo),

|

||||

clientProviders: make(map[string]string),

|

||||

availableModelsCache: make(map[string]availableModelsCacheEntry),

|

||||

mutex: &sync.RWMutex{},

|

||||

}

|

||||

})

|

||||

return globalRegistry

|

||||

}

|

||||

func (r *ModelRegistry) ensureAvailableModelsCacheLocked() {

|

||||

if r.availableModelsCache == nil {

|

||||

r.availableModelsCache = make(map[string]availableModelsCacheEntry)

|

||||

}

|

||||

}

|

||||

|

||||

func (r *ModelRegistry) invalidateAvailableModelsCacheLocked() {

|

||||

if len(r.availableModelsCache) == 0 {

|

||||

return

|

||||

}

|

||||

clear(r.availableModelsCache)

|

||||

}

|

||||

|

||||

// LookupModelInfo searches dynamic registry (provider-specific > global) then static definitions.

|

||||

func LookupModelInfo(modelID string, provider ...string) *ModelInfo {

|

||||

@@ -153,9 +173,9 @@ func LookupModelInfo(modelID string, provider ...string) *ModelInfo {

|

||||

}

|

||||

|

||||

if info := GetGlobalRegistry().GetModelInfo(modelID, p); info != nil {

|

||||

return info

|

||||

return cloneModelInfo(info)

|

||||

}

|

||||

return LookupStaticModelInfo(modelID)

|

||||

return cloneModelInfo(LookupStaticModelInfo(modelID))

|

||||

}

|

||||

|

||||

// SetHook sets an optional hook for observing model registration changes.

|

||||

@@ -213,6 +233,7 @@ func (r *ModelRegistry) triggerModelsUnregistered(provider, clientID string) {

|

||||

func (r *ModelRegistry) RegisterClient(clientID, clientProvider string, models []*ModelInfo) {

|

||||

r.mutex.Lock()

|

||||

defer r.mutex.Unlock()

|

||||

r.ensureAvailableModelsCacheLocked()

|

||||

|

||||

provider := strings.ToLower(clientProvider)

|

||||

uniqueModelIDs := make([]string, 0, len(models))

|

||||

@@ -238,6 +259,7 @@ func (r *ModelRegistry) RegisterClient(clientID, clientProvider string, models [

|

||||

delete(r.clientModels, clientID)

|

||||

delete(r.clientModelInfos, clientID)

|

||||

delete(r.clientProviders, clientID)

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

misc.LogCredentialSeparator()

|

||||

return

|

||||

}

|

||||

@@ -265,6 +287,7 @@ func (r *ModelRegistry) RegisterClient(clientID, clientProvider string, models [

|

||||

} else {

|

||||

delete(r.clientProviders, clientID)

|

||||

}

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

r.triggerModelsRegistered(provider, clientID, models)

|

||||

log.Debugf("Registered client %s from provider %s with %d models", clientID, clientProvider, len(rawModelIDs))

|

||||

misc.LogCredentialSeparator()

|

||||

@@ -408,6 +431,7 @@ func (r *ModelRegistry) RegisterClient(clientID, clientProvider string, models [

|

||||

delete(r.clientProviders, clientID)

|

||||

}

|

||||

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

r.triggerModelsRegistered(provider, clientID, models)

|

||||

if len(added) == 0 && len(removed) == 0 && !providerChanged {

|

||||

// Only metadata (e.g., display name) changed; skip separator when no log output.

|

||||

@@ -511,6 +535,13 @@ func cloneModelInfo(model *ModelInfo) *ModelInfo {

|

||||

if len(model.SupportedOutputModalities) > 0 {

|

||||

copyModel.SupportedOutputModalities = append([]string(nil), model.SupportedOutputModalities...)

|

||||

}

|

||||

if model.Thinking != nil {

|

||||

copyThinking := *model.Thinking

|

||||

if len(model.Thinking.Levels) > 0 {

|

||||

copyThinking.Levels = append([]string(nil), model.Thinking.Levels...)

|

||||

}

|

||||

copyModel.Thinking = &copyThinking

|

||||

}

|

||||

return &copyModel

|

||||

}

|

||||
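Reviewer note: the reason `cloneModelInfo` copies `Thinking` and its `Levels` slice separately is that a plain struct copy would still share the pointer and the slice's backing array with the registry's entry. The sketch below shows the same pattern on reduced, hypothetical types (`modelInfo`, `thinkingSupport`, `cloneModel`); the nil guard is an assumption about the real function, inferred from `LookupModelInfo` passing it a possibly nil static lookup result.

```go
package main

import "fmt"

// thinkingSupport is a reduced, hypothetical version of ThinkingSupport.
type thinkingSupport struct {
	Min, Max int
	Levels   []string
}

// modelInfo is a reduced, hypothetical version of ModelInfo.
type modelInfo struct {
	ID       string
	Thinking *thinkingSupport
}

// cloneModel returns a copy that shares no mutable state with the original.
func cloneModel(m *modelInfo) *modelInfo {
	if m == nil {
		return nil
	}
	c := *m // copies scalar fields; pointer and slice fields still alias
	if m.Thinking != nil {
		t := *m.Thinking
		if len(m.Thinking.Levels) > 0 {
			// Fresh backing array so edits to the copy stay local.
			t.Levels = append([]string(nil), m.Thinking.Levels...)
		}
		c.Thinking = &t
	}
	return &c
}

func main() {
	orig := &modelInfo{
		ID:       "claude-opus-4-6",
		Thinking: &thinkingSupport{Min: 1024, Max: 128000, Levels: []string{"low", "medium", "high", "max"}},
	}
	cp := cloneModel(orig)
	cp.Thinking.Max = 1
	cp.Thinking.Levels[0] = "changed"

	// The original is untouched by mutations of the clone.
	fmt.Println(orig.Thinking.Max, orig.Thinking.Levels[0]) // prints "128000 low"
}
```

Returning clones from `LookupModelInfo` costs an allocation per lookup, but it means callers can adjust the returned `ModelInfo` without racing against, or silently modifying, the shared registry and static definitions.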

|

||||

@@ -540,6 +571,7 @@ func (r *ModelRegistry) UnregisterClient(clientID string) {

|

||||

r.mutex.Lock()

|

||||

defer r.mutex.Unlock()

|

||||

r.unregisterClientInternal(clientID)

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

}

|

||||

|

||||

// unregisterClientInternal performs the actual client unregistration (internal, no locking)

|

||||

@@ -606,9 +638,12 @@ func (r *ModelRegistry) unregisterClientInternal(clientID string) {

|

||||

func (r *ModelRegistry) SetModelQuotaExceeded(clientID, modelID string) {

|

||||

r.mutex.Lock()

|

||||

defer r.mutex.Unlock()

|

||||

r.ensureAvailableModelsCacheLocked()

|

||||

|

||||

if registration, exists := r.models[modelID]; exists {

|

||||

registration.QuotaExceededClients[clientID] = new(time.Now())

|

||||

now := time.Now()

|

||||

registration.QuotaExceededClients[clientID] = &now

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

log.Debugf("Marked model %s as quota exceeded for client %s", modelID, clientID)

|

||||

}

|

||||

}

|

||||

@@ -620,9 +655,11 @@ func (r *ModelRegistry) SetModelQuotaExceeded(clientID, modelID string) {

|

||||

func (r *ModelRegistry) ClearModelQuotaExceeded(clientID, modelID string) {

|

||||

r.mutex.Lock()

|

||||

defer r.mutex.Unlock()

|

||||

r.ensureAvailableModelsCacheLocked()

|

||||

|

||||

if registration, exists := r.models[modelID]; exists {

|

||||

delete(registration.QuotaExceededClients, clientID)

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

// log.Debugf("Cleared quota exceeded status for model %s and client %s", modelID, clientID)

|

||||

}

|

||||

}

|

||||

@@ -638,6 +675,7 @@ func (r *ModelRegistry) SuspendClientModel(clientID, modelID, reason string) {

|

||||

}

|

||||

r.mutex.Lock()

|

||||

defer r.mutex.Unlock()

|

||||

r.ensureAvailableModelsCacheLocked()

|

||||

|

||||

registration, exists := r.models[modelID]

|

||||

if !exists || registration == nil {

|

||||

@@ -651,6 +689,7 @@ func (r *ModelRegistry) SuspendClientModel(clientID, modelID, reason string) {

|

||||

}

|

||||

registration.SuspendedClients[clientID] = reason

|

||||

registration.LastUpdated = time.Now()

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

if reason != "" {

|

||||

log.Debugf("Suspended client %s for model %s: %s", clientID, modelID, reason)

|

||||

} else {

|

||||

@@ -668,6 +707,7 @@ func (r *ModelRegistry) ResumeClientModel(clientID, modelID string) {

|

||||

}

|

||||

r.mutex.Lock()

|

||||

defer r.mutex.Unlock()

|

||||

r.ensureAvailableModelsCacheLocked()

|

||||

|

||||

registration, exists := r.models[modelID]

|

||||

if !exists || registration == nil || registration.SuspendedClients == nil {

|

||||

@@ -678,6 +718,7 @@ func (r *ModelRegistry) ResumeClientModel(clientID, modelID string) {

|

||||

}

|

||||

delete(registration.SuspendedClients, clientID)

|

||||

registration.LastUpdated = time.Now()

|

||||

r.invalidateAvailableModelsCacheLocked()

|

||||

log.Debugf("Resumed client %s for model %s", clientID, modelID)

|