Mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git, synced 2026-04-04 19:51:18 +00:00

Compare commits

45 Commits

| SHA1 |
|---|
| 7223fee2de |
| ada8e2905e |
| 4ba10531da |
| 3774b56e9f |
| c2d4137fb9 |
| 2ee938acaf |
| 8d5e470e1f |
| 3882494878 |
| 088c1d07f4 |
| 8430b28cfa |
| f3ab8f4bc5 |
| 0e4f189c2e |
| 98509f615c |
| e7a66ae504 |
| 754b126944 |
| ae37ccffbf |
| 42c062bb5b |
| 87bf0b73d5 |
| f389667ec3 |
| 29dba0399b |
| a824e7cd0b |
| 140faef7dc |
| adb580b344 |
| 06405f2129 |
| b849bf79d6 |
| 59af2c57b1 |
| d1fd2c4ad4 |
| b6c6379bfa |
| 8f0e66b72e |
| f63cf6ff7a |
| d2419ed49d |
| 516d22c695 |
| 73cda6e836 |
| 0805989ee5 |
| 75da02af55 |
| ab9ebea592 |
| 7ee37ee4b9 |
| 837afffb31 |
| f5e9f01811 |
| ff7dbb5867 |
| e34b2b4f1d |
| 36efcc6e28 |
| a337ecf35c |
| 14cb2b95c6 |
| fdeef48498 |

.gitignore (vendored, 6 changes)
@@ -54,4 +54,10 @@ _bmad-output/*
 # macOS
 .DS_Store
 ._*
+
+# Opencode
+.beads/
+.opencode/
+.cli-proxy-api/
+.venv/
 *.bak

README.md (93 changes)
@@ -14,99 +14,6 @@ This project only accepts pull requests that relate to third-party provider supp
 
 If you need to submit any non-third-party provider changes, please open them against the [mainline](https://github.com/router-for-me/CLIProxyAPI) repository.
 
-1. Fork the repository
-2. Create your feature branch (`git checkout -b feature/amazing-feature`)
-3. Commit your changes (`git commit -m 'Add some amazing feature'`)
-4. Push to the branch (`git push origin feature/amazing-feature`)
-5. Open a Pull Request
-
-## Who is with us?
-
-Those projects are based on CLIProxyAPI:
-
-### [vibeproxy](https://github.com/automazeio/vibeproxy)
-
-Native macOS menu bar app to use your Claude Code & ChatGPT subscriptions with AI coding tools - no API keys needed
-
-### [Subtitle Translator](https://github.com/VjayC/SRT-Subtitle-Translator-Validator)
-
-Browser-based tool to translate SRT subtitles using your Gemini subscription via CLIProxyAPI with automatic validation/error correction - no API keys needed
-
-### [CCS (Claude Code Switch)](https://github.com/kaitranntt/ccs)
-
-CLI wrapper for instant switching between multiple Claude accounts and alternative models (Gemini, Codex, Antigravity) via CLIProxyAPI OAuth - no API keys needed
-
-### [Quotio](https://github.com/nguyenphutrong/quotio)
-
-Native macOS menu bar app that unifies Claude, Gemini, OpenAI, Qwen, and Antigravity subscriptions with real-time quota tracking and smart auto-failover for AI coding tools like Claude Code, OpenCode, and Droid - no API keys needed.
-
-### [CodMate](https://github.com/loocor/CodMate)
-
-Native macOS SwiftUI app for managing CLI AI sessions (Codex, Claude Code, Gemini CLI) with unified provider management, Git review, project organization, global search, and terminal integration. Integrates CLIProxyAPI to provide OAuth authentication for Codex, Claude, Gemini, Antigravity, and Qwen Code, with built-in and third-party provider rerouting through a single proxy endpoint - no API keys needed for OAuth providers.
-
-### [ProxyPilot](https://github.com/Finesssee/ProxyPilot)
-
-Windows-native CLIProxyAPI fork with TUI, system tray, and multi-provider OAuth for AI coding tools - no API keys needed.
-
-### [Claude Proxy VSCode](https://github.com/uzhao/claude-proxy-vscode)
-
-VSCode extension for quick switching between Claude Code models, featuring integrated CLIProxyAPI as its backend with automatic background lifecycle management.
-
-### [ZeroLimit](https://github.com/0xtbug/zero-limit)
-
-Windows desktop app built with Tauri + React for monitoring AI coding assistant quotas via CLIProxyAPI. Track usage across Gemini, Claude, OpenAI Codex, and Antigravity accounts with real-time dashboard, system tray integration, and one-click proxy control - no API keys needed.
-
-### [CPA-XXX Panel](https://github.com/ferretgeek/CPA-X)
-
-A lightweight web admin panel for CLIProxyAPI with health checks, resource monitoring, real-time logs, auto-update, request statistics and pricing display. Supports one-click installation and systemd service.
-
-### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)
-
-A Windows tray application implemented using PowerShell scripts, without relying on any third-party libraries. The main features include: automatic creation of shortcuts, silent running, password management, channel switching (Main / Plus), and automatic downloading and updating.
-
-### [霖君](https://github.com/wangdabaoqq/LinJun)
-
-霖君 is a cross-platform desktop application for managing AI programming assistants, supporting macOS, Windows, and Linux systems. Unified management of Claude Code, Gemini CLI, OpenAI Codex, Qwen Code, and other AI coding tools, with local proxy for multi-account quota tracking and one-click configuration.
-
-### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)
-
-A modern web-based management dashboard for CLIProxyAPI built with Next.js, React, and PostgreSQL. Features real-time log streaming, structured configuration editing, API key management, OAuth provider integration for Claude/Gemini/Codex, usage analytics, container management, and config sync with OpenCode via companion plugin - no manual YAML editing needed.
-
-### [All API Hub](https://github.com/qixing-jk/all-api-hub)
-
-Browser extension for one-stop management of New API-compatible relay site accounts, featuring balance and usage dashboards, auto check-in, one-click key export to common apps, in-page API availability testing, and channel/model sync and redirection. It integrates with CLIProxyAPI through the Management API for one-click provider import and config sync.
-
-### [Shadow AI](https://github.com/HEUDavid/shadow-ai)
-
-Shadow AI is an AI assistant tool designed specifically for restricted environments. It provides a stealthy operation
-mode without windows or traces, and enables cross-device AI Q&A interaction and control via the local area network (
-LAN). Essentially, it is an automated collaboration layer of "screen/audio capture + AI inference + low-friction delivery",
-helping users to immersively use AI assistants across applications on controlled devices or in restricted environments.
-
-### [ProxyPal](https://github.com/buddingnewinsights/proxypal)
-
-Cross-platform desktop app (macOS, Windows, Linux) wrapping CLIProxyAPI with a native GUI. Connects Claude, ChatGPT, Gemini, GitHub Copilot, Qwen, iFlow, and custom OpenAI-compatible endpoints with usage analytics, request monitoring, and auto-configuration for popular coding tools - no API keys needed.
-
-> [!NOTE]
-> If you developed a project based on CLIProxyAPI, please open a PR to add it to this list.
-
-## More choices
-
-Those projects are ports of CLIProxyAPI or inspired by it:
-
-### [9Router](https://github.com/decolua/9router)
-
-A Next.js implementation inspired by CLIProxyAPI, easy to install and use, built from scratch with format translation (OpenAI/Claude/Gemini/Ollama), combo system with auto-fallback, multi-account management with exponential backoff, a Next.js web dashboard, and support for CLI tools (Cursor, Claude Code, Cline, RooCode) - no API keys needed.
-
-### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)
-
-Never stop coding. Smart routing to FREE & low-cost AI models with automatic fallback.
-
-OmniRoute is an AI gateway for multi-provider LLMs: an OpenAI-compatible endpoint with smart routing, load balancing, retries, and fallbacks. Add policies, rate limits, caching, and observability for reliable, cost-aware inference.
-
-> [!NOTE]
-> If you have developed a port of CLIProxyAPI or a project inspired by it, please open a PR to add it to this list.
-
 ## License
 
 This project is licensed under the MIT License - see the [LICENSE](LICENSE) file for details.

README_CN.md (92 changes)
@@ -1,6 +1,6 @@
 # CLIProxyAPI Plus
 
-[English](README.md) | 中文 | [日本語](README_JA.md)
+[English](README.md) | 中文
 
 这是 [CLIProxyAPI](https://github.com/router-for-me/CLIProxyAPI) 的 Plus 版本,在原有基础上增加了第三方供应商的支持。
 
@@ -12,96 +12,6 @@
 
 如果需要提交任何非第三方供应商支持的 Pull Request,请提交到[主线](https://github.com/router-for-me/CLIProxyAPI)版本。
 
-1. Fork 仓库
-2. 创建您的功能分支(`git checkout -b feature/amazing-feature`)
-3. 提交您的更改(`git commit -m 'Add some amazing feature'`)
-4. 推送到分支(`git push origin feature/amazing-feature`)
-5. 打开 Pull Request
-
-## 谁与我们在一起?
-
-这些项目基于 CLIProxyAPI:
-
-### [vibeproxy](https://github.com/automazeio/vibeproxy)
-
-一个原生 macOS 菜单栏应用,让您可以使用 Claude Code & ChatGPT 订阅服务和 AI 编程工具,无需 API 密钥。
-
-### [Subtitle Translator](https://github.com/VjayC/SRT-Subtitle-Translator-Validator)
-
-一款基于浏览器的 SRT 字幕翻译工具,可通过 CLI 代理 API 使用您的 Gemini 订阅。内置自动验证与错误修正功能,无需 API 密钥。
-
-### [CCS (Claude Code Switch)](https://github.com/kaitranntt/ccs)
-
-CLI 封装器,用于通过 CLIProxyAPI OAuth 即时切换多个 Claude 账户和替代模型(Gemini, Codex, Antigravity),无需 API 密钥。
-
-### [Quotio](https://github.com/nguyenphutrong/quotio)
-
-原生 macOS 菜单栏应用,统一管理 Claude、Gemini、OpenAI、Qwen 和 Antigravity 订阅,提供实时配额追踪和智能自动故障转移,支持 Claude Code、OpenCode 和 Droid 等 AI 编程工具,无需 API 密钥。
-
-### [CodMate](https://github.com/loocor/CodMate)
-
-原生 macOS SwiftUI 应用,用于管理 CLI AI 会话(Claude Code、Codex、Gemini CLI),提供统一的提供商管理、Git 审查、项目组织、全局搜索和终端集成。集成 CLIProxyAPI 为 Codex、Claude、Gemini、Antigravity 和 Qwen Code 提供统一的 OAuth 认证,支持内置和第三方提供商通过单一代理端点重路由 - OAuth 提供商无需 API 密钥。
-
-### [ProxyPilot](https://github.com/Finesssee/ProxyPilot)
-
-原生 Windows CLIProxyAPI 分支,集成 TUI、系统托盘及多服务商 OAuth 认证,专为 AI 编程工具打造,无需 API 密钥。
-
-### [Claude Proxy VSCode](https://github.com/uzhao/claude-proxy-vscode)
-
-一款 VSCode 扩展,提供了在 VSCode 中快速切换 Claude Code 模型的功能,内置 CLIProxyAPI 作为其后端,支持后台自动启动和关闭。
-
-### [ZeroLimit](https://github.com/0xtbug/zero-limit)
-
-Windows 桌面应用,基于 Tauri + React 构建,用于通过 CLIProxyAPI 监控 AI 编程助手配额。支持跨 Gemini、Claude、OpenAI Codex 和 Antigravity 账户的使用量追踪,提供实时仪表盘、系统托盘集成和一键代理控制,无需 API 密钥。
-
-### [CPA-XXX Panel](https://github.com/ferretgeek/CPA-X)
-
-面向 CLIProxyAPI 的 Web 管理面板,提供健康检查、资源监控、日志查看、自动更新、请求统计与定价展示,支持一键安装与 systemd 服务。
-
-### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)
-
-Windows 托盘应用,基于 PowerShell 脚本实现,不依赖任何第三方库。主要功能包括:自动创建快捷方式、静默运行、密码管理、通道切换(Main / Plus)以及自动下载与更新。
-
-### [霖君](https://github.com/wangdabaoqq/LinJun)
-
-霖君是一款用于管理AI编程助手的跨平台桌面应用,支持macOS、Windows、Linux系统。统一管理Claude Code、Gemini CLI、OpenAI Codex、Qwen Code等AI编程工具,本地代理实现多账户配额跟踪和一键配置。
-
-### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)
-
-一个面向 CLIProxyAPI 的现代化 Web 管理仪表盘,基于 Next.js、React 和 PostgreSQL 构建。支持实时日志流、结构化配置编辑、API Key 管理、Claude/Gemini/Codex 的 OAuth 提供方集成、使用量分析、容器管理,并可通过配套插件与 OpenCode 同步配置,无需手动编辑 YAML。
-
-### [All API Hub](https://github.com/qixing-jk/all-api-hub)
-
-用于一站式管理 New API 兼容中转站账号的浏览器扩展,提供余额与用量看板、自动签到、密钥一键导出到常用应用、网页内 API 可用性测试,以及渠道与模型同步和重定向。支持通过 CLIProxyAPI Management API 一键导入 Provider 与同步配置。
-
-### [Shadow AI](https://github.com/HEUDavid/shadow-ai)
-
-Shadow AI 是一款专为受限环境设计的 AI 辅助工具。提供无窗口、无痕迹的隐蔽运行方式,并通过局域网实现跨设备的 AI 问答交互与控制。本质上是一个「屏幕/音频采集 + AI 推理 + 低摩擦投送」的自动化协作层,帮助用户在受控设备/受限环境下沉浸式跨应用地使用 AI 助手。
-
-### [ProxyPal](https://github.com/buddingnewinsights/proxypal)
-
-跨平台桌面应用(macOS、Windows、Linux),以原生 GUI 封装 CLIProxyAPI。支持连接 Claude、ChatGPT、Gemini、GitHub Copilot、Qwen、iFlow 及自定义 OpenAI 兼容端点,具备使用分析、请求监控和热门编程工具自动配置功能,无需 API 密钥。
-
-> [!NOTE]
-> 如果你开发了基于 CLIProxyAPI 的项目,请提交一个 PR(拉取请求)将其添加到此列表中。
-
-## 更多选择
-
-以下项目是 CLIProxyAPI 的移植版或受其启发:
-
-### [9Router](https://github.com/decolua/9router)
-
-基于 Next.js 的实现,灵感来自 CLIProxyAPI,易于安装使用;自研格式转换(OpenAI/Claude/Gemini/Ollama)、组合系统与自动回退、多账户管理(指数退避)、Next.js Web 控制台,并支持 Cursor、Claude Code、Cline、RooCode 等 CLI 工具,无需 API 密钥。
-
-### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)
-
-代码不止,创新不停。智能路由至免费及低成本 AI 模型,并支持自动故障转移。
-
-OmniRoute 是一个面向多供应商大语言模型的 AI 网关:它提供兼容 OpenAI 的端点,具备智能路由、负载均衡、重试及回退机制。通过添加策略、速率限制、缓存和可观测性,确保推理过程既可靠又具备成本意识。
-
-> [!NOTE]
-> 如果你开发了 CLIProxyAPI 的移植或衍生项目,请提交 PR 将其添加到此列表中。
-
 ## 许可证
 
 此项目根据 MIT 许可证授权 - 有关详细信息,请参阅 [LICENSE](LICENSE) 文件。

199

README_JA.md

199

README_JA.md

@@ -1,199 +0,0 @@

|

|||||||

# CLI Proxy API

|

|

||||||

|

|

||||||

[English](README.md) | [中文](README_CN.md) | 日本語

|

|

||||||

|

|

||||||

CLI向けのOpenAI/Gemini/Claude/Codex互換APIインターフェースを提供するプロキシサーバーです。

|

|

||||||

|

|

||||||

OAuth経由でOpenAI Codex(GPTモデル)およびClaude Codeもサポートしています。

|

|

||||||

|

|

||||||

ローカルまたはマルチアカウントのCLIアクセスを、OpenAI(Responses含む)/Gemini/Claude互換のクライアントやSDKで利用できます。

|

|

||||||

|

|

||||||

## スポンサー

|

|

||||||

|

|

||||||

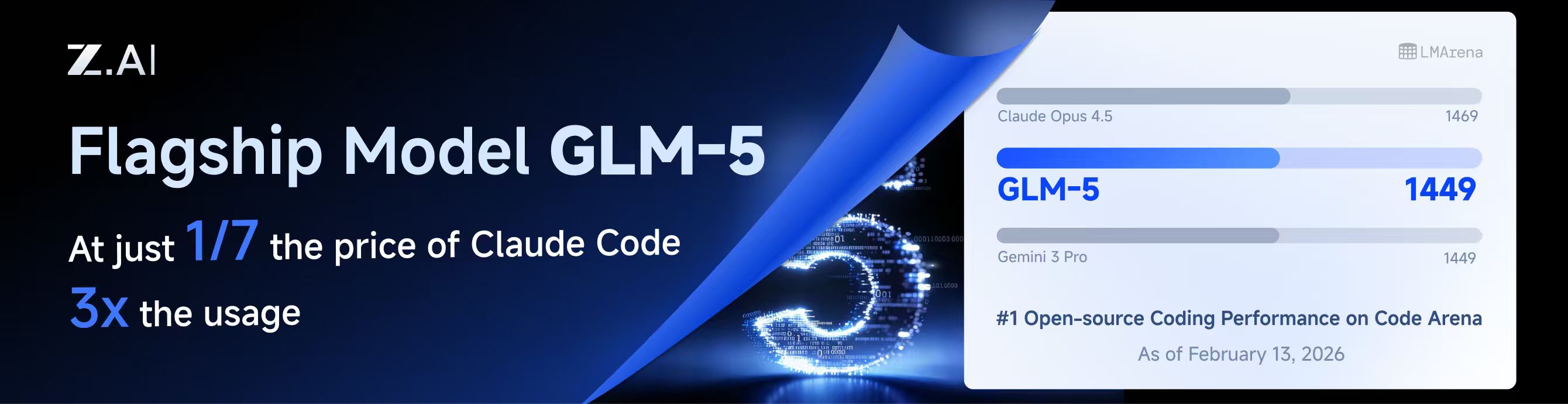

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

|

||||||

|

|

||||||

本プロジェクトはZ.aiにスポンサーされており、GLM CODING PLANの提供を受けています。

|

|

||||||

|

|

||||||

GLM CODING PLANはAIコーディング向けに設計されたサブスクリプションサービスで、月額わずか$10から利用可能です。フラッグシップのGLM-4.7および(GLM-5はProユーザーのみ利用可能)モデルを10以上の人気AIコーディングツール(Claude Code、Cline、Roo Codeなど)で利用でき、開発者にトップクラスの高速かつ安定したコーディング体験を提供します。

|

|

||||||

|

|

||||||

GLM CODING PLANを10%割引で取得:https://z.ai/subscribe?ic=8JVLJQFSKB

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

<table>

|

|

||||||

<tbody>

|

|

||||||

<tr>

|

|

||||||

<td width="180"><a href="https://www.packyapi.com/register?aff=cliproxyapi"><img src="./assets/packycode.png" alt="PackyCode" width="150"></a></td>

|

|

||||||

<td>PackyCodeのスポンサーシップに感謝します!PackyCodeは信頼性が高く効率的なAPIリレーサービスプロバイダーで、Claude Code、Codex、Geminiなどのリレーサービスを提供しています。PackyCodeは当ソフトウェアのユーザーに特別割引を提供しています:<a href="https://www.packyapi.com/register?aff=cliproxyapi">こちらのリンク</a>から登録し、チャージ時にプロモーションコード「cliproxyapi」を入力すると10%割引になります。</td>

|

|

||||||

</tr>

|

|

||||||

<tr>

|

|

||||||

<td width="180"><a href="https://www.aicodemirror.com/register?invitecode=TJNAIF"><img src="./assets/aicodemirror.png" alt="AICodeMirror" width="150"></a></td>

|

|

||||||

<td>AICodeMirrorのスポンサーシップに感謝します!AICodeMirrorはClaude Code / Codex / Gemini CLI向けの公式高安定性リレーサービスを提供しており、エンタープライズグレードの同時接続、迅速な請求書発行、24時間365日の専任技術サポートを備えています。Claude Code / Codex / Geminiの公式チャネルが元の価格の38% / 2% / 9%で利用でき、チャージ時にはさらに割引があります!CLIProxyAPIユーザー向けの特別特典:<a href="https://www.aicodemirror.com/register?invitecode=TJNAIF">こちらのリンク</a>から登録すると、初回チャージが20%割引になり、エンタープライズのお客様は最大25%割引を受けられます!</td>

|

|

||||||

</tr>

|

|

||||||

<tr>

|

|

||||||

<td width="180"><a href="https://shop.bmoplus.com/?utm_source=github"><img src="./assets/bmoplus.png" alt="BmoPlus" width="150"></a></td>

|

|

||||||

<td>本プロジェクトにご支援いただいた BmoPlus に感謝いたします!BmoPlusは、AIサブスクリプションのヘビーユーザー向けに特化した信頼性の高いAIアカウントサービスプロバイダーであり、安定した ChatGPT Plus / ChatGPT Pro (完全保証) / Claude Pro / Super Grok / Gemini Pro の公式代行チャージおよび即納アカウントを提供しています。こちらの<a href="https://shop.bmoplus.com/?utm_source=github">BmoPlus AIアカウント専門店/代行チャージ</a>経由でご登録・ご注文いただいたユーザー様は、GPTを <b>公式サイト価格の約1割(90% OFF)</b> という驚異的な価格でご利用いただけます!</td>

|

|

||||||

</tr>

|

|

||||||

<tr>

|

|

||||||

<td width="180"><a href="https://www.lingtrue.com/register"><img src="./assets/lingtrue.png" alt="LingtrueAPI" width="150"></a></td>

|

|

||||||

<td>LingtrueAPIのスポンサーシップに感謝します!LingtrueAPIはグローバルな大規模モデルAPIリレーサービスプラットフォームで、Claude Code、Codex、GeminiなどのトップモデルAPI呼び出しサービスを提供し、ユーザーが低コストかつ高い安定性で世界中のAI能力に接続できるよう支援しています。LingtrueAPIは本ソフトウェアのユーザーに特別割引を提供しています:<a href="https://www.lingtrue.com/register">こちらのリンク</a>から登録し、初回チャージ時にプロモーションコード「LingtrueAPI」を入力すると10%割引になります。</td>

|

|

||||||

</tr>

|

|

||||||

</tbody>

|

|

||||||

</table>

|

|

||||||

|

|

||||||

## 概要

|

|

||||||

|

|

||||||

- CLIモデル向けのOpenAI/Gemini/Claude互換APIエンドポイント

|

|

||||||

- OAuthログインによるOpenAI Codexサポート(GPTモデル)

|

|

||||||

- OAuthログインによるClaude Codeサポート

|

|

||||||

- OAuthログインによるQwen Codeサポート

|

|

||||||

- OAuthログインによるiFlowサポート

|

|

||||||

- プロバイダールーティングによるAmp CLIおよびIDE拡張機能のサポート

|

|

||||||

- ストリーミングおよび非ストリーミングレスポンス

|

|

||||||

- 関数呼び出し/ツールのサポート

|

|

||||||

- マルチモーダル入力サポート(テキストと画像)

|

|

||||||

- ラウンドロビン負荷分散による複数アカウント対応(Gemini、OpenAI、Claude、QwenおよびiFlow)

|

|

||||||

- シンプルなCLI認証フロー(Gemini、OpenAI、Claude、QwenおよびiFlow)

|

|

||||||

- Generative Language APIキーのサポート

|

|

||||||

- AI Studioビルドのマルチアカウント負荷分散

|

|

||||||

- Gemini CLIのマルチアカウント負荷分散

|

|

||||||

- Claude Codeのマルチアカウント負荷分散

|

|

||||||

- Qwen Codeのマルチアカウント負荷分散

|

|

||||||

- iFlowのマルチアカウント負荷分散

|

|

||||||

- OpenAI Codexのマルチアカウント負荷分散

|

|

||||||

- 設定によるOpenAI互換アップストリームプロバイダー(例:OpenRouter)

|

|

||||||

- プロキシ埋め込み用の再利用可能なGo SDK(`docs/sdk-usage.md`を参照)

|

|

||||||

|

|

||||||

## はじめに

|

|

||||||

|

|

||||||

CLIProxyAPIガイド:[https://help.router-for.me/](https://help.router-for.me/)

|

|

||||||

|

|

||||||

## 管理API

|

|

||||||

|

|

||||||

[MANAGEMENT_API.md](https://help.router-for.me/management/api)を参照

|

|

||||||

|

|

||||||

## Amp CLIサポート

|

|

||||||

|

|

||||||

CLIProxyAPIは[Amp CLI](https://ampcode.com)およびAmp IDE拡張機能の統合サポートを含んでおり、Google/ChatGPT/ClaudeのOAuthサブスクリプションをAmpのコーディングツールで使用できます:

|

|

||||||

|

|

||||||

- Ampの APIパターン用のプロバイダールートエイリアス(`/api/provider/{provider}/v1...`)

|

|

||||||

- OAuth認証およびアカウント機能用の管理プロキシ

|

|

||||||

- 自動ルーティングによるスマートモデルフォールバック

|

|

||||||

- 利用できないモデルを代替モデルにルーティングする**モデルマッピング**(例:`claude-opus-4.5` → `claude-sonnet-4`)

|

|

||||||

- localhostのみの管理エンドポイントによるセキュリティファーストの設計

|

|

||||||

|

|

||||||

特定のバックエンド系統のリクエスト/レスポンス形状が必要な場合は、統合された `/v1/...` エンドポイントよりも provider-specific のパスを優先してください。

|

|

||||||

|

|

||||||

- messages 系のバックエンドには `/api/provider/{provider}/v1/messages`

|

|

||||||

- モデル単位の generate 系エンドポイントには `/api/provider/{provider}/v1beta/models/...`

|

|

||||||

- chat-completions 系のバックエンドには `/api/provider/{provider}/v1/chat/completions`

|

|

||||||

|

|

||||||

これらのパスはプロトコル面の選択には役立ちますが、同じクライアント向けモデル名が複数バックエンドで再利用されている場合、それだけで推論実行系が一意に固定されるわけではありません。実際の推論ルーティングは、引き続きリクエスト内の model/alias 解決に従います。厳密にバックエンドを固定したい場合は、一意な alias や prefix を使うか、クライアント向けモデル名の重複自体を避けてください。

|

|

||||||

|

|

||||||

**→ [Amp CLI統合ガイドの完全版](https://help.router-for.me/agent-client/amp-cli.html)**

|

|

||||||

|

|

||||||

## SDKドキュメント

|

|

||||||

|

|

||||||

- 使い方:[docs/sdk-usage.md](docs/sdk-usage.md)

|

|

||||||

- 上級(エグゼキューターとトランスレーター):[docs/sdk-advanced.md](docs/sdk-advanced.md)

|

|

||||||

- アクセス:[docs/sdk-access.md](docs/sdk-access.md)

|

|

||||||

- ウォッチャー:[docs/sdk-watcher.md](docs/sdk-watcher.md)

|

|

||||||

- カスタムプロバイダーの例:`examples/custom-provider`

|

|

||||||

|

|

||||||

## コントリビューション

|

|

||||||

|

|

||||||

コントリビューションを歓迎します!お気軽にPull Requestを送ってください。

|

|

||||||

|

|

||||||

1. リポジトリをフォーク

|

|

||||||

2. フィーチャーブランチを作成(`git checkout -b feature/amazing-feature`)

|

|

||||||

3. 変更をコミット(`git commit -m 'Add some amazing feature'`)

|

|

||||||

4. ブランチにプッシュ(`git push origin feature/amazing-feature`)

|

|

||||||

5. Pull Requestを作成

|

|

||||||

|

|

||||||

## 関連プロジェクト

|

|

||||||

|

|

||||||

CLIProxyAPIをベースにした以下のプロジェクトがあります:

|

|

||||||

|

|

||||||

### [vibeproxy](https://github.com/automazeio/vibeproxy)

|

|

||||||

|

|

||||||

macOSネイティブのメニューバーアプリで、Claude CodeとChatGPTのサブスクリプションをAIコーディングツールで使用可能 - APIキー不要

|

|

||||||

|

|

||||||

### [Subtitle Translator](https://github.com/VjayC/SRT-Subtitle-Translator-Validator)

|

|

||||||

|

|

||||||

CLIProxyAPI経由でGeminiサブスクリプションを使用してSRT字幕を翻訳するブラウザベースのツール。自動検証/エラー修正機能付き - APIキー不要

|

|

||||||

|

|

||||||

### [CCS (Claude Code Switch)](https://github.com/kaitranntt/ccs)

|

|

||||||

|

|

||||||

CLIProxyAPI OAuthを使用して複数のClaudeアカウントや代替モデル(Gemini、Codex、Antigravity)を即座に切り替えるCLIラッパー - APIキー不要

|

|

||||||

|

|

||||||

### [Quotio](https://github.com/nguyenphutrong/quotio)

|

|

||||||

|

|

||||||

Claude、Gemini、OpenAI、Qwen、Antigravityのサブスクリプションを統合し、リアルタイムのクォータ追跡とスマート自動フェイルオーバーを備えたmacOSネイティブのメニューバーアプリ。Claude Code、OpenCode、Droidなどのコーディングツール向け - APIキー不要

|

|

||||||

|

|

||||||

### [CodMate](https://github.com/loocor/CodMate)

|

|

||||||

|

|

||||||

CLI AIセッション(Codex、Claude Code、Gemini CLI)を管理するmacOS SwiftUIネイティブアプリ。統合プロバイダー管理、Gitレビュー、プロジェクト整理、グローバル検索、ターミナル統合機能を搭載。CLIProxyAPIと統合し、Codex、Claude、Gemini、Antigravity、Qwen CodeのOAuth認証を提供。単一のプロキシエンドポイントを通じた組み込みおよびサードパーティプロバイダーの再ルーティングに対応 - OAuthプロバイダーではAPIキー不要

|

|

||||||

|

|

||||||

### [ProxyPilot](https://github.com/Finesssee/ProxyPilot)

|

|

||||||

|

|

||||||

TUI、システムトレイ、マルチプロバイダーOAuthを備えたWindows向けCLIProxyAPIフォーク - AIコーディングツール用、APIキー不要

|

|

||||||

|

|

||||||

### [Claude Proxy VSCode](https://github.com/uzhao/claude-proxy-vscode)

|

|

||||||

|

|

||||||

Claude Codeモデルを素早く切り替えるVSCode拡張機能。バックエンドとしてCLIProxyAPIを統合し、バックグラウンドでの自動ライフサイクル管理を搭載

|

|

||||||

|

|

||||||

### [ZeroLimit](https://github.com/0xtbug/zero-limit)

|

|

||||||

|

|

||||||

CLIProxyAPIを使用してAIコーディングアシスタントのクォータを監視するTauri + React製のWindowsデスクトップアプリ。Gemini、Claude、OpenAI Codex、Antigravityアカウントの使用量をリアルタイムダッシュボード、システムトレイ統合、ワンクリックプロキシコントロールで追跡 - APIキー不要

|

|

||||||

|

|

||||||

### [CPA-XXX Panel](https://github.com/ferretgeek/CPA-X)

|

|

||||||

|

|

||||||

CLIProxyAPI向けの軽量Web管理パネル。ヘルスチェック、リソース監視、リアルタイムログ、自動更新、リクエスト統計、料金表示機能を搭載。ワンクリックインストールとsystemdサービスに対応

|

|

||||||

|

|

||||||

### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)

|

|

||||||

|

|

||||||

PowerShellスクリプトで実装されたWindowsトレイアプリケーション。サードパーティライブラリに依存せず、ショートカットの自動作成、サイレント実行、パスワード管理、チャネル切り替え(Main / Plus)、自動ダウンロードおよび自動更新に対応

|

|

||||||

|

|

||||||

### [霖君](https://github.com/wangdabaoqq/LinJun)

|

|

||||||

|

|

||||||

霖君はAIプログラミングアシスタントを管理するクロスプラットフォームデスクトップアプリケーションで、macOS、Windows、Linuxシステムに対応。Claude Code、Gemini CLI、OpenAI Codex、Qwen Codeなどのコーディングツールを統合管理し、ローカルプロキシによるマルチアカウントクォータ追跡とワンクリック設定が可能

|

|

||||||

|

|

||||||

### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)

|

|

||||||

|

|

||||||

Next.js、React、PostgreSQLで構築されたCLIProxyAPI用のモダンなWebベース管理ダッシュボード。リアルタイムログストリーミング、構造化された設定編集、APIキー管理、Claude/Gemini/Codex向けOAuthプロバイダー統合、使用量分析、コンテナ管理、コンパニオンプラグインによるOpenCodeとの設定同期機能を搭載 - 手動でのYAML編集は不要

|

|

||||||

|

|

||||||

### [All API Hub](https://github.com/qixing-jk/all-api-hub)

|

|

||||||

|

|

||||||

New API互換リレーサイトアカウントをワンストップで管理するブラウザ拡張機能。残高と使用量のダッシュボード、自動チェックイン、一般的なアプリへのワンクリックキーエクスポート、ページ内API可用性テスト、チャネル/モデルの同期とリダイレクト機能を搭載。Management APIを通じてCLIProxyAPIと統合し、ワンクリックでプロバイダーのインポートと設定同期が可能

|

|

||||||

|

|

||||||

### [Shadow AI](https://github.com/HEUDavid/shadow-ai)

|

|

||||||

|

|

||||||

Shadow AIは制限された環境向けに特別に設計されたAIアシスタントツールです。ウィンドウや痕跡のないステルス動作モードを提供し、LAN(ローカルエリアネットワーク)を介したクロスデバイスAI質疑応答のインタラクションと制御を可能にします。本質的には「画面/音声キャプチャ + AI推論 + 低摩擦デリバリー」の自動化コラボレーションレイヤーであり、制御されたデバイスや制限された環境でアプリケーション横断的にAIアシスタントを没入的に使用できるようユーザーを支援します。

|

|

||||||

|

|

||||||

### [ProxyPal](https://github.com/buddingnewinsights/proxypal)

|

|

||||||

|

|

||||||

CLIProxyAPIをネイティブGUIでラップしたクロスプラットフォームデスクトップアプリ(macOS、Windows、Linux)。Claude、ChatGPT、Gemini、GitHub Copilot、Qwen、iFlow、カスタムOpenAI互換エンドポイントに対応し、使用状況分析、リクエスト監視、人気コーディングツールの自動設定機能を搭載 - APIキー不要

|

|

||||||

|

|

||||||

> [!NOTE]

|

|

||||||

> CLIProxyAPIをベースにプロジェクトを開発した場合は、PRを送ってこのリストに追加してください。

|

|

||||||

|

|

||||||

## その他の選択肢

|

|

||||||

|

|

||||||

以下のプロジェクトはCLIProxyAPIの移植版またはそれに触発されたものです:

|

|

||||||

|

|

||||||

### [9Router](https://github.com/decolua/9router)

|

|

||||||

|

|

||||||

CLIProxyAPIに触発されたNext.js実装。インストールと使用が簡単で、フォーマット変換(OpenAI/Claude/Gemini/Ollama)、自動フォールバック付きコンボシステム、指数バックオフ付きマルチアカウント管理、Next.js Webダッシュボード、CLIツール(Cursor、Claude Code、Cline、RooCode)のサポートをゼロから構築 - APIキー不要

|

|

||||||

|

|

||||||

### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)

|

|

||||||

|

|

||||||

コーディングを止めない。無料および低コストのAIモデルへのスマートルーティングと自動フォールバック。

|

|

||||||

|

|

||||||

OmniRouteはマルチプロバイダーLLM向けのAIゲートウェイです:スマートルーティング、負荷分散、リトライ、フォールバックを備えたOpenAI互換エンドポイント。ポリシー、レート制限、キャッシュ、可観測性を追加して、信頼性が高くコストを意識した推論を実現します。

|

|

||||||

|

|

||||||

> [!NOTE]

|

|

||||||

> CLIProxyAPIの移植版またはそれに触発されたプロジェクトを開発した場合は、PRを送ってこのリストに追加してください。

|

|

||||||

|

|

||||||

## ライセンス

|

|

||||||

|

|

||||||

本プロジェクトはMITライセンスの下でライセンスされています - 詳細は[LICENSE](LICENSE)ファイルを参照してください。

|

|

||||||

@@ -26,6 +26,7 @@ import (
 "time"
 
 "github.com/router-for-me/CLIProxyAPI/v6/internal/logging"
+"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"
 sdkauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"
 coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
 "github.com/router-for-me/CLIProxyAPI/v6/sdk/proxyutil"
@@ -188,7 +189,7 @@ func fetchModels(ctx context.Context, auth *coreauth.Auth) []modelEntry {
 httpReq.Close = true
 httpReq.Header.Set("Content-Type", "application/json")
 httpReq.Header.Set("Authorization", "Bearer "+accessToken)
-httpReq.Header.Set("User-Agent", "antigravity/1.19.6 darwin/arm64")
+httpReq.Header.Set("User-Agent", misc.AntigravityUserAgent())
 
 httpClient := &http.Client{Timeout: 30 * time.Second}
 if transport, _, errProxy := proxyutil.BuildHTTPTransport(auth.ProxyURL); errProxy == nil && transport != nil {

@@ -99,6 +99,7 @@ func main() {

|

|||||||

var codeBuddyLogin bool

|

var codeBuddyLogin bool

|

||||||

var projectID string

|

var projectID string

|

||||||

var vertexImport string

|

var vertexImport string

|

||||||

|

var vertexImportPrefix string

|

||||||

var configPath string

|

var configPath string

|

||||||

var password string

|

var password string

|

||||||

var tuiMode bool

|

var tuiMode bool

|

||||||

@@ -139,6 +140,7 @@ func main() {

|

|||||||

flag.StringVar(&projectID, "project_id", "", "Project ID (Gemini only, not required)")

|

flag.StringVar(&projectID, "project_id", "", "Project ID (Gemini only, not required)")

|

||||||

flag.StringVar(&configPath, "config", DefaultConfigPath, "Configure File Path")

|

flag.StringVar(&configPath, "config", DefaultConfigPath, "Configure File Path")

|

||||||

flag.StringVar(&vertexImport, "vertex-import", "", "Import Vertex service account key JSON file")

|

flag.StringVar(&vertexImport, "vertex-import", "", "Import Vertex service account key JSON file")

|

||||||

|

flag.StringVar(&vertexImportPrefix, "vertex-import-prefix", "", "Prefix for Vertex model namespacing (use with -vertex-import)")

|

||||||

flag.StringVar(&password, "password", "", "")

|

flag.StringVar(&password, "password", "", "")

|

||||||

flag.BoolVar(&tuiMode, "tui", false, "Start with terminal management UI")

|

flag.BoolVar(&tuiMode, "tui", false, "Start with terminal management UI")

|

||||||

flag.BoolVar(&standalone, "standalone", false, "In TUI mode, start an embedded local server")

|

flag.BoolVar(&standalone, "standalone", false, "In TUI mode, start an embedded local server")

|

||||||

@@ -510,7 +512,7 @@ func main() {

|

|||||||

|

|

||||||

if vertexImport != "" {

|

if vertexImport != "" {

|

||||||

// Handle Vertex service account import

|

// Handle Vertex service account import

|

||||||

cmd.DoVertexImport(cfg, vertexImport)

|

cmd.DoVertexImport(cfg, vertexImport, vertexImportPrefix)

|

||||||

} else if login {

|

} else if login {

|

||||||

// Handle Google/Gemini login

|

// Handle Google/Gemini login

|

||||||

cmd.DoLogin(cfg, projectID, options)

|

cmd.DoLogin(cfg, projectID, options)

|

||||||

    @@ -596,6 +598,7 @@ func main() {
     	if standalone {
     		// Standalone mode: start an embedded local server and connect TUI client to it.
     		managementasset.StartAutoUpdater(context.Background(), configFilePath)
    +		misc.StartAntigravityVersionUpdater(context.Background())
     		if !localModel {
     			registry.StartModelsUpdater(context.Background())
     		}

    @@ -671,6 +674,7 @@ func main() {
     	} else {
     		// Start the main proxy service
     		managementasset.StartAutoUpdater(context.Background(), configFilePath)
    +		misc.StartAntigravityVersionUpdater(context.Background())
     		if !localModel {
     			registry.StartModelsUpdater(context.Background())
     		}

    @@ -105,6 +105,10 @@ routing:
     # When true, enable authentication for the WebSocket API (/v1/ws).
     ws-auth: false
     
    +# When true, enable Gemini CLI internal endpoints (/v1internal:*).
    +# Default is false for safety.
    +enable-gemini-cli-endpoint: false
    +
     # When > 0, emit blank lines every N seconds for non-streaming responses to prevent idle timeouts.
     nonstream-keepalive-interval: 0
     

    @@ -13,6 +13,7 @@ import (
     
     	"github.com/fxamacker/cbor/v2"
     	"github.com/gin-gonic/gin"
    +	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"
     	"github.com/router-for-me/CLIProxyAPI/v6/internal/runtime/geminicli"
     	coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
     	"github.com/router-for-me/CLIProxyAPI/v6/sdk/proxyutil"

    @@ -700,6 +701,11 @@ func (h *Handler) apiCallTransport(auth *coreauth.Auth) http.RoundTripper {
     		if proxyStr := strings.TrimSpace(auth.ProxyURL); proxyStr != "" {
     			proxyCandidates = append(proxyCandidates, proxyStr)
     		}
    +		if h != nil && h.cfg != nil {
    +			if proxyStr := strings.TrimSpace(proxyURLFromAPIKeyConfig(h.cfg, auth)); proxyStr != "" {
    +				proxyCandidates = append(proxyCandidates, proxyStr)
    +			}
    +		}
     	}
     	if h != nil && h.cfg != nil {
     		if proxyStr := strings.TrimSpace(h.cfg.ProxyURL); proxyStr != "" {
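This hunk adds the per-API-key proxy from `proxyURLFromAPIKeyConfig` as a second candidate. It sits after the auth's own `ProxyURL` and before the global `h.cfg.ProxyURL`.

The code that consumes `proxyCandidates` is outside this hunk. The sketch below therefore assumes only that the first non-empty candidate that parses as an absolute URL wins. The existing test name `TestAPICallTransportInvalidAuthFallsBackToGlobalProxy` hints that invalid entries are skipped. `firstProxy` is a hypothetical helper, not part of the codebase.

```go
package main

import (
	"net/url"
	"strings"
)

// firstProxy returns the first candidate that is non-empty and parses as an
// absolute URL with both a scheme and a host. Candidates are assumed to be in
// priority order: auth.ProxyURL, then the per-API-key proxy-url, then the
// global proxy-url. It returns "" when no candidate qualifies.
func firstProxy(candidates ...string) string {
	for _, c := range candidates {
		c = strings.TrimSpace(c)
		if c == "" {
			continue
		}
		u, err := url.Parse(c)
		if err != nil || u.Scheme == "" || u.Host == "" {
			continue // skip unparseable or relative values
		}
		return c
	}
	return ""
}
```

With an empty `auth.ProxyURL`, `firstProxy("", keyProxy, globalProxy)` yields `keyProxy`. That is the behavior the new `TestAPICallTransportAPIKeyAuthFallsBackToConfigProxyURL` checks for each provider.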

    @@ -722,6 +728,123 @@ func (h *Handler) apiCallTransport(auth *coreauth.Auth) http.RoundTripper {
     	return clone
     }
     
    +type apiKeyConfigEntry interface {
    +	GetAPIKey() string
    +	GetBaseURL() string
    +}
    +
    +func resolveAPIKeyConfig[T apiKeyConfigEntry](entries []T, auth *coreauth.Auth) *T {
    +	if auth == nil || len(entries) == 0 {
    +		return nil
    +	}
    +	attrKey, attrBase := "", ""
    +	if auth.Attributes != nil {
    +		attrKey = strings.TrimSpace(auth.Attributes["api_key"])
    +		attrBase = strings.TrimSpace(auth.Attributes["base_url"])
    +	}
    +	for i := range entries {
    +		entry := &entries[i]
    +		cfgKey := strings.TrimSpace((*entry).GetAPIKey())
    +		cfgBase := strings.TrimSpace((*entry).GetBaseURL())
    +		if attrKey != "" && attrBase != "" {
    +			if strings.EqualFold(cfgKey, attrKey) && strings.EqualFold(cfgBase, attrBase) {
    +				return entry
    +			}
    +			continue
    +		}
    +		if attrKey != "" && strings.EqualFold(cfgKey, attrKey) {
    +			if cfgBase == "" || strings.EqualFold(cfgBase, attrBase) {
    +				return entry
    +			}
    +		}
    +		if attrKey == "" && attrBase != "" && strings.EqualFold(cfgBase, attrBase) {
    +			return entry
    +		}
    +	}
    +	if attrKey != "" {
    +		for i := range entries {
    +			entry := &entries[i]
    +			if strings.EqualFold(strings.TrimSpace((*entry).GetAPIKey()), attrKey) {
    +				return entry
    +			}
    +		}
    +	}
    +	return nil
    +}
    +
    +func proxyURLFromAPIKeyConfig(cfg *config.Config, auth *coreauth.Auth) string {
    +	if cfg == nil || auth == nil {
    +		return ""
    +	}
    +	authKind, authAccount := auth.AccountInfo()
    +	if !strings.EqualFold(strings.TrimSpace(authKind), "api_key") {
    +		return ""
    +	}
    +
    +	attrs := auth.Attributes
    +	compatName := ""
    +	providerKey := ""
    +	if len(attrs) > 0 {
    +		compatName = strings.TrimSpace(attrs["compat_name"])
    +		providerKey = strings.TrimSpace(attrs["provider_key"])
    +	}
    +	if compatName != "" || strings.EqualFold(strings.TrimSpace(auth.Provider), "openai-compatibility") {
    +		return resolveOpenAICompatAPIKeyProxyURL(cfg, auth, strings.TrimSpace(authAccount), providerKey, compatName)
    +	}
    +
    +	switch strings.ToLower(strings.TrimSpace(auth.Provider)) {
    +	case "gemini":
    +		if entry := resolveAPIKeyConfig(cfg.GeminiKey, auth); entry != nil {
    +			return strings.TrimSpace(entry.ProxyURL)
    +		}
    +	case "claude":
    +		if entry := resolveAPIKeyConfig(cfg.ClaudeKey, auth); entry != nil {
    +			return strings.TrimSpace(entry.ProxyURL)
    +		}
    +	case "codex":
    +		if entry := resolveAPIKeyConfig(cfg.CodexKey, auth); entry != nil {
    +			return strings.TrimSpace(entry.ProxyURL)
    +		}
    +	}
    +	return ""
    +}
    +
    +func resolveOpenAICompatAPIKeyProxyURL(cfg *config.Config, auth *coreauth.Auth, apiKey, providerKey, compatName string) string {
    +	if cfg == nil || auth == nil {
    +		return ""
    +	}
    +	apiKey = strings.TrimSpace(apiKey)
    +	if apiKey == "" {
    +		return ""
    +	}
    +	candidates := make([]string, 0, 3)
    +	if v := strings.TrimSpace(compatName); v != "" {
    +		candidates = append(candidates, v)
    +	}
    +	if v := strings.TrimSpace(providerKey); v != "" {
    +		candidates = append(candidates, v)
    +	}
    +	if v := strings.TrimSpace(auth.Provider); v != "" {
    +		candidates = append(candidates, v)
    +	}
    +
    +	for i := range cfg.OpenAICompatibility {
    +		compat := &cfg.OpenAICompatibility[i]
    +		for _, candidate := range candidates {
    +			if candidate != "" && strings.EqualFold(strings.TrimSpace(candidate), compat.Name) {
    +				for j := range compat.APIKeyEntries {
    +					entry := &compat.APIKeyEntries[j]
    +					if strings.EqualFold(strings.TrimSpace(entry.APIKey), apiKey) {
    +						return strings.TrimSpace(entry.ProxyURL)
    +					}
    +				}
    +				return ""
    +			}
    +		}
    +	}
    +	return ""
    +}
    +
     func buildProxyTransport(proxyStr string) *http.Transport {
     	transport, _, errBuild := proxyutil.BuildHTTPTransport(proxyStr)
     	if errBuild != nil {
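`resolveAPIKeyConfig` matches an auth's `api_key` and `base_url` attributes against the configured entries in a fixed order. The sketch below reproduces that order on a hypothetical stand-alone `keyEntry` type so it runs without the project's `config` and `coreauth` packages. It drops the generic type parameter and assumes the two attributes have already been read into plain strings. `resolveEntry` is an illustrative name, not part of the codebase.

```go
package main

import "strings"

// keyEntry is a hypothetical stand-in for config entries such as GeminiKey.
type keyEntry struct {
	APIKey   string
	BaseURL  string
	ProxyURL string
}

// resolveEntry mirrors the matching order of resolveAPIKeyConfig:
//  1. key and base URL both known: only a case-insensitive exact pair matches;
//  2. only the key known: an entry with that key and no base URL matches;
//  3. only the base URL known: an entry with that base URL matches;
//  4. fallback: if a key is known, the first entry with that key wins,
//     regardless of its base URL.
func resolveEntry(entries []keyEntry, attrKey, attrBase string) *keyEntry {
	attrKey, attrBase = strings.TrimSpace(attrKey), strings.TrimSpace(attrBase)
	for i := range entries {
		e := &entries[i]
		cfgKey := strings.TrimSpace(e.APIKey)
		cfgBase := strings.TrimSpace(e.BaseURL)
		if attrKey != "" && attrBase != "" {
			if strings.EqualFold(cfgKey, attrKey) && strings.EqualFold(cfgBase, attrBase) {
				return e
			}
			continue
		}
		if attrKey != "" && strings.EqualFold(cfgKey, attrKey) {
			if cfgBase == "" || strings.EqualFold(cfgBase, attrBase) {
				return e
			}
		}
		if attrKey == "" && attrBase != "" && strings.EqualFold(cfgBase, attrBase) {
			return e
		}
	}
	if attrKey != "" {
		for i := range entries {
			if strings.EqualFold(strings.TrimSpace(entries[i].APIKey), attrKey) {
				return &entries[i]
			}
		}
	}
	return nil
}
```

Step 4 means a key match is still accepted when `base_url` disagrees with every entry. A per-key `proxy-url` can therefore apply even when the base URLs do not line up.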

    @@ -58,6 +58,105 @@ func TestAPICallTransportInvalidAuthFallsBackToGlobalProxy(t *testing.T) {
     	}
     }
     
    +func TestAPICallTransportAPIKeyAuthFallsBackToConfigProxyURL(t *testing.T) {
    +	t.Parallel()
    +
    +	h := &Handler{
    +		cfg: &config.Config{
    +			SDKConfig: sdkconfig.SDKConfig{ProxyURL: "http://global-proxy.example.com:8080"},
    +			GeminiKey: []config.GeminiKey{{
    +				APIKey:   "gemini-key",
    +				ProxyURL: "http://gemini-proxy.example.com:8080",
    +			}},
    +			ClaudeKey: []config.ClaudeKey{{
    +				APIKey:   "claude-key",
    +				ProxyURL: "http://claude-proxy.example.com:8080",
    +			}},
    +			CodexKey: []config.CodexKey{{
    +				APIKey:   "codex-key",
    +				ProxyURL: "http://codex-proxy.example.com:8080",
    +			}},
    +			OpenAICompatibility: []config.OpenAICompatibility{{
    +				Name:    "bohe",
    +				BaseURL: "https://bohe.example.com",
    +				APIKeyEntries: []config.OpenAICompatibilityAPIKey{{
    +					APIKey:   "compat-key",
    +					ProxyURL: "http://compat-proxy.example.com:8080",
    +				}},
    +			}},
    +		},
    +	}
    +
    +	cases := []struct {
    +		name      string
    +		auth      *coreauth.Auth
    +		wantProxy string
    +	}{
    +		{
    +			name: "gemini",
    +			auth: &coreauth.Auth{
    +				Provider:   "gemini",
    +				Attributes: map[string]string{"api_key": "gemini-key"},
    +			},
    +			wantProxy: "http://gemini-proxy.example.com:8080",
    +		},
    +		{
    +			name: "claude",
    +			auth: &coreauth.Auth{
    +				Provider:   "claude",
    +				Attributes: map[string]string{"api_key": "claude-key"},
    +			},
    +			wantProxy: "http://claude-proxy.example.com:8080",
    +		},
    +		{
    +			name: "codex",
    +			auth: &coreauth.Auth{
    +				Provider:   "codex",
    +				Attributes: map[string]string{"api_key": "codex-key"},
    +			},
    +			wantProxy: "http://codex-proxy.example.com:8080",
    +		},
    +		{
    +			name: "openai-compatibility",
    +			auth: &coreauth.Auth{
    +				Provider: "bohe",
    +				Attributes: map[string]string{
    +					"api_key":      "compat-key",
    +					"compat_name":  "bohe",
    +					"provider_key": "bohe",
    +				},
    +			},
    +			wantProxy: "http://compat-proxy.example.com:8080",
    +		},
    +	}
    +
    +	for _, tc := range cases {
    +		tc := tc
    +		t.Run(tc.name, func(t *testing.T) {
    +			t.Parallel()
    +
    +			transport := h.apiCallTransport(tc.auth)
    +			httpTransport, ok := transport.(*http.Transport)
    +			if !ok {
    +				t.Fatalf("transport type = %T, want *http.Transport", transport)
    +			}
    +
    +			req, errRequest := http.NewRequest(http.MethodGet, "https://example.com", nil)
    +			if errRequest != nil {
    +				t.Fatalf("http.NewRequest returned error: %v", errRequest)
    +			}
    +
    +			proxyURL, errProxy := httpTransport.Proxy(req)
    +			if errProxy != nil {
    +				t.Fatalf("httpTransport.Proxy returned error: %v", errProxy)
    +			}
    +			if proxyURL == nil || proxyURL.String() != tc.wantProxy {
    +				t.Fatalf("proxy URL = %v, want %s", proxyURL, tc.wantProxy)
    +			}
    +		})
    +	}
    +}
    +
     func TestAuthByIndexDistinguishesSharedAPIKeysAcrossProviders(t *testing.T) {
     	t.Parallel()
     

    @@ -253,6 +253,7 @@ func (fh *FallbackHandler) WrapHandler(handler gin.HandlerFunc) gin.HandlerFunc
     	log.Debugf("amp model mapping: request %s -> %s", normalizedModel, resolvedModel)
     	logAmpRouting(RouteTypeModelMapping, modelName, resolvedModel, providerName, requestPath)
     	rewriter := NewResponseRewriter(c.Writer, modelName)
    +	rewriter.suppressThinking = true
     	c.Writer = rewriter
     	// Filter Anthropic-Beta header only for local handling paths
     	filterAntropicBetaHeader(c)

    @@ -267,6 +268,7 @@ func (fh *FallbackHandler) WrapHandler(handler gin.HandlerFunc) gin.HandlerFunc
     	// proxies (e.g. NewAPI) may return a different model name and lack
     	// Amp-required fields like thinking.signature.
     	rewriter := NewResponseRewriter(c.Writer, modelName)
    +	rewriter.suppressThinking = providerName != "claude"
     	c.Writer = rewriter
     	// Filter Anthropic-Beta header only for local handling paths
     	filterAntropicBetaHeader(c)

    @@ -17,19 +17,18 @@ import (
     // and to keep Amp-compatible response shapes.
     type ResponseRewriter struct {
     	gin.ResponseWriter
     	body *bytes.Buffer
     	originalModel string
     	isStreaming bool
    -	suppressedContentBlock map[int]struct{}
    +	suppressThinking bool
     }
     
     // NewResponseRewriter creates a new response rewriter for model name substitution.
     func NewResponseRewriter(w gin.ResponseWriter, originalModel string) *ResponseRewriter {
     	return &ResponseRewriter{
     		ResponseWriter: w,
     		body: &bytes.Buffer{},
     		originalModel: originalModel,
    -		suppressedContentBlock: make(map[int]struct{}),
     	}
     }
     

    @@ -99,7 +98,8 @@ func (rw *ResponseRewriter) Write(data []byte) (int, error) {
     	}
     
     	if rw.isStreaming {
    -		n, err := rw.ResponseWriter.Write(rw.rewriteStreamChunk(data))
    +		rewritten := rw.rewriteStreamChunk(data)
    +		n, err := rw.ResponseWriter.Write(rewritten)
     		if err == nil {
     			if flusher, ok := rw.ResponseWriter.(http.Flusher); ok {
     				flusher.Flush()

    @@ -162,19 +162,10 @@ func ensureAmpSignature(data []byte) []byte {
     	return data
     }
     
    -func (rw *ResponseRewriter) markSuppressedContentBlock(index int) {
    -	if rw.suppressedContentBlock == nil {
    -		rw.suppressedContentBlock = make(map[int]struct{})
    -	}
    -	rw.suppressedContentBlock[index] = struct{}{}
    -}
    -
    -func (rw *ResponseRewriter) isSuppressedContentBlock(index int) bool {
    -	_, ok := rw.suppressedContentBlock[index]
    -	return ok
    -}
    -
     func (rw *ResponseRewriter) suppressAmpThinking(data []byte) []byte {
    +	if !rw.suppressThinking {
    +		return data
    +	}
     	if gjson.GetBytes(data, `content.#(type=="tool_use")`).Exists() {
     		filtered := gjson.GetBytes(data, `content.#(type!="thinking")#`)
     		if filtered.Exists() {

    @@ -185,33 +176,11 @@ func (rw *ResponseRewriter) suppressAmpThinking(data []byte) []byte {
     				data, err = sjson.SetBytes(data, "content", filtered.Value())
     				if err != nil {
     					log.Warnf("Amp ResponseRewriter: failed to suppress thinking blocks: %v", err)
    -				} else {
    -					log.Debugf("Amp ResponseRewriter: Suppressed %d thinking blocks due to tool usage", originalCount-filteredCount)
     				}
     			}
     		}
     	}
     
    -	eventType := gjson.GetBytes(data, "type").String()
    -	indexResult := gjson.GetBytes(data, "index")
    -	if eventType == "content_block_start" && gjson.GetBytes(data, "content_block.type").String() == "thinking" && indexResult.Exists() {
    -		rw.markSuppressedContentBlock(int(indexResult.Int()))
    -		return nil
    -	}
    -	if gjson.GetBytes(data, "delta.type").String() == "thinking_delta" {
    -		if indexResult.Exists() {
    -			rw.markSuppressedContentBlock(int(indexResult.Int()))
    -		}
    -		return nil
    -	}
    -	if eventType == "content_block_stop" && indexResult.Exists() {
    -		index := int(indexResult.Int())
    -		if rw.isSuppressedContentBlock(index) {
    -			delete(rw.suppressedContentBlock, index)
    -			return nil
    -		}
    -	}
    -
     	return data
     }
     
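After these hunks, `suppressAmpThinking` only acts when `suppressThinking` is set. The flag is `true` on the model-mapping path and `providerName != "claude"` on the other rewriter path. The per-event bookkeeping that dropped streamed `thinking` blocks (`markSuppressedContentBlock` and `isSuppressedContentBlock`) is removed.

The remaining whole-message behavior is approximated below with the standard library. This sketch swaps `encoding/json` in for the `gjson`/`sjson` calls, and `stripThinking` is a hypothetical name.

```go
package main

import "encoding/json"

// stripThinking removes "thinking" blocks from the top-level "content" array
// of an Anthropic-style message, but only when enabled is true and the array
// also contains a "tool_use" block. Other payloads are returned unchanged.
// Because the object is re-marshaled, key order in the output may differ.
func stripThinking(data []byte, enabled bool) ([]byte, error) {
	if !enabled {
		return data, nil
	}
	var msg map[string]any
	if err := json.Unmarshal(data, &msg); err != nil {
		return nil, err
	}
	blocks, ok := msg["content"].([]any)
	if !ok {
		return data, nil
	}
	hasToolUse := false
	for _, b := range blocks {
		if m, ok := b.(map[string]any); ok && m["type"] == "tool_use" {
			hasToolUse = true
			break
		}
	}
	if !hasToolUse {
		return data, nil
	}
	filtered := make([]any, 0, len(blocks))
	for _, b := range blocks {
		if m, ok := b.(map[string]any); ok && m["type"] == "thinking" {
			continue // drop thinking blocks
		}
		filtered = append(filtered, b)
	}
	msg["content"] = filtered
	return json.Marshal(msg)
}
```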

    @@ -262,6 +231,10 @@ func (rw *ResponseRewriter) rewriteStreamChunk(chunk []byte) []byte {
     		jsonData := bytes.TrimPrefix(bytes.TrimSpace(lines[dataIdx]), []byte("data: "))
     		if len(jsonData) > 0 && jsonData[0] == '{' {
     			rewritten := rw.rewriteStreamEvent(jsonData)
    +			if rewritten == nil {
    +				i = dataIdx + 1
    +				continue
    +			}
     			// Emit event line
     			out = append(out, line)
     			// Emit blank lines between event and data

    @@ -287,7 +260,9 @@ func (rw *ResponseRewriter) rewriteStreamChunk(chunk []byte) []byte {
     		jsonData := bytes.TrimPrefix(trimmed, []byte("data: "))
     		if len(jsonData) > 0 && jsonData[0] == '{' {
     			rewritten := rw.rewriteStreamEvent(jsonData)
    -			out = append(out, append([]byte("data: "), rewritten...))
    +			if rewritten != nil {
    +				out = append(out, append([]byte("data: "), rewritten...))
    +			}
     			i++
     			continue
     		}

    @@ -102,7 +102,7 @@ func TestRewriteStreamChunk_MessageModel(t *testing.T) {
     }
     
     func TestRewriteStreamChunk_PreservesThinkingWithSignatureInjection(t *testing.T) {
    -	rw := &ResponseRewriter{suppressedContentBlock: make(map[int]struct{})}
    +	rw := &ResponseRewriter{}
     
     	chunk := []byte("event: content_block_start\ndata: {\"type\":\"content_block_start\",\"index\":0,\"content_block\":{\"type\":\"thinking\",\"thinking\":\"\"}}\n\nevent: content_block_delta\ndata: {\"type\":\"content_block_delta\",\"index\":0,\"delta\":{\"type\":\"thinking_delta\",\"thinking\":\"abc\"}}\n\nevent: content_block_stop\ndata: {\"type\":\"content_block_stop\",\"index\":0}\n\nevent: content_block_start\ndata: {\"type\":\"content_block_start\",\"index\":1,\"content_block\":{\"type\":\"tool_use\",\"name\":\"bash\",\"input\":{}}}\n\n")
     	result := rw.rewriteStreamChunk(chunk)

    @@ -323,6 +323,10 @@ func NewServer(cfg *config.Config, authManager *auth.Manager, accessManager *sdk
     // setupRoutes configures the API routes for the server.
     // It defines the endpoints and associates them with their respective handlers.
     func (s *Server) setupRoutes() {
    +	s.engine.GET("/healthz", func(c *gin.Context) {
    +		c.JSON(http.StatusOK, gin.H{"status": "ok"})
    +	})
    +
     	s.engine.GET("/management.html", s.serveManagementControlPanel)
     	openaiHandlers := openai.NewOpenAIAPIHandler(s.handlers)
     	geminiHandlers := gemini.NewGeminiAPIHandler(s.handlers)

    @@ -569,6 +573,8 @@ func (s *Server) registerManagementRoutes() {
     	mgmt.PUT("/quota-exceeded/switch-preview-model", s.mgmt.PutSwitchPreviewModel)
     	mgmt.PATCH("/quota-exceeded/switch-preview-model", s.mgmt.PutSwitchPreviewModel)
     
    +	mgmt.GET("/copilot-quota", s.mgmt.GetCopilotQuota)
    +
     	mgmt.GET("/api-keys", s.mgmt.GetAPIKeys)
     	mgmt.PUT("/api-keys", s.mgmt.PutAPIKeys)
     	mgmt.PATCH("/api-keys", s.mgmt.PatchAPIKeys)

    @@ -1,6 +1,7 @@
     package api
     
     import (
    +	"encoding/json"
     	"net/http"
     	"net/http/httptest"
     	"os"

    @@ -46,6 +47,28 @@ func newTestServer(t *testing.T) *Server {
     	return NewServer(cfg, authManager, accessManager, configPath)
     }
     
    +func TestHealthz(t *testing.T) {
    +	server := newTestServer(t)
    +
    +	req := httptest.NewRequest(http.MethodGet, "/healthz", nil)
    +	rr := httptest.NewRecorder()
    +	server.engine.ServeHTTP(rr, req)
    +
    +	if rr.Code != http.StatusOK {
    +		t.Fatalf("unexpected status code: got %d want %d; body=%s", rr.Code, http.StatusOK, rr.Body.String())
    +	}
    +
    +	var resp struct {
    +		Status string `json:"status"`
    +	}
    +	if err := json.Unmarshal(rr.Body.Bytes(), &resp); err != nil {
    +		t.Fatalf("failed to parse response JSON: %v; body=%s", err, rr.Body.String())
    +	}
    +	if resp.Status != "ok" {
    +		t.Fatalf("unexpected response status: got %q want %q", resp.Status, "ok")
    +	}
    +}
    +
     func TestAmpProviderModelRoutes(t *testing.T) {
     	testCases := []struct {
     		name string

    @@ -235,6 +235,74 @@ type CopilotModelEntry struct {
     	Capabilities map[string]any `json:"capabilities,omitempty"`