Mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git (synced 2026-03-30 01:06:39 +00:00)

Compare commits

383 Commits

| Author | SHA1 | Date |
|---|---|---|
| | f8d1bc06ea | |
| | d5930f4e44 | |
| | 9b7d7021af | |
| | e41c22ef44 | |
| | 55271403fb | |
| | 36fba66619 | |
| | b9b127a7ea | |
| | 2741e7b7b3 | |
| | 1767a56d4f | |
| | 779e6c2d2f | |
| | 73c831747b | |
| | b8b89f34f4 | |
| | 1fa094dac6 | |
| | f55754621f | |
| | ac26e7db43 | |
| | 10b824fcac | |
| | e5d3541b5a | |
| | 79755e76ea | |
| | 35f158d526 | |
| | 6962e09dd9 | |
| | 4c4cbd44da | |
| | 26eca8b6ba | |
| | 62b17f40a1 | |
| | 511b8a992e | |
| | 7dccc7ba2f | |
| | 70c90687fd | |
| | 8144ffd5c8 | |
| | 0ab977c236 | |
| | 224f0de353 | |
| | 6b45d311ec | |
| | d54de441d3 | |
| | 7386a70724 | |
| | 1821bf7051 | |
| | d42b5d4e78 | |
| | 1b7447b682 | |
| | 40dee4453a | |
| | 8902e1cccb | |
| | de5fe71478 | |
| | dcfbec2990 | |
| | c95620f90e | |
| | 754f3bcbc3 | |
| | 36973d4a6f | |
| | 9613f0b3f9 | |
| | 274f29e26b | |
| | c8e79c3787 | |
| | 8afef43887 | |
| | c1083cbfc6 | |
| | c89d19b300 | |
| | 1e6bc81cfd | |
| | 1a149475e0 | |
| | e5166841db | |
| | 19c52bcb60 | |
| | bb9b2d1758 | |
| | 7fa527193c | |
| | ed0eb51b4d | |
| | 0e4f669c8b | |
| | 76c064c729 | |
| | d2f652f436 | |
| | 6a452a54d5 | |
| | 9e5693e74f | |
| | 528b1a2307 | |
| | 0cc978ec1d | |
| | d312422ab4 | |
| | fee736933b | |
| | 09c92aa0b5 | |
| | 8c67b3ae64 | |
| | 000e4ceb4e | |
| | 5c99846ecf | |
| | cc32f5ff61 | |
| | fbff68b9e0 | |
| | 7e1a543b79 | |
| | d475aaba96 | |
| | 1dc4ecb1b8 | |
| | 1315f710f5 | |
| | 96f55570f7 | |
| | 0906aeca87 | |
| | 7333619f15 | |
| | 97c0487add | |
| | 74b862d8b8 | |
| | 2db8df8e38 | |
| | a576088d5f | |
| | 66ff916838 | |
| | 7b0453074e | |
| | a000eb523d | |
| | 18a4fedc7f | |
| | 5d6cdccda0 | |
| | 1b7f4ac3e1 | |
| | afc1a5b814 | |
| | 7ed38db54f | |
| | 28c10f4e69 | |
| | 6e12441a3b | |
| | 65c439c18d | |
| | 0ed2d16596 | |
| | db335ac616 | |
| | f3c59165d7 | |
| | e6690cb447 | |
| | 35907416b8 | |
| | e8bb350467 | |
| | 5331d51f27 | |
| | 755ca75879 | |
| | 2398ebad55 | |
| | c1bf298216 | |
| | e005208d76 | |
| | d1df70d02f | |
| | f81acd0760 | |
| | 636da4c932 | |
| | cccb77b552 | |
| | 2bd646ad70 | |
| | 52c1fa025e | |
| | 680105f84d | |
| | f7069e9548 | |
| | 7275e99b41 | |
| | c28b65f849 | |
| | 793840cdb4 | |
| | 8f421de532 | |
| | be2dd60ee7 | |
| | ea3e0b713e | |
| | 8179d5a8a4 | |
| | 6fa7abe434 | |
| | 5135c22cd6 | |
| | 1e27990561 | |
| | e1e9fc43c1 | |
| | b2921518ac | |
| | dd64adbeeb | |
| | 616d41c06a | |
| | e0e337aeb9 | |
| | d52839fced | |
| | 4022e69651 | |
| | 56073ded69 | |
| | 9738a53f49 | |
| | be3f8dbf7e | |
| | 9c6c3612a8 | |
| | 19e1a4447a | |
| | 7c2ad4cda2 | |
| | fb95813fbf | |
| | db63f9b5d6 | |
| | 25f6c4a250 | |
| | b24ae74216 | |
| | 59ad8f40dc | |
| | ff03dc6a2c | |
| | dc7187ca5b | |
| | b1dcff778c | |
| | cef2aeeb08 | |
| | bcd1e8cc34 | |
| | 198b3f4a40 | |
| | 9fee7f488e | |
| | 1b46d39b8b | |
| | c1241a98e2 | |
| | 8d8f5970ee | |
| | f90120f846 | |
| | 0b94d36c4a | |
| | 152c310bb7 | |
| | f6bbca35ab | |
| | c8cee6a209 | |
| | b5701f416b | |
| | 4b1a404fcb | |
| | b93cce5412 | |
| | c6cb24039d | |
| | 5382408489 | |
| | 67669196ed | |
| | 5c817a9b42 | |
| | 58fd9bf964 | |
| | 7b3dfc67bc | |
| | cdd24052d3 | |
| | 5da0decef6 | |
| | 733fd8edab | |
| | af27f2b8bc | |
| | 2e1925d762 | |
| | 77254bd074 | |
| | 5b6342e6ac | |
| | 3960c93d51 | |
| | 339a81b650 | |
| | 560c020477 | |
| | aec65e3be3 | |
| | f44f0702f8 | |
| | b76b79068f | |
| | 34c8ccb961 | |
| | d08e164af3 | |
| | 8178efaeda | |
| | 86d5db472a | |
| | 020d36f6e8 | |
| | 1db23979e8 | |
| | c3d5dbe96f | |
| | 5484489406 | |
| | 0ac52da460 | |
| | 817cebb321 | |
| | 683f3709d6 | |
| | dbd42a42b2 | |
| | ec24baf757 | |
| | dea3e74d35 | |
| | a6c3042e34 | |
| | 861537c9bd | |
| | 8c92cb0883 | |
| | 89d7be9525 | |
| | 2b79d7f22f | |
| | 2bb686f594 | |
| | 163fe287ce | |
| | 70988d387b | |
| | 52058a1659 | |
| | df5595a0c9 | |
| | ddaa9d2436 | |
| | 7b7b258c38 | |
| | a00f774f5a | |
| | 9daf1ba8b5 | |
| | 76f2359637 | |
| | dcb1c9be8a | |
| | a24f4ace78 | |
| | c631df8c3b | |
| | 54c3eb1b1e | |
| | bb28cd26ad | |
| | 046865461e | |
| | cf74ed2f0c | |
| | c3762328a5 | |
| | e333fbea3d | |
| | efbe36d1d4 | |
| | 8553cfa40e | |
| | 30d5c95b26 | |
| | d1e3195e6f | |
| | 05a35662ae | |
| | ce53d3a287 | |
| | 4cc99e7449 | |
| | 71773fe032 | |
| | a1e0fa0f39 | |
| | fc2f0b6983 | |
| | 5c9997cdac | |
| | 6f81046730 | |
| | 0687472d01 | |
| | 7739738fb3 | |
| | 99d1ce247b | |
| | f5941a411c | |
| | ba672bbd07 | |
| | d9c6627a53 | |
| | 2e9907c3ac | |
| | 90afb9cb73 | |
| | d0cc0cd9a5 | |
| | 338321e553 | |
| | 182b31963a | |
| | 4f48e5254a | |
| | 15dd5db1d7 | |
| | 424711b718 | |
| | 91a2b1f0b4 | |
| | 2b134fc378 | |
| | b9153719b0 | |
| | 631e5c8331 | |
| | e9c60a0a67 | |
| | 98a1bb5a7f | |
| | ca90487a8c | |
| | 1042489f85 | |
| | 38277c1ea6 | |
| | ee0c24628f | |
| | 3a18f6fcca | |
| | 099e734a02 | |
| | a52da26b5d | |
| | 522a68a4ea | |
| | a02eda54d0 | |
| | 97ef633c57 | |
| | dae8463ba1 | |
| | 7c1299922e | |
| | ddcf1f279d | |
| | 7e6bb8fdc5 | |
| | 9cee8ef87b | |
| | 93fb841bcb | |
| | 0c05131aeb | |
| | 5ebc58fab4 | |
| | 2b609dd891 | |
| | a8cbc68c3e | |
| | 11a795a01c | |
| | 89c428216e | |
| | 2695a99623 | |
| | 242aecd924 | |
| | ce8cc1ba33 | |
| | ad5253bd2b | |
| | 97fdd2e088 | |
| | 9397f7049f | |
| | a14d19b92c | |
| | 8ae0c05ea6 | |
| | 8822f20d17 | |
| | 553d6f50ea | |
| | f0e5a5a367 | |
| | f6dfea9357 | |
| | cc8dc7f62c | |
| | a3846ea513 | |
| | 8d44be858e | |
| | 0e6bb076e9 | |
| | ac135fc7cb | |
| | 4e1d09809d | |
| | 9e855f8100 | |
| | 25680a8259 | |
| | 13c93e8cfd | |
| | 88aa1b9fd1 | |
| | 352cb98ff0 | |
| | ac95e92829 | |
| | 8526c2da25 | |
| | 68a6cabf8b | |
| | ac0e387da1 | |
| | 7fe1d102cb | |
| | 5850492a93 | |
| | fdbd4041ca | |
| | ebef1fae2a | |
| | c51851689b | |
| | 419bf784ab | |
| | 4bbeb92e9a | |
| | b436dad8bc | |
| | 6ae15d6c44 | |
| | 0468bde0d6 | |
| | 1d7329e797 | |
| | 48ffc4dee7 | |
| | 7ebd8f0c44 | |
| | b680c146c1 | |
| | 7d6660d181 | |
| | d8e3d4e2b6 | |
| | d26ad8224d | |
| | 5c84d69d42 | |
| | 527e4b7f26 | |
| | b48485b42b | |
| | 79009bb3d4 | |
| | 26fc611f86 | |
| | b43743d4f1 | |
| | 179e5434b1 | |
| | 9f95b31158 | |
| | 5da07eae4c | |
| | 835ae178d4 | |
| | c80ab8bf0d | |
| | ce87714ef1 | |
| | 0452b869e8 | |
| | d2e5857b82 | |
| | f9b005f21f | |
| | 532107b4fa | |
| | c44793789b | |
| | 4e99525279 | |
| | 7547d1d0b3 | |
| | 68934942d0 | |
| | 09fec34e1c | |
| | 9229708b6c | |
| | 914db94e79 | |
| | 660bd7eff5 | |
| | b907d21851 | |
| | dd44413ba5 | |
| | 10fa0f2062 | |
| | d6cc976d1f | |
| | 8aa2cce8c5 | |
| | bf9b2c49df | |
| | 77b42c6165 | |
| | 446150a747 | |
| | 1cbc4834e1 | |
| | 30338ecec4 | |
| | 9a37defed3 | |
| | c83a057996 | |
| | a8a5d03c33 | |
| | 76aa917882 | |
| | 6ac9b31e4e | |
| | 0ad3e8457f | |
| | 444a47ae63 | |
| | 725f4fdff4 | |
| | c23e46f45d | |
| | b148820c35 | |
| | 134f41496d | |
| | c5838dd58d | |
| | b6ca5ef7ce | |
| | 1ae994b4aa | |
| | 84e9793e61 | |
| | 32e64dacfd | |
| | cc1d8f6629 | |
| | 5446cd2b02 | |
| | 8de0885b7d | |
| | 68dd2bfe82 | |
| | 2baf35b3ef | |
| | 846e75b893 | |
| | fc0257d6d9 | |
| | f3c164d345 | |
| | 4040b1e766 | |
| | b7588428c5 | |
| | 8f97a5f77c | |
| | 2a4d3e60f3 | |
| | 8b5af2ab84 | |
| | d887716ebd | |
| | 5dc1848466 | |
| | 9491517b26 | |
| | 9370b5bd04 | |
| | abb51a0d93 | |
| | c8d809131b | |
| | dd71c73a9f | |
| | 2615f489d6 | |

@@ -31,6 +31,7 @@ bin/*
 .agent/*
 .agents/*
 .opencode/*
+.idea/*
 .bmad/*
 _bmad/*
 _bmad-output/*

.github/workflows/docker-image.yml (vendored): 14 lines changed

@@ -16,6 +16,10 @@ jobs:
 steps:
 - name: Checkout
 uses: actions/checkout@v4
+- name: Refresh models catalog
+run: |
+git fetch --depth 1 https://github.com/router-for-me/models.git main
+git show FETCH_HEAD:models.json > internal/registry/models/models.json
 - name: Set up Docker Buildx
 uses: docker/setup-buildx-action@v3
 - name: Login to DockerHub
@@ -25,7 +29,7 @@ jobs:
 password: ${{ secrets.DOCKERHUB_TOKEN }}
 - name: Generate Build Metadata
 run: |
-echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
 echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
 echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
 - name: Build and push (amd64)
@@ -47,6 +51,10 @@ jobs:
 steps:
 - name: Checkout
 uses: actions/checkout@v4
+- name: Refresh models catalog
+run: |
+git fetch --depth 1 https://github.com/router-for-me/models.git main
+git show FETCH_HEAD:models.json > internal/registry/models/models.json
 - name: Set up Docker Buildx
 uses: docker/setup-buildx-action@v3
 - name: Login to DockerHub
@@ -56,7 +64,7 @@ jobs:
 password: ${{ secrets.DOCKERHUB_TOKEN }}
 - name: Generate Build Metadata
 run: |
-echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
 echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
 echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
 - name: Build and push (arm64)
@@ -90,7 +98,7 @@ jobs:
 password: ${{ secrets.DOCKERHUB_TOKEN }}
 - name: Generate Build Metadata
 run: |
-echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
 echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
 echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
 - name: Create and push multi-arch manifests
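The `Generate Build Metadata` step in this workflow now takes `VERSION` from `GITHUB_REF_NAME`, the short name of the branch or tag that triggered the run, instead of from `git describe`. A minimal local sketch of what the changed line writes, assuming a tag-triggered run; the tag value and the temporary file standing in for `$GITHUB_ENV` are made up for illustration:

```shell
# Made-up values imitating a tag-triggered GitHub Actions run.
GITHUB_REF_NAME="v0.0.0-example"
GITHUB_ENV="$(mktemp)"   # stand-in for the file the runner provides

# New form from the diff: the version is the triggering ref name.
echo "VERSION=${GITHUB_REF_NAME}" >> "$GITHUB_ENV"
# Same timestamp format as the workflow's BUILD_DATE line.
echo "BUILD_DATE=$(date -u +%Y-%m-%dT%H:%M:%SZ)" >> "$GITHUB_ENV"

cat "$GITHUB_ENV"
```

On a branch-triggered run the same line would record the branch name, so the value is only a release version when the workflow is triggered by a tag.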

.github/workflows/pr-test-build.yml (vendored): 4 lines changed

@@ -12,6 +12,10 @@ jobs:
 steps:
 - name: Checkout
 uses: actions/checkout@v4
+- name: Refresh models catalog
+run: |
+git fetch --depth 1 https://github.com/router-for-me/models.git main
+git show FETCH_HEAD:models.json > internal/registry/models/models.json
 - name: Set up Go
 uses: actions/setup-go@v5
 with:
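The `Refresh models catalog` step added to these workflows fetches one commit from another repository and writes a single file out of `FETCH_HEAD` without checking that repository out. A self-contained sketch of the same two commands, assuming `git` is installed; the local `models` repository, its JSON content, and the temporary directories are hypothetical stand-ins for the real upstream:

```shell
set -eu
work="$(mktemp -d)"

# Hypothetical stand-in for the upstream models repository.
git init -q "$work/models"
git -C "$work/models" symbolic-ref HEAD refs/heads/main
printf '{"models": []}\n' > "$work/models/models.json"
git -C "$work/models" add models.json
git -C "$work/models" -c user.name=demo -c user.email=demo@example.com \
  -c commit.gpgsign=false commit -q -m "add catalog"

# Stand-in for the checked-out project.
git init -q "$work/project"
mkdir -p "$work/project/internal/registry/models"
cd "$work/project"

# The two commands from the workflow step, pointed at the local stand-in.
git fetch -q --depth 1 "file://$work/models" main
git show FETCH_HEAD:models.json > internal/registry/models/models.json

cat internal/registry/models/models.json
```

Because `git show` only prints the blob, the project's own history and index are left untouched; the refreshed file shows up as an ordinary working-tree change.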

.github/workflows/release.yaml (vendored): 9 lines changed

@@ -16,6 +16,10 @@ jobs:
 - uses: actions/checkout@v4
 with:
 fetch-depth: 0
+- name: Refresh models catalog
+run: |
+git fetch --depth 1 https://github.com/router-for-me/models.git main
+git show FETCH_HEAD:models.json > internal/registry/models/models.json
 - run: git fetch --force --tags
 - uses: actions/setup-go@v4
 with:
@@ -23,15 +27,14 @@ jobs:
 cache: true
 - name: Generate Build Metadata
 run: |
-VERSION=$(git describe --tags --always --dirty)
-echo "VERSION=${VERSION}" >> $GITHUB_ENV
+echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
 echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
 echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
 - uses: goreleaser/goreleaser-action@v4
 with:
 distribution: goreleaser
 version: latest
-args: release --clean
+args: release --clean --skip=validate
 env:
 GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
 VERSION: ${{ env.VERSION }}

.gitignore (vendored): 2 lines changed

@@ -1,6 +1,7 @@
 # Binaries
 cli-proxy-api
 cliproxy
+/server
 *.exe
 
 
@@ -44,6 +45,7 @@ GEMINI.md
 .agents/*
 .agents/*
 .opencode/*
+.idea/*
 .bmad/*
 _bmad/*
 _bmad-output/*

@@ -1,3 +1,5 @@
+version: 2
+
 builds:
 - id: "cli-proxy-api-plus"
 env:
@@ -6,6 +8,7 @@ builds:
 - linux
 - windows
 - darwin
+- freebsd
 goarch:
 - amd64
 - arm64

README.md: 126 lines changed

@@ -8,132 +8,6 @@ All third-party provider support is maintained by community contributors; CLIPro
 
 The Plus release stays in lockstep with the mainline features.
 
-## Differences from the Mainline
-
-- Added GitHub Copilot support (OAuth login), provided by [em4go](https://github.com/em4go/CLIProxyAPI/tree/feature/github-copilot-auth)
-- Added Kiro (AWS CodeWhisperer) support (OAuth login), provided by [fuko2935](https://github.com/fuko2935/CLIProxyAPI/tree/feature/kiro-integration), [Ravens2121](https://github.com/Ravens2121/CLIProxyAPIPlus/)
-
-## New Features (Plus Enhanced)
-
-- **OAuth Web Authentication**: Browser-based OAuth login for Kiro with beautiful web UI
-- **Rate Limiter**: Built-in request rate limiting to prevent API abuse
-- **Background Token Refresh**: Automatic token refresh 10 minutes before expiration
-- **Metrics & Monitoring**: Request metrics collection for monitoring and debugging
-- **Device Fingerprint**: Device fingerprint generation for enhanced security
-- **Cooldown Management**: Smart cooldown mechanism for API rate limits
-- **Usage Checker**: Real-time usage monitoring and quota management
-- **Model Converter**: Unified model name conversion across providers
-- **UTF-8 Stream Processing**: Improved streaming response handling
-
-## Kiro Authentication
-
-### CLI Login
-
-> **Note:** Google/GitHub login is not available for third-party applications due to AWS Cognito restrictions.
-
-**AWS Builder ID** (recommended):
-
-```bash
-# Device code flow
-./CLIProxyAPI --kiro-aws-login
-
-# Authorization code flow
-./CLIProxyAPI --kiro-aws-authcode
-```
-
-**Import token from Kiro IDE:**
-
-```bash
-./CLIProxyAPI --kiro-import
-```
-
-To get a token from Kiro IDE:
-
-1. Open Kiro IDE and login with Google (or GitHub)
-2. Find the token file: `~/.kiro/kiro-auth-token.json`
-3. Run: `./CLIProxyAPI --kiro-import`
-
-**AWS IAM Identity Center (IDC):**
-
-```bash
-./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start
-
-# Specify region
-./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2
-```
-
-**Additional flags:**
-
-| Flag | Description |
-|------|-------------|
-| `--no-browser` | Don't open browser automatically, print URL instead |
-| `--no-incognito` | Use existing browser session (Kiro defaults to incognito). Useful for corporate SSO that requires an authenticated browser session |
-| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |
-| `--kiro-idc-region` | IDC region (default: `us-east-1`) |
-| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |
-
-### Web-based OAuth Login
-
-Access the Kiro OAuth web interface at:
-
-```
-http://your-server:8080/v0/oauth/kiro
-```
-
-This provides a browser-based OAuth flow for Kiro (AWS CodeWhisperer) authentication with:
-- AWS Builder ID login
-- AWS Identity Center (IDC) login
-- Token import from Kiro IDE
-
-## Quick Deployment with Docker
-
-### One-Command Deployment
-
-```bash
-# Create deployment directory
-mkdir -p ~/cli-proxy && cd ~/cli-proxy
-
-# Create docker-compose.yml
-cat > docker-compose.yml << 'EOF'
-services:
-cli-proxy-api:
-image: eceasy/cli-proxy-api-plus:latest
-container_name: cli-proxy-api-plus
-ports:
-- "8317:8317"
-volumes:
-- ./config.yaml:/CLIProxyAPI/config.yaml
-- ./auths:/root/.cli-proxy-api
-- ./logs:/CLIProxyAPI/logs
-restart: unless-stopped
-EOF
-
-# Download example config
-curl -o config.yaml https://raw.githubusercontent.com/router-for-me/CLIProxyAPIPlus/main/config.example.yaml
-
-# Pull and start
-docker compose pull && docker compose up -d
-```
-
-### Configuration
-
-Edit `config.yaml` before starting:
-
-```yaml
-# Basic configuration example
-server:
-port: 8317
-
-# Add your provider configurations here
-```
-
-### Update to Latest Version
-
-```bash
-cd ~/cli-proxy
-docker compose pull && docker compose up -d
-```
-
 ## Contributing
 
 This project only accepts pull requests that relate to third-party provider support. Any pull requests unrelated to third-party provider support will be rejected.

README_CN.md: 130 lines changed

@@ -1,139 +1,11 @@
 # CLIProxyAPI Plus
 
-[English](README.md) | 中文
+[English](README.md) | 中文 | [日本語](README_JA.md)
 
 This is the Plus version of [CLIProxyAPI](https://github.com/router-for-me/CLIProxyAPI), which adds third-party provider support on top of the original.
 
 All third-party provider support is provided by third-party community maintainers; CLIProxyAPI does not provide technical support for it. To get support, please contact the corresponding community maintainer.
 
-The mainline features of this Plus version are kept forcibly in sync with the mainline.
-
-## Differences from the Mainline
-
-- Added GitHub Copilot support (OAuth login), provided by [em4go](https://github.com/em4go/CLIProxyAPI/tree/feature/github-copilot-auth)
-- Added Kiro (AWS CodeWhisperer) support (OAuth login), provided by [fuko2935](https://github.com/fuko2935/CLIProxyAPI/tree/feature/kiro-integration), [Ravens2121](https://github.com/Ravens2121/CLIProxyAPIPlus/)
-
-## New Features (Plus Enhanced)
-
-- **OAuth Web Authentication**: Browser-based OAuth login for Kiro, with a polished web UI
-- **Rate Limiter**: Built-in request rate limiting to prevent API abuse
-- **Background Token Refresh**: Automatic token refresh 10 minutes before expiration
-- **Metrics & Monitoring**: Request metrics collection for monitoring and debugging
-- **Device Fingerprint**: Device fingerprint generation for enhanced security
-- **Cooldown Management**: Smart cooldown mechanism for handling API rate limits
-- **Usage Checker**: Real-time usage monitoring and quota management
-- **Model Converter**: Unified model name conversion across providers
-- **UTF-8 Stream Processing**: Improved streaming response handling
-
-## Kiro Authentication
-
-### CLI Login
-
-> **Note:** Due to AWS Cognito restrictions, Google/GitHub login is not available for third-party applications.
-
-**AWS Builder ID** (recommended):
-
-```bash
-# Device code flow
-./CLIProxyAPI --kiro-aws-login
-
-# Authorization code flow
-./CLIProxyAPI --kiro-aws-authcode
-```
-
-**Import token from Kiro IDE:**
-
-```bash
-./CLIProxyAPI --kiro-import
-```
-
-Steps to get the token:
-
-1. Open Kiro IDE and log in with Google (or GitHub)
-2. Find the token file: `~/.kiro/kiro-auth-token.json`
-3. Run: `./CLIProxyAPI --kiro-import`
-
-**AWS IAM Identity Center (IDC):**
-
-```bash
-./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start
-
-# Specify region
-./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2
-```
-
-**Additional flags:**
-
-| Flag | Description |
-|------|------|
-| `--no-browser` | Do not open the browser automatically; print the URL |
-| `--no-incognito` | Use the existing browser session (Kiro defaults to incognito mode). Suited to corporate SSO that requires an already authenticated browser session |
-| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |
-| `--kiro-idc-region` | IDC region (default: `us-east-1`) |
-| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |
-
-### Web-based OAuth Login
-
-Access the Kiro OAuth web authentication interface at:
-
-```
-http://your-server:8080/v0/oauth/kiro
-```
-
-This provides a browser-based OAuth authentication flow for Kiro (AWS CodeWhisperer), supporting:
-- AWS Builder ID login
-- AWS Identity Center (IDC) login
-- Token import from Kiro IDE
-
-## Quick Deployment with Docker
-
-### One-Command Deployment
-
-```bash
-# Create deployment directory
-mkdir -p ~/cli-proxy && cd ~/cli-proxy
-
-# Create docker-compose.yml
-cat > docker-compose.yml << 'EOF'
-services:
-cli-proxy-api:
-image: eceasy/cli-proxy-api-plus:latest
-container_name: cli-proxy-api-plus
-ports:
-- "8317:8317"
-volumes:
-- ./config.yaml:/CLIProxyAPI/config.yaml
-- ./auths:/root/.cli-proxy-api
-- ./logs:/CLIProxyAPI/logs
-restart: unless-stopped
-EOF
-
-# Download example config
-curl -o config.yaml https://raw.githubusercontent.com/router-for-me/CLIProxyAPIPlus/main/config.example.yaml
-
-# Pull and start
-docker compose pull && docker compose up -d
-```
-
-### Configuration
-
-Edit `config.yaml` before starting:
-
-```yaml
-# Basic configuration example
-server:
-port: 8317
-
-# Add your provider configurations here
-```
-
-### Update to Latest Version
-
-```bash
-cd ~/cli-proxy
-docker compose pull && docker compose up -d
-```
-
 ## Contributing
 
 This project only accepts pull requests for third-party provider support. Any pull request not related to third-party provider support will be rejected.

199

README_JA.md

Normal file

199

README_JA.md

Normal file

@@ -0,0 +1,199 @@

|

|||||||

|

# CLI Proxy API

|

||||||

|

|

||||||

|

[English](README.md) | [中文](README_CN.md) | 日本語

|

||||||

|

|

||||||

|

CLI向けのOpenAI/Gemini/Claude/Codex互換APIインターフェースを提供するプロキシサーバーです。

|

||||||

|

|

||||||

|

OAuth経由でOpenAI Codex(GPTモデル)およびClaude Codeもサポートしています。

|

||||||

|

|

||||||

|

ローカルまたはマルチアカウントのCLIアクセスを、OpenAI(Responses含む)/Gemini/Claude互換のクライアントやSDKで利用できます。

|

||||||

|

|

||||||

|

## スポンサー

|

||||||

|

|

||||||

|

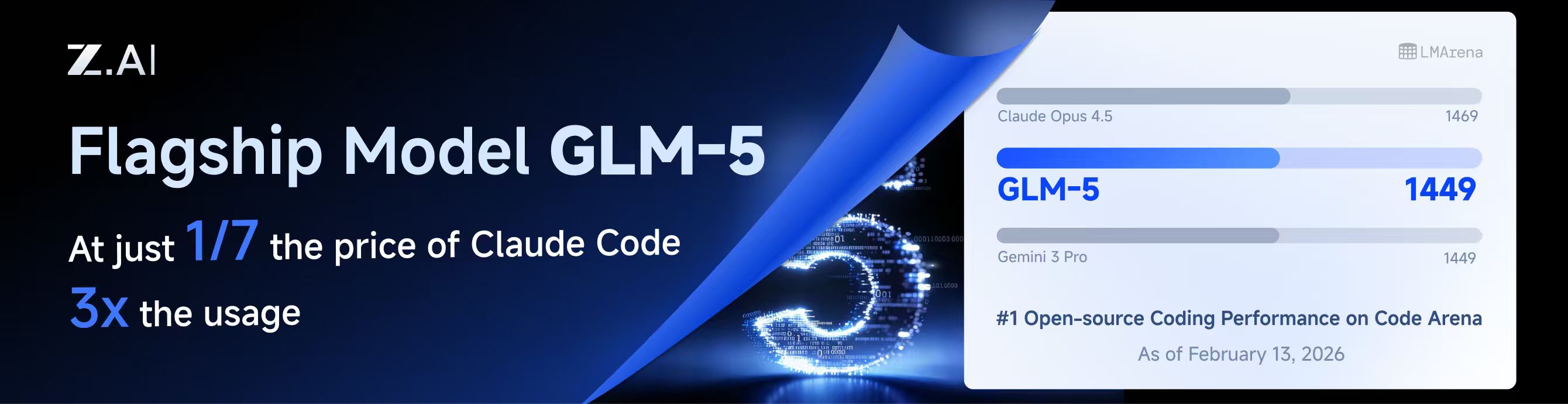

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||||

|

|

||||||

|

本プロジェクトはZ.aiにスポンサーされており、GLM CODING PLANの提供を受けています。

|

||||||

|

|

||||||

|

GLM CODING PLANはAIコーディング向けに設計されたサブスクリプションサービスで、月額わずか$10から利用可能です。フラッグシップのGLM-4.7および(GLM-5はProユーザーのみ利用可能)モデルを10以上の人気AIコーディングツール(Claude Code、Cline、Roo Codeなど)で利用でき、開発者にトップクラスの高速かつ安定したコーディング体験を提供します。

|

||||||

|

|

||||||

|

GLM CODING PLANを10%割引で取得:https://z.ai/subscribe?ic=8JVLJQFSKB

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

<table>

|

||||||

|

<tbody>

|

||||||

|

<tr>

|

||||||

|

<td width="180"><a href="https://www.packyapi.com/register?aff=cliproxyapi"><img src="./assets/packycode.png" alt="PackyCode" width="150"></a></td>

|

||||||

|

<td>PackyCodeのスポンサーシップに感謝します!PackyCodeは信頼性が高く効率的なAPIリレーサービスプロバイダーで、Claude Code、Codex、Geminiなどのリレーサービスを提供しています。PackyCodeは当ソフトウェアのユーザーに特別割引を提供しています:<a href="https://www.packyapi.com/register?aff=cliproxyapi">こちらのリンク</a>から登録し、チャージ時にプロモーションコード「cliproxyapi」を入力すると10%割引になります。</td>

|

||||||

|

</tr>

|

||||||

|

<tr>

|

||||||

|

<td width="180"><a href="https://www.aicodemirror.com/register?invitecode=TJNAIF"><img src="./assets/aicodemirror.png" alt="AICodeMirror" width="150"></a></td>

|

||||||

|

<td>AICodeMirrorのスポンサーシップに感謝します!AICodeMirrorはClaude Code / Codex / Gemini CLI向けの公式高安定性リレーサービスを提供しており、エンタープライズグレードの同時接続、迅速な請求書発行、24時間365日の専任技術サポートを備えています。Claude Code / Codex / Geminiの公式チャネルが元の価格の38% / 2% / 9%で利用でき、チャージ時にはさらに割引があります!CLIProxyAPIユーザー向けの特別特典:<a href="https://www.aicodemirror.com/register?invitecode=TJNAIF">こちらのリンク</a>から登録すると、初回チャージが20%割引になり、エンタープライズのお客様は最大25%割引を受けられます!</td>

|

||||||

|

</tr>

|

||||||

|

<tr>

|

||||||

|

<td width="180"><a href="https://shop.bmoplus.com/?utm_source=github"><img src="./assets/bmoplus.png" alt="BmoPlus" width="150"></a></td>

|

||||||

|

<td>本プロジェクトにご支援いただいた BmoPlus に感謝いたします!BmoPlusは、AIサブスクリプションのヘビーユーザー向けに特化した信頼性の高いAIアカウントサービスプロバイダーであり、安定した ChatGPT Plus / ChatGPT Pro (完全保証) / Claude Pro / Super Grok / Gemini Pro の公式代行チャージおよび即納アカウントを提供しています。こちらの<a href="https://shop.bmoplus.com/?utm_source=github">BmoPlus AIアカウント専門店/代行チャージ</a>経由でご登録・ご注文いただいたユーザー様は、GPTを <b>公式サイト価格の約1割(90% OFF)</b> という驚異的な価格でご利用いただけます!</td>

|

||||||

|

</tr>

|

||||||

|

<tr>

|

||||||

|

<td width="180"><a href="https://www.lingtrue.com/register"><img src="./assets/lingtrue.png" alt="LingtrueAPI" width="150"></a></td>

|

||||||

|

<td>LingtrueAPIのスポンサーシップに感謝します!LingtrueAPIはグローバルな大規模モデルAPIリレーサービスプラットフォームで、Claude Code、Codex、GeminiなどのトップモデルAPI呼び出しサービスを提供し、ユーザーが低コストかつ高い安定性で世界中のAI能力に接続できるよう支援しています。LingtrueAPIは本ソフトウェアのユーザーに特別割引を提供しています:<a href="https://www.lingtrue.com/register">こちらのリンク</a>から登録し、初回チャージ時にプロモーションコード「LingtrueAPI」を入力すると10%割引になります。</td>

|

||||||

|

</tr>

|

||||||

|

</tbody>

|

||||||

|

</table>

|

||||||

|

|

||||||

|

## Overview

- OpenAI/Gemini/Claude-compatible API endpoints for CLI models
- OpenAI Codex support (GPT models) via OAuth login
- Claude Code support via OAuth login
- Qwen Code support via OAuth login
- iFlow support via OAuth login
- Amp CLI and IDE extension support with provider routing
- Streaming and non-streaming responses
- Function calling/tools support
- Multimodal input support (text and images)
- Multiple accounts with round-robin load balancing (Gemini, OpenAI, Claude, Qwen, and iFlow)
- Simple CLI authentication flows (Gemini, OpenAI, Claude, Qwen, and iFlow)
- Generative Language API key support
- AI Studio Build multi-account load balancing
- Gemini CLI multi-account load balancing
- Claude Code multi-account load balancing
- Qwen Code multi-account load balancing
- iFlow multi-account load balancing
- OpenAI Codex multi-account load balancing
- OpenAI-compatible upstream providers via configuration (e.g., OpenRouter)
- Reusable Go SDK for embedding the proxy (see `docs/sdk-usage.md`)
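
The round-robin load balancing mentioned in the list above can be pictured with a minimal sketch. The `roundRobin` type, its fields, and the account names are hypothetical and are not taken from the CLIProxyAPI source; the real selector may weigh quota, cooldowns, or disabled accounts as well.

```go
package main

import (
	"fmt"
	"sync"
)

// roundRobin hands out accounts in rotation. It is a hypothetical
// illustration, not the selector used by CLIProxyAPI.
type roundRobin struct {
	mu       sync.Mutex
	accounts []string
	next     int
}

// pick returns the next account in rotation, or "" when none are configured.
func (r *roundRobin) pick() string {
	r.mu.Lock()
	defer r.mu.Unlock()
	if len(r.accounts) == 0 {
		return ""
	}
	a := r.accounts[r.next]
	r.next = (r.next + 1) % len(r.accounts)
	return a
}

func main() {
	rr := &roundRobin{accounts: []string{"account-a", "account-b", "account-c"}}
	for i := 0; i < 4; i++ {
		fmt.Println(rr.pick()) // account-a, account-b, account-c, account-a
	}
}
```

The mutex keeps the rotation consistent when several requests pick an account concurrently.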

## Getting Started

CLIProxyAPI guide: [https://help.router-for.me/](https://help.router-for.me/)

## Management API

See [MANAGEMENT_API.md](https://help.router-for.me/management/api)

## Amp CLI Support

CLIProxyAPI includes integrated support for [Amp CLI](https://ampcode.com) and the Amp IDE extensions, so you can use your Google/ChatGPT/Claude OAuth subscriptions with Amp's coding tools:

- Provider route aliases for Amp's API patterns (`/api/provider/{provider}/v1...`)
- Management proxy for OAuth authentication and account features
- Smart model fallback with automatic routing
- **Model mapping** that routes unavailable models to alternatives (e.g., `claude-opus-4.5` → `claude-sonnet-4`)
- Security-first design with localhost-only management endpoints
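
As a rough illustration of the model-mapping idea above, the sketch below falls back to a mapped alternative when the requested model is unavailable. The function `resolveModel` and both maps are assumptions made for this example; they do not reflect CLIProxyAPI's actual configuration format or code.

```go
package main

import "fmt"

// resolveModel returns the model to route to: the requested model when it is
// available, otherwise its mapped alternative. Hypothetical helper.
func resolveModel(requested string, available map[string]bool, mapping map[string]string) (string, bool) {
	if available[requested] {
		return requested, true
	}
	if alt, ok := mapping[requested]; ok && available[alt] {
		return alt, true
	}
	return "", false
}

func main() {
	available := map[string]bool{"claude-sonnet-4": true}
	mapping := map[string]string{"claude-opus-4.5": "claude-sonnet-4"}

	model, ok := resolveModel("claude-opus-4.5", available, mapping)
	fmt.Println(model, ok) // claude-sonnet-4 true
}
```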

When you need the request/response shape of a specific backend family, prefer the provider-specific paths over the unified `/v1/...` endpoints.

- `/api/provider/{provider}/v1/messages` for messages-style backends
- `/api/provider/{provider}/v1beta/models/...` for model-scoped generate endpoints
- `/api/provider/{provider}/v1/chat/completions` for chat-completions backends

These paths help select the protocol surface, but they do not by themselves pin the inference backend when the same client-visible model name is reused across multiple backends. Inference routing still follows the model/alias resolution of the request. To pin a backend strictly, use unique aliases or prefixes, or avoid overlapping client-visible model names altogether.
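
A small sketch of how a client might assemble these provider-specific paths. The helper `providerPath`, the protocol labels, and the provider value `claude` are hypothetical; only the two fully spelled-out path patterns above are used, and the generate-style path is left out because its suffix is elided in the text.

```go
package main

import (
	"fmt"
	"net/url"
)

// providerPath builds a provider-specific route for the given protocol
// surface. Hypothetical helper; the protocol labels are invented for this sketch.
func providerPath(provider, protocol string) (string, error) {
	base := "/api/provider/" + url.PathEscape(provider)
	switch protocol {
	case "messages":
		return base + "/v1/messages", nil
	case "chat-completions":
		return base + "/v1/chat/completions", nil
	default:
		return "", fmt.Errorf("unknown protocol %q", protocol)
	}
}

func main() {
	p, err := providerPath("claude", "messages")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(p) // /api/provider/claude/v1/messages
}
```

Choosing the path only fixes the request/response shape; the `model` field in the request body still decides which backend runs the inference.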

**→ [Complete Amp CLI Integration Guide](https://help.router-for.me/agent-client/amp-cli.html)**

## SDK Docs

- Usage: [docs/sdk-usage.md](docs/sdk-usage.md)
- Advanced (executors and translators): [docs/sdk-advanced.md](docs/sdk-advanced.md)
- Access: [docs/sdk-access.md](docs/sdk-access.md)
- Watcher: [docs/sdk-watcher.md](docs/sdk-watcher.md)
- Custom provider example: `examples/custom-provider`

## Contributing

Contributions are welcome! Feel free to submit a Pull Request.

1. Fork the repository
2. Create a feature branch (`git checkout -b feature/amazing-feature`)
3. Commit your changes (`git commit -m 'Add some amazing feature'`)
4. Push to the branch (`git push origin feature/amazing-feature`)
5. Open a Pull Request

## Related Projects

The following projects are based on CLIProxyAPI:

### [vibeproxy](https://github.com/automazeio/vibeproxy)

Native macOS menu bar app for using your Claude Code and ChatGPT subscriptions with AI coding tools - no API keys needed

### [Subtitle Translator](https://github.com/VjayC/SRT-Subtitle-Translator-Validator)

Browser-based tool that translates SRT subtitles using your Gemini subscription via CLIProxyAPI, with automatic validation and error correction - no API keys needed

### [CCS (Claude Code Switch)](https://github.com/kaitranntt/ccs)

CLI wrapper for instantly switching between multiple Claude accounts and alternative models (Gemini, Codex, Antigravity) via CLIProxyAPI OAuth - no API keys needed

### [ProxyPal](https://github.com/heyhuynhgiabuu/proxypal)

Native macOS GUI for managing CLIProxyAPI: configure providers, model mappings, and endpoints via OAuth - no API keys needed

### [Quotio](https://github.com/nguyenphutrong/quotio)

Native macOS menu bar app that unifies Claude, Gemini, OpenAI, Qwen, and Antigravity subscriptions with real-time quota tracking and smart automatic failover, for coding tools such as Claude Code, OpenCode, and Droid - no API keys needed

### [CodMate](https://github.com/loocor/CodMate)

Native macOS SwiftUI app for managing CLI AI sessions (Codex, Claude Code, Gemini CLI), with unified provider management, Git review, project organization, global search, and terminal integration. It integrates with CLIProxyAPI to provide OAuth authentication for Codex, Claude, Gemini, Antigravity, and Qwen Code, and supports rerouting built-in and third-party providers through a single proxy endpoint - no API keys needed for OAuth providers

### [ProxyPilot](https://github.com/Finesssee/ProxyPilot)

CLIProxyAPI fork for Windows with a TUI, system tray, and multi-provider OAuth for AI coding tools - no API keys needed

### [Claude Proxy VSCode](https://github.com/uzhao/claude-proxy-vscode)

VSCode extension for quickly switching between Claude Code models. It integrates CLIProxyAPI as its backend and manages the backend's lifecycle automatically in the background

### [ZeroLimit](https://github.com/0xtbug/zero-limit)

Windows desktop app built with Tauri + React that monitors AI coding assistant quotas via CLIProxyAPI. It tracks usage across Gemini, Claude, OpenAI Codex, and Antigravity accounts with a real-time dashboard, system tray integration, and one-click proxy control - no API keys needed

### [CPA-XXX Panel](https://github.com/ferretgeek/CPA-X)

Lightweight web admin panel for CLIProxyAPI with health checks, resource monitoring, real-time logs, automatic updates, request statistics, and pricing display. Supports one-click installation and running as a systemd service

### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)

Windows tray application implemented in PowerShell scripts with no third-party library dependencies. Supports automatic shortcut creation, silent running, password management, channel switching (Main / Plus), and automatic download and update

### [霖君](https://github.com/wangdabaoqq/LinJun)

霖君 is a cross-platform desktop application for managing AI programming assistants on macOS, Windows, and Linux. It provides unified management of coding tools such as Claude Code, Gemini CLI, OpenAI Codex, and Qwen Code, with multi-account quota tracking and one-click configuration through a local proxy

### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)

Modern web-based management dashboard for CLIProxyAPI built with Next.js, React, and PostgreSQL. Features real-time log streaming, structured configuration editing, API key management, OAuth provider integration for Claude/Gemini/Codex, usage analytics, container management, and configuration sync with OpenCode via a companion plugin - no manual YAML editing needed

### [All API Hub](https://github.com/qixing-jk/all-api-hub)

Browser extension for one-stop management of New API-compatible relay site accounts, with balance and usage dashboards, automatic check-in, one-click key export to common apps, in-page API availability testing, and channel/model sync and redirection. It integrates with CLIProxyAPI through the Management API for one-click provider import and configuration sync

### [Shadow AI](https://github.com/HEUDavid/shadow-ai)

Shadow AI is an AI assistant tool designed specifically for restricted environments. It provides a stealth operation mode with no windows or traces, and enables cross-device AI Q&A interaction and control over a LAN (local area network). In essence, it is an automated collaboration layer of "screen/audio capture + AI inference + low-friction delivery" that helps users work with AI assistants across applications on controlled devices or in restricted environments.

> [!NOTE]
> If you have developed a project based on CLIProxyAPI, please open a PR to add it to this list.

## More Choices

The following projects are ports of CLIProxyAPI or were inspired by it:

### [9Router](https://github.com/decolua/9router)

A Next.js implementation inspired by CLIProxyAPI that is easy to install and use. Built from scratch with format translation (OpenAI/Claude/Gemini/Ollama), a combo system with automatic fallback, multi-account management with exponential backoff, a Next.js web dashboard, and support for CLI tools (Cursor, Claude Code, Cline, RooCode) - no API keys needed

### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)

Never stop coding. Smart routing to free and low-cost AI models with automatic fallback.

OmniRoute is an AI gateway for multi-provider LLMs: an OpenAI-compatible endpoint with smart routing, load balancing, retries, and fallbacks. It adds policies, rate limits, caching, and observability for reliable, cost-aware inference.

> [!NOTE]
> If you have developed a port of CLIProxyAPI or a project inspired by it, please open a PR to add it to this list.

## License

This project is licensed under the MIT License - see the [LICENSE](LICENSE) file for details.

BIN

assets/bmoplus.png

Normal file

Binary file not shown.

Size: 28 KiB

BIN

assets/lingtrue.png

Normal file

Binary file not shown.

Size: 129 KiB

275

cmd/fetch_antigravity_models/main.go

Normal file

@@ -0,0 +1,275 @@

// Command fetch_antigravity_models connects to the Antigravity API using the
// stored auth credentials and saves the dynamically fetched model list to a
// JSON file for inspection or offline use.
//
// Usage:
//
//	go run ./cmd/fetch_antigravity_models [flags]
//
// Flags:
//
//	--auths-dir <path>  Directory containing auth JSON files (default: "auths")
//	--output <path>     Output JSON file path (default: "antigravity_models.json")
//	--pretty            Pretty-print the output JSON (default: true)
package main

import (
	"context"
	"encoding/json"
	"flag"
	"fmt"
	"io"
	"net/http"
	"os"
	"path/filepath"
	"strings"
	"time"

	"github.com/router-for-me/CLIProxyAPI/v6/internal/logging"
	sdkauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"
	coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
	"github.com/router-for-me/CLIProxyAPI/v6/sdk/proxyutil"
	log "github.com/sirupsen/logrus"
	"github.com/tidwall/gjson"
)

const (
	antigravityBaseURLDaily        = "https://daily-cloudcode-pa.googleapis.com"
	antigravitySandboxBaseURLDaily = "https://daily-cloudcode-pa.sandbox.googleapis.com"
	antigravityBaseURLProd         = "https://cloudcode-pa.googleapis.com"
	antigravityModelsPath          = "/v1internal:fetchAvailableModels"
)

func init() {
	logging.SetupBaseLogger()
	log.SetLevel(log.InfoLevel)
}

// modelOutput is the top-level JSON document written to the output file.
type modelOutput struct {
	Models []modelEntry `json:"models"`
}

// modelEntry contains only the fields we want to keep for static model definitions.
type modelEntry struct {
	ID                  string `json:"id"`
	Object              string `json:"object"`
	OwnedBy             string `json:"owned_by"`
	Type                string `json:"type"`
	DisplayName         string `json:"display_name"`
	Name                string `json:"name"`
	Description         string `json:"description"`
	ContextLength       int    `json:"context_length,omitempty"`
	MaxCompletionTokens int    `json:"max_completion_tokens,omitempty"`
}

func main() {
	var authsDir string
	var outputPath string
	var pretty bool

	flag.StringVar(&authsDir, "auths-dir", "auths", "Directory containing auth JSON files")
	flag.StringVar(&outputPath, "output", "antigravity_models.json", "Output JSON file path")
	flag.BoolVar(&pretty, "pretty", true, "Pretty-print the output JSON")
	flag.Parse()

	// Resolve relative paths against the working directory.
	wd, err := os.Getwd()
	if err != nil {
		fmt.Fprintf(os.Stderr, "error: cannot get working directory: %v\n", err)
		os.Exit(1)
	}
	if !filepath.IsAbs(authsDir) {
		authsDir = filepath.Join(wd, authsDir)
	}
	if !filepath.IsAbs(outputPath) {
		outputPath = filepath.Join(wd, outputPath)
	}

	fmt.Printf("Scanning auth files in: %s\n", authsDir)

	// Load all auth records from the directory.
	fileStore := sdkauth.NewFileTokenStore()
	fileStore.SetBaseDir(authsDir)

	ctx := context.Background()
	auths, err := fileStore.List(ctx)
	if err != nil {
		fmt.Fprintf(os.Stderr, "error: failed to list auth files: %v\n", err)
		os.Exit(1)
	}
	if len(auths) == 0 {
		fmt.Fprintf(os.Stderr, "error: no auth files found in %s\n", authsDir)
		os.Exit(1)
	}

	// Find the first enabled antigravity auth.
	var chosen *coreauth.Auth
	for _, a := range auths {
		if a == nil || a.Disabled {
			continue
		}
		if strings.EqualFold(strings.TrimSpace(a.Provider), "antigravity") {
			chosen = a
			break
		}
	}
	if chosen == nil {
		fmt.Fprintf(os.Stderr, "error: no enabled antigravity auth found in %s\n", authsDir)
		os.Exit(1)
	}

	fmt.Printf("Using auth: id=%s label=%s\n", chosen.ID, chosen.Label)

	// Fetch models from the upstream Antigravity API.
	fmt.Println("Fetching Antigravity model list from upstream...")

	fetchCtx, cancel := context.WithTimeout(ctx, 30*time.Second)
	defer cancel()

	models := fetchModels(fetchCtx, chosen)
	if len(models) == 0 {
		fmt.Fprintln(os.Stderr, "warning: no models returned (API may be unavailable or token expired)")
	} else {
		fmt.Printf("Fetched %d models.\n", len(models))
	}

	// Build the output payload.
	out := modelOutput{
		Models: models,
	}

	// Marshal to JSON.
	var raw []byte
	if pretty {
		raw, err = json.MarshalIndent(out, "", " ")
	} else {
		raw, err = json.Marshal(out)
	}
	if err != nil {
		fmt.Fprintf(os.Stderr, "error: failed to marshal JSON: %v\n", err)
		os.Exit(1)
	}

	if err = os.WriteFile(outputPath, raw, 0o644); err != nil {
		fmt.Fprintf(os.Stderr, "error: failed to write output file %s: %v\n", outputPath, err)
		os.Exit(1)
	}

	fmt.Printf("Model list saved to: %s\n", outputPath)
}

// fetchModels tries each Antigravity base URL in turn and returns the model
// list from the first endpoint that answers successfully, or nil if none do.
func fetchModels(ctx context.Context, auth *coreauth.Auth) []modelEntry {
	if auth == nil {
		fmt.Fprintln(os.Stderr, "error: no auth provided")
		return nil
	}
	accessToken := metaStringValue(auth.Metadata, "access_token")
	if accessToken == "" {
		fmt.Fprintln(os.Stderr, "error: no access token found in auth")
		return nil
	}

	// Build the request body once; it is identical for every base URL.
	payload := []byte(`{}`)
	if pid, ok := auth.Metadata["project_id"].(string); ok && strings.TrimSpace(pid) != "" {
		// Marshal the body so special characters in the project ID are escaped.
		if b, errMarshal := json.Marshal(map[string]string{"project": strings.TrimSpace(pid)}); errMarshal == nil {
			payload = b
		}
	}

	baseURLs := []string{antigravityBaseURLProd, antigravityBaseURLDaily, antigravitySandboxBaseURLDaily}

	for _, baseURL := range baseURLs {
		modelsURL := baseURL + antigravityModelsPath

		httpReq, errReq := http.NewRequestWithContext(ctx, http.MethodPost, modelsURL, strings.NewReader(string(payload)))
		if errReq != nil {
			continue
		}
		httpReq.Close = true
		httpReq.Header.Set("Content-Type", "application/json")
		httpReq.Header.Set("Authorization", "Bearer "+accessToken)
		httpReq.Header.Set("User-Agent", "antigravity/1.19.6 darwin/arm64")

		httpClient := &http.Client{Timeout: 30 * time.Second}
		if transport, _, errProxy := proxyutil.BuildHTTPTransport(auth.ProxyURL); errProxy == nil && transport != nil {
			httpClient.Transport = transport
		}
		httpResp, errDo := httpClient.Do(httpReq)
		if errDo != nil {
			continue
		}

		bodyBytes, errRead := io.ReadAll(httpResp.Body)
		httpResp.Body.Close()
		if errRead != nil {
			continue
		}

		// Treat any non-2xx status as a failure and try the next base URL.
		if httpResp.StatusCode < http.StatusOK || httpResp.StatusCode >= http.StatusMultipleChoices {
			continue
		}

		result := gjson.GetBytes(bodyBytes, "models")
		if !result.Exists() {
			continue
		}

		var models []modelEntry

		for originalName, modelData := range result.Map() {
			modelID := strings.TrimSpace(originalName)
			if modelID == "" {
				continue
			}
			// Skip internal/experimental models
			switch modelID {
			case "chat_20706", "chat_23310", "tab_flash_lite_preview", "tab_jump_flash_lite_preview", "gemini-2.5-flash-thinking", "gemini-2.5-pro":
				continue
			}

			displayName := modelData.Get("displayName").String()
			if displayName == "" {
				displayName = modelID
			}

			entry := modelEntry{
				ID:          modelID,
				Object:      "model",
				OwnedBy:     "antigravity",
				Type:        "antigravity",
				DisplayName: displayName,
				Name:        modelID,
				Description: displayName,
			}

			if maxTok := modelData.Get("maxTokens").Int(); maxTok > 0 {
				entry.ContextLength = int(maxTok)
			}
			if maxOut := modelData.Get("maxOutputTokens").Int(); maxOut > 0 {
				entry.MaxCompletionTokens = int(maxOut)
			}

			models = append(models, entry)
		}

		return models
	}

	return nil
}

// metaStringValue returns m[key] as a string, or "" when the map is nil, the
// key is missing, or the value is not a string.
func metaStringValue(m map[string]interface{}, key string) string {
	// Indexing a nil map is safe in Go, and a failed type assertion yields "".
	s, _ := m[key].(string)
	return s
}
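
`fetchModels` above ranges over `result.Map()`, which is a Go map, so the order of entries in the saved JSON file can differ from run to run. If stable output matters (for example when diffing two fetches), one option is to sort the entries by ID before marshaling. The sketch below uses a cut-down, hypothetical `entry` type and helper name rather than the command's own `modelEntry`; it is not part of this change.

```go
package main

import (
	"encoding/json"
	"fmt"
	"sort"
)

// entry is a reduced stand-in for modelEntry, used only in this sketch.
type entry struct {
	ID string `json:"id"`
}

// sortedByID returns a copy of in, ordered by ID, leaving the input untouched.
func sortedByID(in []entry) []entry {
	out := append([]entry(nil), in...)
	sort.Slice(out, func(i, j int) bool { return out[i].ID < out[j].ID })
	return out
}

func main() {
	raw, err := json.Marshal(sortedByID([]entry{{ID: "b"}, {ID: "a"}}))
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(string(raw)) // [{"id":"a"},{"id":"b"}]
}
```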

20

cmd/mcpdebug/main.go

Normal file

@@ -0,0 +1,20 @@

package main

import (
	"encoding/hex"
	"fmt"
	"os"

	cursorproto "github.com/router-for-me/CLIProxyAPI/v6/internal/auth/cursor/proto"
)

func main() {
	// Encode MCP result with empty execId
	resultBytes := cursorproto.EncodeExecMcpResult(1, "", `{"test": "data"}`, false)
	fmt.Printf("Result protobuf hex: %s\n", hex.EncodeToString(resultBytes))
	fmt.Printf("Result length: %d bytes\n", len(resultBytes))

	// Write to file for analysis. os.WriteFile requires a permission mode.
	if err := os.WriteFile("mcp_result.bin", resultBytes, 0o644); err != nil {
		fmt.Fprintf(os.Stderr, "error: failed to write mcp_result.bin: %v\n", err)
		os.Exit(1)
	}
	fmt.Println("Wrote mcp_result.bin")
}

32

cmd/protocheck/main.go

Normal file

@@ -0,0 +1,32 @@

package main

import (
	"fmt"

	cursorproto "github.com/router-for-me/CLIProxyAPI/v6/internal/auth/cursor/proto"
)

func main() {
	ecm := cursorproto.NewMsg("ExecClientMessage")

	// Try different field names
	names := []string{

|

||||||

|