mirror of

https://github.com/router-for-me/CLIProxyAPIPlus.git

synced 2026-03-09 15:25:17 +00:00

Compare commits

46 Commits

| SHA1 |
|---|
| 7547d1d0b3 |
| 68934942d0 |
| 09fec34e1c |
| 9229708b6c |
| 914db94e79 |
| 660bd7eff5 |
| b907d21851 |
| d6cc976d1f |
| 8aa2cce8c5 |
| bf9b2c49df |
| 77b42c6165 |
| 446150a747 |
| 1cbc4834e1 |
| a8a5d03c33 |
| 76aa917882 |
| 6ac9b31e4e |
| 0ad3e8457f |
| 444a47ae63 |
| 725f4fdff4 |
| c23e46f45d |
| b148820c35 |
| 134f41496d |
| c5838dd58d |
| b6ca5ef7ce |
| 1ae994b4aa |
| 84e9793e61 |
| 32e64dacfd |
| cc1d8f6629 |
| 5446cd2b02 |
| 8de0885b7d |
| 68dd2bfe82 |
| 2baf35b3ef |
| 846e75b893 |
| fc0257d6d9 |
| f3c164d345 |
| 4040b1e766 |
| 8f97a5f77c |
| 2a4d3e60f3 |
| 8b5af2ab84 |
| d887716ebd |
| 5dc1848466 |
| 9491517b26 |
| 9370b5bd04 |
| abb51a0d93 |
| c8d809131b |
| dd71c73a9f |

@@ -31,6 +31,7 @@ bin/*
.agent/*
.agents/*
.opencode/*
.idea/*
.bmad/*
_bmad/*
_bmad-output/*

1

.gitignore

vendored

@@ -44,6 +44,7 @@ GEMINI.md
.agents/*
.agents/*
.opencode/*
.idea/*
.bmad/*
_bmad/*
_bmad-output/*

13

README.md

@@ -10,20 +10,11 @@ The Plus release stays in lockstep with the mainline features.

|

||||

|

||||

## Differences from the Mainline

|

||||

|

||||

- Added GitHub Copilot support (OAuth login), provided by [em4go](https://github.com/em4go/CLIProxyAPI/tree/feature/github-copilot-auth)

|

||||

- Added Kiro (AWS CodeWhisperer) support (OAuth login), provided by [fuko2935](https://github.com/fuko2935/CLIProxyAPI/tree/feature/kiro-integration), [Ravens2121](https://github.com/Ravens2121/CLIProxyAPIPlus/)

|

||||

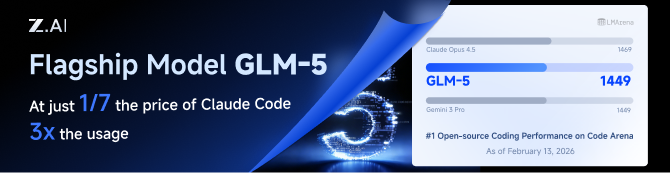

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

|

||||

## New Features (Plus Enhanced)

|

||||

|

||||

- **OAuth Web Authentication**: Browser-based OAuth login for Kiro with beautiful web UI

|

||||

- **Rate Limiter**: Built-in request rate limiting to prevent API abuse

|

||||

- **Background Token Refresh**: Automatic token refresh 10 minutes before expiration

|

||||

- **Metrics & Monitoring**: Request metrics collection for monitoring and debugging

|

||||

- **Device Fingerprint**: Device fingerprint generation for enhanced security

|

||||

- **Cooldown Management**: Smart cooldown mechanism for API rate limits

|

||||

- **Usage Checker**: Real-time usage monitoring and quota management

|

||||

- **Model Converter**: Unified model name conversion across providers

|

||||

- **UTF-8 Stream Processing**: Improved streaming response handling

|

||||

GLM CODING PLAN is a subscription service designed for AI coding, starting at just $10/month. It provides access to their flagship GLM-4.7 & (GLM-5 Only Available for Pro Users)model across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers top-tier, fast, and stable coding experiences.

|

||||

|

||||

## Kiro Authentication

|

||||

|

||||

|

||||

15

README_CN.md

@@ -10,22 +10,13 @@

## 与主线版本版本差异

- 新增 GitHub Copilot 支持(OAuth 登录),由[em4go](https://github.com/em4go/CLIProxyAPI/tree/feature/github-copilot-auth)提供
- 新增 Kiro (AWS CodeWhisperer) 支持 (OAuth 登录), 由[fuko2935](https://github.com/fuko2935/CLIProxyAPI/tree/feature/kiro-integration)、[Ravens2121](https://github.com/Ravens2121/CLIProxyAPIPlus/)提供
[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

## 新增功能 (Plus 增强版)

- **OAuth Web 认证**: 基于浏览器的 Kiro OAuth 登录,提供美观的 Web UI
- **请求限流器**: 内置请求限流,防止 API 滥用
- **后台令牌刷新**: 过期前 10 分钟自动刷新令牌
- **监控指标**: 请求指标收集,用于监控和调试
- **设备指纹**: 设备指纹生成,增强安全性
- **冷却管理**: 智能冷却机制,应对 API 速率限制
- **用量检查器**: 实时用量监控和配额管理
- **模型转换器**: 跨供应商的统一模型名称转换
- **UTF-8 流处理**: 改进的流式响应处理
GLM CODING PLAN 是专为AI编码打造的订阅套餐,每月最低仅需20元,即可在十余款主流AI编码工具如 Claude Code、Cline、Roo Code 中畅享智谱旗舰模型GLM-4.7(受限于算力,目前仅限Pro用户开放),为开发者提供顶尖的编码体验。

## Kiro 认证
智谱AI为本产品提供了特别优惠,使用以下链接购买可以享受九折优惠:https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII

### 命令行登录


@@ -80,6 +80,10 @@ passthrough-headers: false
 # Number of times to retry a request. Retries will occur if the HTTP response code is 403, 408, 500, 502, 503, or 504.
 request-retry: 3
 
+# Maximum number of different credentials to try for one failed request.
+# Set to 0 to keep legacy behavior (try all available credentials).
+max-retry-credentials: 0
+
 # Maximum wait time in seconds for a cooled-down credential before triggering a retry.
 max-retry-interval: 30
 

@@ -48,14 +48,11 @@ import (
 var lastRefreshKeys = []string{"last_refresh", "lastRefresh", "last_refreshed_at", "lastRefreshedAt"}
 
 const (
-	anthropicCallbackPort   = 54545
-	geminiCallbackPort      = 8085
-	codexCallbackPort       = 1455
-	geminiCLIEndpoint       = "https://cloudcode-pa.googleapis.com"
-	geminiCLIVersion        = "v1internal"
-	geminiCLIUserAgent      = "google-api-nodejs-client/9.15.1"
-	geminiCLIApiClient      = "gl-node/22.17.0"
-	geminiCLIClientMetadata = "ideType=IDE_UNSPECIFIED,platform=PLATFORM_UNSPECIFIED,pluginType=GEMINI"
+	anthropicCallbackPort = 54545
+	geminiCallbackPort    = 8085
+	codexCallbackPort     = 1455
+	geminiCLIEndpoint     = "https://cloudcode-pa.googleapis.com"
+	geminiCLIVersion      = "v1internal"
 )
 
 type callbackForwarder struct {

@@ -2384,9 +2381,7 @@ func callGeminiCLI(ctx context.Context, httpClient *http.Client, endpoint string
 		return fmt.Errorf("create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
-	req.Header.Set("X-Goog-Api-Client", geminiCLIApiClient)
-	req.Header.Set("Client-Metadata", geminiCLIClientMetadata)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 
 	resp, errDo := httpClient.Do(req)
 	if errDo != nil {

@@ -2456,7 +2451,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
 		return false, fmt.Errorf("failed to create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 	resp, errDo := httpClient.Do(req)
 	if errDo != nil {
 		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -2477,7 +2472,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
 		return false, fmt.Errorf("failed to create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 	resp, errDo = httpClient.Do(req)
 	if errDo != nil {
 		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -15,6 +15,7 @@ import (
 	"strings"
 
 	"github.com/gin-gonic/gin"
+	"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"
 	log "github.com/sirupsen/logrus"
 )
 

@@ -77,6 +78,9 @@ func createReverseProxy(upstreamURL string, secretSource SecretSource) (*httputi
 		req.Header.Del("X-Api-Key")
 		req.Header.Del("X-Goog-Api-Key")
 
+		// Remove proxy, client identity, and browser fingerprint headers
+		misc.ScrubProxyAndFingerprintHeaders(req)
+
 		// Remove query-based credentials if they match the authenticated client API key.
 		// This prevents leaking client auth material to the Amp upstream while avoiding
 		// breaking unrelated upstream query parameters.

@@ -258,7 +258,7 @@ func NewServer(cfg *config.Config, authManager *auth.Manager, accessManager *sdk
 	s.oldConfigYaml, _ = yaml.Marshal(cfg)
 	s.applyAccessConfig(nil, cfg)
 	if authManager != nil {
-		authManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second)
+		authManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second, cfg.MaxRetryCredentials)
 	}
 	managementasset.SetCurrentConfig(cfg)
 	auth.SetQuotaCooldownDisabled(cfg.DisableCooling)

@@ -944,7 +944,7 @@ func (s *Server) UpdateClients(cfg *config.Config) {
 	}
 
 	if s.handlers != nil && s.handlers.AuthManager != nil {
-		s.handlers.AuthManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second)
+		s.handlers.AuthManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second, cfg.MaxRetryCredentials)
 	}
 
 	// Update log level dynamically when debug flag changes

@@ -20,6 +20,7 @@ import (
 	"github.com/router-for-me/CLIProxyAPI/v6/internal/auth/gemini"
 	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"
 	"github.com/router-for-me/CLIProxyAPI/v6/internal/interfaces"
+	"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"
 	sdkAuth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"
 	cliproxyauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
 	log "github.com/sirupsen/logrus"

@@ -27,11 +28,8 @@ import (
 )
 
 const (
-	geminiCLIEndpoint       = "https://cloudcode-pa.googleapis.com"
-	geminiCLIVersion        = "v1internal"
-	geminiCLIUserAgent      = "google-api-nodejs-client/9.15.1"
-	geminiCLIApiClient      = "gl-node/22.17.0"
-	geminiCLIClientMetadata = "ideType=IDE_UNSPECIFIED,platform=PLATFORM_UNSPECIFIED,pluginType=GEMINI"
+	geminiCLIEndpoint = "https://cloudcode-pa.googleapis.com"
+	geminiCLIVersion  = "v1internal"
 )
 
 type projectSelectionRequiredError struct{}

@@ -409,9 +407,7 @@ func callGeminiCLI(ctx context.Context, httpClient *http.Client, endpoint string
 		return fmt.Errorf("create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
-	req.Header.Set("X-Goog-Api-Client", geminiCLIApiClient)
-	req.Header.Set("Client-Metadata", geminiCLIClientMetadata)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 
 	resp, errDo := httpClient.Do(req)
 	if errDo != nil {

@@ -630,7 +626,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
 		return false, fmt.Errorf("failed to create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 	resp, errDo := httpClient.Do(req)
 	if errDo != nil {
 		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -651,7 +647,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec
 		return false, fmt.Errorf("failed to create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 	resp, errDo = httpClient.Do(req)
 	if errDo != nil {
 		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -69,6 +69,9 @@ type Config struct {
 
 	// RequestRetry defines the retry times when the request failed.
 	RequestRetry int `yaml:"request-retry" json:"request-retry"`
+	// MaxRetryCredentials defines the maximum number of credentials to try for a failed request.
+	// Set to 0 or a negative value to keep trying all available credentials (legacy behavior).
+	MaxRetryCredentials int `yaml:"max-retry-credentials" json:"max-retry-credentials"`
 	// MaxRetryInterval defines the maximum wait time in seconds before retrying a cooled-down credential.
 	MaxRetryInterval int `yaml:"max-retry-interval" json:"max-retry-interval"`
 

@@ -576,16 +579,6 @@ func LoadConfig(configFile string) (*Config, error) {
 // If optional is true and the file is missing, it returns an empty Config.
 // If optional is true and the file is empty or invalid, it returns an empty Config.
 func LoadConfigOptional(configFile string, optional bool) (*Config, error) {
-	// NOTE: Startup oauth-model-alias migration is intentionally disabled.
-	// Reason: avoid mutating config.yaml during server startup.
-	// Re-enable the block below if automatic startup migration is needed again.
-	// if migrated, err := MigrateOAuthModelAlias(configFile); err != nil {
-	// 	// Log warning but don't fail - config loading should still work
-	// 	fmt.Printf("Warning: oauth-model-alias migration failed: %v\n", err)
-	// } else if migrated {
-	// 	fmt.Println("Migrated oauth-model-mappings to oauth-model-alias")
-	// }
-
 	// Read the entire configuration file into memory.
 	data, err := os.ReadFile(configFile)
 	if err != nil {

@@ -673,6 +666,10 @@ func LoadConfigOptional(configFile string, optional bool) (*Config, error) {
 		cfg.ErrorLogsMaxFiles = 10
 	}
 
+	if cfg.MaxRetryCredentials < 0 {
+		cfg.MaxRetryCredentials = 0
+	}
+
 	// Sanitize Gemini API key configuration and migrate legacy entries.
 	cfg.SanitizeGeminiKeys()
 

@@ -1669,9 +1666,6 @@ func pruneMappingToGeneratedKeys(dstRoot, srcRoot *yaml.Node, key string) {
 	srcIdx := findMapKeyIndex(srcRoot, key)
 	if srcIdx < 0 {
 		// Keep an explicit empty mapping for oauth-model-alias when it was previously present.
-		//
-		// Rationale: LoadConfig runs MigrateOAuthModelAlias before unmarshalling. If the
-		// oauth-model-alias key is missing, migration will add the default antigravity aliases.
 		// When users delete the last channel from oauth-model-alias via the management API,
 		// we want that deletion to persist across hot reloads and restarts.
 		if key == "oauth-model-alias" {

37

internal/config/oauth_model_alias_defaults.go

Normal file

@@ -0,0 +1,37 @@
+package config
+
+// defaultKiroAliases returns default oauth-model-alias entries for Kiro.
+// These aliases expose standard Claude IDs for Kiro-prefixed upstream models.
+func defaultKiroAliases() []OAuthModelAlias {
+	return []OAuthModelAlias{
+		// Sonnet 4.6
+		{Name: "kiro-claude-sonnet-4-6", Alias: "claude-sonnet-4-6", Fork: true},
+		// Sonnet 4.5
+		{Name: "kiro-claude-sonnet-4-5", Alias: "claude-sonnet-4-5-20250929", Fork: true},
+		{Name: "kiro-claude-sonnet-4-5", Alias: "claude-sonnet-4-5", Fork: true},
+		// Sonnet 4
+		{Name: "kiro-claude-sonnet-4", Alias: "claude-sonnet-4-20250514", Fork: true},
+		{Name: "kiro-claude-sonnet-4", Alias: "claude-sonnet-4", Fork: true},
+		// Opus 4.6
+		{Name: "kiro-claude-opus-4-6", Alias: "claude-opus-4-6", Fork: true},
+		// Opus 4.5
+		{Name: "kiro-claude-opus-4-5", Alias: "claude-opus-4-5-20251101", Fork: true},
+		{Name: "kiro-claude-opus-4-5", Alias: "claude-opus-4-5", Fork: true},
+		// Haiku 4.5
+		{Name: "kiro-claude-haiku-4-5", Alias: "claude-haiku-4-5-20251001", Fork: true},
+		{Name: "kiro-claude-haiku-4-5", Alias: "claude-haiku-4-5", Fork: true},
+	}
+}
+
+// defaultGitHubCopilotAliases returns default oauth-model-alias entries for
+// GitHub Copilot Claude models. It exposes hyphen-style IDs used by clients.
+func defaultGitHubCopilotAliases() []OAuthModelAlias {
+	return []OAuthModelAlias{
+		{Name: "claude-haiku-4.5", Alias: "claude-haiku-4-5", Fork: true},
+		{Name: "claude-opus-4.1", Alias: "claude-opus-4-1", Fork: true},
+		{Name: "claude-opus-4.5", Alias: "claude-opus-4-5", Fork: true},
+		{Name: "claude-opus-4.6", Alias: "claude-opus-4-6", Fork: true},
+		{Name: "claude-sonnet-4.5", Alias: "claude-sonnet-4-5", Fork: true},
+		{Name: "claude-sonnet-4.6", Alias: "claude-sonnet-4-6", Fork: true},
+	}
+}
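The default alias tables above pair an upstream model name (`Name`) with the ID a client sends (`Alias`). A minimal sketch of how a lookup over such a table could work follows. The local `OAuthModelAlias` type only mirrors the three fields visible in this diff, and `resolveUpstream` is a hypothetical helper; the diff does not show how the project resolves aliases or what `Fork` does, so the sketch ignores that field.

```go
package main

import "fmt"

// OAuthModelAlias mirrors the fields used in the defaults above.
// It is redeclared here only so the example is self-contained.
type OAuthModelAlias struct {
	Name  string // upstream model name
	Alias string // client-facing model ID
	Fork  bool   // semantics not shown in the diff; unused in this sketch
}

// resolveUpstream maps a client-facing model ID to its upstream name.
// It returns the input unchanged and false when no alias matches.
func resolveUpstream(aliases []OAuthModelAlias, requested string) (string, bool) {
	for _, a := range aliases {
		if a.Alias == requested {
			return a.Name, true
		}
	}
	return requested, false
}

func main() {
	aliases := []OAuthModelAlias{
		{Name: "kiro-claude-sonnet-4-5", Alias: "claude-sonnet-4-5-20250929", Fork: true},
		{Name: "kiro-claude-sonnet-4-5", Alias: "claude-sonnet-4-5", Fork: true},
		{Name: "kiro-claude-haiku-4-5", Alias: "claude-haiku-4-5", Fork: true},
	}

	fmt.Println(resolveUpstream(aliases, "claude-sonnet-4-5"))
	fmt.Println(resolveUpstream(aliases, "some-unknown-model"))
}
```

Two aliases may point at the same upstream name, as the dated and undated Sonnet 4.5 IDs do above, so the lookup is keyed on `Alias` rather than `Name`.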

@@ -1,316 +0,0 @@
-package config
-
-import (
-	"os"
-	"strings"
-
-	"gopkg.in/yaml.v3"
-)
-
-// antigravityModelConversionTable maps old built-in aliases to actual model names
-// for the antigravity channel during migration.
-var antigravityModelConversionTable = map[string]string{
-	"gemini-2.5-computer-use-preview-10-2025": "rev19-uic3-1p",
-	"gemini-3-pro-image-preview": "gemini-3-pro-image",
-	"gemini-3-pro-preview": "gemini-3-pro-high",
-	"gemini-3-flash-preview": "gemini-3-flash",
-	"gemini-claude-sonnet-4-5": "claude-sonnet-4-5",
-	"gemini-claude-sonnet-4-5-thinking": "claude-sonnet-4-5-thinking",
-	"gemini-claude-opus-4-5-thinking": "claude-opus-4-5-thinking",
-	"gemini-claude-opus-4-6-thinking": "claude-opus-4-6-thinking",
-}
-

-// defaultKiroAliases returns the default oauth-model-alias configuration
-// for the kiro channel. Maps kiro-prefixed model names to standard Claude model
-// names so that clients like Claude Code can use standard names directly.
-func defaultKiroAliases() []OAuthModelAlias {
-	return []OAuthModelAlias{
-		// Sonnet 4.6
-		{Name: "kiro-claude-sonnet-4-6", Alias: "claude-sonnet-4-6", Fork: true},
-		// Sonnet 4.5
-		{Name: "kiro-claude-sonnet-4-5", Alias: "claude-sonnet-4-5-20250929", Fork: true},
-		{Name: "kiro-claude-sonnet-4-5", Alias: "claude-sonnet-4-5", Fork: true},
-		// Sonnet 4
-		{Name: "kiro-claude-sonnet-4", Alias: "claude-sonnet-4-20250514", Fork: true},
-		{Name: "kiro-claude-sonnet-4", Alias: "claude-sonnet-4", Fork: true},
-		// Opus 4.6
-		{Name: "kiro-claude-opus-4-6", Alias: "claude-opus-4-6", Fork: true},
-		// Opus 4.5
-		{Name: "kiro-claude-opus-4-5", Alias: "claude-opus-4-5-20251101", Fork: true},
-		{Name: "kiro-claude-opus-4-5", Alias: "claude-opus-4-5", Fork: true},
-		// Haiku 4.5
-		{Name: "kiro-claude-haiku-4-5", Alias: "claude-haiku-4-5-20251001", Fork: true},
-		{Name: "kiro-claude-haiku-4-5", Alias: "claude-haiku-4-5", Fork: true},
-	}
-}
-

-// defaultGitHubCopilotAliases returns default oauth-model-alias entries that
-// expose Claude hyphen-style IDs for GitHub Copilot Claude models.
-// This keeps compatibility with clients (e.g. Claude Code) that use
-// Anthropic-style model IDs like "claude-opus-4-6".
-func defaultGitHubCopilotAliases() []OAuthModelAlias {
-	return []OAuthModelAlias{
-		{Name: "claude-haiku-4.5", Alias: "claude-haiku-4-5", Fork: true},
-		{Name: "claude-opus-4.1", Alias: "claude-opus-4-1", Fork: true},
-		{Name: "claude-opus-4.5", Alias: "claude-opus-4-5", Fork: true},
-		{Name: "claude-opus-4.6", Alias: "claude-opus-4-6", Fork: true},
-		{Name: "claude-sonnet-4.5", Alias: "claude-sonnet-4-5", Fork: true},
-		{Name: "claude-sonnet-4.6", Alias: "claude-sonnet-4-6", Fork: true},
-	}
-}
-

-// defaultAntigravityAliases returns the default oauth-model-alias configuration
-// for the antigravity channel when neither field exists.
-func defaultAntigravityAliases() []OAuthModelAlias {
-	return []OAuthModelAlias{
-		{Name: "rev19-uic3-1p", Alias: "gemini-2.5-computer-use-preview-10-2025"},
-		{Name: "gemini-3-pro-image", Alias: "gemini-3-pro-image-preview"},
-		{Name: "gemini-3-pro-high", Alias: "gemini-3-pro-preview"},
-		{Name: "gemini-3-flash", Alias: "gemini-3-flash-preview"},
-		{Name: "claude-sonnet-4-5", Alias: "gemini-claude-sonnet-4-5"},
-		{Name: "claude-sonnet-4-5-thinking", Alias: "gemini-claude-sonnet-4-5-thinking"},
-		{Name: "claude-opus-4-5-thinking", Alias: "gemini-claude-opus-4-5-thinking"},
-		{Name: "claude-opus-4-6-thinking", Alias: "gemini-claude-opus-4-6-thinking"},
-	}
-}
-

-// MigrateOAuthModelAlias checks for and performs migration from oauth-model-mappings
-// to oauth-model-alias at startup. Returns true if migration was performed.
-//
-// Migration flow:
-// 1. Check if oauth-model-alias exists -> skip migration
-// 2. Check if oauth-model-mappings exists -> convert and migrate
-//   - For antigravity channel, convert old built-in aliases to actual model names
-//
-// 3. Neither exists -> add default antigravity config
-func MigrateOAuthModelAlias(configFile string) (bool, error) {
-	data, err := os.ReadFile(configFile)
-	if err != nil {
-		if os.IsNotExist(err) {
-			return false, nil
-		}
-		return false, err
-	}
-	if len(data) == 0 {
-		return false, nil
-	}
-
-	// Parse YAML into node tree to preserve structure
-	var root yaml.Node
-	if err := yaml.Unmarshal(data, &root); err != nil {
-		return false, nil
-	}
-	if root.Kind != yaml.DocumentNode || len(root.Content) == 0 {
-		return false, nil
-	}
-	rootMap := root.Content[0]
-	if rootMap == nil || rootMap.Kind != yaml.MappingNode {
-		return false, nil
-	}
-
-	// Check if oauth-model-alias already exists
-	if findMapKeyIndex(rootMap, "oauth-model-alias") >= 0 {
-		return false, nil
-	}
-
-	// Check if oauth-model-mappings exists
-	oldIdx := findMapKeyIndex(rootMap, "oauth-model-mappings")
-	if oldIdx >= 0 {
-		// Migrate from old field
-		return migrateFromOldField(configFile, &root, rootMap, oldIdx)
-	}
-
-	// Neither field exists - add default antigravity config
-	return addDefaultAntigravityConfig(configFile, &root, rootMap)
-}
-

-// migrateFromOldField converts oauth-model-mappings to oauth-model-alias
-func migrateFromOldField(configFile string, root *yaml.Node, rootMap *yaml.Node, oldIdx int) (bool, error) {
-	if oldIdx+1 >= len(rootMap.Content) {
-		return false, nil
-	}
-	oldValue := rootMap.Content[oldIdx+1]
-	if oldValue == nil || oldValue.Kind != yaml.MappingNode {
-		return false, nil
-	}
-
-	// Parse the old aliases
-	oldAliases := parseOldAliasNode(oldValue)
-	if len(oldAliases) == 0 {
-		// Remove the old field and write
-		removeMapKeyByIndex(rootMap, oldIdx)
-		return writeYAMLNode(configFile, root)
-	}
-
-	// Convert model names for antigravity channel
-	newAliases := make(map[string][]OAuthModelAlias, len(oldAliases))
-	for channel, entries := range oldAliases {
-		converted := make([]OAuthModelAlias, 0, len(entries))
-		for _, entry := range entries {
-			newEntry := OAuthModelAlias{
-				Name: entry.Name,
-				Alias: entry.Alias,
-				Fork: entry.Fork,
-			}
-			// Convert model names for antigravity channel
-			if strings.EqualFold(channel, "antigravity") {
-				if actual, ok := antigravityModelConversionTable[entry.Name]; ok {
-					newEntry.Name = actual
-				}
-			}
-			converted = append(converted, newEntry)
-		}
-		newAliases[channel] = converted
-	}
-
-	// For antigravity channel, supplement missing default aliases
-	if antigravityEntries, exists := newAliases["antigravity"]; exists {
-		// Build a set of already configured model names (upstream names)
-		configuredModels := make(map[string]bool, len(antigravityEntries))
-		for _, entry := range antigravityEntries {
-			configuredModels[entry.Name] = true
-		}
-
-		// Add missing default aliases
-		for _, defaultAlias := range defaultAntigravityAliases() {
-			if !configuredModels[defaultAlias.Name] {
-				antigravityEntries = append(antigravityEntries, defaultAlias)
-			}
-		}
-		newAliases["antigravity"] = antigravityEntries
-	}
-
-	// Build new node
-	newNode := buildOAuthModelAliasNode(newAliases)
-
-	// Replace old key with new key and value
-	rootMap.Content[oldIdx].Value = "oauth-model-alias"
-	rootMap.Content[oldIdx+1] = newNode
-
-	return writeYAMLNode(configFile, root)
-}
-

-// addDefaultAntigravityConfig adds the default antigravity configuration
-func addDefaultAntigravityConfig(configFile string, root *yaml.Node, rootMap *yaml.Node) (bool, error) {
-	defaults := map[string][]OAuthModelAlias{
-		"antigravity": defaultAntigravityAliases(),
-	}
-	newNode := buildOAuthModelAliasNode(defaults)
-
-	// Add new key-value pair
-	keyNode := &yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "oauth-model-alias"}
-	rootMap.Content = append(rootMap.Content, keyNode, newNode)
-
-	return writeYAMLNode(configFile, root)
-}
-

-// parseOldAliasNode parses the old oauth-model-mappings node structure
-func parseOldAliasNode(node *yaml.Node) map[string][]OAuthModelAlias {
-	if node == nil || node.Kind != yaml.MappingNode {
-		return nil
-	}
-	result := make(map[string][]OAuthModelAlias)
-	for i := 0; i+1 < len(node.Content); i += 2 {
-		channelNode := node.Content[i]
-		entriesNode := node.Content[i+1]
-		if channelNode == nil || entriesNode == nil {
-			continue
-		}
-		channel := strings.ToLower(strings.TrimSpace(channelNode.Value))
-		if channel == "" || entriesNode.Kind != yaml.SequenceNode {
-			continue
-		}
-		entries := make([]OAuthModelAlias, 0, len(entriesNode.Content))
-		for _, entryNode := range entriesNode.Content {
-			if entryNode == nil || entryNode.Kind != yaml.MappingNode {
-				continue
-			}
-			entry := parseAliasEntry(entryNode)
-			if entry.Name != "" && entry.Alias != "" {
-				entries = append(entries, entry)
-			}
-		}
-		if len(entries) > 0 {
-			result[channel] = entries
-		}
-	}
-	return result
-}
-

-// parseAliasEntry parses a single alias entry node
-func parseAliasEntry(node *yaml.Node) OAuthModelAlias {
-	var entry OAuthModelAlias
-	for i := 0; i+1 < len(node.Content); i += 2 {
-		keyNode := node.Content[i]
-		valNode := node.Content[i+1]
-		if keyNode == nil || valNode == nil {
-			continue
-		}
-		switch strings.ToLower(strings.TrimSpace(keyNode.Value)) {
-		case "name":
-			entry.Name = strings.TrimSpace(valNode.Value)
-		case "alias":
-			entry.Alias = strings.TrimSpace(valNode.Value)
-		case "fork":
-			entry.Fork = strings.ToLower(strings.TrimSpace(valNode.Value)) == "true"
-		}
-	}
-	return entry
-}
-

-// buildOAuthModelAliasNode creates a YAML node for oauth-model-alias
-func buildOAuthModelAliasNode(aliases map[string][]OAuthModelAlias) *yaml.Node {
-	node := &yaml.Node{Kind: yaml.MappingNode, Tag: "!!map"}
-	for channel, entries := range aliases {
-		channelNode := &yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: channel}
-		entriesNode := &yaml.Node{Kind: yaml.SequenceNode, Tag: "!!seq"}
-		for _, entry := range entries {
-			entryNode := &yaml.Node{Kind: yaml.MappingNode, Tag: "!!map"}
-			entryNode.Content = append(entryNode.Content,
-				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "name"},
-				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: entry.Name},
-				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "alias"},
-				&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: entry.Alias},
-			)
-			if entry.Fork {
-				entryNode.Content = append(entryNode.Content,
-					&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!str", Value: "fork"},
-					&yaml.Node{Kind: yaml.ScalarNode, Tag: "!!bool", Value: "true"},
-				)
-			}
-			entriesNode.Content = append(entriesNode.Content, entryNode)
-		}
-		node.Content = append(node.Content, channelNode, entriesNode)
-	}
-	return node
-}
-

-// removeMapKeyByIndex removes a key-value pair from a mapping node by index
-func removeMapKeyByIndex(mapNode *yaml.Node, keyIdx int) {
-	if mapNode == nil || mapNode.Kind != yaml.MappingNode {
-		return
-	}
-	if keyIdx < 0 || keyIdx+1 >= len(mapNode.Content) {
-		return
-	}
-	mapNode.Content = append(mapNode.Content[:keyIdx], mapNode.Content[keyIdx+2:]...)
-}
-

-// writeYAMLNode writes the YAML node tree back to file
-func writeYAMLNode(configFile string, root *yaml.Node) (bool, error) {
-	f, err := os.Create(configFile)
-	if err != nil {
-		return false, err
-	}
-	defer f.Close()
-
-	enc := yaml.NewEncoder(f)
-	enc.SetIndent(2)
-	if err := enc.Encode(root); err != nil {
-		return false, err
-	}
-	if err := enc.Close(); err != nil {
-		return false, err
-	}
-	return true, nil
-}
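The removed migration code above follows a three-way decision (skip, convert the legacy key, or add defaults) and, when converting, appends any default alias whose upstream name is not already configured. The sketch below restates those two steps without the YAML handling. `chooseStep`, `supplementDefaults`, and the `migrationStep` constants are hypothetical names introduced for this illustration, and the local `OAuthModelAlias` type only mirrors the fields seen in the diff.

```go
package main

import "fmt"

// OAuthModelAlias mirrors the fields used by the removed migration code.
type OAuthModelAlias struct {
	Name  string
	Alias string
	Fork  bool
}

type migrationStep string

const (
	stepSkip        migrationStep = "skip"
	stepConvert     migrationStep = "convert-legacy"
	stepAddDefaults migrationStep = "add-defaults"
)

// chooseStep restates the documented flow: the new key wins, then the legacy
// key, and only when neither is present are defaults added.
func chooseStep(topLevelKeys map[string]bool) migrationStep {
	switch {
	case topLevelKeys["oauth-model-alias"]:
		return stepSkip
	case topLevelKeys["oauth-model-mappings"]:
		return stepConvert
	default:
		return stepAddDefaults
	}
}

// supplementDefaults appends each default whose upstream Name is not already
// present in configured. Existing entries keep their position and values.
func supplementDefaults(configured, defaults []OAuthModelAlias) []OAuthModelAlias {
	seen := make(map[string]bool, len(configured))
	for _, e := range configured {
		seen[e.Name] = true
	}
	out := append([]OAuthModelAlias(nil), configured...)
	for _, d := range defaults {
		if !seen[d.Name] {
			out = append(out, d)
		}
	}
	return out
}

func main() {
	fmt.Println(chooseStep(map[string]bool{"oauth-model-mappings": true}))

	configured := []OAuthModelAlias{{Name: "gemini-3-flash", Alias: "flash"}}
	defaults := []OAuthModelAlias{
		{Name: "gemini-3-flash", Alias: "gemini-3-flash-preview"},
		{Name: "gemini-3-pro-high", Alias: "gemini-3-pro-preview"},
	}
	for _, e := range supplementDefaults(configured, defaults) {
		fmt.Println(e.Name, "->", e.Alias)
	}
}
```

Matching on `Name` rather than `Alias` means a user-chosen alias for an upstream model suppresses the default alias for that same model, which is how the removed `migrateFromOldField` behaves.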

@@ -1,245 +0,0 @@
-package config
-
-import (
-	"os"
-	"path/filepath"
-	"strings"
-	"testing"
-
-	"gopkg.in/yaml.v3"
-)
-
-func TestMigrateOAuthModelAlias_SkipsIfNewFieldExists(t *testing.T) {
-	t.Parallel()
-
-	dir := t.TempDir()
-	configFile := filepath.Join(dir, "config.yaml")
-
-	content := `oauth-model-alias:
-  gemini-cli:
-    - name: "gemini-2.5-pro"
-      alias: "g2.5p"
-`
-	if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {
-		t.Fatal(err)
-	}
-
-	migrated, err := MigrateOAuthModelAlias(configFile)
-	if err != nil {
-		t.Fatalf("unexpected error: %v", err)
-	}
-	if migrated {
-		t.Fatal("expected no migration when oauth-model-alias already exists")
-	}
-
-	// Verify file unchanged
-	data, _ := os.ReadFile(configFile)
-	if !strings.Contains(string(data), "oauth-model-alias:") {
-		t.Fatal("file should still contain oauth-model-alias")
-	}
-}
-

func TestMigrateOAuthModelAlias_MigratesOldField(t *testing.T) {

|

||||

t.Parallel()

|

||||

|

||||

dir := t.TempDir()

|

||||

configFile := filepath.Join(dir, "config.yaml")

|

||||

|

||||

content := `oauth-model-mappings:

|

||||

gemini-cli:

|

||||

- name: "gemini-2.5-pro"

|

||||

alias: "g2.5p"

|

||||

fork: true

|

||||

`

|

||||

if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {

|

||||

t.Fatal(err)

|

||||

}

|

||||

|

||||

migrated, err := MigrateOAuthModelAlias(configFile)

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error: %v", err)

|

||||

}

|

||||

if !migrated {

|

||||

t.Fatal("expected migration to occur")

|

||||

}

|

||||

|

||||

// Verify new field exists and old field removed

|

||||

data, _ := os.ReadFile(configFile)

|

||||

if strings.Contains(string(data), "oauth-model-mappings:") {

|

||||

t.Fatal("old field should be removed")

|

||||

}

|

||||

if !strings.Contains(string(data), "oauth-model-alias:") {

|

||||

t.Fatal("new field should exist")

|

||||

}

|

||||

|

||||

// Parse and verify structure

|

||||

var root yaml.Node

|

||||

if err := yaml.Unmarshal(data, &root); err != nil {

|

||||

t.Fatal(err)

|

||||

}

|

||||

}

|

||||

|

||||

func TestMigrateOAuthModelAlias_ConvertsAntigravityModels(t *testing.T) {

|

||||

t.Parallel()

|

||||

|

||||

dir := t.TempDir()

|

||||

configFile := filepath.Join(dir, "config.yaml")

|

||||

|

||||

// Use old model names that should be converted

|

||||

content := `oauth-model-mappings:

|

||||

antigravity:

|

||||

- name: "gemini-2.5-computer-use-preview-10-2025"

|

||||

alias: "computer-use"

|

||||

- name: "gemini-3-pro-preview"

|

||||

alias: "g3p"

|

||||

`

|

||||

if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {

|

||||

t.Fatal(err)

|

||||

}

|

||||

|

||||

migrated, err := MigrateOAuthModelAlias(configFile)

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error: %v", err)

|

||||

}

|

||||

if !migrated {

|

||||

t.Fatal("expected migration to occur")

|

||||

}

|

||||

|

||||

// Verify model names were converted

|

||||

data, _ := os.ReadFile(configFile)

|

||||

content = string(data)

|

||||

if !strings.Contains(content, "rev19-uic3-1p") {

|

||||

t.Fatal("expected gemini-2.5-computer-use-preview-10-2025 to be converted to rev19-uic3-1p")

|

||||

}

|

||||

if !strings.Contains(content, "gemini-3-pro-high") {

|

||||

t.Fatal("expected gemini-3-pro-preview to be converted to gemini-3-pro-high")

|

||||

}

|

||||

|

||||

// Verify missing default aliases were supplemented

|

||||

if !strings.Contains(content, "gemini-3-pro-image") {

|

||||

t.Fatal("expected missing default alias gemini-3-pro-image to be added")

|

||||

}

|

||||

if !strings.Contains(content, "gemini-3-flash") {

|

||||

t.Fatal("expected missing default alias gemini-3-flash to be added")

|

||||

}

|

||||

if !strings.Contains(content, "claude-sonnet-4-5") {

|

||||

t.Fatal("expected missing default alias claude-sonnet-4-5 to be added")

|

||||

}

|

||||

if !strings.Contains(content, "claude-sonnet-4-5-thinking") {

|

||||

t.Fatal("expected missing default alias claude-sonnet-4-5-thinking to be added")

|

||||

}

|

||||

if !strings.Contains(content, "claude-opus-4-5-thinking") {

|

||||

t.Fatal("expected missing default alias claude-opus-4-5-thinking to be added")

|

||||

}

|

||||

if !strings.Contains(content, "claude-opus-4-6-thinking") {

|

||||

t.Fatal("expected missing default alias claude-opus-4-6-thinking to be added")

|

||||

}

|

||||

}

|

||||

|

||||

func TestMigrateOAuthModelAlias_AddsDefaultIfNeitherExists(t *testing.T) {

|

||||

t.Parallel()

|

||||

|

||||

dir := t.TempDir()

|

||||

configFile := filepath.Join(dir, "config.yaml")

|

||||

|

||||

content := `debug: true

|

||||

port: 8080

|

||||

`

|

||||

if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {

|

||||

t.Fatal(err)

|

||||

}

|

||||

|

||||

migrated, err := MigrateOAuthModelAlias(configFile)

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error: %v", err)

|

||||

}

|

||||

if !migrated {

|

||||

t.Fatal("expected migration to add default config")

|

||||

}

|

||||

|

||||

// Verify default antigravity config was added

|

||||

data, _ := os.ReadFile(configFile)

|

||||

content = string(data)

|

||||

if !strings.Contains(content, "oauth-model-alias:") {

|

||||

t.Fatal("expected oauth-model-alias to be added")

|

||||

}

|

||||

if !strings.Contains(content, "antigravity:") {

|

||||

t.Fatal("expected antigravity channel to be added")

|

||||

}

|

||||

if !strings.Contains(content, "rev19-uic3-1p") {

|

||||

t.Fatal("expected default antigravity aliases to include rev19-uic3-1p")

|

||||

}

|

||||

}

|

||||

|

||||

func TestMigrateOAuthModelAlias_PreservesOtherConfig(t *testing.T) {

|

||||

t.Parallel()

|

||||

|

||||

dir := t.TempDir()

|

||||

configFile := filepath.Join(dir, "config.yaml")

|

||||

|

||||

content := `debug: true

|

||||

port: 8080

|

||||

oauth-model-mappings:

|

||||

gemini-cli:

|

||||

- name: "test"

|

||||

alias: "t"

|

||||

api-keys:

|

||||

- "key1"

|

||||

- "key2"

|

||||

`

|

||||

if err := os.WriteFile(configFile, []byte(content), 0644); err != nil {

|

||||

t.Fatal(err)

|

||||

}

|

||||

|

||||

migrated, err := MigrateOAuthModelAlias(configFile)

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error: %v", err)

|

||||

}

|

||||

if !migrated {

|

||||

t.Fatal("expected migration to occur")

|

||||

}

|

||||

|

||||

// Verify other config preserved

|

||||

data, _ := os.ReadFile(configFile)

|

||||

content = string(data)

|

||||

if !strings.Contains(content, "debug: true") {

|

||||

t.Fatal("expected debug field to be preserved")

|

||||

}

|

||||

if !strings.Contains(content, "port: 8080") {

|

||||

t.Fatal("expected port field to be preserved")

|

||||

}

|

||||

if !strings.Contains(content, "api-keys:") {

|

||||

t.Fatal("expected api-keys field to be preserved")

|

||||

}

|

||||

}

|

||||

|

||||

func TestMigrateOAuthModelAlias_NonexistentFile(t *testing.T) {

|

||||

t.Parallel()

|

||||

|

||||

migrated, err := MigrateOAuthModelAlias("/nonexistent/path/config.yaml")

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error for nonexistent file: %v", err)

|

||||

}

|

||||

if migrated {

|

||||

t.Fatal("expected no migration for nonexistent file")

|

||||

}

|

||||

}

|

||||

|

||||

func TestMigrateOAuthModelAlias_EmptyFile(t *testing.T) {

|

||||

t.Parallel()

|

||||

|

||||

dir := t.TempDir()

|

||||

configFile := filepath.Join(dir, "config.yaml")

|

||||

|

||||

if err := os.WriteFile(configFile, []byte(""), 0644); err != nil {

|

||||

t.Fatal(err)

|

||||

}

|

||||

|

||||

migrated, err := MigrateOAuthModelAlias(configFile)

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error: %v", err)

|

||||

}

|

||||

if migrated {

|

||||

t.Fatal("expected no migration for empty file")

|

||||

}

|

||||

}

|

||||

@@ -4,10 +4,98 @@

|

||||

package misc

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"net/http"

|

||||

"runtime"

|

||||

"strings"

|

||||

)

|

||||

|

||||

const (

|

||||

// GeminiCLIVersion is the version string reported in the User-Agent for upstream requests.

|

||||

GeminiCLIVersion = "0.31.0"

|

||||

|

||||

// GeminiCLIApiClientHeader is the value for the X-Goog-Api-Client header sent to the Gemini CLI upstream.

|

||||

GeminiCLIApiClientHeader = "google-genai-sdk/1.41.0 gl-node/v22.19.0"

|

||||

)

|

||||

|

||||

// geminiCLIOS maps Go runtime OS names to the Node.js-style platform strings used by Gemini CLI.
func geminiCLIOS() string {
	switch runtime.GOOS {
	case "windows":
		return "win32"
	default:
		return runtime.GOOS
	}
}

// geminiCLIArch maps Go runtime architecture names to the Node.js-style arch strings used by Gemini CLI.
func geminiCLIArch() string {
	switch runtime.GOARCH {
	case "amd64":
		return "x64"
	case "386":
		return "x86"
	default:
		return runtime.GOARCH
	}
}

|

||||

|

||||

// GeminiCLIUserAgent returns a User-Agent string that matches the Gemini CLI format.

|

||||

// The model parameter is included in the UA; pass "" or "unknown" when the model is not applicable.

|

||||

func GeminiCLIUserAgent(model string) string {

|

||||

if model == "" {

|

||||

model = "unknown"

|

||||

}

|

||||

return fmt.Sprintf("GeminiCLI/%s/%s (%s; %s)", GeminiCLIVersion, model, geminiCLIOS(), geminiCLIArch())

|

||||

}
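To make the resulting User-Agent format concrete, the sketch below reproduces the same mapping and `Sprintf` layout, but takes the OS and architecture as arguments so the output does not depend on the machine it runs on. The helper names (`nodePlatform`, `nodeArch`, `userAgent`) and the sample model name are illustrative assumptions, not identifiers from the repository:

```go
package main

import "fmt"

// nodePlatform mirrors the geminiCLIOS mapping for an explicit GOOS value.
func nodePlatform(goos string) string {
	if goos == "windows" {
		return "win32"
	}
	return goos
}

// nodeArch mirrors the geminiCLIArch mapping for an explicit GOARCH value.
func nodeArch(goarch string) string {
	switch goarch {
	case "amd64":
		return "x64"
	case "386":
		return "x86"
	default:
		return goarch
	}
}

// userAgent builds the string in the same layout as GeminiCLIUserAgent.
func userAgent(version, model, goos, goarch string) string {
	if model == "" {
		model = "unknown"
	}
	return fmt.Sprintf("GeminiCLI/%s/%s (%s; %s)", version, model, nodePlatform(goos), nodeArch(goarch))
}

func main() {
	fmt.Println(userAgent("0.31.0", "gemini-2.5-pro", "darwin", "arm64"))
	// GeminiCLI/0.31.0/gemini-2.5-pro (darwin; arm64)
	fmt.Println(userAgent("0.31.0", "", "windows", "amd64"))
	// GeminiCLI/0.31.0/unknown (win32; x64)
}
```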

|

||||

|

||||

// ScrubProxyAndFingerprintHeaders removes all headers that could reveal

|

||||

// proxy infrastructure, client identity, or browser fingerprints from an

|

||||

// outgoing request. This ensures requests to upstream services look like they

|

||||

// originate directly from a native client rather than a third-party client

|

||||

// behind a reverse proxy.

|

||||

func ScrubProxyAndFingerprintHeaders(req *http.Request) {

|

||||

if req == nil {

|

||||

return

|

||||

}

|

||||

|

||||

// --- Proxy tracing headers ---

|

||||

req.Header.Del("X-Forwarded-For")

|

||||

req.Header.Del("X-Forwarded-Host")

|

||||

req.Header.Del("X-Forwarded-Proto")

|

||||

req.Header.Del("X-Forwarded-Port")

|

||||

req.Header.Del("X-Real-IP")

|

||||

req.Header.Del("Forwarded")

|

||||

req.Header.Del("Via")

|

||||

|

||||

// --- Client identity headers ---

|

||||

req.Header.Del("X-Title")

|

||||

req.Header.Del("X-Stainless-Lang")

|

||||

req.Header.Del("X-Stainless-Package-Version")

|

||||

req.Header.Del("X-Stainless-Os")

|

||||

req.Header.Del("X-Stainless-Arch")

|

||||

req.Header.Del("X-Stainless-Runtime")

|

||||

req.Header.Del("X-Stainless-Runtime-Version")

|

||||

req.Header.Del("Http-Referer")

|

||||

req.Header.Del("Referer")

|

||||

|

||||

// --- Browser / Chromium fingerprint headers ---

|

||||

// These are sent by Electron-based clients (e.g. CherryStudio) using the

|

||||

// Fetch API, but NOT by Node.js https module (which Antigravity uses).

|

||||

req.Header.Del("Sec-Ch-Ua")

|

||||

req.Header.Del("Sec-Ch-Ua-Mobile")

|

||||

req.Header.Del("Sec-Ch-Ua-Platform")

|

||||

req.Header.Del("Sec-Fetch-Mode")

|

||||

req.Header.Del("Sec-Fetch-Site")

|

||||

req.Header.Del("Sec-Fetch-Dest")

|

||||

req.Header.Del("Priority")

|

||||

|

||||

// --- Encoding negotiation ---

|

||||

// Antigravity (Node.js) sends "gzip, deflate, br" by default;

|

||||

// Electron-based clients may add "zstd" which is a fingerprint mismatch.

|

||||

req.Header.Del("Accept-Encoding")

|

||||

}
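The deny-list above depends on `http.Header.Del` canonicalizing the key it is given, so a header set as `x-forwarded-for` is still removed by `Del("X-Forwarded-For")`. A small sketch of that behavior, using a shortened header list and a hypothetical `scrub` helper (the values are documentation-range placeholders):

```go
package main

import (
	"fmt"
	"net/http"
)

// scrub deletes each named header from h. The names passed in main are an
// illustrative subset of the headers removed above.
func scrub(h http.Header, names ...string) {
	for _, name := range names {
		h.Del(name) // Del canonicalizes the key before deleting
	}
}

// demo returns how many headers survive and the surviving Content-Type.
func demo() (int, string) {
	h := http.Header{}
	h.Set("x-forwarded-for", "203.0.113.7") // stored as "X-Forwarded-For"
	h.Set("sec-ch-ua", `"Chromium";v="130"`)
	h.Set("Content-Type", "application/json")

	scrub(h, "X-Forwarded-For", "Sec-Ch-Ua", "Accept-Encoding")
	return len(h), h.Get("Content-Type")
}

func main() {
	n, contentType := demo()
	fmt.Println(n, contentType) // 1 application/json
}
```

One caveat: keys written directly into the map in non-canonical form (for example `h["x-real-ip"] = ...`) bypass canonicalization and would not be matched by `Del`.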

|

||||

|

||||

// EnsureHeader ensures that a header exists in the target header map by checking

|

||||

// multiple sources in order of priority: source headers, existing target headers,

|

||||

// and finally the default value. It only sets the header if it's not already present

|

||||

|

||||

@@ -959,22 +959,17 @@ type AntigravityModelConfig struct {

|

||||

// Keys use upstream model names returned by the Antigravity models endpoint.

|

||||

func GetAntigravityModelConfig() map[string]*AntigravityModelConfig {

|

||||

return map[string]*AntigravityModelConfig{

|

||||

// "rev19-uic3-1p": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true}},

|

||||

"gemini-2.5-flash": {Thinking: &ThinkingSupport{Min: 0, Max: 24576, ZeroAllowed: true, DynamicAllowed: true}},

|

||||

"gemini-2.5-flash-lite": {Thinking: &ThinkingSupport{Min: 0, Max: 24576, ZeroAllowed: true, DynamicAllowed: true}},

|

||||

"gemini-3-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3-pro-image": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3.1-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3.1-flash-image": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},

|

||||

"gemini-3-flash": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}}},

|

||||

"claude-opus-4-5-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

"claude-opus-4-6-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

"claude-sonnet-4-5": {MaxCompletionTokens: 64000},

|

||||

"claude-sonnet-4-5-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

"claude-sonnet-4-6": {MaxCompletionTokens: 64000},

|

||||

"claude-sonnet-4-6-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 128000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

"gpt-oss-120b-medium": {},

|

||||

"tab_flash_lite_preview": {},

|

||||

"gemini-2.5-flash": {Thinking: &ThinkingSupport{Min: 0, Max: 24576, ZeroAllowed: true, DynamicAllowed: true}},

|

||||

"gemini-2.5-flash-lite": {Thinking: &ThinkingSupport{Min: 0, Max: 24576, ZeroAllowed: true, DynamicAllowed: true}},

|

||||

"gemini-3-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3-pro-low": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3.1-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3.1-pro-low": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},

|

||||

"gemini-3.1-flash-image": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},

|

||||

"gemini-3-flash": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}}},

|

||||

"claude-opus-4-6-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

"claude-sonnet-4-6": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

|

||||

"gpt-oss-120b-medium": {},

|

||||

}

|

||||

}

|

||||

|

||||

|

||||

@@ -49,6 +49,10 @@ type ModelInfo struct {

|

||||

SupportedParameters []string `json:"supported_parameters,omitempty"`

|

||||

// SupportedEndpoints lists supported API endpoints (e.g., "/chat/completions", "/responses").

|

||||

SupportedEndpoints []string `json:"supported_endpoints,omitempty"`

|

||||

// SupportedInputModalities lists supported input modalities (e.g., TEXT, IMAGE, VIDEO, AUDIO)

|

||||

SupportedInputModalities []string `json:"supportedInputModalities,omitempty"`

|

||||

// SupportedOutputModalities lists supported output modalities (e.g., TEXT, IMAGE)

|

||||

SupportedOutputModalities []string `json:"supportedOutputModalities,omitempty"`

|

||||

|

||||

// Thinking holds provider-specific reasoning/thinking budget capabilities.

|

||||

// This is optional and currently used for Gemini thinking budget normalization.

|

||||

@@ -501,8 +505,11 @@ func cloneModelInfo(model *ModelInfo) *ModelInfo {

|

||||

if len(model.SupportedParameters) > 0 {

|

||||

copyModel.SupportedParameters = append([]string(nil), model.SupportedParameters...)

|

||||

}

|

||||

if len(model.SupportedEndpoints) > 0 {

|

||||

copyModel.SupportedEndpoints = append([]string(nil), model.SupportedEndpoints...)

|

||||

if len(model.SupportedInputModalities) > 0 {

|

||||

copyModel.SupportedInputModalities = append([]string(nil), model.SupportedInputModalities...)

|

||||

}

|

||||

if len(model.SupportedOutputModalities) > 0 {

|

||||

copyModel.SupportedOutputModalities = append([]string(nil), model.SupportedOutputModalities...)

|

||||

}

|

||||

return &copyModel

|

||||

}

|

||||

@@ -1089,6 +1096,12 @@ func (r *ModelRegistry) convertModelToMap(model *ModelInfo, handlerType string)

|

||||

if len(model.SupportedGenerationMethods) > 0 {

|

||||

result["supportedGenerationMethods"] = model.SupportedGenerationMethods

|

||||

}

|

||||

if len(model.SupportedInputModalities) > 0 {

|

||||

result["supportedInputModalities"] = model.SupportedInputModalities

|

||||

}

|

||||

if len(model.SupportedOutputModalities) > 0 {

|

||||

result["supportedOutputModalities"] = model.SupportedOutputModalities

|

||||

}

|

||||

return result

|

||||

|

||||

default:

|

||||

|

||||

@@ -8,6 +8,7 @@ import (

|

||||

"bytes"

|

||||

"context"

|

||||

"crypto/sha256"

|

||||

"crypto/tls"

|

||||

"encoding/binary"

|

||||

"encoding/json"

|

||||

"errors"

|

||||

@@ -45,10 +46,10 @@ const (

|

||||

antigravityModelsPath = "/v1internal:fetchAvailableModels"

|

||||

antigravityClientID = "1071006060591-tmhssin2h21lcre235vtolojh4g403ep.apps.googleusercontent.com"

|

||||

antigravityClientSecret = "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"

|

||||

defaultAntigravityAgent = "antigravity/1.104.0 darwin/arm64"

|

||||

defaultAntigravityAgent = "antigravity/1.19.6 darwin/arm64"

|

||||

antigravityAuthType = "antigravity"

|

||||

refreshSkew = 3000 * time.Second

|

||||

systemInstruction = "You are Antigravity, a powerful agentic AI coding assistant designed by the Google Deepmind team working on Advanced Agentic Coding.You are pair programming with a USER to solve their coding task. The task may require creating a new codebase, modifying or debugging an existing codebase, or simply answering a question.**Absolute paths only****Proactiveness**"

|

||||

// systemInstruction = "You are Antigravity, a powerful agentic AI coding assistant designed by the Google Deepmind team working on Advanced Agentic Coding.You are pair programming with a USER to solve their coding task. The task may require creating a new codebase, modifying or debugging an existing codebase, or simply answering a question.**Absolute paths only****Proactiveness**"

|

||||

)

|

||||

|

||||

var (

|

||||

@@ -142,6 +143,62 @@ func NewAntigravityExecutor(cfg *config.Config) *AntigravityExecutor {

|

||||

return &AntigravityExecutor{cfg: cfg}

|

||||

}

|

||||

|

||||

// antigravityTransport is a singleton HTTP/1.1 transport shared by all Antigravity requests.

|

||||

// It is initialized once via antigravityTransportOnce to avoid leaking a new connection pool

|

||||

// (and the goroutines managing it) on every request.

|

||||

var (

|

||||

antigravityTransport *http.Transport

|

||||

antigravityTransportOnce sync.Once

|

||||

)

|

||||

|

||||

func cloneTransportWithHTTP11(base *http.Transport) *http.Transport {
	if base == nil {
		return nil
	}

	clone := base.Clone()
	clone.ForceAttemptHTTP2 = false
	// Wipe TLSNextProto to prevent implicit HTTP/2 upgrade.
	clone.TLSNextProto = make(map[string]func(authority string, c *tls.Conn) http.RoundTripper)
	if clone.TLSClientConfig == nil {
		clone.TLSClientConfig = &tls.Config{}
	} else {
		clone.TLSClientConfig = clone.TLSClientConfig.Clone()
	}
	// Actively advertise only HTTP/1.1 in the ALPN handshake.
	clone.TLSClientConfig.NextProtos = []string{"http/1.1"}
	return clone
}

|

||||

|

||||

// initAntigravityTransport creates the shared HTTP/1.1 transport exactly once.

|

||||

func initAntigravityTransport() {

|

||||

base, ok := http.DefaultTransport.(*http.Transport)

|

||||

if !ok {

|

||||

base = &http.Transport{}

|

||||

}

|

||||

antigravityTransport = cloneTransportWithHTTP11(base)

|

||||

}

|

||||

|

||||

// newAntigravityHTTPClient creates an HTTP client specifically for Antigravity,

|

||||

// enforcing HTTP/1.1 by disabling HTTP/2 to perfectly mimic Node.js https defaults.

|

||||

// The underlying Transport is a singleton to avoid leaking connection pools.

|

||||

func newAntigravityHTTPClient(ctx context.Context, cfg *config.Config, auth *cliproxyauth.Auth, timeout time.Duration) *http.Client {

|

||||

antigravityTransportOnce.Do(initAntigravityTransport)

|

||||

|

||||

client := newProxyAwareHTTPClient(ctx, cfg, auth, timeout)

|

||||

// If no transport is set, use the shared HTTP/1.1 transport.

|

||||

if client.Transport == nil {

|

||||

client.Transport = antigravityTransport

|

||||

return client

|

||||

}

|

||||

|

||||

// Preserve proxy settings from proxy-aware transports while forcing HTTP/1.1.

|

||||

if transport, ok := client.Transport.(*http.Transport); ok {

|

||||

client.Transport = cloneTransportWithHTTP11(transport)

|

||||

}

|

||||

return client

|

||||

}
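The two ideas in this hunk, forcing HTTP/1.1 on a cloned transport and building that transport once behind `sync.Once`, can be tried in isolation with the standard library alone. The following is a minimal sketch under that assumption; `http11Only`, `transport`, and the `shared*` variables are illustrative names, and the proxy-aware client from the repository is deliberately left out:

```go
package main

import (
	"crypto/tls"
	"fmt"
	"net/http"
	"sync"
)

var (
	sharedTransport     *http.Transport
	sharedTransportOnce sync.Once
)

// http11Only returns a copy of base that never negotiates HTTP/2.
func http11Only(base *http.Transport) *http.Transport {
	clone := base.Clone() // Clone also copies TLSClientConfig, so base stays untouched
	clone.ForceAttemptHTTP2 = false
	// A non-nil, empty map disables the automatic HTTP/2 upgrade.
	clone.TLSNextProto = map[string]func(string, *tls.Conn) http.RoundTripper{}
	if clone.TLSClientConfig == nil {
		clone.TLSClientConfig = &tls.Config{}
	}
	// Offer only HTTP/1.1 during ALPN.
	clone.TLSClientConfig.NextProtos = []string{"http/1.1"}
	return clone
}

// transport lazily builds the shared transport; every caller gets the same pointer.
func transport() *http.Transport {
	sharedTransportOnce.Do(func() {
		sharedTransport = http11Only(&http.Transport{})
	})
	return sharedTransport
}

func main() {
	a, b := transport(), transport()
	fmt.Println(a == b, a.TLSClientConfig.NextProtos, len(a.TLSNextProto))
	// true [http/1.1] 0
}
```

Sharing one transport keeps a single connection pool alive for the process, whereas the per-request clone in the proxy-aware branch above still creates a fresh pool on each call.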

|

||||

|

||||

// Identifier returns the executor identifier.

|

||||

func (e *AntigravityExecutor) Identifier() string { return antigravityAuthType }

|

||||

|

||||

@@ -162,6 +219,8 @@ func (e *AntigravityExecutor) PrepareRequest(req *http.Request, auth *cliproxyau

|

||||

}

|

||||

|

||||

// HttpRequest injects Antigravity credentials into the request and executes it.

|

||||

// It uses a whitelist approach: all incoming headers are stripped and only

|

||||

// the minimum set required by the Antigravity protocol is explicitly set.

|

||||

func (e *AntigravityExecutor) HttpRequest(ctx context.Context, auth *cliproxyauth.Auth, req *http.Request) (*http.Response, error) {

|

||||

if req == nil {

|

||||

return nil, fmt.Errorf("antigravity executor: request is nil")

|

||||

@@ -170,10 +229,29 @@ func (e *AntigravityExecutor) HttpRequest(ctx context.Context, auth *cliproxyaut

|

||||

ctx = req.Context()

|

||||

}

|

||||

httpReq := req.WithContext(ctx)

|

||||

|

||||

// --- Whitelist: save only the headers we need from the original request ---

|

||||

contentType := httpReq.Header.Get("Content-Type")

|

||||

|

||||

// Wipe ALL incoming headers

|

||||

for k := range httpReq.Header {

|

||||

delete(httpReq.Header, k)

|

||||

}

|

||||

|

||||

// --- Set only the headers Antigravity actually sends ---

|

||||

if contentType != "" {

|

||||

httpReq.Header.Set("Content-Type", contentType)

|

||||

}

|

||||

// Content-Length is managed automatically by Go's http.Client from the Body

|

||||

httpReq.Header.Set("User-Agent", resolveUserAgent(auth))

|

||||

httpReq.Close = true // sends Connection: close

|

||||

|

||||

// Inject Authorization: Bearer <token>

|

||||

if err := e.PrepareRequest(httpReq, auth); err != nil {

|

||||

return nil, err

|

||||

}

|

||||

httpClient := newProxyAwareHTTPClient(ctx, e.cfg, auth, 0)

|

||||

|

||||

httpClient := newAntigravityHTTPClient(ctx, e.cfg, auth, 0)

|

||||

return httpClient.Do(httpReq)

|

||||

}
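The whitelist approach in `HttpRequest` is the inverse of a deny-list: save what is needed, wipe everything, then set a known-good set. A rough sketch of the pattern on a bare `http.Header`, with a hypothetical `keepOnly` helper and a placeholder User-Agent value (the real code obtains it from `resolveUserAgent`, which is not shown here):

```go
package main

import (
	"fmt"
	"net/http"
)

// keepOnly wipes every header on h and restores only those named in keep
// that were present beforehand. Hypothetical helper for illustration.
func keepOnly(h http.Header, keep ...string) {
	saved := make(map[string][]string, len(keep))
	for _, name := range keep {
		if vals := h.Values(name); len(vals) > 0 {
			saved[name] = append([]string(nil), vals...)
		}
	}
	for k := range h {
		delete(h, k) // deleting map entries during range is safe in Go
	}
	for name, vals := range saved {
		for _, v := range vals {
			h.Add(name, v)
		}
	}
}

// demo returns the header count, the kept Content-Type, and the wiped X-Forwarded-For.
func demo() (int, string, string) {
	h := http.Header{}
	h.Set("Content-Type", "application/json")
	h.Set("X-Forwarded-For", "203.0.113.7")
	h.Set("Sec-Fetch-Mode", "cors")

	keepOnly(h, "Content-Type")
	h.Set("User-Agent", "example-agent/0.0") // placeholder value
	return len(h), h.Get("Content-Type"), h.Get("X-Forwarded-For")
}

func main() {
	n, contentType, forwarded := demo()
	fmt.Printf("%d %q %q\n", n, contentType, forwarded) // 2 "application/json" ""
}
```

A whitelist fails closed: a header that a new client starts sending is dropped by default rather than leaking until someone adds it to a deny-list.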

|

||||

|

||||

@@ -185,7 +263,7 @@ func (e *AntigravityExecutor) Execute(ctx context.Context, auth *cliproxyauth.Au

|

||||

baseModel := thinking.ParseSuffix(req.Model).ModelName

|

||||

isClaude := strings.Contains(strings.ToLower(baseModel), "claude")

|

||||

|

||||

if isClaude || strings.Contains(baseModel, "gemini-3-pro") {

|

||||

if isClaude || strings.Contains(baseModel, "gemini-3-pro") || strings.Contains(baseModel, "gemini-3.1-flash-image") {

|

||||

return e.executeClaudeNonStream(ctx, auth, req, opts)

|

||||

}

|

||||

|

||||

@@ -220,7 +298,7 @@ func (e *AntigravityExecutor) Execute(ctx context.Context, auth *cliproxyauth.Au

|

||||

translated = applyPayloadConfigWithRoot(e.cfg, baseModel, "antigravity", "request", translated, originalTranslated, requestedModel)

|

||||

|

||||

baseURLs := antigravityBaseURLFallbackOrder(auth)

|

||||

httpClient := newProxyAwareHTTPClient(ctx, e.cfg, auth, 0)

|

||||

httpClient := newAntigravityHTTPClient(ctx, e.cfg, auth, 0)

|

||||

|

||||

attempts := antigravityRetryAttempts(auth, e.cfg)

|

||||

|

||||

@@ -362,7 +440,7 @@ func (e *AntigravityExecutor) executeClaudeNonStream(ctx context.Context, auth *

|

||||

translated = applyPayloadConfigWithRoot(e.cfg, baseModel, "antigravity", "request", translated, originalTranslated, requestedModel)

|

||||

|

||||

baseURLs := antigravityBaseURLFallbackOrder(auth)

|

||||

httpClient := newProxyAwareHTTPClient(ctx, e.cfg, auth, 0)

|

||||

httpClient := newAntigravityHTTPClient(ctx, e.cfg, auth, 0)

|

||||

|

||||

attempts := antigravityRetryAttempts(auth, e.cfg)

|

||||

|

||||

@@ -754,7 +832,7 @@ func (e *AntigravityExecutor) ExecuteStream(ctx context.Context, auth *cliproxya

|

||||

translated = applyPayloadConfigWithRoot(e.cfg, baseModel, "antigravity", "request", translated, originalTranslated, requestedModel)

|

||||

|

||||

baseURLs := antigravityBaseURLFallbackOrder(auth)

|

||||

httpClient := newProxyAwareHTTPClient(ctx, e.cfg, auth, 0)

|

||||

httpClient := newAntigravityHTTPClient(ctx, e.cfg, auth, 0)

|

||||

|

||||

attempts := antigravityRetryAttempts(auth, e.cfg)

|

||||

|

||||

@@ -956,7 +1034,7 @@ func (e *AntigravityExecutor) CountTokens(ctx context.Context, auth *cliproxyaut

|

||||

payload = deleteJSONField(payload, "request.safetySettings")

|

||||

|

||||

baseURLs := antigravityBaseURLFallbackOrder(auth)

|

||||

httpClient := newProxyAwareHTTPClient(ctx, e.cfg, auth, 0)

|

||||

httpClient := newAntigravityHTTPClient(ctx, e.cfg, auth, 0)

|

||||

|

||||