Mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git, synced 2026-04-03 19:21:17 +00:00

Compare commits

92 Commits

| SHA1 |
|---|
| 516d22c695 |
| 73cda6e836 |
| 0805989ee5 |
| 75da02af55 |
| ab9ebea592 |
| 7ee37ee4b9 |
| 837afffb31 |
| 03a1bac898 |
| 3171d524f0 |
| 3e78a8d500 |
| fcba912cc4 |
| 7170eeea5f |
| e3eb048c7a |
| a59e92435b |
| 108895fc04 |
| abc293c642 |
| bb44671845 |
| 09e480036a |
| 249f969110 |
| 4f8acec2d8 |
| 34339f61ee |
| 4045378cb4 |
| 2df35449fe |
| c744179645 |
| 9720b03a6b |
| f2c0f3d325 |
| 4f99bc54f1 |
| 913f4a9c5f |
| 25d1c18a3f |
| d09dd4d0b2 |
| 474fb042da |
| 8435c3d7be |
| e783d0a62e |
| b05f575e9b |
| f5e9f01811 |
| ff7dbb5867 |
| e34b2b4f1d |
| 15c2f274ea |
| 37249339ac |
| c422d16beb |
| 66cd50f603 |
| caa529c282 |
| 51a4379bf4 |
| acf98ed10e |
| d1c07a091e |
| c1a8adf1ab |
| 08e078fc25 |
| 105a21548f |
| 1734aa1664 |
| ca11b236a7 |
| 6fdff8227d |
| 330e12d3c2 |
| bd09c0bf09 |
| b468ca79c3 |
| d2c7e4e96a |
| 1c7003ff68 |
| 1b44364e78 |
| ec77f4a4f5 |
| f611dd6e96 |
| 07b7c1a1e0 |
| 51fd58d74f |
| faae9c2f7c |
| bc3a6e4646 |
| b09b03e35e |
| 16231947e7 |
| 39b9a38fbc |
| bd855abec9 |
| 7c3c2e9f64 |
| c10f8ae2e2 |
| a0bf33eca6 |
| 88dd9c715d |
| a3e21df814 |
| d3b94c9241 |
| c1d7599829 |
| d11936f292 |
| 17363edf25 |
| 279cbbbb8a |
| 486cd4c343 |
| 25feceb783 |
| d26752250d |
| b15453c369 |
| 04ba8c8bc3 |
| 6570692291 |
| f73d55ddaa |
| 13aa5b3375 |
| 0fcc02fbea |
| c03883ccf0 |
| 134a9eac9d |
| 6d8de0ade4 |
| 1587ff5e74 |
| f033d3a6df |
| 145e0e0b5d |

2

.gitignore

vendored


@@ -37,13 +37,13 @@ GEMINI.md

 # Tooling metadata
 .vscode/*
 .worktrees/
 .codex/*
 .claude/*
 .gemini/*
 .serena/*
 .agent/*
-.agents/*
+.agents/*
 .opencode/*
 .idea/*
 .bmad/*


@@ -1,6 +1,6 @@
 # CLIProxyAPI Plus

-[English](README.md) | 中文 | [日本語](README_JA.md)
+[English](README.md) | 中文

 This is the Plus version of [CLIProxyAPI](https://github.com/router-for-me/CLIProxyAPI), which adds support for third-party providers on top of the original.


199

README_JA.md


@@ -1,199 +0,0 @@

|

||||

# CLI Proxy API

|

||||

|

||||

[English](README.md) | [中文](README_CN.md) | 日本語

|

||||

|

||||

CLI向けのOpenAI/Gemini/Claude/Codex互換APIインターフェースを提供するプロキシサーバーです。

|

||||

|

||||

OAuth経由でOpenAI Codex(GPTモデル)およびClaude Codeもサポートしています。

|

||||

|

||||

ローカルまたはマルチアカウントのCLIアクセスを、OpenAI(Responses含む)/Gemini/Claude互換のクライアントやSDKで利用できます。

|

||||

|

||||

## スポンサー

|

||||

|

||||

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

|

||||

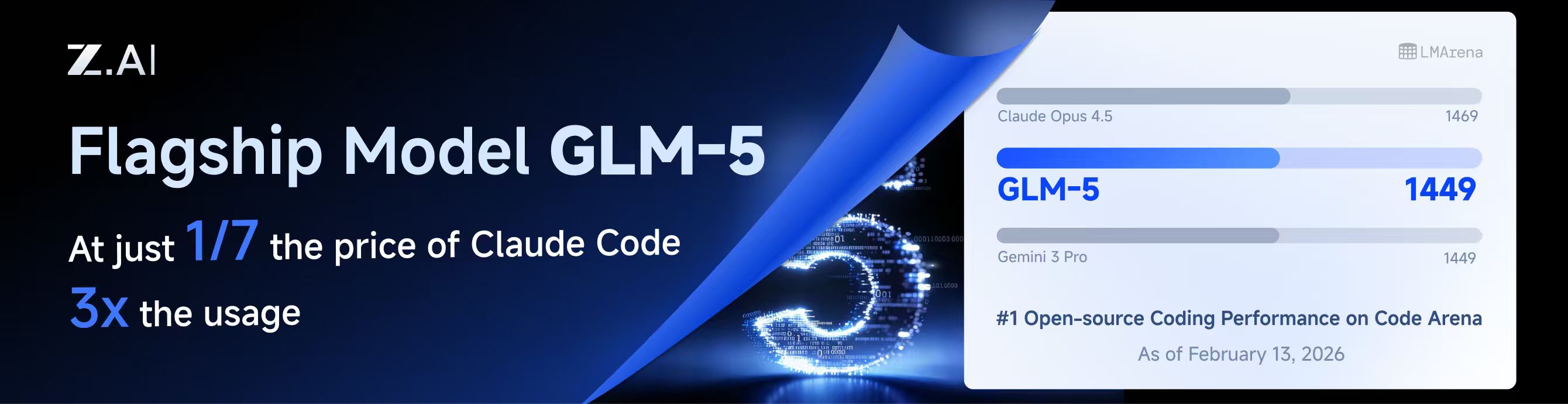

本プロジェクトはZ.aiにスポンサーされており、GLM CODING PLANの提供を受けています。

|

||||

|

||||

GLM CODING PLANはAIコーディング向けに設計されたサブスクリプションサービスで、月額わずか$10から利用可能です。フラッグシップのGLM-4.7および(GLM-5はProユーザーのみ利用可能)モデルを10以上の人気AIコーディングツール(Claude Code、Cline、Roo Codeなど)で利用でき、開発者にトップクラスの高速かつ安定したコーディング体験を提供します。

|

||||

|

||||

GLM CODING PLANを10%割引で取得:https://z.ai/subscribe?ic=8JVLJQFSKB

|

||||

|

||||

---

|

||||

|

||||

-<table>
-<tbody>
-<tr>
-<td width="180"><a href="https://www.packyapi.com/register?aff=cliproxyapi"><img src="./assets/packycode.png" alt="PackyCode" width="150"></a></td>
-<td>Thanks to PackyCode for sponsoring this project! PackyCode is a reliable and efficient API relay service provider, offering relay services for Claude Code, Codex, Gemini, and more. PackyCode provides a special discount for users of this software: register via <a href="https://www.packyapi.com/register?aff=cliproxyapi">this link</a> and enter the promo code "cliproxyapi" when topping up to get 10% off.</td>
-</tr>
-<tr>
-<td width="180"><a href="https://www.aicodemirror.com/register?invitecode=TJNAIF"><img src="./assets/aicodemirror.png" alt="AICodeMirror" width="150"></a></td>
-<td>Thanks to AICodeMirror for sponsoring this project! AICodeMirror provides official high-stability relay services for Claude Code / Codex / Gemini CLI, with enterprise-grade concurrency, fast invoicing, and 24/7 dedicated technical support. Official Claude Code / Codex / Gemini channels are available at 38% / 2% / 9% of the original price, with further discounts on top-ups! Special offer for CLIProxyAPI users: register via <a href="https://www.aicodemirror.com/register?invitecode=TJNAIF">this link</a> to get 20% off your first top-up, and enterprise customers can get up to 25% off!</td>
-</tr>
-<tr>
-<td width="180"><a href="https://shop.bmoplus.com/?utm_source=github"><img src="./assets/bmoplus.png" alt="BmoPlus" width="150"></a></td>
-<td>Thanks to BmoPlus for supporting this project! BmoPlus is a reliable AI account service provider built for heavy users of AI subscriptions, offering stable official top-up services and ready-to-use accounts for ChatGPT Plus / ChatGPT Pro (fully guaranteed) / Claude Pro / Super Grok / Gemini Pro. Users who register and order through the <a href="https://shop.bmoplus.com/?utm_source=github">BmoPlus AI account store / top-up service</a> can use GPT at <b>about 10% of the official website price (90% OFF)</b>!</td>
-</tr>
-<tr>
-<td width="180"><a href="https://www.lingtrue.com/register"><img src="./assets/lingtrue.png" alt="LingtrueAPI" width="150"></a></td>
-<td>Thanks to LingtrueAPI for sponsoring this project! LingtrueAPI is a global large-model API relay platform that provides API access to top models such as Claude Code, Codex, and Gemini, helping users connect to AI capabilities worldwide at low cost and with high stability. LingtrueAPI offers a special discount for users of this software: register via <a href="https://www.lingtrue.com/register">this link</a> and enter the promo code "LingtrueAPI" on your first top-up to get 10% off.</td>
-</tr>
-</tbody>
-</table>
-

-## Overview
-
-- OpenAI/Gemini/Claude-compatible API endpoints for CLI models
-- OpenAI Codex support (GPT models) via OAuth login
-- Claude Code support via OAuth login
-- Qwen Code support via OAuth login
-- iFlow support via OAuth login
-- Amp CLI and IDE extension support with provider routing
-- Streaming and non-streaming responses
-- Function calling/tools support
-- Multimodal input support (text and images)
-- Multiple accounts with round-robin load balancing (Gemini, OpenAI, Claude, Qwen, and iFlow)
-- Simple CLI authentication flows (Gemini, OpenAI, Claude, Qwen, and iFlow)
-- Generative Language API key support
-- AI Studio Build multi-account load balancing
-- Gemini CLI multi-account load balancing
-- Claude Code multi-account load balancing
-- Qwen Code multi-account load balancing
-- iFlow multi-account load balancing
-- OpenAI Codex multi-account load balancing
-- OpenAI-compatible upstream providers via configuration (e.g., OpenRouter)
-- Reusable Go SDK for embedding the proxy (see `docs/sdk-usage.md`)
-

-## Getting Started
-
-CLIProxyAPI guides: [https://help.router-for.me/](https://help.router-for.me/)
-
-## Management API
-
-See [MANAGEMENT_API.md](https://help.router-for.me/management/api)
-

-## Amp CLI Support
-
-CLIProxyAPI includes integrated support for [Amp CLI](https://ampcode.com) and the Amp IDE extensions, so you can use your Google/ChatGPT/Claude OAuth subscriptions with Amp's coding tools:
-
-- Provider route aliases for Amp's API patterns (`/api/provider/{provider}/v1...`)
-- Management proxy for OAuth authentication and account features
-- Smart model fallback with automatic routing
-- **Model mapping** that routes unavailable models to alternatives (e.g., `claude-opus-4.5` → `claude-sonnet-4`)
-- Security-first design with localhost-only management endpoints
-
-When you need the request/response shape of a specific backend family, prefer the provider-specific paths over the unified `/v1/...` endpoints.
-
-- `/api/provider/{provider}/v1/messages` for messages-style backends
-- `/api/provider/{provider}/v1beta/models/...` for model-scoped generate endpoints
-- `/api/provider/{provider}/v1/chat/completions` for chat-completions backends
-
-These paths help select the protocol surface, but when the same client-visible model name is reused across multiple backends they do not by themselves pin the inference executor. Actual inference routing still follows the model/alias resolution in the request. To pin a backend strictly, use unique aliases or prefixes, or avoid overlapping client-visible model names altogether.
-
-**→ [Complete Amp CLI Integration Guide](https://help.router-for.me/agent-client/amp-cli.html)**
-

-## SDK Docs
-
-- Usage: [docs/sdk-usage.md](docs/sdk-usage.md)
-- Advanced (executors and translators): [docs/sdk-advanced.md](docs/sdk-advanced.md)
-- Access: [docs/sdk-access.md](docs/sdk-access.md)
-- Watcher: [docs/sdk-watcher.md](docs/sdk-watcher.md)
-- Custom provider example: `examples/custom-provider`
-

-## Contributing
-
-Contributions are welcome! Feel free to submit a Pull Request.
-
-1. Fork the repository
-2. Create a feature branch (`git checkout -b feature/amazing-feature`)
-3. Commit your changes (`git commit -m 'Add some amazing feature'`)
-4. Push to the branch (`git push origin feature/amazing-feature`)
-5. Open a Pull Request
-

-## Related Projects
-
-The following projects are based on CLIProxyAPI:
-
-### [vibeproxy](https://github.com/automazeio/vibeproxy)
-
-Native macOS menu bar app for using your Claude Code and ChatGPT subscriptions with AI coding tools - no API keys needed
-
-### [Subtitle Translator](https://github.com/VjayC/SRT-Subtitle-Translator-Validator)
-
-Browser-based tool that translates SRT subtitles using your Gemini subscription via CLIProxyAPI, with automatic validation and error correction - no API keys needed
-
-### [CCS (Claude Code Switch)](https://github.com/kaitranntt/ccs)
-
-CLI wrapper for instantly switching between multiple Claude accounts and alternative models (Gemini, Codex, Antigravity) via CLIProxyAPI OAuth - no API keys needed
-
-### [ProxyPal](https://github.com/heyhuynhgiabuu/proxypal)
-
-Native macOS GUI for managing CLIProxyAPI: configure providers, model mappings, and endpoints via OAuth - no API keys needed
-
-### [Quotio](https://github.com/nguyenphutrong/quotio)
-
-Native macOS menu bar app that unifies Claude, Gemini, OpenAI, Qwen, and Antigravity subscriptions, with real-time quota tracking and smart automatic failover. Built for coding tools such as Claude Code, OpenCode, and Droid - no API keys needed
-
-### [CodMate](https://github.com/loocor/CodMate)
-
-Native macOS SwiftUI app for managing CLI AI sessions (Codex, Claude Code, Gemini CLI), with unified provider management, Git review, project organization, global search, and terminal integration. Integrates with CLIProxyAPI to provide OAuth authentication for Codex, Claude, Gemini, Antigravity, and Qwen Code, and supports rerouting built-in and third-party providers through a single proxy endpoint - no API keys needed for OAuth providers
-
-### [ProxyPilot](https://github.com/Finesssee/ProxyPilot)
-
-CLIProxyAPI fork for Windows with a TUI, system tray, and multi-provider OAuth - for AI coding tools, no API keys needed
-
-### [Claude Proxy VSCode](https://github.com/uzhao/claude-proxy-vscode)
-
-VSCode extension for quickly switching between Claude Code models. It integrates CLIProxyAPI as its backend and manages its lifecycle automatically in the background
-
-### [ZeroLimit](https://github.com/0xtbug/zero-limit)
-
-Windows desktop app built with Tauri + React that monitors AI coding assistant quotas via CLIProxyAPI. Tracks usage across Gemini, Claude, OpenAI Codex, and Antigravity accounts with a real-time dashboard, system tray integration, and one-click proxy control - no API keys needed
-
-### [CPA-XXX Panel](https://github.com/ferretgeek/CPA-X)
-
-Lightweight web admin panel for CLIProxyAPI with health checks, resource monitoring, real-time logs, automatic updates, request statistics, and pricing display. Supports one-click installation and a systemd service
-
-### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)
-
-Windows tray application implemented in PowerShell scripts with no third-party library dependencies. Supports automatic shortcut creation, silent running, password management, channel switching (Main / Plus), and automatic download and update
-
-### [霖君](https://github.com/wangdabaoqq/LinJun)
-
-霖君 is a cross-platform desktop application for managing AI programming assistants, supporting macOS, Windows, and Linux. It provides unified management of coding tools such as Claude Code, Gemini CLI, OpenAI Codex, and Qwen Code, with multi-account quota tracking through a local proxy and one-click configuration
-
-### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)
-
-Modern web-based management dashboard for CLIProxyAPI built with Next.js, React, and PostgreSQL. Features real-time log streaming, structured configuration editing, API key management, OAuth provider integration for Claude/Gemini/Codex, usage analytics, container management, and configuration sync with OpenCode via a companion plugin - no manual YAML editing needed
-
-### [All API Hub](https://github.com/qixing-jk/all-api-hub)
-
-Browser extension for one-stop management of New API-compatible relay site accounts. Features balance and usage dashboards, automatic check-in, one-click key export to common apps, in-page API availability testing, and channel/model sync and redirection. Integrates with CLIProxyAPI through the Management API for one-click provider import and configuration sync
-
-### [Shadow AI](https://github.com/HEUDavid/shadow-ai)
-
-Shadow AI is an AI assistant tool designed specifically for restricted environments. It provides a stealth operation mode with no windows or traces, and enables cross-device AI question-and-answer interaction and control over a LAN (local area network). In essence it is an automated collaboration layer of "screen/audio capture + AI inference + low-friction delivery" that helps users use AI assistants immersively across applications on controlled devices and in restricted environments.
-
-> [!NOTE]
-> If you have developed a project based on CLIProxyAPI, please open a PR to add it to this list.
-

-## Other Options
-
-The following projects are ports of CLIProxyAPI or were inspired by it:
-
-### [9Router](https://github.com/decolua/9router)
-
-A Next.js implementation inspired by CLIProxyAPI. Easy to install and use, it was built from scratch with format translation (OpenAI/Claude/Gemini/Ollama), a combo system with automatic fallback, multi-account management with exponential backoff, a Next.js web dashboard, and support for CLI tools (Cursor, Claude Code, Cline, RooCode) - no API keys needed
-
-### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)
-
-Never stop coding. Smart routing to free and low-cost AI models with automatic fallback.
-
-OmniRoute is an AI gateway for multi-provider LLMs: an OpenAI-compatible endpoint with smart routing, load balancing, retries, and fallbacks. Add policies, rate limits, caching, and observability for reliable, cost-aware inference.
-
-> [!NOTE]
-> If you have developed a port of CLIProxyAPI or a project inspired by it, please open a PR to add it to this list.
-
-## License
-
-This project is licensed under the MIT License - see the [LICENSE](LICENSE) file for details.
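The Amp CLI section of the removed README_JA.md describes provider-specific route aliases of the form `/api/provider/{provider}/v1/...`. As a rough illustration only, the sketch below assembles two of those paths on the client side. The helper name `providerPath` and the provider values `claude` and `openai` are assumptions made for this example and are not taken from the repository.

```go
package main

import (
	"fmt"
	"net/url"
)

// providerPath builds a provider-specific route such as
// /api/provider/claude/v1/messages.
// Hypothetical helper: the name and signature are illustrative only.
func providerPath(provider, suffix string) string {
	// PathEscape keeps an unusual provider name from breaking the path.
	return "/api/provider/" + url.PathEscape(provider) + "/" + suffix
}

func main() {
	// messages-style backend
	fmt.Println(providerPath("claude", "v1/messages"))
	// chat-completions backend
	fmt.Println(providerPath("openai", "v1/chat/completions"))
}
```

As the README text notes, choosing one of these paths selects the protocol surface only. Which backend actually runs the request still depends on how the model name or alias in the request body resolves.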

@@ -96,6 +96,7 @@ max-retry-interval: 30
 quota-exceeded:
   switch-project: true # Whether to automatically switch to another project when a quota is exceeded
   switch-preview-model: true # Whether to automatically switch to a preview model when a quota is exceeded
+  antigravity-credits: true # Whether to retry Antigravity quota_exhausted 429s once with enabledCreditTypes=["GOOGLE_ONE_AI"]

 # Routing strategy for selecting credentials when multiple match.
 routing:
@@ -177,6 +178,8 @@ nonstream-keepalive-interval: 0
 # - "API"
 # - "proxy"
 # cache-user-id: true # optional: default is false; set true to reuse cached user_id per API key instead of generating a random one each request
+# experimental-cch-signing: false # optional: default is false; when true, sign the final /v1/messages body using the current Claude Code cch algorithm
+# # keep this disabled unless you explicitly need the behavior, so upstream seed changes fall back to legacy proxy behavior

 # Default headers for Claude API requests. Update when Claude Code releases new versions.
 # In legacy mode, user-agent/package-version/runtime-version/timeout are used as fallbacks

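The `cache-user-id` comment in the config hunk above describes two behaviors: generate a random `user_id` on every request (the default), or reuse one cached value per API key. A minimal sketch of that distinction follows. The type `userIDSource`, its methods, and the 16-byte hex ID format are assumptions for illustration. The project's real ID format and cache are not shown in this diff.

```go
package main

import (
	"crypto/rand"
	"encoding/hex"
	"fmt"
	"sync"
)

// userIDSource is a hypothetical stand-in for the cache-user-id behavior.
type userIDSource struct {
	mu    sync.Mutex
	cache map[string]string
	reuse bool // mirrors cache-user-id: true
}

func newUserIDSource(reuse bool) *userIDSource {
	return &userIDSource{cache: make(map[string]string), reuse: reuse}
}

// randomID returns 16 random bytes as hex (an assumed format).
func randomID() string {
	b := make([]byte, 16)
	if _, err := rand.Read(b); err != nil {
		panic(err)
	}
	return hex.EncodeToString(b)
}

// For returns the user ID to send for the given API key.
func (s *userIDSource) For(apiKey string) string {
	if !s.reuse {
		return randomID() // default: a fresh ID per request
	}
	s.mu.Lock()
	defer s.mu.Unlock()
	if id, ok := s.cache[apiKey]; ok {
		return id
	}
	id := randomID()
	s.cache[apiKey] = id
	return id
}

func main() {
	cached := newUserIDSource(true)
	fmt.Println(cached.For("key-a") == cached.For("key-a")) // same key, same ID
}
```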

1

go.mod


@@ -83,6 +83,7 @@ require (
 	github.com/muesli/cancelreader v0.2.2 // indirect
 	github.com/muesli/termenv v0.16.0 // indirect
 	github.com/pelletier/go-toml/v2 v2.2.2 // indirect
+	github.com/pierrec/xxHash v0.1.5
 	github.com/pjbgf/sha1cd v0.5.0 // indirect
 	github.com/rivo/uniseg v0.4.7 // indirect
 	github.com/rs/xid v1.5.0 // indirect


2

go.sum


@@ -154,6 +154,8 @@ github.com/muesli/termenv v0.16.0 h1:S5AlUN9dENB57rsbnkPyfdGuWIlkmzJjbFf0Tf5FWUc
 github.com/muesli/termenv v0.16.0/go.mod h1:ZRfOIKPFDYQoDFF4Olj7/QJbW60Ol/kL1pU3VfY/Cnk=
 github.com/pelletier/go-toml/v2 v2.2.2 h1:aYUidT7k73Pcl9nb2gScu7NSrKCSHIDE89b3+6Wq+LM=
 github.com/pelletier/go-toml/v2 v2.2.2/go.mod h1:1t835xjRzz80PqgE6HHgN2JOsmgYu/h4qDAS4n929Rs=
+github.com/pierrec/xxHash v0.1.5 h1:n/jBpwTHiER4xYvK3/CdPVnLDPchj8eTJFFLUb4QHBo=
+github.com/pierrec/xxHash v0.1.5/go.mod h1:w2waW5Zoa/Wc4Yqe0wgrIYAGKqRMf7czn2HNKXmuL+I=
 github.com/pjbgf/sha1cd v0.5.0 h1:a+UkboSi1znleCDUNT3M5YxjOnN1fz2FhN48FlwCxs0=
 github.com/pjbgf/sha1cd v0.5.0/go.mod h1:lhpGlyHLpQZoxMv8HcgXvZEhcGs0PG/vsZnEJ7H0iCM=
 github.com/pkg/browser v0.0.0-20240102092130-5ac0b6a4141c h1:+mdjkGKdHQG3305AYmdv1U2eRNDiU2ErMBj1gwrq8eQ=


@@ -1047,6 +1047,7 @@ func (h *Handler) buildAuthFromFileData(path string, data []byte) (*coreauth.Aut
 			auth.Runtime = existing.Runtime
 		}
 	}
+	coreauth.ApplyCustomHeadersFromMetadata(auth)
 	return auth, nil
 }


@@ -1129,7 +1130,7 @@ func (h *Handler) PatchAuthFileStatus(c *gin.Context) {
 	c.JSON(http.StatusOK, gin.H{"status": "ok", "disabled": *req.Disabled})
 }

-// PatchAuthFileFields updates editable fields (prefix, proxy_url, priority, note) of an auth file.
+// PatchAuthFileFields updates editable fields (prefix, proxy_url, headers, priority, note) of an auth file.
 func (h *Handler) PatchAuthFileFields(c *gin.Context) {
 	if h.authManager == nil {
 		c.JSON(http.StatusServiceUnavailable, gin.H{"error": "core auth manager unavailable"})

@@ -1137,11 +1138,12 @@ func (h *Handler) PatchAuthFileFields(c *gin.Context) {
 	}

 	var req struct {
-		Name     string  `json:"name"`
-		Prefix   *string `json:"prefix"`
-		ProxyURL *string `json:"proxy_url"`
-		Priority *int    `json:"priority"`
-		Note     *string `json:"note"`
+		Name     string            `json:"name"`
+		Prefix   *string           `json:"prefix"`
+		ProxyURL *string           `json:"proxy_url"`
+		Headers  map[string]string `json:"headers"`
+		Priority *int              `json:"priority"`
+		Note     *string           `json:"note"`
 	}
 	if err := c.ShouldBindJSON(&req); err != nil {
 		c.JSON(http.StatusBadRequest, gin.H{"error": "invalid request body"})

@@ -1177,13 +1179,107 @@ func (h *Handler) PatchAuthFileFields(c *gin.Context) {

 	changed := false
 	if req.Prefix != nil {
-		targetAuth.Prefix = *req.Prefix
+		prefix := strings.TrimSpace(*req.Prefix)
+		targetAuth.Prefix = prefix
+		if targetAuth.Metadata == nil {
+			targetAuth.Metadata = make(map[string]any)
+		}
+		if prefix == "" {
+			delete(targetAuth.Metadata, "prefix")
+		} else {
+			targetAuth.Metadata["prefix"] = prefix
+		}
 		changed = true
 	}
 	if req.ProxyURL != nil {
-		targetAuth.ProxyURL = *req.ProxyURL
+		proxyURL := strings.TrimSpace(*req.ProxyURL)
+		targetAuth.ProxyURL = proxyURL
+		if targetAuth.Metadata == nil {
+			targetAuth.Metadata = make(map[string]any)
+		}
+		if proxyURL == "" {
+			delete(targetAuth.Metadata, "proxy_url")
+		} else {
+			targetAuth.Metadata["proxy_url"] = proxyURL
+		}
 		changed = true
 	}
+	if len(req.Headers) > 0 {
+		existingHeaders := coreauth.ExtractCustomHeadersFromMetadata(targetAuth.Metadata)
+		nextHeaders := make(map[string]string, len(existingHeaders))
+		for k, v := range existingHeaders {
+			nextHeaders[k] = v
+		}
+		headerChanged := false
+
+		for key, value := range req.Headers {
+			name := strings.TrimSpace(key)
+			if name == "" {
+				continue
+			}
+			val := strings.TrimSpace(value)
+			attrKey := "header:" + name
+			if val == "" {
+				if _, ok := nextHeaders[name]; ok {
+					delete(nextHeaders, name)
+					headerChanged = true
+				}
+				if targetAuth.Attributes != nil {
+					if _, ok := targetAuth.Attributes[attrKey]; ok {
+						headerChanged = true
+					}
+				}
+				continue
+			}
+			if prev, ok := nextHeaders[name]; !ok || prev != val {
+				headerChanged = true
+			}
+			nextHeaders[name] = val
+			if targetAuth.Attributes != nil {
+				if prev, ok := targetAuth.Attributes[attrKey]; !ok || prev != val {
+					headerChanged = true
+				}
+			} else {
+				headerChanged = true
+			}
+		}
+
+		if headerChanged {
+			if targetAuth.Metadata == nil {
+				targetAuth.Metadata = make(map[string]any)
+			}
+			if targetAuth.Attributes == nil {
+				targetAuth.Attributes = make(map[string]string)
+			}
+
+			for key, value := range req.Headers {
+				name := strings.TrimSpace(key)
+				if name == "" {
+					continue
+				}
+				val := strings.TrimSpace(value)
+				attrKey := "header:" + name
+				if val == "" {
+					delete(nextHeaders, name)
+					delete(targetAuth.Attributes, attrKey)
+					continue
+				}
+				nextHeaders[name] = val
+				targetAuth.Attributes[attrKey] = val
+			}
+
+			if len(nextHeaders) == 0 {
+				delete(targetAuth.Metadata, "headers")
+			} else {
+				metaHeaders := make(map[string]any, len(nextHeaders))
+				for k, v := range nextHeaders {
+					metaHeaders[k] = v
+				}
+				targetAuth.Metadata["headers"] = metaHeaders
+			}
+			changed = true
+		}
+	}
 	if req.Priority != nil || req.Note != nil {
 		if targetAuth.Metadata == nil {
 			targetAuth.Metadata = make(map[string]any)

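The header handling added to `PatchAuthFileFields` follows a merge rule: names and values are trimmed, an empty name is ignored, an empty value deletes the header, and any other value sets or overwrites it. The sketch below isolates that rule over plain maps. It is a simplified illustration: the function name `mergeHeaders` is hypothetical, and the real handler additionally mirrors each header into `Attributes` under a `header:` prefix and into `Metadata["headers"]`.

```go
package main

import (
	"fmt"
	"strings"
)

// mergeHeaders applies patch on top of a copy of existing and reports
// whether anything changed. Hypothetical helper for illustration only.
func mergeHeaders(existing, patch map[string]string) (map[string]string, bool) {
	next := make(map[string]string, len(existing))
	for k, v := range existing {
		next[k] = v
	}
	changed := false
	for key, value := range patch {
		name := strings.TrimSpace(key)
		if name == "" {
			continue // blank header names are ignored
		}
		val := strings.TrimSpace(value)
		if val == "" {
			// An empty (or whitespace-only) value deletes the header.
			if _, ok := next[name]; ok {
				delete(next, name)
				changed = true
			}
			continue
		}
		if prev, ok := next[name]; !ok || prev != val {
			changed = true
		}
		next[name] = val
	}
	return next, changed
}

func main() {
	existing := map[string]string{"X-Old": "old", "X-Remove": "gone"}
	patch := map[string]string{"X-Old": "new", "X-New": "v", "X-Remove": " ", "X-Nope": ""}
	next, changed := mergeHeaders(existing, patch)
	fmt.Println(next, changed)
}
```

Copying `existing` before applying the patch keeps the caller's map untouched, which matches the handler's approach of building `nextHeaders` first and writing back only when something changed.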

164

internal/api/handlers/management/auth_files_patch_fields_test.go

Normal file


@@ -0,0 +1,164 @@
+package management
+
+import (
+	"context"
+	"encoding/json"
+	"net/http"
+	"net/http/httptest"
+	"strings"
+	"testing"
+
+	"github.com/gin-gonic/gin"
+	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"
+	coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
+)
+
+func TestPatchAuthFileFields_MergeHeadersAndDeleteEmptyValues(t *testing.T) {
+	t.Setenv("MANAGEMENT_PASSWORD", "")
+	gin.SetMode(gin.TestMode)
+
+	store := &memoryAuthStore{}
+	manager := coreauth.NewManager(store, nil, nil)
+	record := &coreauth.Auth{
+		ID:       "test.json",
+		FileName: "test.json",
+		Provider: "claude",
+		Attributes: map[string]string{
+			"path":            "/tmp/test.json",
+			"header:X-Old":    "old",
+			"header:X-Remove": "gone",
+		},
+		Metadata: map[string]any{
+			"type": "claude",
+			"headers": map[string]any{
+				"X-Old":    "old",
+				"X-Remove": "gone",
+			},
+		},
+	}
+	if _, errRegister := manager.Register(context.Background(), record); errRegister != nil {
+		t.Fatalf("failed to register auth record: %v", errRegister)
+	}
+
+	h := NewHandlerWithoutConfigFilePath(&config.Config{AuthDir: t.TempDir()}, manager)
+
+	body := `{"name":"test.json","prefix":"p1","proxy_url":"http://proxy.local","headers":{"X-Old":"new","X-New":"v","X-Remove":" ","X-Nope":""}}`
+	rec := httptest.NewRecorder()
+	ctx, _ := gin.CreateTestContext(rec)
+	req := httptest.NewRequest(http.MethodPatch, "/v0/management/auth-files/fields", strings.NewReader(body))
+	req.Header.Set("Content-Type", "application/json")
+	ctx.Request = req
+	h.PatchAuthFileFields(ctx)
+
+	if rec.Code != http.StatusOK {
+		t.Fatalf("expected status %d, got %d with body %s", http.StatusOK, rec.Code, rec.Body.String())
+	}
+
+	updated, ok := manager.GetByID("test.json")
+	if !ok || updated == nil {
+		t.Fatalf("expected auth record to exist after patch")
+	}
+
+	if updated.Prefix != "p1" {
+		t.Fatalf("prefix = %q, want %q", updated.Prefix, "p1")
+	}
+	if updated.ProxyURL != "http://proxy.local" {
+		t.Fatalf("proxy_url = %q, want %q", updated.ProxyURL, "http://proxy.local")
+	}
+
+	if updated.Metadata == nil {
+		t.Fatalf("expected metadata to be non-nil")
+	}
+	if got, _ := updated.Metadata["prefix"].(string); got != "p1" {
+		t.Fatalf("metadata.prefix = %q, want %q", got, "p1")
+	}
+	if got, _ := updated.Metadata["proxy_url"].(string); got != "http://proxy.local" {
+		t.Fatalf("metadata.proxy_url = %q, want %q", got, "http://proxy.local")
+	}
+
+	headersMeta, ok := updated.Metadata["headers"].(map[string]any)
+	if !ok {
+		raw, _ := json.Marshal(updated.Metadata["headers"])
+		t.Fatalf("metadata.headers = %T (%s), want map[string]any", updated.Metadata["headers"], string(raw))
+	}
+	if got := headersMeta["X-Old"]; got != "new" {
+		t.Fatalf("metadata.headers.X-Old = %#v, want %q", got, "new")
+	}
+	if got := headersMeta["X-New"]; got != "v" {
+		t.Fatalf("metadata.headers.X-New = %#v, want %q", got, "v")
+	}
+	if _, ok := headersMeta["X-Remove"]; ok {
+		t.Fatalf("expected metadata.headers.X-Remove to be deleted")
+	}
+	if _, ok := headersMeta["X-Nope"]; ok {
+		t.Fatalf("expected metadata.headers.X-Nope to be absent")
+	}
+
+	if got := updated.Attributes["header:X-Old"]; got != "new" {
+		t.Fatalf("attrs header:X-Old = %q, want %q", got, "new")
+	}
+	if got := updated.Attributes["header:X-New"]; got != "v" {
+		t.Fatalf("attrs header:X-New = %q, want %q", got, "v")
+	}
+	if _, ok := updated.Attributes["header:X-Remove"]; ok {
+		t.Fatalf("expected attrs header:X-Remove to be deleted")
+	}
+	if _, ok := updated.Attributes["header:X-Nope"]; ok {
+		t.Fatalf("expected attrs header:X-Nope to be absent")
+	}
+}
+
+func TestPatchAuthFileFields_HeadersEmptyMapIsNoop(t *testing.T) {
+	t.Setenv("MANAGEMENT_PASSWORD", "")
+	gin.SetMode(gin.TestMode)
+
+	store := &memoryAuthStore{}
+	manager := coreauth.NewManager(store, nil, nil)
+	record := &coreauth.Auth{
+		ID:       "noop.json",
+		FileName: "noop.json",
+		Provider: "claude",
+		Attributes: map[string]string{
+			"path":         "/tmp/noop.json",
+			"header:X-Kee": "1",
+		},
+		Metadata: map[string]any{
+			"type": "claude",
+			"headers": map[string]any{
+				"X-Kee": "1",
+			},
+		},
+	}
+	if _, errRegister := manager.Register(context.Background(), record); errRegister != nil {
+		t.Fatalf("failed to register auth record: %v", errRegister)
+	}
+
+	h := NewHandlerWithoutConfigFilePath(&config.Config{AuthDir: t.TempDir()}, manager)
+
+	body := `{"name":"noop.json","note":"hello","headers":{}}`
+	rec := httptest.NewRecorder()
+	ctx, _ := gin.CreateTestContext(rec)
+	req := httptest.NewRequest(http.MethodPatch, "/v0/management/auth-files/fields", strings.NewReader(body))
+	req.Header.Set("Content-Type", "application/json")
+	ctx.Request = req
+	h.PatchAuthFileFields(ctx)
+
+	if rec.Code != http.StatusOK {
+		t.Fatalf("expected status %d, got %d with body %s", http.StatusOK, rec.Code, rec.Body.String())
+	}
+
+	updated, ok := manager.GetByID("noop.json")
+	if !ok || updated == nil {
+		t.Fatalf("expected auth record to exist after patch")
+	}
+	if got := updated.Attributes["header:X-Kee"]; got != "1" {
+		t.Fatalf("attrs header:X-Kee = %q, want %q", got, "1")
+	}
+	headersMeta, ok := updated.Metadata["headers"].(map[string]any)
+	if !ok {
+		t.Fatalf("expected metadata.headers to remain a map, got %T", updated.Metadata["headers"])
+	}
+	if got := headersMeta["X-Kee"]; got != "1" {
+		t.Fatalf("metadata.headers.X-Kee = %#v, want %q", got, "1")
+	}
+}
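The tests above exercise the endpoint with a raw JSON string. For a caller, the same request could be assembled roughly as in the sketch below. The route `/v0/management/auth-files/fields` and the JSON field names come from the diff. The type `patchFields`, the helper `newPatchRequest`, and the base URL are assumptions for this example. Management authentication is not shown here because the tests run with `MANAGEMENT_PASSWORD` unset.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"net/http"
)

// patchFields mirrors the request struct in the handler diff.
// Pointer fields stay nil (and are omitted) when the caller does not set them.
type patchFields struct {
	Name     string            `json:"name"`
	Prefix   *string           `json:"prefix,omitempty"`
	ProxyURL *string           `json:"proxy_url,omitempty"`
	Headers  map[string]string `json:"headers,omitempty"`
	Priority *int              `json:"priority,omitempty"`
	Note     *string           `json:"note,omitempty"`
}

// newPatchRequest builds (but does not send) the PATCH request.
// Hypothetical helper; baseURL is a placeholder supplied by the caller.
func newPatchRequest(baseURL string, fields patchFields) (*http.Request, error) {
	body, err := json.Marshal(fields)
	if err != nil {
		return nil, err
	}
	req, err := http.NewRequest(http.MethodPatch, baseURL+"/v0/management/auth-files/fields", bytes.NewReader(body))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/json")
	return req, nil
}

func main() {
	req, err := newPatchRequest("http://localhost:8080", patchFields{
		Name: "test.json",
		// An empty value asks the server to delete that header.
		Headers: map[string]string{"X-Old": "new", "X-Remove": ""},
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(req.Method, req.URL.Path)
}
```

Per the second test, sending `"headers": {}` leaves the stored headers untouched, so a client that wants to clear a header has to name it with an empty value.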

@@ -15,6 +15,8 @@ import (
 )

 const requestBodyOverrideContextKey = "REQUEST_BODY_OVERRIDE"
+const responseBodyOverrideContextKey = "RESPONSE_BODY_OVERRIDE"
+const websocketTimelineOverrideContextKey = "WEBSOCKET_TIMELINE_OVERRIDE"

 // RequestInfo holds essential details of an incoming HTTP request for logging purposes.
 type RequestInfo struct {
@@ -304,6 +306,10 @@ func (w *ResponseWriterWrapper) Finalize(c *gin.Context) error {
 		if len(apiResponse) > 0 {
 			_ = w.streamWriter.WriteAPIResponse(apiResponse)
 		}
+		apiWebsocketTimeline := w.extractAPIWebsocketTimeline(c)
+		if len(apiWebsocketTimeline) > 0 {
+			_ = w.streamWriter.WriteAPIWebsocketTimeline(apiWebsocketTimeline)
+		}
 		if err := w.streamWriter.Close(); err != nil {
 			w.streamWriter = nil
 			return err
@@ -312,7 +318,7 @@ func (w *ResponseWriterWrapper) Finalize(c *gin.Context) error {
 		return nil
 	}

-	return w.logRequest(w.extractRequestBody(c), finalStatusCode, w.cloneHeaders(), w.body.Bytes(), w.extractAPIRequest(c), w.extractAPIResponse(c), w.extractAPIResponseTimestamp(c), slicesAPIResponseError, forceLog)
+	return w.logRequest(w.extractRequestBody(c), finalStatusCode, w.cloneHeaders(), w.extractResponseBody(c), w.extractWebsocketTimeline(c), w.extractAPIRequest(c), w.extractAPIResponse(c), w.extractAPIWebsocketTimeline(c), w.extractAPIResponseTimestamp(c), slicesAPIResponseError, forceLog)
 }

 func (w *ResponseWriterWrapper) cloneHeaders() map[string][]string {
@@ -352,6 +358,18 @@ func (w *ResponseWriterWrapper) extractAPIResponse(c *gin.Context) []byte {
 	return data
 }

+func (w *ResponseWriterWrapper) extractAPIWebsocketTimeline(c *gin.Context) []byte {
+	apiTimeline, isExist := c.Get("API_WEBSOCKET_TIMELINE")
+	if !isExist {
+		return nil
+	}
+	data, ok := apiTimeline.([]byte)
+	if !ok || len(data) == 0 {
+		return nil
+	}
+	return bytes.Clone(data)
+}
+
 func (w *ResponseWriterWrapper) extractAPIResponseTimestamp(c *gin.Context) time.Time {
 	ts, isExist := c.Get("API_RESPONSE_TIMESTAMP")
 	if !isExist {

@@ -364,19 +382,8 @@ func (w *ResponseWriterWrapper) extractAPIResponseTimestamp(c *gin.Context) time
 }

 func (w *ResponseWriterWrapper) extractRequestBody(c *gin.Context) []byte {
-	if c != nil {
-		if bodyOverride, isExist := c.Get(requestBodyOverrideContextKey); isExist {
-			switch value := bodyOverride.(type) {
-			case []byte:
-				if len(value) > 0 {
-					return bytes.Clone(value)
-				}
-			case string:
-				if strings.TrimSpace(value) != "" {
-					return []byte(value)
-				}
-			}
-		}
+	if body := extractBodyOverride(c, requestBodyOverrideContextKey); len(body) > 0 {
+		return body
 	}
 	if w.requestInfo != nil && len(w.requestInfo.Body) > 0 {
 		return w.requestInfo.Body
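The hunks around this point move the request-body override lookup into a shared `extractBodyOverride` helper and reuse it for the response body and the WebSocket timeline. The rule is: a non-empty `[]byte` override is cloned, a non-blank `string` override is converted, and anything else falls through to the captured data. The sketch below restates that rule with a plain `map[string]any` standing in for `*gin.Context`. This substitution is an assumption made to keep the example stdlib-only, and `overrideFrom` and `responseBody` are hypothetical names.

```go
package main

import (
	"bytes"
	"fmt"
	"strings"
)

// overrideFrom returns the override stored under key, or nil when the
// value is absent, empty, blank, or of an unsupported type.
func overrideFrom(values map[string]any, key string) []byte {
	raw, ok := values[key] // reading from a nil map is safe in Go
	if !ok {
		return nil
	}
	switch v := raw.(type) {
	case []byte:
		if len(v) > 0 {
			return bytes.Clone(v) // copy so later writes cannot alias the log data
		}
	case string:
		if strings.TrimSpace(v) != "" {
			return []byte(v)
		}
	}
	return nil
}

// responseBody prefers the override and falls back to the captured body.
func responseBody(values map[string]any, captured []byte) []byte {
	if body := overrideFrom(values, "RESPONSE_BODY_OVERRIDE"); len(body) > 0 {
		return body
	}
	if len(captured) == 0 {
		return nil
	}
	return bytes.Clone(captured)
}

func main() {
	ctx := map[string]any{"RESPONSE_BODY_OVERRIDE": "redacted"}
	fmt.Println(string(responseBody(ctx, []byte("original"))))
	fmt.Println(string(responseBody(nil, []byte("original"))))
}
```

Centralizing the type switch means each new override key needs only a constant and a one-line accessor, which is the shape the diff takes for `extractWebsocketTimeline`.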

@@ -384,13 +391,48 @@ func (w *ResponseWriterWrapper) extractRequestBody(c *gin.Context) []byte {
 	return nil
 }
 
-func (w *ResponseWriterWrapper) logRequest(requestBody []byte, statusCode int, headers map[string][]string, body []byte, apiRequestBody, apiResponseBody []byte, apiResponseTimestamp time.Time, apiResponseErrors []*interfaces.ErrorMessage, forceLog bool) error {
+func (w *ResponseWriterWrapper) extractResponseBody(c *gin.Context) []byte {
+	if body := extractBodyOverride(c, responseBodyOverrideContextKey); len(body) > 0 {
+		return body
+	}
+	if w.body == nil || w.body.Len() == 0 {
+		return nil
+	}
+	return bytes.Clone(w.body.Bytes())
+}
+
+func (w *ResponseWriterWrapper) extractWebsocketTimeline(c *gin.Context) []byte {
+	return extractBodyOverride(c, websocketTimelineOverrideContextKey)
+}
+
+func extractBodyOverride(c *gin.Context, key string) []byte {
+	if c == nil {
+		return nil
+	}
+	bodyOverride, isExist := c.Get(key)
+	if !isExist {
+		return nil
+	}
+	switch value := bodyOverride.(type) {
+	case []byte:
+		if len(value) > 0 {
+			return bytes.Clone(value)
+		}
+	case string:
+		if strings.TrimSpace(value) != "" {
+			return []byte(value)
+		}
+	}
+	return nil
+}
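
The helper above returns a copy of any `[]byte` override, so callers cannot mutate the value stored in the context. A minimal sketch of that clone-on-read behaviour follows. It is an illustration rather than the project's code: `lookupOverride` is a hypothetical name, and a plain map stands in for `gin.Context` so the example runs with the standard library only.

```go
package main

import (
	"bytes"
	"fmt"
	"strings"
)

// lookupOverride mirrors the shape of extractBodyOverride, but reads from a
// plain map (an assumption made here so the sketch needs no gin dependency).
func lookupOverride(store map[string]any, key string) []byte {
	if store == nil {
		return nil
	}
	raw, ok := store[key]
	if !ok {
		return nil
	}
	switch value := raw.(type) {
	case []byte:
		if len(value) > 0 {
			return bytes.Clone(value) // the caller receives a private copy
		}
	case string:
		if strings.TrimSpace(value) != "" {
			return []byte(value) // converting a string allocates a new slice
		}
	}
	return nil
}

func main() {
	store := map[string]any{"body": []byte("body-override")}
	got := lookupOverride(store, "body")
	got[0] = 'X' // mutate the returned slice only
	fmt.Printf("returned=%s stored=%s\n", got, store["body"])
}
```

Empty byte slices and whitespace-only strings are treated as "no override", which lets the caller fall back to the recorded body.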

|

||||

|

||||

func (w *ResponseWriterWrapper) logRequest(requestBody []byte, statusCode int, headers map[string][]string, body, websocketTimeline, apiRequestBody, apiResponseBody, apiWebsocketTimeline []byte, apiResponseTimestamp time.Time, apiResponseErrors []*interfaces.ErrorMessage, forceLog bool) error {

|

||||

if w.requestInfo == nil {

|

||||

return nil

|

||||

}

|

||||

|

||||

if loggerWithOptions, ok := w.logger.(interface {

|

||||

LogRequestWithOptions(string, string, map[string][]string, []byte, int, map[string][]string, []byte, []byte, []byte, []*interfaces.ErrorMessage, bool, string, time.Time, time.Time) error

|

||||

LogRequestWithOptions(string, string, map[string][]string, []byte, int, map[string][]string, []byte, []byte, []byte, []byte, []byte, []*interfaces.ErrorMessage, bool, string, time.Time, time.Time) error

|

||||

}); ok {

|

||||

return loggerWithOptions.LogRequestWithOptions(

|

||||

w.requestInfo.URL,

|

||||

@@ -400,8 +442,10 @@ func (w *ResponseWriterWrapper) logRequest(requestBody []byte, statusCode int, h

|

||||

statusCode,

|

||||

headers,

|

||||

body,

|

||||

websocketTimeline,

|

||||

apiRequestBody,

|

||||

apiResponseBody,

|

||||

apiWebsocketTimeline,

|

||||

apiResponseErrors,

|

||||

forceLog,

|

||||

w.requestInfo.RequestID,

|

||||

@@ -418,8 +462,10 @@ func (w *ResponseWriterWrapper) logRequest(requestBody []byte, statusCode int, h

|

||||

statusCode,

|

||||

headers,

|

||||

body,

|

||||

websocketTimeline,

|

||||

apiRequestBody,

|

||||

apiResponseBody,

|

||||

apiWebsocketTimeline,

|

||||

apiResponseErrors,

|

||||

w.requestInfo.RequestID,

|

||||

w.requestInfo.Timestamp,

|

||||

|

||||

@@ -1,10 +1,14 @@
 package middleware
 
 import (
+	"bytes"
 	"net/http/httptest"
 	"testing"
+	"time"
 
 	"github.com/gin-gonic/gin"
+	"github.com/router-for-me/CLIProxyAPI/v6/internal/interfaces"
+	"github.com/router-for-me/CLIProxyAPI/v6/internal/logging"
 )
 
 func TestExtractRequestBodyPrefersOverride(t *testing.T) {

|

||||

@@ -33,7 +37,7 @@ func TestExtractRequestBodySupportsStringOverride(t *testing.T) {

|

||||

recorder := httptest.NewRecorder()

|

||||

c, _ := gin.CreateTestContext(recorder)

|

||||

|

||||

wrapper := &ResponseWriterWrapper{}

|

||||

wrapper := &ResponseWriterWrapper{body: &bytes.Buffer{}}

|

||||

c.Set(requestBodyOverrideContextKey, "override-as-string")

|

||||

|

||||

body := wrapper.extractRequestBody(c)

|

||||

@@ -41,3 +45,158 @@ func TestExtractRequestBodySupportsStringOverride(t *testing.T) {

|

||||

t.Fatalf("request body = %q, want %q", string(body), "override-as-string")

|

||||

}

|

||||

}

|

||||

|

||||

func TestExtractResponseBodyPrefersOverride(t *testing.T) {

|

||||

gin.SetMode(gin.TestMode)

|

||||

recorder := httptest.NewRecorder()

|

||||

c, _ := gin.CreateTestContext(recorder)

|

||||

|

||||

wrapper := &ResponseWriterWrapper{body: &bytes.Buffer{}}

|

||||

wrapper.body.WriteString("original-response")

|

||||

|

||||

body := wrapper.extractResponseBody(c)

|

||||

if string(body) != "original-response" {

|

||||

t.Fatalf("response body = %q, want %q", string(body), "original-response")

|

||||

}

|

||||

|

||||

c.Set(responseBodyOverrideContextKey, []byte("override-response"))

|

||||

body = wrapper.extractResponseBody(c)

|

||||

if string(body) != "override-response" {

|

||||

t.Fatalf("response body = %q, want %q", string(body), "override-response")

|

||||

}

|

||||

|

||||

body[0] = 'X'

|

||||

if got := wrapper.extractResponseBody(c); string(got) != "override-response" {

|

||||

t.Fatalf("response override should be cloned, got %q", string(got))

|

||||

}

|

||||

}

|

||||

|

||||

func TestExtractResponseBodySupportsStringOverride(t *testing.T) {

|

||||

gin.SetMode(gin.TestMode)

|

||||

recorder := httptest.NewRecorder()

|

||||

c, _ := gin.CreateTestContext(recorder)

|

||||

|

||||

wrapper := &ResponseWriterWrapper{}

|

||||

c.Set(responseBodyOverrideContextKey, "override-response-as-string")

|

||||

|

||||

body := wrapper.extractResponseBody(c)

|

||||

if string(body) != "override-response-as-string" {

|

||||

t.Fatalf("response body = %q, want %q", string(body), "override-response-as-string")

|

||||

}

|

||||

}

|

||||

|

||||

func TestExtractBodyOverrideClonesBytes(t *testing.T) {

|

||||

gin.SetMode(gin.TestMode)

|

||||

recorder := httptest.NewRecorder()

|

||||

c, _ := gin.CreateTestContext(recorder)

|

||||

|

||||

override := []byte("body-override")

|

||||

c.Set(requestBodyOverrideContextKey, override)

|

||||

|

||||

body := extractBodyOverride(c, requestBodyOverrideContextKey)

|

||||

if !bytes.Equal(body, override) {

|

||||

t.Fatalf("body override = %q, want %q", string(body), string(override))

|

||||

}

|

||||

|

||||

body[0] = 'X'

|

||||

if !bytes.Equal(override, []byte("body-override")) {

|

||||

t.Fatalf("override mutated: %q", string(override))

|

||||

}

|

||||

}

|

||||

|

||||

func TestExtractWebsocketTimelineUsesOverride(t *testing.T) {

|

||||

gin.SetMode(gin.TestMode)

|

||||

recorder := httptest.NewRecorder()

|

||||

c, _ := gin.CreateTestContext(recorder)

|

||||

|

||||

wrapper := &ResponseWriterWrapper{}

|

||||

if got := wrapper.extractWebsocketTimeline(c); got != nil {

|

||||

t.Fatalf("expected nil websocket timeline, got %q", string(got))

|

||||

}

|

||||

|

||||

c.Set(websocketTimelineOverrideContextKey, []byte("timeline"))

|

||||

body := wrapper.extractWebsocketTimeline(c)

|

||||

if string(body) != "timeline" {

|

||||

t.Fatalf("websocket timeline = %q, want %q", string(body), "timeline")

|

||||

}

|

||||

}

|

||||

|

||||

func TestFinalizeStreamingWritesAPIWebsocketTimeline(t *testing.T) {

|

||||

gin.SetMode(gin.TestMode)

|

||||

recorder := httptest.NewRecorder()

|

||||

c, _ := gin.CreateTestContext(recorder)

|

||||

|

||||

streamWriter := &testStreamingLogWriter{}

|

||||

wrapper := &ResponseWriterWrapper{

|

||||

ResponseWriter: c.Writer,

|

||||

logger: &testRequestLogger{enabled: true},

|

||||

requestInfo: &RequestInfo{

|

||||

URL: "/v1/responses",

|

||||

Method: "POST",

|

||||

Headers: map[string][]string{"Content-Type": {"application/json"}},

|

||||

RequestID: "req-1",

|

||||

Timestamp: time.Date(2026, time.April, 1, 12, 0, 0, 0, time.UTC),

|

||||

},

|

||||

isStreaming: true,

|

||||

streamWriter: streamWriter,

|

||||

}

|

||||

|

||||

c.Set("API_WEBSOCKET_TIMELINE", []byte("Timestamp: 2026-04-01T12:00:00Z\nEvent: api.websocket.request\n{}"))

|

||||

|

||||

if err := wrapper.Finalize(c); err != nil {

|

||||

t.Fatalf("Finalize error: %v", err)

|

||||

}

|

||||

if string(streamWriter.apiWebsocketTimeline) != "Timestamp: 2026-04-01T12:00:00Z\nEvent: api.websocket.request\n{}" {

|

||||

t.Fatalf("stream writer websocket timeline = %q", string(streamWriter.apiWebsocketTimeline))

|

||||

}

|

||||

if !streamWriter.closed {

|

||||

t.Fatal("expected stream writer to be closed")

|

||||

}

|

||||

}

|

||||

|

||||

type testRequestLogger struct {

|

||||

enabled bool

|

||||

}

|

||||

|

||||

func (l *testRequestLogger) LogRequest(string, string, map[string][]string, []byte, int, map[string][]string, []byte, []byte, []byte, []byte, []byte, []*interfaces.ErrorMessage, string, time.Time, time.Time) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

func (l *testRequestLogger) LogStreamingRequest(string, string, map[string][]string, []byte, string) (logging.StreamingLogWriter, error) {

|

||||

return &testStreamingLogWriter{}, nil

|

||||

}

|

||||

|

||||

func (l *testRequestLogger) IsEnabled() bool {

|

||||

return l.enabled

|

||||

}

|

||||

|

||||

type testStreamingLogWriter struct {

|

||||

apiWebsocketTimeline []byte

|

||||

closed bool

|

||||

}

|

||||

|

||||

func (w *testStreamingLogWriter) WriteChunkAsync([]byte) {}

|

||||

|

||||

func (w *testStreamingLogWriter) WriteStatus(int, map[string][]string) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

func (w *testStreamingLogWriter) WriteAPIRequest([]byte) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

func (w *testStreamingLogWriter) WriteAPIResponse([]byte) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

func (w *testStreamingLogWriter) WriteAPIWebsocketTimeline(apiWebsocketTimeline []byte) error {

|

||||

w.apiWebsocketTimeline = bytes.Clone(apiWebsocketTimeline)

|

||||

return nil

|

||||

}

|

||||

|

||||

func (w *testStreamingLogWriter) SetFirstChunkTimestamp(time.Time) {}

|

||||

|

||||

func (w *testStreamingLogWriter) Close() error {

|

||||

w.closed = true

|

||||

return nil

|

||||

}

|

||||

|

||||

@@ -123,6 +123,10 @@ func (fh *FallbackHandler) WrapHandler(handler gin.HandlerFunc) gin.HandlerFunc

|

||||

return

|

||||

}

|

||||

|

||||

// Sanitize request body: remove thinking blocks with invalid signatures

|

||||

// to prevent upstream API 400 errors

|

||||

bodyBytes = SanitizeAmpRequestBody(bodyBytes)

|

||||

|

||||

// Restore the body for the handler to read

|

||||

c.Request.Body = io.NopCloser(bytes.NewReader(bodyBytes))

|

||||

|

||||

@@ -249,6 +253,7 @@ func (fh *FallbackHandler) WrapHandler(handler gin.HandlerFunc) gin.HandlerFunc

|

||||

log.Debugf("amp model mapping: request %s -> %s", normalizedModel, resolvedModel)

|

||||

logAmpRouting(RouteTypeModelMapping, modelName, resolvedModel, providerName, requestPath)

|

||||

rewriter := NewResponseRewriter(c.Writer, modelName)

|

||||

rewriter.suppressThinking = true

|

||||

c.Writer = rewriter

|

||||

// Filter Anthropic-Beta header only for local handling paths

|

||||

filterAntropicBetaHeader(c)

|

||||

@@ -259,10 +264,17 @@ func (fh *FallbackHandler) WrapHandler(handler gin.HandlerFunc) gin.HandlerFunc

|

||||

} else if len(providers) > 0 {

|

||||

// Log: Using local provider (free)

|

||||

logAmpRouting(RouteTypeLocalProvider, modelName, resolvedModel, providerName, requestPath)

|

||||

// Wrap with ResponseRewriter for local providers too, because upstream

|

||||

// proxies (e.g. NewAPI) may return a different model name and lack

|

||||

// Amp-required fields like thinking.signature.

|

||||

rewriter := NewResponseRewriter(c.Writer, modelName)

|

||||

rewriter.suppressThinking = providerName != "claude"

|

||||

c.Writer = rewriter

|

||||

// Filter Anthropic-Beta header only for local handling paths

|

||||

filterAntropicBetaHeader(c)

|

||||

c.Request.Body = io.NopCloser(bytes.NewReader(bodyBytes))

|

||||

handler(c)

|

||||

rewriter.Flush()

|

||||

} else {

|

||||

// No provider, no mapping, no proxy: fall back to the wrapped handler so it can return an error response

|

||||

c.Request.Body = io.NopCloser(bytes.NewReader(bodyBytes))

|

||||

|

||||

@@ -2,6 +2,7 @@ package amp

|

||||

|

||||

import (

|

||||

"bytes"

|

||||

"fmt"

|

||||

"net/http"

|

||||

"strings"

|

||||

|

||||

@@ -12,15 +13,17 @@ import (

|

||||

)

|

||||

|

||||

// ResponseRewriter wraps a gin.ResponseWriter to intercept and modify the response body

|

||||

// It's used to rewrite model names in responses when model mapping is used

|

||||

// It is used to rewrite model names in responses when model mapping is used

|

||||

// and to keep Amp-compatible response shapes.

|

||||

type ResponseRewriter struct {

|

||||

gin.ResponseWriter

|

||||

body *bytes.Buffer

|

||||

originalModel string

|

||||

isStreaming bool

|

||||

body *bytes.Buffer

|

||||

originalModel string

|

||||

isStreaming bool

|

||||

suppressThinking bool

|

||||

}

|

||||

|

||||

// NewResponseRewriter creates a new response rewriter for model name substitution

|

||||

// NewResponseRewriter creates a new response rewriter for model name substitution.

|

||||

func NewResponseRewriter(w gin.ResponseWriter, originalModel string) *ResponseRewriter {

|

||||

return &ResponseRewriter{

|

||||

ResponseWriter: w,

|

||||

@@ -33,15 +36,15 @@ const maxBufferedResponseBytes = 2 * 1024 * 1024 // 2MB safety cap
 
 func looksLikeSSEChunk(data []byte) bool {
 	// Fallback detection: some upstreams may omit/lie about Content-Type, causing SSE to be buffered.
-	// Heuristics are intentionally simple and cheap.
-	return bytes.Contains(data, []byte("data:")) ||
-		bytes.Contains(data, []byte("event:")) ||
-		bytes.Contains(data, []byte("message_start")) ||
-		bytes.Contains(data, []byte("message_delta")) ||
-		bytes.Contains(data, []byte("content_block_start")) ||
-		bytes.Contains(data, []byte("content_block_delta")) ||
-		bytes.Contains(data, []byte("content_block_stop")) ||
-		bytes.Contains(data, []byte("\n\n"))
+	// We conservatively detect SSE by checking for "data:" / "event:" at the start of any line.
+	for _, line := range bytes.Split(data, []byte("\n")) {
+		trimmed := bytes.TrimSpace(line)
+		if bytes.HasPrefix(trimmed, []byte("data:")) ||
+			bytes.HasPrefix(trimmed, []byte("event:")) {
+			return true
+		}
+	}
+	return false
 }
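
To see why the detection moved from substring matching to a per-line prefix check, the sketch below compares the two approaches on an ordinary JSON body that happens to contain `data:` and a blank line. Both function names are hypothetical, and `containsAnywhere` reproduces only three of the removed substring checks.

```go
package main

import (
	"bytes"
	"fmt"
)

// containsAnywhere approximates the removed heuristic: a match anywhere in
// the payload counts as SSE.
func containsAnywhere(data []byte) bool {
	return bytes.Contains(data, []byte("data:")) ||
		bytes.Contains(data, []byte("event:")) ||
		bytes.Contains(data, []byte("\n\n"))
}

// prefixPerLine approximates the replacement: a field name must start a line.
func prefixPerLine(data []byte) bool {
	for _, line := range bytes.Split(data, []byte("\n")) {
		trimmed := bytes.TrimSpace(line)
		if bytes.HasPrefix(trimmed, []byte("data:")) ||
			bytes.HasPrefix(trimmed, []byte("event:")) {
			return true
		}
	}
	return false
}

func main() {
	jsonBody := []byte("{\"text\": \"see data: below\"}\n\n")
	sse := []byte("event: message_start\ndata: {}\n\n")
	fmt.Println(containsAnywhere(jsonBody), prefixPerLine(jsonBody))
	fmt.Println(containsAnywhere(sse), prefixPerLine(sse))
}
```

The substring version flags the JSON body as a stream, while the prefix version accepts only the real event stream.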

|

||||

|

||||

func (rw *ResponseRewriter) enableStreaming(reason string) error {

|

||||

@@ -95,7 +98,8 @@ func (rw *ResponseRewriter) Write(data []byte) (int, error) {

|

||||

}

|

||||

|

||||

if rw.isStreaming {

|

||||

n, err := rw.ResponseWriter.Write(rw.rewriteStreamChunk(data))

|

||||

rewritten := rw.rewriteStreamChunk(data)

|

||||

n, err := rw.ResponseWriter.Write(rewritten)

|

||||

if err == nil {

|

||||

if flusher, ok := rw.ResponseWriter.(http.Flusher); ok {

|

||||

flusher.Flush()

|

||||

@@ -106,7 +110,6 @@ func (rw *ResponseRewriter) Write(data []byte) (int, error) {

|

||||

return rw.body.Write(data)

|

||||

}

|

||||

|

||||

// Flush writes the buffered response with model names rewritten

|

||||

func (rw *ResponseRewriter) Flush() {

|

||||

if rw.isStreaming {

|

||||

if flusher, ok := rw.ResponseWriter.(http.Flusher); ok {

|

||||

@@ -115,40 +118,79 @@ func (rw *ResponseRewriter) Flush() {

|

||||

return

|

||||

}

|

||||

if rw.body.Len() > 0 {

|

||||

if _, err := rw.ResponseWriter.Write(rw.rewriteModelInResponse(rw.body.Bytes())); err != nil {

|

||||

rewritten := rw.rewriteModelInResponse(rw.body.Bytes())

|

||||

// Update Content-Length to match the rewritten body size, since

|

||||

// signature injection and model name changes alter the payload length.

|

||||

rw.ResponseWriter.Header().Set("Content-Length", fmt.Sprintf("%d", len(rewritten)))

|

||||

if _, err := rw.ResponseWriter.Write(rewritten); err != nil {

|

||||

log.Warnf("amp response rewriter: failed to write rewritten response: %v", err)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// modelFieldPaths lists all JSON paths where model name may appear

|

||||

var modelFieldPaths = []string{"message.model", "model", "modelVersion", "response.model", "response.modelVersion"}

|

||||

|

||||

// rewriteModelInResponse replaces all occurrences of the mapped model with the original model in JSON

|

||||

// It also suppresses "thinking" blocks if "tool_use" is present to ensure Amp client compatibility

|

||||

func (rw *ResponseRewriter) rewriteModelInResponse(data []byte) []byte {

|

||||

// 1. Amp Compatibility: Suppress thinking blocks if tool use is detected

|

||||

// The Amp client struggles when both thinking and tool_use blocks are present

|

||||

// ensureAmpSignature injects empty signature fields into tool_use/thinking blocks

|

||||

// in API responses so that the Amp TUI does not crash on P.signature.length.

|

||||

func ensureAmpSignature(data []byte) []byte {

|

||||

for index, block := range gjson.GetBytes(data, "content").Array() {

|

||||

blockType := block.Get("type").String()

|

||||

if blockType != "tool_use" && blockType != "thinking" {

|

||||

continue

|

||||

}

|

||||

signaturePath := fmt.Sprintf("content.%d.signature", index)

|

||||

if gjson.GetBytes(data, signaturePath).Exists() {

|

||||

continue

|

||||

}

|

||||

var err error

|

||||

data, err = sjson.SetBytes(data, signaturePath, "")

|

||||

if err != nil {

|

||||

log.Warnf("Amp ResponseRewriter: failed to add empty signature to %s block: %v", blockType, err)

|

||||

break

|

||||

}

|

||||

}

|

||||

|

||||

contentBlockType := gjson.GetBytes(data, "content_block.type").String()

|

||||

if (contentBlockType == "tool_use" || contentBlockType == "thinking") && !gjson.GetBytes(data, "content_block.signature").Exists() {

|

||||

var err error

|

||||

data, err = sjson.SetBytes(data, "content_block.signature", "")

|

||||

if err != nil {

|

||||

log.Warnf("Amp ResponseRewriter: failed to add empty signature to streaming %s block: %v", contentBlockType, err)

|

||||

}

|

||||

}

|

||||

|

||||

return data

|

||||

}

|

||||

|

||||

func (rw *ResponseRewriter) suppressAmpThinking(data []byte) []byte {

|

||||

if !rw.suppressThinking {

|

||||

return data

|

||||

}

|

||||

if gjson.GetBytes(data, `content.#(type=="tool_use")`).Exists() {

|

||||

filtered := gjson.GetBytes(data, `content.#(type!="thinking")#`)

|

||||

if filtered.Exists() {

|

||||

originalCount := gjson.GetBytes(data, "content.#").Int()

|

||||

filteredCount := filtered.Get("#").Int()

|

||||

|

||||

if originalCount > filteredCount {

|

||||

var err error

|

||||

data, err = sjson.SetBytes(data, "content", filtered.Value())

|

||||

if err != nil {

|

||||

log.Warnf("Amp ResponseRewriter: failed to suppress thinking blocks: %v", err)

|

||||

} else {

|

||||

log.Debugf("Amp ResponseRewriter: Suppressed %d thinking blocks due to tool usage", originalCount-filteredCount)

|

||||

// Log the result for verification

|

||||

log.Debugf("Amp ResponseRewriter: Resulting content: %s", gjson.GetBytes(data, "content").String())

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

return data

|

||||

}

|

||||

|

||||

func (rw *ResponseRewriter) rewriteModelInResponse(data []byte) []byte {

|

||||

data = ensureAmpSignature(data)

|

||||

data = rw.suppressAmpThinking(data)

|

||||

if len(data) == 0 {

|

||||

return data

|

||||

}

|

||||

|

||||

if rw.originalModel == "" {

|

||||

return data

|

||||

}

|

||||

@@ -160,24 +202,154 @@ func (rw *ResponseRewriter) rewriteModelInResponse(data []byte) []byte {

|

||||

return data

|

||||

}

|

||||

|

||||

// rewriteStreamChunk rewrites model names in SSE stream chunks

|

||||

func (rw *ResponseRewriter) rewriteStreamChunk(chunk []byte) []byte {

|

||||

if rw.originalModel == "" {

|

||||

return chunk

|

||||

lines := bytes.Split(chunk, []byte("\n"))

|

||||

var out [][]byte

|

||||

|

||||

i := 0

|

||||

for i < len(lines) {

|

||||

line := lines[i]

|

||||

trimmed := bytes.TrimSpace(line)

|

||||

|

||||

// Case 1: "event:" line - look ahead for its "data:" line

|

||||

if bytes.HasPrefix(trimmed, []byte("event: ")) {

|

||||

// Scan forward past blank lines to find the data: line

|

||||

dataIdx := -1

|

||||

for j := i + 1; j < len(lines); j++ {

|

||||

t := bytes.TrimSpace(lines[j])

|

||||

if len(t) == 0 {

|

||||

continue

|

||||

}

|

||||

if bytes.HasPrefix(t, []byte("data: ")) {

|

||||

dataIdx = j

|

||||

}

|

||||

break

|

||||

}

|

||||

|

||||

if dataIdx >= 0 {

|

||||

// Found event+data pair - process through rewriter

|

||||

jsonData := bytes.TrimPrefix(bytes.TrimSpace(lines[dataIdx]), []byte("data: "))

|

||||

if len(jsonData) > 0 && jsonData[0] == '{' {

|

||||

rewritten := rw.rewriteStreamEvent(jsonData)

|

||||

if rewritten == nil {

|

||||

i = dataIdx + 1

|

||||

continue

|

||||

}

|

||||

// Emit event line

|

||||

out = append(out, line)

|

||||

// Emit blank lines between event and data

|

||||

for k := i + 1; k < dataIdx; k++ {

|

||||

out = append(out, lines[k])

|

||||

}

|

||||

// Emit rewritten data

|

||||

out = append(out, append([]byte("data: "), rewritten...))

|

||||

i = dataIdx + 1

|

||||

continue

|

||||

}

|

||||

}

|

||||

|

||||

// No data line found (orphan event from cross-chunk split)

|

||||

// Pass it through as-is - the data will arrive in the next chunk

|

||||

out = append(out, line)

|

||||

i++

|

||||

continue

|

||||

}

|

||||

|

||||

// Case 2: standalone "data:" line (no preceding event: in this chunk)

|

||||

if bytes.HasPrefix(trimmed, []byte("data: ")) {

|

||||

jsonData := bytes.TrimPrefix(trimmed, []byte("data: "))

|

||||

if len(jsonData) > 0 && jsonData[0] == '{' {

|

||||

rewritten := rw.rewriteStreamEvent(jsonData)

|

||||

if rewritten != nil {

|

||||

out = append(out, append([]byte("data: "), rewritten...))

|

||||

}

|

||||

i++

|

||||

continue

|

||||

}

|

||||

}

|

||||

|

||||

// Case 3: everything else

|

||||

out = append(out, line)

|

||||

i++

|

||||

}

|

||||

|

||||

// SSE format: "data: {json}\n\n"

|

||||

lines := bytes.Split(chunk, []byte("\n"))

|

||||

for i, line := range lines {

|

||||

if bytes.HasPrefix(line, []byte("data: ")) {

|

||||

jsonData := bytes.TrimPrefix(line, []byte("data: "))

|

||||

if len(jsonData) > 0 && jsonData[0] == '{' {

|

||||

// Rewrite JSON in the data line

|

||||

rewritten := rw.rewriteModelInResponse(jsonData)

|

||||

lines[i] = append([]byte("data: "), rewritten...)

|

||||

return bytes.Join(out, []byte("\n"))

|

||||

}

|

||||

|

||||

// rewriteStreamEvent processes a single JSON event in the SSE stream.

|

||||

// It rewrites model names and ensures signature fields exist.

|

||||

// NOTE: streaming mode does NOT suppress thinking blocks - they are

|

||||

// passed through with signature injection to avoid breaking SSE index

|

||||

// alignment and TUI rendering.

|

||||

func (rw *ResponseRewriter) rewriteStreamEvent(data []byte) []byte {

|

||||

// Inject empty signature where needed

|

||||

data = ensureAmpSignature(data)

|

||||

|

||||

// Rewrite model name

|

||||

if rw.originalModel != "" {

|

||||

for _, path := range modelFieldPaths {

|

||||

if gjson.GetBytes(data, path).Exists() {

|

||||

data, _ = sjson.SetBytes(data, path, rw.originalModel)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

return bytes.Join(lines, []byte("\n"))

|

||||

return data

|

||||

}

|

||||

|

||||

// SanitizeAmpRequestBody removes thinking blocks with empty/missing/invalid signatures

|

||||

// from the messages array in a request body before forwarding to the upstream API.

|

||||

// This prevents 400 errors from the API which requires valid signatures on thinking blocks.

|

||||

func SanitizeAmpRequestBody(body []byte) []byte {

|

||||

messages := gjson.GetBytes(body, "messages")

|

||||

if !messages.Exists() || !messages.IsArray() {

|

||||

return body

|

||||

}

|

||||

|

||||

modified := false

|

||||

for msgIdx, msg := range messages.Array() {

|

||||

if msg.Get("role").String() != "assistant" {

|

||||

continue

|

||||

}

|

||||

content := msg.Get("content")

|

||||

if !content.Exists() || !content.IsArray() {

|

||||

continue

|

||||

}

|

||||

|

||||

var keepBlocks []interface{}

|

||||

removedCount := 0

|

||||

|

||||

for _, block := range content.Array() {

|

||||

blockType := block.Get("type").String()

|

||||

if blockType == "thinking" {

|

||||

sig := block.Get("signature")

|

||||

if !sig.Exists() || sig.Type != gjson.String || strings.TrimSpace(sig.String()) == "" {

|

||||

removedCount++

|

||||

continue

|

||||

}

|

||||

}

|

||||

keepBlocks = append(keepBlocks, block.Value())

|

||||

}

|

||||

|

||||

if removedCount > 0 {

|

||||

contentPath := fmt.Sprintf("messages.%d.content", msgIdx)

|

||||

var err error

|

||||

if len(keepBlocks) == 0 {

|

||||

body, err = sjson.SetBytes(body, contentPath, []interface{}{})

|

||||

} else {

|

||||

body, err = sjson.SetBytes(body, contentPath, keepBlocks)

|

||||

}