mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git

synced 2026-03-27 22:27:28 +00:00

Compare commits

188 Commits

| Author | SHA1 | Date |
|---|---|---|
| | b8b89f34f4 | |
| | 1fa094dac6 | |
| | f55754621f | |
| | ac26e7db43 | |
| | 10b824fcac | |
| | 7dccc7ba2f | |
| | 70c90687fd | |
| | 8144ffd5c8 | |
| | 6b45d311ec | |
| | 7386a70724 | |
| | 1821bf7051 | |
| | d42b5d4e78 | |
| | 1b7447b682 | |
| | 40dee4453a | |
| | 8902e1cccb | |
| | de5fe71478 | |
| | dcfbec2990 | |
| | c95620f90e | |
| | 9613f0b3f9 | |
| | 274f29e26b | |
| | c8e79c3787 | |
| | 8afef43887 | |
| | c1083cbfc6 | |
| | 1e6bc81cfd | |
| | 1a149475e0 | |
| | e5166841db | |
| | 19c52bcb60 | |
| | bb9b2d1758 | |
| | 7fa527193c | |
| | ed0eb51b4d | |
| | 0e4f669c8b | |
| | 76c064c729 | |
| | d2f652f436 | |
| | 6a452a54d5 | |
| | 9e5693e74f | |
| | 528b1a2307 | |
| | 0cc978ec1d | |
| | d312422ab4 | |
| | fee736933b | |
| | 09c92aa0b5 | |
| | 8c67b3ae64 | |
| | 000e4ceb4e | |
| | 5c99846ecf | |
| | d475aaba96 | |
| | 1dc4ecb1b8 | |
| | 1315f710f5 | |
| | 96f55570f7 | |
| | 0906aeca87 | |
| | 7333619f15 | |
| | 97c0487add | |
| | 2db8df8e38 | |
| | a576088d5f | |
| | 66ff916838 | |
| | 7b0453074e | |
| | a000eb523d | |
| | 18a4fedc7f | |
| | 5d6cdccda0 | |
| | 1b7f4ac3e1 | |
| | afc1a5b814 | |
| | 7ed38db54f | |
| | 28c10f4e69 | |
| | 6e12441a3b | |
| | 65c439c18d | |
| | 0ed2d16596 | |
| | db335ac616 | |
| | f3c59165d7 | |
| | e6690cb447 | |
| | 35907416b8 | |
| | e8bb350467 | |
| | 5331d51f27 | |
| | 755ca75879 | |
| | 2398ebad55 | |
| | c1bf298216 | |
| | e005208d76 | |
| | d1df70d02f | |
| | f81acd0760 | |
| | 636da4c932 | |
| | cccb77b552 | |
| | 2bd646ad70 | |
| | 52c1fa025e | |
| | 680105f84d | |
| | f7069e9548 | |
| | 7275e99b41 | |
| | c28b65f849 | |
| | 793840cdb4 | |
| | 8f421de532 | |
| | be2dd60ee7 | |
| | ea3e0b713e | |
| | 8179d5a8a4 | |
| | 6fa7abe434 | |
| | 5135c22cd6 | |
| | 1e27990561 | |
| | e1e9fc43c1 | |
| | b2921518ac | |
| | dd64adbeeb | |
| | 616d41c06a | |
| | e0e337aeb9 | |
| | d52839fced | |
| | 4022e69651 | |
| | 56073ded69 | |
| | 9738a53f49 | |
| | be3f8dbf7e | |
| | 9c6c3612a8 | |
| | 19e1a4447a | |
| | 7c2ad4cda2 | |
| | fb95813fbf | |
| | db63f9b5d6 | |
| | 25f6c4a250 | |
| | b24ae74216 | |
| | 59ad8f40dc | |
| | ff03dc6a2c | |
| | dc7187ca5b | |
| | b1dcff778c | |
| | cef2aeeb08 | |
| | bcd1e8cc34 | |
| | 198b3f4a40 | |
| | 9fee7f488e | |
| | 1b46d39b8b | |
| | c1241a98e2 | |
| | 8d8f5970ee | |
| | f90120f846 | |
| | 0b94d36c4a | |
| | 152c310bb7 | |
| | f6bbca35ab | |
| | c8cee6a209 | |
| | b5701f416b | |
| | 4b1a404fcb | |
| | b93cce5412 | |
| | c6cb24039d | |
| | 5382408489 | |
| | 67669196ed | |
| | 58fd9bf964 | |
| | 7b3dfc67bc | |
| | cdd24052d3 | |
| | 5da0decef6 | |
| | 733fd8edab | |
| | af27f2b8bc | |
| | 2e1925d762 | |
| | 77254bd074 | |
| | 5b6342e6ac | |
| | 3960c93d51 | |
| | 339a81b650 | |
| | 560c020477 | |
| | aec65e3be3 | |
| | f44f0702f8 | |
| | b76b79068f | |
| | 34c8ccb961 | |
| | d08e164af3 | |
| | 8178efaeda | |
| | 86d5db472a | |
| | 020d36f6e8 | |
| | 1db23979e8 | |
| | c3d5dbe96f | |
| | 5484489406 | |
| | 0ac52da460 | |
| | 817cebb321 | |
| | 683f3709d6 | |
| | dbd42a42b2 | |
| | ec24baf757 | |
| | dea3e74d35 | |
| | a6c3042e34 | |
| | 861537c9bd | |
| | 8c92cb0883 | |
| | 89d7be9525 | |
| | 2b79d7f22f | |
| | 2bb686f594 | |
| | 163fe287ce | |
| | 70988d387b | |
| | 52058a1659 | |
| | df5595a0c9 | |
| | ddaa9d2436 | |
| | 7b7b258c38 | |
| | a00f774f5a | |
| | 9daf1ba8b5 | |
| | 76f2359637 | |
| | dcb1c9be8a | |
| | a24f4ace78 | |
| | c631df8c3b | |
| | 54c3eb1b1e | |
| | bb28cd26ad | |
| | 046865461e | |
| | cf74ed2f0c | |
| | c3762328a5 | |
| | e333fbea3d | |
| | efbe36d1d4 | |
| | 8553cfa40e | |
| | 30d5c95b26 | |
| | d1e3195e6f | |

.github/workflows/docker-image.yml (14 lines changed, vendored)

@@ -16,6 +16,10 @@ jobs:
     steps:
       - name: Checkout
         uses: actions/checkout@v4
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - name: Set up Docker Buildx
         uses: docker/setup-buildx-action@v3
       - name: Login to DockerHub
@@ -25,7 +29,7 @@ jobs:
           password: ${{ secrets.DOCKERHUB_TOKEN }}
       - name: Generate Build Metadata
         run: |
-          echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - name: Build and push (amd64)
@@ -47,6 +51,10 @@ jobs:
     steps:
       - name: Checkout
         uses: actions/checkout@v4
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - name: Set up Docker Buildx
         uses: docker/setup-buildx-action@v3
       - name: Login to DockerHub
@@ -56,7 +64,7 @@ jobs:
           password: ${{ secrets.DOCKERHUB_TOKEN }}
       - name: Generate Build Metadata
         run: |
-          echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - name: Build and push (arm64)
@@ -90,7 +98,7 @@ jobs:
           password: ${{ secrets.DOCKERHUB_TOKEN }}
       - name: Generate Build Metadata
         run: |
-          echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - name: Create and push multi-arch manifests

.github/workflows/pr-test-build.yml (4 lines changed, vendored)

@@ -12,6 +12,10 @@ jobs:
     steps:
       - name: Checkout
         uses: actions/checkout@v4
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - name: Set up Go
         uses: actions/setup-go@v5
         with:

.github/workflows/release.yaml (9 lines changed, vendored)

@@ -16,6 +16,10 @@ jobs:
       - uses: actions/checkout@v4
         with:
           fetch-depth: 0
+      - name: Refresh models catalog
+        run: |
+          git fetch --depth 1 https://github.com/router-for-me/models.git main
+          git show FETCH_HEAD:models.json > internal/registry/models/models.json
       - run: git fetch --force --tags
       - uses: actions/setup-go@v4
         with:
@@ -23,15 +27,14 @@ jobs:
           cache: true
       - name: Generate Build Metadata
         run: |
-          VERSION=$(git describe --tags --always --dirty)
-          echo "VERSION=${VERSION}" >> $GITHUB_ENV
+          echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV
           echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV
           echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV
       - uses: goreleaser/goreleaser-action@v4
         with:
           distribution: goreleaser
           version: latest
-          args: release --clean
+          args: release --clean --skip=validate
         env:
           GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
           VERSION: ${{ env.VERSION }}
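The workflow hunks above export `VERSION`, `COMMIT`, and `BUILD_DATE` through `$GITHUB_ENV`, with `VERSION` now taken from `GITHUB_REF_NAME` instead of `git describe`. This diff does not show how the Go binary consumes those values. One common pattern is to inject them at link time with `-ldflags "-X ..."`; the sketch below assumes that pattern, and the variable names `Version`, `Commit`, and `BuildDate` and the helper `versionString` are hypothetical, not taken from the repository.

```go
package main

import "fmt"

// Hypothetical build-metadata variables. A release build could override the
// defaults at link time, for example:
//
//	go build -ldflags "-X main.Version=$VERSION -X main.Commit=$COMMIT -X main.BuildDate=$BUILD_DATE"
//
// The names are assumptions made for this sketch.
var (
	Version   = "dev"
	Commit    = "none"
	BuildDate = "unknown"
)

// versionString formats the three build-metadata values on a single line.
func versionString(version, commit, buildDate string) string {
	return fmt.Sprintf("%s (commit %s, built %s)", version, commit, buildDate)
}

func main() {
	// Without -ldflags overrides this prints the placeholder defaults.
	fmt.Println(versionString(Version, Commit, BuildDate))
}
```

With this layout, a tag-triggered run would report the tag name as the version, whereas the previous `git describe --tags --always --dirty` form could also yield a commit-distance or `-dirty` suffix.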

.gitignore (1 line changed, vendored)

@@ -1,6 +1,7 @@
 # Binaries
 cli-proxy-api
 cliproxy
+/server
 *.exe
 
 

@@ -1,3 +1,5 @@
+version: 2
+
 builds:
   - id: "cli-proxy-api-plus"
     env:
@@ -6,6 +8,7 @@ builds:
       - linux
       - windows
       - darwin
+      - freebsd
     goarch:
       - amd64
       - arm64

@@ -1,13 +1,11 @@
 # CLIProxyAPI Plus
 
-[English](README.md) | 中文
+[English](README.md) | 中文 | [日本語](README_JA.md)
 
 This is the Plus version of [CLIProxyAPI](https://github.com/router-for-me/CLIProxyAPI), which adds support for third-party providers on top of the original.
 
 All third-party provider support is provided by third-party community maintainers; CLIProxyAPI does not provide technical support for it. To get support, contact the corresponding community maintainer.
 
-The mainline features of this Plus version are force-synced with the mainline.
-
 ## Contributing
 
 This project only accepts Pull Requests for third-party provider support. Any Pull Request unrelated to third-party provider support will be rejected.
@@ -16,4 +14,4 @@
 
 ## License
 
 This project is licensed under the MIT License - see the [LICENSE](LICENSE) file for details.

187

README_JA.md

Normal file

187

README_JA.md

Normal file

@@ -0,0 +1,187 @@

|

|||||||

|

# CLI Proxy API

|

||||||

|

|

||||||

|

[English](README.md) | [中文](README_CN.md) | 日本語

|

||||||

|

|

||||||

|

CLI向けのOpenAI/Gemini/Claude/Codex互換APIインターフェースを提供するプロキシサーバーです。

|

||||||

|

|

||||||

|

OAuth経由でOpenAI Codex(GPTモデル)およびClaude Codeもサポートしています。

|

||||||

|

|

||||||

|

ローカルまたはマルチアカウントのCLIアクセスを、OpenAI(Responses含む)/Gemini/Claude互換のクライアントやSDKで利用できます。

|

||||||

|

|

||||||

|

## スポンサー

|

||||||

|

|

||||||

|

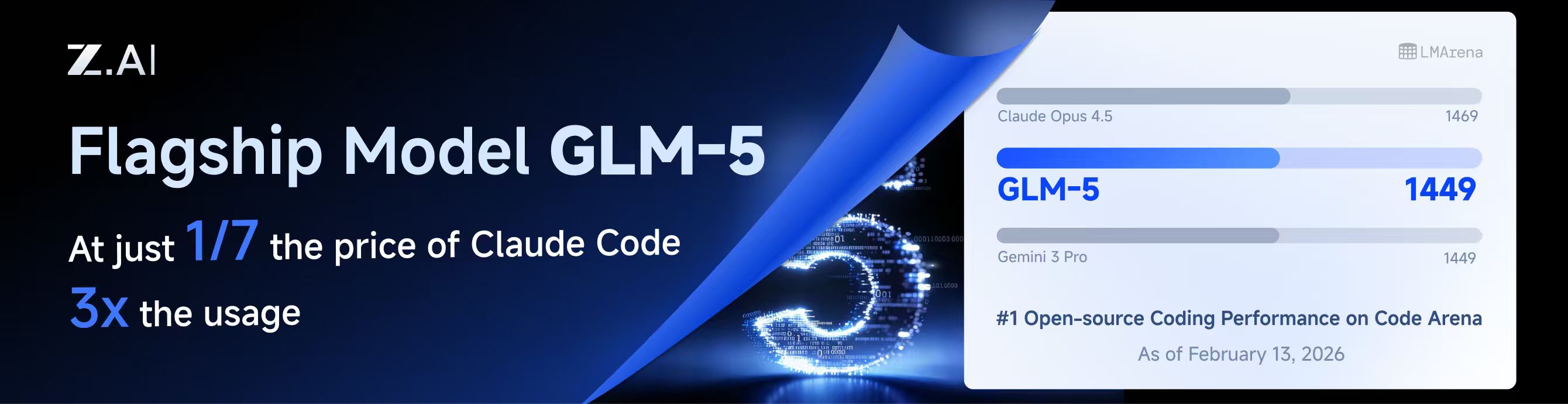

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||||

|

|

||||||

|

本プロジェクトはZ.aiにスポンサーされており、GLM CODING PLANの提供を受けています。

|

||||||

|

|

||||||

|

GLM CODING PLANはAIコーディング向けに設計されたサブスクリプションサービスで、月額わずか$10から利用可能です。フラッグシップのGLM-4.7および(GLM-5はProユーザーのみ利用可能)モデルを10以上の人気AIコーディングツール(Claude Code、Cline、Roo Codeなど)で利用でき、開発者にトップクラスの高速かつ安定したコーディング体験を提供します。

|

||||||

|

|

||||||

|

GLM CODING PLANを10%割引で取得:https://z.ai/subscribe?ic=8JVLJQFSKB

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

<table>

|

||||||

|

<tbody>

|

||||||

|

<tr>

|

||||||

|

<td width="180"><a href="https://www.packyapi.com/register?aff=cliproxyapi"><img src="./assets/packycode.png" alt="PackyCode" width="150"></a></td>

|

||||||

|

<td>PackyCodeのスポンサーシップに感謝します!PackyCodeは信頼性が高く効率的なAPIリレーサービスプロバイダーで、Claude Code、Codex、Geminiなどのリレーサービスを提供しています。PackyCodeは当ソフトウェアのユーザーに特別割引を提供しています:<a href="https://www.packyapi.com/register?aff=cliproxyapi">こちらのリンク</a>から登録し、チャージ時にプロモーションコード「cliproxyapi」を入力すると10%割引になります。</td>

|

||||||

|

</tr>

|

||||||

|

<tr>

|

||||||

|

<td width="180"><a href="https://www.aicodemirror.com/register?invitecode=TJNAIF"><img src="./assets/aicodemirror.png" alt="AICodeMirror" width="150"></a></td>

|

||||||

|

<td>AICodeMirrorのスポンサーシップに感謝します!AICodeMirrorはClaude Code / Codex / Gemini CLI向けの公式高安定性リレーサービスを提供しており、エンタープライズグレードの同時接続、迅速な請求書発行、24時間365日の専任技術サポートを備えています。Claude Code / Codex / Geminiの公式チャネルが元の価格の38% / 2% / 9%で利用でき、チャージ時にはさらに割引があります!CLIProxyAPIユーザー向けの特別特典:<a href="https://www.aicodemirror.com/register?invitecode=TJNAIF">こちらのリンク</a>から登録すると、初回チャージが20%割引になり、エンタープライズのお客様は最大25%割引を受けられます!</td>

|

||||||

|

</tr>

|

||||||

|

<tr>

|

||||||

|

<td width="180"><a href="https://shop.bmoplus.com/?utm_source=github"><img src="./assets/bmoplus.png" alt="BmoPlus" width="150"></a></td>

|

||||||

|

<td>本プロジェクトにご支援いただいた BmoPlus に感謝いたします!BmoPlusは、AIサブスクリプションのヘビーユーザー向けに特化した信頼性の高いAIアカウントサービスプロバイダーであり、安定した ChatGPT Plus / ChatGPT Pro (完全保証) / Claude Pro / Super Grok / Gemini Pro の公式代行チャージおよび即納アカウントを提供しています。こちらの<a href="https://shop.bmoplus.com/?utm_source=github">BmoPlus AIアカウント専門店/代行チャージ</a>経由でご登録・ご注文いただいたユーザー様は、GPTを <b>公式サイト価格の約1割(90% OFF)</b> という驚異的な価格でご利用いただけます!</td>

|

||||||

|

</tr>

|

||||||

|

</tbody>

|

||||||

|

</table>

|

||||||

|

|

||||||

|

## 概要

|

||||||

|

|

||||||

|

- CLIモデル向けのOpenAI/Gemini/Claude互換APIエンドポイント

|

||||||

|

- OAuthログインによるOpenAI Codexサポート(GPTモデル)

|

||||||

|

- OAuthログインによるClaude Codeサポート

|

||||||

|

- OAuthログインによるQwen Codeサポート

|

||||||

|

- OAuthログインによるiFlowサポート

|

||||||

|

- プロバイダールーティングによるAmp CLIおよびIDE拡張機能のサポート

|

||||||

|

- ストリーミングおよび非ストリーミングレスポンス

|

||||||

|

- 関数呼び出し/ツールのサポート

|

||||||

|

- マルチモーダル入力サポート(テキストと画像)

|

||||||

|

- ラウンドロビン負荷分散による複数アカウント対応(Gemini、OpenAI、Claude、QwenおよびiFlow)

|

||||||

|

- シンプルなCLI認証フロー(Gemini、OpenAI、Claude、QwenおよびiFlow)

|

||||||

|

- Generative Language APIキーのサポート

|

||||||

|

- AI Studioビルドのマルチアカウント負荷分散

|

||||||

|

- Gemini CLIのマルチアカウント負荷分散

|

||||||

|

- Claude Codeのマルチアカウント負荷分散

|

||||||

|

- Qwen Codeのマルチアカウント負荷分散

|

||||||

|

- iFlowのマルチアカウント負荷分散

|

||||||

|

- OpenAI Codexのマルチアカウント負荷分散

|

||||||

|

- 設定によるOpenAI互換アップストリームプロバイダー(例:OpenRouter)

|

||||||

|

- プロキシ埋め込み用の再利用可能なGo SDK(`docs/sdk-usage.md`を参照)

|

||||||
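The overview above lists round-robin load balancing across multiple accounts, but this README does not describe how it is implemented. The sketch below only illustrates the general technique: a mutex-guarded cursor that cycles through a fixed list. The type `roundRobin`, its methods, and the account names are hypothetical and are not CLIProxyAPI's actual code.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// roundRobin hands out account identifiers in a fixed rotation.
// It is a hypothetical illustration, not a type from the project.
type roundRobin struct {
	mu       sync.Mutex
	accounts []string
	next     int
}

// newRoundRobin copies the input so later changes to the caller's slice
// do not affect the rotation.
func newRoundRobin(accounts []string) *roundRobin {
	return &roundRobin{accounts: append([]string(nil), accounts...)}
}

// pick returns the next account and advances the cursor, wrapping around
// at the end of the list. It is safe for concurrent use.
func (r *roundRobin) pick() (string, error) {
	r.mu.Lock()
	defer r.mu.Unlock()
	if len(r.accounts) == 0 {
		return "", errors.New("no accounts configured")
	}
	account := r.accounts[r.next]
	r.next = (r.next + 1) % len(r.accounts)
	return account, nil
}

func main() {
	rr := newRoundRobin([]string{"account-a", "account-b", "account-c"})
	for i := 0; i < 4; i++ {
		account, err := rr.pick()
		if err != nil {
			fmt.Println("error:", err)
			return
		}
		fmt.Println(account)
	}
}
```

A real selector would presumably also skip disabled or rate-limited accounts; that logic is omitted here because the source does not describe it.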

+
+## Getting Started
+
+CLIProxyAPI guides: [https://help.router-for.me/](https://help.router-for.me/)
+
+## Management API
+
+See [MANAGEMENT_API.md](https://help.router-for.me/management/api)
+
+## Amp CLI Support
+
+CLIProxyAPI includes integrated support for [Amp CLI](https://ampcode.com) and the Amp IDE extensions, so you can use your Google/ChatGPT/Claude OAuth subscriptions with Amp's coding tools:
+
+- Provider route aliases for Amp's API patterns (`/api/provider/{provider}/v1...`)
+- Management proxy for OAuth authentication and account features
+- Smart model fallback with automatic routing
+- **Model mapping** that routes unavailable models to alternatives (e.g., `claude-opus-4.5` → `claude-sonnet-4`)
+- Security-first design with localhost-only management endpoints
+
+**→ [Complete Amp CLI Integration Guide](https://help.router-for.me/agent-client/amp-cli.html)**
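The Amp CLI section above mentions two mechanisms: provider route aliases of the form `/api/provider/{provider}/v1...` and model mapping for unavailable models. The sketch below shows one way each could work in isolation. The functions `providerFromPath` and `resolveModel`, the example request path, and the maps are all hypothetical; the actual routing and mapping rules are described in the linked integration guide, not here.

```go
package main

import (
	"fmt"
	"strings"
)

// providerFromPath extracts {provider} from a path shaped like
// /api/provider/{provider}/v1... and reports whether the path matched.
func providerFromPath(path string) (string, bool) {
	const prefix = "/api/provider/"
	if !strings.HasPrefix(path, prefix) {
		return "", false
	}
	provider, tail, found := strings.Cut(strings.TrimPrefix(path, prefix), "/")
	if !found || provider == "" || !strings.HasPrefix(tail, "v1") {
		return "", false
	}
	return provider, true
}

// resolveModel returns the requested model if it is available, otherwise the
// mapped alternative if one is configured and available.
func resolveModel(requested string, available map[string]bool, mapping map[string]string) (string, bool) {
	if available[requested] {
		return requested, true
	}
	if alt, ok := mapping[requested]; ok && available[alt] {
		return alt, true
	}
	return "", false
}

func main() {
	// Illustrative values only; the mapping pair mirrors the README example.
	available := map[string]bool{"claude-sonnet-4": true}
	mapping := map[string]string{"claude-opus-4.5": "claude-sonnet-4"}

	provider, ok := providerFromPath("/api/provider/anthropic/v1/messages")
	fmt.Println(provider, ok)

	model, ok := resolveModel("claude-opus-4.5", available, mapping)
	fmt.Println(model, ok)
}
```

Keeping the alias parsing separate from the model lookup means each can be tested on its own, which is the only design point this sketch is meant to make.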

+
+## SDK Docs
+
+- Usage: [docs/sdk-usage.md](docs/sdk-usage.md)
+- Advanced (executors and translators): [docs/sdk-advanced.md](docs/sdk-advanced.md)
+- Access: [docs/sdk-access.md](docs/sdk-access.md)
+- Watcher: [docs/sdk-watcher.md](docs/sdk-watcher.md)
+- Custom provider example: `examples/custom-provider`
+
+## Contributing
+
+Contributions are welcome! Please feel free to submit a Pull Request.
+
+1. Fork the repository
+2. Create your feature branch (`git checkout -b feature/amazing-feature`)
+3. Commit your changes (`git commit -m 'Add some amazing feature'`)
+4. Push to the branch (`git push origin feature/amazing-feature`)
+5. Open a Pull Request
+
+## Related Projects
+
+The following projects are based on CLIProxyAPI:
+
+### [vibeproxy](https://github.com/automazeio/vibeproxy)
+
+A native macOS menu bar app that lets you use your Claude Code and ChatGPT subscriptions with AI coding tools - no API keys needed
+
+### [Subtitle Translator](https://github.com/VjayC/SRT-Subtitle-Translator-Validator)
+
+A browser-based tool that translates SRT subtitles using your Gemini subscription via CLIProxyAPI, with automatic validation and error correction - no API keys needed
+
+### [CCS (Claude Code Switch)](https://github.com/kaitranntt/ccs)
+
+A CLI wrapper for instantly switching between multiple Claude accounts and alternative models (Gemini, Codex, Antigravity) using CLIProxyAPI OAuth - no API keys needed
+
+### [ProxyPal](https://github.com/heyhuynhgiabuu/proxypal)
+
+A native macOS GUI for managing CLIProxyAPI: configure providers, model mappings, and endpoints via OAuth - no API keys needed
+
+### [Quotio](https://github.com/nguyenphutrong/quotio)
+
+A native macOS menu bar app that unifies Claude, Gemini, OpenAI, Qwen, and Antigravity subscriptions with real-time quota tracking and smart auto-failover, for coding tools such as Claude Code, OpenCode, and Droid - no API keys needed
+
+### [CodMate](https://github.com/loocor/CodMate)
+
+A native macOS SwiftUI app for managing CLI AI sessions (Codex, Claude Code, Gemini CLI), with unified provider management, Git review, project organization, global search, and terminal integration. It integrates CLIProxyAPI to provide OAuth authentication for Codex, Claude, Gemini, Antigravity, and Qwen Code, and supports rerouting built-in and third-party providers through a single proxy endpoint - no API keys needed for OAuth providers
+
+### [ProxyPilot](https://github.com/Finesssee/ProxyPilot)
+
+A Windows-focused CLIProxyAPI fork with a TUI, system tray, and multi-provider OAuth for AI coding tools - no API keys needed
+
+### [Claude Proxy VSCode](https://github.com/uzhao/claude-proxy-vscode)
+
+A VSCode extension for quickly switching between Claude Code models. It integrates CLIProxyAPI as its backend, with automatic lifecycle management in the background
+
+### [ZeroLimit](https://github.com/0xtbug/zero-limit)
+
+A Windows desktop app built with Tauri + React that monitors AI coding assistant quotas via CLIProxyAPI. It tracks usage across Gemini, Claude, OpenAI Codex, and Antigravity accounts with a real-time dashboard, system tray integration, and one-click proxy control - no API keys needed
+
+### [CPA-XXX Panel](https://github.com/ferretgeek/CPA-X)
+
+A lightweight web admin panel for CLIProxyAPI with health checks, resource monitoring, real-time logs, auto-update, request statistics, and pricing display. It supports one-click installation and running as a systemd service
+
+### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)
+
+A Windows tray application implemented with PowerShell scripts, with no dependency on third-party libraries. It supports automatic shortcut creation, silent running, password management, channel switching (Main / Plus), and automatic download and update
+
+### [霖君](https://github.com/wangdabaoqq/LinJun)
+
+霖君 is a cross-platform desktop application for managing AI programming assistants, supporting macOS, Windows, and Linux. It provides unified management of coding tools such as Claude Code, Gemini CLI, OpenAI Codex, and Qwen Code, with multi-account quota tracking and one-click configuration through a local proxy
+
+### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)
+
+A modern web-based management dashboard for CLIProxyAPI built with Next.js, React, and PostgreSQL. It features real-time log streaming, structured configuration editing, API key management, OAuth provider integration for Claude/Gemini/Codex, usage analytics, container management, and config sync with OpenCode via a companion plugin - no manual YAML editing needed
+
+### [All API Hub](https://github.com/qixing-jk/all-api-hub)
+
+A browser extension for one-stop management of New API-compatible relay site accounts, featuring balance and usage dashboards, automatic check-in, one-click key export to common apps, in-page API availability testing, and channel/model sync and redirection. It integrates with CLIProxyAPI through the Management API for one-click provider import and config sync
+
+### [Shadow AI](https://github.com/HEUDavid/shadow-ai)
+
+Shadow AI is an AI assistant tool designed specifically for restricted environments. It provides a stealth operation mode with no windows and no traces, and enables cross-device AI Q&A interaction and control over a LAN (local area network). In essence, it is an automated collaboration layer of "screen/audio capture + AI inference + low-friction delivery" that helps users use an AI assistant immersively across applications on controlled devices or in restricted environments.
+
+> [!NOTE]
+> If you have developed a project based on CLIProxyAPI, please open a PR to add it to this list.
+
+## Other Options
+
+The following projects are ports of CLIProxyAPI or were inspired by it:
+
+### [9Router](https://github.com/decolua/9router)
+
+A Next.js implementation inspired by CLIProxyAPI that is easy to install and use. Built from scratch with format translation (OpenAI/Claude/Gemini/Ollama), a combo system with automatic fallback, multi-account management with exponential backoff, a Next.js web dashboard, and support for CLI tools (Cursor, Claude Code, Cline, RooCode) - no API keys needed
+
+### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)
+
+Never stop coding. Smart routing to free and low-cost AI models with automatic fallback.
+
+OmniRoute is an AI gateway for multi-provider LLMs: an OpenAI-compatible endpoint with smart routing, load balancing, retries, and fallbacks. Add policies, rate limits, caching, and observability for reliable, cost-aware inference.
+
+> [!NOTE]
+> If you have developed a port of CLIProxyAPI or a project inspired by it, please open a PR to add it to this list.
+
+## License
+
+This project is licensed under the MIT License - see the [LICENSE](LICENSE) file for details.

assets/bmoplus.png (new binary file)

Binary file not shown. Size after: 28 KiB

cmd/fetch_antigravity_models/main.go (new file, 275 lines added)

@@ -0,0 +1,275 @@
+// Command fetch_antigravity_models connects to the Antigravity API using the
+// stored auth credentials and saves the dynamically fetched model list to a
+// JSON file for inspection or offline use.
+//
+// Usage:
+//
+//	go run ./cmd/fetch_antigravity_models [flags]
+//
+// Flags:
+//
+//	--auths-dir <path>  Directory containing auth JSON files (default: "auths")
+//	--output <path>     Output JSON file path (default: "antigravity_models.json")
+//	--pretty            Pretty-print the output JSON (default: true)
+package main
+
+import (
+	"context"
+	"encoding/json"
+	"flag"
+	"fmt"
+	"io"
+	"net/http"
+	"os"
+	"path/filepath"
+	"strings"
+	"time"
+
+	"github.com/router-for-me/CLIProxyAPI/v6/internal/logging"
+	sdkauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"
+	coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
+	"github.com/router-for-me/CLIProxyAPI/v6/sdk/proxyutil"
+	log "github.com/sirupsen/logrus"
+	"github.com/tidwall/gjson"
+)
+
+const (
+	antigravityBaseURLDaily        = "https://daily-cloudcode-pa.googleapis.com"
+	antigravitySandboxBaseURLDaily = "https://daily-cloudcode-pa.sandbox.googleapis.com"
+	antigravityBaseURLProd         = "https://cloudcode-pa.googleapis.com"
+	antigravityModelsPath          = "/v1internal:fetchAvailableModels"
+)
+
+func init() {
+	logging.SetupBaseLogger()
+	log.SetLevel(log.InfoLevel)
+}
+
+// modelOutput wraps the fetched model list with fetch metadata.
+type modelOutput struct {
+	Models []modelEntry `json:"models"`
+}
+
+// modelEntry contains only the fields we want to keep for static model definitions.
+type modelEntry struct {
+	ID                  string `json:"id"`
+	Object              string `json:"object"`
+	OwnedBy             string `json:"owned_by"`
+	Type                string `json:"type"`
+	DisplayName         string `json:"display_name"`
+	Name                string `json:"name"`
+	Description         string `json:"description"`
+	ContextLength       int    `json:"context_length,omitempty"`
+	MaxCompletionTokens int    `json:"max_completion_tokens,omitempty"`
+}
+
+func main() {
+	var authsDir string
+	var outputPath string
+	var pretty bool
+
+	flag.StringVar(&authsDir, "auths-dir", "auths", "Directory containing auth JSON files")
+	flag.StringVar(&outputPath, "output", "antigravity_models.json", "Output JSON file path")
+	flag.BoolVar(&pretty, "pretty", true, "Pretty-print the output JSON")
+	flag.Parse()
+
+	// Resolve relative paths against the working directory.
+	wd, err := os.Getwd()
+	if err != nil {
+		fmt.Fprintf(os.Stderr, "error: cannot get working directory: %v\n", err)
+		os.Exit(1)
+	}
+	if !filepath.IsAbs(authsDir) {
+		authsDir = filepath.Join(wd, authsDir)
+	}
+	if !filepath.IsAbs(outputPath) {
+		outputPath = filepath.Join(wd, outputPath)
+	}
+
+	fmt.Printf("Scanning auth files in: %s\n", authsDir)
+
+	// Load all auth records from the directory.
+	fileStore := sdkauth.NewFileTokenStore()
+	fileStore.SetBaseDir(authsDir)
+
+	ctx := context.Background()
+	auths, err := fileStore.List(ctx)
+	if err != nil {
+		fmt.Fprintf(os.Stderr, "error: failed to list auth files: %v\n", err)
+		os.Exit(1)
+	}
+	if len(auths) == 0 {
+		fmt.Fprintf(os.Stderr, "error: no auth files found in %s\n", authsDir)
+		os.Exit(1)
+	}
+
+	// Find the first enabled antigravity auth.
+	var chosen *coreauth.Auth
+	for _, a := range auths {
+		if a == nil || a.Disabled {
+			continue
+		}
+		if strings.EqualFold(strings.TrimSpace(a.Provider), "antigravity") {
+			chosen = a
+			break
+		}
+	}
+	if chosen == nil {
+		fmt.Fprintf(os.Stderr, "error: no enabled antigravity auth found in %s\n", authsDir)
+		os.Exit(1)
+	}
+
+	fmt.Printf("Using auth: id=%s label=%s\n", chosen.ID, chosen.Label)
+
+	// Fetch models from the upstream Antigravity API.
+	fmt.Println("Fetching Antigravity model list from upstream...")
+
+	fetchCtx, cancel := context.WithTimeout(ctx, 30*time.Second)
+	defer cancel()
+
+	models := fetchModels(fetchCtx, chosen)
+	if len(models) == 0 {
+		fmt.Fprintln(os.Stderr, "warning: no models returned (API may be unavailable or token expired)")
+	} else {
+		fmt.Printf("Fetched %d models.\n", len(models))
+	}
+
+	// Build the output payload.
+	out := modelOutput{
+		Models: models,
+	}
+
+	// Marshal to JSON.
+	var raw []byte
+	if pretty {
+		raw, err = json.MarshalIndent(out, "", " ")
+	} else {
+		raw, err = json.Marshal(out)
+	}
+	if err != nil {
+		fmt.Fprintf(os.Stderr, "error: failed to marshal JSON: %v\n", err)
+		os.Exit(1)
+	}
+
+	if err = os.WriteFile(outputPath, raw, 0o644); err != nil {
+		fmt.Fprintf(os.Stderr, "error: failed to write output file %s: %v\n", outputPath, err)
+		os.Exit(1)
+	}
+
+	fmt.Printf("Model list saved to: %s\n", outputPath)
+}
+
+func fetchModels(ctx context.Context, auth *coreauth.Auth) []modelEntry {
+	accessToken := metaStringValue(auth.Metadata, "access_token")
+	if accessToken == "" {
+		fmt.Fprintln(os.Stderr, "error: no access token found in auth")
+		return nil
+	}
+
+	baseURLs := []string{antigravityBaseURLProd, antigravityBaseURLDaily, antigravitySandboxBaseURLDaily}
+
+	for _, baseURL := range baseURLs {
+		modelsURL := baseURL + antigravityModelsPath
+
+		var payload []byte
+		if auth != nil && auth.Metadata != nil {
+			if pid, ok := auth.Metadata["project_id"].(string); ok && strings.TrimSpace(pid) != "" {
+				payload = []byte(fmt.Sprintf(`{"project": "%s"}`, strings.TrimSpace(pid)))
+			}
+		}
+		if len(payload) == 0 {
+			payload = []byte(`{}`)
+		}
+
+		httpReq, errReq := http.NewRequestWithContext(ctx, http.MethodPost, modelsURL, strings.NewReader(string(payload)))
+		if errReq != nil {
+			continue
+		}
+		httpReq.Close = true
+		httpReq.Header.Set("Content-Type", "application/json")
+		httpReq.Header.Set("Authorization", "Bearer "+accessToken)
+		httpReq.Header.Set("User-Agent", "antigravity/1.19.6 darwin/arm64")
+
+		httpClient := &http.Client{Timeout: 30 * time.Second}
+		if transport, _, errProxy := proxyutil.BuildHTTPTransport(auth.ProxyURL); errProxy == nil && transport != nil {
+			httpClient.Transport = transport
+		}
+		httpResp, errDo := httpClient.Do(httpReq)
+		if errDo != nil {
+			continue
+		}
+
+		bodyBytes, errRead := io.ReadAll(httpResp.Body)
+		httpResp.Body.Close()
+		if errRead != nil {
+			continue
+		}
+
+		if httpResp.StatusCode < http.StatusOK || httpResp.StatusCode >= http.StatusMultipleChoices {
+			continue
+		}
+
+		result := gjson.GetBytes(bodyBytes, "models")
+		if !result.Exists() {
+			continue
+		}
+
+		var models []modelEntry
+
+		for originalName, modelData := range result.Map() {
+			modelID := strings.TrimSpace(originalName)
+			if modelID == "" {
+				continue
+			}
+			// Skip internal/experimental models
+			switch modelID {
+			case "chat_20706", "chat_23310", "tab_flash_lite_preview", "tab_jump_flash_lite_preview", "gemini-2.5-flash-thinking", "gemini-2.5-pro":
+				continue
+			}
+
+			displayName := modelData.Get("displayName").String()
+			if displayName == "" {
+				displayName = modelID
+			}
+
+			entry := modelEntry{
+				ID:          modelID,
+				Object:      "model",
+				OwnedBy:     "antigravity",
+				Type:        "antigravity",
+				DisplayName: displayName,
+				Name:        modelID,
+				Description: displayName,
+			}
+
+			if maxTok := modelData.Get("maxTokens").Int(); maxTok > 0 {
+				entry.ContextLength = int(maxTok)
+			}
+			if maxOut := modelData.Get("maxOutputTokens").Int(); maxOut > 0 {
+				entry.MaxCompletionTokens = int(maxOut)
+			}
+
+			models = append(models, entry)
+		}
+
+		return models
+	}
+
+	return nil
+}
+
+func metaStringValue(m map[string]interface{}, key string) string {

|

||||||

|

if m == nil {

|

||||||

|

return ""

|

||||||

|

}

|

||||||

|

v, ok := m[key]

|

||||||

|

if !ok {

|

||||||

|

return ""

|

||||||

|

}

|

||||||

|

switch val := v.(type) {

|

||||||

|

case string:

|

||||||

|

return val

|

||||||

|

default:

|

||||||

|

return ""

|

||||||

|

}

|

||||||

|

}

|

||||||
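Note on the request body in `fetchModels` above: the payload is assembled with `fmt.Sprintf`, so a project ID containing a quote or backslash would yield invalid JSON. A minimal sketch of one alternative, assuming a hypothetical helper named `buildModelsPayload` (not part of this commit) that lets `encoding/json` do the escaping:

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// buildModelsPayload is a hypothetical helper, not part of the commit.
// It returns `{}` when no project ID is available and otherwise a JSON
// object with a single "project" key, escaped by encoding/json.
func buildModelsPayload(projectID string) ([]byte, error) {
	pid := strings.TrimSpace(projectID)
	if pid == "" {
		return []byte(`{}`), nil
	}
	return json.Marshal(map[string]string{"project": pid})
}

func main() {
	// The sample IDs are illustrative only.
	for _, pid := range []string{"", "  my-project  ", `bad"id`} {
		payload, err := buildModelsPayload(pid)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Println(string(payload))
	}
}
```

The result could be passed to `bytes.NewReader` when building the request, which would also avoid the `[]byte` to `string` round trip.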

20

cmd/mcpdebug/main.go

Normal file

@@ -0,0 +1,20 @@

|

|||||||

|

package main

|

||||||

|

|

||||||

|

import (

|

||||||

|

"encoding/hex"

|

||||||

|

"fmt"

|

||||||

|

"os"

|

||||||

|

|

||||||

|

cursorproto "github.com/router-for-me/CLIProxyAPI/v6/internal/auth/cursor/proto"

|

||||||

|

)

|

||||||

|

|

||||||

|

func main() {

|

||||||

|

// Encode MCP result with empty execId

|

||||||

|

resultBytes := cursorproto.EncodeExecMcpResult(1, "", `{"test": "data"}`, false)

|

||||||

|

fmt.Printf("Result protobuf hex: %s\n", hex.EncodeToString(resultBytes))

|

||||||

|

fmt.Printf("Result length: %d bytes\n", len(resultBytes))

|

||||||

|

|

||||||

|

// Write to file for analysis

|

||||||

|

os.WriteFile("mcp_result.bin", resultBytes, 0o644)

|

||||||

|

fmt.Println("Wrote mcp_result.bin")

|

||||||

|

}

|

||||||
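Note on analysing the dumped bytes: a file such as `mcp_result.bin` can be inspected without the schema by walking the protobuf wire format, where each field starts with a varint tag equal to `(field_number << 3) | wire_type`. The following is a minimal sketch using only the standard library. `scanFields` and the sample bytes are hypothetical and are not the actual output of `EncodeExecMcpResult`.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

// field describes one top-level field found in a protobuf message.
type field struct {
	Number   uint64 // field number from the tag
	WireType uint64 // 0=varint, 1=64-bit, 2=length-delimited, 5=32-bit
	Length   int    // number of value bytes (excluding tag and length prefix)
}

// scanFields is a hypothetical helper that lists the top-level fields of a
// wire-format message without interpreting their values.
func scanFields(buf []byte) ([]field, error) {
	var out []field
	for len(buf) > 0 {
		tag, n := binary.Uvarint(buf)
		if n <= 0 {
			return out, errors.New("bad tag varint")
		}
		buf = buf[n:]
		f := field{Number: tag >> 3, WireType: tag & 7}
		switch f.WireType {
		case 0: // varint value
			_, m := binary.Uvarint(buf)
			if m <= 0 {
				return out, errors.New("bad varint value")
			}
			f.Length = m
		case 1: // fixed 64-bit value
			f.Length = 8
		case 2: // length prefix followed by that many bytes
			l, m := binary.Uvarint(buf)
			if m <= 0 || l > uint64(len(buf)-m) {
				return out, errors.New("bad or truncated length-delimited field")
			}
			buf = buf[m:]
			f.Length = int(l)
		case 5: // fixed 32-bit value
			f.Length = 4
		default:
			return out, fmt.Errorf("unsupported wire type %d", f.WireType)
		}
		if f.Length > len(buf) {
			return out, errors.New("truncated field")
		}
		buf = buf[f.Length:]
		out = append(out, f)
	}
	return out, nil
}

func main() {
	// Illustrative message: field 1 as varint 1, field 2 as the bytes "hi".
	sample := []byte{0x08, 0x01, 0x12, 0x02, 'h', 'i'}
	fields, err := scanFields(sample)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, f := range fields {
		fmt.Printf("field %d: wire type %d, %d value bytes\n", f.Number, f.WireType, f.Length)
	}
}
```

Reading the file with `os.ReadFile` and passing the bytes to `scanFields` would show whether an empty `execId` is omitted from the encoded message or written as a zero-length field.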

32

cmd/protocheck/main.go

Normal file

@@ -0,0 +1,32 @@

|

|||||||

|

package main

|

||||||

|

|

||||||

|

import (

|

||||||

|

"fmt"

|

||||||

|

cursorproto "github.com/router-for-me/CLIProxyAPI/v6/internal/auth/cursor/proto"

|

||||||

|

)

|

||||||

|

|

||||||

|

func main() {

|

||||||

|

ecm := cursorproto.NewMsg("ExecClientMessage")

|

||||||

|

|

||||||

|

// Try different field names

|

||||||

|

names := []string{

|

||||||

|

"mcp_result", "mcpResult", "McpResult", "MCP_RESULT",

|

||||||

|

"shell_result", "shellResult",

|

||||||

|

}

|

||||||

|

|

||||||

|

for _, name := range names {

|

||||||

|

fd := ecm.Descriptor().Fields().ByName(name)

|

||||||

|

if fd != nil {

|

||||||

|

fmt.Printf("Found field %q: number=%d, kind=%s\n", name, fd.Number(), fd.Kind())

|

||||||

|

} else {

|

||||||

|

fmt.Printf("Field %q NOT FOUND\n", name)

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

// List all fields

|

||||||

|

fmt.Println("\nAll fields in ExecClientMessage:")

|

||||||

|

for i := 0; i < ecm.Descriptor().Fields().Len(); i++ {

|

||||||

|

f := ecm.Descriptor().Fields().Get(i)

|

||||||

|

fmt.Printf(" %d: %q (number=%d)\n", i, f.Name(), f.Number())

|

||||||

|

}

|

||||||

|

}

|

||||||

@@ -25,6 +25,7 @@ import (

|

|||||||

"github.com/router-for-me/CLIProxyAPI/v6/internal/logging"

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/logging"

|

||||||

"github.com/router-for-me/CLIProxyAPI/v6/internal/managementasset"

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/managementasset"

|

||||||

"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"

|

||||||

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/registry"

|

||||||

"github.com/router-for-me/CLIProxyAPI/v6/internal/store"

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/store"

|

||||||

_ "github.com/router-for-me/CLIProxyAPI/v6/internal/translator"

|

_ "github.com/router-for-me/CLIProxyAPI/v6/internal/translator"

|

||||||

"github.com/router-for-me/CLIProxyAPI/v6/internal/tui"

|

"github.com/router-for-me/CLIProxyAPI/v6/internal/tui"

|

||||||

@@ -78,10 +79,13 @@ func main() {

|

|||||||

var kiloLogin bool

|

var kiloLogin bool

|

||||||

var iflowLogin bool

|

var iflowLogin bool

|

||||||

var iflowCookie bool

|

var iflowCookie bool

|

||||||

|

var gitlabLogin bool

|

||||||

|

var gitlabTokenLogin bool

|

||||||

var noBrowser bool

|

var noBrowser bool

|

||||||

var oauthCallbackPort int

|

var oauthCallbackPort int

|

||||||

var antigravityLogin bool

|

var antigravityLogin bool

|

||||||

var kimiLogin bool

|

var kimiLogin bool

|

||||||

|

var cursorLogin bool

|

||||||

var kiroLogin bool

|

var kiroLogin bool

|

||||||

var kiroGoogleLogin bool

|

var kiroGoogleLogin bool

|

||||||

var kiroAWSLogin bool

|

var kiroAWSLogin bool

|

||||||

@@ -92,6 +96,7 @@ func main() {

|

|||||||

var kiroIDCRegion string

|

var kiroIDCRegion string

|

||||||

var kiroIDCFlow string

|

var kiroIDCFlow string

|

||||||

var githubCopilotLogin bool

|

var githubCopilotLogin bool

|

||||||

|

var codeBuddyLogin bool

|

||||||

var projectID string

|

var projectID string

|

||||||

var vertexImport string

|

var vertexImport string

|

||||||

var configPath string

|

var configPath string

|

||||||

@@ -100,6 +105,7 @@ func main() {

|

|||||||

var standalone bool

|

var standalone bool

|

||||||

var noIncognito bool

|

var noIncognito bool

|

||||||

var useIncognito bool

|

var useIncognito bool

|

||||||

|

var localModel bool

|

||||||

|

|

||||||

// Define command-line flags for different operation modes.

|

// Define command-line flags for different operation modes.

|

||||||

flag.BoolVar(&login, "login", false, "Login Google Account")

|

flag.BoolVar(&login, "login", false, "Login Google Account")

|

||||||

@@ -110,12 +116,15 @@ func main() {

|

|||||||

flag.BoolVar(&kiloLogin, "kilo-login", false, "Login to Kilo AI using device flow")

|

flag.BoolVar(&kiloLogin, "kilo-login", false, "Login to Kilo AI using device flow")

|

||||||

flag.BoolVar(&iflowLogin, "iflow-login", false, "Login to iFlow using OAuth")

|

flag.BoolVar(&iflowLogin, "iflow-login", false, "Login to iFlow using OAuth")

|

||||||

flag.BoolVar(&iflowCookie, "iflow-cookie", false, "Login to iFlow using Cookie")

|

flag.BoolVar(&iflowCookie, "iflow-cookie", false, "Login to iFlow using Cookie")

|

||||||

|

flag.BoolVar(&gitlabLogin, "gitlab-login", false, "Login to GitLab Duo using OAuth")

|

||||||

|

flag.BoolVar(&gitlabTokenLogin, "gitlab-token-login", false, "Login to GitLab Duo using a personal access token")

|

||||||

flag.BoolVar(&noBrowser, "no-browser", false, "Don't open browser automatically for OAuth")

|

flag.BoolVar(&noBrowser, "no-browser", false, "Don't open browser automatically for OAuth")

|

||||||

flag.IntVar(&oauthCallbackPort, "oauth-callback-port", 0, "Override OAuth callback port (defaults to provider-specific port)")

|

flag.IntVar(&oauthCallbackPort, "oauth-callback-port", 0, "Override OAuth callback port (defaults to provider-specific port)")

|

||||||

flag.BoolVar(&useIncognito, "incognito", false, "Open browser in incognito/private mode for OAuth (useful for multiple accounts)")

|

flag.BoolVar(&useIncognito, "incognito", false, "Open browser in incognito/private mode for OAuth (useful for multiple accounts)")

|

||||||

flag.BoolVar(&noIncognito, "no-incognito", false, "Force disable incognito mode (uses existing browser session)")

|

flag.BoolVar(&noIncognito, "no-incognito", false, "Force disable incognito mode (uses existing browser session)")

|

||||||

flag.BoolVar(&antigravityLogin, "antigravity-login", false, "Login to Antigravity using OAuth")

|

flag.BoolVar(&antigravityLogin, "antigravity-login", false, "Login to Antigravity using OAuth")

|

||||||

flag.BoolVar(&kimiLogin, "kimi-login", false, "Login to Kimi using OAuth")

|

flag.BoolVar(&kimiLogin, "kimi-login", false, "Login to Kimi using OAuth")

|

||||||

|

flag.BoolVar(&cursorLogin, "cursor-login", false, "Login to Cursor using OAuth")

|

||||||

flag.BoolVar(&kiroLogin, "kiro-login", false, "Login to Kiro using Google OAuth")

|

flag.BoolVar(&kiroLogin, "kiro-login", false, "Login to Kiro using Google OAuth")

|

||||||

flag.BoolVar(&kiroGoogleLogin, "kiro-google-login", false, "Login to Kiro using Google OAuth (same as --kiro-login)")

|

flag.BoolVar(&kiroGoogleLogin, "kiro-google-login", false, "Login to Kiro using Google OAuth (same as --kiro-login)")

|

||||||

flag.BoolVar(&kiroAWSLogin, "kiro-aws-login", false, "Login to Kiro using AWS Builder ID (device code flow)")

|

flag.BoolVar(&kiroAWSLogin, "kiro-aws-login", false, "Login to Kiro using AWS Builder ID (device code flow)")

|

||||||

@@ -126,12 +135,14 @@ func main() {

|

|||||||

flag.StringVar(&kiroIDCRegion, "kiro-idc-region", "", "IDC region (default: us-east-1)")

|

flag.StringVar(&kiroIDCRegion, "kiro-idc-region", "", "IDC region (default: us-east-1)")

|

||||||

flag.StringVar(&kiroIDCFlow, "kiro-idc-flow", "", "IDC flow type: authcode (default) or device")

|

flag.StringVar(&kiroIDCFlow, "kiro-idc-flow", "", "IDC flow type: authcode (default) or device")

|

||||||

flag.BoolVar(&githubCopilotLogin, "github-copilot-login", false, "Login to GitHub Copilot using device flow")

|

flag.BoolVar(&githubCopilotLogin, "github-copilot-login", false, "Login to GitHub Copilot using device flow")

|

||||||

|

flag.BoolVar(&codeBuddyLogin, "codebuddy-login", false, "Login to CodeBuddy using browser OAuth flow")

|

||||||

flag.StringVar(&projectID, "project_id", "", "Project ID (Gemini only, not required)")

|

flag.StringVar(&projectID, "project_id", "", "Project ID (Gemini only, not required)")

|

||||||

flag.StringVar(&configPath, "config", DefaultConfigPath, "Configure File Path")

|

flag.StringVar(&configPath, "config", DefaultConfigPath, "Configure File Path")

|

||||||

flag.StringVar(&vertexImport, "vertex-import", "", "Import Vertex service account key JSON file")

|

flag.StringVar(&vertexImport, "vertex-import", "", "Import Vertex service account key JSON file")

|

||||||

flag.StringVar(&password, "password", "", "")

|

flag.StringVar(&password, "password", "", "")

|

||||||

flag.BoolVar(&tuiMode, "tui", false, "Start with terminal management UI")

|

flag.BoolVar(&tuiMode, "tui", false, "Start with terminal management UI")

|

||||||

flag.BoolVar(&standalone, "standalone", false, "In TUI mode, start an embedded local server")

|

flag.BoolVar(&standalone, "standalone", false, "In TUI mode, start an embedded local server")

|

||||||

|

flag.BoolVar(&localModel, "local-model", false, "Use embedded model catalog only, skip remote model fetching")

|

||||||

|

|

||||||

flag.CommandLine.Usage = func() {

|

flag.CommandLine.Usage = func() {

|

||||||

out := flag.CommandLine.Output()

|

out := flag.CommandLine.Output()

|

||||||

@@ -509,6 +520,9 @@ func main() {

|

|||||||

} else if githubCopilotLogin {

|

} else if githubCopilotLogin {

|

||||||

// Handle GitHub Copilot login

|

// Handle GitHub Copilot login

|

||||||

cmd.DoGitHubCopilotLogin(cfg, options)

|

cmd.DoGitHubCopilotLogin(cfg, options)

|

||||||

|

} else if codeBuddyLogin {

|

||||||

|

// Handle CodeBuddy login

|

||||||

|

cmd.DoCodeBuddyLogin(cfg, options)

|

||||||

} else if codexLogin {

|

} else if codexLogin {

|

||||||

// Handle Codex login

|

// Handle Codex login

|

||||||

cmd.DoCodexLogin(cfg, options)

|

cmd.DoCodexLogin(cfg, options)

|

||||||

@@ -526,8 +540,14 @@ func main() {

|

|||||||

cmd.DoIFlowLogin(cfg, options)

|

cmd.DoIFlowLogin(cfg, options)

|

||||||

} else if iflowCookie {

|

} else if iflowCookie {

|

||||||

cmd.DoIFlowCookieAuth(cfg, options)

|

cmd.DoIFlowCookieAuth(cfg, options)

|

||||||

|

} else if gitlabLogin {

|

||||||

|

cmd.DoGitLabLogin(cfg, options)

|

||||||

|

} else if gitlabTokenLogin {

|

||||||

|

cmd.DoGitLabTokenLogin(cfg, options)

|

||||||

} else if kimiLogin {

|

} else if kimiLogin {

|

||||||

cmd.DoKimiLogin(cfg, options)

|

cmd.DoKimiLogin(cfg, options)

|

||||||

|

} else if cursorLogin {

|

||||||

|

cmd.DoCursorLogin(cfg, options)

|

||||||

} else if kiroLogin {

|

} else if kiroLogin {

|

||||||

// For Kiro auth, default to incognito mode for multi-account support

|

// For Kiro auth, default to incognito mode for multi-account support

|

||||||

// Users can explicitly override with --no-incognito

|

// Users can explicitly override with --no-incognito

|

||||||

@@ -569,10 +589,16 @@ func main() {

|

|||||||

cmd.WaitForCloudDeploy()

|

cmd.WaitForCloudDeploy()

|

||||||

return

|

return

|

||||||

}

|

}

|

||||||

|

if localModel && (!tuiMode || standalone) {

|

||||||

|

log.Info("Local model mode: using embedded model catalog, remote model updates disabled")

|

||||||

|

}

|

||||||

if tuiMode {

|

if tuiMode {

|

||||||

if standalone {

|

if standalone {

|

||||||

// Standalone mode: start an embedded local server and connect TUI client to it.

|

// Standalone mode: start an embedded local server and connect TUI client to it.

|

||||||

managementasset.StartAutoUpdater(context.Background(), configFilePath)

|

managementasset.StartAutoUpdater(context.Background(), configFilePath)

|

||||||

|

if !localModel {

|

||||||

|

registry.StartModelsUpdater(context.Background())

|

||||||

|

}

|

||||||

hook := tui.NewLogHook(2000)

|

hook := tui.NewLogHook(2000)

|

||||||

hook.SetFormatter(&logging.LogFormatter{})

|

hook.SetFormatter(&logging.LogFormatter{})

|

||||||

log.AddHook(hook)

|

log.AddHook(hook)

|

||||||

@@ -643,15 +669,18 @@ func main() {

|

|||||||

}

|

}

|

||||||

}

|

}

|

||||||

} else {

|

} else {

|

||||||

// Start the main proxy service

|

// Start the main proxy service

|

||||||

managementasset.StartAutoUpdater(context.Background(), configFilePath)

|

managementasset.StartAutoUpdater(context.Background(), configFilePath)

|

||||||

|

if !localModel {

|

||||||

|

registry.StartModelsUpdater(context.Background())

|

||||||

|

}

|

||||||

|

|

||||||

if cfg.AuthDir != "" {

|

if cfg.AuthDir != "" {

|

||||||

kiro.InitializeAndStart(cfg.AuthDir, cfg)

|

kiro.InitializeAndStart(cfg.AuthDir, cfg)

|

||||||

defer kiro.StopGlobalRefreshManager()

|

defer kiro.StopGlobalRefreshManager()

|

||||||

}

|

}

|

||||||

|

|

||||||

cmd.StartService(cfg, configFilePath, password)

|

cmd.StartService(cfg, configFilePath, password)

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||
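Note on the `-local-model` gating above: the hunks start `registry.StartModelsUpdater` only when `localModel` is false, in both the standalone TUI branch and the plain service branch. The sketch below restates that decision as a small function. The names `runModes`, `parseRunModes`, and `startsModelsUpdater` are hypothetical. The sketch also assumes that the TUI client-only mode (`-tui` without `-standalone`) never starts the updater, which this diff does not show.

```go
package main

import (
	"flag"
	"fmt"
)

// runModes holds the three flags that influence the models updater.
type runModes struct {
	LocalModel bool
	TUI        bool
	Standalone bool
}

// parseRunModes is a hypothetical helper that parses only the flags
// relevant to this sketch from an argument list.
func parseRunModes(args []string) (runModes, error) {
	var m runModes
	fs := flag.NewFlagSet("sketch", flag.ContinueOnError)
	fs.BoolVar(&m.LocalModel, "local-model", false, "Use embedded model catalog only, skip remote model fetching")
	fs.BoolVar(&m.TUI, "tui", false, "Start with terminal management UI")
	fs.BoolVar(&m.Standalone, "standalone", false, "In TUI mode, start an embedded local server")
	err := fs.Parse(args)
	return m, err
}

// startsModelsUpdater reports whether the remote models updater would run.
// Assumption: only processes that host the server start it.
func startsModelsUpdater(m runModes) bool {
	hostsServer := !m.TUI || m.Standalone
	return hostsServer && !m.LocalModel
}

func main() {
	cases := [][]string{
		{},
		{"-local-model"},
		{"-tui", "-standalone"},
		{"-tui", "-standalone", "-local-model"},
		{"-tui"},
	}
	for _, args := range cases {
		m, err := parseRunModes(args)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Printf("%v -> updater=%t\n", args, startsModelsUpdater(m))
	}
}
```

Keeping the decision in one function would avoid repeating the `if !localModel` guard in each branch of `main`.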

|

|||||||

@@ -25,6 +25,10 @@ remote-management:

|

|||||||

# Disable the bundled management control panel asset download and HTTP route when true.

|

# Disable the bundled management control panel asset download and HTTP route when true.

|

||||||

disable-control-panel: false

|

disable-control-panel: false

|

||||||

|

|

||||||

|

# Disable automatic periodic background updates of the management panel from GitHub (default: false).

|

||||||

|

# When enabled, the panel is only downloaded on first access if missing, and never auto-updated afterward.

|

||||||

|

# disable-auto-update-panel: false

|

||||||

|

|

||||||

# GitHub repository for the management control panel. Accepts a repository URL or releases API URL.

|

# GitHub repository for the management control panel. Accepts a repository URL or releases API URL.

|

||||||

panel-github-repository: 'https://github.com/router-for-me/Cli-Proxy-API-Management-Center'

|

panel-github-repository: 'https://github.com/router-for-me/Cli-Proxy-API-Management-Center'

|

||||||

|

|

||||||

@@ -68,7 +72,8 @@ error-logs-max-files: 10

|

|||||||

usage-statistics-enabled: false

|

usage-statistics-enabled: false

|

||||||

|

|

||||||

# Proxy URL. Supports socks5/http/https protocols. Example: socks5://user:pass@192.168.1.1:1080/

|

# Proxy URL. Supports socks5/http/https protocols. Example: socks5://user:pass@192.168.1.1:1080/

|

||||||

proxy-url: ''

|

# Per-entry proxy-url also supports "direct" or "none" to bypass both the global proxy-url and environment proxies explicitly.

|

||||||

|

proxy-url: ""

|

||||||

|

|

||||||

# When true, unprefixed model requests only use credentials without a prefix (except when prefix == model name).

|

# When true, unprefixed model requests only use credentials without a prefix (except when prefix == model name).

|

||||||

force-model-prefix: false

|

force-model-prefix: false

|

||||||
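Note on the `proxy-url` comment in the hunk above: a per-entry value of "direct" or "none" bypasses both the global `proxy-url` and environment proxies. The sketch below shows one way such a setting could be resolved. `proxyChoice` and `resolveProxy` are hypothetical names, and the case-insensitive keyword match and the fallback to the environment when both values are empty are assumptions, not behaviour confirmed by this diff.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// proxyChoice is the outcome of resolving a proxy setting.
// Direct means connect without any proxy. A nil URL with Direct false
// means no explicit proxy was configured (assumed: defer to environment).
type proxyChoice struct {
	Direct bool
	URL    *url.URL
}

// resolveProxy is a hypothetical helper: the per-entry value wins over the
// global one, and "direct" or "none" disables proxying for that entry.
func resolveProxy(entryURL, globalURL string) (proxyChoice, error) {
	entry := strings.TrimSpace(entryURL)
	if strings.EqualFold(entry, "direct") || strings.EqualFold(entry, "none") {
		return proxyChoice{Direct: true}, nil
	}
	raw := entry
	if raw == "" {
		raw = strings.TrimSpace(globalURL)
	}
	if raw == "" {
		return proxyChoice{}, nil
	}
	u, err := url.Parse(raw)
	if err != nil {
		return proxyChoice{}, fmt.Errorf("parse proxy-url %q: %w", raw, err)
	}
	switch u.Scheme {
	case "socks5", "http", "https":
		return proxyChoice{URL: u}, nil
	default:
		return proxyChoice{}, fmt.Errorf("unsupported proxy scheme %q", u.Scheme)
	}
}

func main() {
	global := "socks5://proxy.example.com:1080"
	for _, entry := range []string{"", "direct", "http://127.0.0.1:8080"} {
		c, err := resolveProxy(entry, global)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Printf("entry=%q direct=%t url=%v\n", entry, c.Direct, c.URL)
	}
}
```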

@@ -115,6 +120,7 @@ nonstream-keepalive-interval: 0

|

|||||||

# headers:

|

# headers:

|

||||||

# X-Custom-Header: "custom-value"

|

# X-Custom-Header: "custom-value"

|

||||||

# proxy-url: "socks5://proxy.example.com:1080"

|

# proxy-url: "socks5://proxy.example.com:1080"

|

||||||

|

# # proxy-url: "direct" # optional: explicit direct connect for this credential

|

||||||

# models:

|

# models:

|

||||||

# - name: "gemini-2.5-flash" # upstream model name

|

# - name: "gemini-2.5-flash" # upstream model name

|

||||||

# alias: "gemini-flash" # client alias mapped to the upstream model

|

# alias: "gemini-flash" # client alias mapped to the upstream model

|

||||||

@@ -133,6 +139,7 @@ nonstream-keepalive-interval: 0

|

|||||||

# headers:

|

# headers:

|

||||||

# X-Custom-Header: "custom-value"

|

# X-Custom-Header: "custom-value"

|

||||||

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

|

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

|

||||||

|

# # proxy-url: "direct" # optional: explicit direct connect for this credential

|

||||||

# models:

|

# models:

|

||||||

# - name: "gpt-5-codex" # upstream model name

|

# - name: "gpt-5-codex" # upstream model name

|

||||||

# alias: "codex-latest" # client alias mapped to the upstream model

|

# alias: "codex-latest" # client alias mapped to the upstream model

|

||||||

@@ -151,6 +158,7 @@ nonstream-keepalive-interval: 0

|

|||||||

# headers:

|

# headers:

|

||||||

# X-Custom-Header: "custom-value"

|

# X-Custom-Header: "custom-value"

|

||||||

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

|

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

|

||||||

|

# # proxy-url: "direct" # optional: explicit direct connect for this credential

|

||||||

# models:

|

# models:

|

||||||

# - name: "claude-3-5-sonnet-20241022" # upstream model name

|

# - name: "claude-3-5-sonnet-20241022" # upstream model name

|

||||||

# alias: "claude-sonnet-latest" # client alias mapped to the upstream model

|

# alias: "claude-sonnet-latest" # client alias mapped to the upstream model

|

||||||

@@ -171,12 +179,27 @@ nonstream-keepalive-interval: 0

|

|||||||

# cache-user-id: true # optional: default is false; set true to reuse cached user_id per API key instead of generating a random one each request

|

# cache-user-id: true # optional: default is false; set true to reuse cached user_id per API key instead of generating a random one each request

|

||||||

|

|

||||||

# Default headers for Claude API requests. Update when Claude Code releases new versions.

|

# Default headers for Claude API requests. Update when Claude Code releases new versions.

|

||||||

# These are used as fallbacks when the client does not send its own headers.

|

# In legacy mode, user-agent/package-version/runtime-version/timeout are used as fallbacks

|

||||||

|

# when the client omits them, while OS/arch remain runtime-derived. When

|

||||||

|

# stabilize-device-profile is enabled, OS/arch stay pinned to the baseline values below,

|

||||||

|

# while user-agent/package-version/runtime-version seed a software fingerprint that can

|

||||||

|

# still upgrade to newer official Claude client versions.

|

||||||

# claude-header-defaults:

|

# claude-header-defaults:

|

||||||

# user-agent: "claude-cli/2.1.44 (external, sdk-cli)"

|

# user-agent: "claude-cli/2.1.44 (external, sdk-cli)"

|

||||||

# package-version: "0.74.0"

|

# package-version: "0.74.0"

|

||||||

# runtime-version: "v24.3.0"

|

# runtime-version: "v24.3.0"

|

||||||

|

# os: "MacOS"

|

||||||

|

# arch: "arm64"

|

||||||

# timeout: "600"

|

# timeout: "600"

|

||||||

|

# stabilize-device-profile: false # optional, default false; set true to enable per-auth/API-key fingerprint pinning

|

||||||

|

|

||||||

|

# Default headers for Codex OAuth model requests.

|

||||||

|

# These are used only for file-backed/OAuth Codex requests when the client

|

||||||

|

# does not send the header. `user-agent` applies to HTTP and websocket requests;

|

||||||

|

# `beta-features` only applies to websocket requests. They do not apply to codex-api-key entries.

|

||||||

|

# codex-header-defaults:

|

||||||

|

# user-agent: "codex_cli_rs/0.114.0 (Mac OS 14.2.0; x86_64) vscode/1.111.0"

|

||||||

|

# beta-features: "multi_agent"

|

||||||

|

|

||||||

# Kiro (AWS CodeWhisperer) configuration

|

# Kiro (AWS CodeWhisperer) configuration

|

||||||

# Note: Kiro API currently only operates in us-east-1 region

|

# Note: Kiro API currently only operates in us-east-1 region

|

||||||

@@ -215,10 +238,13 @@ nonstream-keepalive-interval: 0

|

|||||||

# api-key-entries:

|

# api-key-entries:

|

||||||

# - api-key: "sk-or-v1-...b780"

|

# - api-key: "sk-or-v1-...b780"

|

||||||

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

|

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

|

||||||

|

# # proxy-url: "direct" # optional: explicit direct connect for this credential

|

||||||

# - api-key: "sk-or-v1-...b781" # without proxy-url

|

# - api-key: "sk-or-v1-...b781" # without proxy-url

|

||||||

# models: # The models supported by the provider.

|

# models: # The models supported by the provider.

|

||||||

# - name: "moonshotai/kimi-k2:free" # The actual model name.

|

# - name: "moonshotai/kimi-k2:free" # The actual model name.

|

||||||

# alias: "kimi-k2" # The alias used in the API.

|

# alias: "kimi-k2" # The alias used in the API.

|

||||||

|

# thinking: # optional: omit to default to levels ["low","medium","high"]

|

||||||

|

# levels: ["low", "medium", "high"]

|

||||||

# # You may repeat the same alias to build an internal model pool.

|

# # You may repeat the same alias to build an internal model pool.

|

||||||

# # The client still sees only one alias in the model list.

|

# # The client still sees only one alias in the model list.

|

||||||

# # Requests to that alias will round-robin across the upstream names below,

|