Mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git (synced 2026-03-16 14:02:34 +00:00)

Compare commits

61 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | ee0c24628f | | |
| | ddcf1f279d | | |
| | 7e6bb8fdc5 | | |
| | 9cee8ef87b | | |
| | 93fb841bcb | | |
| | 0c05131aeb | | |
| | 5ebc58fab4 | | |
| | 2b609dd891 | | |
| | a8cbc68c3e | | |
| | 89c428216e | | |
| | 2695a99623 | | |
| | ad5253bd2b | | |
| | 9397f7049f | | |
| | a14d19b92c | | |
| | 8ae0c05ea6 | | |
| | 8822f20d17 | | |
| | f0e5a5a367 | | |
| | f6dfea9357 | | |
| | cc8dc7f62c | | |
| | a3846ea513 | | |
| | 8d44be858e | | |
| | 0e6bb076e9 | | |
| | ac135fc7cb | | |
| | 4e1d09809d | | |
| | 9e855f8100 | | |
| | 25680a8259 | | |
| | 13c93e8cfd | | |
| | 88aa1b9fd1 | | |
| | 352cb98ff0 | | |
| | ac95e92829 | | |
| | 8526c2da25 | | |
| | 68a6cabf8b | | |
| | ac0e387da1 | | |
| | 7fe1d102cb | | |
| | 5850492a93 | | |
| | fdbd4041ca | | |
| | ebef1fae2a | | |
| | c51851689b | | |
| | 419bf784ab | | |
| | 4bbeb92e9a | | |
| | b436dad8bc | | |
| | 6ae15d6c44 | | |
| | 0468bde0d6 | | |
| | 1d7329e797 | | |
| | 48ffc4dee7 | | |
| | 7ebd8f0c44 | | |
| | b680c146c1 | | |
| | 7d6660d181 | | |
| | d8e3d4e2b6 | | |
| | d26ad8224d | | |
| | 5c84d69d42 | | |
| | 527e4b7f26 | | |
| | b48485b42b | | |
| | 79009bb3d4 | | |
| | dd44413ba5 | | |
| | 10fa0f2062 | | |
| | 30338ecec4 | | |
| | 9a37defed3 | | |
| | c83a057996 | | |
| | b7588428c5 | | |
| | 2615f489d6 | | |

@@ -10,7 +10,7 @@ The Plus release stays in lockstep with the mainline features.

|

||||

|

||||

## Differences from the Mainline

|

||||

|

||||

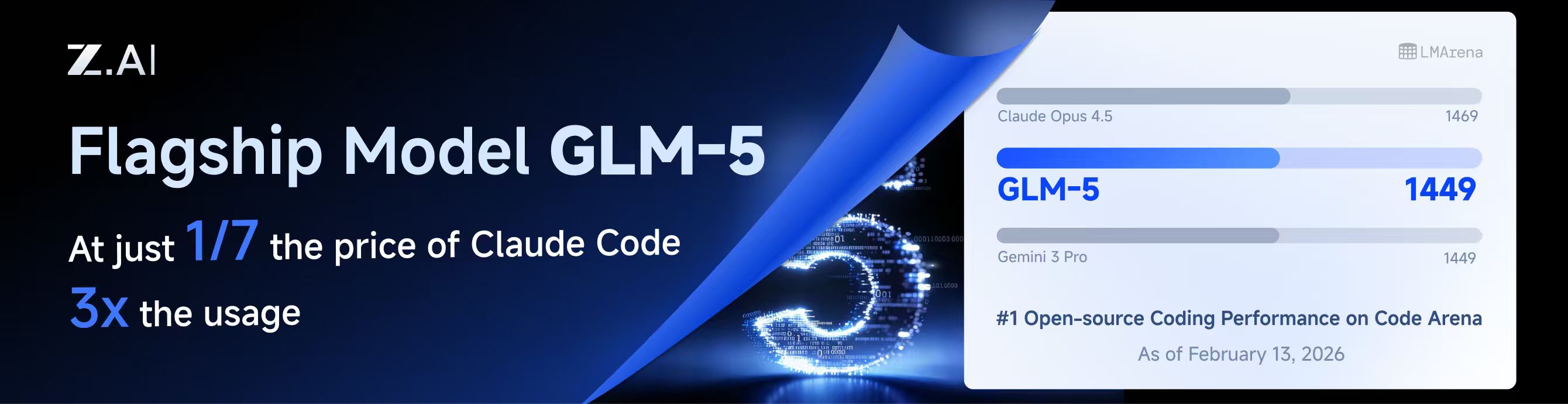

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

|

||||

## New Features (Plus Enhanced)

|

||||

|

||||

|

||||

@@ -10,7 +10,7 @@

 ## Differences from the Mainline Version

-[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)
+[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

 ## New Features (Plus Enhanced)


@@ -233,6 +233,9 @@ nonstream-keepalive-interval: 0
 #     alias: "vertex-flash" # client-visible alias
 #   - name: "gemini-2.5-pro"
 #     alias: "vertex-pro"
+# excluded-models: # optional: models to exclude from listing
+#   - "imagen-3.0-generate-002"
+#   - "imagen-*"

 # Amp Integration
 # ampcode:
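The `excluded-models` entries above mix an exact ID with a wildcard (`imagen-*`). How the proxy actually evaluates these patterns is not shown in this diff, so the following is only a minimal sketch that assumes shell-style glob matching with case-insensitive comparison. The helper name `isExcluded` is hypothetical and is not part of the project.

```go
package main

import (
	"fmt"
	"path"
	"strings"
)

// isExcluded reports whether model matches any exclusion pattern.
// Assumption: patterns are shell-style globs compared case-insensitively;
// the project's real matching rules may differ.
func isExcluded(model string, patterns []string) bool {
	name := strings.ToLower(strings.TrimSpace(model))
	for _, p := range patterns {
		pat := strings.ToLower(strings.TrimSpace(p))
		if pat == "" {
			continue
		}
		// path.Match returns an error only for malformed patterns; skip those.
		if ok, err := path.Match(pat, name); err == nil && ok {
			return true
		}
	}
	return false
}

func main() {
	patterns := []string{"imagen-3.0-generate-002", "imagen-*"}
	for _, m := range []string{"imagen-4.0-generate-001", "gemini-2.5-pro"} {
		fmt.Printf("%s excluded=%v\n", m, isExcluded(m, patterns))
	}
}
```

With a wildcard entry present, the exact `imagen-3.0-generate-002` entry is redundant under this assumed semantics, but listing both is harmless.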

@@ -16,6 +16,7 @@ import (
 	"net/url"
 	"os"
 	"path/filepath"
+	"runtime"
 	"sort"
 	"strconv"
 	"strings"

@@ -698,17 +699,20 @@ func (h *Handler) authIDForPath(path string) string {
 	if path == "" {
 		return ""
 	}
-	if h == nil || h.cfg == nil {
-		return path
+	id := path
+	if h != nil && h.cfg != nil {
+		authDir := strings.TrimSpace(h.cfg.AuthDir)
+		if authDir != "" {
+			if rel, errRel := filepath.Rel(authDir, path); errRel == nil && rel != "" {
+				id = rel
+			}
+		}
 	}
-	authDir := strings.TrimSpace(h.cfg.AuthDir)
-	if authDir == "" {
-		return path
+	// On Windows, normalize ID casing to avoid duplicate auth entries caused by case-insensitive paths.
+	if runtime.GOOS == "windows" {
+		id = strings.ToLower(id)
 	}
-	if rel, err := filepath.Rel(authDir, path); err == nil && rel != "" {
-		return rel
-	}
-	return path
+	return id
 }

 func (h *Handler) registerAuthFromFile(ctx context.Context, path string, data []byte) error {

@@ -516,12 +516,13 @@ func (h *Handler) PutVertexCompatKeys(c *gin.Context) {
 }
 func (h *Handler) PatchVertexCompatKey(c *gin.Context) {
 	type vertexCompatPatch struct {
-		APIKey   *string                     `json:"api-key"`
-		Prefix   *string                     `json:"prefix"`
-		BaseURL  *string                     `json:"base-url"`
-		ProxyURL *string                     `json:"proxy-url"`
-		Headers  *map[string]string          `json:"headers"`
-		Models   *[]config.VertexCompatModel `json:"models"`
+		APIKey         *string                     `json:"api-key"`
+		Prefix         *string                     `json:"prefix"`
+		BaseURL        *string                     `json:"base-url"`
+		ProxyURL       *string                     `json:"proxy-url"`
+		Headers        *map[string]string          `json:"headers"`
+		Models         *[]config.VertexCompatModel `json:"models"`
+		ExcludedModels *[]string                   `json:"excluded-models"`
 	}
 	var body struct {
 		Index *int `json:"index"`

@@ -585,6 +586,9 @@ func (h *Handler) PatchVertexCompatKey(c *gin.Context) {
 	if body.Value.Models != nil {
 		entry.Models = append([]config.VertexCompatModel(nil), (*body.Value.Models)...)
 	}
+	if body.Value.ExcludedModels != nil {
+		entry.ExcludedModels = config.NormalizeExcludedModels(*body.Value.ExcludedModels)
+	}
 	normalizeVertexCompatKey(&entry)
 	h.cfg.VertexCompatAPIKey[targetIndex] = entry
 	h.cfg.SanitizeVertexCompatKeys()
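The PATCH handler above relies on pointer-typed fields so it can tell "field omitted" (leave the stored value alone) from "field sent, possibly empty" (overwrite it). A minimal, self-contained sketch of that pattern follows. The `entry` and `patch` types and the `applyPatch` helper are simplified stand-ins invented for illustration; the real handler also normalizes the list through `config.NormalizeExcludedModels`, which is not reproduced here.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// entry is a simplified stand-in for a stored provider entry.
type entry struct {
	Prefix         string
	ExcludedModels []string
}

// patch uses pointers so an absent JSON key decodes to nil.
type patch struct {
	Prefix         *string   `json:"prefix"`
	ExcludedModels *[]string `json:"excluded-models"`
}

// applyPatch overwrites only the fields that were present in raw.
func applyPatch(e entry, raw []byte) (entry, error) {
	var p patch
	if err := json.Unmarshal(raw, &p); err != nil {
		return e, err
	}
	if p.Prefix != nil {
		e.Prefix = *p.Prefix
	}
	if p.ExcludedModels != nil {
		// Copy so the stored slice does not alias the request body.
		e.ExcludedModels = append([]string(nil), (*p.ExcludedModels)...)
	}
	return e, nil
}

func main() {
	stored := entry{Prefix: "vertex", ExcludedModels: []string{"imagen-*"}}

	// Key omitted: the exclusion list is left untouched.
	a, _ := applyPatch(stored, []byte(`{"prefix":"v2"}`))
	fmt.Println(a.Prefix, len(a.ExcludedModels))

	// Key present but empty: the exclusion list is cleared.
	b, _ := applyPatch(stored, []byte(`{"excluded-models":[]}`))
	fmt.Println(b.Prefix, len(b.ExcludedModels))
}
```

Note that a JSON `null` also decodes to a nil pointer, so under this pattern `"excluded-models": null` behaves like an omitted key rather than clearing the list.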

@@ -1029,6 +1033,7 @@ func normalizeVertexCompatKey(entry *config.VertexCompatKey) {
 	entry.BaseURL = strings.TrimSpace(entry.BaseURL)
 	entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)
 	entry.Headers = config.NormalizeHeaders(entry.Headers)
+	entry.ExcludedModels = config.NormalizeExcludedModels(entry.ExcludedModels)
 	if len(entry.Models) == 0 {
 		return
 	}

@@ -8,6 +8,8 @@ import (
 	"fmt"
 	"io"
 	"net/http"
+	"net/url"
+	"strings"
 	"time"

 	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"

@@ -222,6 +224,97 @@ func (c *CopilotAuth) MakeAuthenticatedRequest(ctx context.Context, method, url
 	return req, nil
 }

+// CopilotModelEntry represents a single model entry returned by the Copilot /models API.
+type CopilotModelEntry struct {
+	ID string `json:"id"`
+	Object string `json:"object"`
+	Created int64 `json:"created"`
+	OwnedBy string `json:"owned_by"`
+	Name string `json:"name,omitempty"`
+	Version string `json:"version,omitempty"`
+	Capabilities map[string]any `json:"capabilities,omitempty"`
+}
+
+// CopilotModelsResponse represents the response from the Copilot /models endpoint.
+type CopilotModelsResponse struct {
+	Data []CopilotModelEntry `json:"data"`
+	Object string `json:"object"`
+}
+
+// maxModelsResponseSize is the maximum allowed response size from the /models endpoint (2 MB).
+const maxModelsResponseSize = 2 * 1024 * 1024
+
+// allowedCopilotAPIHosts is the set of hosts that are considered safe for Copilot API requests.
+var allowedCopilotAPIHosts = map[string]bool{
+	"api.githubcopilot.com": true,
+	"api.individual.githubcopilot.com": true,
+	"api.business.githubcopilot.com": true,
+	"copilot-proxy.githubusercontent.com": true,
+}
+
+// ListModels fetches the list of available models from the Copilot API.
+// It requires a valid Copilot API token (not the GitHub access token).
+func (c *CopilotAuth) ListModels(ctx context.Context, apiToken *CopilotAPIToken) ([]CopilotModelEntry, error) {
+	if apiToken == nil || apiToken.Token == "" {
+		return nil, fmt.Errorf("copilot: api token is required for listing models")
+	}
+
+	// Build models URL, validating the endpoint host to prevent SSRF.
+	modelsURL := copilotAPIEndpoint + "/models"
+	if ep := strings.TrimRight(apiToken.Endpoints.API, "/"); ep != "" {
+		parsed, err := url.Parse(ep)
+		if err == nil && parsed.Scheme == "https" && allowedCopilotAPIHosts[parsed.Host] {
+			modelsURL = ep + "/models"
+		} else {
+			log.Warnf("copilot: ignoring untrusted API endpoint %q, using default", ep)
+		}
+	}
+
+	req, err := c.MakeAuthenticatedRequest(ctx, http.MethodGet, modelsURL, nil, apiToken)
+	if err != nil {
+		return nil, fmt.Errorf("copilot: failed to create models request: %w", err)
+	}
+
+	resp, err := c.httpClient.Do(req)
+	if err != nil {
+		return nil, fmt.Errorf("copilot: models request failed: %w", err)
+	}
+	defer func() {
+		if errClose := resp.Body.Close(); errClose != nil {
+			log.Errorf("copilot list models: close body error: %v", errClose)
+		}
+	}()
+
+	// Limit response body to prevent memory exhaustion.
+	limitedReader := io.LimitReader(resp.Body, maxModelsResponseSize)
+	bodyBytes, err := io.ReadAll(limitedReader)
+	if err != nil {
+		return nil, fmt.Errorf("copilot: failed to read models response: %w", err)
+	}
+
+	if !isHTTPSuccess(resp.StatusCode) {
+		return nil, fmt.Errorf("copilot: list models failed with status %d: %s", resp.StatusCode, string(bodyBytes))
+	}
+
+	var modelsResp CopilotModelsResponse
+	if err = json.Unmarshal(bodyBytes, &modelsResp); err != nil {
+		return nil, fmt.Errorf("copilot: failed to parse models response: %w", err)
+	}
+
+	return modelsResp.Data, nil
+}
+
+// ListModelsWithGitHubToken is a convenience method that exchanges a GitHub access token
+// for a Copilot API token and then fetches the available models.
+func (c *CopilotAuth) ListModelsWithGitHubToken(ctx context.Context, githubAccessToken string) ([]CopilotModelEntry, error) {
+	apiToken, err := c.GetCopilotAPIToken(ctx, githubAccessToken)
+	if err != nil {
+		return nil, fmt.Errorf("copilot: failed to get API token for model listing: %w", err)
+	}
+
+	return c.ListModels(ctx, apiToken)
+}
+
 // buildChatCompletionURL builds the URL for chat completions API.
 func buildChatCompletionURL() string {
 	return copilotAPIEndpoint + "/chat/completions"
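`ListModels` above only trusts a token-supplied endpoint when it is HTTPS and its host is on a fixed allowlist; anything else falls back to the default. The sketch below isolates that check. The function name `modelsURLFor` and the value of `defaultEndpoint` are assumptions made for illustration: the diff references a `copilotAPIEndpoint` constant whose value is not shown.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// defaultEndpoint is an assumed stand-in for the project's copilotAPIEndpoint.
const defaultEndpoint = "https://api.githubcopilot.com"

// allowedHosts mirrors the allowlist from the diff.
var allowedHosts = map[string]bool{
	"api.githubcopilot.com":               true,
	"api.individual.githubcopilot.com":    true,
	"api.business.githubcopilot.com":      true,
	"copilot-proxy.githubusercontent.com": true,
}

// modelsURLFor returns the /models URL for endpoint when it is an HTTPS URL
// on an allowed host, and the default /models URL otherwise.
func modelsURLFor(endpoint string) string {
	ep := strings.TrimRight(endpoint, "/")
	if ep == "" {
		return defaultEndpoint + "/models"
	}
	parsed, err := url.Parse(ep)
	if err != nil || parsed.Scheme != "https" || !allowedHosts[parsed.Host] {
		return defaultEndpoint + "/models"
	}
	return ep + "/models"
}

func main() {
	for _, ep := range []string{
		"https://api.business.githubcopilot.com/",
		"http://api.githubcopilot.com",
		"https://api.githubcopilot.com@evil.example",
	} {
		fmt.Printf("%q -> %s\n", ep, modelsURLFor(ep))
	}
}
```

Because the lookup uses `parsed.Host`, which includes any port, an endpoint such as `https://api.githubcopilot.com:443` would be rejected by this check; comparing `parsed.Hostname()` instead would be the variant to consider if explicit ports need to be accepted.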

@@ -1,5 +1,7 @@
 package config

+import "strings"
+
 // defaultKiroAliases returns default oauth-model-alias entries for Kiro.
 // These aliases expose standard Claude IDs for Kiro-prefixed upstream models.
 func defaultKiroAliases() []OAuthModelAlias {

@@ -35,3 +37,25 @@ func defaultGitHubCopilotAliases() []OAuthModelAlias {
 		{Name: "claude-sonnet-4.6", Alias: "claude-sonnet-4-6", Fork: true},
 	}
 }
+
+// GitHubCopilotAliasesFromModels generates oauth-model-alias entries from a dynamic
+// list of model IDs fetched from the Copilot API. It auto-creates aliases for
+// models whose ID contains a dot (e.g. "claude-opus-4.6" → "claude-opus-4-6"),
+// which is the pattern used by Claude models on Copilot.
+func GitHubCopilotAliasesFromModels(modelIDs []string) []OAuthModelAlias {
+	var aliases []OAuthModelAlias
+	seen := make(map[string]struct{})
+	for _, id := range modelIDs {
+		if !strings.Contains(id, ".") {
+			continue
+		}
+		hyphenID := strings.ReplaceAll(id, ".", "-")
+		key := id + "→" + hyphenID
+		if _, ok := seen[key]; ok {
+			continue
+		}
+		seen[key] = struct{}{}
+		aliases = append(aliases, OAuthModelAlias{Name: id, Alias: hyphenID, Fork: true})
+	}
+	return aliases
+}
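To see what `GitHubCopilotAliasesFromModels` produces for a typical model list, the following self-contained sketch reimplements the same dot-to-hyphen rule. The local `alias` type stands in for `OAuthModelAlias`, and deduplication is keyed on the model ID alone, which is equivalent here because the alias is fully determined by the ID. The sample model IDs are illustrative.

```go
package main

import (
	"fmt"
	"strings"
)

// alias is a local stand-in for the project's OAuthModelAlias type.
type alias struct {
	Name  string
	Alias string
	Fork  bool
}

// aliasesFromModels creates a hyphenated alias for every distinct model ID
// that contains a dot; IDs without a dot are skipped.
func aliasesFromModels(modelIDs []string) []alias {
	var out []alias
	seen := make(map[string]struct{})
	for _, id := range modelIDs {
		if !strings.Contains(id, ".") {
			continue
		}
		if _, ok := seen[id]; ok {
			continue
		}
		seen[id] = struct{}{}
		out = append(out, alias{Name: id, Alias: strings.ReplaceAll(id, ".", "-"), Fork: true})
	}
	return out
}

func main() {
	ids := []string{"claude-opus-4.6", "gpt-4o", "claude-opus-4.6", "gpt-4.1"}
	for _, a := range aliasesFromModels(ids) {
		fmt.Printf("%s -> %s (fork=%v)\n", a.Name, a.Alias, a.Fork)
	}
}
```

The rule is purely syntactic, so any dotted ID gets an alias, including non-Claude models such as `gpt-4.1` in the sample above.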

@@ -34,6 +34,9 @@ type VertexCompatKey struct {

 	// Models defines the model configurations including aliases for routing.
 	Models []VertexCompatModel `yaml:"models,omitempty" json:"models,omitempty"`
+
+	// ExcludedModels lists model IDs that should be excluded for this provider.
+	ExcludedModels []string `yaml:"excluded-models,omitempty" json:"excluded-models,omitempty"`
 }

 func (k VertexCompatKey) GetAPIKey() string { return k.APIKey }

@@ -74,6 +77,7 @@ func (cfg *Config) SanitizeVertexCompatKeys() {
 		}
 		entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)
 		entry.Headers = NormalizeHeaders(entry.Headers)
+		entry.ExcludedModels = NormalizeExcludedModels(entry.ExcludedModels)

 		// Sanitize models: remove entries without valid alias
 		sanitizedModels := make([]VertexCompatModel, 0, len(entry.Models))

@@ -23,7 +23,6 @@ import (
 // - kiro
 // - kilo
 // - github-copilot
-// - kiro
 // - amazonq
 // - antigravity (returns static overrides only)
 func GetStaticModelDefinitionsByChannel(channel string) []*ModelInfo {

@@ -152,6 +151,7 @@ func GetGitHubCopilotModels() []*ModelInfo {
 			Description: "OpenAI GPT-4.1 via GitHub Copilot",
 			ContextLength: 128000,
 			MaxCompletionTokens: 16384,
+			SupportedEndpoints: []string{"/chat/completions", "/responses"},
 		},
 	}


@@ -166,6 +166,7 @@ func GetGitHubCopilotModels() []*ModelInfo {
 			Description: entry.Description,
 			ContextLength: 128000,
 			MaxCompletionTokens: 16384,
+			SupportedEndpoints: []string{"/chat/completions", "/responses"},
 		})
 	}


@@ -220,12 +220,27 @@ func GetGeminiModels() []*ModelInfo {
 			Name: "models/gemini-3-flash-preview",
 			Version: "3.0",
 			DisplayName: "Gemini 3 Flash Preview",
-			Description: "Gemini 3 Flash Preview",
+			Description: "Our most intelligent model built for speed, combining frontier intelligence with superior search and grounding.",
 			InputTokenLimit: 1048576,
 			OutputTokenLimit: 65536,
 			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
 			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}},
 		},
+		{
+			ID: "gemini-3.1-flash-lite-preview",
+			Object: "model",
+			Created: 1776288000,
+			OwnedBy: "google",
+			Type: "gemini",
+			Name: "models/gemini-3.1-flash-lite-preview",
+			Version: "3.1",
+			DisplayName: "Gemini 3.1 Flash Lite Preview",
+			Description: "Our smallest and most cost effective model, built for at scale usage.",
+			InputTokenLimit: 1048576,
+			OutputTokenLimit: 65536,
+			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
+			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
+		},
 		{
 			ID: "gemini-3-pro-image-preview",
 			Object: "model",

@@ -336,6 +351,21 @@ func GetGeminiVertexModels() []*ModelInfo {
 			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
 			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}},
 		},
+		{
+			ID: "gemini-3.1-flash-lite-preview",
+			Object: "model",
+			Created: 1776288000,
+			OwnedBy: "google",
+			Type: "gemini",
+			Name: "models/gemini-3.1-flash-lite-preview",
+			Version: "3.1",
+			DisplayName: "Gemini 3.1 Flash Lite Preview",
+			Description: "Our smallest and most cost effective model, built for at scale usage.",
+			InputTokenLimit: 1048576,
+			OutputTokenLimit: 65536,
+			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
+			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
+		},
 		{
 			ID: "gemini-3-pro-image-preview",
 			Object: "model",

@@ -508,6 +538,21 @@ func GetGeminiCLIModels() []*ModelInfo {
 			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
 			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}},
 		},
+		{
+			ID: "gemini-3.1-flash-lite-preview",
+			Object: "model",
+			Created: 1776288000,
+			OwnedBy: "google",
+			Type: "gemini",
+			Name: "models/gemini-3.1-flash-lite-preview",
+			Version: "3.1",
+			DisplayName: "Gemini 3.1 Flash Lite Preview",
+			Description: "Our smallest and most cost effective model, built for at scale usage.",
+			InputTokenLimit: 1048576,
+			OutputTokenLimit: 65536,
+			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
+			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
+		},
 	}
 }


@@ -604,6 +649,21 @@ func GetAIStudioModels() []*ModelInfo {
 			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
 			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true},
 		},
+		{
+			ID: "gemini-3.1-flash-lite-preview",
+			Object: "model",
+			Created: 1776288000,
+			OwnedBy: "google",
+			Type: "gemini",
+			Name: "models/gemini-3.1-flash-lite-preview",
+			Version: "3.1",
+			DisplayName: "Gemini 3.1 Flash Lite Preview",
+			Description: "Our smallest and most cost effective model, built for at scale usage.",
+			InputTokenLimit: 1048576,
+			OutputTokenLimit: 65536,
+			SupportedGenerationMethods: []string{"generateContent", "countTokens", "createCachedContent", "batchGenerateContent"},
+			Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}},
+		},
 		{
 			ID: "gemini-pro-latest",
 			Object: "model",

@@ -839,6 +899,20 @@ func GetOpenAIModels() []*ModelInfo {
 			SupportedParameters: []string{"tools"},
 			Thinking: &ThinkingSupport{Levels: []string{"low", "medium", "high", "xhigh"}},
 		},
+		{
+			ID: "gpt-5.4",
+			Object: "model",
+			Created: 1772668800,
+			OwnedBy: "openai",
+			Type: "openai",
+			Version: "gpt-5.4",
+			DisplayName: "GPT 5.4",
+			Description: "Stable version of GPT 5.4",
+			ContextLength: 1_050_000,
+			MaxCompletionTokens: 128000,
+			SupportedParameters: []string{"tools"},
+			Thinking: &ThinkingSupport{Levels: []string{"low", "medium", "high", "xhigh"}},
+		},
 	}
 }


@@ -966,6 +1040,7 @@ func GetAntigravityModelConfig() map[string]*AntigravityModelConfig {
 		"gemini-3.1-pro-high": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},
 		"gemini-3.1-pro-low": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"low", "high"}}},
 		"gemini-3.1-flash-image": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},
+		"gemini-3.1-flash-lite-preview": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "high"}}},
 		"gemini-3-flash": {Thinking: &ThinkingSupport{Min: 128, Max: 32768, ZeroAllowed: false, DynamicAllowed: true, Levels: []string{"minimal", "low", "medium", "high"}}},
 		"claude-opus-4-6-thinking": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},
 		"claude-sonnet-4-6": {Thinking: &ThinkingSupport{Min: 1024, Max: 64000, ZeroAllowed: true, DynamicAllowed: true}, MaxCompletionTokens: 64000},

@@ -59,6 +59,7 @@ func buildRequestBodyFromPayload(t *testing.T, modelName string) map[string]any
 	"properties": {
 		"mode": {
 			"type": "string",
+			"deprecated": true,
 			"enum": ["a", "b"],
 			"enumTitles": ["A", "B"]
 		}

@@ -156,4 +157,7 @@ func assertSchemaSanitizedAndPropertyPreserved(t *testing.T, params map[string]a
 	if _, ok := mode["enumTitles"]; ok {
 		t.Fatalf("enumTitles should be removed from nested schema")
 	}
+	if _, ok := mode["deprecated"]; ok {
+		t.Fatalf("deprecated should be removed from nested schema")
+	}
 }

@@ -187,17 +187,15 @@ func (e *ClaudeExecutor) Execute(ctx context.Context, auth *cliproxyauth.Auth, r
 	}
 	recordAPIResponseMetadata(ctx, e.cfg, httpResp.StatusCode, httpResp.Header.Clone())
 	if httpResp.StatusCode < 200 || httpResp.StatusCode >= 300 {
-		// Decompress error responses (e.g. gzip-compressed 400 errors from Anthropic API).
-		errBody := httpResp.Body
-		if ce := httpResp.Header.Get("Content-Encoding"); ce != "" {
-			var decErr error
-			errBody, decErr = decodeResponseBody(httpResp.Body, ce)
-			if decErr != nil {
-				recordAPIResponseError(ctx, e.cfg, decErr)
-				msg := fmt.Sprintf("failed to decode error response body (encoding=%s): %v", ce, decErr)
-				logWithRequestID(ctx).Warn(msg)
-				return resp, statusErr{code: httpResp.StatusCode, msg: msg}
-			}
+		// Decompress error responses — pass the Content-Encoding value (may be empty)
+		// and let decodeResponseBody handle both header-declared and magic-byte-detected
+		// compression. This keeps error-path behaviour consistent with the success path.
+		errBody, decErr := decodeResponseBody(httpResp.Body, httpResp.Header.Get("Content-Encoding"))
+		if decErr != nil {
+			recordAPIResponseError(ctx, e.cfg, decErr)
+			msg := fmt.Sprintf("failed to decode error response body: %v", decErr)
+			logWithRequestID(ctx).Warn(msg)
+			return resp, statusErr{code: httpResp.StatusCode, msg: msg}
 		}
 		b, readErr := io.ReadAll(errBody)
 		if readErr != nil {

@@ -352,17 +350,15 @@ func (e *ClaudeExecutor) ExecuteStream(ctx context.Context, auth *cliproxyauth.A
 	}
 	recordAPIResponseMetadata(ctx, e.cfg, httpResp.StatusCode, httpResp.Header.Clone())
 	if httpResp.StatusCode < 200 || httpResp.StatusCode >= 300 {
-		// Decompress error responses (e.g. gzip-compressed 400 errors from Anthropic API).
-		errBody := httpResp.Body
-		if ce := httpResp.Header.Get("Content-Encoding"); ce != "" {
-			var decErr error
-			errBody, decErr = decodeResponseBody(httpResp.Body, ce)
-			if decErr != nil {
-				recordAPIResponseError(ctx, e.cfg, decErr)
-				msg := fmt.Sprintf("failed to decode error response body (encoding=%s): %v", ce, decErr)
-				logWithRequestID(ctx).Warn(msg)
-				return nil, statusErr{code: httpResp.StatusCode, msg: msg}
-			}
+		// Decompress error responses — pass the Content-Encoding value (may be empty)
+		// and let decodeResponseBody handle both header-declared and magic-byte-detected
+		// compression. This keeps error-path behaviour consistent with the success path.
+		errBody, decErr := decodeResponseBody(httpResp.Body, httpResp.Header.Get("Content-Encoding"))
+		if decErr != nil {
+			recordAPIResponseError(ctx, e.cfg, decErr)
+			msg := fmt.Sprintf("failed to decode error response body: %v", decErr)
+			logWithRequestID(ctx).Warn(msg)
+			return nil, statusErr{code: httpResp.StatusCode, msg: msg}
 		}
 		b, readErr := io.ReadAll(errBody)
 		if readErr != nil {

@@ -521,17 +517,15 @@ func (e *ClaudeExecutor) CountTokens(ctx context.Context, auth *cliproxyauth.Aut
 	}
 	recordAPIResponseMetadata(ctx, e.cfg, resp.StatusCode, resp.Header.Clone())
 	if resp.StatusCode < 200 || resp.StatusCode >= 300 {
-		// Decompress error responses (e.g. gzip-compressed 400 errors from Anthropic API).
-		errBody := resp.Body
-		if ce := resp.Header.Get("Content-Encoding"); ce != "" {
-			var decErr error
-			errBody, decErr = decodeResponseBody(resp.Body, ce)
-			if decErr != nil {
-				recordAPIResponseError(ctx, e.cfg, decErr)
-				msg := fmt.Sprintf("failed to decode error response body (encoding=%s): %v", ce, decErr)
-				logWithRequestID(ctx).Warn(msg)
-				return cliproxyexecutor.Response{}, statusErr{code: resp.StatusCode, msg: msg}
-			}
+		// Decompress error responses — pass the Content-Encoding value (may be empty)
+		// and let decodeResponseBody handle both header-declared and magic-byte-detected
+		// compression. This keeps error-path behaviour consistent with the success path.
+		errBody, decErr := decodeResponseBody(resp.Body, resp.Header.Get("Content-Encoding"))
+		if decErr != nil {
+			recordAPIResponseError(ctx, e.cfg, decErr)
+			msg := fmt.Sprintf("failed to decode error response body: %v", decErr)
+			logWithRequestID(ctx).Warn(msg)
+			return cliproxyexecutor.Response{}, statusErr{code: resp.StatusCode, msg: msg}
 		}
 		b, readErr := io.ReadAll(errBody)
 		if readErr != nil {

@@ -662,12 +656,61 @@ func (c *compositeReadCloser) Close() error {
 	return firstErr
 }

+// peekableBody wraps a bufio.Reader around the original ReadCloser so that
+// magic bytes can be inspected without consuming them from the stream.
+type peekableBody struct {
+	*bufio.Reader
+	closer io.Closer
+}
+
+func (p *peekableBody) Close() error {
+	return p.closer.Close()
+}
+
 func decodeResponseBody(body io.ReadCloser, contentEncoding string) (io.ReadCloser, error) {
 	if body == nil {
 		return nil, fmt.Errorf("response body is nil")
 	}
 	if contentEncoding == "" {
-		return body, nil
+		// No Content-Encoding header. Attempt best-effort magic-byte detection to
+		// handle misbehaving upstreams that compress without setting the header.
+		// Only gzip (1f 8b) and zstd (28 b5 2f fd) have reliable magic sequences;
+		// br and deflate have none and are left as-is.
+		// The bufio wrapper preserves unread bytes so callers always see the full
+		// stream regardless of whether decompression was applied.
+		pb := &peekableBody{Reader: bufio.NewReader(body), closer: body}
+		magic, peekErr := pb.Peek(4)
+		if peekErr == nil || (peekErr == io.EOF && len(magic) >= 2) {
+			switch {
+			case len(magic) >= 2 && magic[0] == 0x1f && magic[1] == 0x8b:
+				gzipReader, gzErr := gzip.NewReader(pb)
+				if gzErr != nil {
+					_ = pb.Close()
+					return nil, fmt.Errorf("magic-byte gzip: failed to create reader: %w", gzErr)
+				}
+				return &compositeReadCloser{
+					Reader: gzipReader,
+					closers: []func() error{
+						gzipReader.Close,
+						pb.Close,
+					},
+				}, nil
+			case len(magic) >= 4 && magic[0] == 0x28 && magic[1] == 0xb5 && magic[2] == 0x2f && magic[3] == 0xfd:
+				decoder, zdErr := zstd.NewReader(pb)
+				if zdErr != nil {
+					_ = pb.Close()
+					return nil, fmt.Errorf("magic-byte zstd: failed to create reader: %w", zdErr)
+				}
+				return &compositeReadCloser{
+					Reader: decoder,
+					closers: []func() error{
+						func() error { decoder.Close(); return nil },
+						pb.Close,
+					},
+				}, nil
+			}
+		}
+		return pb, nil
 	}
 	encodings := strings.Split(contentEncoding, ",")
 	for _, raw := range encodings {
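The key idea in the `decodeResponseBody` change is that `bufio.Reader.Peek` lets the code look at the leading bytes without consuming them, so the same reader can then be handed either to a decompressor or straight to the caller. Here is a reduced sketch of that technique covering only the gzip case and using only the standard library. The names `sniffGzip`, `gzipBytes` and `decodeToString` are illustrative helpers, and zstd detection and close handling from the real function are deliberately left out.

```go
package main

import (
	"bufio"
	"bytes"
	"compress/gzip"
	"fmt"
	"io"
)

// sniffGzip wraps r in a bufio.Reader, peeks at the first two bytes without
// consuming them, and returns a gzip reader only when the gzip magic
// sequence (1f 8b) is present. Otherwise the buffered reader is returned so
// the caller still sees the complete stream.
func sniffGzip(r io.Reader) (io.Reader, error) {
	br := bufio.NewReader(r)
	magic, err := br.Peek(2)
	if err != nil {
		// Peek failed (short body or read error): skip sniffing and pass
		// the buffered data through unchanged.
		return br, nil
	}
	if magic[0] == 0x1f && magic[1] == 0x8b {
		zr, zErr := gzip.NewReader(br)
		if zErr != nil {
			return nil, fmt.Errorf("gzip magic found but header is invalid: %w", zErr)
		}
		return zr, nil
	}
	return br, nil
}

// gzipBytes compresses s; it exists only to build sample input.
func gzipBytes(s string) []byte {
	var buf bytes.Buffer
	zw := gzip.NewWriter(&buf)
	_, _ = zw.Write([]byte(s))
	_ = zw.Close()
	return buf.Bytes()
}

// decodeToString runs sniffGzip over b and returns the decoded text.
func decodeToString(b []byte) (string, error) {
	r, err := sniffGzip(bytes.NewReader(b))
	if err != nil {
		return "", err
	}
	out, err := io.ReadAll(r)
	return string(out), err
}

func main() {
	inputs := [][]byte{
		gzipBytes(`{"error":"compressed without a header"}`),
		[]byte(`{"error":"plain"}`),
	}
	for _, in := range inputs {
		s, err := decodeToString(in)
		fmt.Println(s, err)
	}
}
```

Sniffing is best-effort: a plain body that happens to start with `1f 8b` would be misread as gzip, which is why the diff applies it only when no `Content-Encoding` header is present.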

@@ -844,11 +887,15 @@ func applyClaudeHeaders(r *http.Request, auth *cliproxyauth.Auth, apiKey string,
 		r.Header.Set("User-Agent", hdrDefault(hd.UserAgent, "claude-cli/2.1.63 (external, cli)"))
 	}
 	r.Header.Set("Connection", "keep-alive")
-	r.Header.Set("Accept-Encoding", "gzip, deflate, br, zstd")
 	if stream {
 		r.Header.Set("Accept", "text/event-stream")
+		// SSE streams must not be compressed: the downstream scanner reads
+		// line-delimited text and cannot parse compressed bytes. Using
+		// "identity" tells the upstream to send an uncompressed stream.
+		r.Header.Set("Accept-Encoding", "identity")
 	} else {
 		r.Header.Set("Accept", "application/json")
+		r.Header.Set("Accept-Encoding", "gzip, deflate, br, zstd")
 	}
 	// Keep OS/Arch mapping dynamic (not configurable).
 	// They intentionally continue to derive from runtime.GOOS/runtime.GOARCH.

@@ -857,6 +904,12 @@ func applyClaudeHeaders(r *http.Request, auth *cliproxyauth.Auth, apiKey string,
 		attrs = auth.Attributes
 	}
 	util.ApplyCustomHeadersFromAttrs(r, attrs)
+	// Re-enforce Accept-Encoding: identity after ApplyCustomHeadersFromAttrs, which
+	// may override it with a user-configured value. Compressed SSE breaks the line
+	// scanner regardless of user preference, so this is non-negotiable for streams.
+	if stream {
+		r.Header.Set("Accept-Encoding", "identity")
+	}
 }

 func claudeCreds(a *cliproxyauth.Auth) (apiKey, baseURL string) {
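The two `applyClaudeHeaders` hunks set `Accept-Encoding: identity` for streams twice: once with the defaults and once more after user-configured headers are applied, so a custom value cannot switch compression back on for SSE. The ordering can be sketched in isolation as below. `applyHeaders` and `effectiveEncoding` are hypothetical helpers, the custom-header map stands in for `util.ApplyCustomHeadersFromAttrs`, and the request URL is a placeholder.

```go
package main

import (
	"fmt"
	"net/http"
)

// applyHeaders sets defaults, then custom overrides, then re-enforces
// identity encoding for streaming requests.
func applyHeaders(r *http.Request, stream bool, custom map[string]string) {
	if stream {
		r.Header.Set("Accept", "text/event-stream")
		r.Header.Set("Accept-Encoding", "identity")
	} else {
		r.Header.Set("Accept", "application/json")
		r.Header.Set("Accept-Encoding", "gzip, deflate, br, zstd")
	}
	// User-configured headers are applied last and may overwrite the defaults.
	for k, v := range custom {
		r.Header.Set(k, v)
	}
	// For streams, force identity again so a custom value cannot re-enable
	// compression.
	if stream {
		r.Header.Set("Accept-Encoding", "identity")
	}
}

// effectiveEncoding builds a throwaway request and reports the
// Accept-Encoding value that would be sent.
func effectiveEncoding(stream bool, custom map[string]string) string {
	r, err := http.NewRequest(http.MethodPost, "https://example.invalid/v1/messages", nil)
	if err != nil {
		return ""
	}
	applyHeaders(r, stream, custom)
	return r.Header.Get("Accept-Encoding")
}

func main() {
	custom := map[string]string{"Accept-Encoding": "gzip"}
	fmt.Println("stream:    ", effectiveEncoding(true, custom))
	fmt.Println("non-stream:", effectiveEncoding(false, custom))
}
```

Non-streaming requests still honour the custom value in this sketch, since compressed JSON bodies can be decoded before they are parsed.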

@@ -2,6 +2,7 @@ package executor

 import (
 	"bytes"
+	"compress/gzip"
 	"context"
 	"io"
 	"net/http"

@@ -9,6 +10,7 @@ import (
 	"strings"
 	"testing"

+	"github.com/klauspost/compress/zstd"
 	"github.com/router-for-me/CLIProxyAPI/v6/internal/config"
 	cliproxyauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"
 	cliproxyexecutor "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/executor"

@@ -583,3 +585,385 @@ func testClaudeExecutorInvalidCompressedErrorBody(
 		t.Fatalf("expected status code 400, got: %v", err)
 	}
 }
+
+// TestClaudeExecutor_ExecuteStream_SetsIdentityAcceptEncoding verifies that streaming
+// requests use Accept-Encoding: identity so the upstream cannot respond with a
+// compressed SSE body that would silently break the line scanner.
+func TestClaudeExecutor_ExecuteStream_SetsIdentityAcceptEncoding(t *testing.T) {
+	var gotEncoding, gotAccept string
+	server := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
+		gotEncoding = r.Header.Get("Accept-Encoding")
+		gotAccept = r.Header.Get("Accept")
+		w.Header().Set("Content-Type", "text/event-stream")
+		_, _ = w.Write([]byte("data: {\"type\":\"message_stop\"}\n\n"))
+	}))
+	defer server.Close()
+
+	executor := NewClaudeExecutor(&config.Config{})
+	auth := &cliproxyauth.Auth{Attributes: map[string]string{
+		"api_key": "key-123",
+		"base_url": server.URL,
+	}}
+	payload := []byte(`{"messages":[{"role":"user","content":[{"type":"text","text":"hi"}]}]}`)
+
+	result, err := executor.ExecuteStream(context.Background(), auth, cliproxyexecutor.Request{
+		Model: "claude-3-5-sonnet-20241022",
+		Payload: payload,
+	}, cliproxyexecutor.Options{
+		SourceFormat: sdktranslator.FromString("claude"),
+	})
+	if err != nil {
+		t.Fatalf("ExecuteStream error: %v", err)
+	}
+	for chunk := range result.Chunks {
+		if chunk.Err != nil {
+			t.Fatalf("unexpected chunk error: %v", chunk.Err)
+		}
+	}
+
+	if gotEncoding != "identity" {
+		t.Errorf("Accept-Encoding = %q, want %q", gotEncoding, "identity")
+	}
+	if gotAccept != "text/event-stream" {
+		t.Errorf("Accept = %q, want %q", gotAccept, "text/event-stream")
+	}
+}
+
+// TestClaudeExecutor_Execute_SetsCompressedAcceptEncoding verifies that non-streaming
+// requests keep the full accept-encoding to allow response compression (which
+// decodeResponseBody handles correctly).
+func TestClaudeExecutor_Execute_SetsCompressedAcceptEncoding(t *testing.T) {
+	var gotEncoding, gotAccept string
+	server := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
+		gotEncoding = r.Header.Get("Accept-Encoding")
+		gotAccept = r.Header.Get("Accept")

|

||||

w.Header().Set("Content-Type", "application/json")

|

||||

_, _ = w.Write([]byte(`{"id":"msg_1","type":"message","model":"claude-3-5-sonnet-20241022","role":"assistant","content":[{"type":"text","text":"hi"}],"usage":{"input_tokens":1,"output_tokens":1}}`))

|

||||

}))

|

||||

defer server.Close()

|

||||

|

||||

executor := NewClaudeExecutor(&config.Config{})

|

||||

auth := &cliproxyauth.Auth{Attributes: map[string]string{

|

||||

"api_key": "key-123",

|

||||

"base_url": server.URL,

|

||||

}}

|

||||

payload := []byte(`{"messages":[{"role":"user","content":[{"type":"text","text":"hi"}]}]}`)

|

||||

|

||||

_, err := executor.Execute(context.Background(), auth, cliproxyexecutor.Request{

|

||||

Model: "claude-3-5-sonnet-20241022",

|

||||

Payload: payload,

|

||||

}, cliproxyexecutor.Options{

|

||||

SourceFormat: sdktranslator.FromString("claude"),

|

||||

})

|

||||

if err != nil {

|

||||

t.Fatalf("Execute error: %v", err)

|

||||

}

|

||||

|

||||

if gotEncoding != "gzip, deflate, br, zstd" {

|

||||

t.Errorf("Accept-Encoding = %q, want %q", gotEncoding, "gzip, deflate, br, zstd")

|

||||

}

|

||||

if gotAccept != "application/json" {

|

||||

t.Errorf("Accept = %q, want %q", gotAccept, "application/json")

|

||||

}

|

||||

}

|

||||

|

||||

// TestClaudeExecutor_ExecuteStream_GzipSuccessBodyDecoded verifies that a streaming

|

||||

// HTTP 200 response with Content-Encoding: gzip is correctly decompressed before

|

||||

// the line scanner runs, so SSE chunks are not silently dropped.

|

||||

func TestClaudeExecutor_ExecuteStream_GzipSuccessBodyDecoded(t *testing.T) {

|

||||

var buf bytes.Buffer

|

||||

gz := gzip.NewWriter(&buf)

|

||||

_, _ = gz.Write([]byte("data: {\"type\":\"message_stop\"}\n"))

|

||||

_ = gz.Close()

|

||||

compressedBody := buf.Bytes()

|

||||

|

||||

server := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

w.Header().Set("Content-Type", "text/event-stream")

|

||||

w.Header().Set("Content-Encoding", "gzip")

|

||||

_, _ = w.Write(compressedBody)

|

||||

}))

|

||||

defer server.Close()

|

||||

|

||||

executor := NewClaudeExecutor(&config.Config{})

|

||||

auth := &cliproxyauth.Auth{Attributes: map[string]string{

|

||||

"api_key": "key-123",

|

||||

"base_url": server.URL,

|

||||

}}

|

||||

payload := []byte(`{"messages":[{"role":"user","content":[{"type":"text","text":"hi"}]}]}`)

|

||||

|

||||

result, err := executor.ExecuteStream(context.Background(), auth, cliproxyexecutor.Request{

|

||||

Model: "claude-3-5-sonnet-20241022",

|

||||

Payload: payload,

|

||||

}, cliproxyexecutor.Options{

|

||||

SourceFormat: sdktranslator.FromString("claude"),

|

||||

})

|

||||

if err != nil {

|

||||

t.Fatalf("ExecuteStream error: %v", err)

|

||||

}

|

||||

|

||||

var combined strings.Builder

|

||||

for chunk := range result.Chunks {

|

||||

if chunk.Err != nil {

|

||||

t.Fatalf("chunk error: %v", chunk.Err)

|

||||

}

|

||||

combined.Write(chunk.Payload)

|

||||

}

|

||||

|

||||

if combined.Len() == 0 {

|

||||

t.Fatal("expected at least one chunk from gzip-encoded SSE body, got none (body was not decompressed)")

|

||||

}

|

||||

if !strings.Contains(combined.String(), "message_stop") {

|

||||

t.Errorf("expected SSE content in chunks, got: %q", combined.String())

|

||||

}

|

||||

}

|

||||

|

||||

// TestDecodeResponseBody_MagicByteGzipNoHeader verifies that decodeResponseBody

|

||||

// detects gzip-compressed content via magic bytes even when Content-Encoding is absent.

|

||||

func TestDecodeResponseBody_MagicByteGzipNoHeader(t *testing.T) {

|

||||

const plaintext = "data: {\"type\":\"message_stop\"}\n"

|

||||

|

||||

var buf bytes.Buffer

|

||||

gz := gzip.NewWriter(&buf)

|

||||

_, _ = gz.Write([]byte(plaintext))

|

||||

_ = gz.Close()

|

||||

|

||||

rc := io.NopCloser(&buf)

|

||||

decoded, err := decodeResponseBody(rc, "")

|

||||

if err != nil {

|

||||

t.Fatalf("decodeResponseBody error: %v", err)

|

||||

}

|

||||

defer decoded.Close()

|

||||

|

||||

got, err := io.ReadAll(decoded)

|

||||

if err != nil {

|

||||

t.Fatalf("ReadAll error: %v", err)

|

||||

}

|

||||

if string(got) != plaintext {

|

||||

t.Errorf("decoded = %q, want %q", got, plaintext)

|

||||

}

|

||||

}

|

||||

|

||||

// TestDecodeResponseBody_PlainTextNoHeader verifies that decodeResponseBody returns

|

||||

// plain text untouched when Content-Encoding is absent and no magic bytes match.

|

||||

func TestDecodeResponseBody_PlainTextNoHeader(t *testing.T) {

|

||||

const plaintext = "data: {\"type\":\"message_stop\"}\n"

|

||||

rc := io.NopCloser(strings.NewReader(plaintext))

|

||||

decoded, err := decodeResponseBody(rc, "")

|

||||

if err != nil {

|

||||

t.Fatalf("decodeResponseBody error: %v", err)

|

||||

}

|

||||

defer decoded.Close()

|

||||

|

||||

got, err := io.ReadAll(decoded)

|

||||

if err != nil {

|

||||

t.Fatalf("ReadAll error: %v", err)

|

||||

}

|

||||

if string(got) != plaintext {

|

||||

t.Errorf("decoded = %q, want %q", got, plaintext)

|

||||

}

|

||||

}

|

||||

|

||||

// TestClaudeExecutor_ExecuteStream_GzipNoContentEncodingHeader verifies the full

|

||||

// pipeline: when the upstream returns a gzip-compressed SSE body WITHOUT setting

|

||||

// Content-Encoding (a misbehaving upstream), the magic-byte sniff in

|

||||

// decodeResponseBody still decompresses it, so chunks reach the caller.

|

||||

func TestClaudeExecutor_ExecuteStream_GzipNoContentEncodingHeader(t *testing.T) {

|

||||

var buf bytes.Buffer

|

||||

gz := gzip.NewWriter(&buf)

|

||||

_, _ = gz.Write([]byte("data: {\"type\":\"message_stop\"}\n"))

|

||||

_ = gz.Close()

|

||||

compressedBody := buf.Bytes()

|

||||

|

||||

server := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

w.Header().Set("Content-Type", "text/event-stream")

|

||||

// Intentionally omit Content-Encoding to simulate misbehaving upstream.

|

||||

_, _ = w.Write(compressedBody)

|

||||

}))

|

||||

defer server.Close()

|

||||

|

||||

executor := NewClaudeExecutor(&config.Config{})

|

||||

auth := &cliproxyauth.Auth{Attributes: map[string]string{

|

||||

"api_key": "key-123",

|

||||

"base_url": server.URL,

|

||||

}}

|

||||

payload := []byte(`{"messages":[{"role":"user","content":[{"type":"text","text":"hi"}]}]}`)

|

||||

|

||||

result, err := executor.ExecuteStream(context.Background(), auth, cliproxyexecutor.Request{

|

||||

Model: "claude-3-5-sonnet-20241022",

|

||||

Payload: payload,

|

||||

}, cliproxyexecutor.Options{

|

||||

SourceFormat: sdktranslator.FromString("claude"),

|

||||

})

|

||||

if err != nil {

|

||||

t.Fatalf("ExecuteStream error: %v", err)

|

||||

}

|

||||

|

||||

var combined strings.Builder

|

||||

for chunk := range result.Chunks {

|

||||

if chunk.Err != nil {

|

||||

t.Fatalf("chunk error: %v", chunk.Err)

|

||||

}

|

||||

combined.Write(chunk.Payload)

|

||||

}

|

||||

|

||||

if combined.Len() == 0 {

|

||||

t.Fatal("expected chunks from gzip body without Content-Encoding header, got none (magic-byte sniff failed)")

|

||||

}

|

||||

if !strings.Contains(combined.String(), "message_stop") {

|

||||

t.Errorf("unexpected chunk content: %q", combined.String())

|

||||

}

|

||||

}

|

||||

|

||||

// TestClaudeExecutor_ExecuteStream_AcceptEncodingOverrideCannotBypassIdentity verifies

|

||||

// that injecting Accept-Encoding via auth.Attributes cannot override the stream

|

||||

// path's enforced identity encoding.

|

||||

func TestClaudeExecutor_ExecuteStream_AcceptEncodingOverrideCannotBypassIdentity(t *testing.T) {

|

||||

var gotEncoding string

|

||||

server := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

gotEncoding = r.Header.Get("Accept-Encoding")

|

||||

w.Header().Set("Content-Type", "text/event-stream")

|

||||

_, _ = w.Write([]byte("data: {\"type\":\"message_stop\"}\n\n"))

|

||||

}))

|

||||

defer server.Close()

|

||||

|

||||

executor := NewClaudeExecutor(&config.Config{})

|

||||

// Inject Accept-Encoding via the custom header attribute mechanism.

|

||||

auth := &cliproxyauth.Auth{Attributes: map[string]string{

|

||||

"api_key": "key-123",

|

||||

"base_url": server.URL,

|

||||

"header:Accept-Encoding": "gzip, deflate, br, zstd",

|

||||

}}

|

||||

payload := []byte(`{"messages":[{"role":"user","content":[{"type":"text","text":"hi"}]}]}`)

|

||||

|

||||

result, err := executor.ExecuteStream(context.Background(), auth, cliproxyexecutor.Request{

|

||||

Model: "claude-3-5-sonnet-20241022",

|

||||

Payload: payload,

|

||||

}, cliproxyexecutor.Options{

|

||||

SourceFormat: sdktranslator.FromString("claude"),

|

||||

})

|

||||

if err != nil {

|

||||

t.Fatalf("ExecuteStream error: %v", err)

|

||||

}

|

||||

for chunk := range result.Chunks {

|

||||

if chunk.Err != nil {

|

||||

t.Fatalf("unexpected chunk error: %v", chunk.Err)

|

||||

}

|

||||

}

|

||||

|

||||

if gotEncoding != "identity" {

|

||||

t.Errorf("Accept-Encoding = %q; stream path must enforce identity regardless of auth.Attributes override", gotEncoding)

|

||||

}

|

||||

}

|

||||

|

||||

// TestDecodeResponseBody_MagicByteZstdNoHeader verifies that decodeResponseBody

|

||||

// detects zstd-compressed content via magic bytes (28 b5 2f fd) even when

|

||||

// Content-Encoding is absent.

|

||||

func TestDecodeResponseBody_MagicByteZstdNoHeader(t *testing.T) {

|

||||

const plaintext = "data: {\"type\":\"message_stop\"}\n"

|

||||

|

||||

var buf bytes.Buffer

|

||||

enc, err := zstd.NewWriter(&buf)

|

||||

if err != nil {

|

||||

t.Fatalf("zstd.NewWriter: %v", err)

|

||||

}

|

||||

_, _ = enc.Write([]byte(plaintext))

|

||||

_ = enc.Close()

|

||||

|

||||

rc := io.NopCloser(&buf)

|

||||

decoded, err := decodeResponseBody(rc, "")

|

||||

if err != nil {

|

||||

t.Fatalf("decodeResponseBody error: %v", err)

|

||||

}

|

||||

defer decoded.Close()

|

||||

|

||||

got, err := io.ReadAll(decoded)

|

||||

if err != nil {

|

||||

t.Fatalf("ReadAll error: %v", err)

|

||||

}

|

||||

if string(got) != plaintext {

|

||||

t.Errorf("decoded = %q, want %q", got, plaintext)

|

||||

}

|

||||

}

|

||||

|

||||

// TestClaudeExecutor_Execute_GzipErrorBodyNoContentEncodingHeader verifies that the

|

||||

// error path (4xx) correctly decompresses a gzip body even when the upstream omits

|

||||

// the Content-Encoding header. This closes the gap left by PR #1771, which only

|

||||

// fixed header-declared compression on the error path.

|

||||

func TestClaudeExecutor_Execute_GzipErrorBodyNoContentEncodingHeader(t *testing.T) {

|

||||

const errJSON = `{"type":"error","error":{"type":"invalid_request_error","message":"test error"}}`

|

||||

|

||||

var buf bytes.Buffer

|

||||

gz := gzip.NewWriter(&buf)

|

||||

_, _ = gz.Write([]byte(errJSON))

|

||||

_ = gz.Close()

|

||||

compressedBody := buf.Bytes()

|

||||

|

||||

server := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

w.Header().Set("Content-Type", "application/json")

|

||||

// Intentionally omit Content-Encoding to simulate misbehaving upstream.

|

||||

w.WriteHeader(http.StatusBadRequest)

|

||||

_, _ = w.Write(compressedBody)

|

||||

}))

|

||||

defer server.Close()

|

||||

|

||||

executor := NewClaudeExecutor(&config.Config{})

|

||||

auth := &cliproxyauth.Auth{Attributes: map[string]string{

|

||||

"api_key": "key-123",

|

||||

"base_url": server.URL,

|

||||

}}

|

||||

payload := []byte(`{"messages":[{"role":"user","content":[{"type":"text","text":"hi"}]}]}`)

|

||||

|

||||

_, err := executor.Execute(context.Background(), auth, cliproxyexecutor.Request{

|

||||

Model: "claude-3-5-sonnet-20241022",

|

||||

Payload: payload,

|

||||

}, cliproxyexecutor.Options{

|

||||

SourceFormat: sdktranslator.FromString("claude"),

|

||||

})

|

||||

if err == nil {

|

||||

t.Fatal("expected an error for 400 response, got nil")

|

||||

}

|

||||

if !strings.Contains(err.Error(), "test error") {

|

||||

t.Errorf("error message should contain decompressed JSON, got: %q", err.Error())

|

||||

}

|

||||

}

|

||||

|

||||

// TestClaudeExecutor_ExecuteStream_GzipErrorBodyNoContentEncodingHeader verifies

|

||||

// the same for the streaming executor: 4xx gzip body without Content-Encoding is

|

||||

// decoded and the error message is readable.

|

||||

func TestClaudeExecutor_ExecuteStream_GzipErrorBodyNoContentEncodingHeader(t *testing.T) {

|

||||

const errJSON = `{"type":"error","error":{"type":"invalid_request_error","message":"stream test error"}}`

|

||||

|

||||

var buf bytes.Buffer

|

||||

gz := gzip.NewWriter(&buf)

|

||||

_, _ = gz.Write([]byte(errJSON))

|

||||

_ = gz.Close()

|

||||

compressedBody := buf.Bytes()

|

||||

|

||||

server := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

w.Header().Set("Content-Type", "application/json")

|

||||

// Intentionally omit Content-Encoding to simulate misbehaving upstream.

|

||||

w.WriteHeader(http.StatusBadRequest)

|

||||

_, _ = w.Write(compressedBody)

|

||||

}))

|

||||

defer server.Close()

|

||||

|

||||

executor := NewClaudeExecutor(&config.Config{})

|

||||

auth := &cliproxyauth.Auth{Attributes: map[string]string{

|

||||

"api_key": "key-123",

|

||||

"base_url": server.URL,

|

||||

}}

|

||||

payload := []byte(`{"messages":[{"role":"user","content":[{"type":"text","text":"hi"}]}]}`)

|

||||

|

||||

_, err := executor.ExecuteStream(context.Background(), auth, cliproxyexecutor.Request{

|

||||

Model: "claude-3-5-sonnet-20241022",

|

||||

Payload: payload,

|

||||

}, cliproxyexecutor.Options{

|

||||

SourceFormat: sdktranslator.FromString("claude"),

|

||||

})

|

||||

if err == nil {

|

||||

t.Fatal("expected an error for 400 response, got nil")

|

||||

}

|

||||

if !strings.Contains(err.Error(), "stream test error") {

|

||||

t.Errorf("error message should contain decompressed JSON, got: %q", err.Error())

|

||||

}

|

||||

}

|

||||

|

||||

@@ -616,6 +616,10 @@ func (e *CodexExecutor) cacheHelper(ctx context.Context, from sdktranslator.Form

|

||||

if promptCacheKey.Exists() {

|

||||

cache.ID = promptCacheKey.String()

|

||||

}

|

||||

} else if from == "openai" {

|

||||

if apiKey := strings.TrimSpace(apiKeyFromContext(ctx)); apiKey != "" {

|

||||

cache.ID = uuid.NewSHA1(uuid.NameSpaceOID, []byte("cli-proxy-api:codex:prompt-cache:"+apiKey)).String()

|

||||

}

|

||||

}

|

||||

|

||||

if cache.ID != "" {

|

||||

|

||||
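Review note: the hunk above derives a stable prompt-cache ID from the caller's API key with `uuid.NewSHA1(uuid.NameSpaceOID, ...)`. The sketch below reproduces the same idea with only the standard library, so the determinism is easy to see. `uuidV5` and `promptCacheKey` are hypothetical helpers; they follow the RFC 4122 version-5 (SHA-1, name-based) construction and are intended to mirror the library call, not replace it.

```go
package main

import (
	"crypto/sha1"
	"encoding/hex"
	"fmt"
	"strings"
)

// namespaceOID is the RFC 4122 OID namespace, 6ba7b812-9dad-11d1-80b4-00c04fd430c8.
var namespaceOID = [16]byte{
	0x6b, 0xa7, 0xb8, 0x12, 0x9d, 0xad, 0x11, 0xd1,
	0x80, 0xb4, 0x00, 0xc0, 0x4f, 0xd4, 0x30, 0xc8,
}

// uuidV5 hashes namespace||name with SHA-1, keeps the first 16 bytes,
// and stamps the version (5) and RFC 4122 variant bits.
func uuidV5(ns [16]byte, name string) string {
	h := sha1.New()
	h.Write(ns[:])
	h.Write([]byte(name))
	sum := h.Sum(nil)

	var u [16]byte
	copy(u[:], sum[:16])
	u[6] = (u[6] & 0x0f) | 0x50 // version 5
	u[8] = (u[8] & 0x3f) | 0x80 // RFC 4122 variant

	s := hex.EncodeToString(u[:])
	return s[0:8] + "-" + s[8:12] + "-" + s[12:16] + "-" + s[16:20] + "-" + s[20:32]
}

// promptCacheKey returns a stable ID for a non-empty API key and "" otherwise.
// The name prefix is the one used in the diff above.
func promptCacheKey(apiKey string) string {
	apiKey = strings.TrimSpace(apiKey)
	if apiKey == "" {
		return ""
	}
	return uuidV5(namespaceOID, "cli-proxy-api:codex:prompt-cache:"+apiKey)
}

func main() {
	first := promptCacheKey("test-api-key")
	second := promptCacheKey("  test-api-key  ")
	fmt.Println(first == second) // same key after trimming yields the same ID
}
```

Because the ID is a pure function of the API key, repeated requests from the same client map to the same cache entry without the proxy storing any per-client state, which is what the accompanying test checks with two consecutive `cacheHelper` calls.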

64

internal/runtime/executor/codex_executor_cache_test.go

Normal file

@@ -0,0 +1,64 @@

|

||||

package executor

|

||||

|

||||

import (

|

||||

"context"

|

||||

"io"

|

||||

"net/http/httptest"

|

||||

"testing"

|

||||

|

||||

"github.com/gin-gonic/gin"

|

||||

"github.com/google/uuid"

|

||||

cliproxyexecutor "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/executor"

|

||||

sdktranslator "github.com/router-for-me/CLIProxyAPI/v6/sdk/translator"

|

||||

"github.com/tidwall/gjson"

|

||||

)

|

||||

|

||||

func TestCodexExecutorCacheHelper_OpenAIChatCompletions_StablePromptCacheKeyFromAPIKey(t *testing.T) {

|

||||

recorder := httptest.NewRecorder()

|

||||

ginCtx, _ := gin.CreateTestContext(recorder)

|

||||

ginCtx.Set("apiKey", "test-api-key")

|

||||

|

||||

ctx := context.WithValue(context.Background(), "gin", ginCtx)

|

||||

executor := &CodexExecutor{}

|

||||

rawJSON := []byte(`{"model":"gpt-5.3-codex","stream":true}`)

|

||||

req := cliproxyexecutor.Request{

|

||||

Model: "gpt-5.3-codex",

|

||||

Payload: []byte(`{"model":"gpt-5.3-codex"}`),

|

||||

}

|

||||

url := "https://example.com/responses"

|

||||

|

||||

httpReq, err := executor.cacheHelper(ctx, sdktranslator.FromString("openai"), url, req, rawJSON)

|

||||

if err != nil {

|

||||

t.Fatalf("cacheHelper error: %v", err)

|

||||

}

|

||||

|

||||

body, errRead := io.ReadAll(httpReq.Body)

|

||||

if errRead != nil {

|

||||

t.Fatalf("read request body: %v", errRead)

|

||||

}

|

||||

|

||||

expectedKey := uuid.NewSHA1(uuid.NameSpaceOID, []byte("cli-proxy-api:codex:prompt-cache:test-api-key")).String()

|

||||

gotKey := gjson.GetBytes(body, "prompt_cache_key").String()

|

||||

if gotKey != expectedKey {

|

||||

t.Fatalf("prompt_cache_key = %q, want %q", gotKey, expectedKey)

|

||||

}

|

||||

if gotConversation := httpReq.Header.Get("Conversation_id"); gotConversation != expectedKey {

|

||||

t.Fatalf("Conversation_id = %q, want %q", gotConversation, expectedKey)

|

||||

}

|

||||

if gotSession := httpReq.Header.Get("Session_id"); gotSession != expectedKey {

|

||||

t.Fatalf("Session_id = %q, want %q", gotSession, expectedKey)

|

||||

}

|

||||

|

||||

httpReq2, err := executor.cacheHelper(ctx, sdktranslator.FromString("openai"), url, req, rawJSON)

|

||||

if err != nil {

|

||||

t.Fatalf("cacheHelper error (second call): %v", err)

|

||||

}

|

||||

body2, errRead2 := io.ReadAll(httpReq2.Body)

|

||||

if errRead2 != nil {

|

||||

t.Fatalf("read request body (second call): %v", errRead2)

|

||||

}

|

||||

gotKey2 := gjson.GetBytes(body2, "prompt_cache_key").String()

|

||||

if gotKey2 != expectedKey {

|

||||

t.Fatalf("prompt_cache_key (second call) = %q, want %q", gotKey2, expectedKey)

|

||||

}

|

||||

}

|

||||

@@ -31,7 +31,7 @@ import (

|

||||

)

|

||||

|

||||

const (

|

||||

codexResponsesWebsocketBetaHeaderValue = "responses_websockets=2026-02-04"

|

||||

codexResponsesWebsocketBetaHeaderValue = "responses_websockets=2026-02-06"

|

||||

codexResponsesWebsocketIdleTimeout = 5 * time.Minute

|

||||

codexResponsesWebsocketHandshakeTO = 30 * time.Second

|

||||

)

|

||||

@@ -57,11 +57,6 @@ type codexWebsocketSession struct {

|

||||

wsURL string

|

||||

authID string

|

||||

|

||||

// connCreateSent tracks whether a `response.create` message has been successfully sent

|

||||

// on the current websocket connection. The upstream expects the first message on each

|

||||

// connection to be `response.create`.

|

||||

connCreateSent bool

|

||||

|

||||

writeMu sync.Mutex

|

||||

|

||||

activeMu sync.Mutex

|

||||

@@ -212,13 +207,7 @@ func (e *CodexWebsocketsExecutor) Execute(ctx context.Context, auth *cliproxyaut

|

||||

defer sess.reqMu.Unlock()

|

||||

}

|

||||

|

||||

allowAppend := true

|

||||

if sess != nil {

|

||||

sess.connMu.Lock()

|

||||

allowAppend = sess.connCreateSent

|

||||

sess.connMu.Unlock()

|

||||

}

|

||||

wsReqBody := buildCodexWebsocketRequestBody(body, allowAppend)

|

||||

wsReqBody := buildCodexWebsocketRequestBody(body)

|

||||

recordAPIRequest(ctx, e.cfg, upstreamRequestLog{

|

||||

URL: wsURL,

|

||||

Method: "WEBSOCKET",

|

||||

@@ -280,10 +269,7 @@ func (e *CodexWebsocketsExecutor) Execute(ctx context.Context, auth *cliproxyaut

|

||||

// execution session.

|

||||

connRetry, _, errDialRetry := e.ensureUpstreamConn(ctx, auth, sess, authID, wsURL, wsHeaders)

|

||||

if errDialRetry == nil && connRetry != nil {

|

||||

sess.connMu.Lock()

|

||||

allowAppend = sess.connCreateSent

|

||||

sess.connMu.Unlock()

|

||||

wsReqBodyRetry := buildCodexWebsocketRequestBody(body, allowAppend)

|

||||

wsReqBodyRetry := buildCodexWebsocketRequestBody(body)

|

||||

recordAPIRequest(ctx, e.cfg, upstreamRequestLog{

|

||||

URL: wsURL,

|

||||

Method: "WEBSOCKET",

|

||||

@@ -312,7 +298,6 @@ func (e *CodexWebsocketsExecutor) Execute(ctx context.Context, auth *cliproxyaut

|

||||

return resp, errSend

|

||||

}

|

||||

}

|

||||

markCodexWebsocketCreateSent(sess, conn, wsReqBody)

|

||||

|

||||

for {

|

||||

if ctx != nil && ctx.Err() != nil {

|

||||

@@ -403,26 +388,20 @@ func (e *CodexWebsocketsExecutor) ExecuteStream(ctx context.Context, auth *clipr

|

||||

wsHeaders = applyCodexWebsocketHeaders(ctx, wsHeaders, auth, apiKey)

|

||||

|

||||

var authID, authLabel, authType, authValue string

|

||||

if auth != nil {

|

||||

authID = auth.ID

|

||||

authLabel = auth.Label

|

||||

authType, authValue = auth.AccountInfo()

|

||||

}

|

||||

authID = auth.ID

|

||||

authLabel = auth.Label

|

||||

authType, authValue = auth.AccountInfo()

|

||||

|

||||

executionSessionID := executionSessionIDFromOptions(opts)

|

||||

var sess *codexWebsocketSession

|

||||

if executionSessionID != "" {

|

||||

sess = e.getOrCreateSession(executionSessionID)

|

||||

sess.reqMu.Lock()

|

||||

if sess != nil {

|

||||

sess.reqMu.Lock()

|

||||

}

|

||||

}

|

||||

|

||||

allowAppend := true

|

||||

if sess != nil {

|

||||

sess.connMu.Lock()

|

||||

allowAppend = sess.connCreateSent

|

||||

sess.connMu.Unlock()

|

||||

}

|

||||

wsReqBody := buildCodexWebsocketRequestBody(body, allowAppend)

|

||||

wsReqBody := buildCodexWebsocketRequestBody(body)

|

||||

recordAPIRequest(ctx, e.cfg, upstreamRequestLog{

|

||||

URL: wsURL,

|

||||

Method: "WEBSOCKET",

|

||||

@@ -483,10 +462,7 @@ func (e *CodexWebsocketsExecutor) ExecuteStream(ctx context.Context, auth *clipr

|

||||

sess.reqMu.Unlock()

|

||||

return nil, errDialRetry

|

||||

}

|

||||

sess.connMu.Lock()

|

||||

allowAppend = sess.connCreateSent

|

||||

sess.connMu.Unlock()

|

||||

wsReqBodyRetry := buildCodexWebsocketRequestBody(body, allowAppend)

|

||||

wsReqBodyRetry := buildCodexWebsocketRequestBody(body)

|

||||

recordAPIRequest(ctx, e.cfg, upstreamRequestLog{

|

||||

URL: wsURL,

|

||||

Method: "WEBSOCKET",

|

||||

@@ -515,7 +491,6 @@ func (e *CodexWebsocketsExecutor) ExecuteStream(ctx context.Context, auth *clipr

|

||||

return nil, errSend

|

||||

}

|

||||

}

|

||||

markCodexWebsocketCreateSent(sess, conn, wsReqBody)

|

||||

|

||||

out := make(chan cliproxyexecutor.StreamChunk)

|

||||

go func() {

|

||||

@@ -657,31 +632,14 @@ func writeCodexWebsocketMessage(sess *codexWebsocketSession, conn *websocket.Con

|

||||

return conn.WriteMessage(websocket.TextMessage, payload)

|

||||

}

|

||||

|

||||

func buildCodexWebsocketRequestBody(body []byte, allowAppend bool) []byte {

|

||||

func buildCodexWebsocketRequestBody(body []byte) []byte {

|

||||

if len(body) == 0 {

|

||||

return nil

|

||||

}

|

||||

|

||||

// Codex CLI websocket v2 uses `response.create` with `previous_response_id` for incremental turns.

|

||||

// The upstream ChatGPT Codex websocket currently rejects that with close 1008 (policy violation).

|

||||

// Fall back to v1 `response.append` semantics on the same websocket connection to keep the session alive.

|

||||

//

|

||||

// NOTE: The upstream expects the first websocket event on each connection to be `response.create`,

|

||||

// so we only use `response.append` after we have initialized the current connection.

|

||||

if allowAppend {

|

||||

if prev := strings.TrimSpace(gjson.GetBytes(body, "previous_response_id").String()); prev != "" {

|

||||

inputNode := gjson.GetBytes(body, "input")

|

||||

wsReqBody := []byte(`{}`)

|

||||

wsReqBody, _ = sjson.SetBytes(wsReqBody, "type", "response.append")

|

||||

if inputNode.Exists() && inputNode.IsArray() && strings.TrimSpace(inputNode.Raw) != "" {

|

||||

wsReqBody, _ = sjson.SetRawBytes(wsReqBody, "input", []byte(inputNode.Raw))

|

||||

return wsReqBody

|

||||

}

|

||||

wsReqBody, _ = sjson.SetRawBytes(wsReqBody, "input", []byte("[]"))

|

||||

return wsReqBody

|

||||

}

|

||||

}

|

||||

|

||||

// Match codex-rs websocket v2 semantics: every request is `response.create`.

|

||||

// Incremental follow-up turns continue on the same websocket using

|

||||

// `previous_response_id` + incremental `input`, not `response.append`.

|