mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git (synced 2026-03-09 15:25:17 +00:00)

Compare commits: 20 commits

| Author | SHA1 | Date |
|---|---|---|
| | 352cb98ff0 | |
| | 5850492a93 | |
| | fdbd4041ca | |
| | ebef1fae2a | |
| | 4bbeb92e9a | |
| | b436dad8bc | |
| | 6ae15d6c44 | |
| | 0468bde0d6 | |
| | 1d7329e797 | |
| | 48ffc4dee7 | |
| | 7ebd8f0c44 | |
| | b680c146c1 | |
| | d26ad8224d | |
| | 5c84d69d42 | |
| | 527e4b7f26 | |
| | b48485b42b | |
| | 79009bb3d4 | |
| | 26fc611f86 | |
| | 4e99525279 | |
| | 2615f489d6 | |

@@ -10,7 +10,7 @@ The Plus release stays in lockstep with the mainline features.

|

||||

|

||||

## Differences from the Mainline

|

||||

|

||||

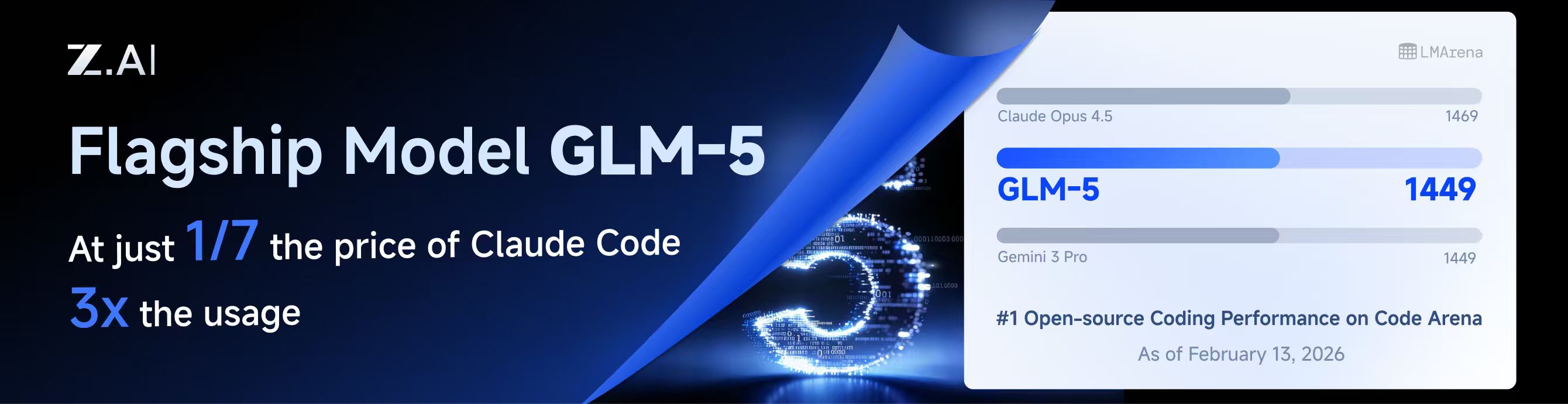

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

|

||||

## New Features (Plus Enhanced)

|

||||

|

||||

|

||||

@@ -10,7 +10,7 @@

|

||||

|

||||

## Differences from the Mainline

|

||||

|

||||

[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

|

||||

[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

|

||||

|

||||

## New Features (Plus Enhanced)

|

||||

|

||||

|

||||

@@ -233,6 +233,9 @@ nonstream-keepalive-interval: 0
 # alias: "vertex-flash" # client-visible alias
 # - name: "gemini-2.5-pro"
 # alias: "vertex-pro"
+# excluded-models: # optional: models to exclude from listing
+# - "imagen-3.0-generate-002"
+# - "imagen-*"

 # Amp Integration
 # ampcode:

@@ -16,6 +16,7 @@ import (

|

||||

"net/url"

|

||||

"os"

|

||||

"path/filepath"

|

||||

"runtime"

|

||||

"sort"

|

||||

"strconv"

|

||||

"strings"

|

||||

@@ -698,17 +699,20 @@ func (h *Handler) authIDForPath(path string) string {
 	if path == "" {
 		return ""
 	}
-	if h == nil || h.cfg == nil {
-		return path
+	id := path
+	if h != nil && h.cfg != nil {
+		authDir := strings.TrimSpace(h.cfg.AuthDir)
+		if authDir != "" {
+			if rel, errRel := filepath.Rel(authDir, path); errRel == nil && rel != "" {
+				id = rel
+			}
+		}
 	}
-	authDir := strings.TrimSpace(h.cfg.AuthDir)
-	if authDir == "" {
-		return path
+	// On Windows, normalize ID casing to avoid duplicate auth entries caused by case-insensitive paths.
+	if runtime.GOOS == "windows" {
+		id = strings.ToLower(id)
 	}
-	if rel, err := filepath.Rel(authDir, path); err == nil && rel != "" {
-		return rel
-	}
-	return path
+	return id
 }

 func (h *Handler) registerAuthFromFile(ctx context.Context, path string, data []byte) error {
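The ID derivation in this hunk can be tried in isolation. The following is a minimal sketch, not the repository's code: `normalizeAuthID` is a hypothetical helper, and it takes the operating system name as a parameter (the hunk checks `runtime.GOOS` inline) so that the Windows branch can be exercised on any platform.

```go
package main

import (
	"fmt"
	"path/filepath"
	"runtime"
	"strings"
)

// normalizeAuthID is a hypothetical stand-in for the logic in authIDForPath.
// It prefers a path relative to authDir and lowercases the result when goos
// is "windows", so that paths differing only in case map to the same ID.
func normalizeAuthID(authDir, path, goos string) string {
	if path == "" {
		return ""
	}
	id := path
	if dir := strings.TrimSpace(authDir); dir != "" {
		if rel, err := filepath.Rel(dir, path); err == nil && rel != "" {
			id = rel
		}
	}
	if goos == "windows" {
		id = strings.ToLower(id)
	}
	return id
}

func main() {
	// Uses the real OS of the machine running the sketch.
	fmt.Println(normalizeAuthID("/auths", "/auths/User-A.json", runtime.GOOS))
	// Forces the Windows branch regardless of the host OS.
	fmt.Println(normalizeAuthID("", "Token.JSON", "windows"))
}
```

Passing the OS name in explicitly is only a testing convenience for this sketch; it is not how the handler is structured in the diff.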

@@ -516,12 +516,13 @@ func (h *Handler) PutVertexCompatKeys(c *gin.Context) {

|

||||

}

|

||||

func (h *Handler) PatchVertexCompatKey(c *gin.Context) {

|

||||

type vertexCompatPatch struct {

|

||||

APIKey *string `json:"api-key"`

|

||||

Prefix *string `json:"prefix"`

|

||||

BaseURL *string `json:"base-url"`

|

||||

ProxyURL *string `json:"proxy-url"`

|

||||

Headers *map[string]string `json:"headers"`

|

||||

Models *[]config.VertexCompatModel `json:"models"`

|

||||

APIKey *string `json:"api-key"`

|

||||

Prefix *string `json:"prefix"`

|

||||

BaseURL *string `json:"base-url"`

|

||||

ProxyURL *string `json:"proxy-url"`

|

||||

Headers *map[string]string `json:"headers"`

|

||||

Models *[]config.VertexCompatModel `json:"models"`

|

||||

ExcludedModels *[]string `json:"excluded-models"`

|

||||

}

|

||||

var body struct {

|

||||

Index *int `json:"index"`

|

||||

@@ -585,6 +586,9 @@ func (h *Handler) PatchVertexCompatKey(c *gin.Context) {

|

||||

if body.Value.Models != nil {

|

||||

entry.Models = append([]config.VertexCompatModel(nil), (*body.Value.Models)...)

|

||||

}

|

||||

if body.Value.ExcludedModels != nil {

|

||||

entry.ExcludedModels = config.NormalizeExcludedModels(*body.Value.ExcludedModels)

|

||||

}

|

||||

normalizeVertexCompatKey(&entry)

|

||||

h.cfg.VertexCompatAPIKey[targetIndex] = entry

|

||||

h.cfg.SanitizeVertexCompatKeys()

|

||||

@@ -1029,6 +1033,7 @@ func normalizeVertexCompatKey(entry *config.VertexCompatKey) {

|

||||

entry.BaseURL = strings.TrimSpace(entry.BaseURL)

|

||||

entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)

|

||||

entry.Headers = config.NormalizeHeaders(entry.Headers)

|

||||

entry.ExcludedModels = config.NormalizeExcludedModels(entry.ExcludedModels)

|

||||

if len(entry.Models) == 0 {

|

||||

return

|

||||

}

|

||||

|

||||

@@ -34,6 +34,9 @@ type VertexCompatKey struct {

|

||||

|

||||

// Models defines the model configurations including aliases for routing.

|

||||

Models []VertexCompatModel `yaml:"models,omitempty" json:"models,omitempty"`

|

||||

|

||||

// ExcludedModels lists model IDs that should be excluded for this provider.

|

||||

ExcludedModels []string `yaml:"excluded-models,omitempty" json:"excluded-models,omitempty"`

|

||||

}

|

||||

|

||||

func (k VertexCompatKey) GetAPIKey() string { return k.APIKey }

|

||||

@@ -74,6 +77,7 @@ func (cfg *Config) SanitizeVertexCompatKeys() {

|

||||

}

|

||||

entry.ProxyURL = strings.TrimSpace(entry.ProxyURL)

|

||||

entry.Headers = NormalizeHeaders(entry.Headers)

|

||||

entry.ExcludedModels = NormalizeExcludedModels(entry.ExcludedModels)

|

||||

|

||||

// Sanitize models: remove entries without valid alias

|

||||

sanitizedModels := make([]VertexCompatModel, 0, len(entry.Models))

|

||||

|

||||

@@ -59,6 +59,7 @@ func buildRequestBodyFromPayload(t *testing.T, modelName string) map[string]any

|

||||

"properties": {

|

||||

"mode": {

|

||||

"type": "string",

|

||||

"deprecated": true,

|

||||

"enum": ["a", "b"],

|

||||

"enumTitles": ["A", "B"]

|

||||

}

|

||||

@@ -156,4 +157,7 @@ func assertSchemaSanitizedAndPropertyPreserved(t *testing.T, params map[string]a

|

||||

if _, ok := mode["enumTitles"]; ok {

|

||||

t.Fatalf("enumTitles should be removed from nested schema")

|

||||

}

|

||||

if _, ok := mode["deprecated"]; ok {

|

||||

t.Fatalf("deprecated should be removed from nested schema")

|

||||

}

|

||||

}

|

||||

|

||||

@@ -490,18 +490,46 @@ func (e *GitHubCopilotExecutor) applyHeaders(r *http.Request, apiToken string, b
 	r.Header.Set("X-Request-Id", uuid.NewString())

 	initiator := "user"
-	if len(body) > 0 {
-		if messages := gjson.GetBytes(body, "messages"); messages.Exists() && messages.IsArray() {
-			for _, msg := range messages.Array() {
-				role := msg.Get("role").String()
-				if role == "assistant" || role == "tool" {
-					initiator = "agent"
-					break
-				}
+	if role := detectLastConversationRole(body); role == "assistant" || role == "tool" {
+		initiator = "agent"
+	}
+	r.Header.Set("X-Initiator", initiator)
+}
+
+func detectLastConversationRole(body []byte) string {
+	if len(body) == 0 {
+		return ""
+	}
+
+	if messages := gjson.GetBytes(body, "messages"); messages.Exists() && messages.IsArray() {
+		arr := messages.Array()
+		for i := len(arr) - 1; i >= 0; i-- {
+			if role := arr[i].Get("role").String(); role != "" {
+				return role
 			}
 		}
 	}
-	r.Header.Set("X-Initiator", initiator)
+
+	if inputs := gjson.GetBytes(body, "input"); inputs.Exists() && inputs.IsArray() {
+		arr := inputs.Array()
+		for i := len(arr) - 1; i >= 0; i-- {
+			item := arr[i]
+
+			// Most Responses input items carry a top-level role.
+			if role := item.Get("role").String(); role != "" {
+				return role
+			}
+
+			switch item.Get("type").String() {
+			case "function_call", "function_call_arguments":
+				return "assistant"
+			case "function_call_output", "function_call_response", "tool_result":
+				return "tool"
+			}
+		}
+	}
+
+	return ""
 }

 // detectVisionContent checks if the request body contains vision/image content.
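The change above makes the last conversation role, rather than any earlier assistant or tool turn, decide the `X-Initiator` value. Below is a rough standalone sketch of that rule. It swaps `gjson` for the standard library's `encoding/json` so that it runs without third-party modules, and the names `lastRole`, `initiatorFor`, `item` and `requestBody` are illustrative, not taken from the repository.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// item captures only the two fields the role detection looks at.
type item struct {
	Role string `json:"role"`
	Type string `json:"type"`
}

// requestBody keeps both arrays raw so a non-array value is simply skipped.
type requestBody struct {
	Messages json.RawMessage `json:"messages"`
	Input    json.RawMessage `json:"input"`
}

// decodeItems returns nil when raw is absent or is not an array of objects.
func decodeItems(raw json.RawMessage) []item {
	var items []item
	if err := json.Unmarshal(raw, &items); err != nil {
		return nil
	}
	return items
}

// lastRole scans "messages" and then "input" from the end and returns the
// first role it can determine, or "" when none is found.
func lastRole(body []byte) string {
	var req requestBody
	if len(body) == 0 || json.Unmarshal(body, &req) != nil {
		return ""
	}
	msgs := decodeItems(req.Messages)
	for i := len(msgs) - 1; i >= 0; i-- {
		if msgs[i].Role != "" {
			return msgs[i].Role
		}
	}
	inputs := decodeItems(req.Input)
	for i := len(inputs) - 1; i >= 0; i-- {
		if inputs[i].Role != "" {
			return inputs[i].Role
		}
		switch inputs[i].Type {
		case "function_call", "function_call_arguments":
			return "assistant"
		case "function_call_output", "function_call_response", "tool_result":
			return "tool"
		}
	}
	return ""
}

// initiatorFor maps the last role to the header value used in the diff.
func initiatorFor(body []byte) string {
	if role := lastRole(body); role == "assistant" || role == "tool" {
		return "agent"
	}
	return "user"
}

func main() {
	body := []byte(`{"messages":[{"role":"user","content":"hello"},{"role":"assistant","content":"ok"},{"role":"user","content":"tool result here"}]}`)
	fmt.Println(initiatorFor(body))
}
```

One difference from the hunk: this sketch drops an entire array if any element fails to decode into `item`, whereas `gjson` reads each element independently.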

@@ -262,15 +262,15 @@ func TestApplyHeaders_XInitiator_UserOnly(t *testing.T) {

|

||||

}

|

||||

}

|

||||

|

||||

func TestApplyHeaders_XInitiator_AgentWithAssistantAndUserToolResult(t *testing.T) {

|

||||

func TestApplyHeaders_XInitiator_UserWhenLastRoleIsUser(t *testing.T) {

|

||||

t.Parallel()

|

||||

e := &GitHubCopilotExecutor{}

|

||||

req, _ := http.NewRequest(http.MethodPost, "https://example.com", nil)

|

||||

// Claude Code typical flow: last message is user (tool result), but has assistant in history

|

||||

// Last role governs the initiator decision.

|

||||

body := []byte(`{"messages":[{"role":"user","content":"hello"},{"role":"assistant","content":"I will read the file"},{"role":"user","content":"tool result here"}]}`)

|

||||

e.applyHeaders(req, "token", body)

|

||||

if got := req.Header.Get("X-Initiator"); got != "agent" {

|

||||

t.Fatalf("X-Initiator = %q, want agent (assistant exists in messages)", got)

|

||||

if got := req.Header.Get("X-Initiator"); got != "user" {

|

||||

t.Fatalf("X-Initiator = %q, want user (last role is user)", got)

|

||||

}

|

||||

}

|

||||

|

||||

@@ -285,6 +285,39 @@ func TestApplyHeaders_XInitiator_AgentWithToolRole(t *testing.T) {

|

||||

}

|

||||

}

|

||||

|

||||

func TestApplyHeaders_XInitiator_InputArrayLastAssistantMessage(t *testing.T) {

|

||||

t.Parallel()

|

||||

e := &GitHubCopilotExecutor{}

|

||||

req, _ := http.NewRequest(http.MethodPost, "https://example.com", nil)

|

||||

body := []byte(`{"input":[{"type":"message","role":"user","content":[{"type":"input_text","text":"Hi"}]},{"type":"message","role":"assistant","content":[{"type":"output_text","text":"Hello"}]}]}`)

|

||||

e.applyHeaders(req, "token", body)

|

||||

if got := req.Header.Get("X-Initiator"); got != "agent" {

|

||||

t.Fatalf("X-Initiator = %q, want agent (last role is assistant)", got)

|

||||

}

|

||||

}

|

||||

|

||||

func TestApplyHeaders_XInitiator_InputArrayLastUserMessage(t *testing.T) {

|

||||

t.Parallel()

|

||||

e := &GitHubCopilotExecutor{}

|

||||

req, _ := http.NewRequest(http.MethodPost, "https://example.com", nil)

|

||||

body := []byte(`{"input":[{"type":"message","role":"assistant","content":[{"type":"output_text","text":"I can help"}]},{"type":"message","role":"user","content":[{"type":"input_text","text":"Do X"}]}]}`)

|

||||

e.applyHeaders(req, "token", body)

|

||||

if got := req.Header.Get("X-Initiator"); got != "user" {

|

||||

t.Fatalf("X-Initiator = %q, want user (last role is user)", got)

|

||||

}

|

||||

}

|

||||

|

||||

func TestApplyHeaders_XInitiator_InputArrayLastFunctionCallOutput(t *testing.T) {

|

||||

t.Parallel()

|

||||

e := &GitHubCopilotExecutor{}

|

||||

req, _ := http.NewRequest(http.MethodPost, "https://example.com", nil)

|

||||

body := []byte(`{"input":[{"type":"message","role":"user","content":[{"type":"input_text","text":"Use tool"}]},{"type":"function_call","call_id":"c1","name":"Read","arguments":"{}"},{"type":"function_call_output","call_id":"c1","output":"ok"}]}`)

|

||||

e.applyHeaders(req, "token", body)

|

||||

if got := req.Header.Get("X-Initiator"); got != "agent" {

|

||||

t.Fatalf("X-Initiator = %q, want agent (last item maps to tool role)", got)

|

||||

}

|

||||

}

|

||||

|

||||

// --- Tests for x-github-api-version header (Problem M) ---

|

||||

|

||||

func TestApplyHeaders_GitHubAPIVersion(t *testing.T) {

|

||||

|

||||

@@ -431,6 +431,33 @@ func ConvertClaudeRequestToAntigravity(modelName string, inputRawJSON []byte, _

|

||||

out, _ = sjson.SetRaw(out, "request.tools", toolsJSON)

|

||||

}

|

||||

|

||||

// tool_choice

|

||||

toolChoiceResult := gjson.GetBytes(rawJSON, "tool_choice")

|

||||

if toolChoiceResult.Exists() {

|

||||

toolChoiceType := ""

|

||||

toolChoiceName := ""

|

||||

if toolChoiceResult.IsObject() {

|

||||

toolChoiceType = toolChoiceResult.Get("type").String()

|

||||

toolChoiceName = toolChoiceResult.Get("name").String()

|

||||

} else if toolChoiceResult.Type == gjson.String {

|

||||

toolChoiceType = toolChoiceResult.String()

|

||||

}

|

||||

|

||||

switch toolChoiceType {

|

||||

case "auto":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "AUTO")

|

||||

case "none":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "NONE")

|

||||

case "any":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "ANY")

|

||||

case "tool":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "ANY")

|

||||

if toolChoiceName != "" {

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.allowedFunctionNames", []string{toolChoiceName})

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Map Anthropic thinking -> Gemini thinkingBudget/include_thoughts when type==enabled

|

||||

if t := gjson.GetBytes(rawJSON, "thinking"); enableThoughtTranslate && t.Exists() && t.IsObject() {

|

||||

switch t.Get("type").String() {

|

||||

@@ -441,9 +468,22 @@ func ConvertClaudeRequestToAntigravity(modelName string, inputRawJSON []byte, _
 				out, _ = sjson.Set(out, "request.generationConfig.thinkingConfig.includeThoughts", true)
 			}
 		case "adaptive", "auto":
-			// Keep adaptive/auto as a high level sentinel; ApplyThinking resolves it
-			// to model-specific max capability.
-			out, _ = sjson.Set(out, "request.generationConfig.thinkingConfig.thinkingLevel", "high")
+			// For adaptive thinking:
+			// - If output_config.effort is explicitly present, pass through as thinkingLevel.
+			// - Otherwise, treat it as "enabled with target-model maximum" and emit high.
+			// ApplyThinking handles clamping to target model's supported levels.
+			effort := ""
+			if v := gjson.GetBytes(rawJSON, "output_config.effort"); v.Exists() && v.Type == gjson.String {
+				effort = strings.ToLower(strings.TrimSpace(v.String()))
+			}
+			if effort != "" {
+				if effort == "max" {
+					effort = "high"
+				}
+				out, _ = sjson.Set(out, "request.generationConfig.thinkingConfig.thinkingLevel", effort)
+			} else {
+				out, _ = sjson.Set(out, "request.generationConfig.thinkingConfig.thinkingLevel", "high")
+			}
 			out, _ = sjson.Set(out, "request.generationConfig.thinkingConfig.includeThoughts", true)
 		}
 	}
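The effort handling in this hunk reduces to a small pure function. The sketch below assumes a hypothetical helper named `thinkingLevelForEffort`; the translator in the diff writes the result into the request JSON with `sjson` rather than returning it.

```go
package main

import (
	"fmt"
	"strings"
)

// thinkingLevelForEffort mirrors the adaptive-thinking branch: an explicit
// effort is normalized and passed through, "max" is folded into "high", and a
// missing effort defaults to "high". The name is illustrative only.
func thinkingLevelForEffort(effort string) string {
	normalized := strings.ToLower(strings.TrimSpace(effort))
	switch normalized {
	case "", "max":
		return "high"
	default:
		return normalized
	}
}

func main() {
	for _, effort := range []string{"low", "medium", "high", "max", ""} {
		fmt.Printf("%q -> %q\n", effort, thinkingLevelForEffort(effort))
	}
}
```

As the comment in the hunk notes, clamping to what the target model actually supports is left to `ApplyThinking`, so this mapping does not validate the level.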

@@ -193,6 +193,42 @@ func TestConvertClaudeRequestToAntigravity_ToolDeclarations(t *testing.T) {

|

||||

}

|

||||

}

|

||||

|

||||

func TestConvertClaudeRequestToAntigravity_ToolChoice_SpecificTool(t *testing.T) {

|

||||

inputJSON := []byte(`{

|

||||

"model": "gemini-3-flash-preview",

|

||||

"messages": [

|

||||

{

|

||||

"role": "user",

|

||||

"content": [

|

||||

{"type": "text", "text": "hi"}

|

||||

]

|

||||

}

|

||||

],

|

||||

"tools": [

|

||||

{

|

||||

"name": "json",

|

||||

"description": "A JSON tool",

|

||||

"input_schema": {

|

||||

"type": "object",

|

||||

"properties": {}

|

||||

}

|

||||

}

|

||||

],

|

||||

"tool_choice": {"type": "tool", "name": "json"}

|

||||

}`)

|

||||

|

||||

output := ConvertClaudeRequestToAntigravity("gemini-3-flash-preview", inputJSON, false)

|

||||

outputStr := string(output)

|

||||

|

||||

if got := gjson.Get(outputStr, "request.toolConfig.functionCallingConfig.mode").String(); got != "ANY" {

|

||||

t.Fatalf("Expected toolConfig.functionCallingConfig.mode 'ANY', got '%s'", got)

|

||||

}

|

||||

allowed := gjson.Get(outputStr, "request.toolConfig.functionCallingConfig.allowedFunctionNames").Array()

|

||||

if len(allowed) != 1 || allowed[0].String() != "json" {

|

||||

t.Fatalf("Expected allowedFunctionNames ['json'], got %s", gjson.Get(outputStr, "request.toolConfig.functionCallingConfig.allowedFunctionNames").Raw)

|

||||

}

|

||||

}

|

||||

|

||||

func TestConvertClaudeRequestToAntigravity_ToolUse(t *testing.T) {

|

||||

inputJSON := []byte(`{

|

||||

"model": "claude-3-5-sonnet-20240620",

|

||||

@@ -1199,3 +1235,64 @@ func TestConvertClaudeRequestToAntigravity_ToolAndThinking_NoExistingSystem(t *t

|

||||

t.Errorf("Interleaved thinking hint should be in created systemInstruction, got: %v", sysInstruction.Raw)

|

||||

}

|

||||

}

|

||||

|

||||

func TestConvertClaudeRequestToAntigravity_AdaptiveThinking_EffortLevels(t *testing.T) {

|

||||

tests := []struct {

|

||||

name string

|

||||

effort string

|

||||

expected string

|

||||

}{

|

||||

{"low", "low", "low"},

|

||||

{"medium", "medium", "medium"},

|

||||

{"high", "high", "high"},

|

||||

{"max", "max", "high"},

|

||||

}

|

||||

|

||||

for _, tt := range tests {

|

||||

tt := tt

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

inputJSON := []byte(`{

|

||||

"model": "claude-opus-4-6-thinking",

|

||||

"messages": [{"role": "user", "content": [{"type": "text", "text": "Hello"}]}],

|

||||

"thinking": {"type": "adaptive"},

|

||||

"output_config": {"effort": "` + tt.effort + `"}

|

||||

}`)

|

||||

|

||||

output := ConvertClaudeRequestToAntigravity("claude-opus-4-6-thinking", inputJSON, false)

|

||||

outputStr := string(output)

|

||||

|

||||

thinkingConfig := gjson.Get(outputStr, "request.generationConfig.thinkingConfig")

|

||||

if !thinkingConfig.Exists() {

|

||||

t.Fatal("thinkingConfig should exist for adaptive thinking")

|

||||

}

|

||||

if thinkingConfig.Get("thinkingLevel").String() != tt.expected {

|

||||

t.Errorf("Expected thinkingLevel %q, got %q", tt.expected, thinkingConfig.Get("thinkingLevel").String())

|

||||

}

|

||||

if !thinkingConfig.Get("includeThoughts").Bool() {

|

||||

t.Error("includeThoughts should be true")

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func TestConvertClaudeRequestToAntigravity_AdaptiveThinking_NoEffort(t *testing.T) {

|

||||

inputJSON := []byte(`{

|

||||

"model": "claude-opus-4-6-thinking",

|

||||

"messages": [{"role": "user", "content": [{"type": "text", "text": "Hello"}]}],

|

||||

"thinking": {"type": "adaptive"}

|

||||

}`)

|

||||

|

||||

output := ConvertClaudeRequestToAntigravity("claude-opus-4-6-thinking", inputJSON, false)

|

||||

outputStr := string(output)

|

||||

|

||||

thinkingConfig := gjson.Get(outputStr, "request.generationConfig.thinkingConfig")

|

||||

if !thinkingConfig.Exists() {

|

||||

t.Fatal("thinkingConfig should exist for adaptive thinking without effort")

|

||||

}

|

||||

if thinkingConfig.Get("thinkingLevel").String() != "high" {

|

||||

t.Errorf("Expected default thinkingLevel \"high\", got %q", thinkingConfig.Get("thinkingLevel").String())

|

||||

}

|

||||

if !thinkingConfig.Get("includeThoughts").Bool() {

|

||||

t.Error("includeThoughts should be true")

|

||||

}

|

||||

}

|

||||

|

||||

@@ -255,6 +255,8 @@ func ConvertClaudeRequestToCodex(modelName string, inputRawJSON []byte, _ bool)

|

||||

tool, _ = sjson.SetRaw(tool, "parameters", normalizeToolParameters(toolResult.Get("input_schema").Raw))

|

||||

tool, _ = sjson.Delete(tool, "input_schema")

|

||||

tool, _ = sjson.Delete(tool, "parameters.$schema")

|

||||

tool, _ = sjson.Delete(tool, "cache_control")

|

||||

tool, _ = sjson.Delete(tool, "defer_loading")

|

||||

tool, _ = sjson.Set(tool, "strict", false)

|

||||

template, _ = sjson.SetRaw(template, "tools.-1", tool)

|

||||

}

|

||||

|

||||

@@ -74,8 +74,13 @@ func ConvertCodexResponseToOpenAI(_ context.Context, modelName string, originalR
 	}

 	// Extract and set the model version.
+	cachedModel := (*param).(*ConvertCliToOpenAIParams).Model
 	if modelResult := gjson.GetBytes(rawJSON, "model"); modelResult.Exists() {
 		template, _ = sjson.Set(template, "model", modelResult.String())
+	} else if cachedModel != "" {
+		template, _ = sjson.Set(template, "model", cachedModel)
+	} else if modelName != "" {
+		template, _ = sjson.Set(template, "model", modelName)
 	}

 	template, _ = sjson.Set(template, "created", (*param).(*ConvertCliToOpenAIParams).CreatedAt)
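The fallback order added here (model in the current event, then the cached model, then the requested model name) can be written as a short helper. This is a sketch under assumptions: `pickModel` is a hypothetical name, and a nil pointer stands in for "the event has no `model` field", which the hunk checks with `modelResult.Exists()`.

```go
package main

import "fmt"

// pickModel returns the model to report on a streamed chunk.
// eventModel is nil when the current event carries no "model" field.
func pickModel(eventModel *string, cachedModel, requestModel string) string {
	switch {
	case eventModel != nil:
		return *eventModel
	case cachedModel != "":
		return cachedModel
	default:
		// May be empty; the diff leaves the template untouched in that case.
		return requestModel
	}
}

func main() {
	fromEvent := "gpt-5.3-codex"
	fmt.Println(pickModel(&fromEvent, "", "requested-model"))
	fmt.Println(pickModel(nil, "cached-model", "requested-model"))
	fmt.Println(pickModel(nil, "", "requested-model"))
}
```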

@@ -0,0 +1,47 @@

|

||||

package chat_completions

|

||||

|

||||

import (

|

||||

"context"

|

||||

"testing"

|

||||

|

||||

"github.com/tidwall/gjson"

|

||||

)

|

||||

|

||||

func TestConvertCodexResponseToOpenAI_StreamSetsModelFromResponseCreated(t *testing.T) {

|

||||

ctx := context.Background()

|

||||

var param any

|

||||

|

||||

modelName := "gpt-5.3-codex"

|

||||

|

||||

out := ConvertCodexResponseToOpenAI(ctx, modelName, nil, nil, []byte(`data: {"type":"response.created","response":{"id":"resp_123","created_at":1700000000,"model":"gpt-5.3-codex"}}`), &param)

|

||||

if len(out) != 0 {

|

||||

t.Fatalf("expected no output for response.created, got %d chunks", len(out))

|

||||

}

|

||||

|

||||

out = ConvertCodexResponseToOpenAI(ctx, modelName, nil, nil, []byte(`data: {"type":"response.output_text.delta","delta":"hello"}`), &param)

|

||||

if len(out) != 1 {

|

||||

t.Fatalf("expected 1 chunk, got %d", len(out))

|

||||

}

|

||||

|

||||

gotModel := gjson.Get(out[0], "model").String()

|

||||

if gotModel != modelName {

|

||||

t.Fatalf("expected model %q, got %q", modelName, gotModel)

|

||||

}

|

||||

}

|

||||

|

||||

func TestConvertCodexResponseToOpenAI_FirstChunkUsesRequestModelName(t *testing.T) {

|

||||

ctx := context.Background()

|

||||

var param any

|

||||

|

||||

modelName := "gpt-5.3-codex"

|

||||

|

||||

out := ConvertCodexResponseToOpenAI(ctx, modelName, nil, nil, []byte(`data: {"type":"response.output_text.delta","delta":"hello"}`), &param)

|

||||

if len(out) != 1 {

|

||||

t.Fatalf("expected 1 chunk, got %d", len(out))

|

||||

}

|

||||

|

||||

gotModel := gjson.Get(out[0], "model").String()

|

||||

if gotModel != modelName {

|

||||

t.Fatalf("expected model %q, got %q", modelName, gotModel)

|

||||

}

|

||||

}

|

||||

@@ -156,6 +156,7 @@ func ConvertClaudeRequestToCLI(modelName string, inputRawJSON []byte, _ bool) []

|

||||

tool, _ = sjson.Delete(tool, "input_examples")

|

||||

tool, _ = sjson.Delete(tool, "type")

|

||||

tool, _ = sjson.Delete(tool, "cache_control")

|

||||

tool, _ = sjson.Delete(tool, "defer_loading")

|

||||

if gjson.Valid(tool) && gjson.Parse(tool).IsObject() {

|

||||

if !hasTools {

|

||||

out, _ = sjson.SetRaw(out, "request.tools", `[{"functionDeclarations":[]}]`)

|

||||

@@ -171,6 +172,33 @@ func ConvertClaudeRequestToCLI(modelName string, inputRawJSON []byte, _ bool) []

|

||||

}

|

||||

}

|

||||

|

||||

// tool_choice

|

||||

toolChoiceResult := gjson.GetBytes(rawJSON, "tool_choice")

|

||||

if toolChoiceResult.Exists() {

|

||||

toolChoiceType := ""

|

||||

toolChoiceName := ""

|

||||

if toolChoiceResult.IsObject() {

|

||||

toolChoiceType = toolChoiceResult.Get("type").String()

|

||||

toolChoiceName = toolChoiceResult.Get("name").String()

|

||||

} else if toolChoiceResult.Type == gjson.String {

|

||||

toolChoiceType = toolChoiceResult.String()

|

||||

}

|

||||

|

||||

switch toolChoiceType {

|

||||

case "auto":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "AUTO")

|

||||

case "none":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "NONE")

|

||||

case "any":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "ANY")

|

||||

case "tool":

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.mode", "ANY")

|

||||

if toolChoiceName != "" {

|

||||

out, _ = sjson.Set(out, "request.toolConfig.functionCallingConfig.allowedFunctionNames", []string{toolChoiceName})

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Map Anthropic thinking -> Gemini CLI thinkingConfig when enabled

|

||||

// Translator only does format conversion, ApplyThinking handles model capability validation.

|

||||

if t := gjson.GetBytes(rawJSON, "thinking"); t.Exists() && t.IsObject() {

|

||||

|

||||

@@ -0,0 +1,42 @@

|

||||

package claude

|

||||

|

||||

import (

|

||||

"testing"

|

||||

|

||||

"github.com/tidwall/gjson"

|

||||

)

|

||||

|

||||

func TestConvertClaudeRequestToCLI_ToolChoice_SpecificTool(t *testing.T) {

|

||||

inputJSON := []byte(`{

|

||||

"model": "gemini-3-flash-preview",

|

||||

"messages": [

|

||||

{

|

||||

"role": "user",

|

||||

"content": [

|

||||

{"type": "text", "text": "hi"}

|

||||

]

|

||||

}

|

||||

],

|

||||

"tools": [

|

||||

{

|

||||

"name": "json",

|

||||

"description": "A JSON tool",

|

||||

"input_schema": {

|

||||

"type": "object",

|

||||

"properties": {}

|

||||

}

|

||||

}

|

||||

],

|

||||

"tool_choice": {"type": "tool", "name": "json"}

|

||||

}`)

|

||||

|

||||

output := ConvertClaudeRequestToCLI("gemini-3-flash-preview", inputJSON, false)

|

||||

|

||||

if got := gjson.GetBytes(output, "request.toolConfig.functionCallingConfig.mode").String(); got != "ANY" {

|

||||

t.Fatalf("Expected request.toolConfig.functionCallingConfig.mode 'ANY', got '%s'", got)

|

||||

}

|

||||

allowed := gjson.GetBytes(output, "request.toolConfig.functionCallingConfig.allowedFunctionNames").Array()

|

||||

if len(allowed) != 1 || allowed[0].String() != "json" {

|

||||

t.Fatalf("Expected allowedFunctionNames ['json'], got %s", gjson.GetBytes(output, "request.toolConfig.functionCallingConfig.allowedFunctionNames").Raw)

|

||||

}

|

||||

}

|

||||
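The same `tool_choice` translation appears in the Antigravity, Gemini CLI and Gemini converters. The sketch below shows the mapping on its own, using `encoding/json` in place of `gjson`/`sjson` so it runs with the standard library only. `mapToolChoice` and `functionCallingConfig` are illustrative names; only the JSON field names and mode strings come from the diff.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// functionCallingConfig models the Gemini-side object written by the diff.
type functionCallingConfig struct {
	Mode                 string   `json:"mode"`
	AllowedFunctionNames []string `json:"allowedFunctionNames,omitempty"`
}

// mapToolChoice accepts an Anthropic-style tool_choice given either as an
// object ({"type": ..., "name": ...}) or as a bare string, and reports false
// when the type is not one of auto, none, any or tool.
func mapToolChoice(raw []byte) (functionCallingConfig, bool) {
	var obj struct {
		Type string `json:"type"`
		Name string `json:"name"`
	}
	var str string
	choiceType, toolName := "", ""
	if err := json.Unmarshal(raw, &obj); err == nil {
		choiceType, toolName = obj.Type, obj.Name
	} else if err := json.Unmarshal(raw, &str); err == nil {
		choiceType = str
	}

	switch choiceType {
	case "auto":
		return functionCallingConfig{Mode: "AUTO"}, true
	case "none":
		return functionCallingConfig{Mode: "NONE"}, true
	case "any":
		return functionCallingConfig{Mode: "ANY"}, true
	case "tool":
		// A specific tool becomes ANY restricted to that single function.
		cfg := functionCallingConfig{Mode: "ANY"}
		if toolName != "" {
			cfg.AllowedFunctionNames = []string{toolName}
		}
		return cfg, true
	}
	return functionCallingConfig{}, false
}

func main() {
	cfg, ok := mapToolChoice([]byte(`{"type": "tool", "name": "json"}`))
	out, _ := json.Marshal(cfg)
	fmt.Println(ok, string(out))
}
```

Unknown types are left unmapped here, matching the `switch` in the diff, which has no default case.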

@@ -137,6 +137,7 @@ func ConvertClaudeRequestToGemini(modelName string, inputRawJSON []byte, _ bool)

|

||||

tool, _ = sjson.Delete(tool, "input_examples")

|

||||

tool, _ = sjson.Delete(tool, "type")

|

||||

tool, _ = sjson.Delete(tool, "cache_control")

|

||||

tool, _ = sjson.Delete(tool, "defer_loading")

|

||||

if gjson.Valid(tool) && gjson.Parse(tool).IsObject() {

|

||||

if !hasTools {

|

||||

out, _ = sjson.SetRaw(out, "tools", `[{"functionDeclarations":[]}]`)

|

||||

@@ -152,6 +153,33 @@ func ConvertClaudeRequestToGemini(modelName string, inputRawJSON []byte, _ bool)

|

||||

}

|

||||

}

|

||||

|

||||

// tool_choice

|

||||

toolChoiceResult := gjson.GetBytes(rawJSON, "tool_choice")

|

||||

if toolChoiceResult.Exists() {

|

||||

toolChoiceType := ""

|

||||

toolChoiceName := ""

|

||||

if toolChoiceResult.IsObject() {

|

||||

toolChoiceType = toolChoiceResult.Get("type").String()

|

||||

toolChoiceName = toolChoiceResult.Get("name").String()

|

||||

} else if toolChoiceResult.Type == gjson.String {

|

||||

toolChoiceType = toolChoiceResult.String()

|

||||

}

|

||||

|

||||

switch toolChoiceType {

|

||||

case "auto":

|

||||

out, _ = sjson.Set(out, "toolConfig.functionCallingConfig.mode", "AUTO")

|

||||

case "none":

|

||||

out, _ = sjson.Set(out, "toolConfig.functionCallingConfig.mode", "NONE")

|

||||

case "any":

|

||||

out, _ = sjson.Set(out, "toolConfig.functionCallingConfig.mode", "ANY")

|

||||

case "tool":

|

||||

out, _ = sjson.Set(out, "toolConfig.functionCallingConfig.mode", "ANY")

|

||||

if toolChoiceName != "" {

|

||||

out, _ = sjson.Set(out, "toolConfig.functionCallingConfig.allowedFunctionNames", []string{toolChoiceName})

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Map Anthropic thinking -> Gemini thinking config when enabled

|

||||

// Translator only does format conversion, ApplyThinking handles model capability validation.

|

||||

if t := gjson.GetBytes(rawJSON, "thinking"); t.Exists() && t.IsObject() {

|

||||

|

||||

@@ -0,0 +1,42 @@

|

||||

package claude

|

||||

|

||||

import (

|

||||

"testing"

|

||||

|

||||

"github.com/tidwall/gjson"

|

||||

)

|

||||

|

||||

func TestConvertClaudeRequestToGemini_ToolChoice_SpecificTool(t *testing.T) {

|

||||

inputJSON := []byte(`{

|

||||

"model": "gemini-3-flash-preview",

|

||||

"messages": [

|

||||

{

|

||||

"role": "user",

|

||||

"content": [

|

||||

{"type": "text", "text": "hi"}

|

||||

]

|

||||

}

|

||||

],

|

||||

"tools": [

|

||||

{

|

||||

"name": "json",

|

||||

"description": "A JSON tool",

|

||||

"input_schema": {

|

||||

"type": "object",

|

||||

"properties": {}

|

||||

}

|

||||

}

|

||||

],

|

||||

"tool_choice": {"type": "tool", "name": "json"}

|

||||

}`)

|

||||

|

||||

output := ConvertClaudeRequestToGemini("gemini-3-flash-preview", inputJSON, false)

|

||||

|

||||

if got := gjson.GetBytes(output, "toolConfig.functionCallingConfig.mode").String(); got != "ANY" {

|

||||

t.Fatalf("Expected toolConfig.functionCallingConfig.mode 'ANY', got '%s'", got)

|

||||

}

|

||||

allowed := gjson.GetBytes(output, "toolConfig.functionCallingConfig.allowedFunctionNames").Array()

|

||||

if len(allowed) != 1 || allowed[0].String() != "json" {

|

||||

t.Fatalf("Expected allowedFunctionNames ['json'], got %s", gjson.GetBytes(output, "toolConfig.functionCallingConfig.allowedFunctionNames").Raw)

|

||||

}

|

||||

}

|

||||

@@ -354,22 +354,7 @@ func ConvertOpenAIResponsesRequestToGemini(modelName string, inputRawJSON []byte

|

||||

funcDecl, _ = sjson.Set(funcDecl, "description", desc.String())

|

||||

}

|

||||

if params := tool.Get("parameters"); params.Exists() {

|

||||

// Convert parameter types from OpenAI format to Gemini format

|

||||

cleaned := params.Raw

|

||||

// Convert type values to uppercase for Gemini

|

||||

paramsResult := gjson.Parse(cleaned)

|

||||

if properties := paramsResult.Get("properties"); properties.Exists() {

|

||||

properties.ForEach(func(key, value gjson.Result) bool {

|

||||

if propType := value.Get("type"); propType.Exists() {

|

||||

upperType := strings.ToUpper(propType.String())

|

||||

cleaned, _ = sjson.Set(cleaned, "properties."+key.String()+".type", upperType)

|

||||

}

|

||||

return true

|

||||

})

|

||||

}

|

||||

// Set the overall type to OBJECT

|

||||

cleaned, _ = sjson.Set(cleaned, "type", "OBJECT")

|

||||

funcDecl, _ = sjson.SetRaw(funcDecl, "parametersJsonSchema", cleaned)

|

||||

funcDecl, _ = sjson.SetRaw(funcDecl, "parametersJsonSchema", params.Raw)

|

||||

}

|

||||

|

||||

geminiTools, _ = sjson.SetRaw(geminiTools, "0.functionDeclarations.-1", funcDecl)

|

||||

|

||||

@@ -430,7 +430,7 @@ func removeUnsupportedKeywords(jsonStr string) string {
 	keywords := append(unsupportedConstraints,
 		"$schema", "$defs", "definitions", "const", "$ref", "$id", "additionalProperties",
 		"propertyNames", "patternProperties", // Gemini doesn't support these schema keywords
-		"enumTitles", "prefill", // Claude/OpenCode schema metadata fields unsupported by Gemini
+		"enumTitles", "prefill", "deprecated", // Schema metadata fields unsupported by Gemini
 	)

 	deletePaths := make([]string, 0)
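To illustrate what dropping `deprecated` from a nested schema means in practice, here is a minimal sketch of keyword stripping over a decoded JSON schema. It is not the repository's `removeUnsupportedKeywords` (which works on JSON strings and collects delete paths); `stripKeywords` is a hypothetical helper, and the rule that keys directly under `properties` are property names to be kept is an assumption suggested by the test name `assertSchemaSanitizedAndPropertyPreserved`.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// stripKeywords removes unsupported schema keywords from node in place.
// Keys directly under "properties" are treated as property names and kept,
// while the schemas they point to are still cleaned recursively.
func stripKeywords(node any, unsupported map[string]bool) {
	switch v := node.(type) {
	case map[string]any:
		for key, child := range v {
			if unsupported[key] {
				delete(v, key) // deleting during range is safe in Go
				continue
			}
			if key == "properties" {
				if props, ok := child.(map[string]any); ok {
					for _, propSchema := range props {
						stripKeywords(propSchema, unsupported)
					}
				}
				continue
			}
			stripKeywords(child, unsupported)
		}
	case []any:
		for _, child := range v {
			stripKeywords(child, unsupported)
		}
	}
}

func main() {
	raw := []byte(`{"type":"object","properties":{"mode":{"type":"string","deprecated":true,"enum":["a","b"],"enumTitles":["A","B"]}}}`)
	var schema map[string]any
	if err := json.Unmarshal(raw, &schema); err != nil {
		panic(err)
	}
	stripKeywords(schema, map[string]bool{"enumTitles": true, "prefill": true, "deprecated": true})
	out, _ := json.Marshal(schema)
	fmt.Println(string(out))
}
```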

@@ -304,6 +304,11 @@ func BuildConfigChangeDetails(oldCfg, newCfg *config.Config) []string {
 	if oldModels.hash != newModels.hash {
 		changes = append(changes, fmt.Sprintf("vertex[%d].models: updated (%d -> %d entries)", i, oldModels.count, newModels.count))
 	}
+	oldExcluded := SummarizeExcludedModels(o.ExcludedModels)
+	newExcluded := SummarizeExcludedModels(n.ExcludedModels)
+	if oldExcluded.hash != newExcluded.hash {
+		changes = append(changes, fmt.Sprintf("vertex[%d].excluded-models: updated (%d -> %d entries)", i, oldExcluded.count, newExcluded.count))
+	}
 	if !equalStringMap(o.Headers, n.Headers) {
 		changes = append(changes, fmt.Sprintf("vertex[%d].headers: updated", i))
 	}

@@ -319,7 +319,7 @@ func (s *ConfigSynthesizer) synthesizeVertexCompat(ctx *SynthesisContext) []*cor
 			CreatedAt: now,
 			UpdatedAt: now,
 		}
-		ApplyAuthExcludedModelsMeta(a, cfg, nil, "apikey")
+		ApplyAuthExcludedModelsMeta(a, cfg, compat.ExcludedModels, "apikey")
 		out = append(out, a)
 	}
 	return out

@@ -5,6 +5,7 @@ import (
 	"fmt"
 	"os"
 	"path/filepath"
+	"runtime"
 	"strconv"
 	"strings"
 	"time"

@@ -72,6 +73,10 @@ func (s *FileSynthesizer) Synthesize(ctx *SynthesisContext) ([]*coreauth.Auth, e
 		if rel, errRel := filepath.Rel(ctx.AuthDir, full); errRel == nil && rel != "" {
 			id = rel
 		}
+		// On Windows, normalize ID casing to avoid duplicate auth entries caused by case-insensitive paths.
+		if runtime.GOOS == "windows" {
+			id = strings.ToLower(id)
+		}

 		proxyURL := ""
 		if p, ok := metadata["proxy_url"].(string); ok {

@@ -10,6 +10,7 @@ import (
 	"net/url"
 	"os"
 	"path/filepath"
+	"runtime"
 	"strings"
 	"sync"
 	"time"

@@ -266,14 +267,17 @@ func (s *FileTokenStore) readAuthFile(path, baseDir string) (*cliproxyauth.Auth,
 }

 func (s *FileTokenStore) idFor(path, baseDir string) string {
-	if baseDir == "" {
-		return path
+	id := path
+	if baseDir != "" {
+		if rel, errRel := filepath.Rel(baseDir, path); errRel == nil && rel != "" {
+			id = rel
+		}
 	}
-	rel, err := filepath.Rel(baseDir, path)
-	if err != nil {
-		return path
+	// On Windows, normalize ID casing to avoid duplicate auth entries caused by case-insensitive paths.
+	if runtime.GOOS == "windows" {
+		id = strings.ToLower(id)
 	}
-	return rel
+	return id
 }

 func (s *FileTokenStore) resolveAuthPath(auth *cliproxyauth.Auth) (string, error) {

@@ -463,9 +463,14 @@ func (m *Manager) Update(ctx context.Context, auth *Auth) (*Auth, error) {
 		return nil, nil
 	}
 	m.mu.Lock()
-	if existing, ok := m.auths[auth.ID]; ok && existing != nil && !auth.indexAssigned && auth.Index == "" {
-		auth.Index = existing.Index
-		auth.indexAssigned = existing.indexAssigned
+	if existing, ok := m.auths[auth.ID]; ok && existing != nil {
+		if !auth.indexAssigned && auth.Index == "" {
+			auth.Index = existing.Index
+			auth.indexAssigned = existing.indexAssigned
+		}
+		if len(auth.ModelStates) == 0 && len(existing.ModelStates) > 0 {
+			auth.ModelStates = existing.ModelStates
+		}
 	}
 	auth.EnsureIndex()
 	m.auths[auth.ID] = auth.Clone()
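The merge rule introduced in `Update` (keep the stored runtime state when the incoming record leaves it empty) can be sketched with simplified types. The `auth` and `modelState` structs and the `mergeRuntimeState` helper below are hypothetical stand-ins, not the package's actual `Auth` and `ModelState` definitions.

```go
package main

import "fmt"

// modelState is a reduced stand-in for per-model runtime state.
type modelState struct {
	BackoffLevel int
}

// auth is a reduced stand-in carrying only the fields the merge touches.
type auth struct {
	ID          string
	Index       string
	ModelStates map[string]*modelState
}

// mergeRuntimeState copies runtime-only fields from existing into incoming
// whenever incoming leaves them empty, so an update that carries only
// configuration changes does not reset backoff or index state.
func mergeRuntimeState(incoming, existing *auth) {
	if incoming == nil || existing == nil {
		return
	}
	if incoming.Index == "" {
		incoming.Index = existing.Index
	}
	if len(incoming.ModelStates) == 0 && len(existing.ModelStates) > 0 {
		// The map is shared here, not copied; the hunk above does the same
		// and then stores auth.Clone().
		incoming.ModelStates = existing.ModelStates
	}
}

func main() {
	existing := &auth{
		ID:          "auth-1",
		Index:       "idx-1",
		ModelStates: map[string]*modelState{"test-model": {BackoffLevel: 7}},
	}
	incoming := &auth{ID: "auth-1"}

	mergeRuntimeState(incoming, existing)
	fmt.Println(incoming.Index, incoming.ModelStates["test-model"].BackoffLevel)
}
```

Note that under this rule an update cannot deliberately clear `ModelStates` by passing an empty map, since empty is treated as "not provided".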

49

sdk/cliproxy/auth/conductor_update_test.go

Normal file


@@ -0,0 +1,49 @@
+package auth
+
+import (
+	"context"
+	"testing"
+)
+
+func TestManager_Update_PreservesModelStates(t *testing.T) {
+	m := NewManager(nil, nil, nil)
+
+	model := "test-model"
+	backoffLevel := 7
+
+	if _, errRegister := m.Register(context.Background(), &Auth{
+		ID:       "auth-1",
+		Provider: "claude",
+		Metadata: map[string]any{"k": "v"},
+		ModelStates: map[string]*ModelState{
+			model: {
+				Quota: QuotaState{BackoffLevel: backoffLevel},
+			},
+		},
+	}); errRegister != nil {
+		t.Fatalf("register auth: %v", errRegister)
+	}
+
+	if _, errUpdate := m.Update(context.Background(), &Auth{
+		ID:       "auth-1",
+		Provider: "claude",
+		Metadata: map[string]any{"k": "v2"},
+	}); errUpdate != nil {
+		t.Fatalf("update auth: %v", errUpdate)
+	}
+
+	updated, ok := m.GetByID("auth-1")
+	if !ok || updated == nil {
+		t.Fatalf("expected auth to be present")
+	}
+	if len(updated.ModelStates) == 0 {
+		t.Fatalf("expected ModelStates to be preserved")
+	}
+	state := updated.ModelStates[model]
+	if state == nil {
+		t.Fatalf("expected model state to be present")
+	}
+	if state.Quota.BackoffLevel != backoffLevel {
+		t.Fatalf("expected BackoffLevel to be %d, got %d", backoffLevel, state.Quota.BackoffLevel)
+	}
+}

@@ -301,6 +301,9 @@ func (s *Service) applyCoreAuthAddOrUpdate(ctx context.Context, auth *coreauth.A
 		auth.CreatedAt = existing.CreatedAt
 		auth.LastRefreshedAt = existing.LastRefreshedAt
 		auth.NextRefreshAfter = existing.NextRefreshAfter
+		if len(auth.ModelStates) == 0 && len(existing.ModelStates) > 0 {
+			auth.ModelStates = existing.ModelStates
+		}
 		op = "update"
 		_, err = s.coreManager.Update(ctx, auth)
 	} else {

@@ -817,10 +820,13 @@ func (s *Service) registerModelsForAuth(a *coreauth.Auth) {
 	case "vertex":
 		// Vertex AI Gemini supports the same model identifiers as Gemini.
 		models = registry.GetGeminiVertexModels()
-		if authKind == "apikey" {
-			if entry := s.resolveConfigVertexCompatKey(a); entry != nil && len(entry.Models) > 0 {
+		if entry := s.resolveConfigVertexCompatKey(a); entry != nil {
+			if len(entry.Models) > 0 {
 				models = buildVertexCompatConfigModels(entry)
 			}
+			if authKind == "apikey" {
+				excluded = entry.ExcludedModels
+			}
 		}
 		models = applyExcludedModels(models, excluded)
 	case "gemini-cli":
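The body of `applyExcludedModels` is not part of this diff. As a rough illustration of what filtering a model list by an exclusion list can look like, the sketch below assumes glob-style patterns such as `imagen-*` and matches them with the standard library's `path.Match`; `filterExcluded` is a hypothetical name, and the project's real matching rules (case handling, wildcard syntax) may differ.

```go
package main

import (
	"fmt"
	"path"
)

// filterExcluded returns the models that match none of the exclusion patterns.
// Patterns use path.Match syntax, so "imagen-*" matches any name starting
// with "imagen-". Malformed patterns are ignored.
func filterExcluded(models, patterns []string) []string {
	kept := make([]string, 0, len(models))
	for _, model := range models {
		excluded := false
		for _, pattern := range patterns {
			if ok, err := path.Match(pattern, model); err == nil && ok {
				excluded = true
				break
			}
		}
		if !excluded {
			kept = append(kept, model)
		}
	}
	return kept
}

func main() {
	models := []string{"gemini-2.5-pro", "imagen-3.0-generate-002"}
	fmt.Println(filterExcluded(models, []string{"imagen-*"}))
}
```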
