mirror of https://github.com/router-for-me/CLIProxyAPIPlus.git

synced 2026-03-09 15:25:17 +00:00

Compare commits

120 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 68934942d0 | |
| | 09fec34e1c | |
| | 9229708b6c | |
| | 914db94e79 | |
| | 660bd7eff5 | |
| | b907d21851 | |
| | d6cc976d1f | |
| | 8aa2cce8c5 | |
| | bf9b2c49df | |
| | 77b42c6165 | |
| | 446150a747 | |
| | 1cbc4834e1 | |
| | a8a5d03c33 | |
| | 76aa917882 | |
| | 6ac9b31e4e | |
| | 0ad3e8457f | |
| | 444a47ae63 | |
| | 725f4fdff4 | |
| | c23e46f45d | |
| | b148820c35 | |
| | 134f41496d | |
| | c5838dd58d | |
| | b6ca5ef7ce | |
| | 1ae994b4aa | |
| | 84e9793e61 | |
| | 32e64dacfd | |
| | cc1d8f6629 | |
| | 5446cd2b02 | |
| | 8de0885b7d | |
| | 16243f18fd | |
| | a6ce5f36e6 | |
| | e73cf42e28 | |
| | b45343e812 | |
| | 8599b1560e | |
| | 8bde8c37c0 | |
| | 82df5bf88a | |
| | acb1066de8 | |
| | 27c68f5bb2 | |
| | 68dd2bfe82 | |
| | 65a87815e7 | |
| | b80793ca82 | |
| | 601550f238 | |
| | 41b1cf2273 | |
| | 2baf35b3ef | |
| | 846e75b893 | |
| | fc0257d6d9 | |
| | f3c164d345 | |
| | 4040b1e766 | |
| | 3b4f9f43db | |
| | 37a09ecb23 | |
| | 0da34d3c2d | |
| | 74bf7eda8f | |
| | 9032042cfa | |
| | 030bf5e6c7 | |
| | d3100085b0 | |
| | f481d25133 | |
| | 8c6c90da74 | |
| | 24bcfd9c03 | |
| | 816fb4c5da | |
| | c1bb77c7c9 | |
| | 6bcac3a55a | |
| | fc346f4537 | |
| | 43e531a3b6 | |
| | d24ea4ce2a | |
| | 2c30c981ae | |
| | aa1da8a858 | |
| | f1e9a787d7 | |
| | 4eeec297de | |
| | 77cc4ce3a0 | |
| | 37dfea1d3f | |
| | e6626c672a | |
| | c66cb0afd2 | |
| | fb48eee973 | |
| | bb44e5ec44 | |
| | c785c1a3ca | |
| | 514ae341c8 | |
| | 0659ffab75 | |
| | 8ce07f38dd | |
| | 7cb398d167 | |
| | c3e12c5e58 | |
| | 1825fc7503 | |
| | 48732ba05e | |
| | acf483c9e6 | |
| | 3b3e0d1141 | |
| | 7acd428507 | |
| | 0aaf177640 | |
| | 450d1227bd | |
| | 492b9c46f0 | |
| | 6e634fe3f9 | |
| | 4e26182d14 | |
| | 8f97a5f77c | |
| | eb7571936c | |
| | 5382764d8a | |
| | 49c8ec69d0 | |
| | 3b421c8181 | |
| | 2a4d3e60f3 | |
| | 8b5af2ab84 | |
| | d887716ebd | |
| | 5dc1848466 | |
| | 9491517b26 | |
| | 9370b5bd04 | |
| | abb51a0d93 | |
| | c8d809131b | |
| | dd71c73a9f | |
| | afc8a0f9be | |
| | a99522224f | |
| | f5d46b9ca2 | |
| | d693d7993b | |
| | 5936f9895c | |
| | 0cbfe7f457 | |
| | b9ae4ab803 | |
| | 65debb874f | |
| | 3caadac003 | |
| | 6a9e3a6b84 | |
| | 269972440a | |
| | cce13e6ad2 | |
| | 8a565dcad8 | |
| | d536110404 | |
| | 48e957ddff | |
| | 94563d622c | |

@@ -31,6 +31,7 @@ bin/*

|

||||

.agent/*

|

||||

.agents/*

|

||||

.opencode/*

|

||||

.idea/*

|

||||

.bmad/*

|

||||

_bmad/*

|

||||

_bmad-output/*

|

||||

|

||||

1

.gitignore

vendored


@@ -44,6 +44,7 @@ GEMINI.md

|

||||

.agents/*

|

||||

.agents/*

|

||||

.opencode/*

|

||||

.idea/*

|

||||

.bmad/*

|

||||

_bmad/*

|

||||

_bmad-output/*

|

||||

|

||||

58

README.md


@@ -10,23 +10,59 @@ The Plus release stays in lockstep with the mainline features.

|

||||

|

||||

## Differences from the Mainline

|

||||

|

||||

- Added GitHub Copilot support (OAuth login), provided by [em4go](https://github.com/em4go/CLIProxyAPI/tree/feature/github-copilot-auth)

|

||||

- Added Kiro (AWS CodeWhisperer) support (OAuth login), provided by [fuko2935](https://github.com/fuko2935/CLIProxyAPI/tree/feature/kiro-integration), [Ravens2121](https://github.com/Ravens2121/CLIProxyAPIPlus/)

|

||||

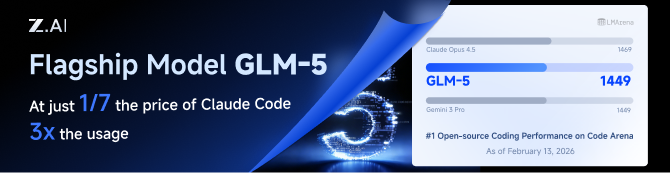

[](https://z.ai/subscribe?ic=8JVLJQFSKB)

|

||||

|

||||

## New Features (Plus Enhanced)

|

||||

|

||||

- **OAuth Web Authentication**: Browser-based OAuth login for Kiro with beautiful web UI

|

||||

- **Rate Limiter**: Built-in request rate limiting to prevent API abuse

|

||||

- **Background Token Refresh**: Automatic token refresh 10 minutes before expiration

|

||||

- **Metrics & Monitoring**: Request metrics collection for monitoring and debugging

|

||||

- **Device Fingerprint**: Device fingerprint generation for enhanced security

|

||||

- **Cooldown Management**: Smart cooldown mechanism for API rate limits

|

||||

- **Usage Checker**: Real-time usage monitoring and quota management

|

||||

- **Model Converter**: Unified model name conversion across providers

|

||||

- **UTF-8 Stream Processing**: Improved streaming response handling

|

||||

GLM CODING PLAN is a subscription service designed for AI coding, starting at just $10/month. It provides access to their flagship GLM-4.7 & (GLM-5 Only Available for Pro Users)model across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers top-tier, fast, and stable coding experiences.

|

||||

|

||||

## Kiro Authentication

|

||||

|

||||

### CLI Login

|

||||

|

||||

> **Note:** Google/GitHub login is not available for third-party applications due to AWS Cognito restrictions.

|

||||

|

||||

**AWS Builder ID** (recommended):

|

||||

|

||||

```bash

|

||||

# Device code flow

|

||||

./CLIProxyAPI --kiro-aws-login

|

||||

|

||||

# Authorization code flow

|

||||

./CLIProxyAPI --kiro-aws-authcode

|

||||

```

|

||||

|

||||

**Import token from Kiro IDE:**

|

||||

|

||||

```bash

|

||||

./CLIProxyAPI --kiro-import

|

||||

```

|

||||

|

||||

To get a token from Kiro IDE:

|

||||

|

||||

1. Open Kiro IDE and login with Google (or GitHub)

|

||||

2. Find the token file: `~/.kiro/kiro-auth-token.json`

|

||||

3. Run: `./CLIProxyAPI --kiro-import`

|

||||

|

||||

**AWS IAM Identity Center (IDC):**

|

||||

|

||||

```bash

|

||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start

|

||||

|

||||

# Specify region

|

||||

./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2

|

||||

```

|

||||

|

||||

**Additional flags:**

|

||||

|

||||

| Flag | Description |

|

||||

|------|-------------|

|

||||

| `--no-browser` | Don't open browser automatically, print URL instead |

|

||||

| `--no-incognito` | Use existing browser session (Kiro defaults to incognito). Useful for corporate SSO that requires an authenticated browser session |

|

||||

| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |

|

||||

| `--kiro-idc-region` | IDC region (default: `us-east-1`) |

|

||||

| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |

|

||||

|

||||

### Web-based OAuth Login

|

||||

|

||||

Access the Kiro OAuth web interface at:

|

||||

|

||||

60

README_CN.md


@@ -10,22 +10,58 @@


## Differences from the Mainline

- Added GitHub Copilot support (OAuth login), provided by [em4go](https://github.com/em4go/CLIProxyAPI/tree/feature/github-copilot-auth)
- Added Kiro (AWS CodeWhisperer) support (OAuth login), provided by [fuko2935](https://github.com/fuko2935/CLIProxyAPI/tree/feature/kiro-integration), [Ravens2121](https://github.com/Ravens2121/CLIProxyAPIPlus/)

[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

## New Features (Plus Enhanced)

- **OAuth Web Authentication**: Browser-based OAuth login for Kiro with a beautiful web UI
- **Rate Limiter**: Built-in request rate limiting to prevent API abuse
- **Background Token Refresh**: Automatic token refresh 10 minutes before expiration
- **Metrics & Monitoring**: Request metrics collection for monitoring and debugging
- **Device Fingerprint**: Device fingerprint generation for enhanced security
- **Cooldown Management**: Smart cooldown mechanism for handling API rate limits
- **Usage Checker**: Real-time usage monitoring and quota management
- **Model Converter**: Unified model name conversion across providers
- **UTF-8 Stream Processing**: Improved streaming response handling

GLM CODING PLAN is a subscription plan built for AI coding. Starting at just 20 yuan per month, it gives access to Zhipu's flagship GLM-4.7 model (currently limited to Pro users because of compute constraints) in more than ten mainstream AI coding tools such as Claude Code, Cline, and Roo Code, offering developers a top-tier coding experience.

## Kiro Authentication

Zhipu AI offers a special discount for this product. Purchases made through the following link get 10% off: https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII

### CLI Login

> **Note:** Google/GitHub login is not available for third-party applications due to AWS Cognito restrictions.

**AWS Builder ID** (recommended):

```bash
# Device code flow
./CLIProxyAPI --kiro-aws-login

# Authorization code flow
./CLIProxyAPI --kiro-aws-authcode
```

**Import token from Kiro IDE:**

```bash
./CLIProxyAPI --kiro-import
```

Steps to get a token:

1. Open Kiro IDE and log in with Google (or GitHub)
2. Find the token file: `~/.kiro/kiro-auth-token.json`
3. Run: `./CLIProxyAPI --kiro-import`

**AWS IAM Identity Center (IDC):**

```bash
./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start

# Specify region
./CLIProxyAPI --kiro-idc-login --kiro-idc-start-url https://d-xxxxxxxxxx.awsapps.com/start --kiro-idc-region us-west-2
```

**Additional flags:**

| Flag | Description |
|------|------|
| `--no-browser` | Don't open the browser automatically; print the URL instead |
| `--no-incognito` | Use the existing browser session (Kiro defaults to incognito mode). Useful for corporate SSO that requires an already authenticated browser session |
| `--kiro-idc-start-url` | IDC Start URL (required with `--kiro-idc-login`) |
| `--kiro-idc-region` | IDC region (default: `us-east-1`) |
| `--kiro-idc-flow` | IDC flow type: `authcode` (default) or `device` |

### Web-based OAuth Login


@@ -72,6 +72,7 @@ func main() {

|

||||

// Command-line flags to control the application's behavior.

|

||||

var login bool

|

||||

var codexLogin bool

|

||||

var codexDeviceLogin bool

|

||||

var claudeLogin bool

|

||||

var qwenLogin bool

|

||||

var kiloLogin bool

|

||||

@@ -86,6 +87,10 @@ func main() {

|

||||

var kiroAWSLogin bool

|

||||

var kiroAWSAuthCode bool

|

||||

var kiroImport bool

|

||||

var kiroIDCLogin bool

|

||||

var kiroIDCStartURL string

|

||||

var kiroIDCRegion string

|

||||

var kiroIDCFlow string

|

||||

var githubCopilotLogin bool

|

||||

var projectID string

|

||||

var vertexImport string

|

||||

@@ -99,6 +104,7 @@ func main() {

|

||||

// Define command-line flags for different operation modes.

|

||||

flag.BoolVar(&login, "login", false, "Login Google Account")

|

||||

flag.BoolVar(&codexLogin, "codex-login", false, "Login to Codex using OAuth")

|

||||

flag.BoolVar(&codexDeviceLogin, "codex-device-login", false, "Login to Codex using device code flow")

|

||||

flag.BoolVar(&claudeLogin, "claude-login", false, "Login to Claude using OAuth")

|

||||

flag.BoolVar(&qwenLogin, "qwen-login", false, "Login to Qwen using OAuth")

|

||||

flag.BoolVar(&kiloLogin, "kilo-login", false, "Login to Kilo AI using device flow")

|

||||

@@ -115,6 +121,10 @@ func main() {

|

||||

flag.BoolVar(&kiroAWSLogin, "kiro-aws-login", false, "Login to Kiro using AWS Builder ID (device code flow)")

|

||||

flag.BoolVar(&kiroAWSAuthCode, "kiro-aws-authcode", false, "Login to Kiro using AWS Builder ID (authorization code flow, better UX)")

|

||||

flag.BoolVar(&kiroImport, "kiro-import", false, "Import Kiro token from Kiro IDE (~/.aws/sso/cache/kiro-auth-token.json)")

|

||||

flag.BoolVar(&kiroIDCLogin, "kiro-idc-login", false, "Login to Kiro using IAM Identity Center (IDC)")

|

||||

flag.StringVar(&kiroIDCStartURL, "kiro-idc-start-url", "", "IDC start URL (required with --kiro-idc-login)")

|

||||

flag.StringVar(&kiroIDCRegion, "kiro-idc-region", "", "IDC region (default: us-east-1)")

|

||||

flag.StringVar(&kiroIDCFlow, "kiro-idc-flow", "", "IDC flow type: authcode (default) or device")

|

||||

flag.BoolVar(&githubCopilotLogin, "github-copilot-login", false, "Login to GitHub Copilot using device flow")

|

||||

flag.StringVar(&projectID, "project_id", "", "Project ID (Gemini only, not required)")

|

||||

flag.StringVar(&configPath, "config", DefaultConfigPath, "Configure File Path")

|

||||
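The `--kiro-idc-*` flags above register empty-string defaults, while their help text names `us-east-1` and `authcode` as the effective defaults. One way those values might be resolved is sketched below. `resolveIDCOptions` and `idcOptions` are hypothetical; how `cmd.DoKiroIDCLogin` actually validates its arguments is not visible in this diff.

```go
package main

import (
	"errors"
	"fmt"
)

// idcOptions holds the resolved IDC login settings (illustrative type).
type idcOptions struct {
	StartURL string
	Region   string
	Flow     string
}

// resolveIDCOptions applies the defaults named in the flag help text and
// rejects values the help text does not list. Assumed behavior, not the
// project's confirmed implementation.
func resolveIDCOptions(startURL, region, flow string) (idcOptions, error) {
	if startURL == "" {
		return idcOptions{}, errors.New("--kiro-idc-start-url is required with --kiro-idc-login")
	}
	if region == "" {
		region = "us-east-1"
	}
	switch flow {
	case "":
		flow = "authcode"
	case "authcode", "device":
		// accepted as given
	default:
		return idcOptions{}, fmt.Errorf("unknown --kiro-idc-flow %q (want authcode or device)", flow)
	}
	return idcOptions{StartURL: startURL, Region: region, Flow: flow}, nil
}

func main() {
	opts, err := resolveIDCOptions("https://d-xxxxxxxxxx.awsapps.com/start", "", "")
	fmt.Println(opts.Region, opts.Flow, err)
}
```

Keeping the flag defaults empty and resolving them later lets a value from the config file (such as the `region` key under `kiro:`) take effect when the flag is not passed.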

@@ -502,6 +512,9 @@ func main() {

|

||||

} else if codexLogin {

|

||||

// Handle Codex login

|

||||

cmd.DoCodexLogin(cfg, options)

|

||||

} else if codexDeviceLogin {

|

||||

// Handle Codex device-code login

|

||||

cmd.DoCodexDeviceLogin(cfg, options)

|

||||

} else if claudeLogin {

|

||||

// Handle Claude login

|

||||

cmd.DoClaudeLogin(cfg, options)

|

||||

@@ -521,24 +534,34 @@ func main() {

|

||||

// Note: This config mutation is safe - auth commands exit after completion

|

||||

// and don't share config with StartService (which is in the else branch)

|

||||

setKiroIncognitoMode(cfg, useIncognito, noIncognito)

|

||||

kiro.InitFingerprintConfig(cfg)

|

||||

cmd.DoKiroLogin(cfg, options)

|

||||

} else if kiroGoogleLogin {

|

||||

// For Kiro auth, default to incognito mode for multi-account support

|

||||

// Users can explicitly override with --no-incognito

|

||||

// Note: This config mutation is safe - auth commands exit after completion

|

||||

setKiroIncognitoMode(cfg, useIncognito, noIncognito)

|

||||

kiro.InitFingerprintConfig(cfg)

|

||||

cmd.DoKiroGoogleLogin(cfg, options)

|

||||

} else if kiroAWSLogin {

|

||||

// For Kiro auth, default to incognito mode for multi-account support

|

||||

// Users can explicitly override with --no-incognito

|

||||

setKiroIncognitoMode(cfg, useIncognito, noIncognito)

|

||||

kiro.InitFingerprintConfig(cfg)

|

||||

cmd.DoKiroAWSLogin(cfg, options)

|

||||

} else if kiroAWSAuthCode {

|

||||

// For Kiro auth with authorization code flow (better UX)

|

||||

setKiroIncognitoMode(cfg, useIncognito, noIncognito)

|

||||

kiro.InitFingerprintConfig(cfg)

|

||||

cmd.DoKiroAWSAuthCodeLogin(cfg, options)

|

||||

} else if kiroImport {

|

||||

kiro.InitFingerprintConfig(cfg)

|

||||

cmd.DoKiroImport(cfg, options)

|

||||

} else if kiroIDCLogin {

|

||||

// For Kiro IDC auth, default to incognito mode for multi-account support

|

||||

setKiroIncognitoMode(cfg, useIncognito, noIncognito)

|

||||

kiro.InitFingerprintConfig(cfg)

|

||||

cmd.DoKiroIDCLogin(cfg, options, kiroIDCStartURL, kiroIDCRegion, kiroIDCFlow)

|

||||

} else {

|

||||

// In cloud deploy mode without config file, just wait for shutdown signals

|

||||

if isCloudDeploy && !configFileExists {

|

||||

|

||||

@@ -80,6 +80,10 @@ passthrough-headers: false

|

||||

# Number of times to retry a request. Retries will occur if the HTTP response code is 403, 408, 500, 502, 503, or 504.

|

||||

request-retry: 3

|

||||

|

||||

# Maximum number of different credentials to try for one failed request.

|

||||

# Set to 0 to keep legacy behavior (try all available credentials).

|

||||

max-retry-credentials: 0

|

||||

|

||||

# Maximum wait time in seconds for a cooled-down credential before triggering a retry.

|

||||

max-retry-interval: 30

|

||||

|

||||
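The `max-retry-credentials` comment describes a cap on how many different credentials one failed request may try, with `0` keeping the legacy behavior of trying all of them. A sketch of that rule follows, using a hypothetical helper `credentialAttempts` and assuming negative values are treated like `0` (the diff does not say).

```go
package main

import "fmt"

// credentialAttempts returns how many distinct credentials a failed request
// may try. A limit of 0 (or, by assumption, a negative limit) means no cap.
func credentialAttempts(maxRetryCredentials, available int) int {
	if maxRetryCredentials <= 0 || maxRetryCredentials > available {
		return available
	}
	return maxRetryCredentials
}

func main() {
	fmt.Println(credentialAttempts(0, 5)) // 5: legacy behavior, try all
	fmt.Println(credentialAttempts(2, 5)) // 2: capped
	fmt.Println(credentialAttempts(9, 5)) // 5: cap exceeds what is available
}
```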

@@ -179,6 +183,8 @@ nonstream-keepalive-interval: 0

|

||||

#kiro:

|

||||

# - token-file: "~/.aws/sso/cache/kiro-auth-token.json" # path to Kiro token file

|

||||

# agent-task-type: "" # optional: "vibe" or empty (API default)

|

||||

# start-url: "https://your-company.awsapps.com/start" # optional: IDC start URL (preset for login)

|

||||

# region: "us-east-1" # optional: OIDC region for IDC login and token refresh

|

||||

# - access-token: "aoaAAAAA..." # or provide tokens directly

|

||||

# refresh-token: "aorAAAAA..."

|

||||

# profile-arn: "arn:aws:codewhisperer:us-east-1:..."

|

||||

|

||||

@@ -48,14 +48,11 @@ import (

 var lastRefreshKeys = []string{"last_refresh", "lastRefresh", "last_refreshed_at", "lastRefreshedAt"}

 const (
-	anthropicCallbackPort   = 54545
-	geminiCallbackPort      = 8085
-	codexCallbackPort       = 1455
-	geminiCLIEndpoint       = "https://cloudcode-pa.googleapis.com"
-	geminiCLIVersion        = "v1internal"
-	geminiCLIUserAgent      = "google-api-nodejs-client/9.15.1"
-	geminiCLIApiClient      = "gl-node/22.17.0"
-	geminiCLIClientMetadata = "ideType=IDE_UNSPECIFIED,platform=PLATFORM_UNSPECIFIED,pluginType=GEMINI"
+	anthropicCallbackPort = 54545
+	geminiCallbackPort    = 8085
+	codexCallbackPort     = 1455
+	geminiCLIEndpoint     = "https://cloudcode-pa.googleapis.com"
+	geminiCLIVersion      = "v1internal"
 )

 type callbackForwarder struct {

@@ -412,6 +409,9 @@ func (h *Handler) buildAuthFileEntry(auth *coreauth.Auth) gin.H {

|

||||

if !auth.LastRefreshedAt.IsZero() {

|

||||

entry["last_refresh"] = auth.LastRefreshedAt

|

||||

}

|

||||

if !auth.NextRetryAfter.IsZero() {

|

||||

entry["next_retry_after"] = auth.NextRetryAfter

|

||||

}

|

||||

if path != "" {

|

||||

entry["path"] = path

|

||||

entry["source"] = "file"

|

||||

@@ -951,11 +951,17 @@ func (h *Handler) saveTokenRecord(ctx context.Context, record *coreauth.Auth) (s

|

||||

if store == nil {

|

||||

return "", fmt.Errorf("token store unavailable")

|

||||

}

|

||||

if h.postAuthHook != nil {

|

||||

if err := h.postAuthHook(ctx, record); err != nil {

|

||||

return "", fmt.Errorf("post-auth hook failed: %w", err)

|

||||

}

|

||||

}

|

||||

return store.Save(ctx, record)

|

||||

}

|

||||

|

||||

func (h *Handler) RequestAnthropicToken(c *gin.Context) {

|

||||

ctx := context.Background()

|

||||

ctx = PopulateAuthContext(ctx, c)

|

||||

|

||||

fmt.Println("Initializing Claude authentication...")

|

||||

|

||||

@@ -1100,6 +1106,7 @@ func (h *Handler) RequestAnthropicToken(c *gin.Context) {

|

||||

|

||||

func (h *Handler) RequestGeminiCLIToken(c *gin.Context) {

|

||||

ctx := context.Background()

|

||||

ctx = PopulateAuthContext(ctx, c)

|

||||

proxyHTTPClient := util.SetProxy(&h.cfg.SDKConfig, &http.Client{})

|

||||

ctx = context.WithValue(ctx, oauth2.HTTPClient, proxyHTTPClient)

|

||||

|

||||

@@ -1358,6 +1365,7 @@ func (h *Handler) RequestGeminiCLIToken(c *gin.Context) {

|

||||

|

||||

func (h *Handler) RequestCodexToken(c *gin.Context) {

|

||||

ctx := context.Background()

|

||||

ctx = PopulateAuthContext(ctx, c)

|

||||

|

||||

fmt.Println("Initializing Codex authentication...")

|

||||

|

||||

@@ -1503,6 +1511,7 @@ func (h *Handler) RequestCodexToken(c *gin.Context) {

|

||||

|

||||

func (h *Handler) RequestAntigravityToken(c *gin.Context) {

|

||||

ctx := context.Background()

|

||||

ctx = PopulateAuthContext(ctx, c)

|

||||

|

||||

fmt.Println("Initializing Antigravity authentication...")

|

||||

|

||||

@@ -1667,6 +1676,7 @@ func (h *Handler) RequestAntigravityToken(c *gin.Context) {

|

||||

|

||||

func (h *Handler) RequestQwenToken(c *gin.Context) {

|

||||

ctx := context.Background()

|

||||

ctx = PopulateAuthContext(ctx, c)

|

||||

|

||||

fmt.Println("Initializing Qwen authentication...")

|

||||

|

||||

@@ -1722,6 +1732,7 @@ func (h *Handler) RequestQwenToken(c *gin.Context) {

|

||||

|

||||

func (h *Handler) RequestKimiToken(c *gin.Context) {

|

||||

ctx := context.Background()

|

||||

ctx = PopulateAuthContext(ctx, c)

|

||||

|

||||

fmt.Println("Initializing Kimi authentication...")

|

||||

|

||||

@@ -1798,6 +1809,7 @@ func (h *Handler) RequestKimiToken(c *gin.Context) {

|

||||

|

||||

func (h *Handler) RequestIFlowToken(c *gin.Context) {

|

||||

ctx := context.Background()

|

||||

ctx = PopulateAuthContext(ctx, c)

|

||||

|

||||

fmt.Println("Initializing iFlow authentication...")

|

||||

|

||||

@@ -1917,8 +1929,6 @@ func (h *Handler) RequestGitHubToken(c *gin.Context) {

 	state := fmt.Sprintf("gh-%d", time.Now().UnixNano())

 	// Initialize Copilot auth service
-	// We need to import "github.com/router-for-me/CLIProxyAPI/v6/internal/auth/copilot" first if not present
-	// Assuming copilot package is imported as "copilot"
 	deviceClient := copilot.NewDeviceFlowClient(h.cfg)

 	// Initiate device flow

@@ -1932,7 +1942,7 @@ func (h *Handler) RequestGitHubToken(c *gin.Context) {

 	authURL := deviceCode.VerificationURI
 	userCode := deviceCode.UserCode

-	RegisterOAuthSession(state, "github")
+	RegisterOAuthSession(state, "github-copilot")

 	go func() {
 		fmt.Printf("Please visit %s and enter code: %s\n", authURL, userCode)

@@ -1944,9 +1954,13 @@ func (h *Handler) RequestGitHubToken(c *gin.Context) {

 			return
 		}

-		username, errUser := deviceClient.FetchUserInfo(ctx, tokenData.AccessToken)
+		userInfo, errUser := deviceClient.FetchUserInfo(ctx, tokenData.AccessToken)
 		if errUser != nil {
 			log.Warnf("Failed to fetch user info: %v", errUser)
+		}
+
+		username := userInfo.Login
+		if username == "" {
 			username = "github-user"
 		}


@@ -1955,18 +1969,26 @@ func (h *Handler) RequestGitHubToken(c *gin.Context) {

 			TokenType: tokenData.TokenType,
 			Scope: tokenData.Scope,
 			Username: username,
+			Email: userInfo.Email,
+			Name: userInfo.Name,
 			Type: "github-copilot",
 		}

-		fileName := fmt.Sprintf("github-%s.json", username)
+		fileName := fmt.Sprintf("github-copilot-%s.json", username)
+		label := userInfo.Email
+		if label == "" {
+			label = username
+		}
 		record := &coreauth.Auth{
 			ID: fileName,
-			Provider: "github",
+			Provider: "github-copilot",
+			Label: label,
 			FileName: fileName,
 			Storage: tokenStorage,
 			Metadata: map[string]any{
-				"email": username,
+				"email": userInfo.Email,
 				"username": username,
+				"name": userInfo.Name,
 			},
 		}


@@ -1980,7 +2002,7 @@ func (h *Handler) RequestGitHubToken(c *gin.Context) {

 		fmt.Printf("Authentication successful! Token saved to %s\n", savedPath)
 		fmt.Println("You can now use GitHub Copilot services through this CLI")
 		CompleteOAuthSession(state)
-		CompleteOAuthSessionsByProvider("github")
+		CompleteOAuthSessionsByProvider("github-copilot")
 	}()

 	c.JSON(200, gin.H{

|

||||
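The `RequestGitHubToken` hunks above derive three values from the fetched user info: a username that falls back to `github-user`, a file name with the `github-copilot-` prefix, and a label that prefers the email address. A compact sketch follows, assuming a hypothetical `userInfo` struct that holds only the fields the diff reads.

```go
package main

import "fmt"

// userInfo mirrors the three fields the diff reads (Login, Email, Name);
// the real copilot type may carry more. Illustrative only.
type userInfo struct {
	Login, Email, Name string
}

// authNames derives the username, file name, and label in the same order
// and with the same fallbacks as the hunk.
func authNames(u userInfo) (username, fileName, label string) {
	username = u.Login
	if username == "" {
		username = "github-user"
	}
	fileName = fmt.Sprintf("github-copilot-%s.json", username)
	label = u.Email
	if label == "" {
		label = username
	}
	return username, fileName, label
}

func main() {
	fmt.Println(authNames(userInfo{Login: "octocat", Email: "octocat@example.com"}))
	fmt.Println(authNames(userInfo{}))
}
```

Note that the file name is keyed on the username alone, so two logins without a resolvable `Login` would both map to `github-copilot-github-user.json`.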

@@ -2359,9 +2381,7 @@ func callGeminiCLI(ctx context.Context, httpClient *http.Client, endpoint string

 		return fmt.Errorf("create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
-	req.Header.Set("X-Goog-Api-Client", geminiCLIApiClient)
-	req.Header.Set("Client-Metadata", geminiCLIClientMetadata)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))

 	resp, errDo := httpClient.Do(req)
 	if errDo != nil {

@@ -2431,7 +2451,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec

 		return false, fmt.Errorf("failed to create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 	resp, errDo := httpClient.Do(req)
 	if errDo != nil {
 		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -2452,7 +2472,7 @@ func checkCloudAPIIsEnabled(ctx context.Context, httpClient *http.Client, projec

 		return false, fmt.Errorf("failed to create request: %w", errRequest)
 	}
 	req.Header.Set("Content-Type", "application/json")
-	req.Header.Set("User-Agent", geminiCLIUserAgent)
+	req.Header.Set("User-Agent", misc.GeminiCLIUserAgent(""))
 	resp, errDo = httpClient.Do(req)
 	if errDo != nil {
 		return false, fmt.Errorf("failed to execute request: %w", errDo)

@@ -2521,6 +2541,14 @@ func (h *Handler) GetAuthStatus(c *gin.Context) {

|

||||

c.JSON(http.StatusOK, gin.H{"status": "wait"})

|

||||

}

|

||||

|

||||

// PopulateAuthContext extracts request info and adds it to the context

|

||||

func PopulateAuthContext(ctx context.Context, c *gin.Context) context.Context {

|

||||

info := &coreauth.RequestInfo{

|

||||

Query: c.Request.URL.Query(),

|

||||

Headers: c.Request.Header,

|

||||

}

|

||||

return coreauth.WithRequestInfo(ctx, info)

|

||||

}

|

||||

const kiroCallbackPort = 9876

|

||||

|

||||

func (h *Handler) RequestKiroToken(c *gin.Context) {

|

||||

|

||||

@@ -47,6 +47,7 @@ type Handler struct {

|

||||

allowRemoteOverride bool

|

||||

envSecret string

|

||||

logDir string

|

||||

postAuthHook coreauth.PostAuthHook

|

||||

}

|

||||

|

||||

// NewHandler creates a new management handler instance.

|

||||

@@ -128,6 +129,11 @@ func (h *Handler) SetLogDirectory(dir string) {

|

||||

h.logDir = dir

|

||||

}

|

||||

|

||||

// SetPostAuthHook registers a hook to be called after auth record creation but before persistence.

|

||||

func (h *Handler) SetPostAuthHook(hook coreauth.PostAuthHook) {

|

||||

h.postAuthHook = hook

|

||||

}

|

||||

|

||||

// Middleware enforces access control for management endpoints.

|

||||

// All requests (local and remote) require a valid management key.

|

||||

// Additionally, remote access requires allow-remote-management=true.

|

||||

|

||||

@@ -15,6 +15,7 @@ import (

|

||||

"strings"

|

||||

|

||||

"github.com/gin-gonic/gin"

|

||||

"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"

|

||||

log "github.com/sirupsen/logrus"

|

||||

)

|

||||

|

||||

@@ -77,6 +78,9 @@ func createReverseProxy(upstreamURL string, secretSource SecretSource) (*httputi

|

||||

req.Header.Del("X-Api-Key")

|

||||

req.Header.Del("X-Goog-Api-Key")

|

||||

|

||||

// Remove proxy, client identity, and browser fingerprint headers

|

||||

misc.ScrubProxyAndFingerprintHeaders(req)

|

||||

|

||||

// Remove query-based credentials if they match the authenticated client API key.

|

||||

// This prevents leaking client auth material to the Amp upstream while avoiding

|

||||

// breaking unrelated upstream query parameters.

|

||||

|

||||

@@ -52,6 +52,7 @@ type serverOptionConfig struct {

|

||||

keepAliveEnabled bool

|

||||

keepAliveTimeout time.Duration

|

||||

keepAliveOnTimeout func()

|

||||

postAuthHook auth.PostAuthHook

|

||||

}

|

||||

|

||||

// ServerOption customises HTTP server construction.

|

||||

@@ -59,10 +60,8 @@ type ServerOption func(*serverOptionConfig)


 func defaultRequestLoggerFactory(cfg *config.Config, configPath string) logging.RequestLogger {
 	configDir := filepath.Dir(configPath)
-	if base := util.WritablePath(); base != "" {
-		return logging.NewFileRequestLogger(cfg.RequestLog, filepath.Join(base, "logs"), configDir, cfg.ErrorLogsMaxFiles)
-	}
-	return logging.NewFileRequestLogger(cfg.RequestLog, "logs", configDir, cfg.ErrorLogsMaxFiles)
+	logsDir := logging.ResolveLogDirectory(cfg)
+	return logging.NewFileRequestLogger(cfg.RequestLog, logsDir, configDir, cfg.ErrorLogsMaxFiles)
 }

 // WithMiddleware appends additional Gin middleware during server construction.

@@ -112,6 +111,13 @@ func WithRequestLoggerFactory(factory func(*config.Config, string) logging.Reque

|

||||

}

|

||||

}

|

||||

|

||||

// WithPostAuthHook registers a hook to be called after auth record creation.

|

||||

func WithPostAuthHook(hook auth.PostAuthHook) ServerOption {

|

||||

return func(cfg *serverOptionConfig) {

|

||||

cfg.postAuthHook = hook

|

||||

}

|

||||

}

|

||||

|

||||

// Server represents the main API server.

|

||||

// It encapsulates the Gin engine, HTTP server, handlers, and configuration.

|

||||

type Server struct {

|

||||
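`WithPostAuthHook` follows the functional-options pattern already used by `ServerOption`: each option is a closure that mutates a private config struct, and the constructor applies them in order. A self-contained sketch follows, with a simplified `hook` type standing in for `auth.PostAuthHook` and a hypothetical `applyOptions` helper playing the role of the constructor.

```go
package main

import "fmt"

// hook is a simplified stand-in for auth.PostAuthHook.
type hook func(id string) error

// serverOptionConfig collects settings before the server is built.
type serverOptionConfig struct {
	postAuthHook hook
}

// serverOption mutates the config; mirrors the ServerOption shape in the diff.
type serverOption func(*serverOptionConfig)

// withPostAuthHook returns an option that records the hook.
func withPostAuthHook(h hook) serverOption {
	return func(cfg *serverOptionConfig) { cfg.postAuthHook = h }
}

// applyOptions folds the options into one config, as a constructor would.
func applyOptions(opts ...serverOption) serverOptionConfig {
	var cfg serverOptionConfig
	for _, opt := range opts {
		opt(&cfg)
	}
	return cfg
}

func main() {
	cfg := applyOptions(withPostAuthHook(func(id string) error {
		fmt.Println("created", id)
		return nil
	}))
	if cfg.postAuthHook != nil {
		_ = cfg.postAuthHook("github-copilot-octocat.json")
	}
}
```

The nil check before use matches the diff, where `NewServer` only calls `SetPostAuthHook` when an option supplied a hook.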

@@ -252,7 +258,7 @@ func NewServer(cfg *config.Config, authManager *auth.Manager, accessManager *sdk

 	s.oldConfigYaml, _ = yaml.Marshal(cfg)
 	s.applyAccessConfig(nil, cfg)
 	if authManager != nil {
-		authManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second)
+		authManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second, cfg.MaxRetryCredentials)
 	}
 	managementasset.SetCurrentConfig(cfg)
 	auth.SetQuotaCooldownDisabled(cfg.DisableCooling)

@@ -263,6 +269,9 @@ func NewServer(cfg *config.Config, authManager *auth.Manager, accessManager *sdk

|

||||

}

|

||||

logDir := logging.ResolveLogDirectory(cfg)

|

||||

s.mgmt.SetLogDirectory(logDir)

|

||||

if optionState.postAuthHook != nil {

|

||||

s.mgmt.SetPostAuthHook(optionState.postAuthHook)

|

||||

}

|

||||

s.localPassword = optionState.localPassword

|

||||

|

||||

// Setup routes

|

||||

@@ -935,7 +944,7 @@ func (s *Server) UpdateClients(cfg *config.Config) {

 	}

 	if s.handlers != nil && s.handlers.AuthManager != nil {
-		s.handlers.AuthManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second)
+		s.handlers.AuthManager.SetRetryConfig(cfg.RequestRetry, time.Duration(cfg.MaxRetryInterval)*time.Second, cfg.MaxRetryCredentials)
 	}

 	// Update log level dynamically when debug flag changes

@@ -7,9 +7,11 @@ import (

|

||||

"path/filepath"

|

||||

"strings"

|

||||

"testing"

|

||||

"time"

|

||||

|

||||

gin "github.com/gin-gonic/gin"

|

||||

proxyconfig "github.com/router-for-me/CLIProxyAPI/v6/internal/config"

|

||||

internallogging "github.com/router-for-me/CLIProxyAPI/v6/internal/logging"

|

||||

sdkaccess "github.com/router-for-me/CLIProxyAPI/v6/sdk/access"

|

||||

"github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"

|

||||

sdkconfig "github.com/router-for-me/CLIProxyAPI/v6/sdk/config"

|

||||

@@ -109,3 +111,100 @@ func TestAmpProviderModelRoutes(t *testing.T) {

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func TestDefaultRequestLoggerFactory_UsesResolvedLogDirectory(t *testing.T) {

|

||||

t.Setenv("WRITABLE_PATH", "")

|

||||

t.Setenv("writable_path", "")

|

||||

|

||||

originalWD, errGetwd := os.Getwd()

|

||||

if errGetwd != nil {

|

||||

t.Fatalf("failed to get current working directory: %v", errGetwd)

|

||||

}

|

||||

|

||||

tmpDir := t.TempDir()

|

||||

if errChdir := os.Chdir(tmpDir); errChdir != nil {

|

||||

t.Fatalf("failed to switch working directory: %v", errChdir)

|

||||

}

|

||||

defer func() {

|

||||

if errChdirBack := os.Chdir(originalWD); errChdirBack != nil {

|

||||

t.Fatalf("failed to restore working directory: %v", errChdirBack)

|

||||

}

|

||||

}()

|

||||

|

||||

// Force ResolveLogDirectory to fallback to auth-dir/logs by making ./logs not a writable directory.

|

||||

if errWriteFile := os.WriteFile(filepath.Join(tmpDir, "logs"), []byte("not-a-directory"), 0o644); errWriteFile != nil {

|

||||

t.Fatalf("failed to create blocking logs file: %v", errWriteFile)

|

||||

}

|

||||

|

||||

configDir := filepath.Join(tmpDir, "config")

|

||||

if errMkdirConfig := os.MkdirAll(configDir, 0o755); errMkdirConfig != nil {

|

||||

t.Fatalf("failed to create config dir: %v", errMkdirConfig)

|

||||

}

|

||||

configPath := filepath.Join(configDir, "config.yaml")

|

||||

|

||||

authDir := filepath.Join(tmpDir, "auth")

|

||||

if errMkdirAuth := os.MkdirAll(authDir, 0o700); errMkdirAuth != nil {

|

||||

t.Fatalf("failed to create auth dir: %v", errMkdirAuth)

|

||||

}

|

||||

|

||||

cfg := &proxyconfig.Config{

|

||||

SDKConfig: proxyconfig.SDKConfig{

|

||||

RequestLog: false,

|

||||

},

|

||||

AuthDir: authDir,

|

||||

ErrorLogsMaxFiles: 10,

|

||||

}

|

||||

|

||||

logger := defaultRequestLoggerFactory(cfg, configPath)

|

||||

fileLogger, ok := logger.(*internallogging.FileRequestLogger)

|

||||

if !ok {

|

||||

t.Fatalf("expected *FileRequestLogger, got %T", logger)

|

||||

}

|

||||

|

||||

errLog := fileLogger.LogRequestWithOptions(

|

||||

"/v1/chat/completions",

|

||||

http.MethodPost,

|

||||

map[string][]string{"Content-Type": []string{"application/json"}},

|

||||

[]byte(`{"input":"hello"}`),

|

||||

http.StatusBadGateway,

|

||||

map[string][]string{"Content-Type": []string{"application/json"}},

|

||||

[]byte(`{"error":"upstream failure"}`),

|

||||

nil,

|

||||

nil,

|

||||

nil,

|

||||

true,

|

||||

"issue-1711",

|

||||

time.Now(),

|

||||

time.Now(),

|

||||

)

|

||||

if errLog != nil {

|

||||

t.Fatalf("failed to write forced error request log: %v", errLog)

|

||||

}

|

||||

|

||||

authLogsDir := filepath.Join(authDir, "logs")

|

||||

authEntries, errReadAuthDir := os.ReadDir(authLogsDir)

|

||||

if errReadAuthDir != nil {

|

||||

t.Fatalf("failed to read auth logs dir %s: %v", authLogsDir, errReadAuthDir)

|

||||

}

|

||||

foundErrorLogInAuthDir := false

|

||||

for _, entry := range authEntries {

|

||||

if strings.HasPrefix(entry.Name(), "error-") && strings.HasSuffix(entry.Name(), ".log") {

|

||||

foundErrorLogInAuthDir = true

|

||||

break

|

||||

}

|

||||

}

|

||||

if !foundErrorLogInAuthDir {

|

||||

t.Fatalf("expected forced error log in auth fallback dir %s, got entries: %+v", authLogsDir, authEntries)

|

||||

}

|

||||

|

||||

configLogsDir := filepath.Join(configDir, "logs")

|

||||

configEntries, errReadConfigDir := os.ReadDir(configLogsDir)

|

||||

if errReadConfigDir != nil && !os.IsNotExist(errReadConfigDir) {

|

||||

t.Fatalf("failed to inspect config logs dir %s: %v", configLogsDir, errReadConfigDir)

|

||||

}

|

||||

for _, entry := range configEntries {

|

||||

if strings.HasPrefix(entry.Name(), "error-") && strings.HasSuffix(entry.Name(), ".log") {

|

||||

t.Fatalf("unexpected forced error log in config dir %s", configLogsDir)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

@@ -36,11 +36,21 @@ type ClaudeTokenStorage struct {

|

||||

|

||||

// Expire is the timestamp when the current access token expires.

|

||||

Expire string `json:"expired"`

|

||||

|

||||

// Metadata holds arbitrary key-value pairs injected via hooks.

|

||||

// It is not exported to JSON directly to allow flattening during serialization.

|

||||

Metadata map[string]any `json:"-"`

|

||||

}

|

||||

|

||||

// SetMetadata allows external callers to inject metadata into the storage before saving.

|

||||

func (ts *ClaudeTokenStorage) SetMetadata(meta map[string]any) {

|

||||

ts.Metadata = meta

|

||||

}

|

||||

|

||||

// SaveTokenToFile serializes the Claude token storage to a JSON file.

|

||||

// This method creates the necessary directory structure and writes the token

|

||||

// data in JSON format to the specified file path for persistent storage.

|

||||

// It merges any injected metadata into the top-level JSON object.

|

||||

//

|

||||

// Parameters:

|

||||

// - authFilePath: The full path where the token file should be saved

|

||||

@@ -65,8 +75,14 @@ func (ts *ClaudeTokenStorage) SaveTokenToFile(authFilePath string) error {

|

||||

_ = f.Close()

|

||||

}()

|

||||

|

||||

// Merge metadata using helper

|

||||

data, errMerge := misc.MergeMetadata(ts, ts.Metadata)

|

||||

if errMerge != nil {

|

||||

return fmt.Errorf("failed to merge metadata: %w", errMerge)

|

||||

}

|

||||

|

||||

// Encode and write the token data as JSON

|

||||

if err = json.NewEncoder(f).Encode(ts); err != nil {

|

||||

if err = json.NewEncoder(f).Encode(data); err != nil {

|

||||

return fmt.Errorf("failed to write token to file: %w", err)

|

||||

}

|

||||

return nil

|

||||

|

||||
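Note on the metadata flattening used in `SaveTokenToFile` above: the diff calls `misc.MergeMetadata(ts, ts.Metadata)`, but the helper's body is not shown here. The following is a minimal sketch of how such flattening could work, not the repository's implementation. `tokenStorage` and `mergeMetadataSketch` are hypothetical names, and the rule that struct fields win on a key conflict is an assumption.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// tokenStorage is a hypothetical stand-in for a token storage struct.
type tokenStorage struct {
	AccessToken string         `json:"access_token"`
	Type        string         `json:"type"`
	Metadata    map[string]any `json:"-"` // hidden from JSON so it can be flattened
}

// mergeMetadataSketch round-trips v through JSON into a map, then copies
// metadata keys to the top level. Assumption: existing struct fields are
// never overwritten by metadata keys.
func mergeMetadataSketch(v any, meta map[string]any) (map[string]any, error) {
	raw, err := json.Marshal(v)
	if err != nil {
		return nil, fmt.Errorf("marshal: %w", err)
	}
	out := make(map[string]any)
	if err = json.Unmarshal(raw, &out); err != nil {
		return nil, fmt.Errorf("unmarshal: %w", err)
	}
	for key, val := range meta {
		if _, exists := out[key]; !exists {
			out[key] = val
		}
	}
	return out, nil
}

func main() {
	ts := &tokenStorage{
		AccessToken: "tok",
		Type:        "claude",
		Metadata:    map[string]any{"team": "infra", "type": "ignored"},
	}
	merged, err := mergeMetadataSketch(ts, ts.Metadata)
	if err != nil {
		fmt.Println("merge failed:", err)
		return
	}
	encoded, _ := json.Marshal(merged)
	fmt.Println(string(encoded))
}
```

The `json:"-"` tag keeps `Metadata` out of the first marshal, so its keys only appear once, at the top level of the resulting object.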

@@ -15,7 +15,7 @@ import (

|

||||

"golang.org/x/net/proxy"

|

||||

)

|

||||

|

||||

// utlsRoundTripper implements http.RoundTripper using utls with Firefox fingerprint

|

||||

// utlsRoundTripper implements http.RoundTripper using utls with Chrome fingerprint

|

||||

// to bypass Cloudflare's TLS fingerprinting on Anthropic domains.

|

||||

type utlsRoundTripper struct {

|

||||

// mu protects the connections map and pending map

|

||||

@@ -100,7 +100,9 @@ func (t *utlsRoundTripper) getOrCreateConnection(host, addr string) (*http2.Clie

|

||||

return h2Conn, nil

|

||||

}

|

||||

|

||||

// createConnection creates a new HTTP/2 connection with Firefox TLS fingerprint

|

||||

// createConnection creates a new HTTP/2 connection with Chrome TLS fingerprint.

|

||||

// Chrome's TLS fingerprint is closer to Node.js/OpenSSL (which real Claude Code uses)

|

||||

// than Firefox, reducing the mismatch between TLS layer and HTTP headers.

|

||||

func (t *utlsRoundTripper) createConnection(host, addr string) (*http2.ClientConn, error) {

|

||||

conn, err := t.dialer.Dial("tcp", addr)

|

||||

if err != nil {

|

||||

@@ -108,7 +110,7 @@ func (t *utlsRoundTripper) createConnection(host, addr string) (*http2.ClientCon

|

||||

}

|

||||

|

||||

tlsConfig := &tls.Config{ServerName: host}

|

||||

tlsConn := tls.UClient(conn, tlsConfig, tls.HelloFirefox_Auto)

|

||||

tlsConn := tls.UClient(conn, tlsConfig, tls.HelloChrome_Auto)

|

||||

|

||||

if err := tlsConn.Handshake(); err != nil {

|

||||

conn.Close()

|

||||

@@ -156,7 +158,7 @@ func (t *utlsRoundTripper) RoundTrip(req *http.Request) (*http.Response, error)

|

||||

}

|

||||

|

||||

// NewAnthropicHttpClient creates an HTTP client that bypasses TLS fingerprinting

|

||||

// for Anthropic domains by using utls with Firefox fingerprint.

|

||||

// for Anthropic domains by using utls with Chrome fingerprint.

|

||||

// It accepts optional SDK configuration for proxy settings.

|

||||

func NewAnthropicHttpClient(cfg *config.SDKConfig) *http.Client {

|

||||

return &http.Client{

|

||||

|

||||

@@ -71,16 +71,26 @@ func (o *CodexAuth) GenerateAuthURL(state string, pkceCodes *PKCECodes) (string,

|

||||

// It performs an HTTP POST request to the OpenAI token endpoint with the provided

|

||||

// authorization code and PKCE verifier.

|

||||

func (o *CodexAuth) ExchangeCodeForTokens(ctx context.Context, code string, pkceCodes *PKCECodes) (*CodexAuthBundle, error) {

|

||||

return o.ExchangeCodeForTokensWithRedirect(ctx, code, RedirectURI, pkceCodes)

|

||||

}

|

||||

|

||||

// ExchangeCodeForTokensWithRedirect exchanges an authorization code for tokens using

|

||||

// a caller-provided redirect URI. This supports alternate auth flows such as device

|

||||

// login while preserving the existing token parsing and storage behavior.

|

||||

func (o *CodexAuth) ExchangeCodeForTokensWithRedirect(ctx context.Context, code, redirectURI string, pkceCodes *PKCECodes) (*CodexAuthBundle, error) {

|

||||

if pkceCodes == nil {

|

||||

return nil, fmt.Errorf("PKCE codes are required for token exchange")

|

||||

}

|

||||

if strings.TrimSpace(redirectURI) == "" {

|

||||

return nil, fmt.Errorf("redirect URI is required for token exchange")

|

||||

}

|

||||

|

||||

// Prepare token exchange request

|

||||

data := url.Values{

|

||||

"grant_type": {"authorization_code"},

|

||||

"client_id": {ClientID},

|

||||

"code": {code},

|

||||

"redirect_uri": {RedirectURI},

|

||||

"redirect_uri": {strings.TrimSpace(redirectURI)},

|

||||

"code_verifier": {pkceCodes.CodeVerifier},

|

||||

}

|

||||

|

||||

@@ -266,6 +276,10 @@ func (o *CodexAuth) RefreshTokensWithRetry(ctx context.Context, refreshToken str

|

||||

if err == nil {

|

||||

return tokenData, nil

|

||||

}

|

||||

if isNonRetryableRefreshErr(err) {

|

||||

log.Warnf("Token refresh attempt %d failed with non-retryable error: %v", attempt+1, err)

|

||||

return nil, err

|

||||

}

|

||||

|

||||

lastErr = err

|

||||

log.Warnf("Token refresh attempt %d failed: %v", attempt+1, err)

|

||||

@@ -274,6 +288,14 @@ func (o *CodexAuth) RefreshTokensWithRetry(ctx context.Context, refreshToken str

|

||||

return nil, fmt.Errorf("token refresh failed after %d attempts: %w", maxRetries, lastErr)

|

||||

}

|

||||

|

||||

func isNonRetryableRefreshErr(err error) bool {

|

||||

if err == nil {

|

||||

return false

|

||||

}

|

||||

raw := strings.ToLower(err.Error())

|

||||

return strings.Contains(raw, "refresh_token_reused")

|

||||

}

|

||||

|

||||

// UpdateTokenStorage updates an existing CodexTokenStorage with new token data.

|

||||

// This is typically called after a successful token refresh to persist the new credentials.

|

||||

func (o *CodexAuth) UpdateTokenStorage(storage *CodexTokenStorage, tokenData *CodexTokenData) {

|

||||

|

||||

44

internal/auth/codex/openai_auth_test.go

Normal file

@@ -0,0 +1,44 @@

|

||||

package codex

|

||||

|

||||

import (

|

||||

"context"

|

||||

"io"

|

||||

"net/http"

|

||||

"strings"

|

||||

"sync/atomic"

|

||||

"testing"

|

||||

)

|

||||

|

||||

type roundTripFunc func(*http.Request) (*http.Response, error)

|

||||

|

||||

func (f roundTripFunc) RoundTrip(req *http.Request) (*http.Response, error) {

|

||||

return f(req)

|

||||

}

|

||||

|

||||

func TestRefreshTokensWithRetry_NonRetryableOnlyAttemptsOnce(t *testing.T) {

|

||||

var calls int32

|

||||

auth := &CodexAuth{

|

||||

httpClient: &http.Client{

|

||||

Transport: roundTripFunc(func(req *http.Request) (*http.Response, error) {

|

||||

atomic.AddInt32(&calls, 1)

|

||||

return &http.Response{

|

||||

StatusCode: http.StatusBadRequest,

|

||||

Body: io.NopCloser(strings.NewReader(`{"error":"invalid_grant","code":"refresh_token_reused"}`)),

|

||||

Header: make(http.Header),

|

||||

Request: req,

|

||||

}, nil

|

||||

}),

|

||||

},

|

||||

}

|

||||

|

||||

_, err := auth.RefreshTokensWithRetry(context.Background(), "dummy_refresh_token", 3)

|

||||

if err == nil {

|

||||

t.Fatalf("expected error for non-retryable refresh failure")

|

||||

}

|

||||

if !strings.Contains(strings.ToLower(err.Error()), "refresh_token_reused") {

|

||||

t.Fatalf("expected refresh_token_reused in error, got: %v", err)

|

||||

}

|

||||

if got := atomic.LoadInt32(&calls); got != 1 {

|

||||

t.Fatalf("expected 1 refresh attempt, got %d", got)

|

||||

}

|

||||

}

|

||||

@@ -32,11 +32,21 @@ type CodexTokenStorage struct {

|

||||

Type string `json:"type"`

|

||||

// Expire is the timestamp when the current access token expires.

|

||||

Expire string `json:"expired"`

|

||||

|

||||

// Metadata holds arbitrary key-value pairs injected via hooks.

|

||||

// It is not exported to JSON directly to allow flattening during serialization.

|

||||

Metadata map[string]any `json:"-"`

|

||||

}

|

||||

|

||||

// SetMetadata allows external callers to inject metadata into the storage before saving.

|

||||

func (ts *CodexTokenStorage) SetMetadata(meta map[string]any) {

|

||||

ts.Metadata = meta

|

||||

}

|

||||

|

||||

// SaveTokenToFile serializes the Codex token storage to a JSON file.

|

||||

// This method creates the necessary directory structure and writes the token

|

||||

// data in JSON format to the specified file path for persistent storage.

|

||||

// It merges any injected metadata into the top-level JSON object.

|

||||

//

|

||||

// Parameters:

|

||||

// - authFilePath: The full path where the token file should be saved

|

||||

@@ -58,7 +68,13 @@ func (ts *CodexTokenStorage) SaveTokenToFile(authFilePath string) error {

|

||||

_ = f.Close()

|

||||

}()

|

||||

|

||||

if err = json.NewEncoder(f).Encode(ts); err != nil {

|

||||

// Merge metadata using helper

|

||||

data, errMerge := misc.MergeMetadata(ts, ts.Metadata)

|

||||

if errMerge != nil {

|

||||

return fmt.Errorf("failed to merge metadata: %w", errMerge)

|

||||

}

|

||||

|

||||

if err = json.NewEncoder(f).Encode(data); err != nil {

|

||||

return fmt.Errorf("failed to write token to file: %w", err)

|

||||

}

|

||||

return nil

|

||||

|

||||

@@ -82,15 +82,21 @@ func (c *CopilotAuth) WaitForAuthorization(ctx context.Context, deviceCode *Devi

|

||||

}

|

||||

|

||||

// Fetch the GitHub username

|

||||

username, err := c.deviceClient.FetchUserInfo(ctx, tokenData.AccessToken)

|

||||

userInfo, err := c.deviceClient.FetchUserInfo(ctx, tokenData.AccessToken)

|

||||

if err != nil {

|

||||

log.Warnf("copilot: failed to fetch user info: %v", err)

|

||||

username = "unknown"

|

||||

}

|

||||

|

||||

username := userInfo.Login

|

||||

if username == "" {

|

||||

username = "github-user"

|

||||

}

|

||||

|

||||

return &CopilotAuthBundle{

|

||||

TokenData: tokenData,

|

||||

Username: username,

|

||||

Email: userInfo.Email,

|

||||

Name: userInfo.Name,

|

||||

}, nil

|

||||

}

|

||||

|

||||

@@ -150,12 +156,12 @@ func (c *CopilotAuth) ValidateToken(ctx context.Context, accessToken string) (bo

|

||||

return false, "", nil

|

||||

}

|

||||

|

||||

username, err := c.deviceClient.FetchUserInfo(ctx, accessToken)

|

||||

userInfo, err := c.deviceClient.FetchUserInfo(ctx, accessToken)

|

||||

if err != nil {

|

||||

return false, "", err

|

||||

}

|

||||

|

||||

return true, username, nil

|

||||

return true, userInfo.Login, nil

|

||||

}

|

||||

|

||||

// CreateTokenStorage creates a new CopilotTokenStorage from auth bundle.

|

||||

@@ -165,6 +171,8 @@ func (c *CopilotAuth) CreateTokenStorage(bundle *CopilotAuthBundle) *CopilotToke

|

||||

TokenType: bundle.TokenData.TokenType,

|

||||

Scope: bundle.TokenData.Scope,

|

||||

Username: bundle.Username,

|

||||

Email: bundle.Email,

|

||||

Name: bundle.Name,

|

||||

Type: "github-copilot",

|

||||

}

|

||||

}

|

||||

|

||||

@@ -53,7 +53,7 @@ func NewDeviceFlowClient(cfg *config.Config) *DeviceFlowClient {

|

||||

func (c *DeviceFlowClient) RequestDeviceCode(ctx context.Context) (*DeviceCodeResponse, error) {

|

||||

data := url.Values{}

|

||||

data.Set("client_id", copilotClientID)

|

||||

data.Set("scope", "user:email")

|

||||

data.Set("scope", "read:user user:email")

|

||||

|

||||

req, err := http.NewRequestWithContext(ctx, http.MethodPost, copilotDeviceCodeURL, strings.NewReader(data.Encode()))

|

||||

if err != nil {

|

||||

@@ -211,15 +211,25 @@ func (c *DeviceFlowClient) exchangeDeviceCode(ctx context.Context, deviceCode st

|

||||

}, nil

|

||||

}

|

||||

|

||||

// FetchUserInfo retrieves the GitHub username for the authenticated user.

|

||||

func (c *DeviceFlowClient) FetchUserInfo(ctx context.Context, accessToken string) (string, error) {

|

||||

// GitHubUserInfo holds GitHub user profile information.

|

||||

type GitHubUserInfo struct {

|

||||

// Login is the GitHub username.

|

||||

Login string

|

||||

// Email is the primary email address (may be empty if not public).

|

||||

Email string

|

||||

// Name is the display name.

|

||||

Name string

|

||||

}

|

||||

|

||||

// FetchUserInfo retrieves the GitHub user profile for the authenticated user.

|

||||

func (c *DeviceFlowClient) FetchUserInfo(ctx context.Context, accessToken string) (GitHubUserInfo, error) {

|

||||

if accessToken == "" {

|

||||

return "", NewAuthenticationError(ErrUserInfoFailed, fmt.Errorf("access token is empty"))

|

||||

return GitHubUserInfo{}, NewAuthenticationError(ErrUserInfoFailed, fmt.Errorf("access token is empty"))

|

||||

}

|

||||

|

||||

req, err := http.NewRequestWithContext(ctx, http.MethodGet, copilotUserInfoURL, nil)

|

||||

if err != nil {

|

||||

return "", NewAuthenticationError(ErrUserInfoFailed, err)

|

||||

return GitHubUserInfo{}, NewAuthenticationError(ErrUserInfoFailed, err)

|

||||

}

|

||||

req.Header.Set("Authorization", "Bearer "+accessToken)

|

||||

req.Header.Set("Accept", "application/json")

|

||||

@@ -227,7 +237,7 @@ func (c *DeviceFlowClient) FetchUserInfo(ctx context.Context, accessToken string

|

||||

|

||||

resp, err := c.httpClient.Do(req)

|

||||

if err != nil {

|

||||

return "", NewAuthenticationError(ErrUserInfoFailed, err)

|

||||

return GitHubUserInfo{}, NewAuthenticationError(ErrUserInfoFailed, err)

|

||||

}

|

||||

defer func() {

|

||||

if errClose := resp.Body.Close(); errClose != nil {

|

||||

@@ -237,19 +247,25 @@ func (c *DeviceFlowClient) FetchUserInfo(ctx context.Context, accessToken string

|

||||

|

||||

if !isHTTPSuccess(resp.StatusCode) {

|

||||

bodyBytes, _ := io.ReadAll(resp.Body)

|

||||

return "", NewAuthenticationError(ErrUserInfoFailed, fmt.Errorf("status %d: %s", resp.StatusCode, string(bodyBytes)))

|

||||

return GitHubUserInfo{}, NewAuthenticationError(ErrUserInfoFailed, fmt.Errorf("status %d: %s", resp.StatusCode, string(bodyBytes)))

|

||||

}

|

||||

|

||||

var userInfo struct {

|

||||

var raw struct {

|

||||

Login string `json:"login"`

|

||||

Email string `json:"email"`

|

||||

Name string `json:"name"`

|

||||

}

|

||||

if err = json.NewDecoder(resp.Body).Decode(&userInfo); err != nil {

|

||||

return "", NewAuthenticationError(ErrUserInfoFailed, err)

|

||||

if err = json.NewDecoder(resp.Body).Decode(&raw); err != nil {

|

||||

return GitHubUserInfo{}, NewAuthenticationError(ErrUserInfoFailed, err)

|

||||

}

|

||||

|

||||

if userInfo.Login == "" {

|

||||

return "", NewAuthenticationError(ErrUserInfoFailed, fmt.Errorf("empty username"))

|

||||

if raw.Login == "" {

|

||||

return GitHubUserInfo{}, NewAuthenticationError(ErrUserInfoFailed, fmt.Errorf("empty username"))

|

||||

}

|

||||

|

||||

return userInfo.Login, nil

|

||||

return GitHubUserInfo{

|

||||

Login: raw.Login,

|

||||

Email: raw.Email,

|

||||

Name: raw.Name,

|

||||

}, nil

|

||||

}

|

||||

|

||||
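Note on the `FetchUserInfo` change above: the new code decodes `login`, `email`, and `name` into plain `string` fields, and GitHub may return `null` for a private email. The sketch below shows why that decodes without error: in Go's `encoding/json`, a JSON `null` leaves a `string` field at its zero value. `userInfoSketch` and `decodeUserInfo` are hypothetical names for illustration only.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// userInfoSketch is a hypothetical mirror of the fields decoded in the diff.
type userInfoSketch struct {
	Login string `json:"login"`
	Email string `json:"email"`
	Name  string `json:"name"`
}

// decodeUserInfo parses a user profile body and rejects a missing login,
// following the same validation order as the diff.
func decodeUserInfo(body []byte) (userInfoSketch, error) {
	var info userInfoSketch
	if err := json.Unmarshal(body, &info); err != nil {
		return userInfoSketch{}, err
	}
	if info.Login == "" {
		return userInfoSketch{}, fmt.Errorf("empty username")
	}
	return info, nil
}

func main() {
	info, err := decodeUserInfo([]byte(`{"login":"privateuser","email":null,"name":"Private User"}`))
	fmt.Printf("%+v %v\n", info, err)

	_, err = decodeUserInfo([]byte(`{"email":"someone@example.com","name":"No Login"}`))
	fmt.Println(err)
}
```

Because the email arrives as an empty string rather than an error, callers can store it with an `omitempty` tag and have it dropped from the saved token file.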

213

internal/auth/copilot/oauth_test.go

Normal file

@@ -0,0 +1,213 @@

|

||||

package copilot

|

||||

|

||||

import (

|

||||

"context"

|

||||

"encoding/json"

|

||||

"net/http"

|

||||

"net/http/httptest"

|

||||

"strings"

|

||||

"testing"

|

||||

)

|

||||

|

||||

// roundTripFunc lets us inject a custom transport for testing.

|

||||

type roundTripFunc func(*http.Request) (*http.Response, error)

|

||||

|

||||

func (f roundTripFunc) RoundTrip(r *http.Request) (*http.Response, error) { return f(r) }

|

||||

|

||||

// newTestClient returns an *http.Client whose requests are redirected to the given test server,

|

||||

// regardless of the original URL host.

|

||||

func newTestClient(srv *httptest.Server) *http.Client {

|

||||

return &http.Client{

|

||||

Transport: roundTripFunc(func(req *http.Request) (*http.Response, error) {

|

||||

req2 := req.Clone(req.Context())

|

||||

req2.URL.Scheme = "http"

|

||||

req2.URL.Host = strings.TrimPrefix(srv.URL, "http://")

|

||||

return srv.Client().Transport.RoundTrip(req2)

|

||||

}),

|

||||

}

|

||||

}

|

||||

|

||||

// TestFetchUserInfo_FullProfile verifies that FetchUserInfo returns login, email, and name.

|

||||

func TestFetchUserInfo_FullProfile(t *testing.T) {

|

||||

srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

if !strings.HasPrefix(r.Header.Get("Authorization"), "Bearer ") {

|

||||

w.WriteHeader(http.StatusUnauthorized)

|

||||

return

|

||||

}

|

||||

w.Header().Set("Content-Type", "application/json")

|

||||

_ = json.NewEncoder(w).Encode(map[string]string{

|

||||

"login": "octocat",

|

||||

"email": "octocat@github.com",

|

||||

"name": "The Octocat",

|

||||

})

|

||||

}))

|

||||

defer srv.Close()

|

||||

|

||||

client := &DeviceFlowClient{httpClient: newTestClient(srv)}

|

||||

info, err := client.FetchUserInfo(context.Background(), "test-token")

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error: %v", err)

|

||||

}

|

||||

if info.Login != "octocat" {

|

||||

t.Errorf("Login: got %q, want %q", info.Login, "octocat")

|

||||

}

|

||||

if info.Email != "octocat@github.com" {

|

||||

t.Errorf("Email: got %q, want %q", info.Email, "octocat@github.com")

|

||||

}

|

||||

if info.Name != "The Octocat" {

|

||||

t.Errorf("Name: got %q, want %q", info.Name, "The Octocat")

|

||||

}

|

||||

}

|

||||

|

||||

// TestFetchUserInfo_EmptyEmail verifies graceful handling when email is absent (private account).

|

||||

func TestFetchUserInfo_EmptyEmail(t *testing.T) {

|

||||

srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

w.Header().Set("Content-Type", "application/json")

|

||||

// GitHub returns null for private emails.

|

||||

_, _ = w.Write([]byte(`{"login":"privateuser","email":null,"name":"Private User"}`))

|

||||

}))

|

||||

defer srv.Close()

|

||||

|

||||

client := &DeviceFlowClient{httpClient: newTestClient(srv)}

|

||||

info, err := client.FetchUserInfo(context.Background(), "test-token")

|

||||

if err != nil {

|

||||

t.Fatalf("unexpected error: %v", err)

|

||||

}

|

||||

if info.Login != "privateuser" {

|

||||

t.Errorf("Login: got %q, want %q", info.Login, "privateuser")

|

||||

}

|

||||

if info.Email != "" {

|

||||

t.Errorf("Email: got %q, want empty string", info.Email)

|

||||

}

|

||||

if info.Name != "Private User" {

|

||||

t.Errorf("Name: got %q, want %q", info.Name, "Private User")

|

||||

}

|

||||

}

|

||||

|

||||

// TestFetchUserInfo_EmptyToken verifies error is returned for empty access token.

|

||||

func TestFetchUserInfo_EmptyToken(t *testing.T) {

|

||||

client := &DeviceFlowClient{httpClient: http.DefaultClient}

|

||||

_, err := client.FetchUserInfo(context.Background(), "")

|

||||

if err == nil {

|

||||

t.Fatal("expected error for empty token, got nil")

|

||||

}

|

||||

}

|

||||

|

||||

// TestFetchUserInfo_EmptyLogin verifies error is returned when API returns no login.

|

||||

func TestFetchUserInfo_EmptyLogin(t *testing.T) {

|

||||

srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

w.Header().Set("Content-Type", "application/json")

|

||||

_, _ = w.Write([]byte(`{"email":"someone@example.com","name":"No Login"}`))

|

||||

}))

|

||||

defer srv.Close()

|

||||

|

||||

client := &DeviceFlowClient{httpClient: newTestClient(srv)}

|

||||

_, err := client.FetchUserInfo(context.Background(), "test-token")

|

||||

if err == nil {

|

||||

t.Fatal("expected error for empty login, got nil")

|

||||

}

|

||||

}

|

||||

|

||||

// TestFetchUserInfo_HTTPError verifies error is returned on non-2xx response.

|

||||

func TestFetchUserInfo_HTTPError(t *testing.T) {

|

||||

srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {

|

||||

w.WriteHeader(http.StatusUnauthorized)

|

||||

_, _ = w.Write([]byte(`{"message":"Bad credentials"}`))

|

||||

}))

|

||||

defer srv.Close()

|

||||

|

||||

client := &DeviceFlowClient{httpClient: newTestClient(srv)}

|

||||

_, err := client.FetchUserInfo(context.Background(), "bad-token")

|

||||

if err == nil {

|

||||

t.Fatal("expected error for 401 response, got nil")

|

||||

}

|

||||

}

|

||||

|

||||

// TestCopilotTokenStorage_EmailNameFields verifies Email and Name serialise correctly.

|

||||

func TestCopilotTokenStorage_EmailNameFields(t *testing.T) {

|

||||

ts := &CopilotTokenStorage{

|

||||

AccessToken: "ghu_abc",

|

||||

TokenType: "bearer",

|

||||

Scope: "read:user user:email",

|

||||

Username: "octocat",

|

||||

Email: "octocat@github.com",

|

||||

Name: "The Octocat",

|

||||

Type: "github-copilot",

|

||||

}

|

||||

|

||||

data, err := json.Marshal(ts)

|

||||

if err != nil {

|

||||

t.Fatalf("marshal error: %v", err)

|

||||

}

|

||||

|

||||

var out map[string]any

|

||||

if err = json.Unmarshal(data, &out); err != nil {

|

||||

t.Fatalf("unmarshal error: %v", err)

|

||||

}

|

||||

|

||||

for _, key := range []string{"access_token", "username", "email", "name", "type"} {

|

||||

if _, ok := out[key]; !ok {

|

||||

t.Errorf("expected key %q in JSON output, not found", key)

|

||||

}

|

||||

}

|

||||

if out["email"] != "octocat@github.com" {

|

||||

t.Errorf("email: got %v, want %q", out["email"], "octocat@github.com")

|

||||

}

|

||||

if out["name"] != "The Octocat" {

|

||||

t.Errorf("name: got %v, want %q", out["name"], "The Octocat")

|

||||

}

|

||||

}

|

||||

|

||||

// TestCopilotTokenStorage_OmitEmptyEmailName verifies email/name are omitted when empty (omitempty).

|

||||

func TestCopilotTokenStorage_OmitEmptyEmailName(t *testing.T) {

|

||||

ts := &CopilotTokenStorage{

|

||||

AccessToken: "ghu_abc",

|

||||

Username: "octocat",

|

||||

Type: "github-copilot",

|

||||

}

|

||||

|

||||

data, err := json.Marshal(ts)

|

||||

if err != nil {

|

||||

t.Fatalf("marshal error: %v", err)

|

||||

}

|

||||

|

||||

var out map[string]any

|

||||

if err = json.Unmarshal(data, &out); err != nil {

|

||||

t.Fatalf("unmarshal error: %v", err)

|

||||

}

|

||||