diff --git a/chrome-extension/README.md b/chrome-extension/README.md

new file mode 100644

index 0000000..ab353c3

--- /dev/null

+++ b/chrome-extension/README.md

@@ -0,0 +1,13 @@

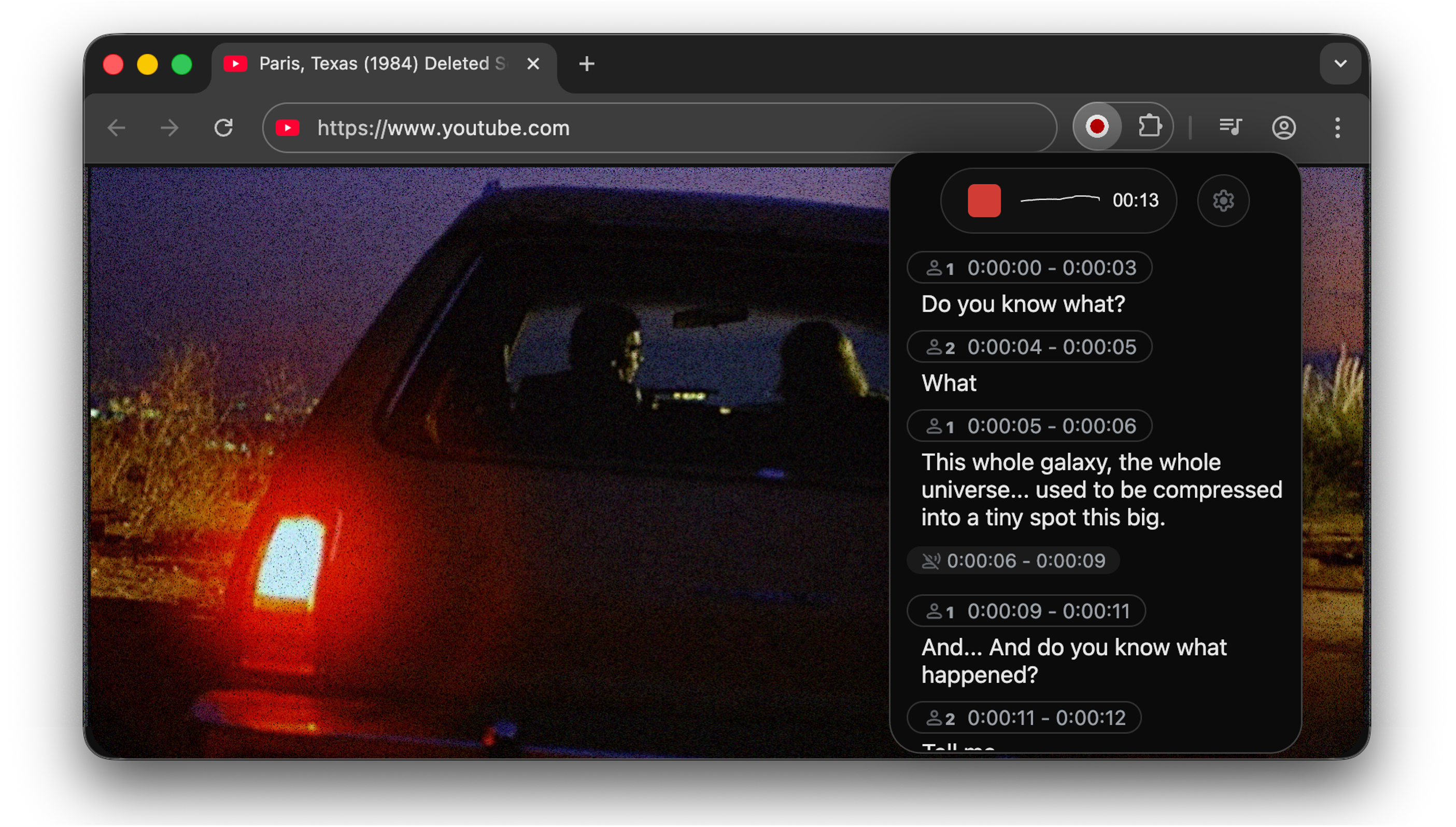

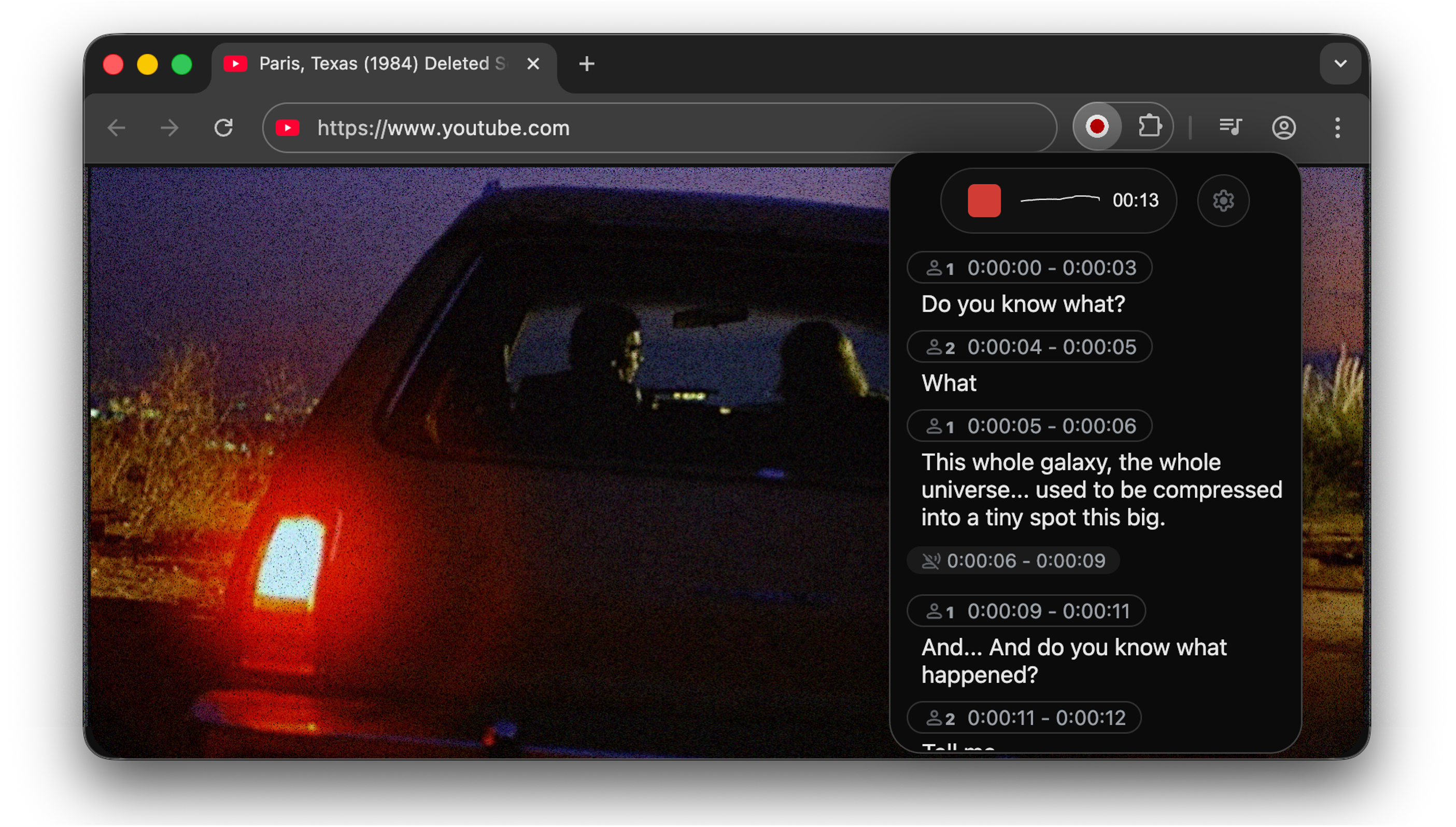

+## WhisperLiveKit Chrome Extension v0.1.0

+Capture the audio of your current tab and transcribe or translate it using WhisperLiveKit. **Still unstable.**

+

+ +

+## Running this extension

+1. Clone this repository.

+2. Load this directory in Chrome as an unpacked extension.

+

+

+## Devs:

+- Unfortunately, tab audio cannot be captured if the extension is a panel: https://issues.chromium.org/issues/40926394

+- There are workarounds for capturing the microphone in an extension: https://github.com/justinmann/sidepanel-audio-issue , https://medium.com/@lynchee.owo/how-to-enable-microphone-access-in-chrome-extensions-by-code-924295170080 (see the comments)
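The developer notes above boil down to one decision: try tab capture first, and fall back to the microphone when it is unavailable. The following is a minimal sketch of that decision, not the extension's actual code. `captureTabAudio` and `acquireAudioStream` are hypothetical names, and the capture APIs are passed in as parameters (an assumption made here) so the logic can be exercised outside a browser.

```javascript
// Hypothetical helper: wraps the callback-style chrome.tabCapture.capture in a Promise.
// `tabCapture` is expected to look like chrome.tabCapture.
function captureTabAudio(tabCapture) {
  return new Promise((resolve, reject) => {
    tabCapture.capture({ audio: true }, (stream) => {
      if (stream) {
        resolve(stream);
      } else {
        reject(new Error("Tab capture failed or not available"));
      }
    });
  });
}

// Hypothetical helper: tries tab audio first, then falls back to the microphone.
// `mediaDevices` is expected to look like navigator.mediaDevices.
async function acquireAudioStream(tabCapture, mediaDevices) {
  try {
    const stream = await captureTabAudio(tabCapture);
    return { source: "tab", stream };
  } catch (tabError) {
    const stream = await mediaDevices.getUserMedia({ audio: true });
    return { source: "microphone", stream };
  }
}
```

In the popup this would be called as `acquireAudioStream(chrome.tabCapture, navigator.mediaDevices)`; the returned `source` field tells the UI which status message to show.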

diff --git a/chrome-extension/demo-extension.png b/chrome-extension/demo-extension.png

new file mode 100644

index 0000000..ef6e7e2

Binary files /dev/null and b/chrome-extension/demo-extension.png differ

diff --git a/chrome-extension/example_tab_capture.js b/chrome-extension/example_tab_capture.js

new file mode 100644

index 0000000..8095371

--- /dev/null

+++ b/chrome-extension/example_tab_capture.js

@@ -0,0 +1,315 @@

+const extend = function() { //helper function to merge objects

+ let target = arguments[0],

+ sources = [].slice.call(arguments, 1);

+ for (let i = 0; i < sources.length; ++i) {

+ let src = sources[i];

+ for (const key in src) {

+ let val = src[key];

+ target[key] = typeof val === "object"

+ ? extend(typeof target[key] === "object" ? target[key] : {}, val)

+ : val;

+ }

+ }

+ return target;

+};

+

+const WORKER_FILE = {

+ wav: "WavWorker.js",

+ mp3: "Mp3Worker.js"

+};

+

+// default configs

+const CONFIGS = {

+ workerDir: "/workers/", // worker scripts dir (end with /)

+ numChannels: 2, // number of channels

+ encoding: "wav", // encoding (can be changed at runtime)

+

+ // runtime options

+ options: {

+ timeLimit: 1200, // recording time limit (sec)

+ encodeAfterRecord: true, // process encoding after recording

+ progressInterval: 1000, // encoding progress report interval (millisec)

+ bufferSize: undefined, // buffer size (use browser default)

+

+ // encoding-specific options

+ wav: {

+ mimeType: "audio/wav"

+ },

+ mp3: {

+ mimeType: "audio/mpeg",

+ bitRate: 192 // (CBR only): bit rate = [64 .. 320]

+ }

+ }

+};

+

+class Recorder {

+

+ constructor(source, configs) { //creates audio context from the source and connects it to the worker

+ extend(this, CONFIGS, configs || {});

+ this.context = source.context;

+ if (this.context.createScriptProcessor == null)

+ this.context.createScriptProcessor = this.context.createJavaScriptNode;

+ this.input = this.context.createGain();

+ source.connect(this.input);

+ this.buffer = [];

+ this.initWorker();

+ }

+

+ isRecording() {

+ return this.processor != null;

+ }

+

+ setEncoding(encoding) {

+ if(!this.isRecording() && this.encoding !== encoding) {

+ this.encoding = encoding;

+ this.initWorker();

+ }

+ }

+

+ setOptions(options) {

+ if (!this.isRecording()) {

+ extend(this.options, options);

+ this.worker.postMessage({ command: "options", options: this.options});

+ }

+ }

+

+ startRecording() {

+ if(!this.isRecording()) {

+ let numChannels = this.numChannels;

+ let buffer = this.buffer;

+ let worker = this.worker;

+ this.processor = this.context.createScriptProcessor(

+ this.options.bufferSize,

+ this.numChannels, this.numChannels);

+ this.input.connect(this.processor);

+ this.processor.connect(this.context.destination);

+ this.processor.onaudioprocess = function(event) {

+ for (var ch = 0; ch < numChannels; ++ch)

+ buffer[ch] = event.inputBuffer.getChannelData(ch);

+ worker.postMessage({ command: "record", buffer: buffer });

+ };

+ this.worker.postMessage({

+ command: "start",

+ bufferSize: this.processor.bufferSize

+ });

+ this.startTime = Date.now();

+ }

+ }

+

+ cancelRecording() {

+ if(this.isRecording()) {

+ this.input.disconnect();

+ this.processor.disconnect();

+ delete this.processor;

+ this.worker.postMessage({ command: "cancel" });

+ }

+ }

+

+ finishRecording() {

+ if (this.isRecording()) {

+ this.input.disconnect();

+ this.processor.disconnect();

+ delete this.processor;

+ this.worker.postMessage({ command: "finish" });

+ }

+ }

+

+ cancelEncoding() {

+ if (this.options.encodeAfterRecord)

+ if (!this.isRecording()) {

+ this.onEncodingCanceled(this);

+ this.initWorker();

+ }

+ }

+

+ initWorker() {

+ if (this.worker != null)

+ this.worker.terminate();

+ this.onEncoderLoading(this, this.encoding);

+ this.worker = new Worker(this.workerDir + WORKER_FILE[this.encoding]);

+ let _this = this;

+ this.worker.onmessage = function(event) {

+ let data = event.data;

+ switch (data.command) {

+ case "loaded":

+ _this.onEncoderLoaded(_this, _this.encoding);

+ break;

+ case "timeout":

+ _this.onTimeout(_this);

+ break;

+ case "progress":

+ _this.onEncodingProgress(_this, data.progress);

+ break;

+ case "complete":

+ _this.onComplete(_this, data.blob);

+ }

+ }

+ this.worker.postMessage({

+ command: "init",

+ config: {

+ sampleRate: this.context.sampleRate,

+ numChannels: this.numChannels

+ },

+ options: this.options

+ });

+ }

+

+ onEncoderLoading(recorder, encoding) {}

+ onEncoderLoaded(recorder, encoding) {}

+ onTimeout(recorder) {}

+ onEncodingProgress(recorder, progress) {}

+ onEncodingCanceled(recorder) {}

+ onComplete(recorder, blob) {}

+

+}

+

+const audioCapture = (timeLimit, muteTab, format, quality, limitRemoved) => {

+ chrome.tabCapture.capture({audio: true}, (stream) => { // sets up stream for capture

+ let startTabId; //tab when the capture is started

+ let timeout;

+ let completeTabID; //tab when the capture is stopped

+ let audioURL = null; //resulting object when encoding is completed

+ chrome.tabs.query({active:true, currentWindow: true}, (tabs) => startTabId = tabs[0].id) //saves start tab

+ const liveStream = stream;

+ const audioCtx = new AudioContext();

+ const source = audioCtx.createMediaStreamSource(stream);

+ let mediaRecorder = new Recorder(source); //initiates the recorder based on the current stream

+ mediaRecorder.setEncoding(format); //sets encoding based on options

+ if(limitRemoved) { //removes time limit

+ mediaRecorder.setOptions({timeLimit: 10800});

+ } else {

+ mediaRecorder.setOptions({timeLimit: timeLimit/1000});

+ }

+ if(format === "mp3") {

+ mediaRecorder.setOptions({mp3: {bitRate: quality}});

+ }

+ mediaRecorder.startRecording();

+

+ function onStopCommand(command) { //keypress

+ if (command === "stop") {

+ stopCapture();

+ }

+ }

+ function onStopClick(request) { //click on popup

+ if(request === "stopCapture") {

+ stopCapture();

+ } else if (request === "cancelCapture") {

+ cancelCapture();

+ } else if (request.cancelEncodeID) {

+ if(request.cancelEncodeID === startTabId && mediaRecorder) {

+ mediaRecorder.cancelEncoding();

+ }

+ }

+ }

+ chrome.commands.onCommand.addListener(onStopCommand);

+ chrome.runtime.onMessage.addListener(onStopClick);

+ mediaRecorder.onComplete = (recorder, blob) => {

+ audioURL = window.URL.createObjectURL(blob);

+ if(completeTabID) {

+ chrome.tabs.sendMessage(completeTabID, {type: "encodingComplete", audioURL});

+ }

+ mediaRecorder = null;

+ }

+ mediaRecorder.onEncodingProgress = (recorder, progress) => {

+ if(completeTabID) {

+ chrome.tabs.sendMessage(completeTabID, {type: "encodingProgress", progress: progress});

+ }

+ }

+

+ const stopCapture = function() {

+ let endTabId;

+ //check to make sure the current tab is the tab being captured

+ chrome.tabs.query({active: true, currentWindow: true}, (tabs) => {

+ endTabId = tabs[0].id;

+ if(mediaRecorder && startTabId === endTabId){

+ mediaRecorder.finishRecording();

+ chrome.tabs.create({url: "complete.html"}, (tab) => {

+ completeTabID = tab.id;

+ let completeCallback = () => {

+ chrome.tabs.sendMessage(tab.id, {type: "createTab", format: format, audioURL, startID: startTabId});

+ }

+ setTimeout(completeCallback, 500);

+ });

+ closeStream(endTabId);

+ }

+ })

+ }

+

+ const cancelCapture = function() {

+ let endTabId;

+ chrome.tabs.query({active: true, currentWindow: true}, (tabs) => {

+ endTabId = tabs[0].id;

+ if(mediaRecorder && startTabId === endTabId){

+ mediaRecorder.cancelRecording();

+ closeStream(endTabId);

+ }

+ })

+ }

+

+//removes the audio context and closes recorder to save memory

+ const closeStream = function(endTabId) {

+ chrome.commands.onCommand.removeListener(onStopCommand);

+ chrome.runtime.onMessage.removeListener(onStopClick);

+ mediaRecorder.onTimeout = () => {};

+ audioCtx.close();

+ liveStream.getAudioTracks()[0].stop();

+ sessionStorage.removeItem(endTabId);

+ chrome.runtime.sendMessage({captureStopped: endTabId});

+ }

+

+ mediaRecorder.onTimeout = stopCapture;

+

+ if(!muteTab) {

+ let audio = new Audio();

+ audio.srcObject = liveStream;

+ audio.play();

+ }

+ });

+}

+

+

+

+//sends responses to and from the popup menu

+chrome.runtime.onMessage.addListener((request, sender, sendResponse) => {

+ if (request.currentTab && sessionStorage.getItem(request.currentTab)) {

+ sendResponse(sessionStorage.getItem(request.currentTab));

+ } else if (request.currentTab){

+ sendResponse(false);

+ } else if (request === "startCapture") {

+ startCapture();

+ }

+});

+

+const startCapture = function() {

+ chrome.tabs.query({active: true, currentWindow: true}, (tabs) => {

+ // CODE TO BLOCK CAPTURE ON YOUTUBE, DO NOT REMOVE

+ // if(tabs[0].url.toLowerCase().includes("youtube")) {

+ // chrome.tabs.create({url: "error.html"});

+ // } else {

+ if(!sessionStorage.getItem(tabs[0].id)) {

+ sessionStorage.setItem(tabs[0].id, Date.now());

+ chrome.storage.sync.get({

+ maxTime: 1200000,

+ muteTab: false,

+ format: "mp3",

+ quality: 192,

+ limitRemoved: false

+ }, (options) => {

+ let time = options.maxTime;

+ if(time > 1200000) {

+ time = 1200000

+ }

+ audioCapture(time, options.muteTab, options.format, options.quality, options.limitRemoved);

+ });

+ chrome.runtime.sendMessage({captureStarted: tabs[0].id, startTime: Date.now()});

+ }

+ // }

+ });

+};

+

+

+chrome.commands.onCommand.addListener((command) => {

+ if (command === "start") {

+ startCapture();

+ }

+});

\ No newline at end of file
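The time-limit handling in this file is split across two functions and two units: `startCapture` clamps `maxTime` in milliseconds, while `audioCapture` converts it to seconds or substitutes 10800 when `limitRemoved` is set. As a reading aid, the same rule can be sketched in one place. `resolveTimeLimitSeconds` and the two constant names are hypothetical and do not appear in the diff.

```javascript
const MAX_TIME_MS = 1200000;     // 20-minute cap, as applied in startCapture
const UNLIMITED_SECONDS = 10800; // 3 hours, the value used when the limit is "removed"

// Hypothetical helper: returns the recorder's timeLimit option, in seconds.
function resolveTimeLimitSeconds(maxTimeMs, limitRemoved) {
  if (limitRemoved) {
    return UNLIMITED_SECONDS;
  }
  // Clamp in milliseconds first, then convert to seconds.
  return Math.min(maxTimeMs, MAX_TIME_MS) / 1000;
}
```

Note that "removing" the limit still leaves a three-hour ceiling, which is how the code above behaves as well.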

diff --git a/chrome-extension/manifest.json b/chrome-extension/manifest.json

new file mode 100644

index 0000000..a925ee5

--- /dev/null

+++ b/chrome-extension/manifest.json

@@ -0,0 +1,17 @@

+{

+ "manifest_version": 3,

+ "name": "WhisperLiveKit Tab Capture",

+ "version": "1.0",

+ "description": "Capture and transcribe audio from browser tabs using WhisperLiveKit.",

+ "action": {

+ "default_title": "WhisperLiveKit Tab Capture",

+ "default_popup": "popup.html"

+ },

+ "permissions": ["scripting", "tabCapture", "offscreen", "activeTab", "storage"],

+ "web_accessible_resources": [

+ {

+ "resources": ["requestPermissions.html", "requestPermissions.js"],

+ "matches": ["<all_urls>"]

+ }

+ ]

+}
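The manifest requests both `tabCapture` and `offscreen`, although no offscreen document appears in this part of the diff. If one were added, Chrome's documented pattern is to obtain a stream ID with `chrome.tabCapture.getMediaStreamId` and hand it to `getUserMedia`. The sketch below only builds the constraints object; `tabAudioConstraints` is a hypothetical name, and whether the extension will adopt this route is an assumption.

```javascript
// Hypothetical helper: builds getUserMedia constraints for a tab-capture stream ID.
function tabAudioConstraints(streamId) {
  if (typeof streamId !== "string" || streamId.length === 0) {
    throw new TypeError("streamId must be a non-empty string");
  }
  return {
    audio: {
      mandatory: {
        chromeMediaSource: "tab",
        chromeMediaSourceId: streamId,
      },
    },
  };
}

// Intended use (runs only inside Chrome, shown as comments):
//   // in the popup or service worker:
//   const streamId = await chrome.tabCapture.getMediaStreamId({ targetTabId });
//   // in the offscreen document, after receiving streamId via a message:
//   const stream = await navigator.mediaDevices.getUserMedia(tabAudioConstraints(streamId));
```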

diff --git a/chrome-extension/popup.html b/chrome-extension/popup.html

new file mode 100644

index 0000000..1677c5d

--- /dev/null

+++ b/chrome-extension/popup.html

@@ -0,0 +1,73 @@

+

+

+

+

+

+

+ WhisperLiveKit

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

No audio detected...

";

+ return;

+ }

+

+ const showLoading = !isFinalizing && (lines || []).some((it) => it.speaker == 0);

+ const showTransLag = !isFinalizing && remaining_time_transcription > 0;

+ const showDiaLag = !isFinalizing && !!buffer_diarization && remaining_time_diarization > 0;

+ const signature = JSON.stringify({

+ lines: (lines || []).map((it) => ({ speaker: it.speaker, text: it.text, beg: it.beg, end: it.end })),

+ buffer_transcription: buffer_transcription || "",

+ buffer_diarization: buffer_diarization || "",

+ status: current_status,

+ showLoading,

+ showTransLag,

+ showDiaLag,

+ isFinalizing: !!isFinalizing,

+ });

+ if (lastSignature === signature) {

+ const t = document.querySelector(".lag-transcription-value");

+ if (t) t.textContent = fmt1(remaining_time_transcription);

+ const d = document.querySelector(".lag-diarization-value");

+ if (d) d.textContent = fmt1(remaining_time_diarization);

+ const ld = document.querySelector(".loading-diarization-value");

+ if (ld) ld.textContent = fmt1(remaining_time_diarization);

+ return;

+ }

+ lastSignature = signature;

+

+ const linesHtml = (lines || [])

+ .map((item, idx) => {

+ let timeInfo = "";

+ if (item.beg !== undefined && item.end !== undefined) {

+ timeInfo = ` ${item.beg} - ${item.end}`;

+ }

+

+ let speakerLabel = "";

+ if (item.speaker === -2) {

+ speakerLabel = `Silence${timeInfo}`;

+ } else if (item.speaker == 0 && !isFinalizing) {

+ speakerLabel = `${fmt1(

+ remaining_time_diarization

+ )} second(s) of audio are undergoing diarization`;

+ } else if (item.speaker !== 0) {

+ speakerLabel = `Speaker ${item.speaker}${timeInfo}`;

+ }

+

+ let currentLineText = item.text || "";

+

+ if (idx === lines.length - 1) {

+ if (!isFinalizing && item.speaker !== -2) {

+ if (remaining_time_transcription > 0) {

+ speakerLabel += `Lag ${fmt1(

+ remaining_time_transcription

+ )}s`;

+ }

+ if (buffer_diarization && remaining_time_diarization > 0) {

+ speakerLabel += `Lag${fmt1(

+ remaining_time_diarization

+ )}s`;

+ }

+ }

+

+ if (buffer_diarization) {

+ if (isFinalizing) {

+ currentLineText +=

+ (currentLineText.length > 0 && buffer_diarization.trim().length > 0 ? " " : "") + buffer_diarization.trim();

+ } else {

+ currentLineText += `${buffer_diarization}`;

+ }

+ }

+ if (buffer_transcription) {

+ if (isFinalizing) {

+ currentLineText +=

+ (currentLineText.length > 0 && buffer_transcription.trim().length > 0 ? " " : "") +

+ buffer_transcription.trim();

+ } else {

+ currentLineText += `${buffer_transcription}`;

+ }

+ }

+ }

+

+ return currentLineText.trim().length > 0 || speakerLabel.length > 0

+ ? `${speakerLabel}

${currentLineText}

`

+ : `${speakerLabel}

`;

+ })

+ .join("");

+

+ linesTranscriptDiv.innerHTML = linesHtml;

+ window.scrollTo({ top: document.body.scrollHeight, behavior: "smooth" });

+}

+

+function updateTimer() {

+ if (!startTime) return;

+

+ const elapsed = Math.floor((Date.now() - startTime) / 1000);

+ const minutes = Math.floor(elapsed / 60).toString().padStart(2, "0");

+ const seconds = (elapsed % 60).toString().padStart(2, "0");

+ timerElement.textContent = `${minutes}:${seconds}`;

+}

+

+function drawWaveform() {

+ if (!analyser) return;

+

+ const bufferLength = analyser.frequencyBinCount;

+ const dataArray = new Uint8Array(bufferLength);

+ analyser.getByteTimeDomainData(dataArray);

+

+ waveCtx.clearRect(

+ 0,

+ 0,

+ waveCanvas.width / (window.devicePixelRatio || 1),

+ waveCanvas.height / (window.devicePixelRatio || 1)

+ );

+ waveCtx.lineWidth = 1;

+ waveCtx.strokeStyle = waveStroke;

+ waveCtx.beginPath();

+

+ const sliceWidth = (waveCanvas.width / (window.devicePixelRatio || 1)) / bufferLength;

+ let x = 0;

+

+ for (let i = 0; i < bufferLength; i++) {

+ const v = dataArray[i] / 128.0;

+ const y = (v * (waveCanvas.height / (window.devicePixelRatio || 1))) / 2;

+

+ if (i === 0) {

+ waveCtx.moveTo(x, y);

+ } else {

+ waveCtx.lineTo(x, y);

+ }

+

+ x += sliceWidth;

+ }

+

+ waveCtx.lineTo(

+ waveCanvas.width / (window.devicePixelRatio || 1),

+ (waveCanvas.height / (window.devicePixelRatio || 1)) / 2

+ );

+ waveCtx.stroke();

+

+ animationFrame = requestAnimationFrame(drawWaveform);

+}

+

+async function startRecording() {

+ try {

+ try {

+ wakeLock = await navigator.wakeLock.request("screen");

+ } catch (err) {

+ console.log("Error acquiring wake lock.");

+ }

+

+ let stream;

+ try {

+ // Try tab capture first

+ stream = await new Promise((resolve, reject) => {

+ chrome.tabCapture.capture({audio: true}, (s) => {

+ if (s) {

+ resolve(s);

+ } else {

+ reject(new Error('Tab capture failed or not available'));

+ }

+ });

+ });

+ statusText.textContent = "Using tab audio capture.";

+ } catch (tabError) {

+ console.log('Tab capture not available, falling back to microphone', tabError);

+ // Fallback to microphone

+ const audioConstraints = selectedMicrophoneId

+ ? { audio: { deviceId: { exact: selectedMicrophoneId } } }

+ : { audio: true };

+ stream = await navigator.mediaDevices.getUserMedia(audioConstraints);

+ statusText.textContent = "Using microphone audio.";

+ }

+

+ audioContext = new (window.AudioContext || window.webkitAudioContext)();

+ analyser = audioContext.createAnalyser();

+ analyser.fftSize = 256;

+ microphone = audioContext.createMediaStreamSource(stream);

+ microphone.connect(analyser);

+

+ recorder = new MediaRecorder(stream, { mimeType: "audio/webm" });

+ recorder.ondataavailable = (e) => {

+ if (websocket && websocket.readyState === WebSocket.OPEN) {

+ websocket.send(e.data);

+ }

+ };

+ recorder.start(chunkDuration);

+

+ startTime = Date.now();

+ timerInterval = setInterval(updateTimer, 1000);

+ drawWaveform();

+

+ isRecording = true;

+ updateUI();

+ } catch (err) {

+ if (window.location.hostname === "0.0.0.0") {

+ statusText.textContent =

+ "Error accessing audio input. Browsers may block audio access on 0.0.0.0. Try using localhost:8000 instead.";

+ } else {

+ statusText.textContent = "Error accessing audio input. Please check permissions.";

+ }

+ console.error(err);

+ }

+}

+

+async function stopRecording() {

+ if (wakeLock) {

+ try {

+ await wakeLock.release();

+ } catch (e) {

+ // ignore

+ }

+ wakeLock = null;

+ }

+

+ userClosing = true;

+ waitingForStop = true;

+

+ if (websocket && websocket.readyState === WebSocket.OPEN) {

+ const emptyBlob = new Blob([], { type: "audio/webm" });

+ websocket.send(emptyBlob);

+ statusText.textContent = "Recording stopped. Processing final audio...";

+ }

+

+ if (recorder) {

+ recorder.stop();

+ recorder = null;

+ }

+

+ if (microphone) {

+ microphone.disconnect();

+ microphone = null;

+ }

+

+ if (analyser) {

+ analyser = null;

+ }

+

+ if (audioContext && audioContext.state !== "closed") {

+ try {

+ await audioContext.close();

+ } catch (e) {

+ console.warn("Could not close audio context:", e);

+ }

+ audioContext = null;

+ }

+

+ if (animationFrame) {

+ cancelAnimationFrame(animationFrame);

+ animationFrame = null;

+ }

+

+ if (timerInterval) {

+ clearInterval(timerInterval);

+ timerInterval = null;

+ }

+ timerElement.textContent = "00:00";

+ startTime = null;

+

+ isRecording = false;

+ updateUI();

+}

+

+async function toggleRecording() {

+ if (!isRecording) {

+ if (waitingForStop) {

+ console.log("Waiting for stop, early return");

+ return;

+ }

+ console.log("Connecting to WebSocket");

+ try {

+ if (websocket && websocket.readyState === WebSocket.OPEN) {

+ await startRecording();

+ } else {

+ await setupWebSocket();

+ await startRecording();

+ }

+ } catch (err) {

+ statusText.textContent = "Could not connect to WebSocket or access mic. Aborted.";

+ console.error(err);

+ }

+ } else {

+ console.log("Stopping recording");

+ stopRecording();

+ }

+}

+

+function updateUI() {

+ recordButton.classList.toggle("recording", isRecording);

+ recordButton.disabled = waitingForStop;

+

+ if (waitingForStop) {

+ if (statusText.textContent !== "Recording stopped. Processing final audio...") {

+ statusText.textContent = "Please wait for processing to complete...";

+ }

+ } else if (isRecording) {

+ statusText.textContent = "Recording...";

+ } else {

+ if (

+ statusText.textContent !== "Finished processing audio! Ready to record again." &&

+ statusText.textContent !== "Processing finalized or connection closed."

+ ) {

+ statusText.textContent = "Click to start transcription";

+ }

+ }

+ if (!waitingForStop) {

+ recordButton.disabled = false;

+ }

+}

+

+recordButton.addEventListener("click", toggleRecording);

+

+if (microphoneSelect) {

+ microphoneSelect.addEventListener("change", handleMicrophoneChange);

+}

+// document.addEventListener('DOMContentLoaded', async () => {

+// try {

+// await enumerateMicrophones();

+// } catch (error) {

+// console.log("Could not enumerate microphones on load:", error);

+// }

+// });

+// navigator.mediaDevices.addEventListener('devicechange', async () => {

+// console.log('Device change detected, re-enumerating microphones');

+// try {

+// await enumerateMicrophones();

+// } catch (error) {

+// console.log("Error re-enumerating microphones:", error);

+// }

+// });
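The render function in this file avoids rebuilding the transcript when nothing has changed: it serializes the relevant state with `JSON.stringify` and compares the result with `lastSignature`. A minimal, self-contained sketch of that technique follows; `createChangeDetector` is a hypothetical name, not a function from the diff.

```javascript
// Hypothetical helper: returns a function that reports whether the given state
// differs from the state seen on the previous call.
function createChangeDetector() {
  let lastSignature = null;
  return function hasChanged(state) {
    const signature = JSON.stringify(state);
    if (signature === lastSignature) {
      return false; // same content as last time, skip the expensive re-render
    }
    lastSignature = signature;
    return true;
  };
}

// Usage example
const hasChanged = createChangeDetector();
const first = hasChanged({ lines: ["hello"], status: "active" });
const second = hasChanged({ lines: ["hello"], status: "active" });
```

Because `JSON.stringify` is sensitive to key order, the state object should always be built with its keys in the same order, as the signature object in the diff is.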

diff --git a/chrome-extension/web/src/dark_mode.svg b/chrome-extension/web/src/dark_mode.svg

new file mode 100644

index 0000000..a083e1a

--- /dev/null

+++ b/chrome-extension/web/src/dark_mode.svg

@@ -0,0 +1 @@

+

\ No newline at end of file

diff --git a/chrome-extension/web/src/light_mode.svg b/chrome-extension/web/src/light_mode.svg

new file mode 100644

index 0000000..66b6e74

--- /dev/null

+++ b/chrome-extension/web/src/light_mode.svg

@@ -0,0 +1 @@

+

\ No newline at end of file

diff --git a/chrome-extension/web/src/system_mode.svg b/chrome-extension/web/src/system_mode.svg

new file mode 100644

index 0000000..7a8a0d2

--- /dev/null

+++ b/chrome-extension/web/src/system_mode.svg

@@ -0,0 +1 @@

+

\ No newline at end of file

diff --git a/whisperlivekit/simul_whisper/simul_whisper.py b/whisperlivekit/simul_whisper/simul_whisper.py

index 0b8649e..c1f8c2e 100644

--- a/whisperlivekit/simul_whisper/simul_whisper.py

+++ b/whisperlivekit/simul_whisper/simul_whisper.py

@@ -399,17 +399,17 @@ class PaddedAlignAttWhisper:

mlx_mel_padded = mlx_log_mel_spectrogram(audio=input_segments.detach(), n_mels=self.model.dims.n_mels, padding=N_SAMPLES)

mlx_mel = mlx_pad_or_trim(mlx_mel_padded, N_FRAMES, axis=-2)

mlx_encoder_feature = self.mlx_encoder.encoder(mlx_mel[None])

- encoder_feature = torch.as_tensor(mlx_encoder_feature)

+ encoder_feature = torch.tensor(np.array(mlx_encoder_feature))

content_mel_len = int((mlx_mel_padded.shape[0] - mlx_mel.shape[0])/2)

- device = encoder_feature.device #'cpu' is apple silicon

+ device = 'cpu'

elif self.fw_encoder:

audio_length_seconds = len(input_segments) / 16000

content_mel_len = int(audio_length_seconds * 100)//2

mel_padded_2 = self.fw_feature_extractor(waveform=input_segments.numpy(), padding=N_SAMPLES)[None, :]

mel = fw_pad_or_trim(mel_padded_2, N_FRAMES, axis=-1)

encoder_feature_ctranslate = self.fw_encoder.encode(mel)

- encoder_feature = torch.as_tensor(encoder_feature_ctranslate)

- device = encoder_feature.device

+ encoder_feature = torch.Tensor(np.array(encoder_feature_ctranslate))

+ device = 'cpu'

else:

# mel + padding to 30s

mel_padded = log_mel_spectrogram(input_segments, n_mels=self.model.dims.n_mels, padding=N_SAMPLES,

