diff --git a/README.md b/README.md

index 1787ad3..1f15dcd 100644

--- a/README.md

+++ b/README.md

@@ -54,7 +54,15 @@ pip install whisperlivekit

> - See [tokenizer.py](https://github.com/QuentinFuxa/WhisperLiveKit/blob/main/whisperlivekit/simul_whisper/whisper/tokenizer.py) for the list of all available languages.

> - For HTTPS requirements, see the **Parameters** section for SSL configuration options.

-

+#### Use the Chrome extension to capture audio from web pages

+

+See the `chrome-extension` directory for setup instructions.

+

+

+

+

+

#### Optional Dependencies

diff --git a/chrome-extension/README.md b/chrome-extension/README.md

index 3c4298a..bd4a8c7 100644

--- a/chrome-extension/README.md

+++ b/chrome-extension/README.md

@@ -1,11 +1,13 @@

-## WhisperLiveKit Chrome Extension v0.1.0

-Capture the audio of your current tab, transcribe or translate it using WhisperliveKit. **Still unstable**

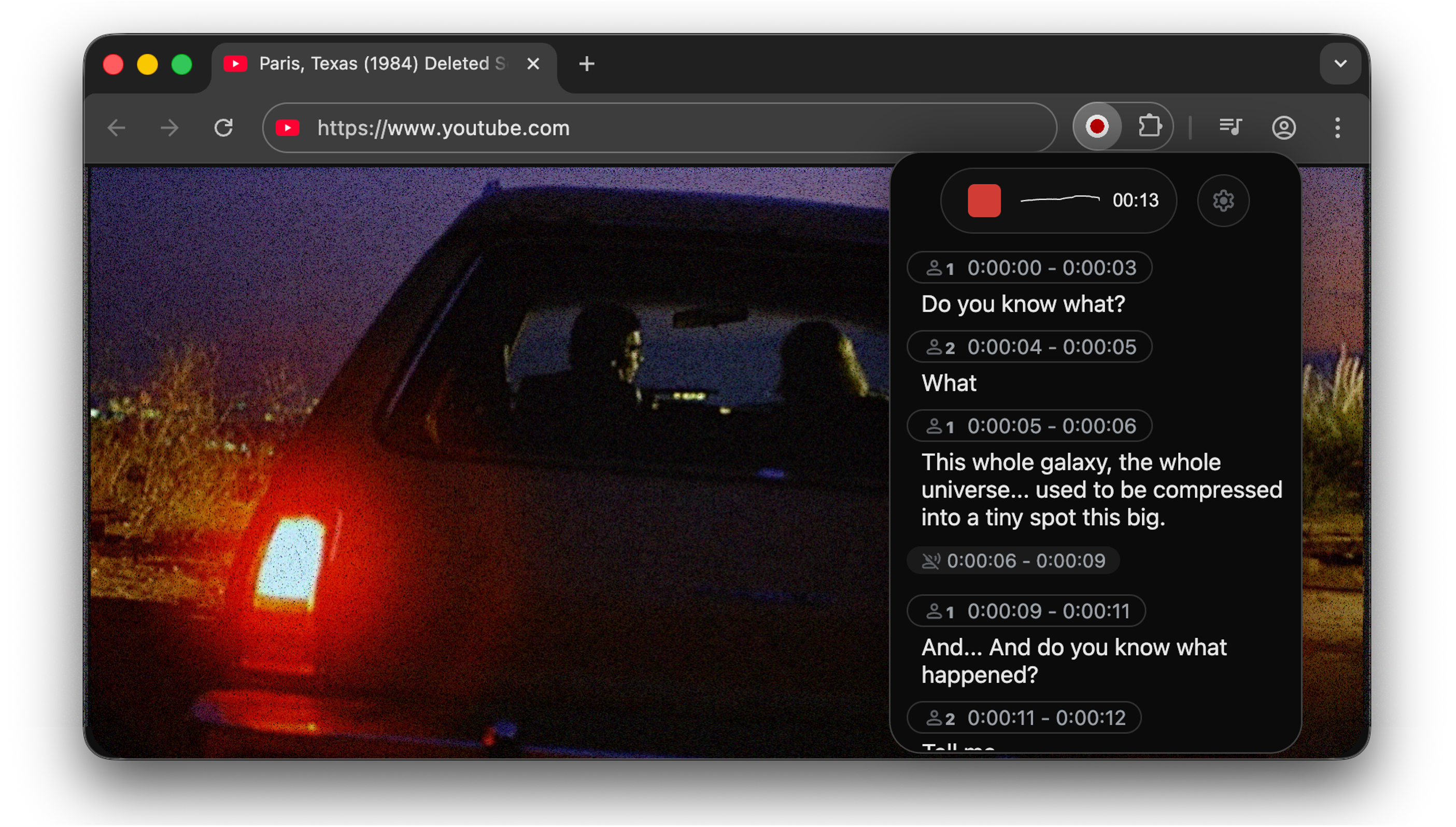

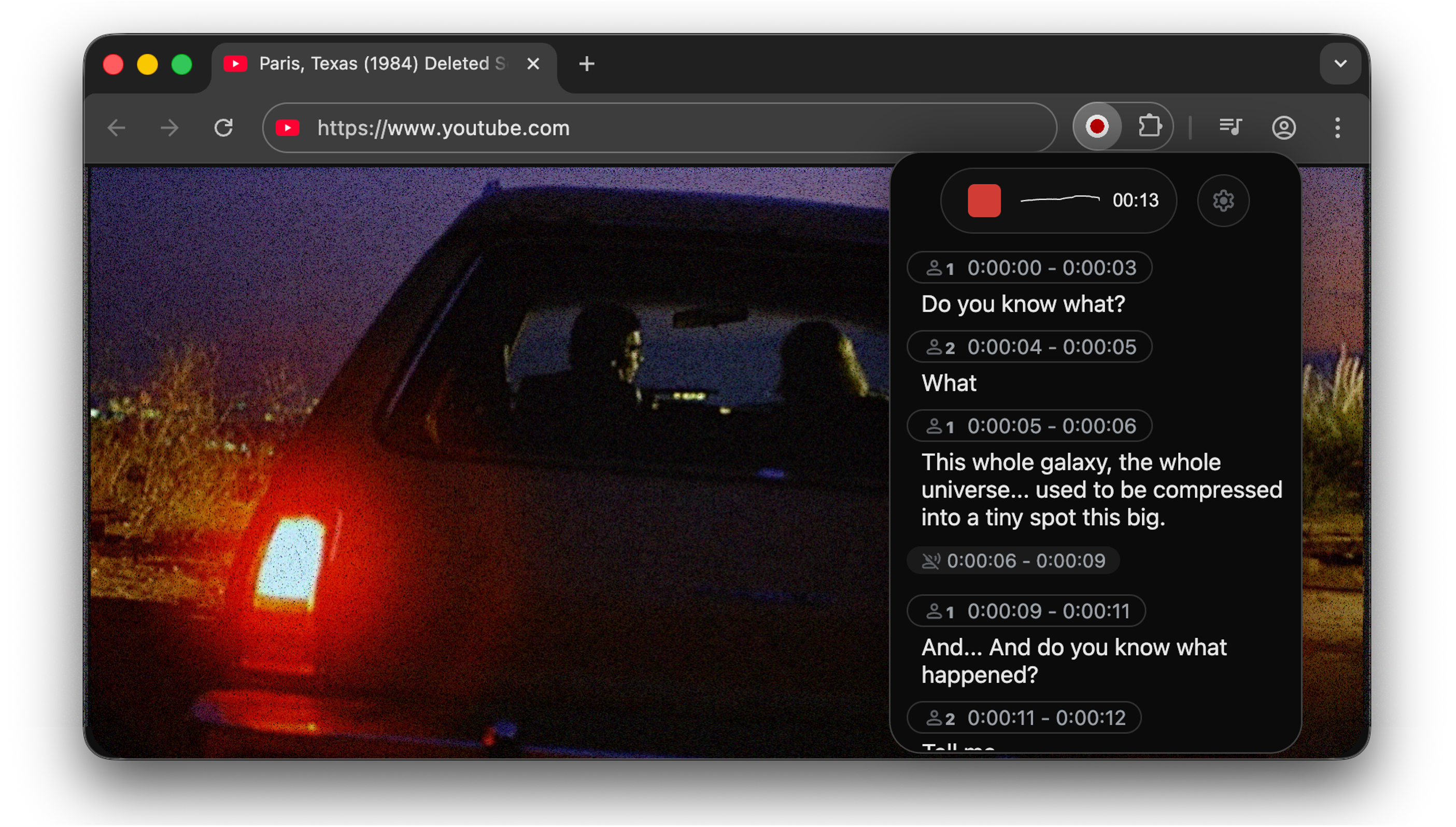

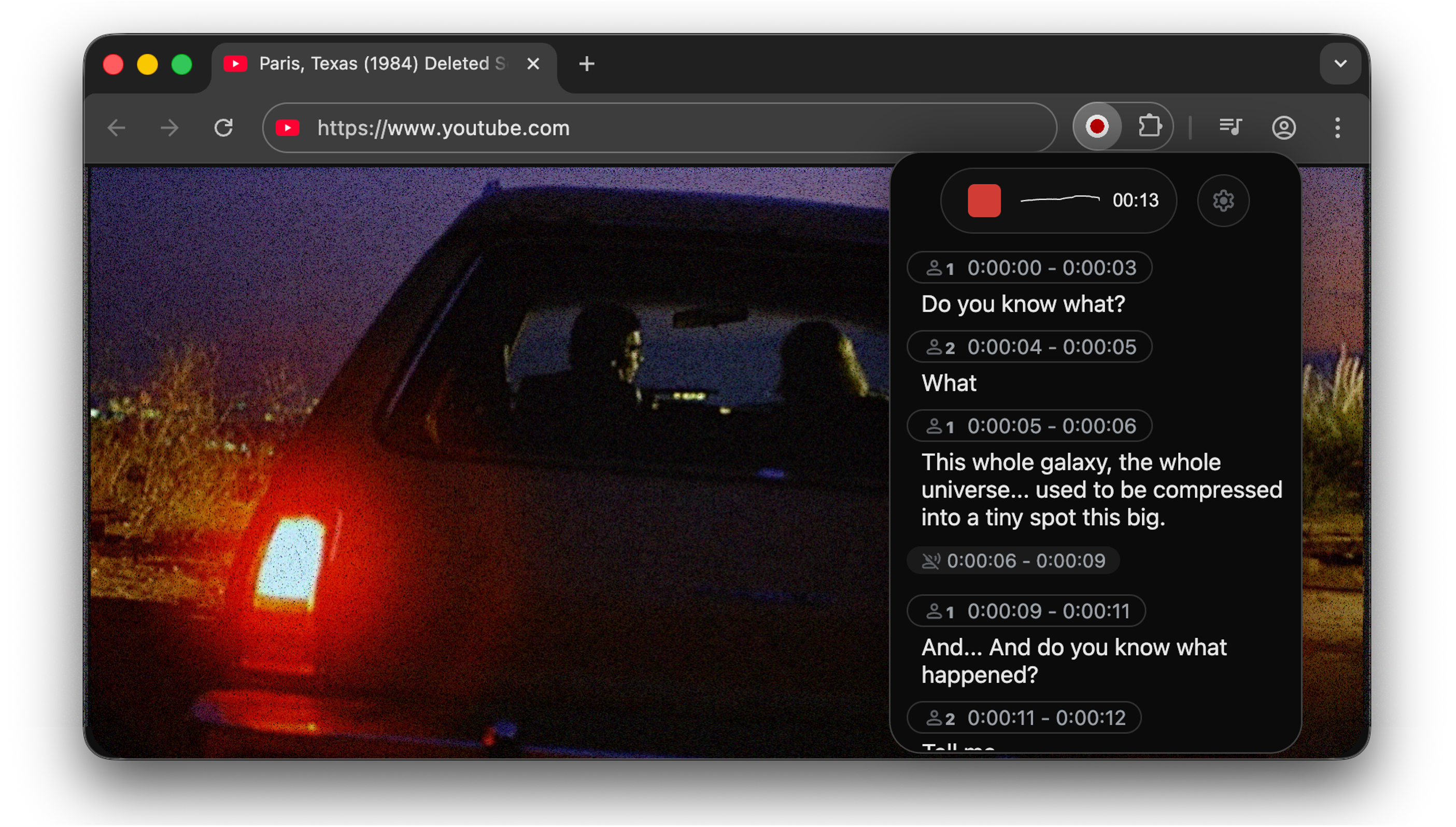

+## WhisperLiveKit Chrome Extension v0.1.1

+Capture the audio of your current tab and transcribe, diarize, and translate it using WhisperLiveKit in Chrome and other Chromium-based browsers.

+

+> Currently, only the tab audio is captured; your microphone audio is not recorded.

Default Microphone ';

-

- availableMicrophones.forEach((device, index) => {

- const option = document.createElement('option');

- option.value = device.deviceId;

- option.textContent = device.label || `Microphone ${index + 1}`;

- microphoneSelect.appendChild(option);

- });

-

- const savedMicId = localStorage.getItem('selectedMicrophone');

- if (savedMicId && availableMicrophones.some(mic => mic.deviceId === savedMicId)) {

- microphoneSelect.value = savedMicId;

- selectedMicrophoneId = savedMicId;

- }

-}

-

-function handleMicrophoneChange() {

- selectedMicrophoneId = microphoneSelect.value || null;

- localStorage.setItem('selectedMicrophone', selectedMicrophoneId || '');

-

- const selectedDevice = availableMicrophones.find(mic => mic.deviceId === selectedMicrophoneId);

- const deviceName = selectedDevice ? selectedDevice.label : 'Default Microphone';

-

- console.log(`Selected microphone: ${deviceName}`);

- statusText.textContent = `Microphone changed to: ${deviceName}`;

-

- if (isRecording) {

- statusText.textContent = "Switching microphone... Please wait.";

- stopRecording().then(() => {

- setTimeout(() => {

- toggleRecording();

- }, 1000);

- });

- }

-}

-

-// Helpers

-function fmt1(x) {

- const n = Number(x);

- return Number.isFinite(n) ? n.toFixed(1) : x;

-}

-

-// Default WebSocket URL computation

-const host = window.location.hostname || "localhost";

-const port = window.location.port;

-const protocol = window.location.protocol === "https:" ? "wss" : "ws";

-const defaultWebSocketUrl = websocketUrl;

-

-// Populate default caption and input

-if (websocketDefaultSpan) websocketDefaultSpan.textContent = defaultWebSocketUrl;

-websocketInput.value = defaultWebSocketUrl;

-websocketUrl = defaultWebSocketUrl;

-

-// Optional chunk selector (guard for presence)

-if (chunkSelector) {

- chunkSelector.addEventListener("change", () => {

- chunkDuration = parseInt(chunkSelector.value);

- });

-}

-

-// WebSocket input change handling

-websocketInput.addEventListener("change", () => {

- const urlValue = websocketInput.value.trim();

- if (!urlValue.startsWith("ws://") && !urlValue.startsWith("wss://")) {

- statusText.textContent = "Invalid WebSocket URL (must start with ws:// or wss://)";

- return;

- }

- websocketUrl = urlValue;

- statusText.textContent = "WebSocket URL updated. Ready to connect.";

-});

-

-function setupWebSocket() {

- return new Promise((resolve, reject) => {

- try {

- websocket = new WebSocket(websocketUrl);

- } catch (error) {

- statusText.textContent = "Invalid WebSocket URL. Please check and try again.";

- reject(error);

- return;

- }

-

- websocket.onopen = () => {

- statusText.textContent = "Connected to server.";

- resolve();

- };

-

- websocket.onclose = () => {

- if (userClosing) {

- if (waitingForStop) {

- statusText.textContent = "Processing finalized or connection closed.";

- if (lastReceivedData) {

- renderLinesWithBuffer(

- lastReceivedData.lines || [],

- lastReceivedData.buffer_diarization || "",

- lastReceivedData.buffer_transcription || "",

- 0,

- 0,

- true

- );

- }

- }

- } else {

- statusText.textContent = "Disconnected from the WebSocket server. (Check logs if model is loading.)";

- if (isRecording) {

- stopRecording();

- }

- }

- isRecording = false;

- waitingForStop = false;

- userClosing = false;

- lastReceivedData = null;

- websocket = null;

- updateUI();

- };

-

- websocket.onerror = () => {

- statusText.textContent = "Error connecting to WebSocket.";

- reject(new Error("Error connecting to WebSocket"));

- };

-

- websocket.onmessage = (event) => {

- const data = JSON.parse(event.data);

-

- if (data.type === "ready_to_stop") {

- console.log("Ready to stop received, finalizing display and closing WebSocket.");

- waitingForStop = false;

-

- if (lastReceivedData) {

- renderLinesWithBuffer(

- lastReceivedData.lines || [],

- lastReceivedData.buffer_diarization || "",

- lastReceivedData.buffer_transcription || "",

- 0,

- 0,

- true

- );

- }

- statusText.textContent = "Finished processing audio! Ready to record again.";

- recordButton.disabled = false;

-

- if (websocket) {

- websocket.close();

- }

- return;

- }

-

- lastReceivedData = data;

-

- const {

- lines = [],

- buffer_transcription = "",

- buffer_diarization = "",

- remaining_time_transcription = 0,

- remaining_time_diarization = 0,

- status = "active_transcription",

- } = data;

-

- renderLinesWithBuffer(

- lines,

- buffer_diarization,

- buffer_transcription,

- remaining_time_diarization,

- remaining_time_transcription,

- false,

- status

- );

- };

- });

-}

-

-function renderLinesWithBuffer(

- lines,

- buffer_diarization,

- buffer_transcription,

- remaining_time_diarization,

- remaining_time_transcription,

- isFinalizing = false,

- current_status = "active_transcription"

-) {

- if (current_status === "no_audio_detected") {

- linesTranscriptDiv.innerHTML =

- "No audio detected...

";

- return;

- }

-

- const showLoading = !isFinalizing && (lines || []).some((it) => it.speaker == 0);

- const showTransLag = !isFinalizing && remaining_time_transcription > 0;

- const showDiaLag = !isFinalizing && !!buffer_diarization && remaining_time_diarization > 0;

- const signature = JSON.stringify({

- lines: (lines || []).map((it) => ({ speaker: it.speaker, text: it.text, start: it.start, end: it.end })),

- buffer_transcription: buffer_transcription || "",

- buffer_diarization: buffer_diarization || "",

- status: current_status,

- showLoading,

- showTransLag,

- showDiaLag,

- isFinalizing: !!isFinalizing,

- });

- if (lastSignature === signature) {

- const t = document.querySelector(".lag-transcription-value");

- if (t) t.textContent = fmt1(remaining_time_transcription);

- const d = document.querySelector(".lag-diarization-value");

- if (d) d.textContent = fmt1(remaining_time_diarization);

- const ld = document.querySelector(".loading-diarization-value");

- if (ld) ld.textContent = fmt1(remaining_time_diarization);

- return;

- }

- lastSignature = signature;

-

- const linesHtml = (lines || [])

- .map((item, idx) => {

- let timeInfo = "";

- if (item.start !== undefined && item.end !== undefined) {

- timeInfo = ` ${item.start} - ${item.end}`;

- }

-

- let speakerLabel = "";

- if (item.speaker === -2) {

- speakerLabel = `Silence${timeInfo} `;

- } else if (item.speaker == 0 && !isFinalizing) {

- speakerLabel = `${fmt1(

- remaining_time_diarization

- )} second(s) of audio are undergoing diarizationSpeaker ${item.speaker}${timeInfo} `;

- }

-

- let currentLineText = item.text || "";

-

- if (idx === lines.length - 1) {

- if (!isFinalizing && item.speaker !== -2) {

- if (remaining_time_transcription > 0) {

- speakerLabel += `${fmt1(

- remaining_time_transcription

- )} s${fmt1(

- remaining_time_diarization

- )} s${buffer_diarization} `;

- }

- }

- if (buffer_transcription) {

- if (isFinalizing) {

- currentLineText +=

- (currentLineText.length > 0 && buffer_transcription.trim().length > 0 ? " " : "") +

- buffer_transcription.trim();

- } else {

- currentLineText += `${buffer_transcription} `;

- }

- }

- }

-

- return currentLineText.trim().length > 0 || speakerLabel.length > 0

- ? `${speakerLabel}

${currentLineText}

`

- : `${speakerLabel}

`;

- })

- .join("");

-

- linesTranscriptDiv.innerHTML = linesHtml;

- window.scrollTo({ top: document.body.scrollHeight, behavior: "smooth" });

-}

-

-function updateTimer() {

- if (!startTime) return;

-

- const elapsed = Math.floor((Date.now() - startTime) / 1000);

- const minutes = Math.floor(elapsed / 60).toString().padStart(2, "0");

- const seconds = (elapsed % 60).toString().padStart(2, "0");

- timerElement.textContent = `${minutes}:${seconds}`;

-}

-

-function drawWaveform() {

- if (!analyser) return;

-

- const bufferLength = analyser.frequencyBinCount;

- const dataArray = new Uint8Array(bufferLength);

- analyser.getByteTimeDomainData(dataArray);

-

- waveCtx.clearRect(

- 0,

- 0,

- waveCanvas.width / (window.devicePixelRatio || 1),

- waveCanvas.height / (window.devicePixelRatio || 1)

- );

- waveCtx.lineWidth = 1;

- waveCtx.strokeStyle = waveStroke;

- waveCtx.beginPath();

-

- const sliceWidth = (waveCanvas.width / (window.devicePixelRatio || 1)) / bufferLength;

- let x = 0;

-

- for (let i = 0; i < bufferLength; i++) {

- const v = dataArray[i] / 128.0;

- const y = (v * (waveCanvas.height / (window.devicePixelRatio || 1))) / 2;

-

- if (i === 0) {

- waveCtx.moveTo(x, y);

- } else {

- waveCtx.lineTo(x, y);

- }

-

- x += sliceWidth;

- }

-

- waveCtx.lineTo(

- waveCanvas.width / (window.devicePixelRatio || 1),

- (waveCanvas.height / (window.devicePixelRatio || 1)) / 2

- );

- waveCtx.stroke();

-

- animationFrame = requestAnimationFrame(drawWaveform);

-}

-

-async function startRecording() {

- try {

- try {

- wakeLock = await navigator.wakeLock.request("screen");

- } catch (err) {

- console.log("Error acquiring wake lock.");

- }

-

- let stream;

- try {

- // Try tab capture first

- stream = await new Promise((resolve, reject) => {

- chrome.tabCapture.capture({audio: true}, (s) => {

- if (s) {

- resolve(s);

- } else {

- reject(new Error('Tab capture failed or not available'));

- }

- });

- });

- statusText.textContent = "Using tab audio capture.";

- } catch (tabError) {

- console.log('Tab capture not available, falling back to microphone', tabError);

- // Fallback to microphone

- const audioConstraints = selectedMicrophoneId

- ? { audio: { deviceId: { exact: selectedMicrophoneId } } }

- : { audio: true };

- stream = await navigator.mediaDevices.getUserMedia(audioConstraints);

- statusText.textContent = "Using microphone audio.";

- }

-

- audioContext = new (window.AudioContext || window.webkitAudioContext)();

- analyser = audioContext.createAnalyser();

- analyser.fftSize = 256;

- microphone = audioContext.createMediaStreamSource(stream);

- microphone.connect(analyser);

-

- recorder = new MediaRecorder(stream, { mimeType: "audio/webm" });

- recorder.ondataavailable = (e) => {

- if (websocket && websocket.readyState === WebSocket.OPEN) {

- websocket.send(e.data);

- }

- };

- recorder.start(chunkDuration);

-

- startTime = Date.now();

- timerInterval = setInterval(updateTimer, 1000);

- drawWaveform();

-

- isRecording = true;

- updateUI();

- } catch (err) {

- if (window.location.hostname === "0.0.0.0") {

- statusText.textContent =

- "Error accessing audio input. Browsers may block audio access on 0.0.0.0. Try using localhost:8000 instead.";

- } else {

- statusText.textContent = "Error accessing audio input. Please check permissions.";

- }

- console.error(err);

- }

-}

-

-async function stopRecording() {

- if (wakeLock) {

- try {

- await wakeLock.release();

- } catch (e) {

- // ignore

- }

- wakeLock = null;

- }

-

- userClosing = true;

- waitingForStop = true;

-

- if (websocket && websocket.readyState === WebSocket.OPEN) {

- const emptyBlob = new Blob([], { type: "audio/webm" });

- websocket.send(emptyBlob);

- statusText.textContent = "Recording stopped. Processing final audio...";

- }

-

- if (recorder) {

- recorder.stop();

- recorder = null;

- }

-

- if (microphone) {

- microphone.disconnect();

- microphone = null;

- }

-

- if (analyser) {

- analyser = null;

- }

-

- if (audioContext && audioContext.state !== "closed") {

- try {

- await audioContext.close();

- } catch (e) {

- console.warn("Could not close audio context:", e);

- }

- audioContext = null;

- }

-

- if (animationFrame) {

- cancelAnimationFrame(animationFrame);

- animationFrame = null;

- }

-

- if (timerInterval) {

- clearInterval(timerInterval);

- timerInterval = null;

- }

- timerElement.textContent = "00:00";

- startTime = null;

-

- isRecording = false;

- updateUI();

-}

-

-async function toggleRecording() {

- if (!isRecording) {

- if (waitingForStop) {

- console.log("Waiting for stop, early return");

- return;

- }

- console.log("Connecting to WebSocket");

- try {

- if (websocket && websocket.readyState === WebSocket.OPEN) {

- await startRecording();

- } else {

- await setupWebSocket();

- await startRecording();

- }

- } catch (err) {

- statusText.textContent = "Could not connect to WebSocket or access mic. Aborted.";

- console.error(err);

- }

- } else {

- console.log("Stopping recording");

- stopRecording();

- }

-}

-

-function updateUI() {

- recordButton.classList.toggle("recording", isRecording);

- recordButton.disabled = waitingForStop;

-

- if (waitingForStop) {

- if (statusText.textContent !== "Recording stopped. Processing final audio...") {

- statusText.textContent = "Please wait for processing to complete...";

- }

- } else if (isRecording) {

- statusText.textContent = "Recording...";

- } else {

- if (

- statusText.textContent !== "Finished processing audio! Ready to record again." &&

- statusText.textContent !== "Processing finalized or connection closed."

- ) {

- statusText.textContent = "Click to start transcription";

- }

- }

- if (!waitingForStop) {

- recordButton.disabled = false;

- }

-}

-

-recordButton.addEventListener("click", toggleRecording);

-

-if (microphoneSelect) {

- microphoneSelect.addEventListener("change", handleMicrophoneChange);

-}

-

-// Settings toggle functionality

-settingsToggle.addEventListener("click", () => {

- settingsDiv.classList.toggle("visible");

- settingsToggle.classList.toggle("active");

-});

-

-document.addEventListener('DOMContentLoaded', async () => {

- try {

- await enumerateMicrophones();

- } catch (error) {

- console.log("Could not enumerate microphones on load:", error);

- }

-});

-navigator.mediaDevices.addEventListener('devicechange', async () => {

- console.log('Device change detected, re-enumerating microphones');

- try {

- await enumerateMicrophones();

- } catch (error) {

- console.log("Error re-enumerating microphones:", error);

- }

-});

-

-

-async function run() {

- const micPermission = await navigator.permissions.query({

- name: "microphone",

- });

-

- document.getElementById(

- "audioPermission"

- ).innerText = `MICROPHONE: ${micPermission.state}`;

-

- if (micPermission.state !== "granted") {

- chrome.tabs.create({ url: "welcome.html" });

- }

-

- const intervalId = setInterval(async () => {

- const micPermission = await navigator.permissions.query({

- name: "microphone",

- });

- if (micPermission.state === "granted") {

- document.getElementById(

- "audioPermission"

- ).innerText = `MICROPHONE: ${micPermission.state}`;

- clearInterval(intervalId);

- }

- }, 100);

-}

-

-void run();
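The deleted client code ends a session in two steps. `stopRecording()` sends an empty `audio/webm` Blob over the open socket and sets `waitingForStop`. The `onmessage` handler then waits for a `{"type": "ready_to_stop"}` message before it renders the last buffers and closes the socket. While the client is waiting, `toggleRecording()` refuses to start a new recording. The sketch below models only that handshake as a plain state object. The class and method names are hypothetical, and no real WebSocket is involved.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class StopHandshake:
    """Hypothetical model of the stop flags used by the extension's client code."""

    is_recording: bool = False
    waiting_for_stop: bool = False
    user_closing: bool = False
    last_received: Optional[dict[str, Any]] = None
    outbox: list[bytes] = field(default_factory=list)  # frames "sent" to the server
    closed: bool = False

    def start(self) -> None:
        # Mirrors toggleRecording(): ignore start requests while a stop is pending.
        if self.waiting_for_stop:
            return
        self.is_recording = True

    def stop(self) -> None:
        # Mirrors stopRecording(): an empty frame tells the server no more audio follows.
        self.user_closing = True
        self.waiting_for_stop = True
        self.outbox.append(b"")
        self.is_recording = False

    def on_message(self, raw: str) -> None:
        data = json.loads(raw)
        if data.get("type") == "ready_to_stop":
            # The server has flushed its buffers; render the final state and close.
            self.waiting_for_stop = False
            self.closed = True
            return
        # Any other message is a transcript update, kept for the final render.
        self.last_received = data
```

The real client also resets these flags in `onclose`. The sketch omits that step to keep the handshake itself visible.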

diff --git a/chrome-extension/manifest.json b/chrome-extension/manifest.json

index 2d8e3ab..1ed6a13 100644

--- a/chrome-extension/manifest.json

+++ b/chrome-extension/manifest.json

@@ -3,9 +3,6 @@

"name": "WhisperLiveKit Tab Capture",

"version": "1.0",

"description": "Capture and transcribe audio from browser tabs using WhisperLiveKit.",

- "background": {

- "service_worker": "background.js"

- },

"icons": {

"16": "icons/icon16.png",

"32": "icons/icon32.png",

@@ -14,7 +11,7 @@

},

"action": {

"default_title": "WhisperLiveKit Tab Capture",

- "default_popup": "popup.html"

+ "default_popup": "live_transcription.html"

},

"permissions": [

"scripting",

@@ -22,16 +19,5 @@

"offscreen",

"activeTab",

"storage"

- ],

- "web_accessible_resources": [

- {

- "resources": [

- "requestPermissions.html",

- "requestPermissions.js"

- ],

- "matches": [

- ""

- ]

- }

]

}

\ No newline at end of file
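This change removes `background.js` and `popup.html` from the manifest and points `action.default_popup` at `live_transcription.html`. That file only exists in `chrome-extension/` after `sync_extension.py` has copied it there. The following sketch is a quick consistency check that can catch a missing sync before you load the unpacked extension. It assumes you pass it the real `chrome-extension` directory. The function name `missing_popup` is hypothetical.

```python
import json
from pathlib import Path


def missing_popup(extension_dir: Path) -> list[str]:
    """Return problems with the manifest's popup entry; an empty list means it looks fine."""
    manifest_path = extension_dir / "manifest.json"
    manifest = json.loads(manifest_path.read_text(encoding="utf-8"))
    popup = manifest.get("action", {}).get("default_popup")
    if not popup:
        return ["manifest.json has no action.default_popup"]
    # The popup path is resolved relative to the extension directory.
    if not (extension_dir / popup).is_file():
        return [f"{popup} not found; run sync_extension.py first"]
    return []
```

For example, `missing_popup(Path("chrome-extension"))` run from the repository root should return `[]` once the sync has been done.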

diff --git a/chrome-extension/popup.html b/chrome-extension/popup.html

deleted file mode 100644

index 088d384..0000000

--- a/chrome-extension/popup.html

+++ /dev/null

@@ -1,78 +0,0 @@

-

-

-

-

- WhisperLiveKit

-

-

-

-

-

-

-

-

-

-

-

- Websocket URL

-

-

-

-

Select Microphone

-

- Default Microphone

-

-

-

-

-

-

-

-

-

-

-

-

-

-

-

-

diff --git a/chrome-extension/web/live_transcription.css b/chrome-extension/web/live_transcription.css

deleted file mode 100644

index 97c2c97..0000000

--- a/chrome-extension/web/live_transcription.css

+++ /dev/null

@@ -1,539 +0,0 @@

-:root {

- --bg: #ffffff;

- --text: #111111;

- --muted: #666666;

- --border: #e5e5e5;

- --chip-bg: rgba(0, 0, 0, 0.04);

- --chip-text: #000000;

- --spinner-border: #8d8d8d5c;

- --spinner-top: #b0b0b0;

- --silence-bg: #f3f3f3;

- --loading-bg: rgba(255, 77, 77, 0.06);

- --button-bg: #ffffff;

- --button-border: #e9e9e9;

- --wave-stroke: #000000;

- --label-dia-text: #868686;

- --label-trans-text: #111111;

-}

-

-@media (prefers-color-scheme: dark) {

- :root:not([data-theme="light"]) {

- --bg: #0b0b0b;

- --text: #e6e6e6;

- --muted: #9aa0a6;

- --border: #333333;

- --chip-bg: rgba(255, 255, 255, 0.08);

- --chip-text: #e6e6e6;

- --spinner-border: #555555;

- --spinner-top: #dddddd;

- --silence-bg: #1a1a1a;

- --loading-bg: rgba(255, 77, 77, 0.12);

- --button-bg: #111111;

- --button-border: #333333;

- --wave-stroke: #e6e6e6;

- --label-dia-text: #b3b3b3;

- --label-trans-text: #ffffff;

- }

-}

-

-:root[data-theme="dark"] {

- --bg: #0b0b0b;

- --text: #e6e6e6;

- --muted: #9aa0a6;

- --border: #333333;

- --chip-bg: rgba(255, 255, 255, 0.08);

- --chip-text: #e6e6e6;

- --spinner-border: #555555;

- --spinner-top: #dddddd;

- --silence-bg: #1a1a1a;

- --loading-bg: rgba(255, 77, 77, 0.12);

- --button-bg: #111111;

- --button-border: #333333;

- --wave-stroke: #e6e6e6;

- --label-dia-text: #b3b3b3;

- --label-trans-text: #ffffff;

-}

-

-:root[data-theme="light"] {

- --bg: #ffffff;

- --text: #111111;

- --muted: #666666;

- --border: #e5e5e5;

- --chip-bg: rgba(0, 0, 0, 0.04);

- --chip-text: #000000;

- --spinner-border: #8d8d8d5c;

- --spinner-top: #b0b0b0;

- --silence-bg: #f3f3f3;

- --loading-bg: rgba(255, 77, 77, 0.06);

- --button-bg: #ffffff;

- --button-border: #e9e9e9;

- --wave-stroke: #000000;

- --label-dia-text: #868686;

- --label-trans-text: #111111;

-}

-

-body {

- font-family: ui-sans-serif, system-ui, sans-serif, 'Apple Color Emoji', 'Segoe UI Emoji', 'Segoe UI Symbol', 'Noto Color Emoji';

- margin: 20px;

- text-align: center;

- background-color: var(--bg);

- color: var(--text);

-}

-

-.settings-toggle {

- margin-top: 4px;

- width: 40px;

- height: 40px;

- border: none;

- border-radius: 50%;

- background-color: var(--button-bg);

- cursor: pointer;

- transition: all 0.3s ease;

- /* border: 1px solid var(--button-border); */

- display: flex;

- align-items: center;

- justify-content: center;

- position: relative;

-}

-

-.settings-toggle:hover {

- background-color: var(--chip-bg);

-}

-

-.settings-toggle img {

- width: 24px;

- height: 24px;

- opacity: 0.7;

- transition: opacity 0.2s ease, transform 0.3s ease;

-}

-

-.settings-toggle:hover img {

- opacity: 1;

-}

-

-.settings-toggle.active img {

- transform: rotate(80deg);

-}

-

-/* Record button */

-#recordButton {

- width: 50px;

- height: 50px;

- border: none;

- border-radius: 50%;

- background-color: var(--button-bg);

- cursor: pointer;

- transition: all 0.3s ease;

- border: 1px solid var(--button-border);

- display: flex;

- align-items: center;

- justify-content: center;

- position: relative;

-}

-

-#recordButton.recording {

- width: 180px;

- border-radius: 40px;

- justify-content: flex-start;

- padding-left: 20px;

-}

-

-#recordButton:active {

- transform: scale(0.95);

-}

-

-.shape-container {

- width: 25px;

- height: 25px;

- display: flex;

- align-items: center;

- justify-content: center;

- flex-shrink: 0;

-}

-

-.shape {

- width: 25px;

- height: 25px;

- background-color: rgb(209, 61, 53);

- border-radius: 50%;

- transition: all 0.3s ease;

-}

-

-#recordButton:disabled .shape {

- background-color: #6e6d6d;

-}

-

-#recordButton.recording .shape {

- border-radius: 5px;

- width: 25px;

- height: 25px;

-}

-

-/* Recording elements */

-.recording-info {

- display: none;

- align-items: center;

- margin-left: 15px;

- flex-grow: 1;

-}

-

-#recordButton.recording .recording-info {

- display: flex;

-}

-

-.wave-container {

- width: 60px;

- height: 30px;

- position: relative;

- display: flex;

- align-items: center;

- justify-content: center;

-}

-

-#waveCanvas {

- width: 100%;

- height: 100%;

-}

-

-.timer {

- font-size: 14px;

- font-weight: 500;

- color: var(--text);

- margin-left: 10px;

-}

-

-#status {

- margin-top: 20px;

- font-size: 16px;

- color: var(--text);

-}

-

-/* Settings */

-.settings-container {

- display: flex;

- justify-content: center;

- align-items: flex-start;

- gap: 15px;

- margin-top: 20px;

- flex-wrap: wrap;

-}

-

-.settings {

- display: none;

- flex-wrap: wrap;

- align-items: flex-start;

- gap: 12px;

- transition: opacity 0.3s ease;

-}

-

-.settings.visible {

- display: flex;

-}

-

-.field {

- display: flex;

- flex-direction: column;

- align-items: flex-start;

- gap: 3px;

-}

-

-#chunkSelector,

-#websocketInput,

-#themeSelector,

-#microphoneSelect {

- font-size: 16px;

- padding: 5px 8px;

- border-radius: 8px;

- border: 1px solid var(--border);

- background-color: var(--button-bg);

- color: var(--text);

- max-height: 30px;

-}

-

-#microphoneSelect {

- width: 100%;

- max-width: 190px;

- min-width: 120px;

-}

-

-#chunkSelector:focus,

-#websocketInput:focus,

-#themeSelector:focus,

-#microphoneSelect:focus {

- outline: none;

- border-color: #007bff;

- box-shadow: 0 0 0 3px rgba(0, 123, 255, 0.15);

-}

-

-label {

- font-size: 13px;

- color: var(--muted);

-}

-

-.ws-default {

- font-size: 12px;

- color: var(--muted);

-}

-

-/* Segmented pill control for Theme */

-.segmented {

- display: inline-flex;

- align-items: stretch;

- border: 1px solid var(--button-border);

- background-color: var(--button-bg);

- border-radius: 999px;

- overflow: hidden;

-}

-

-.segmented input[type="radio"] {

- position: absolute;

- opacity: 0;

- pointer-events: none;

-}

-

-.theme-selector-container {

- display: flex;

- align-items: center;

- margin-top: 17px;

-}

-

-.segmented label {

- display: inline-flex;

- align-items: center;

- gap: 6px;

- padding: 6px 12px;

- font-size: 14px;

- color: var(--muted);

- cursor: pointer;

- user-select: none;

- transition: background-color 0.2s ease, color 0.2s ease;

-}

-

-.segmented label span {

- display: none;

-}

-

-.segmented label:hover span {

- display: inline;

-}

-

-.segmented label:hover {

- background-color: var(--chip-bg);

-}

-

-.segmented img {

- width: 16px;

- height: 16px;

-}

-

-.segmented input[type="radio"]:checked + label {

- background-color: var(--chip-bg);

- color: var(--text);

-}

-

-.segmented input[type="radio"]:focus-visible + label,

-.segmented input[type="radio"]:focus + label {

- outline: 2px solid #007bff;

- outline-offset: 2px;

- border-radius: 999px;

-}

-

-/* Transcript area */

-#linesTranscript {

- margin: 20px auto;

- max-width: 700px;

- text-align: left;

- font-size: 16px;

-}

-

-#linesTranscript p {

- margin: 0px 0;

-}

-

-#linesTranscript strong {

- color: var(--text);

-}

-

-#speaker {

- border: 1px solid var(--border);

- border-radius: 100px;

- padding: 2px 10px;

- font-size: 14px;

- margin-bottom: 0px;

-}

-

-.label_diarization {

- background-color: var(--chip-bg);

- border-radius: 8px 8px 8px 8px;

- padding: 2px 10px;

- margin-left: 10px;

- display: inline-block;

- white-space: nowrap;

- font-size: 14px;

- margin-bottom: 0px;

- color: var(--label-dia-text);

-}

-

-.label_transcription {

- background-color: var(--chip-bg);

- border-radius: 8px 8px 8px 8px;

- padding: 2px 10px;

- display: inline-block;

- white-space: nowrap;

- margin-left: 10px;

- font-size: 14px;

- margin-bottom: 0px;

- color: var(--label-trans-text);

-}

-

-#timeInfo {

- color: var(--muted);

- margin-left: 10px;

-}

-

-.textcontent {

- font-size: 16px;

- padding-left: 10px;

- margin-bottom: 10px;

- margin-top: 1px;

- padding-top: 5px;

- border-radius: 0px 0px 0px 10px;

-}

-

-.buffer_diarization {

- color: var(--label-dia-text);

- margin-left: 4px;

-}

-

-.buffer_transcription {

- color: #7474748c;

- margin-left: 4px;

-}

-

-.spinner {

- display: inline-block;

- width: 8px;

- height: 8px;

- border: 2px solid var(--spinner-border);

- border-top: 2px solid var(--spinner-top);

- border-radius: 50%;

- animation: spin 0.7s linear infinite;

- vertical-align: middle;

- margin-bottom: 2px;

- margin-right: 5px;

-}

-

-@keyframes spin {

- to {

- transform: rotate(360deg);

- }

-}

-

-.silence {

- color: var(--muted);

- background-color: var(--silence-bg);

- font-size: 13px;

- border-radius: 30px;

- padding: 2px 10px;

-}

-

-.loading {

- color: var(--muted);

- background-color: var(--loading-bg);

- border-radius: 8px 8px 8px 0px;

- padding: 2px 10px;

- font-size: 14px;

- margin-bottom: 0px;

-}

-

-/* for smaller screens */

-/* @media (max-width: 450px) {

- .settings-container {

- flex-direction: column;

- gap: 10px;

- align-items: center;

- }

-

- .settings {

- justify-content: center;

- gap: 8px;

- width: 100%;

- }

-

- .field {

- align-items: center;

- width: 100%;

- }

-

- #websocketInput,

- #microphoneSelect {

- min-width: 200px;

- max-width: 100%;

- }

-

- .theme-selector-container {

- margin-top: 10px;

- }

-} */

-

-/* @media (max-width: 768px) and (min-width: 451px) {

- .settings-container {

- gap: 10px;

- }

-

- .settings {

- gap: 8px;

- }

-

- #websocketInput,

- #microphoneSelect {

- min-width: 150px;

- max-width: 300px;

- }

-} */

-

-/* @media (max-width: 480px) {

- body {

- margin: 10px;

- }

-

- .settings-toggle {

- width: 35px;

- height: 35px;

- }

-

- .settings-toggle img {

- width: 20px;

- height: 20px;

- }

-

- .settings {

- flex-direction: column;

- align-items: center;

- gap: 6px;

- }

-

- #websocketInput,

- #microphoneSelect {

- max-width: 400px;

- }

-

- .segmented label {

- padding: 4px 8px;

- font-size: 12px;

- }

-

- .segmented img {

- width: 14px;

- height: 14px;

- }

-} */

-

-

-html

-{

- width: 400px; /* max: 800px */

- height: 600px; /* max: 600px */

- border-radius: 10px;

-

-}

diff --git a/chrome-extension/web/src/dark_mode.svg b/chrome-extension/web/src/dark_mode.svg

deleted file mode 100644

index a083e1a..0000000

--- a/chrome-extension/web/src/dark_mode.svg

+++ /dev/null

@@ -1 +0,0 @@

-Welcome

-

-

-

- This page exists to workaround an issue with Chrome that blocks permission

- requests from chrome extensions

-

-

-

diff --git a/sync_extension.py b/sync_extension.py

new file mode 100644

index 0000000..0ccae60

--- /dev/null

+++ b/sync_extension.py

@@ -0,0 +1,38 @@

+import shutil
+import os
+from pathlib import Path
+
+def sync_extension_files():
+    """Copy core files from web directory to Chrome extension directory."""
+
+    web_dir = Path("whisperlivekit/web")
+    extension_dir = Path("chrome-extension")
+
+    files_to_sync = [
+        "live_transcription.html", "live_transcription.js", "live_transcription.css"
+    ]
+
+    svg_files = [
+        "system_mode.svg",
+        "light_mode.svg",
+        "dark_mode.svg",
+        "settings.svg"
+    ]
+
+    for file in files_to_sync:
+        src_path = web_dir / file
+        dest_path = extension_dir / file
+
+        dest_path.parent.mkdir(parents=True, exist_ok=True)
+        shutil.copy2(src_path, dest_path)
+
+    for svg_file in svg_files:
+        src_path = web_dir / "src" / svg_file
+        dest_path = extension_dir / "web" / "src" / svg_file
+        dest_path.parent.mkdir(parents=True, exist_ok=True)
+        shutil.copy2(src_path, dest_path)
+
+
+if __name__ == "__main__":
+
+    sync_extension_files()

\ No newline at end of file
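`sync_extension_files()` copies the three page files into the root of `chrome-extension/` and the four SVG icons into `chrome-extension/web/src/`. If a source file is missing, `shutil.copy2` raises partway through the copy. The sketch below is a dry-run variant that only computes the source and destination pairs and reports missing sources up front. `plan_sync` is a hypothetical helper, and the file lists are copied from the script above.

```python
from pathlib import Path

# File lists copied from sync_extension.py.
FILES_TO_SYNC = ["live_transcription.html", "live_transcription.js", "live_transcription.css"]
SVG_FILES = ["system_mode.svg", "light_mode.svg", "dark_mode.svg", "settings.svg"]


def plan_sync(web_dir: Path, extension_dir: Path) -> tuple[list[tuple[Path, Path]], list[Path]]:
    """Return (copy pairs, missing sources); only checks existence, copies nothing."""
    pairs = [(web_dir / name, extension_dir / name) for name in FILES_TO_SYNC]
    pairs += [(web_dir / "src" / name, extension_dir / "web" / "src" / name) for name in SVG_FILES]
    missing = [src for src, _ in pairs if not src.is_file()]
    return pairs, missing


if __name__ == "__main__":
    # Same relative paths as sync_extension.py, so run this from the repository root.
    pairs, missing = plan_sync(Path("whisperlivekit/web"), Path("chrome-extension"))
    for src, dest in pairs:
        print(f"{src} -> {dest}")
    for src in missing:
        print(f"missing: {src}")
```

Running the plan first keeps the real copy all-or-nothing from the user's point of view. If `missing` is non-empty, nothing has been written yet.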

diff --git a/whisperlivekit/web/live_transcription.css b/whisperlivekit/web/live_transcription.css

index 0ce7065..a97a70c 100644

--- a/whisperlivekit/web/live_transcription.css

+++ b/whisperlivekit/web/live_transcription.css

@@ -72,6 +72,12 @@

--label-trans-text: #111111;

}

+html.is-extension

+{

+ width: 350px;

+ height: 500px;

+}

+

body {

font-family: ui-sans-serif, system-ui, sans-serif, 'Apple Color Emoji', 'Segoe UI Emoji', 'Segoe UI Symbol', 'Noto Color Emoji';

margin: 0;

@@ -191,6 +197,7 @@ body {

justify-content: center;

align-items: center;

gap: 15px;

+ position: relative;

}

.settings {

@@ -200,6 +207,52 @@ body {

gap: 12px;

}

+.settings-toggle {

+ width: 40px;

+ height: 40px;

+ border: none;

+ border-radius: 50%;

+ background-color: var(--button-bg);

+ border: 1px solid var(--button-border);

+ cursor: pointer;

+ display: none;

+ align-items: center;

+ justify-content: center;

+ transition: all 0.2s ease;

+}

+

+.settings-toggle:hover {

+ background-color: var(--chip-bg);

+}

+

+.settings-toggle.active {

+ background-color: var(--chip-bg);

+}

+

+.settings-toggle img {

+ width: 20px;

+ height: 20px;

+}

+

+@media (max-width: 10000px) {

+ .settings-toggle {

+ display: flex;

+ }

+

+ .settings {

+ display: none;

+ top: 100%;

+ background: var(--bg);

+ border: 1px solid var(--border);

+ border-radius: 18px;

+ padding: 12px;

+ }

+

+ .settings.visible {

+ display: flex;

+ }

+}

+

.field {

display: flex;

flex-direction: column;

@@ -454,7 +507,7 @@ label {

}

/* for smaller screens */

-@media (max-width: 768px) {

+@media (max-width: 200px) {

.header-container {

padding: 15px;

}

diff --git a/whisperlivekit/web/live_transcription.html b/whisperlivekit/web/live_transcription.html

index 2e7b518..ed7ecb8 100644

--- a/whisperlivekit/web/live_transcription.html

+++ b/whisperlivekit/web/live_transcription.html

@@ -5,7 +5,7 @@

WhisperLiveKit

-

+

+

Websocket URL

@@ -67,7 +71,7 @@

-

+

+

+ +

+ ## Running this extension

-1. Clone this repository.

-2. Load this directory in Chrome as an unpacked extension.

+1. From the repository root, run `python sync_extension.py` to copy the frontend files from `whisperlivekit/web` into the `chrome-extension` directory.

+2. Load the `chrome-extension` directory in Chrome as an unpacked extension.

## Devs:

diff --git a/chrome-extension/demo-extension.png b/chrome-extension/demo-extension.png

index ef6e7e2..2107c77 100644

Binary files a/chrome-extension/demo-extension.png and b/chrome-extension/demo-extension.png differ

diff --git a/chrome-extension/live_transcription.js b/chrome-extension/live_transcription.js

deleted file mode 100644

index 84a5472..0000000

--- a/chrome-extension/live_transcription.js

+++ /dev/null

@@ -1,669 +0,0 @@

-/* Theme, WebSocket, recording, rendering logic extracted from inline script and adapted for segmented theme control and WS caption */

-let isRecording = false;

-let websocket = null;

-let recorder = null;

-let chunkDuration = 100;

-let websocketUrl = "ws://localhost:8000/asr";

-let userClosing = false;

-let wakeLock = null;

-let startTime = null;

-let timerInterval = null;

-let audioContext = null;

-let analyser = null;

-let microphone = null;

-let waveCanvas = document.getElementById("waveCanvas");

-let waveCtx = waveCanvas.getContext("2d");

-let animationFrame = null;

-let waitingForStop = false;

-let lastReceivedData = null;

-let lastSignature = null;

-let availableMicrophones = [];

-let selectedMicrophoneId = null;

-

-waveCanvas.width = 60 * (window.devicePixelRatio || 1);

-waveCanvas.height = 30 * (window.devicePixelRatio || 1);

-waveCtx.scale(window.devicePixelRatio || 1, window.devicePixelRatio || 1);

-

-const statusText = document.getElementById("status");

-const recordButton = document.getElementById("recordButton");

-const chunkSelector = document.getElementById("chunkSelector");

-const websocketInput = document.getElementById("websocketInput");

-const websocketDefaultSpan = document.getElementById("wsDefaultUrl");

-const linesTranscriptDiv = document.getElementById("linesTranscript");

-const timerElement = document.querySelector(".timer");

-const themeRadios = document.querySelectorAll('input[name="theme"]');

-const microphoneSelect = document.getElementById("microphoneSelect");

-const settingsToggle = document.getElementById("settingsToggle");

-const settingsDiv = document.querySelector(".settings");

-

-

-

-chrome.runtime.onInstalled.addListener((details) => {

- if (details.reason.search(/install/g) === -1) {

- return

- }

- chrome.tabs.create({

- url: chrome.runtime.getURL("welcome.html"),

- active: true

- })

-})

-

-function getWaveStroke() {

- const styles = getComputedStyle(document.documentElement);

- const v = styles.getPropertyValue("--wave-stroke").trim();

- return v || "#000";

-}

-

-let waveStroke = getWaveStroke();

-function updateWaveStroke() {

- waveStroke = getWaveStroke();

-}

-

-function applyTheme(pref) {

- if (pref === "light") {

- document.documentElement.setAttribute("data-theme", "light");

- } else if (pref === "dark") {

- document.documentElement.setAttribute("data-theme", "dark");

- } else {

- document.documentElement.removeAttribute("data-theme");

- }

- updateWaveStroke();

-}

-

-// Persisted theme preference

-const savedThemePref = localStorage.getItem("themePreference") || "system";

-applyTheme(savedThemePref);

-if (themeRadios.length) {

- themeRadios.forEach((r) => {

- r.checked = r.value === savedThemePref;

- r.addEventListener("change", () => {

- if (r.checked) {

- localStorage.setItem("themePreference", r.value);

- applyTheme(r.value);

- }

- });

- });

-}

-

-// React to OS theme changes when in "system" mode

-const darkMq = window.matchMedia && window.matchMedia("(prefers-color-scheme: dark)");

-const handleOsThemeChange = () => {

- const pref = localStorage.getItem("themePreference") || "system";

- if (pref === "system") updateWaveStroke();

-};

-if (darkMq && darkMq.addEventListener) {

- darkMq.addEventListener("change", handleOsThemeChange);

-} else if (darkMq && darkMq.addListener) {

- // deprecated, but included for Safari compatibility

- darkMq.addListener(handleOsThemeChange);

-}

-

-async function enumerateMicrophones() {

- try {

- const micPermission = await navigator.permissions.query({

- name: "microphone",

- });

-

- const stream = await navigator.mediaDevices.getUserMedia({ audio: true });

- stream.getTracks().forEach(track => track.stop());

-

- const devices = await navigator.mediaDevices.enumerateDevices();

- availableMicrophones = devices.filter(device => device.kind === 'audioinput');

-

- populateMicrophoneSelect();

- console.log(`Found ${availableMicrophones.length} microphone(s)`);

- } catch (error) {

- console.error('Error enumerating microphones:', error);

- statusText.textContent = "Error accessing microphones. Please grant permission.";

- }

-}

-

-function populateMicrophoneSelect() {

- if (!microphoneSelect) return;

-

- microphoneSelect.innerHTML = '';

-

- availableMicrophones.forEach((device, index) => {

- const option = document.createElement('option');

- option.value = device.deviceId;

- option.textContent = device.label || `Microphone ${index + 1}`;

- microphoneSelect.appendChild(option);

- });

-

- const savedMicId = localStorage.getItem('selectedMicrophone');

- if (savedMicId && availableMicrophones.some(mic => mic.deviceId === savedMicId)) {

- microphoneSelect.value = savedMicId;

- selectedMicrophoneId = savedMicId;

- }

-}

-

-function handleMicrophoneChange() {

- selectedMicrophoneId = microphoneSelect.value || null;

- localStorage.setItem('selectedMicrophone', selectedMicrophoneId || '');

-

- const selectedDevice = availableMicrophones.find(mic => mic.deviceId === selectedMicrophoneId);

- const deviceName = selectedDevice ? selectedDevice.label : 'Default Microphone';

-

- console.log(`Selected microphone: ${deviceName}`);

- statusText.textContent = `Microphone changed to: ${deviceName}`;

-

- if (isRecording) {

- statusText.textContent = "Switching microphone... Please wait.";

- stopRecording().then(() => {

- setTimeout(() => {

- toggleRecording();

- }, 1000);

- });

- }

-}

-

-// Helpers

-function fmt1(x) {

- const n = Number(x);

- return Number.isFinite(n) ? n.toFixed(1) : x;

-}

-

-// Default WebSocket URL computation

-const host = window.location.hostname || "localhost";

-const port = window.location.port;

-const protocol = window.location.protocol === "https:" ? "wss" : "ws";

-const defaultWebSocketUrl = websocketUrl;

-

-// Populate default caption and input

-if (websocketDefaultSpan) websocketDefaultSpan.textContent = defaultWebSocketUrl;

-websocketInput.value = defaultWebSocketUrl;

-websocketUrl = defaultWebSocketUrl;

-

-// Optional chunk selector (guard for presence)

-if (chunkSelector) {

- chunkSelector.addEventListener("change", () => {

- chunkDuration = parseInt(chunkSelector.value);

- });

-}

-

-// WebSocket input change handling

-websocketInput.addEventListener("change", () => {

- const urlValue = websocketInput.value.trim();

- if (!urlValue.startsWith("ws://") && !urlValue.startsWith("wss://")) {

- statusText.textContent = "Invalid WebSocket URL (must start with ws:// or wss://)";

- return;

- }

- websocketUrl = urlValue;

- statusText.textContent = "WebSocket URL updated. Ready to connect.";

-});

-

-function setupWebSocket() {

- return new Promise((resolve, reject) => {

- try {

- websocket = new WebSocket(websocketUrl);

- } catch (error) {

- statusText.textContent = "Invalid WebSocket URL. Please check and try again.";

- reject(error);

- return;

- }

-

- websocket.onopen = () => {

- statusText.textContent = "Connected to server.";

- resolve();

- };

-

- websocket.onclose = () => {

- if (userClosing) {

- if (waitingForStop) {

- statusText.textContent = "Processing finalized or connection closed.";

- if (lastReceivedData) {

- renderLinesWithBuffer(

- lastReceivedData.lines || [],

- lastReceivedData.buffer_diarization || "",

- lastReceivedData.buffer_transcription || "",

- 0,

- 0,

- true

- );

- }

- }

- } else {

- statusText.textContent = "Disconnected from the WebSocket server. (Check logs if model is loading.)";

- if (isRecording) {

- stopRecording();

- }

- }

- isRecording = false;

- waitingForStop = false;

- userClosing = false;

- lastReceivedData = null;

- websocket = null;

- updateUI();

- };

-

- websocket.onerror = () => {

- statusText.textContent = "Error connecting to WebSocket.";

- reject(new Error("Error connecting to WebSocket"));

- };

-

- websocket.onmessage = (event) => {

- const data = JSON.parse(event.data);

-

- if (data.type === "ready_to_stop") {

- console.log("Ready to stop received, finalizing display and closing WebSocket.");

- waitingForStop = false;

-

- if (lastReceivedData) {

- renderLinesWithBuffer(

- lastReceivedData.lines || [],

- lastReceivedData.buffer_diarization || "",

- lastReceivedData.buffer_transcription || "",

- 0,

- 0,

- true

- );

- }

- statusText.textContent = "Finished processing audio! Ready to record again.";

- recordButton.disabled = false;

-

- if (websocket) {

- websocket.close();

- }

- return;

- }

-

- lastReceivedData = data;

-

- const {

- lines = [],

- buffer_transcription = "",

- buffer_diarization = "",

- remaining_time_transcription = 0,

- remaining_time_diarization = 0,

- status = "active_transcription",

- } = data;

-

- renderLinesWithBuffer(

- lines,

- buffer_diarization,

- buffer_transcription,

- remaining_time_diarization,

- remaining_time_transcription,

- false,

- status

- );

- };

- });

-}

-

-function renderLinesWithBuffer(

- lines,

- buffer_diarization,

- buffer_transcription,

- remaining_time_diarization,

- remaining_time_transcription,

- isFinalizing = false,

- current_status = "active_transcription"

-) {

- if (current_status === "no_audio_detected") {

- linesTranscriptDiv.innerHTML =

- "
