diff --git a/.dockerignore b/.dockerignore

index ef021aea..843c7e04 100644

--- a/.dockerignore

+++ b/.dockerignore

@@ -31,6 +31,7 @@ bin/*

.agent/*

.agents/*

.opencode/*

+.idea/*

.bmad/*

_bmad/*

_bmad-output/*

diff --git a/.github/workflows/docker-image.yml b/.github/workflows/docker-image.yml

index 3aacf4f5..443462df 100644

--- a/.github/workflows/docker-image.yml

+++ b/.github/workflows/docker-image.yml

@@ -10,13 +10,15 @@ env:

DOCKERHUB_REPO: eceasy/cli-proxy-api

jobs:

- docker:

+ docker_amd64:

runs-on: ubuntu-latest

steps:

- name: Checkout

uses: actions/checkout@v4

- - name: Set up QEMU

- uses: docker/setup-qemu-action@v3

+ - name: Refresh models catalog

+ run: |

+ git fetch --depth 1 https://github.com/router-for-me/models.git main

+ git show FETCH_HEAD:models.json > internal/registry/models/models.json

- name: Set up Docker Buildx

uses: docker/setup-buildx-action@v3

- name: Login to DockerHub

@@ -26,21 +28,120 @@ jobs:

password: ${{ secrets.DOCKERHUB_TOKEN }}

- name: Generate Build Metadata

run: |

- echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV

+ echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV

echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV

echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV

- - name: Build and push

+ - name: Build and push (amd64)

uses: docker/build-push-action@v6

with:

context: .

- platforms: |

- linux/amd64

- linux/arm64

+ platforms: linux/amd64

push: true

build-args: |

VERSION=${{ env.VERSION }}

COMMIT=${{ env.COMMIT }}

BUILD_DATE=${{ env.BUILD_DATE }}

tags: |

- ${{ env.DOCKERHUB_REPO }}:latest

- ${{ env.DOCKERHUB_REPO }}:${{ env.VERSION }}

+ ${{ env.DOCKERHUB_REPO }}:latest-amd64

+ ${{ env.DOCKERHUB_REPO }}:${{ env.VERSION }}-amd64

+

+ docker_arm64:

+ runs-on: ubuntu-24.04-arm

+ steps:

+ - name: Checkout

+ uses: actions/checkout@v4

+ - name: Refresh models catalog

+ run: |

+ git fetch --depth 1 https://github.com/router-for-me/models.git main

+ git show FETCH_HEAD:models.json > internal/registry/models/models.json

+ - name: Set up Docker Buildx

+ uses: docker/setup-buildx-action@v3

+ - name: Login to DockerHub

+ uses: docker/login-action@v3

+ with:

+ username: ${{ secrets.DOCKERHUB_USERNAME }}

+ password: ${{ secrets.DOCKERHUB_TOKEN }}

+ - name: Generate Build Metadata

+ run: |

+ echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV

+ echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV

+ echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV

+ - name: Build and push (arm64)

+ uses: docker/build-push-action@v6

+ with:

+ context: .

+ platforms: linux/arm64

+ push: true

+ build-args: |

+ VERSION=${{ env.VERSION }}

+ COMMIT=${{ env.COMMIT }}

+ BUILD_DATE=${{ env.BUILD_DATE }}

+ tags: |

+ ${{ env.DOCKERHUB_REPO }}:latest-arm64

+ ${{ env.DOCKERHUB_REPO }}:${{ env.VERSION }}-arm64

+

+ docker_manifest:

+ runs-on: ubuntu-latest

+ needs:

+ - docker_amd64

+ - docker_arm64

+ steps:

+ - name: Checkout

+ uses: actions/checkout@v4

+ - name: Set up Docker Buildx

+ uses: docker/setup-buildx-action@v3

+ - name: Login to DockerHub

+ uses: docker/login-action@v3

+ with:

+ username: ${{ secrets.DOCKERHUB_USERNAME }}

+ password: ${{ secrets.DOCKERHUB_TOKEN }}

+ - name: Generate Build Metadata

+ run: |

+ echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV

+ echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV

+ echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV

+ - name: Create and push multi-arch manifests

+ run: |

+ docker buildx imagetools create \

+ --tag "${DOCKERHUB_REPO}:latest" \

+ "${DOCKERHUB_REPO}:latest-amd64" \

+ "${DOCKERHUB_REPO}:latest-arm64"

+ docker buildx imagetools create \

+ --tag "${DOCKERHUB_REPO}:${VERSION}" \

+ "${DOCKERHUB_REPO}:${VERSION}-amd64" \

+ "${DOCKERHUB_REPO}:${VERSION}-arm64"

+ - name: Cleanup temporary tags

+ continue-on-error: true

+ env:

+ DOCKERHUB_USERNAME: ${{ secrets.DOCKERHUB_USERNAME }}

+ DOCKERHUB_TOKEN: ${{ secrets.DOCKERHUB_TOKEN }}

+ run: |

+ set -euo pipefail

+ namespace="${DOCKERHUB_REPO%%/*}"

+ repo_name="${DOCKERHUB_REPO#*/}"

+

+ token="$(

+ curl -fsSL \

+ -H 'Content-Type: application/json' \

+ -d "{\"username\":\"${DOCKERHUB_USERNAME}\",\"password\":\"${DOCKERHUB_TOKEN}\"}" \

+ 'https://hub.docker.com/v2/users/login/' \

+ | python3 -c 'import json,sys; print(json.load(sys.stdin)["token"])'

+ )"

+

+ delete_tag() {

+ local tag="$1"

+ local url="https://hub.docker.com/v2/repositories/${namespace}/${repo_name}/tags/${tag}/"

+ local http_code

+ http_code="$(curl -sS -o /dev/null -w "%{http_code}" -X DELETE -H "Authorization: JWT ${token}" "${url}" || true)"

+ if [ "${http_code}" = "204" ] || [ "${http_code}" = "404" ]; then

+ echo "Docker Hub tag removed (or missing): ${DOCKERHUB_REPO}:${tag} (HTTP ${http_code})"

+ return 0

+ fi

+ echo "Docker Hub tag delete failed: ${DOCKERHUB_REPO}:${tag} (HTTP ${http_code})"

+ return 0

+ }

+

+ delete_tag "latest-amd64"

+ delete_tag "latest-arm64"

+ delete_tag "${VERSION}-amd64"

+ delete_tag "${VERSION}-arm64"
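
The cleanup step derives the Docker Hub namespace and repository name from `DOCKERHUB_REPO` with the shell expansions `${DOCKERHUB_REPO%%/*}` (everything before the first slash) and `${DOCKERHUB_REPO#*/}` (everything after it). A minimal Go sketch of the same split may make the behavior easier to see. `splitRepo` is a hypothetical name used only for illustration and is not part of the workflow or the repository.

```go
package main

import (
	"fmt"
	"strings"
)

// splitRepo is a hypothetical helper that mirrors the two shell expansions
// used in the cleanup step. When the value contains no slash, both shell
// expansions leave the string unchanged, so both results equal the input.
func splitRepo(repo string) (namespace, name string) {
	before, after, found := strings.Cut(repo, "/")
	if !found {
		return repo, repo
	}
	return before, after
}

func main() {
	ns, name := splitRepo("eceasy/cli-proxy-api")
	// The tag URL follows the pattern used by delete_tag in the workflow.
	fmt.Printf("https://hub.docker.com/v2/repositories/%s/%s/tags/%s/\n", ns, name, "latest-amd64")
}
```

Because the split happens at the first slash only, a value with more than one slash keeps the remainder in the repository name.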

diff --git a/.github/workflows/pr-test-build.yml b/.github/workflows/pr-test-build.yml

index 477ff049..75f4c520 100644

--- a/.github/workflows/pr-test-build.yml

+++ b/.github/workflows/pr-test-build.yml

@@ -12,6 +12,10 @@ jobs:

steps:

- name: Checkout

uses: actions/checkout@v4

+ - name: Refresh models catalog

+ run: |

+ git fetch --depth 1 https://github.com/router-for-me/models.git main

+ git show FETCH_HEAD:models.json > internal/registry/models/models.json

- name: Set up Go

uses: actions/setup-go@v5

with:

diff --git a/.github/workflows/release.yaml b/.github/workflows/release.yaml

index 4bb5e63b..4043e4a5 100644

--- a/.github/workflows/release.yaml

+++ b/.github/workflows/release.yaml

@@ -16,21 +16,25 @@ jobs:

- uses: actions/checkout@v4

with:

fetch-depth: 0

+ - name: Refresh models catalog

+ run: |

+ git fetch --depth 1 https://github.com/router-for-me/models.git main

+ git show FETCH_HEAD:models.json > internal/registry/models/models.json

- run: git fetch --force --tags

- uses: actions/setup-go@v4

with:

- go-version: '>=1.24.0'

+ go-version: '>=1.26.0'

cache: true

- name: Generate Build Metadata

run: |

- echo VERSION=`git describe --tags --always --dirty` >> $GITHUB_ENV

+ echo "VERSION=${GITHUB_REF_NAME}" >> $GITHUB_ENV

echo COMMIT=`git rev-parse --short HEAD` >> $GITHUB_ENV

echo BUILD_DATE=`date -u +%Y-%m-%dT%H:%M:%SZ` >> $GITHUB_ENV

- uses: goreleaser/goreleaser-action@v4

with:

distribution: goreleaser

version: latest

- args: release --clean

+ args: release --clean --skip=validate

env:

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

VERSION: ${{ env.VERSION }}

diff --git a/.gitignore b/.gitignore

index b1c2beef..38152671 100644

--- a/.gitignore

+++ b/.gitignore

@@ -41,6 +41,7 @@ GEMINI.md

.agents/*

.agents/*

.opencode/*

+.idea/*

.bmad/*

_bmad/*

_bmad-output/*

diff --git a/.goreleaser.yml b/.goreleaser.yml

index 31d05e6d..df828102 100644

--- a/.goreleaser.yml

+++ b/.goreleaser.yml

@@ -1,3 +1,5 @@

+version: 2

+

builds:

- id: "cli-proxy-api"

env:

diff --git a/Dockerfile b/Dockerfile

index 8623dc5e..3e10c4f9 100644

--- a/Dockerfile

+++ b/Dockerfile

@@ -1,4 +1,4 @@

-FROM golang:1.24-alpine AS builder

+FROM golang:1.26-alpine AS builder

WORKDIR /app

diff --git a/README.md b/README.md

index 382434d6..ac78a5b8 100644

--- a/README.md

+++ b/README.md

@@ -10,11 +10,11 @@ So you can use local or multi-account CLI access with OpenAI(include Responses)/

## Sponsor

-[](https://z.ai/subscribe?ic=8JVLJQFSKB)

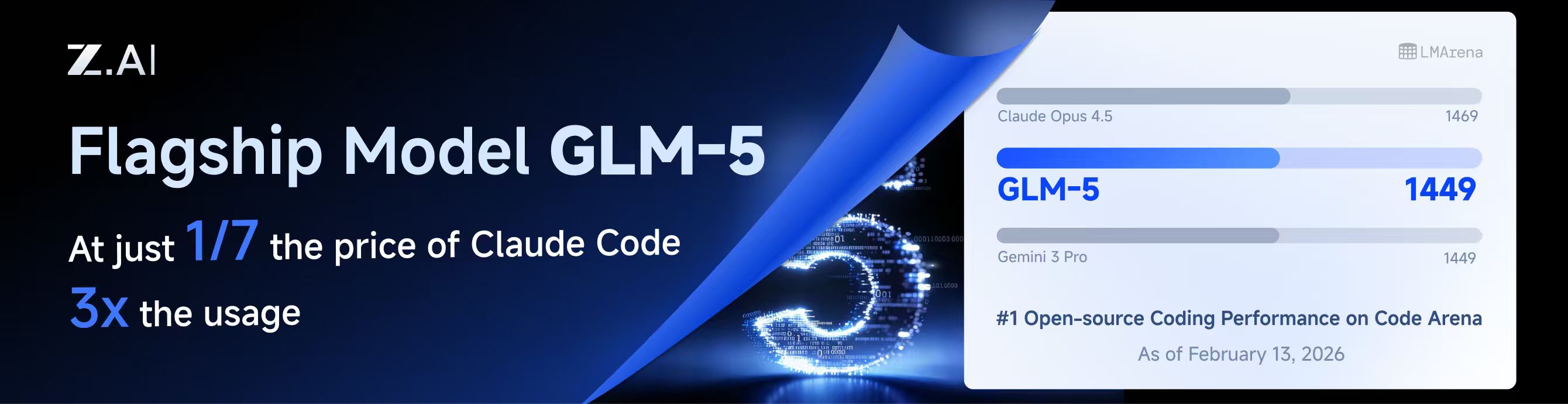

+[](https://z.ai/subscribe?ic=8JVLJQFSKB)

This project is sponsored by Z.ai, supporting us with their GLM CODING PLAN.

-GLM CODING PLAN is a subscription service designed for AI coding, starting at just $3/month. It provides access to their flagship GLM-4.7 model across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers top-tier, fast, and stable coding experiences.

+GLM CODING PLAN is a subscription service designed for AI coding, starting at just $10/month. It provides access to their flagship GLM-4.7 model (and GLM-5, available only to Pro users) across 10+ popular AI coding tools (Claude Code, Cline, Roo Code, etc.), offering developers top-tier, fast, and stable coding experiences.

Get 10% OFF GLM CODING PLAN:https://z.ai/subscribe?ic=8JVLJQFSKB

@@ -27,8 +27,8 @@ Get 10% OFF GLM CODING PLAN:https://z.ai/subscribe?ic=8JVLJQFSKB

| Thanks to PackyCode for sponsoring this project! PackyCode is a reliable and efficient API relay service provider, offering relay services for Claude Code, Codex, Gemini, and more. PackyCode provides special discounts for our software users: register using this link and enter the "cliproxyapi" promo code during recharge to get 10% off. |

- |

-Thanks to Cubence for sponsoring this project! Cubence is a reliable and efficient API relay service provider, offering relay services for Claude Code, Codex, Gemini, and more. Cubence provides special discounts for our software users: register using this link and enter the "CLIPROXYAPI" promo code during recharge to get 10% off. |

+ |

+Thanks to AICodeMirror for sponsoring this project! AICodeMirror provides official high-stability relay services for Claude Code / Codex / Gemini CLI, with enterprise-grade concurrency, fast invoicing, and 24/7 dedicated technical support. Claude Code / Codex / Gemini official channels at 38% / 2% / 9% of original price, with extra discounts on top-ups! AICodeMirror offers special benefits for CLIProxyAPI users: register via this link to enjoy 20% off your first top-up, and enterprise customers can get up to 25% off! |

@@ -138,6 +138,29 @@ Windows desktop app built with Tauri + React for monitoring AI coding assistant

A lightweight web admin panel for CLIProxyAPI with health checks, resource monitoring, real-time logs, auto-update, request statistics and pricing display. Supports one-click installation and systemd service.

+### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)

+

+A Windows tray application implemented using PowerShell scripts, without relying on any third-party libraries. The main features include: automatic creation of shortcuts, silent running, password management, channel switching (Main / Plus), and automatic downloading and updating.

+

+### [霖君](https://github.com/wangdabaoqq/LinJun)

+

+霖君 is a cross-platform desktop application for managing AI programming assistants, supporting macOS, Windows, and Linux. It provides unified management of Claude Code, Gemini CLI, OpenAI Codex, Qwen Code, and other AI coding tools, with a local proxy for multi-account quota tracking and one-click configuration.

+

+### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)

+

+A modern web-based management dashboard for CLIProxyAPI built with Next.js, React, and PostgreSQL. Features real-time log streaming, structured configuration editing, API key management, OAuth provider integration for Claude/Gemini/Codex, usage analytics, container management, and config sync with OpenCode via companion plugin - no manual YAML editing needed.

+

+### [All API Hub](https://github.com/qixing-jk/all-api-hub)

+

+Browser extension for one-stop management of New API-compatible relay site accounts, featuring balance and usage dashboards, auto check-in, one-click key export to common apps, in-page API availability testing, and channel/model sync and redirection. It integrates with CLIProxyAPI through the Management API for one-click provider import and config sync.

+

+### [Shadow AI](https://github.com/HEUDavid/shadow-ai)

+

+Shadow AI is an AI assistant tool designed specifically for restricted environments. It provides a stealthy operation

+mode without windows or traces, and enables cross-device AI Q&A interaction and control via the local area network

+(LAN). Essentially, it is an automated collaboration layer of "screen/audio capture + AI inference + low-friction delivery",

+helping users use AI assistants immersively across applications on controlled devices or in restricted environments.

+

> [!NOTE]

> If you developed a project based on CLIProxyAPI, please open a PR to add it to this list.

@@ -149,6 +172,12 @@ Those projects are ports of CLIProxyAPI or inspired by it:

A Next.js implementation inspired by CLIProxyAPI, easy to install and use, built from scratch with format translation (OpenAI/Claude/Gemini/Ollama), combo system with auto-fallback, multi-account management with exponential backoff, a Next.js web dashboard, and support for CLI tools (Cursor, Claude Code, Cline, RooCode) - no API keys needed.

+### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)

+

+Never stop coding. Smart routing to FREE & low-cost AI models with automatic fallback.

+

+OmniRoute is an AI gateway for multi-provider LLMs: an OpenAI-compatible endpoint with smart routing, load balancing, retries, and fallbacks. Add policies, rate limits, caching, and observability for reliable, cost-aware inference.

+

> [!NOTE]

> If you have developed a port of CLIProxyAPI or a project inspired by it, please open a PR to add it to this list.

diff --git a/README_CN.md b/README_CN.md

index 872b6a59..7ee7db43 100644

--- a/README_CN.md

+++ b/README_CN.md

@@ -10,13 +10,13 @@

## 赞助商

-[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

+[](https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII)

本项目由 Z智谱 提供赞助, 他们通过 GLM CODING PLAN 对本项目提供技术支持。

-GLM CODING PLAN 是专为AI编码打造的订阅套餐,每月最低仅需20元,即可在十余款主流AI编码工具如 Claude Code、Cline、Roo Code 中畅享智谱旗舰模型GLM-4.7,为开发者提供顶尖的编码体验。

+GLM CODING PLAN 是专为AI编码打造的订阅套餐,每月最低仅需20元,即可在十余款主流AI编码工具如 Claude Code、Cline、Roo Code 中畅享智谱旗舰模型GLM-4.7(受限于算力,目前仅限Pro用户开放),为开发者提供顶尖的编码体验。

-智谱AI为本软件提供了特别优惠,使用以下链接购买可以享受九折优惠:https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII

+智谱AI为本产品提供了特别优惠,使用以下链接购买可以享受九折优惠:https://www.bigmodel.cn/claude-code?ic=RRVJPB5SII

---

@@ -27,8 +27,8 @@ GLM CODING PLAN 是专为AI编码打造的订阅套餐,每月最低仅需20元

感谢 PackyCode 对本项目的赞助!PackyCode 是一家可靠高效的 API 中转服务商,提供 Claude Code、Codex、Gemini 等多种服务的中转。PackyCode 为本软件用户提供了特别优惠:使用此链接注册,并在充值时输入 "cliproxyapi" 优惠码即可享受九折优惠。 |

- |

-感谢 Cubence 对本项目的赞助!Cubence 是一家可靠高效的 API 中转服务商,提供 Claude Code、Codex、Gemini 等多种服务的中转。Cubence 为本软件用户提供了特别优惠:使用此链接注册,并在充值时输入 "CLIPROXYAPI" 优惠码即可享受九折优惠。 |

+ |

+感谢 AICodeMirror 赞助了本项目!AICodeMirror 提供 Claude Code / Codex / Gemini CLI 官方高稳定中转服务,支持企业级高并发、极速开票、7×24 专属技术支持。 Claude Code / Codex / Gemini 官方渠道低至 3.8 / 0.2 / 0.9 折,充值更有折上折!AICodeMirror 为 CLIProxyAPI 的用户提供了特别福利,通过此链接注册的用户,可享受首充8折,企业客户最高可享 7.5 折! |

@@ -137,6 +137,26 @@ Windows 桌面应用,基于 Tauri + React 构建,用于通过 CLIProxyAPI

面向 CLIProxyAPI 的 Web 管理面板,提供健康检查、资源监控、日志查看、自动更新、请求统计与定价展示,支持一键安装与 systemd 服务。

+### [CLIProxyAPI Tray](https://github.com/kitephp/CLIProxyAPI_Tray)

+

+Windows 托盘应用,基于 PowerShell 脚本实现,不依赖任何第三方库。主要功能包括:自动创建快捷方式、静默运行、密码管理、通道切换(Main / Plus)以及自动下载与更新。

+

+### [霖君](https://github.com/wangdabaoqq/LinJun)

+

+霖君是一款用于管理AI编程助手的跨平台桌面应用,支持macOS、Windows、Linux系统。统一管理Claude Code、Gemini CLI、OpenAI Codex、Qwen Code等AI编程工具,本地代理实现多账户配额跟踪和一键配置。

+

+### [CLIProxyAPI Dashboard](https://github.com/itsmylife44/cliproxyapi-dashboard)

+

+一个面向 CLIProxyAPI 的现代化 Web 管理仪表盘,基于 Next.js、React 和 PostgreSQL 构建。支持实时日志流、结构化配置编辑、API Key 管理、Claude/Gemini/Codex 的 OAuth 提供方集成、使用量分析、容器管理,并可通过配套插件与 OpenCode 同步配置,无需手动编辑 YAML。

+

+### [All API Hub](https://github.com/qixing-jk/all-api-hub)

+

+用于一站式管理 New API 兼容中转站账号的浏览器扩展,提供余额与用量看板、自动签到、密钥一键导出到常用应用、网页内 API 可用性测试,以及渠道与模型同步和重定向。支持通过 CLIProxyAPI Management API 一键导入 Provider 与同步配置。

+

+### [Shadow AI](https://github.com/HEUDavid/shadow-ai)

+

+Shadow AI 是一款专为受限环境设计的 AI 辅助工具。提供无窗口、无痕迹的隐蔽运行方式,并通过局域网实现跨设备的 AI 问答交互与控制。本质上是一个「屏幕/音频采集 + AI 推理 + 低摩擦投送」的自动化协作层,帮助用户在受控设备/受限环境下沉浸式跨应用地使用 AI 助手。

+

> [!NOTE]

> 如果你开发了基于 CLIProxyAPI 的项目,请提交一个 PR(拉取请求)将其添加到此列表中。

@@ -148,6 +168,12 @@ Windows 桌面应用,基于 Tauri + React 构建,用于通过 CLIProxyAPI

基于 Next.js 的实现,灵感来自 CLIProxyAPI,易于安装使用;自研格式转换(OpenAI/Claude/Gemini/Ollama)、组合系统与自动回退、多账户管理(指数退避)、Next.js Web 控制台,并支持 Cursor、Claude Code、Cline、RooCode 等 CLI 工具,无需 API 密钥。

+### [OmniRoute](https://github.com/diegosouzapw/OmniRoute)

+

+代码不止,创新不停。智能路由至免费及低成本 AI 模型,并支持自动故障转移。

+

+OmniRoute 是一个面向多供应商大语言模型的 AI 网关:它提供兼容 OpenAI 的端点,具备智能路由、负载均衡、重试及回退机制。通过添加策略、速率限制、缓存和可观测性,确保推理过程既可靠又具备成本意识。

+

> [!NOTE]

> 如果你开发了 CLIProxyAPI 的移植或衍生项目,请提交 PR 将其添加到此列表中。

diff --git a/assets/aicodemirror.png b/assets/aicodemirror.png

new file mode 100644

index 00000000..b4585bcf

Binary files /dev/null and b/assets/aicodemirror.png differ

diff --git a/assets/cubence.png b/assets/cubence.png

deleted file mode 100644

index c61f12f6..00000000

Binary files a/assets/cubence.png and /dev/null differ

diff --git a/cmd/fetch_antigravity_models/main.go b/cmd/fetch_antigravity_models/main.go

new file mode 100644

index 00000000..0cf45d3b

--- /dev/null

+++ b/cmd/fetch_antigravity_models/main.go

@@ -0,0 +1,275 @@

+// Command fetch_antigravity_models connects to the Antigravity API using the

+// stored auth credentials and saves the dynamically fetched model list to a

+// JSON file for inspection or offline use.

+//

+// Usage:

+//

+// go run ./cmd/fetch_antigravity_models [flags]

+//

+// Flags:

+//

+// --auths-dir Directory containing auth JSON files (default: "auths")

+// --output Output JSON file path (default: "antigravity_models.json")

+// --pretty Pretty-print the output JSON (default: true)

+package main

+

+import (

+ "context"

+ "encoding/json"

+ "flag"

+ "fmt"

+ "io"

+ "net/http"

+ "os"

+ "path/filepath"

+ "strings"

+ "time"

+

+ "github.com/router-for-me/CLIProxyAPI/v6/internal/logging"

+ sdkauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"

+ coreauth "github.com/router-for-me/CLIProxyAPI/v6/sdk/cliproxy/auth"

+ "github.com/router-for-me/CLIProxyAPI/v6/sdk/proxyutil"

+ log "github.com/sirupsen/logrus"

+ "github.com/tidwall/gjson"

+)

+

+const (

+ antigravityBaseURLDaily = "https://daily-cloudcode-pa.googleapis.com"

+ antigravitySandboxBaseURLDaily = "https://daily-cloudcode-pa.sandbox.googleapis.com"

+ antigravityBaseURLProd = "https://cloudcode-pa.googleapis.com"

+ antigravityModelsPath = "/v1internal:fetchAvailableModels"

+)

+

+func init() {

+ logging.SetupBaseLogger()

+ log.SetLevel(log.InfoLevel)

+}

+

+// modelOutput wraps the fetched model list for JSON output.

+type modelOutput struct {

+ Models []modelEntry `json:"models"`

+}

+

+// modelEntry contains only the fields we want to keep for static model definitions.

+type modelEntry struct {

+ ID string `json:"id"`

+ Object string `json:"object"`

+ OwnedBy string `json:"owned_by"`

+ Type string `json:"type"`

+ DisplayName string `json:"display_name"`

+ Name string `json:"name"`

+ Description string `json:"description"`

+ ContextLength int `json:"context_length,omitempty"`

+ MaxCompletionTokens int `json:"max_completion_tokens,omitempty"`

+}

+

+func main() {

+ var authsDir string

+ var outputPath string

+ var pretty bool

+

+ flag.StringVar(&authsDir, "auths-dir", "auths", "Directory containing auth JSON files")

+ flag.StringVar(&outputPath, "output", "antigravity_models.json", "Output JSON file path")

+ flag.BoolVar(&pretty, "pretty", true, "Pretty-print the output JSON")

+ flag.Parse()

+

+ // Resolve relative paths against the working directory.

+ wd, err := os.Getwd()

+ if err != nil {

+ fmt.Fprintf(os.Stderr, "error: cannot get working directory: %v\n", err)

+ os.Exit(1)

+ }

+ if !filepath.IsAbs(authsDir) {

+ authsDir = filepath.Join(wd, authsDir)

+ }

+ if !filepath.IsAbs(outputPath) {

+ outputPath = filepath.Join(wd, outputPath)

+ }

+

+ fmt.Printf("Scanning auth files in: %s\n", authsDir)

+

+ // Load all auth records from the directory.

+ fileStore := sdkauth.NewFileTokenStore()

+ fileStore.SetBaseDir(authsDir)

+

+ ctx := context.Background()

+ auths, err := fileStore.List(ctx)

+ if err != nil {

+ fmt.Fprintf(os.Stderr, "error: failed to list auth files: %v\n", err)

+ os.Exit(1)

+ }

+ if len(auths) == 0 {

+ fmt.Fprintf(os.Stderr, "error: no auth files found in %s\n", authsDir)

+ os.Exit(1)

+ }

+

+ // Find the first enabled antigravity auth.

+ var chosen *coreauth.Auth

+ for _, a := range auths {

+ if a == nil || a.Disabled {

+ continue

+ }

+ if strings.EqualFold(strings.TrimSpace(a.Provider), "antigravity") {

+ chosen = a

+ break

+ }

+ }

+ if chosen == nil {

+ fmt.Fprintf(os.Stderr, "error: no enabled antigravity auth found in %s\n", authsDir)

+ os.Exit(1)

+ }

+

+ fmt.Printf("Using auth: id=%s label=%s\n", chosen.ID, chosen.Label)

+

+ // Fetch models from the upstream Antigravity API.

+ fmt.Println("Fetching Antigravity model list from upstream...")

+

+ fetchCtx, cancel := context.WithTimeout(ctx, 30*time.Second)

+ defer cancel()

+

+ models := fetchModels(fetchCtx, chosen)

+ if len(models) == 0 {

+ fmt.Fprintln(os.Stderr, "warning: no models returned (API may be unavailable or token expired)")

+ } else {

+ fmt.Printf("Fetched %d models.\n", len(models))

+ }

+

+ // Build the output payload.

+ out := modelOutput{

+ Models: models,

+ }

+

+ // Marshal to JSON.

+ var raw []byte

+ if pretty {

+ raw, err = json.MarshalIndent(out, "", " ")

+ } else {

+ raw, err = json.Marshal(out)

+ }

+ if err != nil {

+ fmt.Fprintf(os.Stderr, "error: failed to marshal JSON: %v\n", err)

+ os.Exit(1)

+ }

+

+ if err = os.WriteFile(outputPath, raw, 0o644); err != nil {

+ fmt.Fprintf(os.Stderr, "error: failed to write output file %s: %v\n", outputPath, err)

+ os.Exit(1)

+ }

+

+ fmt.Printf("Model list saved to: %s\n", outputPath)

+}

+

+func fetchModels(ctx context.Context, auth *coreauth.Auth) []modelEntry {

+ accessToken := metaStringValue(auth.Metadata, "access_token")

+ if accessToken == "" {

+ fmt.Fprintln(os.Stderr, "error: no access token found in auth")

+ return nil

+ }

+

+ baseURLs := []string{antigravityBaseURLProd, antigravityBaseURLDaily, antigravitySandboxBaseURLDaily}

+

+ for _, baseURL := range baseURLs {

+ modelsURL := baseURL + antigravityModelsPath

+

+ var payload []byte

+ if auth != nil && auth.Metadata != nil {

+ if pid, ok := auth.Metadata["project_id"].(string); ok && strings.TrimSpace(pid) != "" {

+ payload, _ = json.Marshal(map[string]string{"project": strings.TrimSpace(pid)})

+ }

+ }

+ if len(payload) == 0 {

+ payload = []byte(`{}`)

+ }

+

+ httpReq, errReq := http.NewRequestWithContext(ctx, http.MethodPost, modelsURL, strings.NewReader(string(payload)))

+ if errReq != nil {

+ continue

+ }

+ httpReq.Close = true

+ httpReq.Header.Set("Content-Type", "application/json")

+ httpReq.Header.Set("Authorization", "Bearer "+accessToken)

+ httpReq.Header.Set("User-Agent", "antigravity/1.19.6 darwin/arm64")

+

+ httpClient := &http.Client{Timeout: 30 * time.Second}

+ if transport, _, errProxy := proxyutil.BuildHTTPTransport(auth.ProxyURL); errProxy == nil && transport != nil {

+ httpClient.Transport = transport

+ }

+ httpResp, errDo := httpClient.Do(httpReq)

+ if errDo != nil {

+ continue

+ }

+

+ bodyBytes, errRead := io.ReadAll(httpResp.Body)

+ httpResp.Body.Close()

+ if errRead != nil {

+ continue

+ }

+

+ if httpResp.StatusCode < http.StatusOK || httpResp.StatusCode >= http.StatusMultipleChoices {

+ continue

+ }

+

+ result := gjson.GetBytes(bodyBytes, "models")

+ if !result.Exists() {

+ continue

+ }

+

+ var models []modelEntry

+

+ for originalName, modelData := range result.Map() {

+ modelID := strings.TrimSpace(originalName)

+ if modelID == "" {

+ continue

+ }

+ // Skip internal/experimental models

+ switch modelID {

+ case "chat_20706", "chat_23310", "tab_flash_lite_preview", "tab_jump_flash_lite_preview", "gemini-2.5-flash-thinking", "gemini-2.5-pro":

+ continue

+ }

+

+ displayName := modelData.Get("displayName").String()

+ if displayName == "" {

+ displayName = modelID

+ }

+

+ entry := modelEntry{

+ ID: modelID,

+ Object: "model",

+ OwnedBy: "antigravity",

+ Type: "antigravity",

+ DisplayName: displayName,

+ Name: modelID,

+ Description: displayName,

+ }

+

+ if maxTok := modelData.Get("maxTokens").Int(); maxTok > 0 {

+ entry.ContextLength = int(maxTok)

+ }

+ if maxOut := modelData.Get("maxOutputTokens").Int(); maxOut > 0 {

+ entry.MaxCompletionTokens = int(maxOut)

+ }

+

+ models = append(models, entry)

+ }

+

+ return models

+ }

+

+ return nil

+}

+

+func metaStringValue(m map[string]interface{}, key string) string {

+ if m == nil {

+ return ""

+ }

+ v, ok := m[key]

+ if !ok {

+ return ""

+ }

+ switch val := v.(type) {

+ case string:

+ return val

+ default:

+ return ""

+ }

+}

diff --git a/cmd/server/main.go b/cmd/server/main.go

index 740a7511..1defccf0 100644

--- a/cmd/server/main.go

+++ b/cmd/server/main.go

@@ -8,6 +8,7 @@ import (

"errors"

"flag"

"fmt"

+ "io"

"io/fs"

"net/url"

"os"

@@ -23,8 +24,10 @@ import (

"github.com/router-for-me/CLIProxyAPI/v6/internal/logging"

"github.com/router-for-me/CLIProxyAPI/v6/internal/managementasset"

"github.com/router-for-me/CLIProxyAPI/v6/internal/misc"

+ "github.com/router-for-me/CLIProxyAPI/v6/internal/registry"

"github.com/router-for-me/CLIProxyAPI/v6/internal/store"

_ "github.com/router-for-me/CLIProxyAPI/v6/internal/translator"

+ "github.com/router-for-me/CLIProxyAPI/v6/internal/tui"

"github.com/router-for-me/CLIProxyAPI/v6/internal/usage"

"github.com/router-for-me/CLIProxyAPI/v6/internal/util"

sdkAuth "github.com/router-for-me/CLIProxyAPI/v6/sdk/auth"

@@ -56,6 +59,7 @@ func main() {

// Command-line flags to control the application's behavior.

var login bool

var codexLogin bool

+ var codexDeviceLogin bool

var claudeLogin bool

var qwenLogin bool

var iflowLogin bool

@@ -63,15 +67,19 @@ func main() {

var noBrowser bool

var oauthCallbackPort int

var antigravityLogin bool

+ var kimiLogin bool

var projectID string

var vertexImport string

var vertexImportPrefix string

var configPath string

var password string

+ var tuiMode bool

+ var standalone bool

// Define command-line flags for different operation modes.

flag.BoolVar(&login, "login", false, "Login Google Account")

flag.BoolVar(&codexLogin, "codex-login", false, "Login to Codex using OAuth")

+ flag.BoolVar(&codexDeviceLogin, "codex-device-login", false, "Login to Codex using device code flow")

flag.BoolVar(&claudeLogin, "claude-login", false, "Login to Claude using OAuth")

flag.BoolVar(&qwenLogin, "qwen-login", false, "Login to Qwen using OAuth")

flag.BoolVar(&iflowLogin, "iflow-login", false, "Login to iFlow using OAuth")

@@ -79,11 +87,14 @@ func main() {

flag.BoolVar(&noBrowser, "no-browser", false, "Don't open browser automatically for OAuth")

flag.IntVar(&oauthCallbackPort, "oauth-callback-port", 0, "Override OAuth callback port (defaults to provider-specific port)")

flag.BoolVar(&antigravityLogin, "antigravity-login", false, "Login to Antigravity using OAuth")

+ flag.BoolVar(&kimiLogin, "kimi-login", false, "Login to Kimi using OAuth")

flag.StringVar(&projectID, "project_id", "", "Project ID (Gemini only, not required)")

flag.StringVar(&configPath, "config", DefaultConfigPath, "Configure File Path")

flag.StringVar(&vertexImport, "vertex-import", "", "Import Vertex service account key JSON file")

flag.StringVar(&vertexImportPrefix, "vertex-import-prefix", "", "Prefix for Vertex model namespacing (use with -vertex-import)")

flag.StringVar(&password, "password", "", "")

+ flag.BoolVar(&tuiMode, "tui", false, "Start with terminal management UI")

+ flag.BoolVar(&standalone, "standalone", false, "In TUI mode, start an embedded local server")

flag.CommandLine.Usage = func() {

out := flag.CommandLine.Output()

@@ -445,7 +456,7 @@ func main() {

}

// Register built-in access providers before constructing services.

- configaccess.Register()

+ configaccess.Register(&cfg.SDKConfig)

// Handle different command modes based on the provided flags.

@@ -461,6 +472,9 @@ func main() {

} else if codexLogin {

// Handle Codex login

cmd.DoCodexLogin(cfg, options)

+ } else if codexDeviceLogin {

+ // Handle Codex device-code login

+ cmd.DoCodexDeviceLogin(cfg, options)

} else if claudeLogin {

// Handle Claude login

cmd.DoClaudeLogin(cfg, options)

@@ -470,6 +484,8 @@ func main() {

cmd.DoIFlowLogin(cfg, options)

} else if iflowCookie {

cmd.DoIFlowCookieAuth(cfg, options)

+ } else if kimiLogin {

+ cmd.DoKimiLogin(cfg, options)

} else {

// In cloud deploy mode without config file, just wait for shutdown signals

if isCloudDeploy && !configFileExists {

@@ -477,8 +493,85 @@ func main() {

cmd.WaitForCloudDeploy()

return

}

- // Start the main proxy service

- managementasset.StartAutoUpdater(context.Background(), configFilePath)

- cmd.StartService(cfg, configFilePath, password)

+ if tuiMode {

+ if standalone {

+ // Standalone mode: start an embedded local server and connect TUI client to it.

+ managementasset.StartAutoUpdater(context.Background(), configFilePath)

+ registry.StartModelsUpdater(context.Background())

+ hook := tui.NewLogHook(2000)

+ hook.SetFormatter(&logging.LogFormatter{})

+ log.AddHook(hook)

+

+ origStdout := os.Stdout

+ origStderr := os.Stderr

+ origLogOutput := log.StandardLogger().Out

+ log.SetOutput(io.Discard)

+

+ devNull, errOpenDevNull := os.OpenFile(os.DevNull, os.O_WRONLY, 0)

+ if errOpenDevNull == nil {

+ os.Stdout = devNull

+ os.Stderr = devNull

+ }

+

+ restoreIO := func() {

+ os.Stdout = origStdout

+ os.Stderr = origStderr

+ log.SetOutput(origLogOutput)

+ if devNull != nil {

+ _ = devNull.Close()

+ }

+ }

+

+ localMgmtPassword := fmt.Sprintf("tui-%d-%d", os.Getpid(), time.Now().UnixNano())

+ if password == "" {

+ password = localMgmtPassword

+ }

+

+ cancel, done := cmd.StartServiceBackground(cfg, configFilePath, password)

+

+ client := tui.NewClient(cfg.Port, password)

+ ready := false

+ backoff := 100 * time.Millisecond

+ for i := 0; i < 30; i++ {

+ if _, errGetConfig := client.GetConfig(); errGetConfig == nil {

+ ready = true

+ break

+ }

+ time.Sleep(backoff)

+ if backoff < time.Second {

+ backoff = time.Duration(float64(backoff) * 1.5)

+ }

+ }

+

+ if !ready {

+ restoreIO()

+ cancel()

+ <-done

+ fmt.Fprintf(os.Stderr, "TUI error: embedded server is not ready\n")

+ return

+ }

+

+ if errRun := tui.Run(cfg.Port, password, hook, origStdout); errRun != nil {

+ restoreIO()

+ fmt.Fprintf(os.Stderr, "TUI error: %v\n", errRun)

+ } else {

+ restoreIO()

+ }

+

+ cancel()

+ <-done

+ } else {

+ // Default TUI mode: pure management client.

+ // The proxy server must already be running.

+ if errRun := tui.Run(cfg.Port, password, nil, os.Stdout); errRun != nil {

+ fmt.Fprintf(os.Stderr, "TUI error: %v\n", errRun)

+ }

+ }

+ } else {

+ // Start the main proxy service

+ managementasset.StartAutoUpdater(context.Background(), configFilePath)

+ registry.StartModelsUpdater(context.Background())

+ cmd.StartService(cfg, configFilePath, password)

+ }

}

}

diff --git a/config.example.yaml b/config.example.yaml

index 83e92627..3718a07a 100644

--- a/config.example.yaml

+++ b/config.example.yaml

@@ -40,6 +40,11 @@ api-keys:

# Enable debug logging

debug: false

+# Enable pprof HTTP debug server (host:port). Keep it bound to localhost for safety.

+pprof:

+ enable: false

+ addr: "127.0.0.1:8316"

+

# When true, disable high-overhead HTTP middleware features to reduce per-request memory usage under high concurrency.

commercial-mode: false

@@ -50,18 +55,31 @@ logging-to-file: false

# files are deleted until within the limit. Set to 0 to disable.

logs-max-total-size-mb: 0

+# Maximum number of error log files retained when request logging is disabled.

+# When exceeded, the oldest error log files are deleted. Default is 10. Set to 0 to disable cleanup.

+error-logs-max-files: 10

+

# When false, disable in-memory usage statistics aggregation

usage-statistics-enabled: false

# Proxy URL. Supports socks5/http/https protocols. Example: socks5://user:pass@192.168.1.1:1080/

+# Per-entry proxy-url also supports "direct" or "none" to bypass both the global proxy-url and environment proxies explicitly.

proxy-url: ""

# When true, unprefixed model requests only use credentials without a prefix (except when prefix == model name).

force-model-prefix: false

+# When true, forward filtered upstream response headers to downstream clients.

+# Default is false (disabled).

+passthrough-headers: false

+

# Number of times to retry a request. Retries will occur if the HTTP response code is 403, 408, 500, 502, 503, or 504.

request-retry: 3

+# Maximum number of different credentials to try for one failed request.

+# Set to 0 to keep legacy behavior (try all available credentials).

+max-retry-credentials: 0

+

# Maximum wait time in seconds for a cooled-down credential before triggering a retry.

max-retry-interval: 30

@@ -85,10 +103,6 @@ nonstream-keepalive-interval: 0

# keepalive-seconds: 15 # Default: 0 (disabled). <= 0 disables keep-alives.

# bootstrap-retries: 1 # Default: 0 (disabled). Retries before first byte is sent.

-# When true, enable official Codex instructions injection for Codex API requests.

-# When false (default), CodexInstructionsForModel returns immediately without modification.

-codex-instructions-enabled: false

-

# Gemini API keys

# gemini-api-key:

# - api-key: "AIzaSy...01"

@@ -97,6 +111,7 @@ codex-instructions-enabled: false

# headers:

# X-Custom-Header: "custom-value"

# proxy-url: "socks5://proxy.example.com:1080"

+# # proxy-url: "direct" # optional: explicit direct connect for this credential

# models:

# - name: "gemini-2.5-flash" # upstream model name

# alias: "gemini-flash" # client alias mapped to the upstream model

@@ -115,6 +130,7 @@ codex-instructions-enabled: false

# headers:

# X-Custom-Header: "custom-value"

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

+# # proxy-url: "direct" # optional: explicit direct connect for this credential

# models:

# - name: "gpt-5-codex" # upstream model name

# alias: "codex-latest" # client alias mapped to the upstream model

@@ -133,6 +149,7 @@ codex-instructions-enabled: false

# headers:

# X-Custom-Header: "custom-value"

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

+# # proxy-url: "direct" # optional: explicit direct connect for this credential

# models:

# - name: "claude-3-5-sonnet-20241022" # upstream model name

# alias: "claude-sonnet-latest" # client alias mapped to the upstream model

@@ -150,6 +167,23 @@ codex-instructions-enabled: false

# sensitive-words: # optional: words to obfuscate with zero-width characters

# - "API"

# - "proxy"

+# cache-user-id: true # optional: default is false; set true to reuse cached user_id per API key instead of generating a random one each request

+

+# Default headers for Claude API requests. Update when Claude Code releases new versions.

+# These are used as fallbacks when the client does not send its own headers.

+# claude-header-defaults:

+# user-agent: "claude-cli/2.1.44 (external, sdk-cli)"

+# package-version: "0.74.0"

+# runtime-version: "v24.3.0"

+# timeout: "600"

+

+# Default headers for Codex OAuth model requests.

+# These are used only for file-backed/OAuth Codex requests when the client

+# does not send the header. `user-agent` applies to HTTP and websocket requests;

+# `beta-features` only applies to websocket requests. They do not apply to codex-api-key entries.

+# codex-header-defaults:

+# user-agent: "codex_cli_rs/0.114.0 (Mac OS 14.2.0; x86_64) vscode/1.111.0"

+# beta-features: "multi_agent"

# OpenAI compatibility providers

# openai-compatibility:

@@ -161,17 +195,30 @@ codex-instructions-enabled: false

# api-key-entries:

# - api-key: "sk-or-v1-...b780"

# proxy-url: "socks5://proxy.example.com:1080" # optional: per-key proxy override

+# # proxy-url: "direct" # optional: explicit direct connect for this credential

# - api-key: "sk-or-v1-...b781" # without proxy-url

# models: # The models supported by the provider.

# - name: "moonshotai/kimi-k2:free" # The actual model name.

# alias: "kimi-k2" # The alias used in the API.

+# # You may repeat the same alias to build an internal model pool.

+# # The client still sees only one alias in the model list.

+# # Requests to that alias will round-robin across the upstream names below,

+# # and if the chosen upstream fails before producing output, the request will

+# # continue with the next upstream model in the same alias pool.

+# - name: "qwen3.5-plus"

+# alias: "claude-opus-4.66"

+# - name: "glm-5"

+# alias: "claude-opus-4.66"

+# - name: "kimi-k2.5"

+# alias: "claude-opus-4.66"

-# Vertex API keys (Vertex-compatible endpoints, use API key + base URL)

+# Vertex API keys (Vertex-compatible endpoints, base-url is optional)

# vertex-api-key:

# - api-key: "vk-123..." # x-goog-api-key header

# prefix: "test" # optional: require calls like "test/vertex-pro" to target this credential

-# base-url: "https://example.com/api" # e.g. https://zenmux.ai/api

+# base-url: "https://example.com/api" # optional, e.g. https://zenmux.ai/api; falls back to Google Vertex when omitted

# proxy-url: "socks5://proxy.example.com:1080" # optional per-key proxy override

+# # proxy-url: "direct" # optional: explicit direct connect for this credential

# headers:

# X-Custom-Header: "custom-value"

# models: # optional: map aliases to upstream model names

@@ -179,6 +226,9 @@ codex-instructions-enabled: false

# alias: "vertex-flash" # client-visible alias

# - name: "gemini-2.5-pro"

# alias: "vertex-pro"

+# excluded-models: # optional: models to exclude from listing

+# - "imagen-3.0-generate-002"

+# - "imagen-*"

# Amp Integration

# ampcode:

@@ -216,25 +266,10 @@ codex-instructions-enabled: false

# Global OAuth model name aliases (per channel)

# These aliases rename model IDs for both model listing and request routing.

-# Supported channels: gemini-cli, vertex, aistudio, antigravity, claude, codex, qwen, iflow.

+# Supported channels: gemini-cli, vertex, aistudio, antigravity, claude, codex, qwen, iflow, kimi.

# NOTE: Aliases do not apply to gemini-api-key, codex-api-key, claude-api-key, openai-compatibility, vertex-api-key, or ampcode.

# You can repeat the same name with different aliases to expose multiple client model names.

-oauth-model-alias:

- antigravity:

- - name: "rev19-uic3-1p"

- alias: "gemini-2.5-computer-use-preview-10-2025"

- - name: "gemini-3-pro-image"

- alias: "gemini-3-pro-image-preview"

- - name: "gemini-3-pro-high"

- alias: "gemini-3-pro-preview"

- - name: "gemini-3-flash"

- alias: "gemini-3-flash-preview"

- - name: "claude-sonnet-4-5"

- alias: "gemini-claude-sonnet-4-5"

- - name: "claude-sonnet-4-5-thinking"

- alias: "gemini-claude-sonnet-4-5-thinking"

- - name: "claude-opus-4-5-thinking"

- alias: "gemini-claude-opus-4-5-thinking"

+# oauth-model-alias:

# gemini-cli:

# - name: "gemini-2.5-pro" # original model name under this channel

# alias: "g2.5p" # client-visible alias

@@ -245,6 +280,9 @@ oauth-model-alias:

# aistudio:

# - name: "gemini-2.5-pro"

# alias: "g2.5p"

+# antigravity:

+# - name: "gemini-3-pro-high"

+# alias: "gemini-3-pro-preview"

# claude:

# - name: "claude-sonnet-4-5-20250929"

# alias: "cs4.5"

@@ -257,6 +295,9 @@ oauth-model-alias:

# iflow:

# - name: "glm-4.7"

# alias: "glm-god"

+# kimi:

+# - name: "kimi-k2.5"

+# alias: "k2.5"

# OAuth provider excluded models

# oauth-excluded-models:

@@ -279,30 +320,39 @@ oauth-model-alias:

# - "vision-model"

# iflow:

# - "tstars2.0"

+# kimi:

+# - "kimi-k2-thinking"

# Optional payload configuration

# payload:

# default: # Default rules only set parameters when they are missing in the payload.

# - models:

# - name: "gemini-2.5-pro" # Supports wildcards (e.g., "gemini-*")

-# protocol: "gemini" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex

+# protocol: "gemini" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex, antigravity

# params: # JSON path (gjson/sjson syntax) -> value

# "generationConfig.thinkingConfig.thinkingBudget": 32768

# default-raw: # Default raw rules set parameters using raw JSON when missing (must be valid JSON).

# - models:

# - name: "gemini-2.5-pro" # Supports wildcards (e.g., "gemini-*")

-# protocol: "gemini" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex

+# protocol: "gemini" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex, antigravity

# params: # JSON path (gjson/sjson syntax) -> raw JSON value (strings are used as-is, must be valid JSON)

# "generationConfig.responseJsonSchema": "{\"type\":\"object\",\"properties\":{\"answer\":{\"type\":\"string\"}}}"

# override: # Override rules always set parameters, overwriting any existing values.

# - models:

# - name: "gpt-*" # Supports wildcards (e.g., "gpt-*")

-# protocol: "codex" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex

+# protocol: "codex" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex, antigravity

# params: # JSON path (gjson/sjson syntax) -> value

# "reasoning.effort": "high"

# override-raw: # Override raw rules always set parameters using raw JSON (must be valid JSON).

# - models:

# - name: "gpt-*" # Supports wildcards (e.g., "gpt-*")

-# protocol: "codex" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex

+# protocol: "codex" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex, antigravity

# params: # JSON path (gjson/sjson syntax) -> raw JSON value (strings are used as-is, must be valid JSON)

# "response_format": "{\"type\":\"json_schema\",\"json_schema\":{\"name\":\"answer\",\"schema\":{\"type\":\"object\"}}}"

+# filter: # Filter rules remove specified parameters from the payload.

+# - models:

+# - name: "gemini-2.5-pro" # Supports wildcards (e.g., "gemini-*")

+# protocol: "gemini" # restricts the rule to a specific protocol, options: openai, gemini, claude, codex, antigravity

+# params: # JSON paths (gjson/sjson syntax) to remove from the payload

+# - "generationConfig.thinkingConfig.thinkingBudget"

+# - "generationConfig.responseJsonSchema"
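Reviewer sketch (not part of the patch): the new `filter` rules list JSON paths to remove from the request payload. The example below shows the effect on a small payload using only the standard library. It is an approximation: `deletePath` and `applyFilter` are hypothetical helpers, and they handle plain dotted object keys only, whereas the config comment refers to gjson/sjson path syntax, which supports more.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// deletePath removes the value at a dotted path from a decoded JSON object.
// Only plain object keys are supported here; arrays, wildcards, and escapes
// are out of scope for this sketch.
func deletePath(doc map[string]any, path string) {
	keys := strings.Split(path, ".")
	cur := doc
	for _, k := range keys[:len(keys)-1] {
		next, ok := cur[k].(map[string]any)
		if !ok {
			return // path does not exist; nothing to remove
		}
		cur = next
	}
	delete(cur, keys[len(keys)-1])
}

// applyFilter decodes the payload, removes every listed path, and re-encodes it.
func applyFilter(payload []byte, paths []string) ([]byte, error) {
	var doc map[string]any
	if err := json.Unmarshal(payload, &doc); err != nil {
		return nil, err
	}
	for _, p := range paths {
		deletePath(doc, p)
	}
	return json.Marshal(doc)
}

func main() {
	in := []byte(`{"generationConfig":{"thinkingConfig":{"thinkingBudget":32768},"temperature":0.2}}`)
	out, err := applyFilter(in, []string{
		"generationConfig.thinkingConfig.thinkingBudget",
		"generationConfig.responseJsonSchema", // absent in the payload: ignored
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```

Paths that do not exist in the payload are ignored, and the parent object of a removed key is kept even when it ends up empty. Decoding into `map[string]any` re-encodes numbers and reorders keys, so a real implementation would more likely edit the raw bytes in place.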

diff --git a/docs/sdk-access.md b/docs/sdk-access.md

index e4e69629..343c851b 100644

--- a/docs/sdk-access.md

+++ b/docs/sdk-access.md

@@ -7,80 +7,71 @@ The `github.com/router-for-me/CLIProxyAPI/v6/sdk/access` package centralizes inb

```go

import (

sdkaccess "github.com/router-for-me/CLIProxyAPI/v6/sdk/access"

- "github.com/router-for-me/CLIProxyAPI/v6/internal/config"

)

```

Add the module with `go get github.com/router-for-me/CLIProxyAPI/v6/sdk/access`.

+## Provider Registry

+

+Providers are registered globally and then attached to a `Manager` as a snapshot:

+

+- `RegisterProvider(type, provider)` installs a pre-initialized provider instance.

+- Each `type` keeps the position recorded the first time it is registered.

+- `RegisteredProviders()` returns the providers in that order.

+

## Manager Lifecycle

```go

manager := sdkaccess.NewManager()

-providers, err := sdkaccess.BuildProviders(cfg)

-if err != nil {

- return err

-}

-manager.SetProviders(providers)

+manager.SetProviders(sdkaccess.RegisteredProviders())

```

* `NewManager` constructs an empty manager.

* `SetProviders` replaces the provider slice using a defensive copy.

* `Providers` retrieves a snapshot that can be iterated safely from other goroutines.

-* `BuildProviders` translates `config.Config` access declarations into runnable providers. When the config omits explicit providers but defines inline API keys, the helper auto-installs the built-in `config-api-key` provider.

+

+If the manager itself is `nil` or no providers are configured, `Authenticate` returns `nil, nil`, allowing callers to treat access control as disabled.

## Authenticating Requests

```go

-result, err := manager.Authenticate(ctx, req)

+result, authErr := manager.Authenticate(ctx, req)

switch {

-case err == nil:

+case authErr == nil:

// Authentication succeeded; result describes the provider and principal.

-case errors.Is(err, sdkaccess.ErrNoCredentials):

+case sdkaccess.IsAuthErrorCode(authErr, sdkaccess.AuthErrorCodeNoCredentials):

// No recognizable credentials were supplied.

-case errors.Is(err, sdkaccess.ErrInvalidCredential):

+case sdkaccess.IsAuthErrorCode(authErr, sdkaccess.AuthErrorCodeInvalidCredential):

// Supplied credentials were present but rejected.

default:

- // Transport-level failure was returned by a provider.

+ // Internal/transport failure was returned by a provider.

}

```

-`Manager.Authenticate` walks the configured providers in order. It returns on the first success, skips providers that surface `ErrNotHandled`, and tracks whether any provider reported `ErrNoCredentials` or `ErrInvalidCredential` for downstream error reporting.

-

-If the manager itself is `nil` or no providers are registered, the call returns `nil, nil`, allowing callers to treat access control as disabled without branching on errors.

+`Manager.Authenticate` walks the configured providers in order. It returns on the first success, skips providers that return `AuthErrorCodeNotHandled`, and aggregates `AuthErrorCodeNoCredentials` / `AuthErrorCodeInvalidCredential` into the final error reported once every provider has been tried.

Each `Result` includes the provider identifier, the resolved principal, and optional metadata (for example, which header carried the credential).

-## Configuration Layout

+## Built-in `config-api-key` Provider

-The manager expects access providers under the `auth.providers` key inside `config.yaml`:

+The proxy includes one built-in access provider:

+

+- `config-api-key`: Validates API keys declared under top-level `api-keys`.

+ - Credential sources: `Authorization: Bearer`, `X-Goog-Api-Key`, `X-Api-Key`, `?key=`, `?auth_token=`

+ - Metadata: `Result.Metadata["source"]` is set to the matched source label.

+

+In the CLI server and `sdk/cliproxy`, this provider is registered automatically based on the loaded configuration.

```yaml

-auth:

- providers:

- - name: inline-api

- type: config-api-key

- api-keys:

- - sk-test-123

- - sk-prod-456

+api-keys:

+ - sk-test-123

+ - sk-prod-456

```

-Fields map directly to `config.AccessProvider`: `name` labels the provider, `type` selects the registered factory, `sdk` can name an external module, `api-keys` seeds inline credentials, and `config` passes provider-specific options.

+## Loading Providers from External Go Modules

-### Loading providers from external SDK modules

-

-To consume a provider shipped in another Go module, point the `sdk` field at the module path and import it for its registration side effect:

-

-```yaml

-auth:

- providers:

- - name: partner-auth

- type: partner-token

- sdk: github.com/acme/xplatform/sdk/access/providers/partner

- config:

- region: us-west-2

- audience: cli-proxy

-```

+To consume a provider shipped in another Go module, import it for its registration side effect:

```go

import (

@@ -89,19 +80,11 @@ import (

)

```

-The blank identifier import ensures `init` runs so `sdkaccess.RegisterProvider` executes before `BuildProviders` is called.

-

-## Built-in Providers

-

-The SDK ships with one provider out of the box:

-

-- `config-api-key`: Validates API keys declared inline or under top-level `api-keys`. It accepts the key from `Authorization: Bearer`, `X-Goog-Api-Key`, `X-Api-Key`, or the `?key=` query string and reports `ErrInvalidCredential` when no match is found.

-

-Additional providers can be delivered by third-party packages. When a provider package is imported, it registers itself with `sdkaccess.RegisterProvider`.

+The blank identifier import ensures `init` runs so `sdkaccess.RegisterProvider` executes before you call `RegisteredProviders()` (or before `cliproxy.NewBuilder().Build()`).

### Metadata and auditing

-`Result.Metadata` carries provider-specific context. The built-in `config-api-key` provider, for example, stores the credential source (`authorization`, `x-goog-api-key`, `x-api-key`, or `query-key`). Populate this map in custom providers to enrich logs and downstream auditing.

+`Result.Metadata` carries provider-specific context. The built-in `config-api-key` provider, for example, stores the credential source (`authorization`, `x-goog-api-key`, `x-api-key`, `query-key`, `query-auth-token`). Populate this map in custom providers to enrich logs and downstream auditing.

## Writing Custom Providers

@@ -110,13 +93,13 @@ type customProvider struct{}

func (p *customProvider) Identifier() string { return "my-provider" }

-func (p *customProvider) Authenticate(ctx context.Context, r *http.Request) (*sdkaccess.Result, error) {

+func (p *customProvider) Authenticate(ctx context.Context, r *http.Request) (*sdkaccess.Result, *sdkaccess.AuthError) {

token := r.Header.Get("X-Custom")

if token == "" {

- return nil, sdkaccess.ErrNoCredentials

+ return nil, sdkaccess.NewNotHandledError()

}

if token != "expected" {

- return nil, sdkaccess.ErrInvalidCredential

+ return nil, sdkaccess.NewInvalidCredentialError()

}

return &sdkaccess.Result{

Provider: p.Identifier(),

@@ -126,51 +109,46 @@ func (p *customProvider) Authenticate(ctx context.Context, r *http.Request) (*sd

}

func init() {

- sdkaccess.RegisterProvider("custom", func(cfg *config.AccessProvider, root *config.Config) (sdkaccess.Provider, error) {

- return &customProvider{}, nil

- })

+ sdkaccess.RegisterProvider("custom", &customProvider{})

}

```

-A provider must implement `Identifier()` and `Authenticate()`. To expose it to configuration, call `RegisterProvider` inside `init`. Provider factories receive the specific `AccessProvider` block plus the full root configuration for contextual needs.

+A provider must implement `Identifier()` and `Authenticate()`. To make it available to the access manager, call `RegisterProvider` inside `init` with an initialized provider instance.

## Error Semantics

-- `ErrNoCredentials`: no credentials were present or recognized by any provider.

-- `ErrInvalidCredential`: at least one provider processed the credentials but rejected them.

-- `ErrNotHandled`: instructs the manager to fall through to the next provider without affecting aggregate error reporting.

+- `NewNoCredentialsError()` (`AuthErrorCodeNoCredentials`): no credentials were present or recognized. (HTTP 401)

+- `NewInvalidCredentialError()` (`AuthErrorCodeInvalidCredential`): credentials were present but rejected. (HTTP 401)

+- `NewNotHandledError()` (`AuthErrorCodeNotHandled`): fall through to the next provider.

+- `NewInternalAuthError(message, cause)` (`AuthErrorCodeInternal`): transport/system failure. (HTTP 500)

-Return custom errors to surface transport failures; they propagate immediately to the caller instead of being masked.

+The manager aggregates `not_handled`, `no_credentials`, and `invalid_credential` results across providers; any other error propagates immediately to the caller.

## Integration with cliproxy Service

-`sdk/cliproxy` wires `@sdk/access` automatically when you build a CLI service via `cliproxy.NewBuilder`. Supplying a preconfigured manager allows you to extend or override the default providers:

+`sdk/cliproxy` wires `@sdk/access` automatically when you build a CLI service via `cliproxy.NewBuilder`. Supplying a manager lets you reuse the same instance in your host process:

```go

coreCfg, _ := config.LoadConfig("config.yaml")

-providers, _ := sdkaccess.BuildProviders(coreCfg)

-manager := sdkaccess.NewManager()

-manager.SetProviders(providers)

+accessManager := sdkaccess.NewManager()

svc, _ := cliproxy.NewBuilder().

WithConfig(coreCfg).

- WithAccessManager(manager).

+ WithConfigPath("config.yaml").

+ WithRequestAccessManager(accessManager).

Build()

```

-The service reuses the manager for every inbound request, ensuring consistent authentication across embedded deployments and the canonical CLI binary.

+Register any custom providers (typically via blank imports) before calling `Build()` so they are present in the global registry snapshot.

-### Hot reloading providers

+### Hot reloading

-When configuration changes, rebuild providers and swap them into the manager:

+When configuration changes, refresh any config-backed providers and then reset the manager's provider chain:

```go

-providers, err := sdkaccess.BuildProviders(newCfg)

-if err != nil {

- log.Errorf("reload auth providers failed: %v", err)

- return

-}

-accessManager.SetProviders(providers)

+// configaccess is github.com/router-for-me/CLIProxyAPI/v6/internal/access/config_access

+configaccess.Register(&newCfg.SDKConfig)

+accessManager.SetProviders(sdkaccess.RegisteredProviders())

```

-This mirrors the behaviour in `cliproxy.Service.refreshAccessProviders` and `api.Server.applyAccessConfig`, enabling runtime updates without restarting the process.

+This mirrors the behaviour in `internal/access.ApplyAccessProviders`, enabling runtime updates without restarting the process.
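Reviewer sketch (not part of the patch): the documented provider chain returns on the first success, skips `not_handled`, aggregates `no_credentials` / `invalid_credential`, and lets anything else propagate immediately. The self-contained model below illustrates that flow. Every type and name here is a hypothetical stand-in rather than the `sdkaccess` API. Two further assumptions are not stated in the docs: invalid credentials take precedence over missing ones, and a chain where every provider declines is reported as `no_credentials`.

```go
package main

import "fmt"

// code is a stand-in for the SDK's auth error codes.
type code string

const (
	notHandled        code = "not_handled"
	noCredentials     code = "no_credentials"
	invalidCredential code = "invalid_credential"
	internalFailure   code = "internal_error" // hypothetical label
)

type authError struct{ Code code }

// provider inspects a token and returns a principal or an error code.
type provider func(token string) (string, *authError)

// authenticate walks the providers in order, as described in the docs.
func authenticate(providers []provider, token string) (string, *authError) {
	if len(providers) == 0 {
		return "", nil // no providers: access control is treated as disabled
	}
	sawInvalid := false
	for _, p := range providers {
		principal, err := p(token)
		if err == nil {
			return principal, nil // first success wins
		}
		switch err.Code {
		case notHandled, noCredentials:
			// fall through to the next provider
		case invalidCredential:
			sawInvalid = true
		default:
			return "", err // internal failures propagate immediately
		}
	}
	if sawInvalid {
		return "", &authError{Code: invalidCredential} // assumed precedence
	}
	return "", &authError{Code: noCredentials}
}

func main() {
	declines := func(string) (string, *authError) { return "", &authError{Code: notHandled} }
	apiKey := func(tok string) (string, *authError) {
		switch tok {
		case "":
			return "", &authError{Code: noCredentials}
		case "sk-test-123":
			return "test-user", nil
		default:
			return "", &authError{Code: invalidCredential}
		}
	}
	chain := []provider{declines, apiKey}

	for _, tok := range []string{"sk-test-123", "wrong", ""} {
		principal, err := authenticate(chain, tok)
		if err != nil {
			fmt.Printf("%q -> error %s\n", tok, err.Code)
			continue
		}
		fmt.Printf("%q -> principal %s\n", tok, principal)
	}
}
```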

diff --git a/docs/sdk-access_CN.md b/docs/sdk-access_CN.md

index b3f26497..38aafe11 100644

--- a/docs/sdk-access_CN.md

+++ b/docs/sdk-access_CN.md

@@ -7,80 +7,71 @@

```go

import (

sdkaccess "github.com/router-for-me/CLIProxyAPI/v6/sdk/access"

- "github.com/router-for-me/CLIProxyAPI/v6/internal/config"

)

```

通过 `go get github.com/router-for-me/CLIProxyAPI/v6/sdk/access` 添加依赖。

+## Provider Registry

+

+访问提供者是全局注册,然后以快照形式挂到 `Manager` 上:

+

+- `RegisterProvider(type, provider)` 注册一个已经初始化好的 provider 实例。

+- 每个 `type` 第一次出现时会记录其注册顺序。

+- `RegisteredProviders()` 会按该顺序返回 provider 列表。

+

## 管理器生命周期

```go

manager := sdkaccess.NewManager()

-providers, err := sdkaccess.BuildProviders(cfg)

-if err != nil {

- return err

-}

-manager.SetProviders(providers)

+manager.SetProviders(sdkaccess.RegisteredProviders())

```

- `NewManager` 创建空管理器。

- `SetProviders` 替换提供者切片并做防御性拷贝。

- `Providers` 返回适合并发读取的快照。

-- `BuildProviders` 将 `config.Config` 中的访问配置转换成可运行的提供者。当配置没有显式声明但包含顶层 `api-keys` 时,会自动挂载内建的 `config-api-key` 提供者。

+

+如果管理器本身为 `nil` 或未配置任何 provider,调用会返回 `nil, nil`,可视为关闭访问控制。

## 认证请求

```go

-result, err := manager.Authenticate(ctx, req)

+result, authErr := manager.Authenticate(ctx, req)

switch {

-case err == nil:

+case authErr == nil:

// Authentication succeeded; result carries provider and principal.

-case errors.Is(err, sdkaccess.ErrNoCredentials):

+case sdkaccess.IsAuthErrorCode(authErr, sdkaccess.AuthErrorCodeNoCredentials):

// No recognizable credentials were supplied.

-case errors.Is(err, sdkaccess.ErrInvalidCredential):

+case sdkaccess.IsAuthErrorCode(authErr, sdkaccess.AuthErrorCodeInvalidCredential):

// Credentials were present but rejected.

default:

// Provider surfaced a transport-level failure.

}

```

-`Manager.Authenticate` 按配置顺序遍历提供者。遇到成功立即返回,`ErrNotHandled` 会继续尝试下一个;若发现 `ErrNoCredentials` 或 `ErrInvalidCredential`,会在遍历结束后汇总给调用方。

-

-若管理器本身为 `nil` 或尚未注册提供者,调用会返回 `nil, nil`,让调用方无需针对错误做额外分支即可关闭访问控制。

+`Manager.Authenticate` 会按顺序遍历 provider:遇到成功立即返回,`AuthErrorCodeNotHandled` 会继续尝试下一个;`AuthErrorCodeNoCredentials` / `AuthErrorCodeInvalidCredential` 会在遍历结束后汇总给调用方。

`Result` 提供认证提供者标识、解析出的主体以及可选元数据(例如凭证来源)。

-## 配置结构

+## 内建 `config-api-key` Provider

-在 `config.yaml` 的 `auth.providers` 下定义访问提供者:

+代理内置一个访问提供者:

+

+- `config-api-key`:校验 `config.yaml` 顶层的 `api-keys`。

+ - 凭证来源:`Authorization: Bearer`、`X-Goog-Api-Key`、`X-Api-Key`、`?key=`、`?auth_token=`

+ - 元数据:`Result.Metadata["source"]` 会写入匹配到的来源标识

+

+在 CLI 服务端与 `sdk/cliproxy` 中,该 provider 会根据加载到的配置自动注册。

```yaml

-auth:

- providers:

- - name: inline-api

- type: config-api-key

- api-keys:

- - sk-test-123

- - sk-prod-456

+api-keys:

+ - sk-test-123

+ - sk-prod-456

```

-条目映射到 `config.AccessProvider`:`name` 指定实例名,`type` 选择注册的工厂,`sdk` 可引用第三方模块,`api-keys` 提供内联凭证,`config` 用于传递特定选项。

+## 引入外部 Go 模块提供者

-### 引入外部 SDK 提供者

-

-若要消费其它 Go 模块输出的访问提供者,可在配置里填写 `sdk` 字段并在代码中引入该包,利用其 `init` 注册过程:

-

-```yaml

-auth:

- providers:

- - name: partner-auth

- type: partner-token

- sdk: github.com/acme/xplatform/sdk/access/providers/partner

- config:

- region: us-west-2

- audience: cli-proxy

-```

+若要消费其它 Go 模块输出的访问提供者,直接用空白标识符导入以触发其 `init` 注册即可:

```go

import (

@@ -89,19 +80,11 @@ import (

)

```

-通过空白标识符导入即可确保 `init` 调用,先于 `BuildProviders` 完成 `sdkaccess.RegisterProvider`。

-

-## 内建提供者

-

-当前 SDK 默认内置:

-

-- `config-api-key`:校验配置中的 API Key。它从 `Authorization: Bearer`、`X-Goog-Api-Key`、`X-Api-Key` 以及查询参数 `?key=` 提取凭证,不匹配时抛出 `ErrInvalidCredential`。

-

-导入第三方包即可通过 `sdkaccess.RegisterProvider` 注册更多类型。

+空白导入可确保 `init` 先执行,从而在你调用 `RegisteredProviders()`(或 `cliproxy.NewBuilder().Build()`)之前完成 `sdkaccess.RegisterProvider`。

### 元数据与审计

-`Result.Metadata` 用于携带提供者特定的上下文信息。内建的 `config-api-key` 会记录凭证来源(`authorization`、`x-goog-api-key`、`x-api-key` 或 `query-key`)。自定义提供者同样可以填充该 Map,以便丰富日志与审计场景。

+`Result.Metadata` 用于携带提供者特定的上下文信息。内建的 `config-api-key` 会记录凭证来源(`authorization`、`x-goog-api-key`、`x-api-key`、`query-key`、`query-auth-token`)。自定义提供者同样可以填充该 Map,以便丰富日志与审计场景。

## 编写自定义提供者

@@ -110,13 +93,13 @@ type customProvider struct{}

func (p *customProvider) Identifier() string { return "my-provider" }

-func (p *customProvider) Authenticate(ctx context.Context, r *http.Request) (*sdkaccess.Result, error) {

+func (p *customProvider) Authenticate(ctx context.Context, r *http.Request) (*sdkaccess.Result, *sdkaccess.AuthError) {

token := r.Header.Get("X-Custom")

if token == "" {

- return nil, sdkaccess.ErrNoCredentials

+ return nil, sdkaccess.NewNotHandledError()

}

if token != "expected" {

- return nil, sdkaccess.ErrInvalidCredential

+ return nil, sdkaccess.NewInvalidCredentialError()

}

return &sdkaccess.Result{

Provider: p.Identifier(),

@@ -126,51 +109,46 @@ func (p *customProvider) Authenticate(ctx context.Context, r *http.Request) (*sd

}

func init() {

- sdkaccess.RegisterProvider("custom", func(cfg *config.AccessProvider, root *config.Config) (sdkaccess.Provider, error) {

- return &customProvider{}, nil

- })

+ sdkaccess.RegisterProvider("custom", &customProvider{})

}

```

-自定义提供者需要实现 `Identifier()` 与 `Authenticate()`。在 `init` 中调用 `RegisterProvider` 暴露给配置层,工厂函数既能读取当前条目,也能访问完整根配置。

+自定义提供者需要实现 `Identifier()` 与 `Authenticate()`。在 `init` 中用已初始化实例调用 `RegisterProvider` 注册到全局 registry。

## 错误语义

-- `ErrNoCredentials`:任何提供者都未识别到凭证。

-- `ErrInvalidCredential`:至少一个提供者处理了凭证但判定无效。

-- `ErrNotHandled`:告诉管理器跳到下一个提供者,不影响最终错误统计。

+- `NewNoCredentialsError()`(`AuthErrorCodeNoCredentials`):未提供或未识别到凭证。(HTTP 401)

+- `NewInvalidCredentialError()`(`AuthErrorCodeInvalidCredential`):凭证存在但校验失败。(HTTP 401)

+- `NewNotHandledError()`(`AuthErrorCodeNotHandled`):告诉管理器跳到下一个 provider。

+- `NewInternalAuthError(message, cause)`(`AuthErrorCodeInternal`):网络/系统错误。(HTTP 500)

-自定义错误(例如网络异常)会马上冒泡返回。

+除可汇总的 `not_handled` / `no_credentials` / `invalid_credential` 外,其它错误会立即冒泡返回。

## 与 cliproxy 集成

-使用 `sdk/cliproxy` 构建服务时会自动接入 `@sdk/access`。如果需要扩展内置行为,可传入自定义管理器:

+使用 `sdk/cliproxy` 构建服务时会自动接入 `@sdk/access`。如果希望在宿主进程里复用同一个 `Manager` 实例,可传入自定义管理器:

```go

coreCfg, _ := config.LoadConfig("config.yaml")

-providers, _ := sdkaccess.BuildProviders(coreCfg)

-manager := sdkaccess.NewManager()

-manager.SetProviders(providers)

+accessManager := sdkaccess.NewManager()

svc, _ := cliproxy.NewBuilder().

WithConfig(coreCfg).

- WithAccessManager(manager).

+ WithConfigPath("config.yaml").

+ WithRequestAccessManager(accessManager).

Build()

```

-服务会复用该管理器处理每一个入站请求,实现与 CLI 二进制一致的访问控制体验。

+请在调用 `Build()` 之前完成自定义 provider 的注册(通常通过空白导入触发 `init`),以确保它们被包含在全局 registry 的快照中。

### 动态热更新提供者

-当配置发生变化时,可以重新构建提供者并替换当前列表:

+当配置发生变化时,刷新依赖配置的 provider,然后重置 manager 的 provider 链:

```go

-providers, err := sdkaccess.BuildProviders(newCfg)

-if err != nil {

- log.Errorf("reload auth providers failed: %v", err)

- return

-}

-accessManager.SetProviders(providers)

+// configaccess is github.com/router-for-me/CLIProxyAPI/v6/internal/access/config_access

+configaccess.Register(&newCfg.SDKConfig)

+accessManager.SetProviders(sdkaccess.RegisteredProviders())

```

-这一流程与 `cliproxy.Service.refreshAccessProviders` 和 `api.Server.applyAccessConfig` 保持一致,避免为更新访问策略而重启进程。

+这一流程与 `internal/access.ApplyAccessProviders` 保持一致,避免为更新访问策略而重启进程。

diff --git a/examples/custom-provider/main.go b/examples/custom-provider/main.go

index 9dab183e..7c611f9e 100644

--- a/examples/custom-provider/main.go

+++ b/examples/custom-provider/main.go

@@ -159,13 +159,13 @@ func (MyExecutor) CountTokens(context.Context, *coreauth.Auth, clipexec.Request,

return clipexec.Response{}, errors.New("count tokens not implemented")

}

-func (MyExecutor) ExecuteStream(ctx context.Context, a *coreauth.Auth, req clipexec.Request, opts clipexec.Options) (<-chan clipexec.StreamChunk, error) {

+func (MyExecutor) ExecuteStream(ctx context.Context, a *coreauth.Auth, req clipexec.Request, opts clipexec.Options) (*clipexec.StreamResult, error) {

ch := make(chan clipexec.StreamChunk, 1)

go func() {

defer close(ch)

ch <- clipexec.StreamChunk{Payload: []byte("data: {\"ok\":true}\n\n")}

}()

- return ch, nil

+ return &clipexec.StreamResult{Chunks: ch}, nil

}

func (MyExecutor) Refresh(ctx context.Context, a *coreauth.Auth) (*coreauth.Auth, error) {

@@ -205,7 +205,7 @@ func main() {

// Optional: add a simple middleware + custom request logger

api.WithMiddleware(func(c *gin.Context) { c.Header("X-Example", "custom-provider"); c.Next() }),

api.WithRequestLoggerFactory(func(cfg *config.Config, cfgPath string) logging.RequestLogger {

- return logging.NewFileRequestLogger(true, "logs", filepath.Dir(cfgPath))

+ return logging.NewFileRequestLoggerWithOptions(true, "logs", filepath.Dir(cfgPath), cfg.ErrorLogsMaxFiles)

}),

).

WithHooks(hooks).

diff --git a/examples/http-request/main.go b/examples/http-request/main.go

index 4daee547..a667a9ca 100644

--- a/examples/http-request/main.go

+++ b/examples/http-request/main.go

@@ -58,7 +58,7 @@ func (EchoExecutor) Execute(context.Context, *coreauth.Auth, clipexec.Request, c

return clipexec.Response{}, errors.New("echo executor: Execute not implemented")

}

-func (EchoExecutor) ExecuteStream(context.Context, *coreauth.Auth, clipexec.Request, clipexec.Options) (<-chan clipexec.StreamChunk, error) {

+func (EchoExecutor) ExecuteStream(context.Context, *coreauth.Auth, clipexec.Request, clipexec.Options) (*clipexec.StreamResult, error) {

return nil, errors.New("echo executor: ExecuteStream not implemented")

}
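Reviewer sketch (not part of the patch): both examples now return a `*clipexec.StreamResult` whose `Chunks` field carries the stream, instead of returning the channel directly. The model below shows how a caller might drain such a result. `streamChunk` and `streamResult` are local stand-ins; only the `Payload` and `Chunks` field names come from the diff, and the receive-only channel type is an assumption.

```go
package main

import (
	"fmt"
	"strings"
)

// streamChunk and streamResult are local stand-ins for the clipexec types.
type streamChunk struct{ Payload []byte }

type streamResult struct {
	Chunks <-chan streamChunk // assumed to be receive-only for consumers
}

// executeStream mirrors the shape of the updated example executor:
// it wraps the chunk channel in a result struct.
func executeStream() (*streamResult, error) {
	ch := make(chan streamChunk, 1)
	go func() {
		defer close(ch) // closing the channel ends the consumer's range loop
		ch <- streamChunk{Payload: []byte("data: {\"ok\":true}\n\n")}
	}()
	return &streamResult{Chunks: ch}, nil
}

// collect drains the stream until the producer closes the channel.
func collect(res *streamResult) string {
	var b strings.Builder
	for chunk := range res.Chunks {
		b.Write(chunk.Payload)
	}
	return b.String()
}

func main() {
	res, err := executeStream()
	if err != nil {
		panic(err)
	}
	fmt.Print(collect(res))
}
```

Wrapping the channel in a struct leaves room for extra stream-level fields later without another signature change, which is presumably the motivation for the new return type.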

diff --git a/go.mod b/go.mod

index 963d9c49..34237de9 100644

--- a/go.mod

+++ b/go.mod

@@ -1,9 +1,13 @@

module github.com/router-for-me/CLIProxyAPI/v6

-go 1.24.0

+go 1.26.0

require (

github.com/andybalholm/brotli v1.0.6

+ github.com/atotto/clipboard v0.1.4

+ github.com/charmbracelet/bubbles v1.0.0

+ github.com/charmbracelet/bubbletea v1.3.10

+ github.com/charmbracelet/lipgloss v1.1.0

github.com/fsnotify/fsnotify v1.9.0

github.com/gin-gonic/gin v1.10.1

github.com/go-git/go-git/v6 v6.0.0-20251009132922-75a182125145

@@ -13,6 +17,7 @@ require (

github.com/joho/godotenv v1.5.1

github.com/klauspost/compress v1.17.4

github.com/minio/minio-go/v7 v7.0.66

+ github.com/refraction-networking/utls v1.8.2

github.com/sirupsen/logrus v1.9.3

github.com/skratchdot/open-golang v0.0.0-20200116055534-eef842397966

github.com/tidwall/gjson v1.18.0

@@ -21,6 +26,7 @@ require (

golang.org/x/crypto v0.45.0

golang.org/x/net v0.47.0

golang.org/x/oauth2 v0.30.0

+ golang.org/x/sync v0.18.0

gopkg.in/natefinch/lumberjack.v2 v2.2.1

gopkg.in/yaml.v3 v3.0.1

)

@@ -29,8 +35,16 @@ require (

cloud.google.com/go/compute/metadata v0.3.0 // indirect

github.com/Microsoft/go-winio v0.6.2 // indirect

github.com/ProtonMail/go-crypto v1.3.0 // indirect

+ github.com/aymanbagabas/go-osc52/v2 v2.0.1 // indirect

github.com/bytedance/sonic v1.11.6 // indirect

github.com/bytedance/sonic/loader v0.1.1 // indirect

+ github.com/charmbracelet/colorprofile v0.4.1 // indirect

+ github.com/charmbracelet/x/ansi v0.11.6 // indirect

+ github.com/charmbracelet/x/cellbuf v0.0.15 // indirect

+ github.com/charmbracelet/x/term v0.2.2 // indirect

+ github.com/clipperhouse/displaywidth v0.9.0 // indirect

+ github.com/clipperhouse/stringish v0.1.1 // indirect

+ github.com/clipperhouse/uax29/v2 v2.5.0 // indirect

github.com/cloudflare/circl v1.6.1 // indirect

github.com/cloudwego/base64x v0.1.4 // indirect

github.com/cloudwego/iasm v0.2.0 // indirect

@@ -38,6 +52,7 @@ require (

github.com/dlclark/regexp2 v1.11.5 // indirect

github.com/dustin/go-humanize v1.0.1 // indirect

github.com/emirpasic/gods v1.18.1 // indirect

+ github.com/erikgeiser/coninput v0.0.0-20211004153227-1c3628e74d0f // indirect

github.com/gabriel-vasile/mimetype v1.4.3 // indirect

github.com/gin-contrib/sse v0.1.0 // indirect

github.com/go-git/gcfg/v2 v2.0.2 // indirect

@@ -54,21 +69,28 @@ require (

github.com/kevinburke/ssh_config v1.4.0 // indirect

github.com/klauspost/cpuid/v2 v2.3.0 // indirect

github.com/leodido/go-urn v1.4.0 // indirect

+ github.com/lucasb-eyer/go-colorful v1.3.0 // indirect

github.com/mattn/go-isatty v0.0.20 // indirect

+ github.com/mattn/go-localereader v0.0.1 // indirect

+ github.com/mattn/go-runewidth v0.0.19 // indirect

github.com/minio/md5-simd v1.1.2 // indirect

github.com/minio/sha256-simd v1.0.1 // indirect

github.com/modern-go/concurrent v0.0.0-20180306012644-bacd9c7ef1dd // indirect

github.com/modern-go/reflect2 v1.0.2 // indirect

+ github.com/muesli/ansi v0.0.0-20230316100256-276c6243b2f6 // indirect

+ github.com/muesli/cancelreader v0.2.2 // indirect

+ github.com/muesli/termenv v0.16.0 // indirect

github.com/pelletier/go-toml/v2 v2.2.2 // indirect

github.com/pjbgf/sha1cd v0.5.0 // indirect

+ github.com/rivo/uniseg v0.4.7 // indirect

github.com/rs/xid v1.5.0 // indirect

github.com/sergi/go-diff v1.4.0 // indirect

github.com/tidwall/match v1.1.1 // indirect

github.com/tidwall/pretty v1.2.0 // indirect

github.com/twitchyliquid64/golang-asm v0.15.1 // indirect

github.com/ugorji/go/codec v1.2.12 // indirect

+ github.com/xo/terminfo v0.0.0-20220910002029-abceb7e1c41e // indirect

golang.org/x/arch v0.8.0 // indirect

- golang.org/x/sync v0.18.0 // indirect

golang.org/x/sys v0.38.0 // indirect

golang.org/x/text v0.31.0 // indirect

google.golang.org/protobuf v1.34.1 // indirect

diff --git a/go.sum b/go.sum

index 4705336b..3c424c5e 100644

--- a/go.sum

+++ b/go.sum

@@ -10,10 +10,34 @@ github.com/anmitsu/go-shlex v0.0.0-20200514113438-38f4b401e2be h1:9AeTilPcZAjCFI

github.com/anmitsu/go-shlex v0.0.0-20200514113438-38f4b401e2be/go.mod h1:ySMOLuWl6zY27l47sB3qLNK6tF2fkHG55UZxx8oIVo4=

github.com/armon/go-socks5 v0.0.0-20160902184237-e75332964ef5 h1:0CwZNZbxp69SHPdPJAN/hZIm0C4OItdklCFmMRWYpio=

github.com/armon/go-socks5 v0.0.0-20160902184237-e75332964ef5/go.mod h1:wHh0iHkYZB8zMSxRWpUBQtwG5a7fFgvEO+odwuTv2gs=

+github.com/atotto/clipboard v0.1.4 h1:EH0zSVneZPSuFR11BlR9YppQTVDbh5+16AmcJi4g1z4=

+github.com/atotto/clipboard v0.1.4/go.mod h1:ZY9tmq7sm5xIbd9bOK4onWV4S6X0u6GY7Vn0Yu86PYI=

+github.com/aymanbagabas/go-osc52/v2 v2.0.1 h1:HwpRHbFMcZLEVr42D4p7XBqjyuxQH5SMiErDT4WkJ2k=

+github.com/aymanbagabas/go-osc52/v2 v2.0.1/go.mod h1:uYgXzlJ7ZpABp8OJ+exZzJJhRNQ2ASbcXHWsFqH8hp8=

github.com/bytedance/sonic v1.11.6 h1:oUp34TzMlL+OY1OUWxHqsdkgC/Zfc85zGqw9siXjrc0=

github.com/bytedance/sonic v1.11.6/go.mod h1:LysEHSvpvDySVdC2f87zGWf6CIKJcAvqab1ZaiQtds4=

github.com/bytedance/sonic/loader v0.1.1 h1:c+e5Pt1k/cy5wMveRDyk2X4B9hF4g7an8N3zCYjJFNM=

github.com/bytedance/sonic/loader v0.1.1/go.mod h1:ncP89zfokxS5LZrJxl5z0UJcsk4M4yY2JpfqGeCtNLU=

+github.com/charmbracelet/bubbles v1.0.0 h1:12J8/ak/uCZEMQ6KU7pcfwceyjLlWsDLAxB5fXonfvc=

+github.com/charmbracelet/bubbles v1.0.0/go.mod h1:9d/Zd5GdnauMI5ivUIVisuEm3ave1XwXtD1ckyV6r3E=

+github.com/charmbracelet/bubbletea v1.3.10 h1:otUDHWMMzQSB0Pkc87rm691KZ3SWa4KUlvF9nRvCICw=

+github.com/charmbracelet/bubbletea v1.3.10/go.mod h1:ORQfo0fk8U+po9VaNvnV95UPWA1BitP1E0N6xJPlHr4=

+github.com/charmbracelet/colorprofile v0.4.1 h1:a1lO03qTrSIRaK8c3JRxJDZOvhvIeSco3ej+ngLk1kk=

+github.com/charmbracelet/colorprofile v0.4.1/go.mod h1:U1d9Dljmdf9DLegaJ0nGZNJvoXAhayhmidOdcBwAvKk=

+github.com/charmbracelet/lipgloss v1.1.0 h1:vYXsiLHVkK7fp74RkV7b2kq9+zDLoEU4MZoFqR/noCY=

+github.com/charmbracelet/lipgloss v1.1.0/go.mod h1:/6Q8FR2o+kj8rz4Dq0zQc3vYf7X+B0binUUBwA0aL30=

+github.com/charmbracelet/x/ansi v0.11.6 h1:GhV21SiDz/45W9AnV2R61xZMRri5NlLnl6CVF7ihZW8=

+github.com/charmbracelet/x/ansi v0.11.6/go.mod h1:2JNYLgQUsyqaiLovhU2Rv/pb8r6ydXKS3NIttu3VGZQ=

+github.com/charmbracelet/x/cellbuf v0.0.15 h1:ur3pZy0o6z/R7EylET877CBxaiE1Sp1GMxoFPAIztPI=

+github.com/charmbracelet/x/cellbuf v0.0.15/go.mod h1:J1YVbR7MUuEGIFPCaaZ96KDl5NoS0DAWkskup+mOY+Q=

+github.com/charmbracelet/x/term v0.2.2 h1:xVRT/S2ZcKdhhOuSP4t5cLi5o+JxklsoEObBSgfgZRk=

+github.com/charmbracelet/x/term v0.2.2/go.mod h1:kF8CY5RddLWrsgVwpw4kAa6TESp6EB5y3uxGLeCqzAI=

+github.com/clipperhouse/displaywidth v0.9.0 h1:Qb4KOhYwRiN3viMv1v/3cTBlz3AcAZX3+y9OLhMtAtA=

+github.com/clipperhouse/displaywidth v0.9.0/go.mod h1:aCAAqTlh4GIVkhQnJpbL0T/WfcrJXHcj8C0yjYcjOZA=

+github.com/clipperhouse/stringish v0.1.1 h1:+NSqMOr3GR6k1FdRhhnXrLfztGzuG+VuFDfatpWHKCs=

+github.com/clipperhouse/stringish v0.1.1/go.mod h1:v/WhFtE1q0ovMta2+m+UbpZ+2/HEXNWYXQgCt4hdOzA=

+github.com/clipperhouse/uax29/v2 v2.5.0 h1:x7T0T4eTHDONxFJsL94uKNKPHrclyFI0lm7+w94cO8U=

+github.com/clipperhouse/uax29/v2 v2.5.0/go.mod h1:Wn1g7MK6OoeDT0vL+Q0SQLDz/KpfsVRgg6W7ihQeh4g=

github.com/cloudflare/circl v1.6.1 h1:zqIqSPIndyBh1bjLVVDHMPpVKqp8Su/V+6MeDzzQBQ0=

github.com/cloudflare/circl v1.6.1/go.mod h1:uddAzsPgqdMAYatqJ0lsjX1oECcQLIlRpzZh3pJrofs=

github.com/cloudwego/base64x v0.1.4 h1:jwCgWpFanWmN8xoIUHa2rtzmkd5J2plF/dnLS6Xd/0Y=

@@ -33,6 +57,8 @@ github.com/elazarl/goproxy v1.7.2 h1:Y2o6urb7Eule09PjlhQRGNsqRfPmYI3KKQLFpCAV3+o

github.com/elazarl/goproxy v1.7.2/go.mod h1:82vkLNir0ALaW14Rc399OTTjyNREgmdL2cVoIbS6XaE=

github.com/emirpasic/gods v1.18.1 h1:FXtiHYKDGKCW2KzwZKx0iC0PQmdlorYgdFG9jPXJ1Bc=

github.com/emirpasic/gods v1.18.1/go.mod h1:8tpGGwCnJ5H4r6BWwaV6OrWmMoPhUl5jm/FMNAnJvWQ=

+github.com/erikgeiser/coninput v0.0.0-20211004153227-1c3628e74d0f h1:Y/CXytFA4m6baUTXGLOoWe4PQhGxaX0KpnayAqC48p4=

+github.com/erikgeiser/coninput v0.0.0-20211004153227-1c3628e74d0f/go.mod h1:vw97MGsxSvLiUE2X8qFplwetxpGLQrlU1Q9AUEIzCaM=

github.com/fsnotify/fsnotify v1.9.0 h1:2Ml+OJNzbYCTzsxtv8vKSFD9PbJjmhYF14k/jKC7S9k=

github.com/fsnotify/fsnotify v1.9.0/go.mod h1:8jBTzvmWwFyi3Pb8djgCCO5IBqzKJ/Jwo8TRcHyHii0=

github.com/gabriel-vasile/mimetype v1.4.3 h1:in2uUcidCuFcDKtdcBxlR0rJ1+fsokWf+uqxgUFjbI0=

@@ -99,8 +125,14 @@ github.com/kr/text v0.1.0 h1:45sCR5RtlFHMR4UwH9sdQ5TC8v0qDQCHnXt+kaKSTVE=

github.com/kr/text v0.1.0/go.mod h1:4Jbv+DJW3UT/LiOwJeYQe1efqtUx/iVham/4vfdArNI=

github.com/leodido/go-urn v1.4.0 h1:WT9HwE9SGECu3lg4d/dIA+jxlljEa1/ffXKmRjqdmIQ=

github.com/leodido/go-urn v1.4.0/go.mod h1:bvxc+MVxLKB4z00jd1z+Dvzr47oO32F/QSNjSBOlFxI=

+github.com/lucasb-eyer/go-colorful v1.3.0 h1:2/yBRLdWBZKrf7gB40FoiKfAWYQ0lqNcbuQwVHXptag=

+github.com/lucasb-eyer/go-colorful v1.3.0/go.mod h1:R4dSotOR9KMtayYi1e77YzuveK+i7ruzyGqttikkLy0=

github.com/mattn/go-isatty v0.0.20 h1:xfD0iDuEKnDkl03q4limB+vH+GxLEtL/jb4xVJSWWEY=